Generalised linear mixed model analysis via sequential Monte Carlo sampling

We present a sequential Monte Carlo sampler algorithm for the Bayesian analysis of generalised linear mixed models (GLMMs). These models support a variety of interesting regression-type analyses, but performing inference is often extremely difficult,…

Authors: Y. Fan, D.S. Leslie, M.P. W

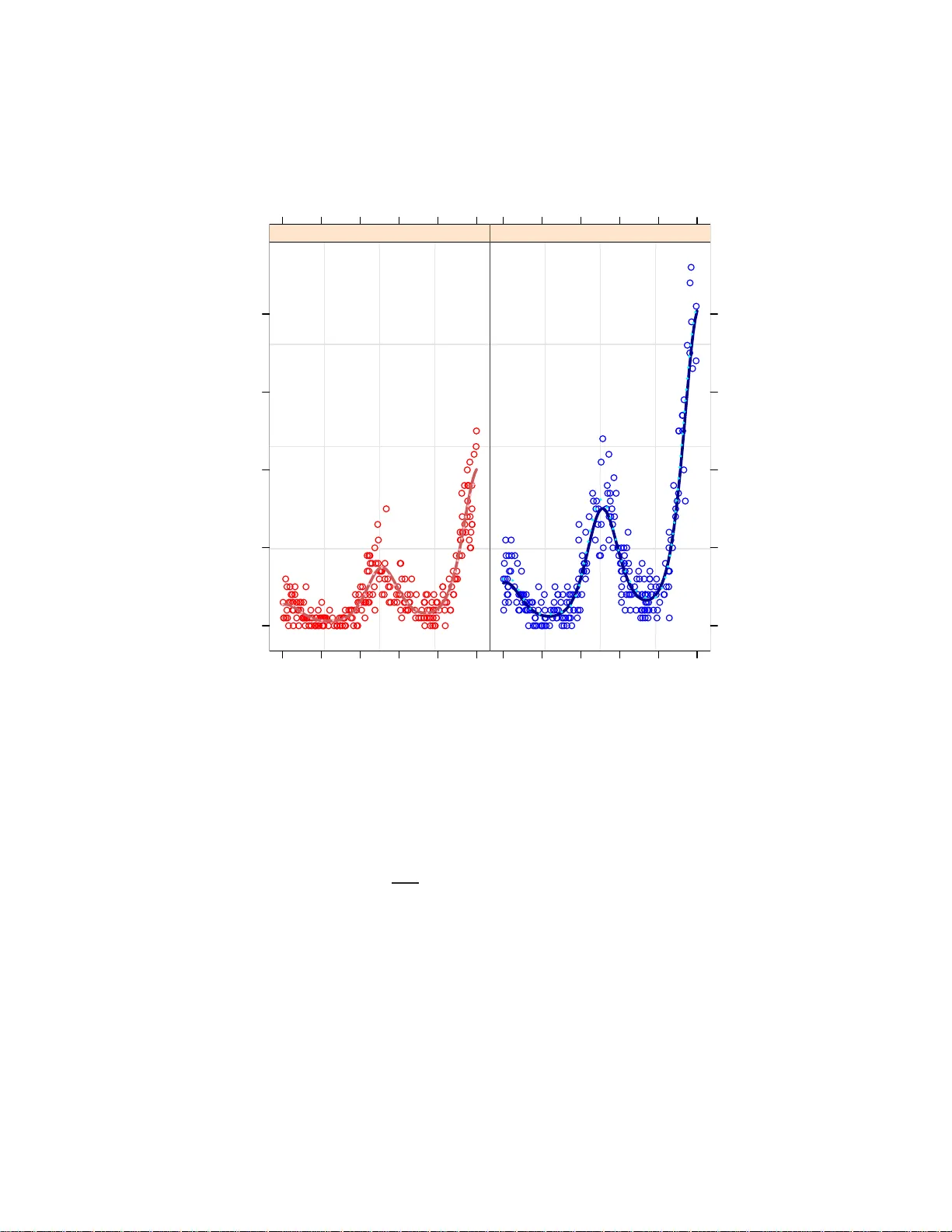

Electronic Journal of Stati stics V ol. 2 (2008) 916–938 ISSN: 1935-7524 DOI: 10.1214/ 07-EJS15 8 Generalise d linear mixed mo de l analysi s via se quen tial Mon te C arlo sampling Y. F an Scho ol of Mathematics and Statistics, University of New South Wales, Sydney 20 52, A ustr alia e-mail: Y.Fan@un sw.edu.a u D.S. Lesl ie Scho ol of Mathematics, University of Bristol, University Walk, Bristol, BS8 1TW, Unite d Kingdom e-mail: David.Le slie@bri stol.ac.uk M.P . W and Scho ol of Mathematics and Applie d Statistics, University of Wol longong, Wol longong 2522 , Aus tr al ia e-mail: mwand@uo w.edu.au Abstract: W e presen t a sequential Monte Car lo sampler algorithm for the Ba yesian analysis of generalised li near mixed mo dels (GLMMs). These models support a v ariet y of i nteresting regression-type analyses, b ut p er- forming inference is often extremely difficult, even when using the Bay esi an approac h com bined with Marko v ch ain Mon te Carl o (MCMC). The Sequen- tial Mon te Car lo sampler (SMC) i s a new and general method for producing samples from p osterior distributions. In this article we demonstrate use of the SMC m ethod for perform ing inference f or G LMMs. W e demonstrate the effective ness of the method on b oth sim ulated and real data, and find that sequ en tial Mon te Carlo is a competitiv e alternativ e to the a v ail able MCMC tec hniques. Keywords and p hrases: generalised additive models, l ongitudinal data analysis, nonparametric regression, sequen tial Monte Carl o sampler . Receiv ed Decem ber 2007. 1. In troductio n Effective strategies for generalis ed linear mixed mo del (GLMM) analysis con- tin ues to b e a vibrant research are a. Reasons include: • GLMMs hav e become a n indisp ensable vehicle for analysing a significant po rtion of co nt empo rary complex data sets. • GLMMs are inherently difficult to fit compar ed with or dinary linea r mixed mo dels and ge neralised linear mo dels. 916 Y. F an et al./GLM mo del analysis via SMC sampling 917 • Existing strateg ies inv olve a num ber of tra de-offs co ncerning, for example, approximation accur acy , computational times a nd Markov chain conv er- gence. Overviews of the usefulness and difficulties of GLMM-based a nalysis may be found in, for example, [ 23 , 27 ] and [ 28 ]. Most prac tica l GLMM metho dolog y falls into t w o categories: analytic a pprox- imations (e.g. [ 2 ]) a nd Mon te Carlo metho ds (e.g. [ 6 ]). Monte Carlo methods hav e the adv an tage of providing direct approximations to quantities of in terest [ 1 ]. O n the other hand, analytic approximations, such as Laplace a pproximation, are indir ect a nd pr one to substantial bias (e.g. [ 3 ]). The mo st co mmon Monte Carlo appr oach is Mar ko v Chain Monte Carlo (MCMC), wher e approximation accuracy is as s o ciated with Markov chain con vergence. [ 30 ] is a rece nt example of rese arch concerned with practical GLMM analy- sis via Markov chain Mo n te Carlo . Those author s explored use of the MCMC computing pack age Wi nBUGS and showed it to exhibit go o d p erfor mance for a nu m ber of exa mples. One of the ma jor difficulties asso ciated with using MCMC is the need to a s- sess conv ergence. Popular metho ds for convergence asses sment often r ely on the compariso n of multiple sa mple o utput; see [ 7 ] for a compa rative review. These metho ds can inv aria bly fail to detect a lack of con v ergence a nd one nee ds to b e cautious when taking such an appro ach. Another ma jor drawbac k of MCMC is the difficulty in desig ning efficient sa mplers for co mplex problems. The use of historical informa tion fr o m MCMC sample paths has to be treated v ery car e - fully , so that the eq uilibrium distribution of the Ma rko v chain is not disturbe d. V ario us metho ds ha v e b een pr op osed in the liter a ture, (see [ 14 ]), how ever the practical applicability of these s o -called adaptive metho ds can b e limited. Both problems as so ciated with MCMC discussed a bove are inherently due to the Markovian nature of the MCMC sampler. Seq uen tial Mo nt e Carlo (SMC) metho ds provide an alternative framework for po sterior sa mpling, which is not depe ndent o n the conv ergence of a Marko v chain as in the MCMC s ampler ca se. Though careful a ssessment o f p osterior s a mples is also a pplicable in the SMC case, these ar e more in line with the standard Monte Car lo metho ds. SMC sam- plers can b e seen as an extension of the well known imp orta nce sampling method. The fact that SMC metho ds do not rely on Markov chain theory means that it is a more flexible sampler . In the sens e that fo r example, if the historica l sample path of the SMC s ampler is informative for the de s ign o f a n efficient a lgorithm, this can be done quite ea s ily in the SMC framework. In this a r ticle we s how that sequential Monte Car lo metho ds pr ovide an effective means o f Bayesian GLMM analysis. W e provide a g eneral yet s imple framework for efficient design of the sampler, and demonstrate that this approach is a viable alternative to MCMC, and since SMC samplers require a num b er o f user-sp ecified inputs, w e will give recommendations in the GLMM fra mew ork on how these are chosen. Section 2 contains a brief summary of Bay esian approaches to genera lised linear mix e d mo dels. In Section 3 we provide details on ana lysis for such mo dels via sequential Mon te Car lo sampling. In Section 4 we presen t tw o examples. In Y. F an et al./GLM mo del analysis via SMC sampling 918 a simulated Poisson regr ession example, we compar e the efficiencies of the SMC sampler with alternativ e Mo nt e Ca rlo metho ds, and then demonstrate the ef- fectiveness of the SMC sampler in a binary logistic regre s sion example inv olving real data. Some co mparisons of algo rithm e fficie ncies for the tw o ex amples are carried out in Section 5 and concluding rema rks are given in Section 6 . The softw are us ed for this pap er is av ailable from the authors o n r equest. 2. Ba y esi an generalise d l inear m ixed mo dels GLMMs for ca nonical one-para meter exp onential families (e.g. Poisson, log istic) and Gaussian r a ndom effects take the general form [ y | β β β , u , G ] = exp { y T ( X β β β + Zu ) − 1 T b ( X β β β + Zu ) + 1 T c ( y ) } , (1) [ u | G ] ∼ N ( 0 , G ) (2) where her e, a nd thro ughout, the dis tribution o f a r andom vector x is denoted by [ x ] and the conditiona l distribution of y giv en x is denoted by [ y | x ]. In the Poisson cas e b ( x ) = e x , while in the logistic case b ( x ) = log(1 + e x ). An impo rtant s p ecia l case of ( 1 )–( 2 ) is the v ariance comp onents model [ y | β β β , u , σ 2 u 1 , . . . , σ 2 uL ] = exp { y T ( X β β β + Zu ) − 1 T b ( X β β β + Zu ) + 1 T c ( y ) } , u = u 1 . . . u L , [ u | σ 2 u 1 , . . . , σ 2 uL ] ∼ N ( 0 , blockdiag 1 ≤ ℓ ≤ L ( σ 2 uℓ I q ℓ )) . (3) where q ℓ is the num b er of ele ments in u ℓ . While ( 3 ) is not a s genera l as ( 1 )– ( 2 ) it still handles man y importa n t situations suc h as r andom in tercepts and generalise d additive mo dels [ 30 ]. With simplicity in mind, we will fo cus on this GLMM for the remainder of the pap er. Ho wev er, the metho dology of Section 3 is quite gener al and is extendable to more elab orate GLMMs. In this study we have worked with diffuse conjugate priors although, once again, the metho dology extends to other types of prior s. T o ensure scale-inv aria nce all cont in uous predictors a re s tandardised at the s tart o f the B ayesian analysis. The prior on β β β is a diffuse Gaussian: β β β ∼ N ( 0 , σ 2 β I ) (4) for some large σ 2 β > 0. The prior fo r ( σ 2 u 1 , . . . , σ 2 uL ) is assumed to hav e indep en- dent components; i.e. [ σ 2 u 1 , . . . , σ 2 uL ] = [ σ 2 u 1 ] · · · [ σ 2 uL ] . A n um ber of possibilities for [ σ 2 uℓ ] could b e considered [ 18 ]. These include an inv e r se gamma distr ibution, a uniform distr ibutio n, and a folded Cauch y distri- bution. In this pa per we use a conditionally conjugate inv erse gamma distribu- tion: [ σ 2 uℓ ] = A A uℓ uℓ Γ( A uℓ ) ( σ 2 uℓ ) − A uℓ − 1 e − A uℓ /σ 2 uℓ , σ 2 uℓ > 0 . (5) Y. F an et al./GLM mo del analysis via SMC sampling 919 This prior distribution was advo cated by [ 30 ] for A uℓ = 0 . 0 1. The prior is there- fore fairly non-informative, yet r esults in a slig h tly simpler sampling pr o cedure. It will b e convenien t to introduce some additional no ta tion to enable the mo del to b e des crib ed more succinctly . W e star t b y writing C = [ X Z ] and ν ν ν = β β β u . W e a lso write q β for the num ber of elements in β , and V = blo ckdiag( σ 2 β I q β , σ 2 u 1 I q 1 , . . . , σ 2 uL I q L ) for the prio r cov ar iance of ν ν ν . W riting σ σ σ 2 for ( σ 2 u 1 , . . . , σ 2 uL ), we ca n then combine ( 3 ), ( 4 ) and ( 5 ) to g ive the joint dens ity of all parameter s a nd data: [ y , ν ν ν , σ σ σ 2 ] = exp y T C ν ν ν − 1 T b ( C ν ν ν ) + 1 T c ( y ) − 1 2 ν ν ν T V − 1 ν ν ν − L X ℓ =1 q ℓ 2 log( σ 2 uℓ ) + L X ℓ =1 A uℓ log( A uℓ ) − log Γ( A uℓ ) − ( A uℓ + 1) log( σ 2 uℓ ) − A uℓ /σ 2 uℓ . F rom this, a nd noting that ν ν ν T V − 1 ν ν ν = k β β β k 2 /σ 2 β + P L ℓ =1 k u ℓ k 2 /σ 2 uℓ , it is c le a r that the density of the p osterio r distr ibutio n of the parameters is simply pr o- po rtional to the function π ( ν ν ν , σ σ σ 2 ) = exp y T C ν ν ν − 1 T b ( C ν ν ν ) − 1 2 σ 2 β k β β β k 2 − L X ℓ =1 ( A uℓ + q ℓ 2 + 1) log( σ 2 uℓ ) + ( A uℓ + 1 2 k u ℓ k 2 ) /σ 2 uℓ . (6) In Section 3 , we will develop a sequential Monte Ca rlo s a mpler to pro duce samples from the distribution pr op ortional to π . 3. Sequen tial Monte Carlo sampl ing The Monte Carlo appro ach to GLMM ana lysis p erfor ms inference by drawing samples from the joint p oster ior distribution of the parameter s θ θ θ = ( β β β , u , σ 2 u 1 , . . . , σ 2 uL ). W e wr ite π ( θ θ θ ) for the (unnormalised) density of this p osterio r distri- bution. Instead of using a Ma rko v chain with π as its s tationary distribution to pro duce these s amples, the sequential Mon te Carlo (SMC) sampling metho d is a genera lisation of importance sampling that pro duces a weigh ted sa mple fr om π while r etaining some of the b enefits o f MCMC analys is [ 8 ]. The use of SMC for static pr o blems (a s opp o sed to particle filters for dynamic problems; [ 12 ] requir es the intro duction of auxiliar y dis tr ibutions π 0 , π 1 ,. . . , π S − 1 . At stage s of the sampler we use a (w eighted) sample from the previo us Y. F an et al./GLM mo del analysis via SMC sampling 920 distribution π s − 1 to pr o duce a (w eigh ted) sample from π s . W e s et π S = π so that after S stag e s w e hav e a sample from the p os terior distr ibution of in terest. The in tro duction of the intermediate distributions allows the initial distribution π 0 to b e gradually co r rected to r esemble the target distribution π , and can often ov ercome pr o blems such as particle depletion where, if the tw o consecutive distributions are to o dissimila r , then a sma ll num ber of particles ca rry all the weigh t in the final s ample. The aux iliary distributions can b e constructed in several w ays: [ 5 ] introduces the obser v ations incre mentally to evolve the distributio n from the pr ior to the po sterior; [ 13 ] uses a similar technique, but increases the size of the state spa ce as more obser v ations are added; [ 8 ] use π s ∝ π 1 − γ s 0 π γ s , where (7) 0 = γ 0 ≤ γ 1 ≤ · · · ≤ γ S = 1 and π 0 is c hosen to be the prior distribution for the para meters. In this ar ti- cle, due to the diffuse na tur e o f the pr ior distr ibution, the initial dis tribution π 0 is ins tea d c hosen to be a m ultiv ar iate Normal distribution with mean and cov a riance matrix chosen based on estimates obtained using c lassical metho ds for fitting GLMMs. The SMC sampler algor ithm starts by sampling N samples, termed “pa rti- cles”, fro m the initial dis tr ibution π 0 . Denote by θ θ θ 0 i the i th particle at initial stage s = 0, a nd a llo cate weigh t w 0 i ≡ 1 to each of the N particles, so that { θ θ θ 0 i , w 0 i } is a weigh ted sample from π 0 . The SMC sampling technique uses the w eigh ted pa r ticles from distribution π s − 1 to pro duce par ticles from distribution π s through moving, reweigh ting and (po ssibly) res ampling; see [ 8 ]. F or s implicit y , the formulation we use is that describ ed in detail in Section 3 .3 .2.3 o f that pap er, which essentially res ults in the resample–mov e alg orithm used b y [ 5 ] and [ 19 ]. This is also similar to the annealed imp orta nc e sampling metho d of [ 24 ], but the use of resampling within the algorithm greatly improves the efficienc y of the metho d. W r iting θ θ θ s i for the i th par ticle a t stage s , a t each stage 0 < s ≤ S of the algorithm we p erfo r m the following steps: Rew eight Given N weigh ted particles { θ θ θ s − 1 i , w s − 1 i } fro m π s − 1 , set w s i = w s − 1 i π s ( θ θ θ s − 1 i ) π s − 1 ( θ θ θ s − 1 i ) . { θ θ θ s − 1 i , w s i } is now a weigh ted sample from π s . Resample If the effectiv e sample size (ESS, [ 20 ]), defined as ( P N i =1 w s i ) 2 / P N i =1 ( w s i ) 2 , is les s than k N , where k is so me cons ta n t typically taken to b e 1/2, then we p erfor m stratified resampling [ 21 ]. ESS estimates the n um ber of s imple random samples from the target distribution that ar e requir ed to obtain an estimate with the same Mon te Ca r lo v ariation a s the esti- mate using the N weigh ted particles. Resampling refers to a suite of tech- niques that replica te the par ticles in such a way that the exp ected v alue Y. F an et al./GLM mo del analysis via SMC sampling 921 of par ticle-based estimator s is retained, but par ticles with lo w w eights are discarded and particles with high weigh ts multiplied; s e e [ 11 ] for a sum- mary and compariso n of several such a pproaches. This standar d pr actise in the sequential Monte Ca rlo literatur e a llows further computational ef- fort to focus on sa mples that a re likely to contribute non-neg ligibly to the final estimate. Finally , resampled par ticle weigh ts ar e reset to { w s i } ≡ 1. Mo v e Let { ˜ θ θ θ s , w s i } , i = 1 , . . . , N denote samples from at the current distribu- tion π s after reweighting a nd (po ssibly) res ampling. T o incre a se particle diversit y w e replace each sample according to θ θ θ s i ∼ K s ( ˜ θ θ θ s i , · ) where K s is an MCMC trans ition kernel that a dmits π s as sta tionary distribution. [ 15 ] provides detail on MCMC transition kernels. It is known [ 8 ] that this particular formulation of the SMC s a mpling is sub- optimal, in terms of the v arianc e o f the impo rtance weights { w s i } , especia lly if the distributions on consecutive stag e s are to o far apart. Howev er, since the optimal formulation is intractable, and for the static pro ble m we hav e here it is easy to ensure that the difference b etw e en π s − 1 and π s is small, we use this simpler formulation. (Con trast this situation with that of an SMC algorithm for a dynamic problem, or the technique o f [ 5 ] where data arr ive ov er time a nd there is no control over the distance b etw een π s − 1 and π s .) The “parameter s” of the algor ithm that must b e chosen whe n implementing this sampler ar e therefore: • the initial dis tribution π 0 , • the s e quence of v alues γ s that g ov ern the ra te of transition from the initia l distribution π 0 to the po sterior distribution π , • the tr ansition kernels K s , used to mov e the particles within the distr ibu- tion pro p or tional to π s , and • the num ber of particles N . • the total num b er o f distributions S . Spec ific choices o f these parameter s used in this pap er are discuss ed in the following subsections. W e g ive a more alg orithmic description of our method in the App endix. 3.1. Initi al di stribution π 0 As previously obser ved, using the pr ior distribution as an initial distribution is flaw ed in this ca s e, s ince the prior is highly diffuse. Ins tead we use the p enalis ed quasi-likeliho o d (PQL) method [ 2 ] to o btain an approximate fit of the mo del. Let b ν ν ν PQL and b σ σ σ 2 PQL be the estima te o f ν ν ν a nd σ σ σ 2 obtained using PQL. W e will calculate a normal approximation o f the p osterior distribution of ν ν ν centred at this approximate maximum likelihoo d estimate, which ca n then be use d to construct an initial distributio n π 0 for the SMC sa mpling pro cedure. Note from Y. F an et al./GLM mo del analysis via SMC sampling 922 ( 6 ) that π ( ν ν ν , σ σ σ 2 ) = ex p y T C ν ν ν − 1 T b ( C ν ν ν ) − 1 2 ν ν ν T V − 1 ν ν ν + f ( σ σ σ 2 ) , where f is so me function that do es not dep end on ν ν ν . It is a simple ca lculation to see that the matrix of second deriv a tives with resp ect to comp onents of ν ν ν is − C T diag { b ′′ ( C ν ν ν ) } C − V − 1 ; we therefo r e initia lise o ur a lgorithm by taking a normal distribution for ν ν ν with mean b ν ν ν PQL and cov aria nce ma tr ix Σ Σ Σ = h C T diag { b ′′ ( C b ν ν ν PQL ) } C + b V − 1 PQL i − 1 , (8) where the entries in b V PQL are taken from b σ σ σ 2 PQL . It remains to spec ify a distribution for the v aria nce vector σ σ σ 2 . W e hav e found it co n venien t to sp ecify this co nditional on ν ν ν , and of a fo r m that is consistent with the p oster ior distribution π . W e take π 0 ( σ σ σ 2 | ν ν ν ) = L Y ℓ =1 ( A uℓ + 1 2 k u ℓ k 2 ) ( A uℓ + q ℓ / 2) Γ( A uℓ + q ℓ / 2) ( σ 2 uℓ ) − A uℓ − q ℓ / 2 − 1 e − ( A uℓ + 1 2 k u ℓ k 2 ) /σ 2 uℓ , (9) i.e. the σ 2 uℓ are conditionally independent giv en ν ν ν , and each has an inv erse gamma distribution dep ending on the corr esp o nding components of u . Hence, an initial sample from π 0 can easily b e gener ated by first s ampling fro m the normal distribution for ν ν ν then sampling the σ 2 uℓ from their conditional distributions. F urthermor e, we will see in Sections 3.2 and 3 .3 that this results in simple conditional distributions fo r σ 2 uℓ at all stages of the sampler . Putting tog ether the initial distributions of ν ν ν and σ σ σ 2 , we see that π 0 ( ν ν ν , σ σ σ 2 ) ∝ exp − 1 2 ( ν ν ν − b ν ν ν PQL ) T Σ Σ Σ − 1 ( ν ν ν − b ν ν ν PQL ) + L X ℓ =1 ( A uℓ + q ℓ 2 ) log( A uℓ + 1 2 k u ℓ k 2 ) − ( A uℓ + q ℓ 2 + 1) log σ 2 uℓ − ( A uℓ + 1 2 k u ℓ k 2 ) /σ 2 uℓ . (10) In the GLMM examples of this pap er, there is no reaso n to susp ect that the p osterio r distributio n is particularly sprea d out or multi-modal. Hence this π 0 is sufficient, as demo nstrated by the fact that several different Monte Carlo metho ds pr ovide identical inference in the examples of Section 4 . In other ex- amples where these complications are likely to o ccur , π 0 should be chosen with an infla ted v ariance, or to b e a t distribution, to help dominate the p os terior distribution. Note that multi-mo dality is le ss of a pro blem for the sequential Monte Carlo approach than it would be for MCMC, since there is no difficulty in having samples in b oth mo des simultaneously , wherea s an MCMC a ppr oach m ust mov e betw een the no des throug h areas of low pos terior probability . Y. F an et al./GLM mo del analysis via SMC sampling 923 3.2. Se qu enc e of interme diary distributions In this section w e describ e the sequence of distributio ns used to trans ition from π 0 to π S = π . Recall that we c hoo se to use the formulation ( 7 ). Using ( 10 ) and ( 6 ) it is clear that the intermediate distributions are pr op ortional to π s where π s ( ν ν ν , σ σ σ 2 ) = exp γ s y T C ν ν ν − 1 T b ( C ν ν ν ) − 1 2 σ 2 β k β β β k 2 + L X ℓ =1 ( A uℓ + q ℓ 2 ) log( A uℓ + 1 2 k u ℓ k 2 ) − L X ℓ =1 ( A uℓ + q ℓ 2 + 1) log( σ 2 uℓ ) + ( A uℓ + k u ℓ k 2 ) /σ 2 uℓ +(1 − γ s ) − 1 2 ( ν ν ν − b ν ν ν PQL ) T Σ Σ Σ − 1 ( ν ν ν − b ν ν ν PQL ) − L X ℓ =1 ( A uℓ + q ℓ 2 + 1) log σ 2 uℓ + ( A uℓ + 1 2 k u ℓ k 2 ) /σ 2 uℓ = exp γ s y T C ν ν ν − 1 T b ( C ν ν ν ) − 1 2 σ 2 β k β β β k 2 + L X ℓ =1 ( A uℓ + q ℓ 2 ) log( A uℓ + 1 2 k u ℓ k 2 ) + (1 − γ s ) − 1 2 ( ν ν ν − b ν ν ν PQL ) T Σ Σ Σ − 1 ( ν ν ν − b ν ν ν PQL ) (11) − L X ℓ =1 ( A uℓ + q ℓ 2 + 1) log σ 2 uℓ + ( A uℓ + 1 2 k u ℓ k 2 ) /σ 2 uℓ In the absence of any a dditional information ab out the sha p es o f these distr i- butions, it is difficult to sp ecify a sensible gener ic sequence o f γ s v alues. Hence for the rest of the pap er we choose to increa se γ s from γ 0 = 0 to γ S − 5 = 1 in a linear fashion, that is , v alues o f γ s are sequentially incremented by the same amount. Additiona lly , we app end γ S − 4 = · · · = γ S = 1 to this sequence to give five stages at the end of the sampler on which the par ticles are not resa mpled. This means that the fina l sample is well spr ead out over the distr ibutio n π (it was found that if resampling ha ppened to o close to the end of the sampler then several samples might b e iden tical, resulting in po o r densit y estimates b eing pro duced using the s tandard techniques). It is an in teresting and op en research ques tio n as to whether the sequence γ s can b e ch osen in a more principled manner . One option would be to cho ose the sequence in adv ance us ing some pro per ties o f the dis tributions π 0 and π . An alter native w ould b e to choo se the next γ s adaptively while the sampler pro ceeds through the sequence of distr ibutions; how ev er it is no t straightforw ard to generalis e the pro ofs of v a lidit y of the sampler in this case . Y. F an et al./GLM mo del analysis via SMC sampling 924 3.3. T r ansition kernels F or this pap er we cho ose to use Metrop olis- Ha stings transition kernels for the parameters in ν ν ν . The choice of inverse gamma distributions for the comp onents of σ σ σ 2 within π 0 means that w e ca n s imply use Gibbs sampling steps, [ 17 ], to up- date thos e co mp onents. A t each step s we use a Metr op olis–Hastings transition kernels K s . Since π 0 is an approximation to π , and π s is in some sense b etw een π 0 and π , w e use the same propos al distributions at each step s . Thes e prop osa l distributions are der ived fr om π 0 as describ ed in this section. W e for m a partition {I 1 , . . . , I J } of { 1 , . . . , P } , where P is the n um ber o f columns in C , so that [ C I 1 · · · C I J ] is the matr ix C , but with columns p ossibly re-orde r ed; a nd ν ν ν I 1 . . . ν ν ν I J is the co rresp onding partition of ν ν ν . (The case J = 1 corre s po nds to no par - titioning.) On ea ch mov e step of the algorithm we mov e thr ough the se ries of subsets I j , for j = 1 , . . . , J . W e apply a Metrop olis -Hastings transitio n kernel to the comp onents ν ν ν I j = ( ν i ) i ∈I j . T o describ e the tr ansitions we int ro duce the matr ic es Σ Σ Σ I j , whe r e Σ Σ Σ I j is the conditional cov aria nce under π 0 of ν ν ν I j given the v alues of ν ν ν −I j = ( ν i ) i / ∈I j . These c a n b e calculated at the start of the algorithm. Recall that since π 0 is a n approximation of π , the Σ Σ Σ I j matrices ther efore corr e spo nd to approximations of the conditional cov ar iance of ν ν ν I j given ν ν ν −I j under the p oster ior distr ibutio n π . Note that we are here assuming that the approximate co v aria nce matrices Σ Σ Σ I j are close e no ugh to the truth to be useful as prop osal distributions in the random walk Metropo lis–Hastings kernel. The pr op osal distribution for ν ν ν I j is then a no r mal distribution centered on the current v alue of ν ν ν I j with cov ariance τ ν j Σ Σ Σ I j . The accepta nce probability for the move, applied after r eweigh ting to get a w eigh ted dis tr ibution from π s , is simply ca lculated from the r atio of π s v alues for the prop os ed and curr ent v alues. The scaling pa rameters τ ν j are by default c hosen to b e 2 . 4 / p |I j | following the heuristic of [ 25 ]. Howev er in pra ctice they a re usua lly chosen, based o n s e veral runs o f the alg orithm, to ensure that the acc e pta nce rates rema in close to 0.23 (again following [ 25 ]). Details of sp ecific c hoices used are given in the ex amples. T o upda te the v ar iance parameter s σ σ σ 2 , a Gibbs s ampling step can b e applied. Note fro m ( 11 ) that for each s the full co nditional distribution of σ 2 uℓ is simply an inv e r se gamma distr ibution, dep ending on the corresp onding vector of regressio n co efficients u ℓ . Howev er if a different prior is used fo r σ σ σ 2 then Gibbs sa mpling will no t b e av aila ble and a Metr op olis–Hastings update should b e p erfor med for each σ 2 uℓ in turn. Y. F an et al./GLM mo del analysis via SMC sampling 925 4. Examples In this s e ction we will demonstrate the methodolo gy on tw o examples. The first example is a semiparametric Poisson r egressio n mo del, with simulated data so that fair c o mparisons ca n be dra wn with alternative MCMC approaches. The second example is a binary lo gistic regr ession inv o lving respirator y infectio n in Indo nesian children, w ith b oth a semipar ametric co mpo nen t and random effects. All computations were carried out in the R language [ 29 ], using a single core AMD Optero n 2.0GHz, this is similar to r unning the progr am on a standa rd PC. 4.1. Semip ar ametric Poiss on r e gr ession In this section, we generate n = 5 00 Poisson random v ar iables y i , i = 1 , . . . , n from y i ∼ Poisson( exp { 0 . 7 x 1 i + 2 x 2 i + cos(4 π x 2 i ) } ) where x 1 i is 0 or 1 with proba bilit y 0.5, and x 2 i is uniformly s ampled from the int erv al [0 , 1]. W e fit mo del ( 3 ), with b ( x ) = e x , β β β = β 0 β 1 β 2 , X = 1 x 11 x 21 1 x 12 x 22 . . . . . . . . . 1 x 1 n x 2 n . The radial cubic basis function is used to mo del the function f ( x 2 i ) = co s(4 π x 2 i ). This implies mo delling f ( x 2 i ) = β x 2 x 2 i + Z x 2 i u , wher e for knot p oints κ k , chosen to b e the ( k +1 K +2 )th quantile of the unique predictor v alues, for k = 1 , . . . , K, K = 10, u = u 1 . . . u 10 , [ u | σ 2 u ] ∼ N ( 0 , σ 2 u I ) , and Z x 2 i = [ | x 2 i − κ k | 3 1 ≤ k ≤ 10 ][ | κ k ′ − κ k | 3 1 ≤ k,k ′ ≤ 10 ] − 1 / 2 The gl mmPQL metho d of the R statistical pack ag e gives a n approximate MLE for the regr e ssion co efficients b ν ν ν PQL , a nd the v a riance pa rameters b σ σ σ 2 PQL .W e follow the genera l algorithm given in Section 3 . There a re 13 regressio n co efficients to b e es timated for this mo del, and one v aria nce par ameter. W e upda ted each of the regression c o efficient s ν ν ν singly using random w alk Metr op olis-Hastings (R WMH) up dates for the mov e step of the algor ithm and a Gibbs update for the v ariance par ameter. As with MCMC, the tuning of this kernel is crucial to the success of the alg o rithm; to a chiev e an acceptance rate in the MCMC step b etw een 20 –30% we set τ ν I = 1 / 3. W e also choose the num ber of steps S = 105 and the num ber o f particles N = 1000 ba sed on preliminary runs. W e will discuss the choices of N and S in mo re detail in Sectio n 5 . Y. F an et al./GLM mo del analysis via SMC sampling 926 W e compare the p e rformance of the SMC sampler simulations by monitoring the QQ-plo ts of samples from a simple imp ortance sampler , a sing le-v ar iable slice sampler which upda tes one parameter at a time and a standard R WMH sa mpler with the same transition k ernels as use d in the SMC sa mpler (i.e. those describ ed in Section 3.3 ). Figur e 1 (a) shows the Q Q-plot for the β 1 parameter, and the corres p onding densit y estimates for β 1 is given in (b). With the exception of the impo rtance sampler, which can per form badly on different sim ulated data sets, the remaining sampler s achiev ed g o o d concordance. This required 1,00 0 particles with 105 steps for the SMC sampler. F or co mparison, we used 2 0,000 iterations of bo th slice sampler and R WMH MCMC scheme, with the first 1 0,000 discarded as burn-in. These to ok approximately 1394 a nd 2430 se conds r e s pec tively , wherea s the SMC sa mpler to ok approximately 700 seco nds. The ma jority of the g a ins in computational time for the SMC sa mpler come from the fact that the par ticle s at each step c an b e up dated simultaneously without the need to cycle through a lo op, compar ed with MCMC sa mplers where each iteration has to b e up dated sequentially dep ending o n the v alue of the parameter at the previous step. In the R programming languag e use d for this resear ch, as with many other high level progr amming languag es, this provides a very significant computatio nal adv antage. The nonpar ametric fits of the mo del, calcula ted using the estimated p osterio r mean of u , are display ed in Figure 2 . The mo del has successfully recov ered the nonlinearity in the dep endency on x 2 and fits the data well. 4.2. Example: R espir atory infe ction in Indonesian childr en Here we apply seq uen tial Monte Carlo algor ithm to an example involving res- piratory infection in Indonesia n children (see [ 10 , 22 ]). The data cont ain lon- gitudinal measurements on 275 Indo ne s ian c hildren, where the indicator for respirato ry infectio n is the bina r y resp onse. The cov ar iates include age, height, indicators for vitamin A deficiency , sex, stunting and visit num ber s (one to six). Previous analyses have sho wn the effect of age of the child to be non-linea r, hence we use a logistic additive mixed mo del o f the form logit { P (respira tory infection ij = 1 | U i , u k ) } = β 0 + U i + β β β T x ij + f (age ij ) for 1 ≤ i ≤ 275 children and 1 ≤ j ≤ n i rep eated meas ures within a c hild. U i i.i.d. ∼ N (0 , σ 2 U ) is a random c hild effect, x ij is the meas urement on a vector of the re ma ining 9 cov ariates, and f is mo delled using penalized splines with spline basis co efficients u k i.i.d. N (0 , σ 2 u ). As re c ommended by [ 16 ], we use hier archical centering of random effects. All contin uo us cov aria tes are s ta ndardised to hav e zero mean and unit standar d deviation, so that the choices of hyperpa rameters ca n b e independent of sc a le. Radial cubic ba sis functions are use d to fit the cov ariate age, where f (age) = β age age + Z age u Y. F an et al./GLM mo del analysis via SMC sampling 927 0.65 0.70 0.75 0.80 0.85 0.65 0.75 0.85 SMC IS 0.65 0.75 0.85 0.65 0.75 0.85 SMC Slice 0.65 0.75 0.85 0.65 0.75 0.85 SMC RWMH 0.65 0.75 0.85 0.65 0.75 0.85 Slice RWMH (a) 0.6 0.7 0.8 0.9 0 5 10 15 20 beta1 density SMC IS Slice RWMH (b) Fig 1 . Q Q-plots of SMC sampler output against simple i mp ortanc e sampler, the slic e sampler and the R W Me tr op olis-Hastings sampler f or β 1 (a). The co rr esp onding density estimates (b). Y. F an et al./GLM mo del analysis via SMC sampling 928 x2 y 0 10 20 30 40 0.0 0.2 0.4 0.6 0.8 1.0 x1 = 0 0.0 0.2 0.4 0.6 0.8 1.0 x1 = 1 Fig 2 . The data, the true me an values (solid line s), and t he estimate d me an values (dotte d lines) for the simulate d Poisson e xample. The fit was b ase d on a SMC sampler run with 1000 p articles and 105 interme diate steps, as describ e d in the text. where Z age = [ | age − κ k 1 ≤ κ ≤ K | 3 ][ | κ k − κ k ′ 1 ≤ k,k ′ ≤ K | 3 ] − 1 / 2 and u ∼ N (0 , σ 2 u I ) with κ k chosen to b e the ( k +1 K +2 )th quantile of the unique predictor v alues . W e take K = 20 in this e x ample. W e use a v ag ue prior N (0 , 10 8 ) for the fixed effects. F or b oth v ariance co m- po nent s, w e us e the conjugate In v erse Ga mma prior IG(0 . 01 , 0 . 01). Other prio r choices ar e av ailable, see [ 30 ]. Her e, rando m walk Metro po lis-hastings up dates were carr ied out for each regressio n co efficients sepa rately , with Gibbs sampling used for the v ariance parameter s. The tuning parameters τ ν I j for the Metrop olis- Hastings up date a r e again chosen to a ch ieve a n accepta nc e rate in the MCMC Y. F an et al./GLM mo del analysis via SMC sampling 929 coeff. density summary vit. A defic. −2 −1 0 1 2 3 posterior mean: 0.61 95% credible interval: (−0.542,1.62) male −0.5 0 0.5 1 1.5 posterior mean: 0.563 95% credible interval: (0.0439,1.06) height −0.05 0 0.05 0.1 0.15 posterior mean: 0.0338 95% credible interval: (−0.0208,0.0893) stunted −2 −1 0 1 2 posterior mean: 0.474 95% credible interval: (−0.402,1.31) visit 2 −3 −2 −1 0 posterior mean: −1.2 95% credible interval: (−2.1,−0.431) visit 3 −2 −1 0 1 posterior mean: −0.629 95% credible interval: (−1.41,0.11) visit 4 −3 −2 −1 0 posterior mean: −1.37 95% credible interval: (−2.3,−0.467) visit 5 −1 −0.5 0 0.5 1 1.5 posterior mean: 0.468 95% credible interval: (−0.158,1.14) visit 6 −1 0 1 posterior mean: −0.0384 95% credible interval: (−0.722,0.67) st.dev.(subject) 0.5 1 1.5 posterior mean: 0.928 95% credible interval: (0.7,1.23) Fig 3 . Summary of c o efficie nts in r espir atory infe ctions in Indo nesian childr en example. step b etw een 2 0–30%, we use d τ ν I j = 3 for the fixed effect co efficients, τ ν I j = 6 for the ra ndom effect co efficients, and τ ν I j = 5 for the s pline co efficients. Figure 3 show the results from simulation, using 10 00 particles and 305 in- termediate steps . The Fig ure shows bor derline p ositive effect of Vita min A de- ficiency , sex a nd some vis it num ber s on r e spiratory infection. These r e sults ar e in keeping with previous analyses . Figure 4(a) shows the nonlinear effect o f age; 4(b) s hows the effective sample size a t each of 300 seq uen tial steps of the simulation, v ertical lines indicate the o cc ur rence of res a mpling. Again, we compa re the p erfo rmance of the SMC sampler with the impo rtance sampler, slice sampler and R WMH sampler with the same transition kernel as Step 3 o f the SMC s ampler. Results for 5,0 00 samples of the imp or tance sa m- pler, 1,000 SMC par ticles and 5,000 slice samples with 5,00 0 burn-in and 5,000 R WMH samples with 5,000 burn-in are plo tted in Figure 5 , go o d ag reements Y. F an et al./GLM mo del analysis via SMC sampling 930 1 2 3 4 5 6 7 0.00 0.05 0.10 0.15 0.20 age (years) est. P(respiratory infection) (a) 0 50 100 150 200 250 300 0 200 400 600 800 1000 sequential distributions (s) effective sample size (b) Fig 4 . R e spir atory infe ctions in Indonesian childr en e xample. (a) Posterior me an of the esti- mate d pr ob ability of r espir atory infe ct ion f ( age ) with al l other c ovariates set to their aver age values. (b) Effe ctive sample size over 300 distributions, v ertic al lines indic ate instanc es of r esampling. are found betw een the SMC, slice and MCMC sampler s. The sa mpler which per formed badly app ears to be the imp ortance sa mpler, where in this case, the sampler app ears to suffers from par ticle depletion wher e one or few particles from an are a of high p osterio r density is dominating the o ther particles. Finally , the SMC sampler to ok a ppr oximately the same amount of time as the slice sampler at 2.8 hours and the R WMH to ok a bo ut 9 ho urs (similarly 9 hours w as required in W inBUGS ). In the next section w e discus s the effect of the sample size and step size sp ecifications on the efficiency of the SMC sa mpler. 5. Impro ving s ampler p erformance In this se c tion we inv e stigate the SMC sampler pe r formance by lo ok ing at the effects of user -defined s pecific a tions s uch as the num ber of sequential steps ( S ); the num ber of particles to s a mple ( N ) and blo ck updating stra tegies. Here w e base efficienc y compariso ns o n the effectiv e sample size diagno s tic calculation of [ 4 ]. (Note this is different from the ESS [ 2 0 ] used in determining whether res ampling is p erfor med.) This diagnostic is es sent ially a n a na lysis of v aria nce approach based o n several parallel runs of the alg o rithm, whic h provides the num ber of indep e nden t sa mples from the p osterior distribution that would be requir ed to ga in the sa me degre e of accur acy: higher num ber s are obviously preferrable. This estimate of effective sample size is not affected by resampling and in addition, can be used to compar e SMC sampler with MCMC a ppr oaches under a co ns istent fra mework. Thus, for a given parameter, we calculate the Y. F an et al./GLM mo del analysis via SMC sampling 931 −1.0 0.0 1.0 2.0 −1 0 1 2 SMC IS −1.0 0.0 1.0 2.0 −1 0 1 2 SMC Slice −1.0 0.0 1.0 2.0 −1 0 1 2 SMC RWMH −1 0 1 2 −1 0 1 2 Slice RWMH (a) −2 −1 0 1 2 0.0 0.2 0.4 0.6 0.8 Vitamin A Deficit density SMC IS Slice RWMH (b) Fig 5 . Q Q-plots of SMC sampler output against simple i mp ortanc e sampler, the slic e sampler and the R W Me tr op olis-Hastings sampler for the c o efficient of vitamin A deficie ncy (a). The c orr esp onding density estimates (b). Y. F an et al./GLM mo del analysis via SMC sampling 932 10s 20s 50s 100s 200s RWMH slice 0 500 1000 1500 2000 2500 3000 3500 one block N=1000 no blocking N=1000 one block N=2000 Algorithm efficiency for PMM example Fig 6 . Comp arison of effe ctive sample size for β 1 fr om t he SMC sampler (over i ncr ea sing numb er of se quential distributions ( S ), and t he slic e and R WMH samplers in the Poisson r egr ession examples. ratio of the average estimate o f the p os terior v ariance of the para meter to the v aria nce of the p osterior means o f the parameter a c ross indep enden t runs. All our experiments were ca rried o ut using the t w o exa mples in Sections 4.1 and 4.2 , whe r e β 1 is used for the Poisson r egressio n example, and the co efficient of Vitamin A deficiency is used for the logis tic regress io n exa mple. In exp erimenting with blo ck/simultaneous up dating the par ameters for the mov e step of the SMC sampler, we found that in some cases, particula rly for the logistic example, na ¨ ıve blocking up dating (i.e., blo cking r egressio n co efficients, random effects co efficients, a nd spline co efficients) can in fa ct adversely affect the p erformance o f the sampler . W e als o found that c a reful tuning of a ccep- tance pro babilities in the R WMH step to b e b etw een 2 0-30% ca n b e crucial to the p erfo rmance. Finally , w e found that increa sing the num b er of sequential dis- tributions S , as well as increasing the num ber o f particles N can grea tly improv e the effective sample size. Figure 6 shows the e ffective sample size for β 1 calculated from a total of Y. F an et al./GLM mo del analysis via SMC sampling 933 10 indep endent runs of the sampler for the P oisson regr ession example. W e calculated the diag nostic for the SMC sampler over S = 10 , 20 , 50 , 100 , 200. F or each S , w e implemented the sampler with a sing le block upda te using N = 10 00 and N = 2000 particles , a nd a s ampler without blo cking with N = 1000 . F or compariso n, the diagnostic for β 1 was also calculated for a (single-v ariable) slice sampler and a R WMH sampler , ea ch o f 20,000 iterations with 10,000 burnin. The R WMH alg orithm used the same transition kernels as Step 3 of the SMC sampler, with a nd without blo ck updating. Clearly , the effectiv e sample size of the sampler with no blocking is larger than that o f the sampler with one block for all S , and increases with larger v alues of S and N . W e can see that the effective sa mple size o f the slice sa mpler is comparable to the SMC sampler with S = 50 and N = 1000 , while the R WMH sampler achiev es the smallest effective sample size. F or the logistic r egressio n example of Section 4.2 , we found that b y upda ting regres s ion parameters sing ly , a nd choos ing S = 200 and N = 2 000 a chieved a n effective sample size of 2604, and S = 300 and N = 1000 achieved an effective sample size o f 2377. The tw o sa mplers to ok about 3.8 and 2.9 hours to r un resp ectively . As a comparison, the slice sampler with 10 ,000 itera tions with 5,000 bur n in too k 2.8 hours to run and a ch ieves an effectiv e s ample s ize of 1948, while a R WMH sampler of length 10,000 with 5 ,000 burn in achieves an effective sa mple size of o nly 177 , and taking 9 hours to run. 6. Conclusion In this pa per we pre s ent ed a gener al sequential Monte Carlo alg orithm to pro- duce samples fr om the p osterio r dis tr ibution for Bayesian analy s is o f genera lised linear mixed mo dels. The a lgorithm is a n alterna tive to the po pular Markov chain Mon te Carlo metho ds . W e ha v e demonstrated that the algorithm can handle problems whe r e the num b er of parameter s to be estimated in the mo del is high. F or exa mple, in the spline formulation of the Indo nesian children exam- ple, there were ov er 300 parameters in the mo del. In addition, the alg orithm is generally easy to apply . W e have also demonstrated that it can hav e subs tan- tial gains in computational time o ver tra ditional MCMC in bo th a simulated po isson exa mple and a r eal data binomial example. Finally , p er haps the biggest adv antage o f SMC ov er MCMC samplers is the fact co n vergence of SMC sam- plers do es not rely on con v ergence o f Marko v chains, which can be problema tic in designing more e fficien t algo rithms in complex problems. W e have found that in the context o f Bayesian GLMM analys is , the design of the initial seq uen tial Monte Ca rlo dis tribution may be helped b y using a p- proximate para meter e s timates from cla ssical GLMM analy sis, s uc h as using the PQL metho d to find MLE of the likelihoo d. Note that in the case where such estimates cannot b e easily found, and the only sensible c hoice is a diffuse prior, then a SMC sampler with man y more particle s and sequential dis tributions will be needed to o btain goo d results. In choos ing the schedule for the temper ing sequence γ s in ( 7 ), w e hav e found no subs tant ial difference b et ween the differ en t Y. F an et al./GLM mo del analysis via SMC sampling 934 t yp e s of schedules currently used in the literature , henc e we r ecommend that a simple linear schedule b e adopted in the GLMM con text. W e hav e a lso found that b y tuning the acceptance rate of the Rando m- W alk Metropo lis-Hastings kernel in the Mo ve step of the SMC sampler to around 20-30% sig nificantly improv es the p erforma nc e of the sampler, see [ 9 ], although this practica l finding do es not yet hav e rig orous theoretical supp ort. Finally , in implementing the Mo v e step of the SMC sa mpler, one has so me degree of flexibilit y when the Mar ko v chain Monte Ca rlo update is used. F or example, one may cons ider a b etter ch oice of pro po sal distr ibutions for the Metrop olis-Hasting s algo r ithm, by a llowing the alg orithm to automatically scale a prop os al distribution, see for example [ 5 ]. Here a ma jor adv antage over the traditional MCMC is that the alg o rithm do es not suffer from the restrictions asso ciated w ith a Markov chain, and information from pre vious samples can b e freely used to obtain future s a mples. F urthermo re, one is not restric ted to only MCMC type of mov es in this step, other move types a re po s sible, see [ 8 ]. How ev er, sequential Mon te C a rlo algorithms are not black-b ox a lg orithms, requiring a certain amount o f tuning and us er input. In particular, one needs to set the n um ber o f se quent ial distributions ( S ) the num ber of par ticles to sample ( N ) and tuning para meters for the Metrop o lis -Hastings kernels in the mov e step of the algo rithm. Ac kno wle dgements This resear ch was par tially s uppor ted by Australian Resear ch Co uncil Discov ery Pro ject DP0877 432 (Y. F an), Nuffield F oundatio n g r ant num ber NAL/0 0803/ G (D.S. Leslie) and Australia n Resear ch Co uncil Discovery Pro ject DP08770 55 (M.P . W and). App endix: Algori thmic descripti on o f the SMC sampl er m etho d for GLMMs In this app endix we g ive a detailed description o f how to use the SMC sampler metho d to p erfo rm inference in GLMMs. W e us e the no ta tion of Section 2 ; choices made in the implementation of the algor ithm ar e explained in Sectio n 3 . F or any subset I of { 1 , . . . , P } we wr ite C I for the s ubmatrix of of the design matrix C consisting of columns in I , C −I for the submatrix consis ting of columns o f C not in I , ν ν ν I and ν ν ν −I for the analogously defined sub vectors of ν ν ν . Also for any sq ua re matrix Q w e write Q I I for the square submatrix corres p onding to r ows a nd columns in I , Q I , −I for the s ubmatrix with r ows in I and columns not in I , Q −I , I for the submatrix with ro ws not in I and columns in I , and Q −I , −I for the square submatrix with rows and co lumns not in I . Y. F an et al./GLM mo del analysis via SMC sampling 935 Initialisation • Set the nu m ber of particles N and the num ber of intermediary distribu- tions S . • Construct a vector γ γ γ with s th en try ψ ( s ), s = 0 , 1 , . . . , S , where ψ : { 0 , 1 , . . . , S } → [0 , 1] is a n increa s ing function such that ψ (0) = 0 and ψ ( S ) = 1. F or the results in this pap er w e us ed ψ ( s ) = min { 1 , s/ ( S − 5) } . • Construct subsets I 1 , . . . , I J of { 1 , . . . , P } such that S J j =1 I j = { 1 , . . . , P } . The case J = 1 corresp onds to no blo cking of v ar iables for the mov e step. • Set tuning parameters τ ν j > 0, j = 1 , . . . , J for the Metropolis –Hastings upda tes. Usually these will b e set based on preliminar y runs o f the algo- rithm, and conv enien t defaults are τ ν j = 2 . 4 / p |I j | . • Use the Br eslow & Clayton (1993 ) p enalised quasi-likelihoo d (PQL) a lgo- rithm to obtain initial e s timates: b ν ν ν PQL and b σ σ σ 2 PQL . This is fac ilita ted by softw are such as gl mmPQL( ) in the R pa ck ag e MAS S [ 29 ]. Use these estimates in ( 8 ) to calculate Σ Σ Σ. • F or each j = 1 , . . . , J , ca lc ulate the co nditional cov aria nce under π 0 of ν ν ν I conditional of ν ν ν −I . If Q = Σ − 1 , then this conditiona l cov a riance is Σ Σ Σ I j := ( Q I I ) − 1 . Initial sample fr om π 0 • Pro duce a sample of size N from π 0 : for each i = 1 , . . . , N sample ν i from the normal distribution with mean b ν ν ν PQL and c ov ar iance Σ Σ Σ, then sample σ σ σ 2 i from the conditio na l inv e r se gamma distributions ( 9 ). • Set the weights w i = 1 / N for each i = 1 , . . . , N . Se quential sampling fr om e ach π s F or ea ch s = 1 , . . . , S in turn, Rew eight F or ea ch i = 1 , . . . , N , up date w i according to w i ← w i π s ( ν i , σ σ σ 2 i ) π s − 1 ( ν i , σ σ σ 2 i ) = π ( ν i , σ σ σ 2 i ) π 0 ( ν i , σ σ σ 2 i ) γ s − γ s − 1 then normalis e the weights by setting w i ← w i / P N j =1 w j . T o av oid ov er- flow and underflow problems it is r ecommended that logarithms b e used in this s tep. Resample Calculate the effective s a mple size (ESS) using E S S = ( N X i =1 w i ) 2 / N X i =1 ( w i ) 2 . Y. F an et al./GLM mo del analysis via SMC sampling 936 If E S S < N / 2 (or if s = min { s : γ s = 1 } ) then resample the pa rticles. The naive v ersion of resampling, which in tr o duces unnecessary Monte Carlo v aria tion into the scheme, simply samples (with replacement) from the po o l of par ticles , with par ticle i selected with probability w i . Howev er in our implemen tation w e use stratified resampling [ 21 ] to r educe the Monte Carlo v ariation. After resampling set w i = 1 / N for all i = 1 , . . . , N . Mo v e • F or ea ch j = 1 , . . . , J a nd each i = 1 , . . . , N , generate prop osals ( ˜ ν ν ν i ) I j ∼ N (( ˜ ν ν ν i ) I j , τ ν j Σ Σ Σ I j ), 1 ≤ i ≤ N . With proba bilit y α ( i ) = max ( 1 , π s (( ˜ ν ν ν i ) I j | ( ν ν ν i ) − I j , σ σ σ 2 i ) π s ( ν ν ν i , σ σ σ 2 i ) ) accept the pro po sal and set ( ν ν ν i ) I j = ( ˜ ν ν ν i ) I j . Otherwise reject the prop osal and lea ve ( ν ν ν i ) I j unch anged. Again, it is reco mmended that logarithms b e used when ca lculating α to av oid ov erflow a nd under- flow problems. Note that several par ts of the ra tio in the ca lculation of α ar e the s ame in b oth the numerator and denominator and need not b e ca lculated. • F or eac h ℓ = 1 , . . . , L , a nd for each i = 1 , . . . , N , sa mple ( σ σ σ 2 i ) ℓ from the inv erse gamma distribution with sha pe A uℓ + q ℓ / 2 and rate A uℓ + k u ℓ k 2 / 2. No te that if in verse gamma distributions are not used as the prior distribution for σ σ σ 2 then sa mpling from in v erse gamma distributions here would not result in a transition kernel that a dmits π s as a stationa ry distr ibution. Instead further Metrop olis– Hastings can b e used for each σ 2 uℓ in turn. Note tha t the decision to resample o n the first step at which γ s = 1 means that the fina l sample is an un w eighted sample from π . Hence standard techniques for dealing with samples from p osterio r distr ibutio ns can be used. How ev er the plug-in rule for the bandwidth used in the density estimates p erfor med p o or ly for res ampling close to step S, since some particles were identical. This is the reason that w e genera lly s et γ S − 5 = 1 and finish with five applica tions of the transition kernel to the un w eighted sa mple, resulting in a suitably diverse sample from π . References [1] Besag, J., Gre e n, P .J ., Higdon, D. and Mengersen, K. (199 5 ). Bay esian com- putation and stochastic systems. Stat istic al Scienc e , 10 , 3–66 . MR13498 18 [2] Breslow, N.E. and Clayton, D.G. (19 9 3). Approximate inference in ge ner al- ized linear mixed mo dels. Jour nal of the Americ an St atistic al Ass o ciation , 88 , 9– 25. [3] Breslow, N.E. and Lin X. (19 95). Bias cor rection in gener alised linear mixed mo dels with a single comp onent of disper sion. Biometrika , 82 , 81–9 1. MR13328 40 Y. F an et al./GLM mo del analysis via SMC sampling 937 [4] Carp enter, J ., Clifford, P . and F earnhea d, P . (19 99). An impr ov ed parti- cle filter for non-linear problems. IEE pr o c e e dings — R adar, Sonar a nd Navigation , 14 6 , 2–7. [5] Chopin, N. (2002). A Sequen tial Particle Filter for Static Mo dels. Biometrika , 89 , 5 39–55 1. MR19291 61 [6] Clayton, D. (19 9 6). Generalized linear mixed mo dels, pp. 2 75–30 1. In Markov Chain Monte Carlo in Pr actic e , eds . Gilks, W.R., Ric hardson, S. and Spiegelha lter, D. J. Londo n: Chapman & Ha ll. MR13979 72 [7] Cowles, M. K. and Carlin, B. P . (199 6). Mar ko v chain Monte Carlo conv er- gence diag nostics: A compara tiv e review. Journal of Americ an Statistics Asso ciation , 91 , 88 3–904 . MR13957 55 [8] Del Moral, P ., Doucet, A. and Jasra, A. (2006). Sequen tial Mo nt e Carlo samplers. Journal of the R oyal Statistic al So ciety, Series B , 68 , 41 1–436 . MR22783 33 [9] Del Moral, P ., Doucet, A. and Jasr a, A. (2007 ). Sequential Monte Carlo for Bay esian computation. In Bayesian Statistics 8, eds. J. M. Berna rdo, M. J. Bay arri, J. O. Berger, A. P . Dawid, D. Heck e r man, A. F. M. Smith and M. W est. [10] Diggle, P ., Lia ng, K-L. and Zeger, S. (1995). Analysis of L ongitudinal Data. Oxford: Ox fo rd Universit y P ress. [11] Douc, R., Capp ´ e, O . and Mo ulines, E. (2005). Co mparison of r esampling schemes for pa rticle filtering . Pr o c e e dings of the 4th International Symp o- sium on I mage and Signal Pr o c essing and Analysis , 6 4 –69. [12] Doucet, A., Godsill, S. and Andrieu C. (2000 ). On sequen tial Mon te Carlo sampling metho ds fo r Bay esian filtering . Statistics and Computing , 10 , 197 – 208. [13] F earnhead, P . (200 4). Particle filters for mixture mo dels with an unknown nu m ber of co mpo nents. Statistics and Computing , 14 , 1 1–21. MR20199 02 [14] F rigess i, A. (2003). On Some Curr ent Resea rch in MCMC, pp. 172– 178. In H ighly Str uctur e d Sto cha stic Syst ems , e ds . Green, P .J ., Hjor t, N.L. and Richardson, S. Oxford Universit y Press. MR2082 403 [15] Gamerman, D. (1997). Markov chai n Monte Carlo: Sto chastic simulation for Bayesian infer enc e. Chapman & Ha ll. MR2 26071 6 [16] Gelfand, A.E., Sa hu, S.K. and Car lin, B.P . (19 95). Efficient par a meterisa- tions fo r nor mal linea r mixed mo dels. Biometrika , 82 , 4 79–48 8. MR1366275 [17] Gelfand, A.E. and Smith, A. F. M. (199 0). Sampling Based Approaches to Calculating Mar g inal Densities. Journal of the Americ an Statistic al Asso- ciation , 85 , 398– 409. MR11417 40 [18] Gelman, A. (2006). Pr ior distributions for v ariance parameter s in hierar - chical models. Bayesian Analysis , 1 , 515– 533. MR22212 84 [19] Gilks, W. R. and Berzuini, C. (2001 ). F ollowing a moving ta rget—Monte Carlo inference for dynamic Bay esian mo dels. Journal of t he R oya l St atis- tic al So ciety, Series B , 63 , 12 7–146 . MR18119 95 [20] Kong, A., Liu, J.S., and W o ng, W.H. (1994). Sequential imputations and Bay esian miss ing data problems. Journal of Americ an Statistics Asso cia- tion , 89 (42 5), 278–2 88. Y. F an et al./GLM mo del analysis via SMC sampling 938 [21] Kitagaw a, G. (1996). Mon te Carlo filter a nd smoother for non-Gaussian, non-linear state space mo dels. Journal of Computational and Gr aphi c al Statistics , 5 , 1 –25. MR13808 50 [22] Lin, X. a nd Carr oll, R.J. (2001) Semipar ametric regr ession for clustered data. Biometrika , 8 8 , 117 9–186 5. MR18722 28 [23] McCullo ch, C .E ., and Sear le, S.R. (2 000). Gener alize d, Line ar, and Mixe d Mo dels . New Y o rk: John Wiley & Sons. MR18845 06 [24] Neal, R. (2001 ). Anneale d importanc e sampling. Statistics and Computing , 11 , 12 5–139 . MR1837 132 [25] Rober ts, G. O., Gelman, A. and Gilks, W. R. (1 997). W eak convergence and optimal scaling of ra ndom walk Metro po lis alg orithms. Annals of Applie d Pr ob abil ity , 7 , 1 10–12 0. MR142 8751 [26] Rober ts, G. O. a nd Sahu, S. K. (1997). Updating s c hemes, cov a r iance struc- ture, blo cking and parameter isation for the Gibbs s ampler. Journal of the R oya l St atistic al So ciety, Series B , 59 , 291–3 1 7. MR14405 84 [27] Ruppert, D., W and, M. P . and Carroll, R.J. (2 003). Semip ar ametric R e- gr ession . New Y ork: Cambridge Universit y P ress. MR199 8720 [28] Skrondal, A. and Rab e-Hesketh, S. (2004). Gener alize d L atent V ariable Mo deling: Multilevel, L ongitudinal and Structur al Equation Mo dels. Bo ca Raton, Flor ida: Chapman & Hall. MR2 0 5902 1 [29] V enables, W. & Ripley , B.D. (20 05). MASS . R pa c k age within the bundle VR 7.2-24 . http://c ran.r- p roject.org . [30] Zhao, Y., Staudenmay er, J ., Co ull, B.A. and W and, M.P . (200 6 ). Genera l design Bay esian generalized linear mixed mo dels. Statistic al Scienc e , 21 , 35–51 . MR22759 66

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment