A Simple Converse Proof and a Unified Capacity Formula for Channels with Input Constraints


Authors: Youjian Liu

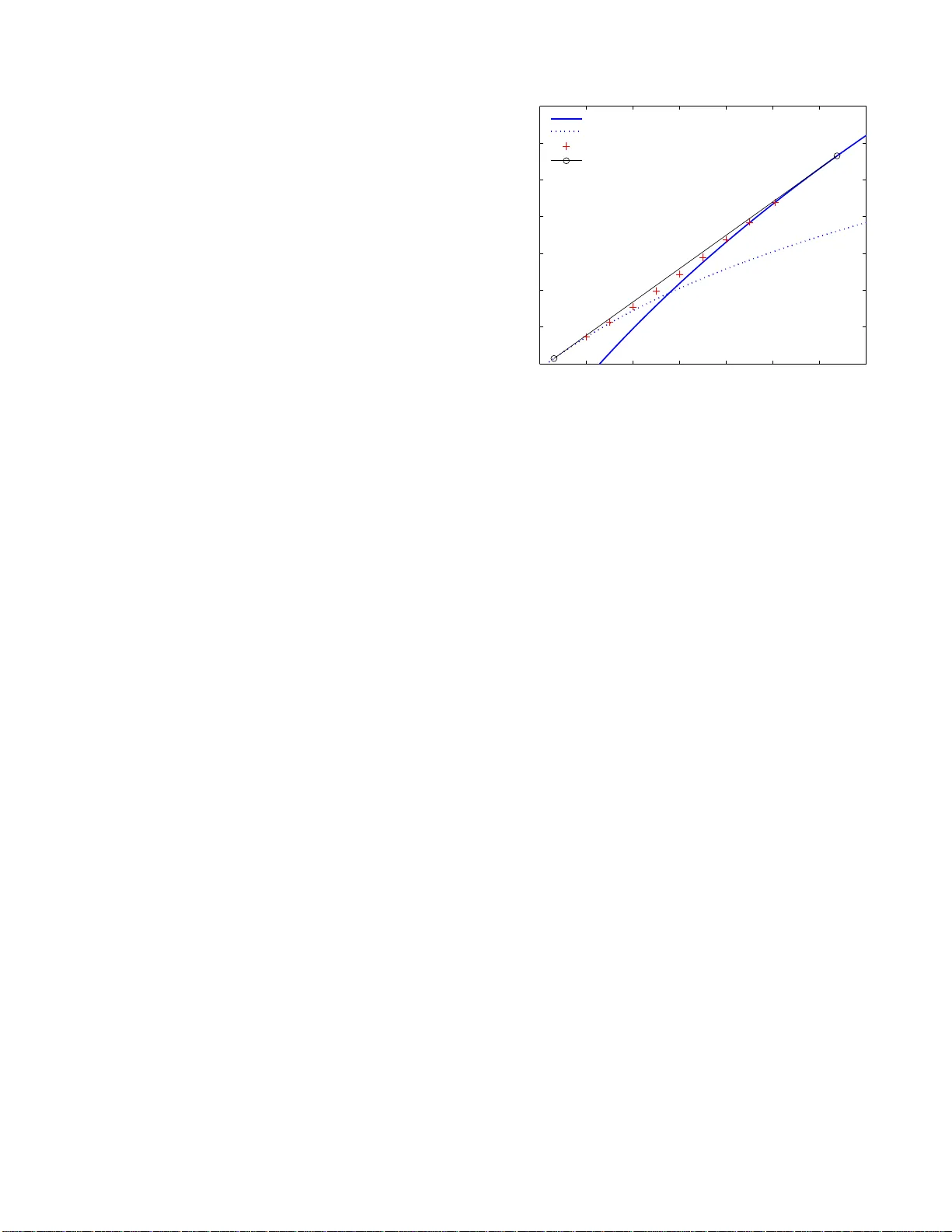

Youjian (Eugene) Liu, Department of Electrical and Computer Engineering, University of Colorado at Boulder, eugeneliu@ieee.org

Abstract: Given the single-letter capacity formula and the converse proof of a channel without input constraints, we provide a simple approach to extend the results to the same channel with input constraints. The resulting capacity formula is the minimum of a Lagrange dual function. It is a unified formula in the sense that it works regardless of whether the problem is convex. If the problem is non-convex, we show that the capacity can be larger than the formula obtained by the naive approach of imposing the constraints on the maximization in the capacity formula of the case without the constraints. The converse proof is extended simply by adding a term involving the Lagrange multiplier and the constraints; the rest of the proof does not need to be changed. We name the proof method the Lagrangian Converse Proof. In contrast, traditional approaches need to construct a better input distribution for convex problems or need to introduce a time sharing variable for non-convex problems. We illustrate the Lagrangian Converse Proof for three channels: the classic discrete time memoryless channel, the channel with non-causal channel-state information at the transmitter, and the channel with limited channel-state feedback. The extension to rate distortion theory is also provided.

Index Terms: Converse, Coding Theorem, Capacity, Rate Distortion, Duality, Lagrange Dual Function

I. INTRODUCTION

Naively imposing input constraints on the maximization in the single-letter capacity formula of a channel without input constraints often produces the capacity formula of the same channel with the constraints.
For example, the classic discrete time memoryless channel without input constraints has capacity $C' = \max_{p_X} I(X;Y)$, and with a power constraint the capacity is $C = \max_{p_X:\, E[X^2] \le \rho_0} I(X;Y)$. Such cases are so prevalent that one may suspect this is always the case. We started with this belief while working on channels with limited channel-state feedback. If one denotes the single-letter capacity for the case without the constraint as
$$C' = \max \text{Mutual Information}, \tag{1}$$
then, contrary to the conventional belief, we found in [1], [2] that the capacity for the case with the constraint can be larger than
$$R = \max_{\text{constraint}} \text{Mutual Information},$$
and that the capacity can be expressed as
$$C = \min_{\lambda \ge 0} \text{Lagrange Dual Function}(\lambda) \tag{2}$$
$$= R + \text{Duality Gap} \ge R, \tag{3}$$
where the Lagrange dual function [3] to the primal problem $R$ accounts for the constraint. Capacity formula (2) reduces to the maximum of the mutual information when the duality gap is zero; therefore, (2) is a unified expression covering both nonzero and zero duality gaps.

This work was supported by NSF Grants CCF-0728955, ECCS-0725915, and Thomson Inc. A single-column version of this paper was submitted to IEEE Transactions on Information Theory on June 17, 2008.

During the discovery of the capacity result for the channel with limited feedback and with constraints, we found a new proof of the converse part of the capacity theorem. It is obtained by modifying the converse proof for the case without the constraints: a term involving the Lagrange multiplier and the constraints is added to the second-to-last expression, and the rest of the proof is unchanged. We call such a proof the Lagrangian Converse Proof. With little modification, the method can also be used to prove the converse part of the rate distortion theorem. Its unexpected simplicity and its potential to obtain new results with ease motivate us to report it here.
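To make (2)–(3) concrete, here is a toy sketch in Python with NumPy. It assumes a hypothetical menu of three strategies, each reduced to a (rate, cost) pair; the numbers are illustrative and do not come from the paper. The naive primal value $R$ uses a single strategy that meets the cost budget on its own, while the minimum of the Lagrange dual function also credits mixtures of strategies, so the computed gap is positive.

```python
import numpy as np

# Hypothetical (rate, cost) pairs of three strategies; illustrative numbers only.
rates = np.array([0.0, 1.0, 1.2])
costs = np.array([0.0, 1.0, 2.0])
rho0 = 1.5  # cost budget


def primal_value(rates, costs, rho0):
    """R: the best single strategy whose own cost meets the budget."""
    return float(rates[costs <= rho0].max())


def dual_function(lams, rates, costs, rho0):
    """Lagrange dual function, vectorized over lams: max_s r_s - lam * (rho_s - rho0)."""
    lams = np.atleast_1d(lams)
    return np.max(rates[None, :] - lams[:, None] * (costs - rho0)[None, :], axis=1)


lams = np.linspace(0.0, 5.0, 50001)                # grid over the multiplier lam >= 0
dual_vals = dual_function(lams, rates, costs, rho0)
C = float(dual_vals.min())                         # min over lam, as in (2)
lam_star = float(lams[dual_vals.argmin()])
R = primal_value(rates, costs, rho0)
print(f"R = {R:.3f}, min dual = {C:.3f}, gap = {C - R:.3f}, lambda* = {lam_star:.3f}")
```

With these numbers, using the second and third strategies half of the time each meets the budget exactly ($0.5 \cdot 1.0 + 0.5 \cdot 2.0 = 1.5$) and attains the dual minimum ($0.5 \cdot 1.0 + 0.5 \cdot 1.2 = 1.1$). This is consistent with the time sharing interpretation of the gap.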
A meaningful theory should be able to explain the past and predict the future. In this paper, we show that the Lagrangian Converse Proof can simplify the existing proof of the capacity of the classic discrete memoryless channels and the proof of the capacity of the channels with non-causal channel-state information at the transmitter (CSIT) [4]–[6]. In addition, we illustrate how to use it to obtain new capacity results for channels with limited channel-state feedback [1], [2]. To explain why the capacity can be greater than the maximum of the mutual information, as shown in (3), we use the convex hull of the capacity region of the single-user channel. Indeed, even for single-user channels, investigating the capacity region is meaningful when the capacity needs to be achieved using time sharing. The minimum of the Lagrange dual function conveniently characterizes the boundary points of the capacity region without explicitly employing time sharing. The intuition is that when the duality gap is greater than zero, the maximization inside (2) has multiple solutions at the optimal multiplier: some use less than the allowed cost and some use more. A time sharing of these solutions achieves the capacity and, at the same time, satisfies the constraint exactly. Therefore, the capacity can alternatively be expressed as the maximum of the time sharing of the mutual information.

[Fig. 1. A discrete memoryless channel: the transmitter maps the message $W$ to the input $X$, the channel $p(y|x)$ produces the output $Y$, and the receiver forms the estimate $\hat{W}$.]

In summary, the contributions of the paper are as follows.
• A simple converse proof is provided for the capacities of channels with constraints and for rate distortion theorems;
• Expressed using the Lagrange dual function, a unified capacity formula is presented and shown to have an intimate relation to the convex hull of the capacity region and to time sharing.
  Free of time sharing variables, the expression also makes the capacities easier to calculate. The capacity formula also has a pleasant symmetric relation to the rate distortion function.

In Section II, the simplicity of the Lagrangian Converse Proof is illustrated for three channels: the discrete memoryless channel, the channel with non-causal channel-state information, and the channel with limited channel-state feedback. For the last of these, the relation among the capacity formula, the capacity region, and time sharing is explained. In Section III, the converse proof is extended to rate distortion theory, and the dual relation between channel capacity and rate distortion is briefly discussed. Section IV summarizes how to use the proposed converse proof.

II. THE LAGRANGIAN CONVERSE PROOF FOR CHANNEL CAPACITIES

There are two traditional methods of converse proof for channels with input constraints. The first method takes advantage of the convexity of the problem. It starts from any input distribution induced by the information message and the code, and produces a better input distribution that also satisfies the input constraints. Section II-A compares this method with the Lagrangian Converse Proof for the classic discrete memoryless channels. The second method introduces a time sharing variable for non-convex problems. Sections II-B and II-C compare it with the new converse proof for channels with non-causal channel-state information at the transmitter and for channels with limited feedback. For channels with limited feedback, an example with a nonzero duality gap is provided.

A. The Capacity of the Discrete Memoryless Channels

The channel $(\mathcal{X}, p_{Y|X}, \mathcal{Y})$ in Figure 1 is a memoryless channel with finite alphabets $\mathcal{X}$ and $\mathcal{Y}$ for input $X \in \mathcal{X}$ and output $Y \in \mathcal{Y}$.
The inputs over $N$ channel uses satisfy the constraint
$$\frac{1}{N}\sum_{n=1}^{N} E[\alpha(X_n)] \le \rho_0, \tag{4}$$
where the expectation is over the information message and $\alpha(\cdot): \mathcal{X} \to \mathbb{R}$ is a real-valued function. For example, it is a power constraint if $\alpha(X) = X^2$. It is well known that the capacity of this channel without the constraint is
$$C'_1 = \max_{p_X} I(X;Y), \tag{5}$$
and with the constraint the capacity is
$$R_1 = \max_{p_X:\, E[\alpha(X)] \le \rho_0} I(X;Y). \tag{6}$$
The Lagrange dual function of (6) is
$$L_1(\lambda, \rho_0) \triangleq \max_{p_X} \; I(X;Y) - \lambda\big(E[\alpha(X)] - \rho_0\big). \tag{7}$$
It is an upper bound on $R_1$ for every $\lambda \ge 0$, because for every $p_X$ that satisfies $E[\alpha(X)] \le \rho_0$ the penalty term $-\lambda(E[\alpha(X)] - \rho_0)$ is nonnegative [3]. The duality gap $G_1$ is defined as the least upper bound minus $R_1$, i.e., $G_1 = \inf_{\lambda \ge 0} L_1(\lambda, \rho_0) - R_1$. The mutual information is a convex ∩ function of the input distribution $p_X$, and the input constraint is linear in $p_X$. Hence $R_1$ is a convex ∩ function of $\rho_0$. Therefore, the duality gap $G_1$ is zero [7], and the capacity can be expressed as
$$C_1 = \min_{\lambda \ge 0} L_1(\lambda, \rho_0) \tag{8}$$
$$= L_1(\lambda^*, \rho_0) = R_1. \tag{9}$$

We now compare the converse proofs with and without the constraint. The last step of the converse proof for the case without the constraint is $\sum_{n=1}^{N} I(X_n;Y_n) \le N C'_1$, where $C'_1$ dominates $I(X_n;Y_n)$ for every $n$. With the input constraint, the additional steps of the traditional proof of the converse [8, Chapter 7.3] are
$$\sum_{n=1}^{N} I(X_n;Y_n) \le N I(X;Y) \tag{10}$$
$$\le N R_1. \tag{11}$$
Unlike in the case without input constraints, $R_1$ may not dominate every $I(X_n;Y_n)$. The constraint (4) is averaged over $N$ channel uses, so it is possible that $E[\alpha(X_n)] > \rho_0$ for some $n$. One therefore has to construct a new input distribution $P_X(x) = \frac{1}{N}\sum_{n=1}^{N} p_{X_n}(x)$. Using the property that the mutual information is a convex ∩ function of the input distribution then gives (10), in which $X$ has distribution $P_X$.
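As a concrete instance of the dual function (7) and the zero-gap identity (8)–(9), the sketch below evaluates $L_1(\lambda, \rho_0)$ with Blahut–Arimoto iterations that include an input-cost term. This is a standard variant of the algorithm chosen here for illustration; the paper does not prescribe a numerical method. The sketch then minimizes over $\lambda$ by ternary search, which is valid because $L_1$ is convex in $\lambda$. The binary symmetric channel, the cost vector, and the budget are assumed example values.

```python
import numpy as np


def mutual_information(p, W):
    """I(X;Y) in nats for input pmf p and channel matrix W[x, y] = p(y|x)."""
    q = p @ W
    with np.errstate(divide="ignore", invalid="ignore"):
        terms = np.where(W > 0, W * np.log(W / q), 0.0)
    return float(p @ terms.sum(axis=1))


def lagrange_dual(W, alpha, lam, rho0, iters=500):
    """L_1(lam, rho0) = max_p I(X;Y) - lam * (E[alpha(X)] - rho0), evaluated with
    Blahut-Arimoto iterations that carry an input-cost term."""
    p = np.full(W.shape[0], 1.0 / W.shape[0])
    for _ in range(iters):
        q = p @ W
        with np.errstate(divide="ignore", invalid="ignore"):
            div = np.where(W > 0, W * np.log(W / q), 0.0).sum(axis=1)  # D(W(.|x) || q)
        c = np.exp(div - lam * alpha)
        p = p * c / (p @ c)
    return mutual_information(p, W) - lam * (p @ alpha - rho0)


def capacity_with_cost(W, alpha, rho0, lam_max=5.0, steps=40):
    """C_1 = min over lam in [0, lam_max] of L_1(lam, rho0), by ternary search
    (valid because L_1 is convex in lam)."""
    lo, hi = 0.0, lam_max
    for _ in range(steps):
        m1, m2 = lo + (hi - lo) / 3, hi - (hi - lo) / 3
        if lagrange_dual(W, alpha, m1, rho0) <= lagrange_dual(W, alpha, m2, rho0):
            hi = m2
        else:
            lo = m1
    lam_star = (lo + hi) / 2
    return float(lagrange_dual(W, alpha, lam_star, rho0)), lam_star


# Assumed example: binary symmetric channel with crossover 0.1, where sending
# "1" costs 1 and sending "0" costs 0, under the budget rho0 = 0.3.
W = np.array([[0.9, 0.1], [0.1, 0.9]])
alpha = np.array([0.0, 1.0])
rho0 = 0.3
C1, lam_star = capacity_with_cost(W, alpha, rho0)

# Brute-force primal value R_1 of (6): search the feasible inputs P(X=1) <= rho0.
R1 = max(mutual_information(np.array([1 - t, t]), W) for t in np.linspace(0.0, rho0, 3001))
print(f"C_1 = {C1:.5f}, R_1 = {R1:.5f}, lambda* = {lam_star:.4f}")
```

Because this problem is convex, the two values agree up to numerical accuracy, as (9) asserts. The returned multiplier is positive because the budget is binding here.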
Luckily, the new input distribution satisfies the constraint $E[\alpha(X)] \le \rho_0$. Because $E[\alpha(X)]$ is linear in $p_X$, we have $E_{P_X}[\alpha(X)] = \frac{1}{N}\sum_{n=1}^{N} E[\alpha(X_n)] \le \rho_0$, and thus one obtains (11).

Using the Lagrangian Converse Proof, the key step is to add a Lagrange multiplier term:
$$\sum_{n=1}^{N} I(X_n;Y_n) \le \sum_{n=1}^{N} \Big(I(X_n;Y_n) - \lambda^*\big(E[\alpha(X_n)] - \rho_0\big)\Big) \tag{12}$$
$$\le N C_1, \tag{13}$$
where $\lambda^* \ge 0$ is the solution in (9). Step (12) holds because the $X_n$'s satisfy the constraint, so $-\lambda^*\big(\sum_{n=1}^{N} E[\alpha(X_n)] - N\rho_0\big) \ge 0$. Step (13) holds because $C_1$ of (9) dominates each summand in (12), as in the case without constraints. Each summand is the objective of (7) evaluated at $p_{X_n}$ with $\lambda = \lambda^*$, so it is at most $L_1(\lambda^*, \rho_0) = C_1$. Intuitively, the power penalty $\lambda^*(E[\alpha(X_n)] - \rho_0)$ punishes excessive power use. The simplification is that we do not need to construct a better input distribution $p_X$. This matters when there is no obvious way to find a better $p_X$.

[Fig. 2. A channel with non-causal channel-state information at the transmitter: the transmitter maps the message $W$ and the state sequence $S_{1,1}^N$ to the inputs $X_1^N$; the channel $p(y|x, s_1, s_2)$, with states drawn from $p(s_1, s_2)$, produces $Y_1^N$; the receiver uses $Y_1^N$ and $S_{2,1}^N$ to form $\hat{W}$.]

B. The Capacity of Channels with Non-causal Channel-State Information at the Transmitter

As shown in Figure 2, the memoryless channel with finite alphabets is characterized by $(\mathcal{X}, \mathcal{Y}, \mathcal{S}_1, \mathcal{S}_2, p_{S_1,S_2}, p_{Y|X,S_1,S_2})$. Here $X \in \mathcal{X}$ is the channel input; $(S_1, S_2) \in \mathcal{S}_1 \times \mathcal{S}_2$ is the channel state with distribution $p_{S_1,S_2}$; $p_{Y|X,S_1,S_2}$ is the channel transition probability; and $(Y, S_2) \in \mathcal{Y} \times \mathcal{S}_2$ is the channel output, i.e., the channel state $S_2$ is non-causally known at the receiver. The channel state $S_1$ is non-causally known at the transmitter. In the proof of the converse, the inputs over $N$ channel uses satisfy the constraint
$$\frac{1}{N}\sum_{n=1}^{N} E[\alpha(X_n)] \le \rho_0, \tag{14}$$
where the expectation is over the information message and the state $S_1$.
Without input constraints, the capacity is obtained directly in [5]. It can also be obtained from [4] by treating $(Y, S_2)$ as the channel output. The capacity is
$$C'_2 = \max_{\mathcal{U},\, X = \varphi(U,S_1),\, p_{U|S_1}} I(U; S_2, Y) - I(U; S_1),$$
where $X$ is a deterministic function of $U$ and $S_1$, and $U \in \mathcal{U}$ is an auxiliary random variable. With the input constraint, the capacity is
$$C_2 = \min_{\lambda \ge 0} L_2(\lambda, \rho_0) \tag{15}$$
$$= L_2(\lambda^*, \rho_0) \tag{16}$$
$$= R_2, \tag{17}$$
where
$$R_2 = \max_{\mathcal{U},\, X = \varphi(U,S_1),\, p_{U|S_1}:\, E[\alpha(X)] \le \rho_0} I(U; S_2, Y) - I(U; S_1); \tag{18}$$
$$L_2(\lambda, \rho_0) \triangleq \max_{\mathcal{U},\, X = \varphi(U,S_1),\, p_{U|S_1}} \Big\{ I(U; S_2, Y) - I(U; S_1) - \lambda\big(E[\alpha(X)] - \rho_0\big) \Big\} \tag{19}$$
is the Lagrange dual function to the primal problem (18); and
$$E[\alpha(X)] = \sum_{s_1} \sum_{u} p_{S_1}(s_1)\, p_{U|S_1}(u|s_1)\, \alpha(\varphi(u, s_1)).$$
Equality (17) holds because $U$ can include a time sharing variable [7]. As a result, $R_2$ is a convex ∩ function of $\rho_0$, and the duality gap is therefore zero.

The traditional proof for the case with the constraint introduces a time sharing variable as follows [6]:
$$I(W; Y_1^N, S_{2,1}^N) \le \sum_{n=1}^{N} I(U_n; Y_n, S_{2,n}) - I(U_n; S_{1,n}) \tag{20}$$
$$= N\big(I(U; Y, S_2 \,|\, Q) - I(U; S_1 \,|\, Q)\big) \tag{21}$$
$$= N\big(I(U,Q; Y, S_2) - I(Q; Y, S_2) - I(U,Q; S_1) + I(Q; S_1)\big) \tag{22}$$
$$\le N\big(I(U,Q; Y, S_2) - I(U,Q; S_1)\big) \tag{23}$$
$$= N\big(I(\bar{U}; Y, S_2) - I(\bar{U}; S_1)\big) \tag{24}$$
$$\le N R_2, \tag{25}$$
where $W$ is the information message and $U_n = (W, Y_1^{n-1}, S_{2,1}^{n-1}, S_{1,n+1}^{N})$. Step (20) is obtained in [5]. Step (21) follows from the definition of conditional mutual information, letting $Q$ be uniformly distributed over $\{1, 2, \ldots, N\}$ and setting $U = U_Q$, $S_1 = S_{1,Q}$, $S_2 = S_{2,Q}$, and $Y = Y_Q$. Step (22) follows from the chain rule of mutual information. Step (23) follows from the fact that $S_{1,1}, \ldots, S_{1,N}$ are i.i.d.
and thus $I(Q; S_1) = 0$, and from $I(Q; Y, S_2) \ge 0$. Step (24) follows from defining $\bar{U} = (U, Q)$. Step (25) follows from $\frac{1}{N}\sum_{n=1}^{N} E[\alpha(X_n)] = E[\alpha(X_Q)] = E[\alpha(X)] \le \rho_0$, together with the fact that (24) is a convex ∪ function of $p_{X|\bar{U},S_1}$ when $p_{\bar{U}|S_1}$ is fixed. This convexity implies that the optimal $X$ is a deterministic function of $\bar{U}$ and $S_1$.

Using the Lagrangian Converse Proof, the same capacity result can be obtained without resorting to the time sharing variable:
$$I(W; Y_1^N, S_{2,1}^N) \le \sum_{n=1}^{N} I(U_n; Y_n, S_{2,n}) - I(U_n; S_{1,n})$$
$$\le \sum_{n=1}^{N} \Big[ I(U_n; Y_n, S_{2,n}) - I(U_n; S_{1,n}) - \lambda^*\big(E[\alpha(X_n)] - \rho_0\big) \Big] \tag{26}$$
$$\le N C_2. \tag{27}$$
Step (26) holds because the $X_n$'s satisfy the average power constraint. Step (27) holds because, by the same argument as for (25), each summand is at most $L_2(\lambda^*, \rho_0) = C_2$.

So far, we have seen two examples where the duality gap is zero. One might wonder whether the proof still works when the duality gap is not zero. In the next subsection, we show that it does.

[Fig. 3. A channel with limited and designable feedback: the transmitter maps the message $W$ and the feedback $U$ to the input $X$; the channel $p(y|x, v)$ with state $V \sim p(v)$ produces $Y$; the receiver forms $\hat{W}$; a designable feedback generator sends $U$ back at a rate of $R_{\text{fb}}$ bits/channel use.]

C. Capacity of Channels with Limited Feedback and Input Constraint

We consider a channel with designable finite-rate (limited) feedback. As shown in Figure 3, the memoryless channel with finite alphabets is characterized by $(\mathcal{X}, \mathcal{Y}, \mathcal{V}, \mathcal{U}, p_V, p_{Y|X,V})$. Here $X \in \mathcal{X}$ is the channel input, $V \in \mathcal{V}$ is the channel state with distribution $p_V$, $p_{Y|X,V}$ is the channel transition probability, and $(Y, V) \in \mathcal{Y} \times \mathcal{V}$ is the channel output, i.e., the channel state is known at the receiver. For the $n$th channel use, the transmitter receives channel-state feedback $U_n \in \mathcal{U} = \{1, \ldots, 2^{R_{\text{fb}}}\}$ from the receiver. This feedback is causal but not strictly causal, finite-rate, and error-free.
The feedback $U_n$ could be designed as a deterministic or random function of the current channel state $V_n$ and/or the past channel states $V_1^{n-1}$. Because the receiver produces $U_n$, it is known to the receiver. In the proof of the converse, the inputs over $N$ channel uses satisfy the constraint
$$\frac{1}{N}\sum_{n=1}^{N} E[\alpha(X_n)] \le \rho_0, \tag{28}$$
where the expectation is over the information message and the feedback. The capacity [9] of this channel without the input constraint is
$$C'_3 = \max_{\varphi(\cdot),\, p_{X|U}} I(X; Y \,|\, U = \varphi(V), V) = \max_{\varphi(\cdot),\, p_{X|U}} \sum_{v} p(v)\, I\big(p_{X|U}(\cdot|\varphi(v)),\, p_{Y|X,V}(\cdot|\cdot, v)\big). \tag{29}$$
The important claim here is that the feedback $U = \varphi(V)$ is a deterministic and memoryless function of the current channel state $V$. In (29), the mutual information is written as a function of its input distribution and its channel transition probability. Based on $C'_3$, one might expect the capacity with the input constraint to be
$$R_3 = \max_{\varphi(\cdot),\, p_{X|U}:\, E[\alpha(X)] \le \rho_0} I(X; Y \,|\, U = \varphi(V), V). \tag{30}$$
The surprising result is that the capacity may be larger than $R_3$.

Theorem 1 [1], [2]: The capacity of the channel $(\mathcal{X}, \mathcal{Y}, \mathcal{V}, \mathcal{U}, p_V, p_{Y|X,V})$ with designable finite-rate ($|\mathcal{U}| = 2^{R_{\text{fb}}}$) channel-state feedback and input constraint $\rho_0$ is
$$C_3 = \min_{\lambda \ge 0} L_3(\lambda, \rho_0) \tag{31}$$
$$= L_3(\lambda^*, \rho_0) \tag{32}$$
$$= R_3 + \text{duality gap} \ge R_3,$$
where
$$L_3(\lambda, \rho_0) \triangleq \max_{\varphi(\cdot),\, p_{X|U}} \Big\{ I(X; Y \,|\, U = \varphi(V), V) - \lambda\big(E[\alpha(X)] - \rho_0\big) \Big\} \tag{33}$$
is the Lagrange dual function to the primal problem (30).

1) Without the Input Constraint: We first review the key steps of the converse proof without the input constraint [2].
The mutual information between the information message and the received signal is bounded as
$$I(W; Y_1^N, V_1^N, U_1^N) \le \sum_{n=1}^{N} \sum_{u_1^{n-1}} p(u_1^{n-1})\, f_3\big(f_1^{(u_1^{n-1})}(u|v),\, f_2^{(u_1^{n-1})}(x|u)\big) \tag{34}$$
$$\le \sum_{n=1}^{N} \sum_{u_1^{n-1}} p(u_1^{n-1})\, f_3\big(p^*_{U|V}(u|v),\, p^*_{X|U}(x|u)\big) \tag{35}$$
$$= N C'_3, \tag{36}$$
where (34) is obtained in [1], [2], and
$$f_3\big(f_1(u|v), f_2(x|u)\big) \triangleq \sum_{v} p_V(v) \sum_{u} f_1(u|v)\, I\big(f_2(\cdot|u),\, p_{Y|X,V}(\cdot|\cdot, v)\big),$$
$$f_1^{(u_1^{n-1})}(u|v) = p_{U_n|V_n,U_1^{n-1}}(u|v, u_1^{n-1}), \qquad f_2^{(u_1^{n-1})}(x|u) = p_{X_n|U_n,U_1^{n-1}}(x|u, u_1^{n-1}).$$
Let $p^*_{U|V}(u|v)$ and $p^*_{X|U}(x|u)$ be the solution to
$$\max_{p_{U|V}(u|v),\, p_{X|U}(x|u)} f_3\big(p_{U|V}(u|v), p_{X|U}(x|u)\big) = \sum_{v} p_V(v) \sum_{u} p^*_{U|V}(u|v)\, I\big(p^*_{X|U}(\cdot|u),\, p_{Y|X,V}(\cdot|\cdot, v)\big).$$
Note that $p^*_{U|V}(u|v)$ and $p^*_{X|U}(x|u)$ are not functions of $u_1^{n-1}$, because $f_3(\cdot,\cdot)$ is not a function of $u_1^{n-1}$. Furthermore, $f_3\big(p_{U|V}(u|v), p_{X|U}(x|u)\big)$ is a linear function on the simplex $\{p_{U|V}(u|v), u \in \mathcal{U}\}$. Thus the optimal $p^*_{U|V}(u|v)$ is attained at an extreme point $p^*_{U|V}(u|v) = \delta[u - \varphi^*(v)]$ for some deterministic function $\varphi^*(\cdot)$, where $\delta[x] = 1$ if $x = 0$ and $\delta[x] = 0$ otherwise. Therefore, (35) and (36) are obtained.

2) With the Input Constraint: The traditional method reviewed in Section II-A does not work here. One cannot produce a better feedback function and input distribution $\big(p_{U|V}(u|v), p_{X|U}(x|u)\big)$ by averaging $\big(f_1^{(u_1^{n-1})}(u|v), f_2^{(u_1^{n-1})}(x|u)\big)$. The reason is that $f_3\big(p_{U|V}(u|v), p_{X|U}(x|u)\big)$ is not jointly convex ∩ in $\big(p_{U|V}(u|v), p_{X|U}(x|u)\big)$.
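A small hypothetical instance (not from the paper) shows why averaging fails. It assumes two equiprobable states, four inputs, binary outputs, and one bit of feedback. In state 0 only inputs 0 and 1 pass noiselessly; in state 1 only inputs 2 and 3 do; every other input produces a uniformly random output. Two mirror-image designs each attain $f_3 = \ln 2$ nats, but their componentwise average attains only $\tfrac{1}{2}\ln 2$, so $f_3$ is not jointly convex ∩.

```python
import numpy as np


def mutual_info(p, W):
    """I(X;Y) in nats for input pmf p and channel matrix W[x, y] = p(y|x)."""
    q = p @ W
    with np.errstate(divide="ignore", invalid="ignore"):
        terms = np.where(W > 0, W * np.log(W / q), 0.0)
    return float(p @ terms.sum(axis=1))


def f3(f1, f2, p_v, channels):
    """f_3 = sum_v p_V(v) sum_u f_1(u|v) I(f_2(.|u), p_{Y|X,V}(.|., v)).
    f1[v, u] = f_1(u|v), f2[u, x] = f_2(x|u), channels[v][x, y] = p(y|x, v)."""
    return sum(p_v[v] * f1[v, u] * mutual_info(f2[u], channels[v])
               for v in range(len(p_v)) for u in range(f1.shape[1]))


half = [0.5, 0.5]
W0 = np.array([[1.0, 0.0], [0.0, 1.0], half, half])  # state 0: inputs 0, 1 are clean
W1 = np.array([half, half, [1.0, 0.0], [0.0, 1.0]])  # state 1: inputs 2, 3 are clean
channels = [W0, W1]
p_v = [0.5, 0.5]

on01 = [0.5, 0.5, 0.0, 0.0]   # uniform over inputs {0, 1}
on23 = [0.0, 0.0, 0.5, 0.5]   # uniform over inputs {2, 3}
# Design a: feedback u = v. Design b: feedback u = 1 - v (a relabeling of a).
f1_a, f2_a = np.eye(2), np.array([on01, on23])
f1_b, f2_b = np.array([[0.0, 1.0], [1.0, 0.0]]), np.array([on23, on01])
f1_mid, f2_mid = (f1_a + f1_b) / 2, (f2_a + f2_b) / 2  # componentwise average

val_a = f3(f1_a, f2_a, p_v, channels)
val_b = f3(f1_b, f2_b, p_v, channels)
val_mid = f3(f1_mid, f2_mid, p_v, channels)
print(f"f3(a) = {val_a:.4f}, f3(b) = {val_b:.4f}, f3(average) = {val_mid:.4f}")
```

Neither design is better than the other, yet their mixture is worse than both. The averaging argument of Section II-A therefore has nothing to work with here.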
However, one could introduce a time sharing variable, as shown in Section II-B. Here, though, the time sharing variable cannot be absorbed into an existing auxiliary variable of the capacity formula as in (24). Therefore, we resort to the Lagrangian Converse Proof [2]. The key steps are
$$I(W; Y_1^N, V_1^N, U_1^N) \le \sum_{n=1}^{N} \sum_{u_1^{n-1}} p(u_1^{n-1})\, f_3\big(f_1^{(u_1^{n-1})}(u|v),\, f_2^{(u_1^{n-1})}(x|u)\big) - \lambda^* \sum_{n=1}^{N} \big(E[\alpha(X_n)] - \rho_0\big) \tag{37}$$
$$\le \sum_{n=1}^{N} \sum_{u_1^{n-1}} p(u_1^{n-1})\, f_4\big(p^*_{U|V}(u|v),\, p^*_{X|U}(x|u),\, \lambda^*\big) \tag{38}$$
$$= N C_3, \tag{39}$$
where $\lambda^*$ is the solution to (32). Step (37) holds because the constraint is satisfied, so $-\lambda^* \sum_{n=1}^{N} \big(E[\alpha(X_n)] - \rho_0\big) \ge 0$. The function $f_4$ is defined as
$$f_4\big(f_1(u|v), f_2(x|u), \lambda\big) \triangleq \sum_{v} p_V(v) \sum_{u} f_1(u|v) \Big[ I\big(f_2(\cdot|u),\, p_{Y|X,V}(\cdot|\cdot, v)\big) - \lambda\Big(\sum_{x} f_2(x|u)\, \alpha(x) - \rho_0\Big) \Big].$$
Let $p^*_{U|V}(u|v)$ and $p^*_{X|U}(x|u)$ be the solution to
$$\max_{p_{U|V}(u|v),\, p_{X|U}(x|u)} f_4\big(p_{U|V}(u|v), p_{X|U}(x|u), \lambda^*\big).$$
Since $E[\alpha(X_n)] - \rho_0 = \sum_{u_1^{n-1}} p(u_1^{n-1}) \sum_v p_V(v) \sum_u f_1^{(u_1^{n-1})}(u|v) \big(\sum_x f_2^{(u_1^{n-1})}(x|u)\,\alpha(x) - \rho_0\big)$, the right-hand side of (37) equals $\sum_{n} \sum_{u_1^{n-1}} p(u_1^{n-1})\, f_4\big(f_1^{(u_1^{n-1})}, f_2^{(u_1^{n-1})}, \lambda^*\big)$. As before, $f_4(\cdot,\cdot,\cdot)$ is not a function of $u_1^{n-1}$, and $f_4\big(p_{U|V}(u|v), p_{X|U}(x|u), \lambda^*\big)$ is a linear function on the simplex $\{p_{U|V}(u|v), u \in \mathcal{U}\}$. Hence $p^*_{U|V}(u|v) = \delta[u - \varphi^*(v)]$, and $p^*_{X|U}(x|u)$ is not a function of $u_1^{n-1}$. Therefore, (38) and (39) are obtained.

3) Relation of the Lagrange Dual Function to Time Sharing and the Capacity Region: We now illustrate the central role of the Lagrange dual function $L_3$ from two angles.

Time Sharing: We first discuss a time sharing expression $C_3^{\text{TS}}$ of the capacity and then use the Lagrange dual function $L_3$ to show that $C_3^{\text{TS}} = C_3$. The alternative converse proof using time sharing is as follows. Define the random variable $Q_1$ to be uniformly distributed over $\{1, \ldots, N\}$, and define another random variable $Q_2 = U_1^{Q_1 - 1}$.
Then define the time sharing random variable $Q \triangleq (Q_1, Q_2) \in \mathcal{Q}$. We obtain
$$I(W; Y_1^N, V_1^N, U_1^N) \le \sum_{n=1}^{N} \sum_{u_1^{n-1}} p(u_1^{n-1})\, f_3\big(f_1^{(u_1^{n-1})}(u|v),\, f_2^{(u_1^{n-1})}(x|u)\big)$$
$$= N \sum_{q} p_Q(q) \sum_{v} p(v) \sum_{u} p_{U|V,Q}(u|v,q)\, I\big(p_{X|U,Q}(\cdot|u,q),\, p_{Y|X,V}(\cdot|\cdot, v)\big)$$
$$= N I(X; Y \,|\, U, V, Q) \tag{40}$$
$$\le N C_3^{\text{TS}}, \tag{41}$$
where
$$C_3^{\text{TS}} = \max_{\mathcal{Q},\, p_Q,\, \varphi_Q(\cdot),\, p_{X|U,Q}:\, E[\alpha(X)] \le \rho_0} I(X; Y \,|\, U = \varphi_Q(V), V, Q). \tag{42}$$
Step (41) holds because (40) is a linear function on the simplex $\{p_{U|V,Q}(u|v,q), u \in \mathcal{U}\}$, so the deterministic feedback $U = \varphi_Q(V)$ does not lose optimality.

It turns out that the Lagrange dual function $L_3$ in (33) is the dual not only to the primal problem $R_3$ in (30), but also to the optimization of $C_3^{\text{TS}}$ in (42):
$$L_3^{\text{TS}}(\lambda, \rho_0) \triangleq \max_{\mathcal{Q},\, p_Q,\, \varphi_Q(\cdot),\, p_{X|U,Q}} \sum_{q \in \mathcal{Q}} p_Q(q) \Big\{ I(X; Y \,|\, U = \varphi_Q(V), V, Q = q) - \lambda\big(E[\alpha(X) \,|\, Q = q] - \rho_0\big) \Big\} \tag{43}$$
$$= L_3(\lambda, \rho_0). \tag{44}$$
Equality (44) holds because the function to be optimized in (43) is a linear function on the simplex $\{p_Q(q), q \in \mathcal{Q}\}$, so the optimum is attained at some $q^*$ with $p_Q(q^*) = 1$. A single dual function thus serves both primal problems. Moreover, the time sharing in (42) makes $C_3^{\text{TS}}$ a convex ∩ function of $\rho_0$, so its duality gap is zero. Therefore, $C_3^{\text{TS}} = \min_{\lambda \ge 0} L_3^{\text{TS}}(\lambda, \rho_0) = \min_{\lambda \ge 0} L_3(\lambda, \rho_0) = C_3$.

Capacity Regions: We show that the Lagrange dual function $L_3$ characterizes the boundary points of two expressions, $\mathcal{C}_3^{\text{TS}}$ and $\mathcal{C}_3$, of the single-user capacity region. Equation (40) shows that any achievable rate $r$ under constraint $\rho$ must belong to the following capacity region:
$$\mathcal{C}_3^{\text{TS}} = \operatorname{closure} \bigcup_{\mathcal{Q},\, p_Q,\, p_{U|V,Q},\, p_{X|U,Q}} \mathcal{C}_3^{\text{TS}}\big(\text{Fixed } \mathcal{Q}, p_Q, p_{U|V,Q}, p_{X|U,Q}\big), \tag{45}$$
where
$$\mathcal{C}_3^{\text{TS}}\big(\text{Fixed } \mathcal{Q}, p_Q, p_{U|V,Q}, p_{X|U,Q}\big) = \big\{ (r, \rho) : 0 \le r \le I(X; Y \,|\, U, V, Q),\ E[\alpha(X)] \le \rho \big\}. \tag{46}$$
Note that, following Gallager's lead in the study of the non-convex multiple access capacity region [10], we have included $\rho$ to make the capacity region a two-dimensional set. Since taking the convex hull performs the time sharing automatically, an equivalent capacity region is
$$\mathcal{C}_3 = \operatorname{closure} \operatorname{convex} \bigcup_{p_{U|V},\, p_{X|U}} \mathcal{C}_3\big(\text{Fixed } p_{U|V}, p_{X|U}\big) \tag{47}$$
$$= \mathcal{C}_3^{\text{TS}},$$
where
$$\mathcal{C}_3\big(\text{Fixed } p_{U|V}, p_{X|U}\big) = \big\{ (r, \rho) : 0 \le r \le I(X; Y \,|\, U, V),\ E[\alpha(X)] \le \rho \big\}. \tag{48}$$
Characterizing the boundary of $\mathcal{C}_3^{\text{TS}}$ and $\mathcal{C}_3$ reduces to evaluating the Lagrange dual function $L_3$. Let $(1, -\lambda)$ be the normal vector of a hyperplane. Finding the points of $\mathcal{C}_3^{\text{TS}}$ that touch the hyperplane requires solving
$$B_3^{\text{TS}}(\lambda) \triangleq \max_{(r,\rho) \in \mathcal{C}_3^{\text{TS}}} (1, -\lambda) \cdot (r, \rho) = \max_{(r,\rho) \in \mathcal{C}_3^{\text{TS}}} r - \lambda \rho.$$
For $\lambda \ge 0$, this maximum is attained at a point with $r = I(X; Y \,|\, U, V, Q)$ and $\rho = E[\alpha(X)]$ for some strategy, so that
$$B_3^{\text{TS}}(\lambda) = L_3^{\text{TS}}(\lambda, \rho_0) - \lambda \rho_0 = L_3(\lambda, \rho_0) - \lambda \rho_0.$$
The same is true for $\mathcal{C}_3$: the maximum is attained at a point with $r = I(X; Y \,|\, U, V)$ and $\rho = E[\alpha(X)]$, and
$$B_3(\lambda) = L_3(\lambda, \rho_0) - \lambda \rho_0.$$
Therefore, the Lagrange dual function is the central link between the boundary points of the capacity region and the capacity expressions:
$$B_3^{\text{TS}}(\lambda) + \lambda \rho_0 = L_3^{\text{TS}}(\lambda, \rho_0) = B_3(\lambda) + \lambda \rho_0 = L_3(\lambda, \rho_0) \ge \min_{\lambda \ge 0} L_3(\lambda, \rho_0) = C_3(\rho_0) = C_3^{\text{TS}}(\rho_0) \ge R_3(\rho_0).$$

Remark 1: Expressing the capacity as the minimum of the Lagrange dual function also helps to calculate the capacity, because one does not need to worry about time sharing while performing the optimization. Suppose multiple solutions (i.e., input distributions, etc.) achieve the same value of the Lagrange dual function. Then the capacity-achieving strategy is a time sharing of these solutions, with the time sharing coefficients chosen to satisfy the constraint. See [1], [2] for details.
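The recipe in Remark 1 can be sketched for a finite menu of candidate strategies, each summarized by a (rate, cost) pair. The three pairs below are hypothetical and only loosely shaped like Example 1; they are not taken from it. For a finite menu, the dual function is piecewise linear and convex in $\lambda$, so its minimum over $\lambda \ge 0$ lies at $\lambda = 0$ or at a pairwise breakpoint. The sketch (an implementation choice, not the paper's procedure) enumerates those candidates exactly. It then time-shares the tied maximizers with the smallest and largest cost so that the average cost equals $\rho_0$.

```python
import itertools

import numpy as np


def dual(lam, rates, costs, rho0):
    """Lagrange dual function of a finite menu: max_s r_s - lam * (rho_s - rho0)."""
    return float(np.max(rates - lam * (costs - rho0)))


def capacity_and_time_sharing(rates, costs, rho0, tol=1e-9):
    """Minimize the dual over lam >= 0, then time-share tied maximizers to meet rho0."""
    # Candidate multipliers: lam = 0 and every nonnegative pairwise breakpoint.
    cands = [0.0]
    for i, j in itertools.combinations(range(len(rates)), 2):
        if costs[i] != costs[j]:
            lam = (rates[j] - rates[i]) / (costs[j] - costs[i])
            if lam >= 0:
                cands.append(float(lam))
    lam_star = min(cands, key=lambda l: dual(l, rates, costs, rho0))
    C = dual(lam_star, rates, costs, rho0)
    # Strategies that attain the dual minimum at lam_star (up to tol).
    tied = [s for s in range(len(rates))
            if rates[s] - lam_star * (costs[s] - rho0) >= C - tol]
    lo = min(tied, key=lambda s: costs[s])   # tied strategy with the smallest cost
    hi = max(tied, key=lambda s: costs[s])   # tied strategy with the largest cost
    if costs[hi] <= rho0:
        theta = 0.0                          # the costliest tied strategy alone suffices
    else:
        theta = float((costs[hi] - rho0) / (costs[hi] - costs[lo]))  # weight on lo
    return C, lam_star, lo, hi, theta


rates = np.array([0.80, 0.85, 1.05])   # hypothetical rates (nats/channel use)
costs = np.array([0.80, 1.40, 2.00])   # hypothetical average costs
rho0 = 1.4

C, lam_star, lo, hi, theta = capacity_and_time_sharing(rates, costs, rho0)
R = float(rates[costs <= rho0].max())  # naive value: one strategy, constraint imposed
rate_ts = theta * rates[lo] + (1 - theta) * rates[hi]
cost_ts = theta * costs[lo] + (1 - theta) * costs[hi]
print(f"C = {C:.4f}, R = {R:.4f}, lambda* = {lam_star:.4f}, theta = {theta:.2f}")
```

For these hypothetical numbers, the naive value $R$ (a single strategy that meets the budget on its own) is 0.85. The dual minimum and the half-and-half time sharing both give 0.925, and the difference is the duality gap. As in Figure 4, $\lambda^*$ is the slope of the time sharing segment.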
Example 1: To illustrate the capacity with a nonzero duality gap, we constructed an example whose detailed derivation is given in [1], [2]. The channel is an additive Gaussian noise channel with three states (good, moderate, and bad), corresponding to small, moderate, and large noise variances. The feedback is limited to 1 bit/channel use. For a small long-term average power constraint, the optimal strategy is to turn on the transmitter with a fixed power only when the channel is in the good state, as shown by the dotted curve in Figure 4. For a large power constraint, the optimal strategy is to turn on the transmitter when the channel is in the good or moderate state, with another fixed power, as shown by the solid curve in Figure 4. For a power constraint in between, the optimal strategy is a time sharing of these two strategies, as shown by the line segment terminated by the "o" markers. The gap between the line segment and the maximum of the dotted and solid curves is exactly the nonzero duality gap between $C_3$ and $R_3$. The slope of the line segment is $\lambda^*$. The "+" markers correspond to random feedback, discussed in [1], [2].

[Fig. 4. An example of nonzero duality gap. Plot title: "Comparison of random feedback and time sharing"; horizontal axis: Transmit Power (watts), 0.8 to 2.2; vertical axis: Rate (nats/channel use), 0.75 to 1.1; legend entries: "Always on", "Always off", random feedback with Pr(On) = [0.00, 0.09, 0.18, 0.29, 0.41, 0.53, 0.67, 0.82, 1.00], and "Time Sharing".]

III. THE EXTENSION TO THE RATE DISTORTION THEORY

A. The Converse Proof

It is straightforward to extend the Lagrangian Converse Proof to rate distortion theory. We illustrate it using the classic i.i.d. source as an example. The rate distortion function for quantizing an i.i.d. source $X$ to $\hat{X}$ in a vector manner is
$$R'_1(D) = \min_{p_{\hat{X}|X}:\, E[d(X,\hat{X})] \le D} I(X; \hat{X}),$$
where $d(\cdot,\cdot)$ measures the distortion.
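The rate distortion function above can be traced numerically with the classical Blahut–Arimoto algorithm. This is a standard method, not one proposed in the paper. For a fixed multiplier $\lambda \ge 0$, it minimizes $I(X;\hat{X}) + \lambda E[d(X,\hat{X})]$ over $p_{\hat{X}|X}$ and returns one point $(D, R'_1(D))$ of the curve, where the slope is $-\lambda$. The Bernoulli(0.2) source with Hamming distortion is an assumed example. It is chosen because its closed form $R'_1(D) = h(0.2) - h(D)$ for $D \le 0.2$ (in nats) is known, and the slope condition then gives $D = 1/(1 + e^{\lambda})$.

```python
import numpy as np


def rd_point(px, d, lam, iters=500):
    """Blahut-Arimoto for rate distortion at multiplier lam >= 0.

    Minimizes I(X; Xhat) + lam * E[d(X, Xhat)] over p(xhat | x) and returns
    (D, R) at the minimizer, with R in nats.
    """
    q = np.full(d.shape[1], 1.0 / d.shape[1])      # reproduction marginal p(xhat)
    for _ in range(iters):
        cond = q[None, :] * np.exp(-lam * d)       # unnormalized p(xhat | x)
        cond /= cond.sum(axis=1, keepdims=True)
        q = px @ cond
    D = float(np.sum(px[:, None] * cond * d))
    with np.errstate(divide="ignore", invalid="ignore"):
        terms = np.where(cond > 0, cond * np.log(cond / q[None, :]), 0.0)
    R = float(np.sum(px[:, None] * terms))
    return D, R


def h(t):
    """Binary entropy in nats."""
    return -t * np.log(t) - (1 - t) * np.log(1 - t)


px = np.array([0.8, 0.2])                  # Bernoulli(0.2) source (example values)
d = np.array([[0.0, 1.0], [1.0, 0.0]])     # Hamming distortion
D, R = rd_point(px, d, lam=3.0)
print(f"D = {D:.4f}, R = {R:.4f} nats, closed form h(0.2) - h(D) = {h(0.2) - h(D):.4f}")
```

Each value of `lam` gives one point, and sweeping it traces the curve. In the regime $D \le 0.2$, the points can be checked against the closed form as above.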
Using the Lagrange dual function, we obtain another expression:
$$R_1(D) = \max_{\lambda \ge 0} L_1(\lambda, D), \tag{49}$$
where
$$L_1(\lambda, D) \triangleq \min_{p_{\hat{X}|X}} \; I(X; \hat{X}) + \lambda\big(E[d(X, \hat{X})] - D\big).$$
In general, the Lagrange dual function is a lower bound, so $R_1(D) \le R'_1(D)$. The mutual information is a convex ∪ function of $p_{\hat{X}|X}$, and the distortion constraint is linear in $p_{\hat{X}|X}$. Hence $R_1(D) = R'_1(D)$.

The last few steps of the conventional converse proof are [11]
$$\sum_{n=1}^{N} I(X_n; \hat{X}_n) \ge \sum_{n=1}^{N} R'_1\big(E[d(X_n, \hat{X}_n)]\big) \ge N R'_1\Big(\frac{1}{N}\sum_{n=1}^{N} E[d(X_n, \hat{X}_n)]\Big) \tag{50}$$
$$\ge N R'_1(D).$$
Step (50) uses the property that $R'_1(D)$ is a convex ∪ function of $D$. The last step uses the fact that $R'_1(D)$ is nonincreasing in $D$ and the average distortion is at most $D$. The Lagrangian Converse Proof does not need to establish the convexity of $R'_1(D)$ before carrying out the converse proof:
$$\sum_{n=1}^{N} I(X_n; \hat{X}_n) \ge \sum_{n=1}^{N} \Big(I(X_n; \hat{X}_n) + \lambda^*\big(E[d(X_n, \hat{X}_n)] - D\big)\Big) \tag{51}$$
$$\ge N R_1(D), \tag{52}$$
where $\lambda^* \ge 0$ is the solution to (49). Step (51) holds because the $\hat{X}_n$'s satisfy the distortion requirement, so $\lambda^*\big(\sum_{n=1}^{N} E[d(X_n, \hat{X}_n)] - N D\big) \le 0$. Step (52) holds because $R_1(D) = L_1(\lambda^*, D)$ lower-bounds the summand in (51) for every $n$.

The benefit of the Lagrangian Converse Proof may not appear significant in this simple example. However, it can easily be applied to more complex cases in which time sharing has to be used in $R'_1(D)$. Another example is when there are other constraints in addition to the distortion; in that case, simply introducing more Lagrange multipliers solves the problem.

B. Dual Relation between Channel Capacity and Rate Distortion

Using expressions involving Lagrange dual functions, the channel capacity and the rate distortion function take a pleasant symmetric form. This is evident in $C_1(\rho_0)$ (8) and $R_1(D)$ (49) for channels without side information. The symmetric form shows a dual relation in the sense of [5].
This relation extends easily to the case of non-causal side information considered in [5], where constraints on the capacity are not considered. With the constraints, the capacity and the rate distortion function are given in (53) and (54) below. The dual relation defined in [5] is the following isomorphism:

| Channel Capacity | | Rate Distortion |
|---|---|---|
| min | ↔ | max |
| max | ↔ | min |
| $-\lambda$ | ↔ | $+\lambda$ |
| Transmitted symbol $X$ | ↔ | $\hat{X}$ Estimation |
| Received symbol $Y$ | ↔ | $X$ Source |
| State to encoder $S_1$ | ↔ | $S_2$ State to decoder |
| State to decoder $S_2$ | ↔ | $S_1$ State to encoder |
| Auxiliary $U$ | ↔ | $U$ Auxiliary |
| Input cost $\alpha(\cdot)$ | ↔ | $d(\cdot,\cdot)$ Distortion measure |
| Input constraint $\rho_0$ | ↔ | $D$ Distortion |

A stronger dual relation is defined in [12], where the capacity and the rate distortion function can be made equal by selecting proper constraints. However, it does not work when the optimal solutions need time sharing. Since (53) and (54) do not include time sharing variables, whether the stronger dual relation can be established with some modification is left for future research.

The dual relation for the limited feedback case is not discussed here. The reason is that the not-strictly-causal feedback to the transmitter in channel capacity corresponds to finite-rate state information at the decoder in rate distortion. The encoder in channel capacity cannot use future feedback, whereas the decoder in rate distortion can wait and use both past and future finite-rate state information.

IV. CONCLUSIONS

We have introduced a simple converse proof that uses the Lagrange dual function to upper bound the information rate.
It provides the following approach to dealing with constraints:
1) Based on the capacity of the channel without constraints, express the capacity for the case with the constraints as the minimum of the Lagrange dual function;
2) Modify the converse proof for the case without the constraints by adding a term involving the Lagrange multiplier and the constraints to the second-to-last expression, which produces the converse proof for the case with the constraints;
3) For achievability, study the duality gap to determine whether time sharing is needed.

We show that the unified capacity expression, $C = \min_{\lambda \ge 0} \text{Lagrange Dual Function}(\lambda)$, plays a central role in connecting three things: the characterization of the single-user capacity region, the time sharing capacity formula, and the formula that results from imposing the constraint on the maximization in the capacity formula of the case without constraints. The Lagrangian capacity formula works regardless of whether the problem is convex. This formula also simplifies the evaluation of the capacity by deferring the consideration of time sharing.

The above is extended to rate distortion theory. A symmetric form of the capacity and the rate distortion function demonstrates the dual relation between them:
$$C_2(\rho_0) = \min_{\lambda \ge 0} \max_{\mathcal{U},\, X = \varphi(U,S_1),\, p_{U|S_1}} I(U; S_2, Y) - I(U; S_1) - \lambda\big(E[\alpha(X)] - \rho_0\big), \tag{53}$$
$$R_2(D) = \max_{\lambda \ge 0} \min_{\mathcal{U},\, \hat{X} = f(U,S_2),\, p_{U|X,S_1}} I(U; S_1, X) - I(U; S_2) + \lambda\big(E[d(X, \hat{X})] - D\big). \tag{54}$$
Further extension to the case of multiple constraints is straightforward. We have discussed single-letter capacity formulas in this paper. The extension of the Lagrangian Converse Proof to multi-letter capacity formulas, multiaccess channels, and broadcast channels is deferred to future research.

REFERENCES

[1] Y. Liu, "Capacity theorems for channels with designable feedback," in Proc. Asilomar Conference on Signals, Systems and Computers (invited paper), California, USA, November 2007.
[2] Y. Liu, "Capacity theorems for single-user and multiuser channels with limited channel-state feedback," to be submitted to IEEE Transactions on Information Theory, 2008.
[3] S. Boyd and L. Vandenberghe, Convex Optimization. Cambridge University Press, 2004.
[4] S. Gelfand and M. Pinsker, "Coding for channels with random parameters," Prob. Control and Information Theory, vol. 9, pp. 19–31, 1980.
[5] T. Cover and M. Chiang, "Duality between channel capacity and rate distortion with two-sided state information," IEEE Trans. Info. Theory, vol. 48, no. 6, pp. 1629–1638, 2002.
[6] P. Moulin and J. O'Sullivan, "Information-theoretic analysis of information hiding," IEEE Trans. Info. Theory, vol. 49, no. 3, pp. 563–593, 2003.
[7] W. Yu and R. Lui, "Dual methods for nonconvex spectrum optimization of multicarrier systems," IEEE Trans. Commun., vol. 54, no. 7, pp. 1310–1322, 2006.
[8] R. G. Gallager, Information Theory and Reliable Communication. New York, USA: John Wiley & Sons, Inc., 1968.
[9] V. K. N. Lau, Y. Liu, and T.-A. Chen, "Capacity of memoryless channels and block fading channels with designable cardinality-constrained channel state feedback," IEEE Trans. Info. Theory, vol. 50, no. 9, pp. 2038–2049, 2004.
[10] R. G. Gallager, "Energy limited channels: Coding, multiaccess, and spread spectrum," Report LIDS-P-1714, M.I.T., Laboratory for Information and Decision Systems, November 1987.
[11] T. M. Cover and J. A. Thomas, Elements of Information Theory, 2nd ed. New York, USA: John Wiley & Sons, Inc., 1991.
[12] S. Pradhan, J. Chou, and K. Ramchandran, "Duality between source coding and channel coding and its extension to the side information case," IEEE Trans. Info. Theory, vol. 49, no. 5, pp. 1181–1203, 2003.
