A High Performance Memory Database for Web Application Caches

This paper presents the architecture and characteristics of a memory database intended to be used as a cache engine for web applications. Primary goals of this database are speed and efficiency while running on SMP systems with several CPU cores (fou…

Authors: ** - Ivan Voras (Faculty of Electrical Engineering, Computing, University of Zagreb

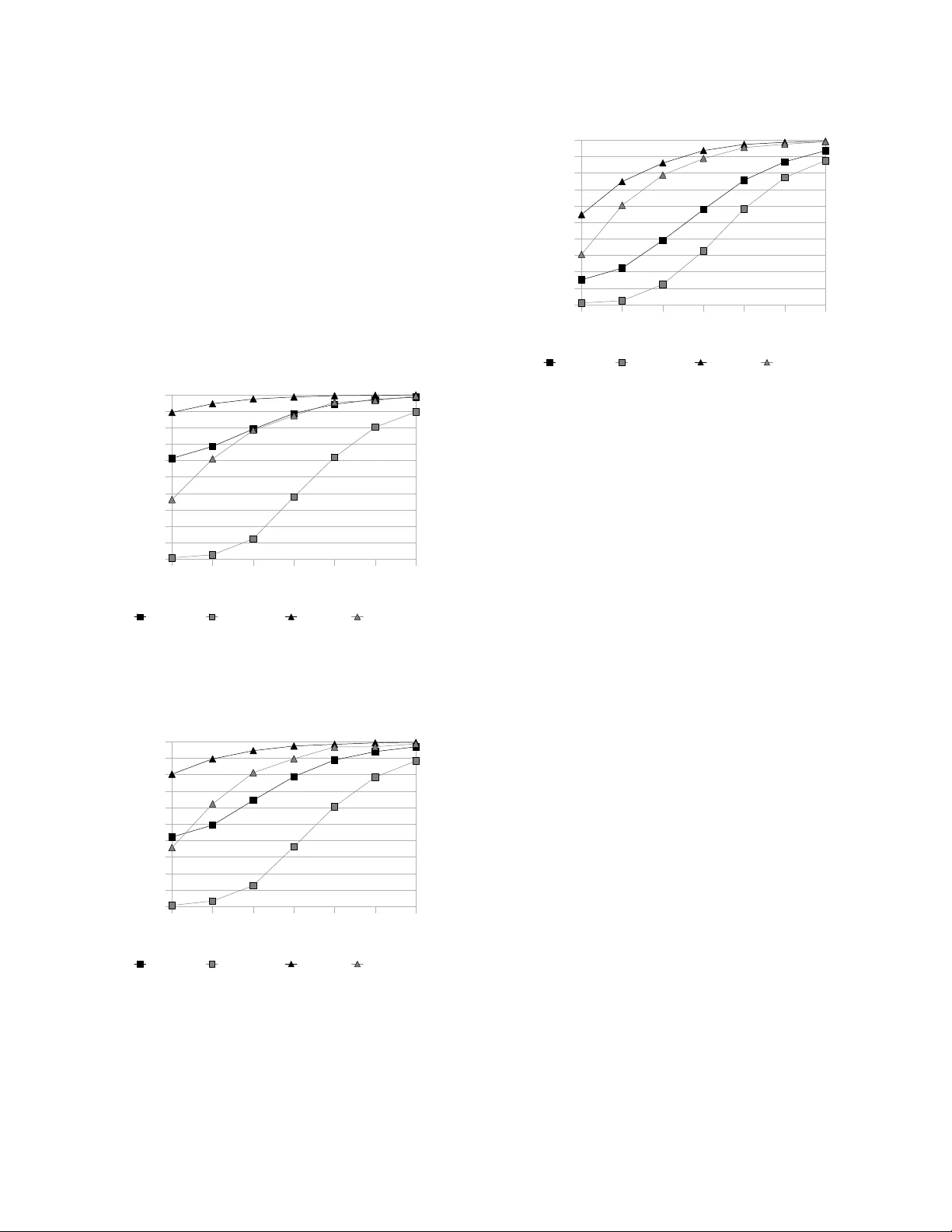

Abstract —This paper presents the architecture and charac teristics of a m em or y database intended to be used as a cache engine for web app lications. Prim ar y goals of this databa se are speed and efficiency while run ning on SMP system s w ith several CPU cores (four and m ore ). A secondary goal is the suppo rt f or simp le m etada ta structures asso ciated w ith cached data that can aid in efficient use of the cache. Due to these g oals, som e data structu res and alg orith m s norm ally associated w ith this field of com putin g needed to be ad apted to the n ew environ m ent. Index Term s —web cache , cache databa se, cach e dae m on, m em ory datab ase, SMP, SMT, concurren cy I. I NTRODUCTION e observe that, as the num ber of web applications created in scripting languages and rapid pro toty ping framew orks continues to grow, the importance of off- applicatio n cache engines is r apidly increasing [1]. Our own experiences in building high-performance web applications has yielded an outline o f a spe cification for a cache engine that would be best suited for dynamic w eb applicatio ns w ritten in an inherently stateless enviro nm ent like the PHP lang uage and framew ork 1 . Fin ding no Open Source solutions that w ould satisfy this specification, we have created o ur ow n. W II. S PECIFICA TION The basic form for an add ressable data base is a stor e of key- value pairs (a dictionary), where both the key and the value ar e more or less o paque binary strings. T he keys ar e treated as addresses by which the values ar e stor ed an d accessed. Because of the simplicity o f this model, it can be implemented efficiently , and it's often used for fast cache datab ases. The first point o f our sp ecification is that the cache daem on will implement key-value stora ge . However, key- value databases ca n be limitin g and inflexible. In our experie nce one of the most valuab le features 1 Because of the stat el ess n ature of the HTTP, most lan guages and framew orks widel y used to build w eb application s are stateless, with various workarounds like HTTP cookies and server-side sessions tha t are more-or -less integrated int o frame wor ks. This work i s sup ported in part by th e Croati an Ministry of Sc ience, Educat ion an d Sports, un der t he research project “Software En gineer ing in Ubiqui tous Computing”. I van Voras, Danko B asch and Ma rio Žagar are with Faculty of Electrical Engineering and Compu ting, Un ive rsity of Z agreb, Croatia . (e-mail: {ivan.voras, dan ko.basch, mario.zagar}@fer.hr). a cache databa se could have is the ability to gro up cached keys by some cr iterion, so multiple keys belonging to the same group can be fetched and expired together, saving comm unication round-trips and removing grouping logic from the application. To make the cache database more versatile , the cache d aemon will imp lement simple metada ta for cached records in th e form of type d numeric tag s. Tod ay 's per vasiven ess o f multi-core CPUs and servers w ith multiple CPU sockets has re sults in a significantly different environment than wh at w as comm on when sing le-core single- CPU co m puters were dominant. Algorithms and str uctures that were efficient in the old environment are so m etimes suboptimal in the new. Thus, the c ache daem on will be optimized in its architectu re and alg orithms for multi- processor servers with symmetric multi-processin g (SMP). Finally, b ecause of the many o perating system s a nd environments available to day, the cache daemo n will b e written a ccording to the POS IX spe cification and usable on multiple p latforms. A. R ationale a nd discussion Cache databa ses should be fast. The primary purp ose o f this proj ect was to create a cache d atabase for use in w eb applicatio ns w ritten in r elatively slow languages and framew orks such as PHP , P yth on and Ruby. T he common way of using cache databases in web applications is for storing results o f complex calculations, both internal ( such as generating HT ML content) and exter nal ( such as from SQL databases). T he usage of d edicated cache datab ases p ay s off if the cost of storing (and especially retrieving) data to (and from) the cache database is lower than the cost of perfor m ing the o peration that generates the ca ched da ta. T his cost might be in IO and mem ory allocation but we obser ve that the m ore likely cost is in CPU load . Caching often-generated data instead o f generating it rep eatedly (which is a common case in web applicatio ns, where the same content is p resented to a large number of u sers) can dramatically im prove the applicatio n' s performance. Though they are efficient, w e have observed that pure key - value cac he databa ses face a pr oblem w hen the application needs to atomically retr ieve or expir e multiple re cords at once. While this can be solved by keep ing track o f groups o f recor ds in the application or (to a lesser extent) f olding group qualifiers into key names, w e have observed that the added complexity of boo kkeeping this information in a slow language (e.g. PHP ) distracts from th e sim plicity of the cache A High Perfor m ance Memory Dat abase for W eb Applic ation Caches Iv an Voras, Danko Basch an d Mario Žagar engine and can even lead to slowdowns. T hus we added the requirement for m etadata in the form of ty ped numeric "tags" wh ich can be queried. Adding tags to key-valu e pair s would enable the application to off-load group operatio ns to the cache daemon where the data is stored . With the r ecent trend of increasing the number of CPU cores in computers, especially with multi-core CPUs [1 0], we decided the cache d aemon must be designed from the star t to make use of multiprocessing cap abilities. The proj ect is to explore e ff icient algorithms that should be em ployed to achieve the b est per form ance o n server s with 4 and more CPU cores. Due to the read-mostly nature of its purp ose, the cache database should never allow readers (clients that only fetch data) to block other rea ders. Multi-platform usability is non-critical, it is an added bo nus that will m ake the result of the pro ject usable for many m ore users. III. I MPLEMENT A TION O VER VIEW We have implemented the cache daemon in the C programming language, using o nly the standard or widespread library functions to incre ase its portab ility on POSIX-like operating sys tems. POSIX Thread s ( pth reads ) were used to achieve concurrency on multi-processor hardware. The d eamon c an be roughly d ivided into three modules: network interface, worker threads and d ata storage. The following sec tions w ill describe the implementation details of each of the modules. A. Netwo rk interface The network interface module is responsible for accepting and proce ssing commands from clients. It uses the standard BSD " sockets" API in non-blocking mode. By de fau lt, the daemon crea tes b oth a "Local Unix" socket and a TCP soc ket. The netw ork interface is run in the starting thread of the process and handles all inco m ing data asynchronously, dispatching complete req uests to w orker threads. The network p rotocol (for both " Local Unix " and TCP connections) is binary, designed to minim ize proto col parsing and the number of system calls necessary to proce ss a request. B. Worker th reads The daemon implements a pool of worker threads w hich accept r equests from th e network code, p arse them and execute them. The threads commun icate with the network inter face using a p rotected (thread-safe) job queue. T he num ber of threads is adj ustable via comman d-line arguments . This feature includes a spe cial support for "threadless" operatio n, in which the netw ork interface calls the proto col p arser as a fu nction call in the same thread, eliminating sy nchronization overheads. C. Data storage Data storage is the most important p art o f the cac he daemon as it has the biggest influence on its per form ance and capabilities. T here are two large da ta structures implemented in the cache daemon. The first is a hash ta ble of static size whose elements (buckets) a re ro ots of r ed-black tr ees which c ontain key- value pairs. These elements ar e protecte d by reader -w riter locks (also called " shared-exclusive" locks). T he hash tab le is populated by hashing the key p ortion of the pair. This mixing of data structures ensures a very high level o f concurrency in accessing the data. Reader-writer lo cks per hash b uckets allo w for the highly d esirable behaviour that reade rs (clients that only re ad data) never block other readers, greatly increasing performance for usual c ache usage. T he intention behind the design of this data structure was that, u sing a reasonably w ell distributed hash function, high concurrency of writers can also be achieved (up to the number of hash buckets). An illustration of the data stor age organisation is presented in Fig. 1 . Fig. 1. Illustration of t he key-value data st ructure u sed as t he prima ry data storage pool The second big d ata structure inde xes the m etadata ta gs for key- value reco rds. It is a red-bla ck tree of tag types (an integer value) whose e lem ents ar e again red-black trees containing ta g data (also an integer value) with po inters to the key-value record s to wh ich they are attached. The purpose of this organization is to e nable performing queries on the data tags of the form " fin d all records of the given tag type" and "find all record s o f the given type and wh ose tag data co nf orms to a simple numerical comparison o peration (lesser than, greater then)". The tre e of d ata types is pro tected by a read er-w riter lock and each o f the tag d ata trees is protected by its ow n reader-writer lo ck, as illustrated in Fig. 2. Again, reader s never block other readers and lo cking f or write oper ations is localised in a way that a llow s co ncurrent access for tag queries. . . . Fig. 2. Il lustration of th e tag tree structu re Records without metadata tags don't have any influence on or co nn ection with the tag trees. D. Ra tionale an d discussion We chose to implement both asynchronous netw ork access and multithreading to achieve the maximum perfor m ance for the cache daemon [2]. This model is a hybrid o f pure mu lti- process architecture ( MP ) and the even-driven ar chitecture, and is so m etimes called asymmetric multi-process eve nt- driven ( AMPED ) [3] . In it, we ded icate a thread to network IO and ac cepting new connections. This model has be en explor ed in part in [13], with the difference that o ur focus is on maxim izing performed op erations per seco nd instead of network bandwidth. Our implementation tries hard to avoid unnecessary sys tem calls, context switches and mem ory reallocatio n [1 1] [12 ]. The implementation has avoided most of pro tocol parsing overhead s by using a binary pr otocol wh ich in cludes data structure sizes and count fields in comm and packet head ers. Since the number of clients in the intended usage (web cache d aemon) is relatively low (in the ord er of hundreds), we have avoided explicit connection scheduling descr ibed in [1 4]- [16]. We have opte d for a thread-po ol design (in which a fixed num ber o f worker threa ds perform protoc ol parsing and data operatio ns) to allow the administrator to tune the number of worker threads ( via co m man d line argum ents) and thus the acceptab le CPU load on the server. We have also implemented a spec ial "thread less" mode in which there are no worker threads, but the network code makes a direct function call into the p rotocol p arser, effectively making the dae m on single process event drive n ( S PED ). This mode can not make use of multiple CPUs, but is included for co m parison w ith the other model. As discussed in [7] and [9 ], the use of multi-processing and the relatively high standards w e have set for concurrency of the r equests have r esulted in a need for car eful choice o f the structures and algorithms used for data storage. Tr aditional structures and algorithm s used in caches, such as LRU and Splay trees [4 ], ar e not directly usable in high-concurrency environments. LRU and its derivatives need to maintain a global queue of ob jects, the maintenance of which needs to happen o n every r ead access to a tracked object, w hich effectively ser ializes r ead o perations. Splay trees rad ically change with every access and thus need to be exclusively locked for every acce ss, serializing both read ers and w riters (much m ore seriously than LRU). In orde r to maxim ize concurrency (m inimi ze exclusive locking) a nd to limit the in-mem ory working set used during transactions (as discussed in [11]) , we have cho sen to use a combination of data structures, specifically a hash table and binary searc h trees, for the principal data storage structure. Each bucket of the hash table contains one binary sea rch tree holding elements that hash to the bucket and a shared - exclusive locking o bject ( pth read rwlock ), thus setting a hard limit to the granularity of concurrency: write o perations (and other operatio ns requiring exclusive access to data) exclusively loc k at most one bucket (one binary tr ee). Read operatio ns acquire shared locks and do not b lock one another. The hash ta ble is the principal source of writer concurre ncy . Given an u niform distrib ution o f the hash function an d significantly more hash buckets than there are worker threads (e.g. 256 vs. 4), the pro bability of thread s blocking o n data access is negligibly small, wh ich is confirmed by our simu lations. T o increase o verall performance and reduce the total num ber of locks, the size of the h ash tab le is determin ed and f ixed at program start and the table itself is not p rotected by loc ks. The garb age collec tor (which is implemented naively instead of a LRU-like m echanism) operates when the exclusive lock is alr eady acquired ( probabilistically, during write operatio ns) and o perates per hash- bucket. The co nsequence of operating per hash-bucket is a lower flexibility and accuracy in keeping tra ck of the total size o f allocated memory, and mem ory limits a re forced to beco m e per -bucket instead of per entire data p ool. The metadata tags structures design was driven by the same concerns, but also with the need to mak e certain q uery operatio ns efficient (ranged comparison and gro uping, i.e. less-than or greater-than). We have decid ed to allow the flexibility of queries on b oth the type and the value parts of metadata tags, and thus we im plemented binary trees which are effective for this purpose. IV . S IMULA TIONS To aid in understand ing of th e performance and behaviour of the key-value sto re ( the hash table co ntainin g binary search trees), we have created a GP SS simulation. The simulation models the b ehaviour of the system with a tunable number of worker threads and hash buckets. T he simulated parts are: a task generator, w orker threads, lock acq uisition and release accord ing to p thread rwlock semantics (with increased writer priority to avoid writer star vation) and the hash buckets. T he task generator attempts to saturate the system . The timings used in the m odel ar e a pproximate and thus w e're only interested in trends and p roportio ns in the results. Fig. 3, 4 and 5 illustrate the perc entage o f "fast" loc k acquisitions from the simu lations, where " fast" is either uncontested lock acquisition T V V V V V T T or co ntested where to tal time spent waiting o n a lock is deemed insignificant (less than 5% of average time spent holding the lock i.e. pro cessing the task). Graphs ar e plotted with the number o f hash buckets on the X-axis and a percentage of fast lock acq uisitions o n the Y-axis. Results cover the timings for both shared lo cks and exclusive locks, obtained during same simulations. T he simulations were run for two cases: with 64 worker thre ads (which could today realistically be run on p roducts such as the UltraSP A RC T 2 from Sun M icrosystem s [ 5]) and with 8 worker threads (which can be run on r eadily a vailable servers on the common x86 platform [6] [1 0]). Ind ividual figures describ e the system behaviour with a varied ratio of reader and w riter tasks. Fig. 3. Cach e behaviour with 90% readers and 10% writers Pred ictably, Fig 3. show s how a high ratio of hash buckets to threads makes almost all lock acquisitions fast. Fig. 4. Cach e behaviour with 80% readers and 20% writers Trend s in the simulated system continue in Fig. 4 with expected effects of having a larger number of exclu sive lo cks in the system. We ob serve that this load marks the bo undary wh ere having the sam e num ber of hash buckets a nd worker threads makes 90% of shared lock acquisitions fast. Fig. 5. Cach e behaviour with 50% readers and 50% writers In the situation pr esented in Fig. 5 the abundance of exclusive acce sses (locks) in the sy stem intro duces significant increases in time spent w aiting for hash bucket locks. Both kinds of locks ar e acquired with noticeable delays and the num ber of fast loc k acquisitions falls appropria tely . The simu lation results emphasise the lock contention, showin g that equal relative performance can be achieved w ith the same r atio o f w orker threads and hash tab le buckets, and show an o ptim istic p icture when the number of hash buckets is high. From these results, we have set the de fau lt number of buckets used b y the p rogram to 25 6, as that is clea rly adequate for tod ay' s hardware. T he graphs do not show the number o f tasks dr opped b y the worker threa ds due to timeouts (simulated by the length o f the queue in the task genera tor). Both types of simulated system s were subjecte d to the same load and the length o f the task q ueue in systems with 8 worker threads was from 1 .5 to 13 times as lar ge as the same length in system s with 64 worker threads. T his, co upled w ith simulated inefficiencies (additio nal d elays ) when lock c ontention is high between worker thread s can have the e ff ect of favouring system s with a lower number of worker threads (loc k acquisition is faster because the contention is lower, but on the other hand less actual work is being do ne). V . E XPERIMENT AL R ESUL TS As this is a work in pro gress, we have p erformed o nly preliminary measurements of system performance and behaviour (of the key-value data stor e), on a limited varie ty of hardware. To disco ver the impact of thread synchronization pr im itives, we benchm arked the pr ogram' s performance on a single-CPU system with Pentium M @ 1.5 GHz, running FreeB SD 7.0, with both the daemon and the client on the same system, comm unicating via Unix Local sockets. As this is a single- CPU system, w e present the results of measurements in "thread less" mode and with a single worker thread, to illustrate the tradeoffs pre sent in th e chosen architecture. 4 8 16 32 6 4 128 256 0% 10% 20% 30% 40% 50% 60% 70% 80% 90% 100 % N C PU =64, SH AR ED N C PU =64, EX C LU SI VE N C PU =8, SH AR ED N C PU =8, EX C LU SI VE # of has h t able buc k et s Pe rcen ta g e of f as t l o ck a cqu i s i t i o ns 4 8 16 32 6 4 128 256 0% 10% 20% 30% 40% 50% 60% 70% 80% 90% 100 % N C PU =64, SH AR ED N C PU =64, EX C LU SI VE N C PU =8, SH AR ED N C PU =8, EX C LU SI VE # of has h t able buc k et s Pe rcen ta g e of f as t l o ck a cqu i s i t i o ns 4 8 16 32 64 128 256 0% 10% 20% 30% 40% 50% 60% 70% 80% 90% 100 % N C PU =64, SH AR ED N C PU =64, EX C LU SI VE N C PU =8, SH AR ED N C PU =8, EX C LU SI VE # of has h t able buc k et s Pe rcen ta g e of f as t l o ck a cqu i s i t i o ns Fig. 6. Tradeoffs of multi-threading Fig. 6 shows that the best option for single-CPU systems is the " threadless" mode (with a minim um 25% perfor m ance edge), in which the d aemon degenerates into SP ED-like behaviour. The co sts of managing the thread -saf e queue of tasks and the co ntext switches involved in handing off the tasks from the netw ork thread to the worker thread is high enough to r esult in noticeable slowdowns. The results lea d us to conclude that in case o f " Null transactions" (which ar e complete transactions, only without a p ay load comm and), these costs ar e almost the same as the p rocessing time req uired for pro cessing th e transactions themselves. Another type o f b enchm ark was performed to explore the limits of per form ance o f the c ache d aemon in its current implem entation. T hese be nchm a rks use a mix of r ead and write o perations ( 90% reads, 10 % writes) on a preco m puted data set of 30 ,000 recor ds with size o f 1 KB +/- 500 bytes, with a varied number of simultaneous clients. TA BLE I B ENCHMARK R ESULT S OF THE M EMORY C ACHE S ERVER ON V ARIOUS S YSTEMS AND L OADS System No. clie nts Ops / sec. AMD Athlon 64 @ 1.8 G Hz (32-bit), 2 core, Free BSD 7.0, 2 worker threads, Lo cal Sockets 10 71,10 0 40 72,25 0 I ntel Core 2 Duo @ 1.8 G Hz (32-bit), 2 core, Linux 2.6.2 2, 2 worker threads, Lo cal Sockets 10 75,00 0 40 79,70 0 Tw o I ntel Xeon 5320, 1 .9 GHz (32-bit), 4 core systems, F reeBSD 7.0, 4 worker threads, Remote TCP (gigabit Et hernet) 10 95,15 0 40 113,6 50 The results presented in Ta ble 1 ar e pro m ising and ade quate for many "real-world" p urposes, however we be lieve that ther e is ro om for improvement and that testing on faster hardware with more CPU cores may yield information abo ut possible areas of improvement in p erformance and scalability (which is on our future researc h agenda). We have p erformed a pr elim inary comparison of our mem ory c ache ser ver to an existing solution, Memcached 1.2.1 , used b y many existing high-performance web sites [ 1] [8], with the same data set as used for results in T able 1 and on the system from the first row in the table. TA BLE I I B ENCHMARK R ESULT S OF O UR M EMORY C ACHE S ERVER C OMPARED TO M EMCACHED No. Clients Ops / sec. Our cache server, 2 worker threads 10 71,10 0 Memcached, t hreadle ss 10 35,15 0 We attr ibute the differences in per form ance presented in Tab le 2 to the inefficient text network proto col used by Memcached and a design that doesn't scale well to m ulti-CPU system s. VI. C ONCLUSION This p aper presents the design and implementation of a high-performance memory cache datab ase server. In its creation w e have d esigned many optimizations, including data structures permittin g highly co ncurrent operations, m ulti- threaded cor e based on the thread -pool model and an optimized network co m mu nication model. W e have analysed and simulated the d esigned structures a nd algorithms, ad apted and implemented it, and performed benchmarks o f the resulting server program. The intended usage for this server is as an external cache database for web app lications, and preliminary analy sis of its performance and b ehaviour suggests that the current implem entation of the server is sufficient for this purpo se. The result of this proj ect is a d irectly usable product w hich will soon be implement ed in our Fac ulty 's w eb applications. VII. R EFERENCE S [1] I . Voras, M. Žagar, "Web-enabling cache daemon for c omple x data", to be publish ed [2] D. Pariag , T. Brecht, A. Harji, P. Buhr, A. Shuk la, and D. R. Cheriton, "Comparing the performance of web serv er arch itectures," SIGOPS Operatin g System Rev iew 41, 3 , pages 231-24 3, Jun. 2 007. [3] T. Brecht, D. Pariag, and L. Gammo "Acceptable strategies for improving we b server performance" in Proceedi ngs of the USENIX Annua l Technica l Confere nce 2004 , MA, USA , June 27 - July 02, 2 004. [4] D. Sle ator, Daniel and E. Tarjan, "Self-A djustin g Binary Search Tre es", Journal of the ACM 32 (3 ), pp. 65 2-686, 19 85. [5] OpenSPARC T2 Co re Microarchit ecture Sp ecificati on , Sun Microystems, Inc., http:// opensparc.sunsource.net/specs/UST1- UASuppl-current-draft-P-EXT.pdf, Jan 20 07. [6] Intel multi -core platf orms , I ntel.. Available: http:// w ww .intel.com/multi- core/index.htm, Jan 2007. [7] J. Zhou, J. Cieslew icz, K. Ross, an d M. Sh ah, "Improv ing dat abase performance on simultan eo us multithreadin g processors", i n Proceed ings of the 31st i nternatio nal Confe rence on Very Large Data Bases, Norway, A ugust 30 - September 02, 2005 . [8] B. Fitzpatrick, "memcach ed: users ", http:// w ww .danga.com/memcach ed/users.bml, retriev ed 2007 -10-14 N ull t rans ac tions A D D tr ans ac tions GE T tr ans a c ti o ns 0 20000 40000 60000 80000 10000 0 T hre adl es s 1 Wor k er T r ans ac tions per s ec on d [9] P. Garcia and H. F. Korth, "Database hash-join algorithms on multithreaded computer a rchitectures", i n Procee dings of t he 3rd Confe rence on C omputing Frontiers, Italy, May 2006. [10] Mu lti-core pro cessors – the next e volution in compu ting , AMD White Paper, 2005 . [11] A. Ailamaki, D. J. DeWitt, M. D Hill, D. A . Woo d, "DBMSs on a Modern Processor: Where Does Time Go?", in Proceed ings o f the 2 5th intern ational Conferen ce on Very Large Data Ba ses , 1999. [12] L. K. M cDow ell , S. J. Eggers and S. D. Gribble, "Improv ing server software support for simultaneous mu ltithreaded p roce ssors" in Proceed ings of t he Ninth ACM SIGPLAN S ymposium on Princip les and Practic e of Paral lel Programmin g, California, USA, Jun 2 003. [13] P. L i, and S. Z dancewic, "Combining eve nts and threads fo r sc alable network services implementation and evaluati on of monadic , applicat ion-le vel concurrency primitives", SI GPL AN Not. 4 2, 6 Jun. 2007 . [14] M. Crovel la, R. Frangioso, and M. Harchol-Balter, "Conn ection scheduling in web server s" in Proceed ings o f the 2n d USENIX Symposyu m on Intern et Techn ologies and Syst ems , Colorado, USA, Oct 1999 . [15] M. Welsh, D. E. Cu lle r, and E.A. Brewe r, "SEDA: An architectu re for wel l-conditioned, sc alable I nternet services" in Proceedi ngs o f the 1 8th ACM Symposium on Operat ing S ystem Prin ciples , Banff, Canada, Oct. 2001 . [16] Y. Ruan, and V. Pai, "T he origins of network server latency & the my th of conn ection sch eduling", SIGMET RICS Performance Evaluati on, Rev. 32, 1 , pages 424-425 . Jun. 20 04. VIII. B IOGRAPHIES I. Vo ras (M'06), was born in Slavonski Brod, Croatia. He receive d Dipl.ing. in Compu ter Engineering (200 6) from the Faculty of Electrical Engineering and C omputing (FER) at the University of Zagre b, Croatia . Since 2006 he has been employe d b y th e Faculty as an I nternet Services Architect a nd i s a graduate stud ent (PhD) at the same Faculty, where he has p articipated in research projects a t the Department of Control an d Computer Enginee ring. His c urrent research interests a re in th e fields of distrib uted systems and network communi cations, with a special intere st in performance optimizations. He is an active member of several Open source projects a nd is a regular contribu tor to the Free BSD operating system. Contact e-mail address: ivan.voras@fer.hr. D. Basc h w as born in Ri jeka, Croatia. He rece ived Dipl.in g. in Electrical Engineering (1991), M.Sc. (199 4) and Ph. D. (2000) in Compu ter Science from the Faculty of Ele ctrical Engineering an d Comput ing (FER) a t th e University of Zagreb (Croatia). I n 199 2 he joined Department of C ontrol and Computer Engineering (at FER) as a researcher. At present he works at th e same department as an associate professor. His research interests i nclude programming lan guage d esign and implementation, garbage collection algorithms, and modelling and simulation. C ontact e-mail address: danko.b asch@fer.hr. M. Žaga r (M'9 3-SM'04), p rofe ssor of comput ing at the University of Zagreb, Croatia, received Dipl.ing., M.Sc.CS an d Ph .D.CS degre es, a ll from the University of Z agreb, Faculty of El ectrical Engineering and Computin g (F ER) in 1975, 1978, 1985 re spectively . I n 1977 M. Ž agar joined FER and since then has b ee n involved in different scientific p rojects an d educational activiti es. He receive d British C ouncil fel low ship (UMIST - Manch este r, 198 3) an d Fulbright fell ow ship (UCSB - Santa Ba rbara, 1983 /84). His current professional interests inc lude: computer archit ectures, design automation, real-time microcomputers, distributed measurements/control, ub iquitous/ pervasive computing, open c omputing (JavaWorld, XML ,..). M. Žagar i s auth or/co-author of 5 books and a bout 1 00 scientific/ professional journal and conference pap ers . He is senior member in Croatian Academy of Engineering. In 200 6 h e received “ Best edu cator” award fr om the IEEE/CS Croatia Section.. Contact e-mail address: mario.zagar@fer.hr.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment