Enabling Loosely-Coupled Serial Job Execution on the IBM BlueGene/P Supercomputer and the SiCortex SC5832

Our work addresses the enabling of the execution of highly parallel computations composed of loosely coupled serial jobs with no modifications to the respective applications, on large-scale systems. This approach allows new-and potentially far larger…

Authors: Ioan Raicu*, Zhao Zhang+, Mike Wilde#+

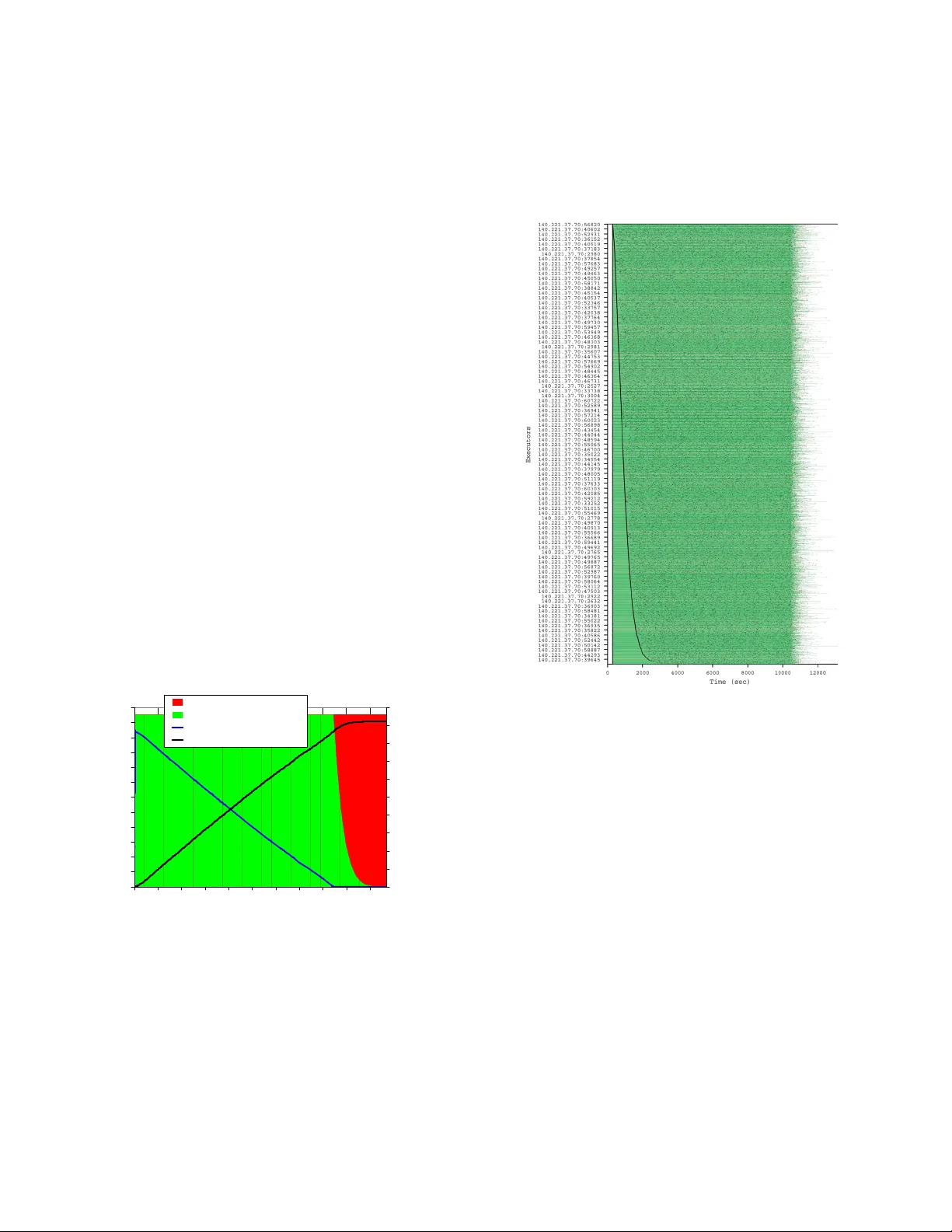

Ioan Raicu*, Zhao Zhang+, Mike Wilde#+, Ian Foster#*+

Affiliations: (*) Department of Computer Science, University of Chicago, IL, USA; (+) Computation Institute, University of Chicago & Argonne National Laboratory, USA; (#) Math and Computer Science Division, Argonne National Laboratory, Argonne IL, USA

iraicu@cs.uchicago.edu, zhaozhang@uchicago.edu, wilde@mcs.anl.gov, foster@mcs.anl.gov

Abstract

Our work addresses the enabling of the execution of highly parallel computations composed of loosely coupled serial jobs with no modifications to the respective applications, on large-scale systems. This approach allows new, and potentially far larger, classes of application to leverage systems such as the IBM Blue Gene/P supercomputer and similar emerging petascale architectures. We present here the challenges of I/O performance encountered in making this model practical, and show results using both micro-benchmarks and real applications on two large-scale systems, the BG/P and the SiCortex SC5832. Our preliminary benchmarks show that we can scale to 4096 processors on the Blue Gene/P and 5832 processors on the SiCortex with high efficiency, and can achieve thousands of tasks/sec sustained execution rates for parallel workloads of ordinary serial applications. We measured applications from two domains, economic energy modeling and molecular dynamics.

Keywords: high throughput computing, loosely coupled applications, petascale systems, Blue Gene, SiCortex, Falkon, Swift

1. Introduction

Emerging petascale computing systems are primarily dedicated to tightly coupled, massively parallel applications implemented using message passing paradigms.
Such systems, typified by IBM's Blue Gene/P [1], include fast integrated custom interconnects, multi-core processors, and multi-level I/O subsystems, technologies that are also found in smaller, lower-cost, and energy-efficient systems such as the SiCortex SC5832 [2]. These architectures are well suited for a large class of applications that require a tightly coupled programming approach. However, there is a potentially larger class of "ordinary" serial applications that are precluded from leveraging the increasing power of modern parallel systems due to the lack of efficient support in those systems for the "scripting" programming model in which application and utility programs are linked into useful workflows through the looser task-coupling model of passing data via files.

With the advances in e-Sciences and the growing complexity of scientific analyses, more and more scientists and researchers are relying on various forms of application scripting systems to automate the workflow of process coordination, derivation automation, provenance tracking, and bookkeeping. Their approaches are typically based on a model of loosely coupled computation, exchanging data via files, databases or XML documents, or a combination of these. Furthermore, with technology advances in both scientific instrumentation and simulation, the volume of scientific datasets is growing exponentially. This vast increase in data volume, combined with the growing complexity of data analysis procedures and algorithms, has rendered traditional manual and even automated serial processing and exploration unfavorable as compared with modern high performance computing processes automated by scientific workflow systems.
We focus in this paper on the ability to execute large scale applications leveraging existing scripting systems on petascale systems such as the IBM Blue Gene/P. Blue Gene-class systems have been traditionally called high performance computing (HPC) systems, as they almost exclusively execute tightly coupled parallel jobs within a particular machine over low-latency interconnects; the applications typically use a message passing interface (e.g. MPI) to achieve the needed inter-process communication. Conversely, high throughput computing (HTC) systems (which scientific workflows can more readily utilize) generally involve the execution of independent, sequential jobs that can be individually scheduled on many different computing resources across multiple administrative boundaries. HTC systems achieve this using various grid computing techniques, and almost exclusively use files, documents or databases rather than messages for inter-process communication.

The hypothesis is that loosely coupled applications can be executed efficiently on today's supercomputers; this paper provides empirical evidence to prove our hypothesis. The paper also describes the set of problems that must be overcome to make loosely-coupled programming practical on emerging petascale architectures: local resource manager scalability and granularity, efficient utilization of the raw hardware, shared file system contention, and application scalability. It describes how we address these problems, and identifies the remaining problems that need to be solved to make loosely coupled supercomputing a practical and accepted reality.
Through our work, we have enabled the BG/P to efficiently support loosely coupled parallel programming without any modifications to the respective applications, enabling the same applications that execute in a distributed grid environment to be run efficiently on the BG/P and similar systems. We validate our hypothesis by testing and measuring two systems, Swift and Falkon, which have been used extensively to execute large-scale loosely coupled applications on clusters and grids. We present results of both micro-benchmarks and real applications executed on two representative large scale systems, the BG/P and the SiCortex SC5832. Focused micro-benchmarks show that we can scale to thousands of processors with high efficiency, and can achieve sustained execution rates of thousands of tasks per second. We investigate two applications from different domains, economic energy modeling and molecular dynamics, and show excellent speedup and efficiency as they scale to thousands of processors.

2. Related Work

Due to the only recent availability of parallel systems with 10K cores or more, and the even scarcer experience or success in loosely-coupled programming at this scale, we find that there is little existing work with which we can compare. The Condor high-throughput system, and in particular its concept of glide-ins, has been compared to Falkon in previous papers [3]. This system was evaluated on the BG/L system [4] and is currently being tested on the BG/P architecture, but performance measurements and application experiences are not yet published. Other local resource managers were evaluated in [3] as well.
In the world of high throughput computing, systems such as Map-Reduce [5], Hadoop [6] and BOINC [7] have utilized highly distributed pools of processors, but the focus (and metrics) of these systems has not been on single highly-parallel machines such as those we focus on here. Map/reduce is typically applied to a data model consisting of name/value pairs, processed at the programming language level. It has several similarities to the approach we apply here, in particular its ability to spread the processing of a large dataset to thousands of processors. However, it is far less amenable to the utilization and chaining of existing application programs, and often involves the development of custom filtering scripts. An approach by Reid called "task farming" [8], also at the programming language level, has been evaluated on the BG/L.

Coordination languages developed in the 1980s and 1990s [9, 10, 11, 12] describe individual computation components and their ports and channels, and the data and event flow between them. They also coordinate the execution of the components, often on parallel computing resources. In the scientific community there are a number of emerging systems for scientific programming and computation [15, 5, 13, 14]. Our work here builds on the Swift parallel programming system [16, 15], in large part because its programming model abstracts the unit of data passing as a dataset rather than directly exposing sets of files, and because its data-flow model exposes the information needed to efficiently compile it for a wide variety of architectures while maintaining a simple common programming model. Detailed data flow analysis towards automating the compilation of conventional in-memory programming models for thousands of cores is described by Hwu et al. [40], but this does not address the simpler and more accessible data flow approach taken by Swift for scripting loosely coupled applications, which leverages implicit parallelism as well.

3. Requirements and Implementation

Our goal of running loosely-coupled applications efficiently and routinely on petascale systems requires that both HTC and HPC applications be able to co-exist on systems such as the BG/P. Petascale systems have been designed as HPC systems, so it is not surprising that the naïve use of these systems for HTC applications yields poor utilization and performance. The contribution of the work we describe here is the ability to enable a new class of applications (large-scale, loosely-coupled) to efficiently execute on petascale systems. This is accomplished primarily through three mechanisms: 1) multi-level scheduling, 2) efficient task dispatch, and 3) extensive use of caching to avoid shared infrastructure (e.g. file systems and interconnects).

Multi-level scheduling is essential on a system such as the BG/P because the local resource manager (LRM, Cobalt [17]) works at a granularity of processor-sets, or PSETs [18], rather than individual computing nodes or processor cores. On the BG/P, a PSET is a group of 64 compute nodes (each with 4 processor cores) and one I/O node. PSETs must be allocated in their entirety to user application jobs by the LRM, which imposes the constraint that the applications must make use of all 256 cores, or waste valuable CPU resources. Tightly coupled MPI applications are well suited for this constraint, but loosely-coupled application workflows, on the other hand, generally have many single processor jobs, each with possibly unique executables and almost always with unique parameters.
Naively running such applications on the BG/P using the system's Cobalt LRM would yield, in the worst case, a 1/256 utilization if the single processor job is not multi-threaded, or 1/64 if it is. In the work we describe here, we use multi-level scheduling to allocate compute resources from Cobalt at the PSET granularity, and then make these computational resources available to applications at a single processor core granularity in order to enable single threaded jobs to execute with up to 100% utilization. Using this multi-level scheduling mechanism, we are able to launch a unique application, or the same application with unique arguments, on each core, and to launch such tasks repetitively throughout the allocation period. This capability is made possible through Falkon and its resource provisioning mechanisms.

A related obstacle to loosely coupled programming when using the native BG/P LRM is the overhead of scheduling and starting resources. The BG/P compute nodes are powered off when not in use, and hence must be booted when allocated to a job. As the compute nodes do not have local disks, the boot up process involves reading a Linux (or POSIX-based ZeptOS [19]) kernel image from a shared file system, which can be expensive if compute nodes are allocated and de-allocated frequently. Using multi-level scheduling allows this high initial cost to be amortized over many jobs, reducing it to an insignificant overhead. With the use of multi-level scheduling, executing a job is reduced to its bare and lightweight essentials: loading the application into memory, executing it, and returning its exit code, a process that can occur in milliseconds. We contrast this with the cost to reboot compute nodes, which is on the order of multiple seconds (for a single node) and can be as high as hundreds of seconds if many compute nodes are rebooting concurrently.
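The utilization and amortization arguments above can be checked with a little arithmetic. The following is a minimal sketch, not part of Falkon or Cobalt: the function names are hypothetical, the 1/64 case is read as a multi-threaded job that keeps the 4 cores of one node busy, and the 100-second boot time and 4-second task length in the usage note are illustrative values only.

```python
# PSET geometry as stated in the text: 64 compute nodes, 4 cores each.
CORES_PER_NODE = 4
NODES_PER_PSET = 64
CORES_PER_PSET = CORES_PER_NODE * NODES_PER_PSET  # 256


def pset_utilization(busy_cores: int) -> float:
    """Fraction of one PSET's cores that are doing useful work."""
    if not 0 <= busy_cores <= CORES_PER_PSET:
        raise ValueError("busy_cores must be between 0 and 256")
    return busy_cores / CORES_PER_PSET


def boot_overhead_fraction(boot_seconds: float,
                           task_seconds: float,
                           tasks_per_boot: int) -> float:
    """Share of a core's allocated time spent booting (hypothetical model).

    One boot is followed by `tasks_per_boot` tasks of equal length, so the
    boot cost is amortized over all of them.
    """
    useful_seconds = task_seconds * tasks_per_boot
    return boot_seconds / (boot_seconds + useful_seconds)


if __name__ == "__main__":
    print(pset_utilization(1))    # one single-threaded job per PSET: 1/256
    print(pset_utilization(4))    # one job using a whole node: 1/64
    print(pset_utilization(256))  # one task per core via multi-level scheduling
    print(boot_overhead_fraction(100.0, 4.0, 1))
    print(boot_overhead_fraction(100.0, 4.0, 1000))
```

Under these assumed values, a node that is rebooted for every 4-second task spends most of its time booting, while a node that runs 1000 such tasks per boot spends under 3% of its time booting.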
The second mechanism that enables loosely-coupled applications to be executed on the BG/P is a streamlined task submission framework (Falkon [3]) which provides efficient task dispatch. This is made possible through Falkon's focus: it relies on LRMs for many functions (e.g., reservation, policy-based scheduling, accounting) and client frameworks such as workflow systems or distributed scripting systems for others (e.g., recovery, data staging, job dependency management). In contrast with typical LRM performance, Falkon achieves several orders of magnitude higher performance (607 to 3773 tasks/sec in a Linux cluster environment, 3057 tasks/sec on the SiCortex, 1758 tasks/sec on BG/P).

To quantify the need and benefit of such high throughputs, we analyze (in Figure 1 and Figure 2) the achievable resource efficiency at two different supercomputer scales (the 4K processors of the BG/P test system currently available to us, and the ultimate size of the ALCF BG/P system, 160K processors [1, 20]) for various throughput levels (1, 10, 100, 1K, and 10K tasks/sec). These graphs show the minimum task durations needed to achieve a range of efficiencies up to 1.0 (100%), given the peak task submission rates of the various actual and hypothetical task scheduling facilities. In this analysis, efficiency is defined as (achieved speedup) / (ideal speedup).

[Figure 1 plot, "Small Supercomputer (4K processors)": efficiency (0% to 100%) versus task length (0.001 to 100000 sec), one curve per dispatch rate: 1 task/sec (i.e. PBS, Condor 6.8), 10 tasks/sec (i.e. Condor 6.9.2), 100 tasks/sec, 1K tasks/sec (i.e. Falkon), 10K tasks/sec]

Figure 1: Theoretical resource efficiency for both a small and large supercomputer in executing 1M tasks of various lengths at various dispatch rates

[Figure 2 plot, "Large Supercomputer (160K processors)": efficiency (0% to 100%) versus task length (0.001 to 100000 sec), one curve per dispatch rate: 1 task/sec (i.e. PBS, Condor 6.8), 10 tasks/sec (i.e. Condor 6.9.2), 100 tasks/sec, 1K tasks/sec (i.e. Falkon), 10K tasks/sec]

Figure 2: Theoretical resource efficiency for both a small and large supercomputer in executing 1M tasks of various lengths at various dispatch rates

We note that current production LRMs require relatively long tasks in order to maintain high efficiency. For example, in the small supercomputer case with 4096 processors, for a scheduler that can submit 10 tasks/sec, tasks need to be 520 seconds in duration in order to get even 90% efficiency; for the same throughput, the required task duration is increased to 30,000 seconds (~9 hours) for 160K processors in order to maintain 90% efficiency. With throughputs of 1000 tasks/sec (which Falkon can sustain on the BG/P), the same 90% efficiency can be reached with tasks of length 3.75 seconds and 256 seconds for the same two cases. Empirical results presented in a later section (Figure 8) show that on the BG/P with 2048 processors, 4 second tasks yield 94% efficiency, and on the SiCortex with 5760 processors, we need 8 second tasks to achieve the same 94% efficiency. The calculations in this analysis show that the higher the throughput rates (tasks/sec) that can be dispatched to and executed on a set of resources, the higher the maximum resource utilization and efficiency for the same workloads and the faster the applications' turn-around times will be, assuming the I/O needed for the application scales with the number of processors.
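The qualitative relationship between dispatch rate, task length, and machine size can be illustrated with a simple steady-state bound. This is a sketch under our own simplifying assumption, not the analysis behind Figures 1 and 2: a dispatcher issuing r tasks/sec, each occupying one processor for T seconds, can keep at most r·T processors busy on average, so efficiency on P processors cannot exceed min(1, r·T/P). The function names are hypothetical. Because this is an optimistic upper bound that ignores start-up and drain effects of a finite 1M-task workload, it yields shorter task lengths than the figures quoted above.

```python
def efficiency_upper_bound(dispatch_rate: float,
                           task_seconds: float,
                           processors: int) -> float:
    """Optimistic steady-state bound on efficiency: min(1, r*T/P)."""
    if dispatch_rate <= 0 or task_seconds <= 0 or processors <= 0:
        raise ValueError("all arguments must be positive")
    return min(1.0, dispatch_rate * task_seconds / processors)


def min_task_seconds(dispatch_rate: float,
                     processors: int,
                     target_efficiency: float) -> float:
    """Shortest task length for which the bound reaches the target efficiency."""
    if not 0 < target_efficiency <= 1:
        raise ValueError("target_efficiency must be in (0, 1]")
    return target_efficiency * processors / dispatch_rate


if __name__ == "__main__":
    # Dispatch rates examined in the text, on the 4096-processor test system.
    for rate in (1, 10, 100, 1_000, 10_000):
        seconds = min_task_seconds(rate, 4096, 0.9)
        print(f"{rate:>6} tasks/sec -> tasks of at least {seconds:.2f} s")
```

Even this optimistic bound shows the same trend as the analysis above: every tenfold increase in dispatch rate reduces the task length needed for a given efficiency by a factor of ten, and a larger machine raises it proportionally.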
Finally, the third mechanism we employ for enabling loosely coupled applications to execute efficiently on the BG/P is extensive use of caching to allow better application scalability by avoiding shared file systems. As workflow systems frequently employ files as the primary communication medium between data-dependent jobs, having efficient mechanisms to read and write files is critical. The compute nodes on the BG/P do not have local disks, but they have both a shared file system (GPFS [21]) and a local file system implemented in RAM ("ramdisk"); the SiCortex is similar as it also has a shared file system (NFS and PVFS), as well as a local file system in RAM. We make extensive use of the ramdisk local file system to cache file objects such as application scripts and binary executables, static input data which is constant across many jobs running an application, and in some cases, output data from the application until enough data is collected to allow efficient writes to the shared file system. We found that naively executing applications directly against the shared file system yielded unacceptably poor performance, but with successive levels of caching we were able to increase the execution efficiency to within a few percent of ideal in many cases.

3.1 Swift and Falkon

To harness a wide array of loosely coupled applications that have already been implemented and executed in clusters and grids, we decided to build upon existing systems (Swift [15] and Falkon [3]). Swift is an emerging system that enables scientific workflows through a data-flow-based functional parallel programming model. It is a parallel scripting tool for rapid and reliable specification, execution, and management of large-scale science and engineering workflows. Swift takes a structured approach to workflow specification, scheduling and execution.
It consists of a simple functional scripting language called SwiftScript for concise specifications of complex parallel computations based on dataset typing and iterators, and dynamic dataset mappings for accessing complex, large scale datasets represented in diverse data formats. The runtime system in Swift relies on the CoG Karajan [22] workflow engine for efficient scheduling and load balancing, and it integrates with the Falkon [3] light-weight task execution service for optimized task throughput and resource efficiency.

Falkon enables the rapid and efficient execution of many independent jobs on large compute clusters. It combines two techniques to achieve this goal: (1) multi-level scheduling with separate treatments of resource provisioning and the dispatch of user tasks to those resources [23, 24], and a streamlined task dispatcher used to achieve order-of-magnitude higher task dispatch rates than conventional schedulers [3]; and (2) data caching and the use of a data-aware scheduler to leverage the co-located computational and storage resources to minimize the use of shared storage infrastructure [25, 26, 27]. Note that we make full use of the first technique on both the BG/P and SiCortex systems. We will investigate harnessing the second technique (data diffusion) to ensure loosely coupled applications have the best opportunity to scale to the full BG/P scale of 160K processors.

We believe the synergy found between Swift and Falkon offers a common generic infrastructure and platform in the science domain for workflow administration, scheduling, execution, monitoring, provenance tracking, etc.
The science community is demanding both specialized, domain-specific languages to improve productivity and efficiency in writing concurrent programs and coordination tools, and generic platforms and infrastructures for the execution and management of large scale scientific applications, where scalability and performance are major concerns. High performance computing support has become an indispensable tool to address the large storage and computing problems emerging in every discipline of 21st century e-science.

Both Swift [15, 28] and Falkon [3] have been used in a variety of environments from clusters (e.g. TeraPort [29]), to multi-site Grids (e.g. Open Science Grid [30], TeraGrid [31]), to specialized large machines (SiCortex [2]), to supercomputers (e.g. IBM BlueGene/P [1]). Large scale applications from many domains (e.g. astronomy [32, 3], medicine [34, 3, 33], chemistry [28], molecular dynamics [36], and economics [38, 37]) have been run at scales of tens of thousands of jobs on thousands of processors, with an order of magnitude larger scale on the horizon.

3.2 Implementation Details

Significant engineering efforts were invested to get Falkon and Swift to work on systems such as the SiCortex and the BG/P. This section discusses extensions we made to both systems, and the problems and bottlenecks they addressed.

3.2.1 Static Resource Provisioning

Falkon as presented in our previous work [3] has support for dynamic resource provisioning, which allows the resource pool to grow and shrink based on load (i.e. wait queue length at the Falkon service). Dynamic resource provisioning in its current implementation depends on GRAM4 [39] to allocate resources in Grids and clusters. Neither the SiCortex nor the BG/P supports GRAM4; the SiCortex uses the SLURM LRM [2] while the BG/P supports the Cobalt LRM [17].
As a first step, we implemented static resource provisioning on both of these systems through the respective LRM. With static resource provisioning the application requests a number of processors for a fixed duration; resources are allocated based on the requirements of the application. In future work, we will port our GRAM4 based dynamic resource provisioning to support SLURM and Cobalt and/or pursue GRAM support on these systems.

3.2.2 Alternative Implementations

Performance depends critically on the behavior of our task dispatch mechanisms; the number of messages needed to interact between the various components of the system; and the hardware, programming language, and compiler used. We implemented Falkon in Java and use the Sun JDK to compile and run Falkon. We use the GT4 Java WS-Core to handle Web Services communications [35].

Running with Java-only components works well on typical Linux clusters and Grids, but the lack of Java on the BG/L, BG/P, and SiCortex execution environments prompted us to replace two main pieces from Falkon. The first change was the rewrite of the Falkon executor code in C. This allowed us to compile and run on the target systems. Once we had the executor implemented in C, we adapted it to interact with the Falkon service. In order to keep the protocol as lightweight as possible, we used a simple TCP-based protocol to replace the existing WS-based protocol. The process of writing the C executor was more complicated than the Java variation, and required more debugging due to exception handling, memory allocation, array handling, etc. Table 1 has a summary of the differences between the two implementations.
Table 1: Feature comparison between the Java Executor implementation and the new C implementation

| Description | Java | C |
|---|---|---|
| Robustness | high | medium |
| Security | GSITransport, GSIConversation, GSIMessageLevel | none; could support SSL |
| Communication Protocol | WS-based | TCP-based |
| Error Recovery | yes | yes |
| Lifetime Management | yes | no |
| Concurrent Tasks | yes | no |
| Push/Pull Model | PUSH (notification based) | PULL |
| Firewall | no | yes |
| NAT / Private Networks | no in general; yes in certain cases | yes |
| Persistent Sockets | no (GT4.0); yes (GT4.2) | yes |
| Performance | medium to high, 600~3700 tasks/s | high, 1700~3200 tasks/s |
| Scalability | high, ~54K CPUs | medium, ~10K CPUs |
| Portability | medium | high (needs recompile) |
| Data Caching | yes | no |

It was not sufficient to change the worker implementation, as the service required corresponding revisions. In addition to the existing support for the WS-based protocol, we implemented a new component called "TCPCore" to handle the TCP-based communication protocol. TCPCore is a component that manages a pool of threads, lives in the same JVM as the Falkon service, and uses in-memory notifications and shared objects to communicate with the Falkon service. In order to make the protocol as efficient as possible, we implemented persistent TCP sockets (which are stored in a hash table based on executor ID or task ID, depending on what state the task is in). Figure 3 gives an overview of the interaction between TCPCore and the Falkon service, and between TCPCore and the executors.

[Figure 3 diagram: the Falkon service, a task queue, two executors, a socket map indexed by task ID, and a socket map indexed by executor ID, connected by numbered steps: 1. dispatch task, 2. get task, 3. remove socket, 4. put socket, 5. dispatch task, 6. get result, 7. remove socket, 8. put socket, 9. send result]

Figure 3: The TCPCore overview, replacing the WS-Core component from the GT4

3.3 Reliability Issues at Large Scale

We discuss reliability only briefly here, to explain how our approach addresses this critical requirement. The BG/L has a mean time between failures (MTBF) of 10 days [4], which means that MPI parallel jobs that span more than 10 days are almost guaranteed to fail, as a single node failing would cause the entire allocation and application to fail as well. As the BG/P will scale to several orders of magnitude larger than the BG/L, we expect its MTBF to continue to pose challenges for long-running applications. When running loosely coupled applications via Swift and Falkon, the failure of a single CPU or node only affects the individual tasks that were being executed at the time of the failure.

Falkon has mechanisms to identify specific errors, and act upon them with specific actions. Most errors are generally passed back up to the application (Swift) to deal with, but other (known) errors can be handled by Falkon. For example, Falkon retries tasks that fail with the "Stale NFS handle" error. This is a fail-fast error which can cause many failures to happen in a short period of time; however, Falkon has mechanisms in place to suspend the offending node if it fails too many jobs. Furthermore, Falkon retries any jobs that failed due to communication errors between the service and the workers, essentially any errors not caused by the application or the shared file system. Swift also has persistent state that allows it to restart a parallel application script from the point of failure, re-executing only uncompleted tasks.
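The error-handling policy described above (retry known errors and communication failures, pass everything else back to the application, and suspend a node that fails too many jobs) can be sketched as follows. This is an illustration only, not Falkon's code: the class name, the method names, the threshold of 3 failures, and the choice to count only retried failures toward suspension are all assumptions.

```python
from collections import defaultdict

# The one retryable error named in the text; a real list would be longer.
RETRYABLE_MARKERS = ("Stale NFS handle",)
MAX_FAILURES_PER_NODE = 3  # hypothetical threshold, not taken from the paper


class FailurePolicy:
    """Decides whether a failed task is retried or reported to the client."""

    def __init__(self, max_failures: int = MAX_FAILURES_PER_NODE) -> None:
        self.max_failures = max_failures
        self.failures = defaultdict(int)  # node name -> retried failure count
        self.suspended = set()            # nodes no longer given work

    def handle(self, node: str, error: str,
               communication_error: bool = False) -> str:
        """Return "retry" for errors handled here, "report" otherwise."""
        known = any(marker in error for marker in RETRYABLE_MARKERS)
        if not (known or communication_error):
            # Application-level errors go back up to the workflow system.
            return "report"
        self.failures[node] += 1
        if self.failures[node] >= self.max_failures:
            # A fail-fast node would otherwise burn through many tasks.
            self.suspended.add(node)
        return "retry"

    def is_suspended(self, node: str) -> bool:
        return node in self.suspended


if __name__ == "__main__":
    policy = FailurePolicy(max_failures=2)
    print(policy.handle("node-7", "Stale NFS handle"))
    print(policy.handle("node-7", "Stale NFS handle"))
    print(policy.is_suspended("node-7"))
    print(policy.handle("node-9", "application exited with code 1"))
```

A dispatcher using such a policy would consult `is_suspended` before assigning work to a node and would resubmit any task for which `handle` returned "retry" to a different node.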
There is no need for explicit check-pointing as is the case with MPI applications; check-pointing occurs inherently with every task that completes and is communicated back to Swift. Compute node failures are all treated independently, as each failure only affects the particular task that it was executing at the time of failure.

4. Micro-Benchmarks Performance

We developed a set of micro-benchmarks to identify performance characteristics and potential bottlenecks on systems with massive numbers of cores. We describe these machines in detail and measure both the task dispatch rates we can achieve for synthetic benchmarks and the costs for various file system operations (read, read+write, invoking scripts, mkdir, etc.) on the shared file systems that we use when running large-scale applications (GPFS and NFS).

4.1 Testbeds Description

The latest IBM BlueGene/P Supercomputer [1] has quad core processors with a total of 160K cores, and has support for a lightweight Linux kernel (ZeptOS [19]) on the compute nodes, making it significantly more accessible to new applications. A reference BG/P with 16 PSETs (1024 nodes, 4096 processors) has been available to us for testing, and the full 640 PSET BG/P will be online at Argonne National Laboratory (ANL) later this year [20]. The BG/P architecture overview is depicted in Figure 4.

[Figure 4 diagram: BG/P packaging hierarchy. Chip: 4 processors, 13.6 GF/s, 8 MB EDRAM. Compute card: 1 chip (1x1x1), 13.6 GF/s, 2 GB DDR. Node card: 32 chips (4x4x2), 32 compute and 0-4 I/O cards, 435 GF/s, 64 GB. Rack: 32 node cards, 14 TF/s, 2 TB. Baseline system: 32 racks, cabled 8x8x16, 500 TF/s, 64 TB]

Figure 4: BG/P Architecture Overview

The full BG/P at ANL will be 111 TFlops with 160K PPC450 processors running at 850MHz, with a total of 80 TB of main memory. The system architecture is designed to scale to 3 PFlops for a total of 4.3 million processors.
The BG/P has both GPFS and PVFS file systems available; in the final production system, the GPFS will be able to sustain 80Gb/s I/O rates. All experiments involving the BG/P were performed on the reference pre-production implementation that had 4096 processors and used the GPFS shared file system.

ANL also acquired a new 6 TFlop machine named the SiCortex [2]; it has 6-core processors for a total of 5832 cores each running at 500 MHz, has a total of 4TB of memory, and runs a standard Linux environment with kernel 2.6. The system is connected to an NFS shared file system which is served by only one server, and can sustain only about 320 Mb/s read performance. A PVFS shared file system is also planned that will increase the read performance to 12,000 Mb/s, but that was not available to us during our testing. All experiments on the SiCortex were performed using the NFS shared file system, the only available shared file system at the time of the experiments.

Figure 5: SiCortex Model 5832

In some experiments, we also used the ANL/UC Linux cluster (a 128 node cluster from the TeraGrid), which consisted of dual Xeon 2.4 GHz CPUs or dual Itanium 1.3GHz CPUs with 4GB of memory and 1Gb/s network connectivity. We also used two other systems for some of the measurements involving the SiCortex and the ANL/UC Linux cluster. One machine (VIPER.CI) was a dual Xeon 3GHz with HT (4 hardware threads), 2GB of RAM, Linux kernel 2.6, and 100 Mb/s network connectivity. The other system (GTO.CI) was a dual Xeon 2.33 GHz with quad cores each (8 cores), 2GB of RAM, Linux kernel 2.6, and 100 Mb/s network connectivity. Both machines had a network latency of less than 2 ms to and from both the SiCortex compute nodes and the ANL/UC Linux cluster. The various systems we used in the experiments conducted in this paper are outlined in Table 2.
Table 2: Summary of testbeds used in section 5 and section 6

| Name | Nodes | CPUs | CPU Type | Speed | RAM | File System | Peak | Operating System |
|---|---|---|---|---|---|---|---|---|
| BG/P | 1024 | 4096 | PPC450 | 0.85 GHz | 2 TB | GPFS | 775 Mb/s | Linux (ZeptOS) |
| BG/P.Login | 8 | 32 | PPC | 2.5 GHz | 32 GB | GPFS | 775 Mb/s | Linux Kernel 2.6 |
| SiCortex | 972 | 5832 | MIPS64 | 0.5 GHz | 3.5 TB | NFS | 320 Mb/s | Linux Kernel 2.6 |
| ANL/UC | 98 | 196 | Xeon | 2.4 GHz | 0.4 TB | GPFS | 3.4 Gb/s | Linux Kernel 2.4 |
| | 62 | 124 | Itanium | 1.3 GHz | 0.25 TB | GPFS | 3.4 Gb/s | Linux Kernel 2.4 |
| VIPER.CI | 1 | 2 | Xeon | 3 GHz | 2 GB | Local | 800 Mb/s | Linux Kernel 2.6 |
| GTO.CI | 1 | 8 | Xeon | 2.3 GHz | 2 GB | Local | 800 Mb/s | Linux Kernel 2.6 |

4.2 Falkon Task Dispatch Performance

One key component to achieving high utilization of large-scale systems is the ability to get high dispatch and execute rates. In previous work [3] we measured that Falkon with the Java executor and WS-based communication protocol achieves 487 tasks/sec in a Linux cluster (ANL/UC) with 256 CPUs, where each task was a “sleep 0” task with no I/O; the machine we used in our previous study was VIPER.CI. We repeated the peak throughput experiment on a variety of systems (ANL/UC Linux cluster, SiCortex, and BG/P) for both versions of the executor (Java and C, WS-based and TCP-based respectively); we also used two different machines to run the service, GTO.CI and BG/P.Login; see Table 2 for a description of each machine.

Figure 6 shows the results we obtained for the peak throughput as measured while submitting, executing, and getting the results from 100K tasks on the various systems. We see that the ANL/UC Linux cluster is up to 604 tasks/sec from 487 tasks/sec (using the Java executor and the WS-based protocol); we attribute the gain in performance solely to the faster machine GTO.CI (8 cores at 2.33 GHz vs. 2 CPUs with HT at 3 GHz each). The test was performed on 200 CPUs, the most CPUs that were available at the time of the experiment.
The same testbed but using the C executor and TCP-based protocol yielded 2534 tasks/sec, a significant improvement in peak throughput. We attribute this to the lesser overhead of the TCP-based protocol (as opposed to the WS-based protocol), and the fact that the C executor is much simpler in logic and features than the Java executor. The same peak throughput on the SiCortex with 5760 CPUs is even higher, 3186 tasks/sec; note that the SiCortex does not support Java. Finally, the BG/P peak throughput was only 1758 tasks/sec for the C executor; similar to the SiCortex, Java is not supported on the BG/P compute nodes. We attribute the lower throughput of the BG/P as compared to the SiCortex to the machine that was used to run the Falkon service. On the BG/P, we used BG/P.Login (a 4-core PPC at 2.5 GHz) while on the SiCortex we used GTO.CI (an 8-core Xeon at 2.33 GHz). These differences in test harness were unavoidable due to firewall constraints.

Figure 6: Task dispatch and execution throughput for trivial tasks with no I/O (sleep 0). Throughput (tasks/sec) by executor implementation and system: ANL/UC, Java, 200 CPUs: 604; ANL/UC, Java, bundling 10, 200 CPUs: 3773; ANL/UC, C, 200 CPUs: 2534; SiCortex, C, 5760 CPUs: 3186; BlueGene/P, C, 1024 CPUs: 1758.

Note that there is also an entry for the ANL/UC Linux cluster with the Java executor and a bundling attribute of 10. The bundling refers to the dispatcher bundling 10 tasks in each communication message that is sent to a worker; the worker then unbundles the 10 tasks, puts them in a local queue, and executes one task per CPU (2 in our case) at a time. This has the added benefit of amortizing the communication overhead over multiple tasks, which drastically improves throughput from 604 to 3773 tasks/sec (higher than all the C executors and TCP-based protocol).
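The bundling idea described above can be illustrated with a minimal sketch. This is not Falkon's dispatcher code; the function names `bundle` and `messages_needed` are hypothetical and only show how grouping tasks reduces the number of communication messages.

```python
from typing import List, Sequence


def bundle(tasks: Sequence[str], bundle_size: int) -> List[List[str]]:
    """Group tasks into messages holding at most bundle_size tasks each."""
    if bundle_size < 1:
        raise ValueError("bundle_size must be >= 1")
    return [list(tasks[i:i + bundle_size])
            for i in range(0, len(tasks), bundle_size)]


def messages_needed(num_tasks: int, bundle_size: int) -> int:
    """Number of dispatch messages required (ceiling division)."""
    return -(-num_tasks // bundle_size)


# 100K trivial tasks, as in the peak throughput experiment.
tasks = ["/bin/sleep 0"] * 100_000
unbundled = messages_needed(len(tasks), 1)   # one message per task
bundled = messages_needed(len(tasks), 10)    # bundling attribute of 10
print(unbundled, bundled)
```

With a bundle size of 10, the same 100K tasks need one tenth as many dispatch messages, which is the amortization effect the text credits for the throughput gain.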
Bundling can be useful when one knows the task granularity a priori and expects the dispatch throughput to be a bottleneck. The bundling feature has not been implemented in the C executor, which means that the C executors were receiving each task separately per executor.

To understand the various costs leading to the throughputs achieved in Figure 6, Figure 7 profiles the service code and breaks down the CPU time by code block. This test was done on VIPER.CI and the ANL/UC Linux cluster with 200 CPUs, with throughputs reaching 487 tasks/sec and 1021 tasks/sec for the Java and C implementations respectively. A significant portion of the CPU time is spent in communication (WS and/or TCP). With bundling (not shown in Figure 7), the communication costs are reduced to 1.2 ms (down from 4.2 ms), as are other costs. Our conclusion is that the peak throughput for small tasks can be increased by adding faster processors, adding more processor cores to the service host, and reducing the communication costs through lighter-weight protocols or bundling where possible.

Figure 7: Falkon profiling comparing the Java and C implementation on VIPER.CI (dual Xeon 3 GHz w/ HT). CPU time per task (ms), broken down into: Task Submit (Client -> Service); Notification for Task Availability (Service -> Executor); Task Dispatch (Service -> Executor); Task Results (Executor -> Service); Notifications for Task Results (Service -> Client); WS communication / TCPCore (Service -> Executor & Executor -> Service).

Peak throughput performance only gives us a rough idea of the kind of utilization and efficiency we can expect; therefore, to better understand the efficiency of executing different workloads, we measured the efficiency of executing varying task lengths.
We measured on the ANL/UC Linux cluster with 200 CPUs, the SiCortex with 5760 CPUs, and the BG/P with 2048 CPUs. We varied the task lengths from 0.1 seconds to 256 seconds (using sleep tasks with no I/O), and ran workloads ranging from 1K tasks to 100K tasks (depending on the task lengths). Figure 8 shows the efficiency we were able to achieve. Note that on a relatively small cluster (200 CPUs), we can achieve 95%+ efficiency with 1 second tasks. Even with 0.1 second tasks, using the C executor, we can achieve 70% efficiency on 200 CPUs. Efficiency can reach 99%+ with 16 second tasks. With larger systems, with more CPUs to keep busy, it takes longer tasks to achieve a given efficiency level. With 2048 CPUs (BG/P), we need 4 second tasks to reach 94% efficiency, while with 5760 CPUs (SiCortex), we need 8 second tasks to reach the same efficiency. With 64 second tasks, the BG/P achieves 99.1% efficiency while the SiCortex achieves 98.5%.

Figure 8: Efficiency graph of various systems (BG/P, SiCortex, and Linux cluster) for both the Java and C worker implementation for various task lengths (0.1 to 256 seconds). Series: ANL/UC, Java, 200 CPUs; ANL/UC, C, 200 CPUs; SiCortex, C, 5760 CPUs; BG/P, C, 2048 CPUs.

Figure 9: Efficiency graph for the BG/P for 1 to 2048 processors and task lengths ranging from 1 to 32 seconds

Figure 9 investigates more closely the effects on efficiency as the number of processors increases from 1 to 2048.
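One way to reason about why larger systems need longer tasks is a simple upper bound: if the service can dispatch at most R tasks/sec, it can keep at most R × L processors busy with tasks of length L seconds. The sketch below is our own simplification, not a model from the measurements; it assumes the peak dispatch rates from Figure 6 are the only limit (and that the BG/P rate measured on 1024 CPUs also applies at 2048 CPUs), so measured efficiencies will be lower than this bound.

```python
def efficiency_upper_bound(dispatch_rate: float, task_length: float, cpus: int) -> float:
    """Upper bound on efficiency when dispatch rate is the only bottleneck.

    dispatch_rate: peak tasks/sec the service sustains
    task_length:   task duration in seconds
    cpus:          number of processors to keep busy
    """
    return min(1.0, dispatch_rate * task_length / cpus)


def min_task_length_to_saturate(dispatch_rate: float, cpus: int) -> float:
    """Shortest task length (sec) at which the bound reaches 100%."""
    return cpus / dispatch_rate


# Peak rates taken from Figure 6 (C executor).
for name, rate, cpus in [("BG/P", 1758, 2048), ("SiCortex", 3186, 5760)]:
    bound = efficiency_upper_bound(rate, 1.0, cpus)
    floor = min_task_length_to_saturate(rate, cpus)
    print(f"{name}: 1 s tasks <= {bound:.0%}, saturation needs >= {floor:.2f} s tasks")
```

Under these assumptions, 1 second tasks cannot keep either machine fully busy, which is consistent in direction with the measured need for multi-second tasks on the larger systems.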
With 4 second tasks, we can get high efficiency with any number of processors; with 1 and 2 second tasks, we achieve high efficiency with a smaller number of processors: 512 and 1024 respectively.

The previous several experiments all investigated the throughput and efficiency of executing tasks which had a small and compact description. For example, the task “/bin/sleep 0” requires only 12 bytes of information. The following experiment (Figure 10) investigates how the throughput is affected by increasing the task description size. For this experiment, we composed 4 different tasks, “/bin/echo ‘string’”, where string is replaced with a different length string to make the task description 10B, 100B, 1KB, and 10KB. We ran this experiment on the SiCortex with 1002 CPUs and the service on GTO.CI, and processed 100K tasks for each case. We see that the throughput with 10B tasks, 3184 tasks/sec, is similar to that of sleep 0 tasks on 5760 CPUs. When the task size is increased to 100B, 1KB, and 10KB, the throughput is reduced to 3011, 2001, and 662 tasks/sec respectively. To better understand the throughput reduction, we also measured the network-level traffic that the service experienced during the experiments. We observed that the aggregate throughput (both received and sent on a full duplex 100 Mb/s network link) increases from 2.9 MB/s to 14.4 MB/s as we vary the task size from 10B to 10KB.

Figure 10: Task description size on the SiCortex and 1K CPUs. Throughput (tasks/sec), network throughput (KB/s), and bytes/task versus task description size (10 to 10,000 bytes).

The bytes/task varies from 934 bytes to 22.3 KB for the 10B to 10KB tasks.
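As a quick consistency check on these figures, multiplying the measured task rate by the measured bytes per task should roughly reproduce the aggregate network throughput. The sketch below assumes 1 KB = 1000 bytes and 1 MB = 10^6 bytes, which the text does not state, so the match is only approximate.

```python
def network_bytes_per_sec(tasks_per_sec: float, bytes_per_task: float) -> float:
    """Aggregate service network traffic implied by task rate and per-task bytes."""
    return tasks_per_sec * bytes_per_task


# 10B task descriptions: 3184 tasks/sec at 934 bytes/task.
small = network_bytes_per_sec(3184, 934)
# 10KB task descriptions: 662 tasks/sec at 22.3 KB/task (assuming 1 KB = 1000 bytes).
large = network_bytes_per_sec(662, 22_300)

print(f"10B tasks:  {small / 1e6:.2f} MB/s")   # reported: 2.9 MB/s
print(f"10KB tasks: {large / 1e6:.2f} MB/s")   # reported: 14.4 MB/s
```

Both products land within a few percent of the reported 2.9 MB/s and 14.4 MB/s, which suggests that the throughput drop for large task descriptions is driven by the volume of bytes moved through the service's network link.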
The formula to compute the bytes per task is 2*task_size + overhead of the TCP-based protocol (including TCP/IP headers) + overhead of the WS-based submission protocol (including XML, SOAP, HTTP, and TCP/IP) + notifications of results from executors back to the service, and from the service to the user. We need to double the task size since the service first receives the task description from the user (or application), and then dispatches it to the remote executor. Only a brief notification with the task ID and exit code of the application is sent back. We might assume that the overhead is 934 - 2*10 = 914 bytes, but from looking at the 10KB tasks, we see that the overhead is 22.3KB - 2*10KB = 2.3KB (higher than 0.9KB). We measured the number of TCP packets to be 7.36 packets/task (10B tasks) and 28.67 packets/task (10KB tasks). The difference in TCP overhead, 853 bytes (with 40 byte headers for TCP/IP, 28.67*40 - 7.36*40), explains most of the difference. We suspect that the remainder of the difference (513 bytes) is due to extra overhead in XML/SOAP/HTTP when submitting the tasks.

4.3 NFS/GPFS Performance

Another key component to getting high utilization and efficiency on large-scale systems is to understand the shared resources well, and to make sure that the compute-to-I/O ratio is proportional in order to achieve the desired performance. This sub-section discusses the shared file system performance of the BG/P. This is an important factor, as Swift uses files for inter-process communication, and these files are transferred from one node to another by means of the shared file system. Future work will remove this bottleneck (e.g., using TCP pipes, MPI messages, or data diffusion [25, 27]), but the current implementation is based on files on shared file systems, and hence we believe it is important to investigate and measure the performance of the BG/P’s GPFS shared file system.

We conducted several experiments with various data sizes (1B to 100MB) on a varying number of CPUs from 4 to 2048; we conducted both read-only tests and read+write tests. Figure 11 shows the aggregate throughput in terms of Mb/s. Note that it requires relatively large access sizes (1MB and larger) in order to saturate the GPFS file system (and/or the I/O nodes that handle the GPFS traffic). The peak throughput achieved for read tests was 775 Mb/s with 1MB data sizes, and 326 Mb/s read+write throughput with 10MB data sizes. At these peak numbers, 2048 CPUs are concurrently accessing the shared file system, so the peak per-processor throughput is a mere 0.379 Mb/s and 0.16 Mb/s for read and read+write respectively. This implies that care must be taken to ensure that the compute-to-I/O ratio to and from the shared file system is balanced in such a way that it fits within the relatively low per-processor throughput.

Figure 11: Aggregate throughput for GPFS on the BG/P. Aggregate throughput (Mb/s) versus data size (1B to 100MB) for 4, 256, and 2048 CPUs, read and read+write.

Figure 12 shows the same information as Figure 11, but shows the task length necessary to achieve 90% efficiency; we show the task length required when reading from GPFS in solid lines and read+write from GPFS in dotted lines.
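To make the compute-to-I/O balance concrete, the sketch below estimates the compute time a task needs in order to stay at a target efficiency given the per-processor share of the shared file system. It is a simplified model of our own, not the method used to produce the measurements: it assumes Mb/s means 10^6 bits per second, that efficiency is compute time divided by compute plus I/O time, and that I/O cost is purely bandwidth-bound (fixed per-operation latency, which matters for small files, is ignored).

```python
def per_cpu_mbps(aggregate_mbps: float, cpus: int) -> float:
    """Per-processor share of the aggregate shared file system throughput."""
    return aggregate_mbps / cpus


def io_seconds(data_bytes: float, cpu_mbps: float) -> float:
    """Time to move data_bytes at cpu_mbps (assumed megabits per second)."""
    return data_bytes * 8 / (cpu_mbps * 1e6)


def min_compute_seconds(io_secs: float, target_efficiency: float) -> float:
    """Compute time needed so that compute / (compute + io) >= target."""
    return io_secs * target_efficiency / (1.0 - target_efficiency)


# Peak read throughput reported for the BG/P: 775 Mb/s across 2048 CPUs.
share = per_cpu_mbps(775, 2048)
io = io_seconds(1_000_000, share)          # reading 1 MB per task
compute = min_compute_seconds(io, 0.90)    # target 90% efficiency
print(f"{share:.3f} Mb/s per CPU, {io:.1f} s I/O, {compute:.0f} s compute needed")
```

Under these assumptions, a task that reads 1 MB at full scale spends about 21 seconds on I/O and would need roughly 190 seconds of computation to remain at 90% efficiency.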
Figure 12: Minimum task lengths (sec) with varying input data required to maintain 90% efficiency. Task length (sec) at 90% efficiency versus data size (1B to 100MB) for 4, 256, and 2048 CPUs, read and read+write.

Looking at the measures of 1 P-SET (blue) and 8 P-SETs (red), we see that no matter how small the input/output data is (1B ~ 100KB), we need to have at least 60+ second tasks to achieve 90% efficiency. If we do both reads and writes, we need at least 129 sec tasks and 260 sec tasks for the 1 byte case for read and read+write respectively. This paints a bleak picture of the BG/P's performance when we need to access GPFS. It is essential that these ratios (task length vs. data size) be considered when implementing an application on the BG/P which needs to access the data from the shared file system (using the loosely coupled model under consideration).

Figure 13 shows another aspect of the GPFS performance on the BG/P for 3 different scales: 4, 256, and 2048 processors. It investigates 2 different benchmarks: the speed at which scripts can be invoked from GPFS, and the speed to create and remove directories on GPFS. We show both aggregate throughput and time (ms) per operation per processor.

Figure 13: Invoking simple script and mkdir/rm. Throughput (ops/sec) and ms/op per processor for script invocation and for mkdir/rm, at 4, 256, and 2048 processors.

Looking at the script invocation first (left two columns), we see that we can only invoke scripts at 109 tasks/sec with 256 processors; it is interesting to note that if this script was on ramdisk, we can achieve over 1700 tasks/sec.
As we add extra processors up to 2048 (also increasing I/O nodes from 1 to 8), we get an almost linear increase in throughput, with 823 tasks/sec. This leads us to conclude that the I/O nodes are the main bottleneck for invoking scripts from GPFS, and not GPFS itself. Also, note the time increase per script invocation per processor: going from 4 to 256 processors increases it from 32 ms to 2342 ms, a significant overhead for relatively small tasks. The second micro-benchmark investigated the performance of creating and removing directories (right two columns). We see that the aggregate throughput stays relatively constant with 4 and 256 processors (within 1 PSET) at 44 and 41 tasks/sec, but drops significantly to 10 tasks/sec with 2048 processors. Note that at 2048 processors, the time needed per processor to create and remove a directory on GPFS is over 207 seconds, an extremely large overhead in comparison with a ramdisk create/remove directory overhead that is in the range of milliseconds.

It is likely that these numbers will improve with time, as the BG/P moves from an early testing machine to a full-scale production system. For example, the peak advertised GPFS performance is rated at 80 Gb/s, yet we only achieved 0.77 Gb/s. We only used 2048 processors (of the total 160K processors that will eventually make up the ALCF BG/P), so if GPFS scales linearly, we will achieve 61.6 Gb/s. It is possible that in the production system with 160K processors, we will not require the full machine to achieve the peak shared file system throughput (as is typical in most large clusters with shared file systems).

5. Loosely Coupled Applications

Synthetic tests and applications offer a great way to understand the performance characteristics of a particular system, but they do not always trivially translate into predictions of how real applications with real I/O will behave. We have worked with two separate groups of scientists from different domains as a first step to show that large-scale loosely coupled applications can run efficiently on the BG/P and the SiCortex systems. The applications are from two domains, molecular dynamics and economic modeling, and both show excellent speedup and efficiency as they scale to thousands of processors.

5.1 Molecular Dynamics: DOCK

Our first application is DOCK Version 5 [36], which we have run on both the BG/P and the SiCortex systems via Swift [15, 28] and Falkon [3]. DOCK addresses the problem of "docking" molecules to each other. In general, "docking" is the identification of the low-energy binding modes of a small molecule, or ligand, within the active site of a macromolecule, or receptor, whose structure is known. A compound that interacts strongly with, or binds, a receptor (such as a protein molecule) associated with a disease may inhibit its function and thus act as a beneficial drug.

Prior to running the real workload, which exhibits wide variability in its job durations, we investigated the scalability of the application under larger than normal I/O to compute ratios and by reducing the number of variables. From the ligand search space, we selected one ligand that needed 17.3 seconds to complete. We then ran a workload with this specific molecule (replicated to many files) on a varying number of processors from 6 to 5760 on the SiCortex. The ratio of I/O to compute was about 35 times higher in this synthetic workload than in the real workload, whose average task execution time was 660 seconds.
Figure 14 shows the results of the synthetic workload on the SiCortex system.

Figure 14: Synthetic workload with deterministic job execution times (17.3 seconds) while varying the number of processors from 6 to 5760 on the SiCortex. Speedup, ideal speedup, and efficiency versus number of CPU cores; insets show execution time per task (sec) over time.

Up to 1536 processors, the application had excellent scalability with 98% efficiency, but due to shared file system contention in reading the input data and writing the output data, the efficiency dropped to below 70% for 3072 processors and below 40% for 5760 processors. We concluded that shared file system contention caused the loss in efficiency, based on the growth in the average execution time per job and its standard deviation as we increased the number of processors. Notice in the lower left corner of Figure 14 how stable the execution times are when running on 768 processors: 17.3 seconds average and 0.336 seconds standard deviation. However, the lower right corner shows the performance on 5760 processors to be an average of 42.9 seconds, with a standard deviation of 12.6 seconds. Note that we ran another synthetic workload that had no I/O (sleep 18) at the full 5760 processor machine scale, which showed an average of 18.1 second execution time (0.1 second standard deviation), which rules out the dispatch/execute mechanism. The likely contention was due to the application’s I/O patterns to the shared file system.

The real workload of the DOCK application involves a wide range of job execution times, ranging from 5.8 seconds to 4178 seconds, with a standard deviation of 478.8 seconds.
This workload (Figure 15 and Figure 16) has a 35X smaller I/O to compute ratio than the synthetic workload presented in Figure 14. Expecting that the application would scale to 5760 processors, we ran a 92K job workload on 5760 processors. In 3.5 hours, we consumed 1.94 CPU years, and had 0 failures throughout the execution of the workload. We also ran the same workload on 102 processors to compute speedup and efficiency, which gave the 5760 processor experiment a speedup of 5650X (ideal being 5760) and an efficiency of 98.2%. Each horizontal green line represents a job computation, and each black tick mark represents the beginning and end of the computation. Note that a large part of the efficiency was lost towards the end of the experiment, as the wide range of job execution times yielded a slow ramp-down of the experiment, leaving a growing number of processors idle.

Figure 15: DOCK application (summary view) on the SiCortex; 92K jobs using 5760 processor cores. Idle CPUs, busy CPUs, wait queue length, and completed micro-tasks versus time (sec).

Despite the loosely coupled nature of this application, our preliminary results show that the DOCK application performs and scales well on thousands of processors. The excellent scalability (98% efficiency when comparing the 5760 processor run with the same workload executed on 102 processors) was achieved only after careful consideration was taken to avoid the shared file system, which included the caching of the multi-megabyte application binaries, and the caching of 35MB of static input data that would have otherwise been read from the shared file system for each job.
Note that each job still had some minimal read and write operations to the shared file system, but they were on the order of 10s of KB, with the majority of the computations being in the 100s of seconds, with an average of 660 seconds.

Figure 16: DOCK application (per processor view) on the SiCortex; 92K jobs using 5760 processor cores

To grasp the magnitude of the DOCK application, the 92K jobs we performed represent only 0.0092% of the search space being considered by the scientists we are working with; simple calculations project a search over the entire parameter space to need 20,938 CPU years, the equivalent of 4.9 years on today’s 4K CPU BG/P, or 48 days on the 160K-core BG/P that will be online later this year at Argonne National Laboratory. This is a large problem that cannot be solved in a reasonable amount of time (<1 year) without a system that has at least 10K processors, but our loosely coupled approach holds great promise for making this problem tractable and manageable.

5.2 Economic Modeling: MARS

The second application whose performance we evaluated on our target architectures was MARS, the Macro Analysis of Refinery Systems, an economic modeling application for petroleum refining developed by D. Hanson and J. Laitner at Argonne [38]. This modeling code performs a fast but broad-based simulation of the economic and environmental parameters of petroleum refining, covering over 20 primary and secondary refinery processes. MARS analyzes the processing stages for six grades of crude oil (from low-sulfur light to high-sulfur very-heavy and synthetic crude), as well as processes for upgrading heavy oils and oil sands. It includes eight major refinery products including gasoline, diesel and jet fuel, and evaluates ranges of product shares.
It models the economic and environmental impacts of the consumption of natural gas, the production and use of hydrogen, and coal-to-liquids co-production, and seeks to provide insights into how refineries can become more efficient through the capture of waste energy. While MARS analyzes this large number of processes and variables, it does so at a coarse level without involving intensive numerics. It consists of about 16K lines of C code, and can process one iteration of a model execution in about 0.5 seconds of BG/P CPU time. Using the power of the BG/P we can perform detailed multi-variable parameter studies of the behavior of all aspects of petroleum refining covered by MARS.

As a simple test of utilizing the BG/P for refinery modeling, we performed a 2D parameter sweep to explore the sensitivity of the investment required to maintain production capacity over a 4-decade span on variations in the diesel production yields from low sulfur light crude and medium sulfur heavy crude oils. This mimics one possible segment of the many complex multivariate parameter studies that become possible with ample computing power. A single MARS model execution involves an application binary of 0.5MB, static input data of 15KB, 2 floating point input variables, and a single floating point output variable. The average micro-task execution time is 0.454 seconds. To scale this efficiently, we performed task-batching of 144 model runs into a single task, yielding a workload with 1KB of input and 1KB of output data, and an average execution time of 65.4 seconds. We executed a workload with 7 million model runs (49K tasks) on 2048 processors on the BG/P (Figure 17 and Figure 18). The experiment consumed 894 CPU hours and took 1601 seconds to complete.
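The task-batching arithmetic above can be reproduced with a short sketch. The helper names are hypothetical, and "7 million" is treated as exactly 7,000,000 model runs, which the text gives only approximately.

```python
import math


def batch_stats(model_runs: int, batch_size: int, micro_task_seconds: float):
    """Return (number of batched tasks, expected seconds per batched task)."""
    tasks = math.ceil(model_runs / batch_size)
    task_seconds = batch_size * micro_task_seconds
    return tasks, task_seconds


def cpu_hours(cpus: int, wall_seconds: float) -> float:
    """CPU hours available when cpus processors are held for wall_seconds."""
    return cpus * wall_seconds / 3600.0


tasks, task_seconds = batch_stats(7_000_000, 144, 0.454)
available = cpu_hours(2048, 1601)
print(tasks, round(task_seconds, 1), round(available, 1))
print(f"utilization: {894 / available:.1%}")  # 894 CPU hours were consumed
```

Batching 144 runs of 0.454 seconds gives about 65.4 seconds per task and roughly 49K tasks, matching the workload described; comparing the 894 CPU hours consumed with the CPU hours available over the 1601 second run gives a utilization of about 98%.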
At the scale of 2048 processors, the per micro-task execution times were quite deterministic, with an average of 0.454 seconds and a standard deviation of 0.026 seconds; this can also be seen from Figure 18, where we see all processors start and stop executing tasks at about the same time (the banding effects in the graph). As a comparison, a 4 processor experiment of the same workload had an average of 0.449 seconds with a standard deviation of 0.003 seconds. The efficiency of the 2048 processor run in comparison to the 4 processor run was 97.3% with a speedup of 1993 (compared to the ideal speedup of 2048).

Figure 17: MARS application (summary view) on the BG/P; 7M micro-tasks (49K tasks) using 2048 processor cores. Idle CPUs, busy CPUs, wait queue length, and completed micro-tasks versus time (sec).

Figure 18: MARS application (per processor view) on the BG/P; 7M micro-tasks (49K tasks) using 2048 processor cores

The results presented in these figures are from a static workload processed directly with Falkon. Swift, on the other hand, can be used to make the workload more dynamic and reliable, and to provide a natural flow from the results of this application to the input of the following stage in a more complex workflow. Swift incurs its own overheads in addition to what Falkon experiences when running the MARS application.
These overheads include 1) managing the data (staging data in and out, copying data from its original location to a workflow-specific location, and back from the workflow directory to the result archival location), 2) creating per-task working directories (via mkdir on the shared file system), and 3) creation and tracking of status log files for each task. We also ran a 16K task (2.4M micro-tasks) workload on 2048 CPUs, which took an end-to-end time of 739.8 seconds. The per-micro-task time was higher than before: 0.602 seconds (up from 0.454 seconds in the 1 node/4 CPU case without any Swift or Falkon overhead). The efficiency based on the average time per micro-task is 75%, but due to the slower dispatch rates (about 100 tasks/sec) and the higher variability in execution times (which yielded a slow ramp-down of the experiment), the end-to-end efficiency was only 70% with a speedup of 1434X (2048 being ideal). The extra overhead (70% vs. 97% efficiency) between the Swift+Falkon execution and the Falkon-only execution can be attributed to the three things mentioned earlier (managing data, creating sandboxes, and keeping track of status files, all on a per-task basis).

It is interesting to note that Swift with the default settings and implementation yielded only 20% efficiency for this workload. We investigated the main bottlenecks, and they seemed to be shared file system related. We applied three distinct optimizations to the Swift wrapper script: 1) placing temporary directories in local ramdisk rather than the shared file system; 2) copying the input data to the local ramdisk of the compute node for each job execution; and 3) creating the per-job logs on local ramdisk and copying them only at the completion of each job (rather than appending to a file on the shared file system at each job status change).
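The three optimizations can be sketched as follows. This is an illustrative Python sketch, not the actual Swift wrapper script; `run_job`, `demo`, and the directory arguments are hypothetical names, and ordinary temporary directories stand in for the node-local ramdisk and the shared file system.

```python
import shutil
import subprocess
import sys
import tempfile
from pathlib import Path


def run_job(cmd, inputs, shared_log_dir, local_scratch):
    """Run one job with its sandbox, inputs, and log on node-local storage."""
    # 1) Temporary working directory on local scratch, not the shared file system.
    sandbox = Path(tempfile.mkdtemp(dir=local_scratch))
    try:
        # 2) Copy the input data to the local sandbox before executing.
        for src in inputs:
            shutil.copy(src, sandbox)
        # 3) Write status changes to a local log while the job runs.
        log = sandbox / "job.log"
        with log.open("a") as fh:
            fh.write("status: started\n")
            fh.flush()  # ensure ordering before the child process writes
            result = subprocess.run(cmd, cwd=sandbox, stdout=fh,
                                    stderr=subprocess.STDOUT)
            fh.write(f"status: exit {result.returncode}\n")
        # Single copy of the finished log to the shared location.
        shared_log_dir = Path(shared_log_dir)
        shared_log_dir.mkdir(parents=True, exist_ok=True)
        dest = shared_log_dir / f"{sandbox.name}.log"
        shutil.copy(log, dest)
        return result.returncode, dest
    finally:
        shutil.rmtree(sandbox, ignore_errors=True)


def demo():
    """Run a trivial job; temporary directories stand in for ramdisk and GPFS."""
    with tempfile.TemporaryDirectory() as scratch, \
            tempfile.TemporaryDirectory() as shared:
        data = Path(shared) / "input.txt"
        data.write_text("hello\n")
        cmd = [sys.executable, "-c", "print(open('input.txt').read().strip())"]
        rc, log_path = run_job(cmd, [data], Path(shared) / "logs", scratch)
        return rc, log_path.read_text()


if __name__ == "__main__":
    print(demo())
```

The point of the design is that the shared file system is touched once to read inputs and once to write the finished log, instead of on every status change.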
These optimizations allowed us to increase the efficiency from 20% to 70% on 2048 processors for the MARS application with task durations of 65.4 seconds (in the ideal case). We will be working to narrow the gap between the efficiencies found when running Swift and those found when running Falkon alone, and hope to get the Swift efficiencies up into the 90% range without increasing the minimum task duration. A relatively straightforward approach to increasing efficiency would be to increase the per-task execution times, which could amortize the per-task overhead better. However, at this stage of its development, 70% efficiency for a generic parallel scripting system running on 2K+ cores with 65 second tasks is a reasonable level of success.

6. Conclusions and Future Work

This paper focused on the ability to manage and execute large-scale applications on petascale class systems. Clusters with 50K+ processor cores are beginning to come online (e.g., TACC Sun Constellation System - Ranger), as are Grids (e.g., TeraGrid) with a dozen sites and 100K+ processors, and supercomputers with 160K processors (e.g., IBM BlueGene/P). Large clusters and supercomputers have traditionally been high performance computing (HPC) systems, as they are efficient at executing tightly coupled parallel jobs within a particular machine with low-latency interconnects; the applications typically use the message passing interface (MPI) to achieve the needed inter-process communication. On the other hand, Grids have been the preferred platform for more loosely coupled applications that tend to be managed and executed through workflow systems. In contrast to HPC (tightly coupled applications), the loosely coupled applications are known to make up high throughput computing (HTC).
HTC systems generally involve the execution of independent, sequential jobs that can be individually scheduled on many different computing resources across multiple administrative boundaries. HTC systems achieve this using various grid computing techniques, and oftentimes use files to achieve the inter-process communication (as opposed to MPI for HPC). Our work shows that today’s existing HPC systems are a viable platform to host loosely coupled HTC applications. We identified challenges that arise in large-scale loosely coupled applications when run on petascale-precursor systems, which can hamper the efficiency and utilization of these large-scale systems. These challenges include local resource manager scalability and granularity, efficient utilization of the raw hardware, shared file system contention and scalability, reliability at scale, application scalability, and understanding the limitations of the HPC systems in order to identify promising and scientifically valuable loosely coupled applications. This paper presented new research, implementations, and applications experience in scaling loosely coupled large-scale applications on the IBM BlueGene/P and the SiCortex. Although our experiments are still on precursor systems (4K processors for the BG/P and 5.8K processors for the SiCortex), the experience we gathered is invaluable in planning to scale these applications another one to two orders of magnitude over the course of the next few months as the 160K processor BG/P comes online. We expect to present results on 40K-160K core systems in the final version of this paper.

For future work, we plan to implement and evaluate enhancements, such as task pre-fetching, alternative technologies, improved data management, and a three-tier architecture.
Task pre-fetching is commonly done in manager-worker systems, where executors can request new tasks before they complete execution of old tasks, thus overlapping communication and execution.

Many Swift applications read and write large amounts of data. Our efforts will in large part be focused on having all data management operations avoid the use of shared file system resources when local file systems can handle the scale of data involved. As we have seen in the results of this paper, data access is the main bottleneck as applications scale. We expect that data caching, proactive data replication, and data-aware scheduling will offer significant performance improvements for applications that exhibit locality in their data access patterns [26]. We have already implemented a data-aware scheduler and support for caching in the Falkon Java executor. In previous work, we have shown in both micro-benchmarks and a large-scale astronomy application that a modest Linux cluster (128 CPUs) can achieve aggregate I/O throughput of tens of Gb/s [25, 27]. We plan to port the same data caching mechanisms from the Java executor to the C executor so we can use these techniques on the BG/P.

Finally, we plan on evolving the Falkon architecture from the current 2-Tier architecture to a 3-Tier one. We expect that this architecture change will allow us to introduce more parallelism and distribution of the currently centralized management component in Falkon, and hence offer higher dispatch and execution rates than Falkon currently supports. This will be critical as we scale to the entire 160K-core BG/P and get data caching implemented and running efficiently to avoid the shared file system overheads.

7. References

[1] IBM BlueGene/P (BG/P), http://www.research.ibm.com/bluegene/, 2008
[2] SiCortex, http://www.sicortex.com/, 2008
[3] I. Raicu, Y. Zhao, C. Dumitrescu, I. Foster, M. Wilde. “Falkon: a Fast and Lightweight Task Execution Framework”, IEEE/ACM SC, 2007
[4] A. Gara, et al. “Overview of the Blue Gene/L system architecture”, IBM Journal of Research and Development, Volume 49, Number 2/3, 2005
[5] J. Dean, S. Ghemawat. “MapReduce: Simplified data processing on large clusters”, OSDI, 2004
[6] A. Bialecki, M. Cafarella, D. Cutting, O. O’Malley. “Hadoop: a framework for running applications on large clusters built of commodity hardware”, http://lucene.apache.org/hadoop/, 2005
[7] D.P. Anderson. “BOINC: A System for Public-Resource Computing and Storage”, 5th IEEE/ACM International Workshop on Grid Computing, 2004
[8] F.J.L. Reid. “Task farming on Blue Gene”, EPCC, Edinburgh University, 2006
[9] S. Ahuja, N. Carriero, D. Gelernter. “Linda and Friends”, IEEE Computer 19 (8), 1986, pp. 26-34
[10] M.R. Barbacci, C.B. Weinstock, D.L. Doubleday, M.J. Gardner, R.W. Lichota. “Durra: A Structure Description Language for Developing Distributed Applications”, Software Engineering Journal, IEEE, pp. 83-94, March 1996
[11] I. Foster. “Compositional Parallel Programming Languages”, ACM Transactions on Programming Languages and Systems 18 (4), 1996, pp. 454-476
[12] I. Foster, S. Taylor. “Strand: New Concepts in Parallel Programming”, Prentice Hall, Englewood Cliffs, N.J., 1990
[13] “The Fortress Programming Language”, http://fortress.sunsource.net/, 2008
[14] “Star-P”, http://www.interactivesupercomputing.com, 2008
[15] Y. Zhao, M. Hategan, B. Clifford, I. Foster, G. von Laszewski, I. Raicu, T. Stef-Praun, M. Wilde. “Swift: Fast, Reliable, Loosely Coupled Parallel Computation”, IEEE Workshop on Scientific Workflows, 2007
[16] “Swift Workflow System”, www.ci.uchicago.edu/swift, 2007
[17] N. Desai. “Cobalt: An Open Source Platform for HPC System Software Research”, Edinburgh BG/L System Software Workshop, 2005
[18] J.E. Moreira, et al. “Blue Gene/L programming and operating environment”, IBM Journal of Research and Development, Volume 49, Number 2/3, 2005
[19] “ZeptoOS: The Small Linux for Big Computers”, http://www-unix.mcs.anl.gov/zeptoos/, 2008
[20] R. Stevens. “The LLNL/ANL/IBM Collaboration to Develop BG/P and BG/Q”, DOE ASCAC Report, 2006
[21] F. Schmuck, R. Haskin. “GPFS: A Shared-Disk File System for Large Computing Clusters”, FAST, 2002
[22] G. von Laszewski, M. Hategan, D. Kodeboyina. “Java CoG Kit Workflow”, in I.J. Taylor, E. Deelman, D.B. Gannon, M. Shields, eds., Workflows for eScience, 2007, pp. 340-356
[23] J.A. Stankovic, K. Ramamritham, D. Niehaus, M. Humphrey, G. Wallace. “The Spring System: Integrated Support for Complex Real-Time Systems”, Real-Time Systems, May 1999, Vol. 16, No. 2/3, pp. 97-125
[24] J. Frey, T. Tannenbaum, I. Foster, M. Frey, S. Tuecke. “Condor-G: A Computation Management Agent for Multi-Institutional Grids”, Cluster Computing, 2002
[25] I. Raicu, Y. Zhao, I. Foster, A. Szalay. “A Data Diffusion Approach to Large Scale Scientific Exploration”, Microsoft eScience Workshop at RENCI, 2007
[26] A. Szalay, A. Bunn, J. Gray, I. Foster, I. Raicu. “The Importance of Data Locality in Distributed Computing Applications”, NSF Workflow Workshop, 2006
[27] I. Raicu, Y. Zhao, I. Foster, A. Szalay. “Accelerating Large-scale Data Exploration through Data Diffusion”, to appear at International Workshop on Data-Aware Distributed Computing, 2008
[28] Y. Zhao, I. Raicu, I. Foster, M. Hategan, V. Nefedova, M. Wilde. “Realizing Fast, Scalable and Reliable Scientific Computations in Grid Environments”, Grid Computing Research Progress, Nova Pub., 2008
[29] TeraPort Cluster, http://teraport.uchicago.edu/, 2007
[30] Open Science Grid (OSG), http://www.opensciencegrid.org/, 2008
[31] C. Catlett, et al. “TeraGrid: Analysis of Organization, System Architecture, and Middleware Enabling New Types of Applications”, HPC, 2006
[32] J.C. Jacob, et al. “The Montage Architecture for Grid-Enabled Science Processing of Large, Distributed Datasets”, Earth Science Technology Conference, 2004
[33] T. Stef-Praun, B. Clifford, I. Foster, U. Hasson, M. Hategan, S. Small, M. Wilde, Y. Zhao. “Accelerating Medical Research using the Swift Workflow System”, Health Grid, 2007
[34] The Functional Magnetic Resonance Imaging Data Center, http://www.fmridc.org/, 2007
[35] I. Foster. “Globus Toolkit Version 4: Software for Service-Oriented Systems”, Conference on Network and Parallel Computing, 2005
[36] D.T. Moustakas, et al. “Development and Validation of a Modular, Extensible Docking Program: DOCK 5”, J. Comput. Aided Mol. Des., 2006, 20, 601-619
[37] T. Stef-Praun, G. Madeira, I. Foster, R. Townsend. “Accelerating solution of a moral hazard problem with Swift”, e-Social Science, 2007
[38] D. Hanson. “Enhancing Technology Representations within the Stanford Energy Modeling Forum (EMF) Climate Economic Models”, Workshop on Energy and Economic Policy Models: A Reexamination of Fundamentals, 2006
[39] M. Feller, I. Foster, S. Martin. “GT4 GRAM: A Functionality and Performance Study”, TeraGrid Conference, 2007
[40] W. Hwu, et al. “Implicitly Parallel Programming Models for Thousand-Core Microprocessors”, Design Automation Conference (DAC-44), 2007
