Text Modeling using Unsupervised Topic Models and Concept Hierarchies

Statistical topic models provide a general data-driven framework for automated discovery of high-level knowledge from large collections of text documents. While topic models can potentially discover a broad range of themes in a data set, the interpre…

Authors: Chaitanya Chemudugunta, Padhraic Smyth, Mark Steyvers

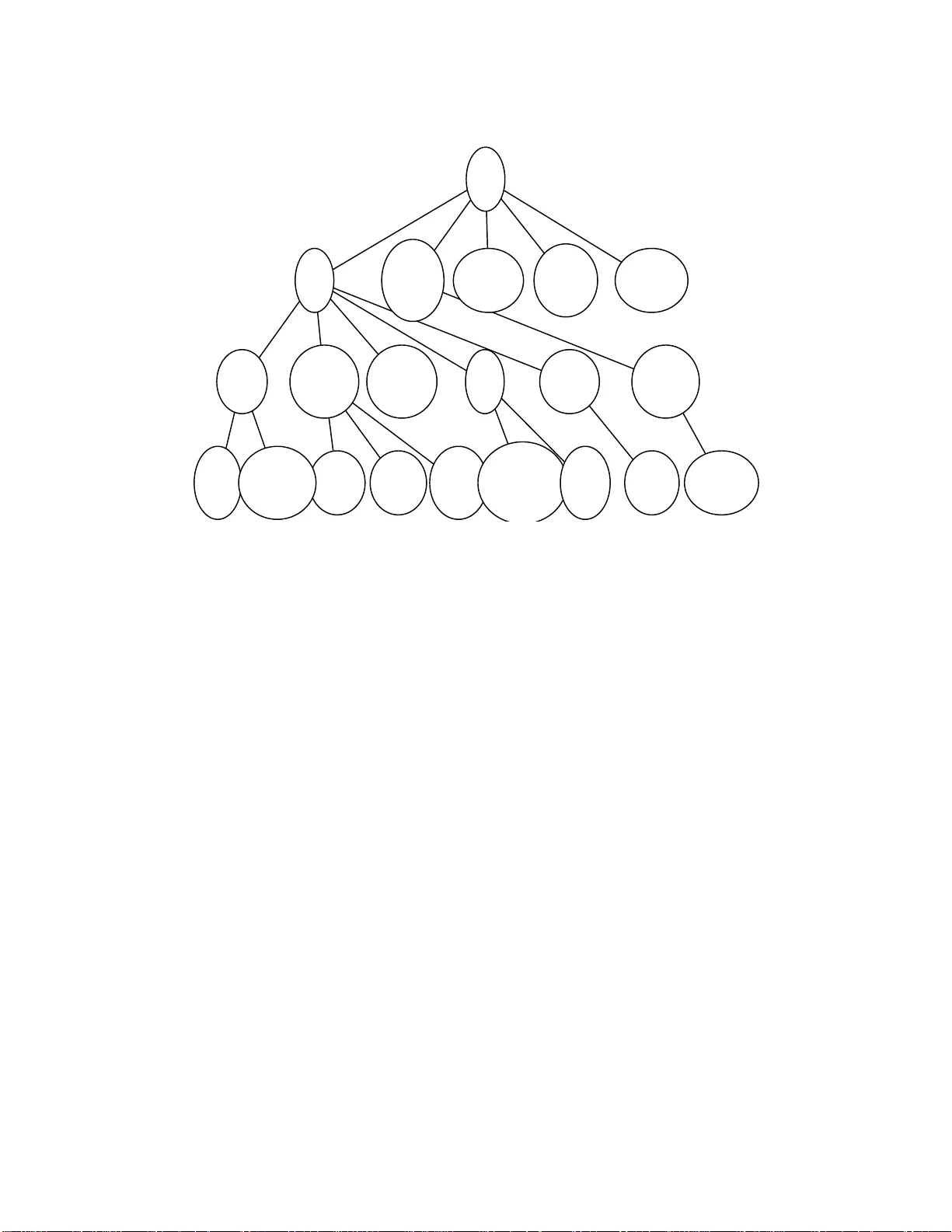

Chaitanya Chemudugunta (CHANDRA@ICS.UCI.EDU)
Department of Computer Science, University of California, Irvine, Irvine, CA, USA

Padhraic Smyth (SMYTH@ICS.UCI.EDU)
Department of Computer Science, University of California, Irvine, Irvine, CA, USA

Mark Steyvers (MSTEYVER@UCI.EDU)
Department of Cognitive Sciences, University of California, Irvine, Irvine, CA, USA

Abstract

Statistical topic models provide a general data-driven framework for automated discovery of high-level knowledge from large collections of text documents. While topic models can potentially discover a broad range of themes in a data set, the interpretability of the learned topics is not always ideal. Human-defined concepts, on the other hand, tend to be semantically richer due to careful selection of words to define concepts but they tend not to cover the themes in a data set exhaustively. In this paper, we propose a probabilistic framework to combine a hierarchy of human-defined semantic concepts with statistical topic models to seek the best of both worlds. Experimental results using two different sources of concept hierarchies and two collections of text documents indicate that this combination leads to systematic improvements in the quality of the associated language models as well as enabling new techniques for inferring and visualizing the semantics of a document.

1. Introduction

There are a variety of popular and useful techniques for automatically summarizing the thematic content of a set of documents including document clustering (Nigam et al., 2000) and latent semantic analysis (Landauer and Dumais, 1997).
A somewhat more recent and general framework that has been developed in this context is latent Dirichlet allocation (Blei et al., 2003), also referred to as statistical topic modeling (Griffiths and Steyvers, 2004). The basic concept underlying statistical topic modeling is that each document is composed of a probability distribution over topics, where each topic is represented as a multinomial probability distribution over words. The document-topic and topic-word distributions are learned automatically from the data in an unsupervised manner with no human labeling or prior knowledge required. The underlying statistical framework of topic modeling enables a variety of interesting extensions to be developed in a systematic manner, such as author-topic models (Steyvers et al., 2004), correlated topics (Blei and Lafferty, 2005), and hierarchical topic models (Blei et al., 2007; Mimno et al., 2007).

| Word | Topic A | Topic B |
|---|---|---|
| database | 0.50 | 0.01 |
| query | 0.30 | 0.01 |
| algorithm | 0.18 | 0.08 |
| semantic | 0.01 | 0.40 |
| knowledge | 0.01 | 0.50 |

Table 1: Toy example illustrating 2 topics each with 5 words.

As an illustrative example, Table 1 shows two example topics defined over a toy vocabulary with 5 words. Individual documents could then be modeled as coming entirely from topic A or from topic B, or more generally as a mixture (50-50, 70-30, 10-90, etc.) from the two topics. The topics learned by a topic model can be thought of as themes that are discovered from a corpus of documents, where the topic-word distributions "focus" on the high probability words that are relevant to a theme.

An entirely different approach is to manually define semantic concepts using human knowledge and judgement. In the construction of ontologies and thesauri it is typically the case that for each concept a relatively small set of important words associated with the concept are defined based on prior knowledge.
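To make the mixture idea concrete, the short sketch below computes the word distribution of a document for several mixing proportions of the two toy topics in Table 1. The function name `mixture_word_probs` is ours and purely illustrative; only the numbers in the two topic lists come from the table.

```python
# Toy vocabulary and topic-word probabilities copied from Table 1.
VOCAB = ["database", "query", "algorithm", "semantic", "knowledge"]
TOPIC_A = [0.50, 0.30, 0.18, 0.01, 0.01]
TOPIC_B = [0.01, 0.01, 0.08, 0.40, 0.50]


def mixture_word_probs(weight_a):
    """Word distribution of a document that draws a fraction `weight_a`
    of its words from topic A and the remainder from topic B."""
    return [weight_a * pa + (1.0 - weight_a) * pb
            for pa, pb in zip(TOPIC_A, TOPIC_B)]


# Documents made entirely of topic A, and 70-30, 50-50 and 10-90 mixtures.
for weight_a in (1.0, 0.7, 0.5, 0.1):
    probs = mixture_word_probs(weight_a)
    print(weight_a, {w: round(p, 3) for w, p in zip(VOCAB, probs)})
```

For a 70-30 mixture, for instance, the probability of "database" is 0.7 × 0.50 + 0.3 × 0.01 = 0.353, and the mixed probabilities still sum to 1.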
Concept names and sets of relations among concepts (for ontologies) are also often provided.

| FAMILY Concept | FAMILY Topic |
|---|---|
| beget | family (0.208) |
| birthright | child (0.171) |
| brood | parent (0.073) |
| brother | young (0.040) |
| children | boy (0.028) |
| distantly | mother (0.027) |
| dynastic | father (0.021) |
| elder | school (0.020) |

Table 2: CALD FAMILY concept and learned FAMILY topic.

Concepts (as defined by humans) and topics (as learned from data) represent similar information but in different ways. As an example, the left column in Table 2 lists some of the 204 words that have been manually defined as part of the concept FAMILY in the Cambridge Advanced Learners Dictionary (more details on this set of concepts are provided later in the paper). The right column shows the high probability words for a learned topic, also about families. This topic was learned automatically from a text corpus using a statistical topic model. The numbers in parentheses are the probabilities that a word will be generated conditioned on the learned topic; these probabilities sum to 1 over the entire vocabulary of words, specifying a multinomial distribution. The concept FAMILY in effect puts probability mass 1 on the set of 204 words within the concept, and probability 0 on all other words. The topic multinomial on the other hand could be viewed as a "soft" version of this idea, with non-zero probabilities for all words in the vocabulary, but significantly skewed, with most of the probability mass focused on a relatively small set of words.

Human-defined concepts are likely to be more interpretable than topics and can be broader in coverage, e.g., by including words such as beget and brood in the concept FAMILY in Table 2.
Such relatively rare words will occur rarely (if at all) in a particular corpus and are thus far less likely to be learned by the topic model as being associated with the more common family words. Topics on the other hand have the advantage of being tuned to the themes in the particular corpus they are trained on. In addition, the probabilistic model that underlies the topic model allows one to automatically tag each word in a document with the topic most likely to have generated it. In contrast, there are no general techniques that we are aware of that can automatically tag words in a document with relevant concepts from an ontology or thesaurus.

In this paper we propose a general framework for combining data-driven topics and semantic concepts, with the goal of taking advantage of the best features of both approaches. Section 2 describes the two large ontologies and the text corpus that we use as the basis for our experiments. We begin Section 3 by reviewing the basic principles of topic models and then introduce the concept-topic model which combines concepts and topics into a single probabilistic model. In Section 4 we then extend the framework to the hierarchical concept-topic model to take advantage of known hierarchical structure among concepts. In Section 5 we discuss a number of examples that illustrate how the hierarchical concept-topic model works, showing for example how an ontology can be matched to a corpus and how documents can be tagged at the word level with concepts from an ontology. Section 6 describes a series of experiments that evaluate the predictive performance of a number of different models, showing for example that prior knowledge of concept words and concept relations can lead to better topic-based language models. Sections 7 and 8 conclude the paper with a brief discussion of future directions and final comments.
In terms of related work, our approach builds on the general topic modeling framework of Blei et al. (2003) and Griffiths and Steyvers (2004) and the hierarchical Pachinko models of Mimno et al. (2007). Almost all earlier work on topic modeling is purely data-driven in that no human knowledge is used in learning the topic models. One exception is the work by Ifrim et al. (2005) who apply the aspect model (Hofmann, 2001) to model background knowledge in the form of concepts to improve text classification. Another exception is the work of Boyd-Graber et al. (2007) who develop a topic modeling framework that combines human-derived linguistic knowledge with unsupervised topic models for the purpose of word-sense disambiguation. Our framework is somewhat more general than both of these approaches in that we not only improve the quality of making predictions on text data by using prior human concepts and concept hierarchy, but also are able to make inferences in the reverse direction about concept words and hierarchies given data.

There is also a significant amount of prior work on using data to help with ontology construction and evaluation, e.g., learning ontologies from text data (e.g., Maedche and Staab (2001)) or methodologies for evaluating how well ontologies are matched to specific text corpora (Brewster et al., 2004; Alani and Brewster, 2006). Our work is broader in scope in that we propose general-purpose probabilistic models that combine concepts and topics within a single framework, allowing us to use the data to make inferences about how documents and concepts are related (for example).
It should be noted that in the work described in this paper we do not explicitly investigate techniques for modifying an ontology in a data-driven manner (e.g., adding/deleting words from concepts or relationships among concepts). However, the framework we propose could certainly be used as a basis for exploring such ideas.

2. Text Data and Concept Sets

The experiments in this paper are based on one large text corpus and two different concept sets. For the text corpus, we used the Touchstone Applied Science Associates (TASA) dataset (Landauer and Dumais, 1997). This corpus consists of D = 37,651 documents with passages excerpted from educational texts used in curricula from the first year of school to the first year of college. The documents are divided into 9 different educational genres. In this paper, we focus on the documents classified as SCIENCE and SOCIAL STUDIES, consisting of D = 5,356 and D = 10,501 documents and 1.7 million and 3.4 million word tokens respectively.

For human-based concepts the first source we used was a thesaurus from the Cambridge Advanced Learner's Dictionary (CALD; http://www.cambridge.org/elt/dictionaries/cald.htm). CALD consists of C = 2,183 hierarchically organized semantic categories. In contrast to other taxonomies such as WordNet (Fellbaum, 1998), CALD groups words primarily according to semantic topics with the topics hierarchically organized. The hierarchy starts with the concept EVERYTHING which splits into 17 concepts at the second level (e.g. SCIENCE, SOCIETY, GENERAL/ABSTRACT, COMMUNICATION, etc). The hierarchy has up to 7 levels. The concepts vary in the number of words with a median of 54 words and a maximum of 3074. Each word can be a member of multiple concepts, especially if the word has multiple senses.
The second source of concepts in our experiments was the Open Directory Project (ODP), a human-edited hierarchical directory of the web (available at http://www.dmoz.org). The ODP database contains descriptions and URLs on a large number of hierarchically organized topics. We extracted all the topics in the SCIENCE subtree, which consists of C = 10,817 concept nodes after preprocessing. The top concept in this hierarchy starts with SCIENCE and divides into topics such as ASTRONOMY, MATH, PHYSICS, etc. Each of these topics divides again into more specific topics with a maximum number of 11 levels. Each node in the hierarchy is associated with a set of URLs related to the topic plus a set of human-edited descriptions of the site content. To create a bag of words representation for each node, we collected all the words in the textual descriptions and also crawled the URLs associated with the node (a total of 78K sites). This led to a vector of word counts for each node.

For both the concept sets, we propagate the words upwards in the concept tree so that an internal concept node is associated with its own words and all the words associated with its children. We created a single W = 21,072 word vocabulary based on the 3-way intersection between the vocabularies of TASA, CALD, and ODP. This vocabulary covers approximately 70% of all of the word tokens in the TASA corpus and is the vocabulary that is used in all of the experiments reported in this paper. We also generated the same set of experimental results using the union of words in TASA, CALD, and ODP, and found the same general behavior as with the intersection vocabulary. We report the intersection results and omit the union results as they are essentially identical to the intersection results.
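The two preprocessing steps described above, propagating words upwards in the concept tree and restricting everything to a shared vocabulary, can be sketched as follows. This is a minimal illustration under our own assumptions: the three-node hierarchy, the word sets and the function name `propagate_words` are invented, word sets are used instead of word counts, and only two vocabularies are intersected rather than three.

```python
def propagate_words(children, own_words, root):
    """Return, for every node, its own words plus all words of its descendants.

    children:  dict mapping a node name to a list of child node names
    own_words: dict mapping a node name to the set of words defined at that node
    """
    full = {}

    def visit(node):
        words = set(own_words.get(node, ()))
        for child in children.get(node, ()):
            words |= visit(child)      # children are already fully propagated
        full[node] = words
        return words

    visit(root)
    return full


# Hypothetical three-node hierarchy, invented for illustration only.
children = {"SCIENCE": ["PHYSICS", "CHEMISTRY"]}
own_words = {
    "SCIENCE": {"experiment"},
    "PHYSICS": {"electron", "quark"},
    "CHEMISTRY": {"oxygen", "electron"},
}
concept_words = propagate_words(children, own_words, "SCIENCE")

# Shared vocabulary: words that occur both in the corpus and in the concept set.
corpus_vocab = {"electron", "oxygen", "experiment", "river"}
vocab = corpus_vocab & concept_words["SCIENCE"]
concept_words = {c: ws & vocab for c, ws in concept_words.items()}
print(sorted(concept_words["SCIENCE"]))
```

After propagation the root holds every word in the hierarchy, and after intersection words such as "quark" (absent from the corpus) and "river" (absent from the concepts) drop out.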
A useful feature of using the intersection is that it allows us to evaluate two different sets of concepts (CALD and ODP) on a common data set (TASA) and vocabulary.

3. Combining Concepts and Topics

In this section, we describe the concept-topic model, detail its generative process, and describe an illustrative example. We first begin with a brief review of the topic model.

3.1 Topic Model

The topic model (or latent Dirichlet allocation model) is a statistical learning technique for extracting a set of topics that describe a collection of documents (Blei et al., 2003). A topic $z$ is represented as a multinomial distribution over the $V$ unique words in a corpus, $p(w|z) = [p(w_1|z), ..., p(w_V|z)]$ such that $\sum_v p(w_v|z) = 1$. Therefore, a topic can be viewed as a $V$-sided die and generating $n$ words from a topic is akin to throwing the topic-die $n$ times. There are a total of $T$ topics and a document $d$ is represented as a multinomial distribution over those $T$ topics, $p(z|d)$, with $1 \le z \le T$ and $\sum_z p(z|d) = 1$. Generating a word from a document $d$ involves first selecting a topic $z$ from the document-topic distribution $p(z|d)$ and then selecting a word from the topic distribution $p(w|z)$. This process is repeated for each word in the document. The conditional probability of a word in a document is given by

$$p(w|d) = \sum_z p(w|z)\, p(z|d) \tag{1}$$

Given the words in a corpus, the inference problem involves estimating the word-topic distributions $p(w|z)$ and the topic-document distributions $p(z|d)$ for the corpus. For the standard topic model, collapsed Gibbs sampling has been successfully applied to do inference on large text collections in an unsupervised fashion (Griffiths and Steyvers, 2004).
Under this technique, words are initially assigned randomly to topics and the algorithm then iterates through each word in the corpus and samples a topic assignment given the topic assignments of all other words in the corpus. This process is repeated until a steady state is reached (e.g. the likelihood of the model on the corpus is not increasing with subsequent iterations) and the topic assignments to words are then used to estimate the word-topic $p(w|z)$ and topic-document $p(z|d)$ distributions. The topic model uses Dirichlet priors on the multinomial distributions $p(w|z)$ and $p(z|d)$. In this paper, we use a fixed symmetric prior on the $p(w|z)$ word-topic distributions and optimize the asymmetric Dirichlet prior parameters on the $p(z|d)$ topic-document distributions using fixed point update equations (as given in Minka (2000)). See Appendix A for more details on inference.

3.2 Concept-Topic Model

The concept-topic model is a simple extension to the topic model where we add $C$ concepts to the $T$ topics of the topic model, resulting in an effective set of $T + C$ "topics" for each document. Recall that a concept is represented as a set of words. The human-defined concepts only give us a membership function over words: either a word is a member of the concept or it is not. One straightforward way to incorporate concepts into the topic modeling framework is to convert them to "topics" by representing them as probability distributions over their associated word sets. In other words, a concept $c$ can be represented by a multinomial distribution $p(w|c)$ such that $\sum_w p(w|c) = 1$ where $w \in c$ (therefore, $p(w|c) = 0$ for $w \notin c$). A document is now represented as a distribution over topics and concepts, $p(z|d)$ where $1 \le z \le T + C$.
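As a small illustration of treating a concept as a constrained "topic", the sketch below turns a word set into a distribution that is zero outside the set and mixes it with one ordinary topic. The uniform values inside the concept are only a placeholder (in the model these probabilities are learned from data), and the concept {semantic, knowledge}, the mixing weights and both function names are hypothetical.

```python
def concept_distribution(concept_words, vocab):
    """Uniform p(w|c) over the concept's member words and exactly 0 elsewhere.

    The uniform values are a placeholder; in the model they are learned.
    """
    members = [w for w in vocab if w in concept_words]
    return [1.0 / len(members) if w in concept_words else 0.0 for w in vocab]


def word_probs(component_weights, components):
    """p(w|d) for a document described by weights over T + C components."""
    vocab_size = len(components[0])
    return [sum(weight * comp[v] for weight, comp in zip(component_weights, components))
            for v in range(vocab_size)]


vocab = ["database", "query", "algorithm", "semantic", "knowledge"]
topic = [0.50, 0.30, 0.18, 0.01, 0.01]                  # Topic A of Table 1 (T = 1)
concept = concept_distribution({"semantic", "knowledge"}, vocab)  # hypothetical (C = 1)
doc_weights = [0.6, 0.4]                                # p(z|d) over the T + C = 2 components
p_w_given_d = word_probs(doc_weights, [topic, concept])
print({w: round(p, 3) for w, p in zip(vocab, p_w_given_d)})
```

Words outside the concept receive probability only through the topic, while "semantic" receives 0.6 × 0.01 + 0.4 × 0.5 = 0.206.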
| Tag | P(c\|d) | Concept | P(w\|c) |
|---|---|---|---|
| a | 0.1702 | PHYSICS | electrons (0.2767), electron (0.1367), radiation (0.0899), protons (0.0723), ions (0.0532), radioactive (0.0476), proton (0.0282) |
| b | 0.1325 | CHEMICAL ELEMENTS | oxygen (0.3023), hydrogen (0.1871), carbon (0.0710), nitrogen (0.0670), sodium (0.0562), sulfur (0.0414), chlorine (0.0398) |
| c | 0.0959 | ATOMS, MOLECULES, AND SUB-ATOMIC PARTICLES | atoms (0.3009), molecules (0.2965), atom (0.2291), molecule (0.1085), ions (0.0262), isotopes (0.0135), ion (0.0105), isotope (0.0069) |
| d | 0.0924 | ELECTRICITY AND ELECTRONICS | electricity (0.2464), electric (0.2291), electrical (0.1082), current (0.0882), flow (0.0448), magnetism (0.0329) |
| o | 0.5091 | OTHER | |

Tagged excerpt (the letter in brackets after a word is its tag):

The hydrogen[b] ions[a] immediately[o] attach[o] themselves to water[o] molecules[c] to form[o] combinations[o] called[o] hydronium ions[a]. The chlorine[b] ions[a] also associate[o] with water[o] molecules[c] and become hydrated. Ordinarily[o], the positive[o] hydronium ions[a] and the negative[o] chlorine[b] ions[a] wander[o] about freely[o] in the solution[o] in all directions[o]. However, when the electrolytic cell[o] is connected[o] to a battery[o], the anode[d] becomes positively[o] charged[a] and the cathode[d] becomes negatively[o] charged[a]. The positively[o] charged[a] hydronium ions[a] are then attracted[o] toward the cathode[d] and the negatively[o] charged[a] chlorine[b] ions[a] are attracted[o] toward the anode[d]. The flow[d] of current[d] inside[o] the cell[o] therefore consists of positive[o] hydronium ions[a] flowing[d] in one direction[o] and negative[o] chlorine[b] ions[a] flowing[d] in the opposite[o] direction[o]. When the hydronium ions[a] reach[o] the cathode[d], which has an excess[o] of electrons[a], each takes[o] one electron[a] from it and thus neutralizes[o] the positively[o] charged[a] hydrogen[b] ion[a] attached[o] to it. The hydrogen[b] ions[a] thus become hydrogen[b] atoms[c] and are released[o] into the solution[o]. Here they pair[o] up to form[o] hydrogen[b] molecules[c] which gradually[o] come out of the solution[o] as bubbles[o] of hydrogen[b] gas[o]. When the chlorine[b] ions[a] reach[o] the anode[d], which has a shortage[o] of electrons[a], they give[o] up their extra[o] electrons[a] and become neutral[a] chlorine[b] atoms[c]. These pair[o] up to form[o] chlorine[b] molecules[c] which gradually[o] come out of the solution[o] as bubbles[o] of chlorine[b] gas[o]. The behavior[o] of hydrochloric acid[o] solution[o] is typical[o] of all electrolytes[o]. In general[o], when acids[o], bases[o], and salts[o] are dissolved[o] in water[o], many of their molecules[c] break[o] up into positively[o] and negatively[o] charged[a] ions[a] which are free[o] to move[o] in the solution[o].

Figure 1: Illustrative example of tagging a document excerpt using the concept model (CM) with concepts from CALD.

The conditional probability of a word $w$ given a document $d$ is

$$p(w|d) = \sum_{t=1}^{T} p(w|t)\, p(t|d) + \sum_{c=1}^{C} p(w|c)\, p(T+c|d) \tag{2}$$

The generative process for a document collection with $D$ documents under the concept-topic model is as follows:

1. For each topic $t \in \{1, ..., T\}$, select a word distribution $\phi_t \sim \mathrm{Dir}(\beta_\phi)$
2. For each concept $c \in \{1, ..., C\}$, select a word distribution $\psi_c \sim \mathrm{Dir}(\beta_\psi)$ (see footnote 1)
3. For each document $d \in \{1, ..., D\}$
   (a) Select a distribution over topics and concepts $\theta_d \sim \mathrm{Dir}(\alpha)$
   (b) For each word $w$ of document $d$
       i. Select a component $z \sim \mathrm{Mult}(\theta_d)$
       ii. If $z \le T$ generate a word from topic $z$, $w \sim \mathrm{Mult}(\phi_z)$; otherwise generate a word from concept $c = z - T$, $w \sim \mathrm{Mult}(\psi_c)$

where $\phi_t$ represents the $p(w|t)$ word-topic distribution for topic $t$, $\psi_c$ represents the $p(w|c)$ word-concept distribution for concept $c$ and $\theta_d$ represents the $p(z|d)$ distribution over topics and concepts for document $d$.
$\beta_\phi$, $\beta_\psi$ and $\alpha$ are the parameters of the Dirichlet priors for $\phi$, $\psi$ and $\theta$ respectively. Every element in the above process is unknown except for the words in the corpus and the membership of words in the human-defined concepts. Thus, the inference problem involves estimating the distributions $\phi$, $\psi$ and $\theta$ given the words in the corpus. The standard collapsed Gibbs sampling scheme previously used to do inference for the topic model can be modified to do inference for the concept-topic model. We also optimize the Dirichlet parameters using the fixed point updates from Minka (2000) after each Gibbs sampling sweep through the corpus.

Footnote 1: Note that $\psi_c$ is a constrained word distribution defined over only the words that are members of the human-defined concept $c$.

The topic model can be viewed as a special case of the concept-topic model when there are no concepts present, i.e. when $C = 0$. The other extreme of this model where $T = 0$, which we refer to as the concept model, is used for illustrative purposes. In our experiments, we refer to the topic model, concept model and the concept-topic model as TM, CM and CTM respectively.

We note that the concept-topic model is not the only way to incorporate semantic concepts. For example, we could use the concept-word associations to build informative priors for the topic model and then allow the inference algorithm to learn word probabilities for all words (for each concept), given the prior and the data. We chose the current approach to exploit the sparsity in the concept-word associations (topics are distributions over all the words in the vocabulary but concepts are restricted to just their associated words). This allows us to easily do inference with tens of thousands of concepts on large document collections.
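The following sketch shows one way a collapsed Gibbs sampler could be modified along these lines and how the sparsity can be exploited: for each word, only the topics and the concepts that contain that word are ever considered. It is not the sampler of Appendix A. The update weights are the standard collapsed form for latent Dirichlet allocation with the concept support size substituted for the vocabulary size, which is our assumption; a single symmetric `alpha` and a single `beta` are used instead of the optimized priors, and the function name and toy corpus are invented.

```python
import random


def gibbs_ctm(docs, vocab_size, num_topics, concepts,
              alpha=0.1, beta=0.01, sweeps=50, seed=0):
    """Collapsed Gibbs sampling for a concept-topic model (illustrative sketch).

    docs:     list of documents, each a list of integer word ids
    concepts: list of sets of word ids, one set per human-defined concept
    Components 0..T-1 are topics; component T + c is concept c.
    Requires at least one topic, or every word to belong to some concept.
    """
    rng = random.Random(seed)
    num_comp = num_topics + len(concepts)
    # Number of words each component may emit: V for topics, |c| for concepts.
    support = [vocab_size] * num_topics + [len(c) for c in concepts]
    # Sparsity: a word may only be assigned to topics and to concepts containing it.
    cands = [list(range(num_topics)) +
             [num_topics + c for c, ws in enumerate(concepts) if w in ws]
             for w in range(vocab_size)]

    n_dk = [[0] * num_comp for _ in docs]      # tokens of document d in component k
    n_kw = [dict() for _ in range(num_comp)]   # tokens of word w in component k
    n_k = [0] * num_comp                       # total tokens in component k
    assign = []

    def update(d, w, k, delta):
        n_dk[d][k] += delta
        n_kw[k][w] = n_kw[k].get(w, 0) + delta
        n_k[k] += delta

    # Random initialisation restricted to the allowed components.
    for d, doc in enumerate(docs):
        assign.append([rng.choice(cands[w]) for w in doc])
        for w, k in zip(doc, assign[d]):
            update(d, w, k, +1)

    for _ in range(sweeps):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                update(d, w, assign[d][i], -1)  # remove the token's current assignment
                weights = [(n_dk[d][k] + alpha) * (n_kw[k].get(w, 0) + beta)
                           / (n_k[k] + beta * support[k]) for k in cands[w]]
                k = rng.choices(cands[w], weights=weights)[0]
                assign[d][i] = k
                update(d, w, k, +1)
    return assign, n_dk, n_kw


# Tiny hypothetical corpus: word ids 0-2 are "physics" words, 3-5 are other words.
docs = [[0, 1, 2, 0, 1], [3, 4, 5, 3, 4], [0, 3, 1, 4]]
concepts = [{0, 1, 2}]                         # one concept covering the physics words
assign, n_dk, n_kw = gibbs_ctm(docs, vocab_size=6, num_topics=2, concepts=concepts)
print(n_dk)
```

Because the candidate list for a word contains only the concepts it belongs to, the cost per token grows with the number of topics plus the (typically small) number of concepts containing that word, not with the total number of concepts.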
A motivation for this approach is that there might be topics present in a corpus (that can be learned) that are not represented in the concept set. Similarly, there may be concepts that are either missing from the text corpus or are rare enough that they are not found in the data-driven topics of the topic model. This marriage of concepts and topics provides a simple way to augment concepts with topics and has the flexibility to mix and match topics and concepts to describe a document.

Figure 1 illustrates concept assignments to individual words in a TASA document with CALD concepts, using the concept model (CM). The four most likely concepts are listed for this document. For each concept, the estimated probability distribution over words is shown next to the concept. In the document, words assigned to the four most likely concepts are tagged with letters a-d (and color coded if viewing in color). The words assigned to any other concept are tagged with "o" and words outside the vocabulary are not tagged. In the concept model, the distributions over concepts within a document are highly skewed such that most probability goes to only a small number of concepts. In the example document, the four most likely concepts cover about 50% of all words in the document.

The figure illustrates that the model correctly disambiguates words that have several conceptual interpretations. For example, the word charged has many different meanings and appears in 20 CALD concepts. In the example document, this word is assigned to the PHYSICS concept which is a reasonable interpretation in this document context. Similarly, the ambiguous words current and flow are correctly assigned to the ELECTRICITY concept.

4. Hierarchical Concept-Topic Model

Concepts are often arranged in a tree-structured hierarchy.
While the concept-topic model provides a simple way to combine concepts and topics, it does not take into account the hierarchical structure of the concepts. In this section, we describe an extension, the hierarchical concept-topic model, that extends the concept-topic model to incorporate the hierarchical structure of the concept set.

Similar to the concept-topic model described in the previous section, there are $T$ topics and $C$ concepts in the hierarchical concept-topic model. For each document $d$, we introduce a "switch" distribution $p(x|d)$ which determines if a word should be generated via the topic route or the concept route. Every word token in the corpus is associated with a binary switch variable $x$. If $x = 0$, the previously described standard topic mechanism of Section 3.1 is used to generate the word. That is, we first select a topic $t$ from a document-specific mixture of topics $p(t|d)$ and generate a word from the word distribution associated with topic $t$. If $x = 1$, we generate the word from one of the $C$ concepts in the concept tree. To do that, we associate with each concept node $c$ in the concept tree a document-specific multinomial distribution with dimensionality equal to $N_c + 1$, where $N_c$ is the number of children of the concept node $c$. This distribution allows us to traverse the concept tree and exit at any of the $C$ nodes in the tree: given that we are at a concept node $c$, there are $N_c$ child concepts to choose from and an additional option to choose an "exit" child to exit the concept tree at concept node $c$. We start our walk through the concept tree at the root node and select a child node from one of its children. We repeat this process until we reach an exit node and the word is generated from the parent of the exit node.
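The walk through the concept tree can be sketched as below. Each node carries a distribution over its children plus one extra "exit" option; the probability of ending at a node is the product of the choices along the path from the root times the exit probability at that node. The five-node tree, its probabilities, the `EXIT` label and the function names are all hypothetical and chosen only to keep the arithmetic easy to check.

```python
import random

EXIT = "<exit>"   # hypothetical label for the extra "exit" child of every node


def sample_concept(child_probs, root, rng):
    """Walk down from the root until the exit option is drawn; return that node."""
    node = root
    while True:
        options = list(child_probs[node])
        weights = [child_probs[node][o] for o in options]
        choice = rng.choices(options, weights=weights)[0]
        if choice == EXIT:
            return node            # the word is generated from this concept
        node = choice


def path_probability(child_probs, parent, node):
    """Probability of walking root -> ... -> node and then exiting at node."""
    prob = child_probs[node][EXIT]
    while parent[node] is not None:
        prob *= child_probs[parent[node]][node]
        node = parent[node]
    return prob


# Document-specific choice distributions for a toy tree (invented numbers).
child_probs = {
    "EVERYTHING": {EXIT: 0.1, "SCIENCE": 0.7, "SOCIETY": 0.2},
    "SCIENCE": {EXIT: 0.2, "PHYSICS": 0.5, "CHEMISTRY": 0.3},
    "SOCIETY": {EXIT: 1.0},
    "PHYSICS": {EXIT: 1.0},
    "CHEMISTRY": {EXIT: 1.0},
}
parent = {"EVERYTHING": None, "SCIENCE": "EVERYTHING", "SOCIETY": "EVERYTHING",
          "PHYSICS": "SCIENCE", "CHEMISTRY": "SCIENCE"}

probs = {c: path_probability(child_probs, parent, c) for c in parent}
print(probs)
print(sample_concept(child_probs, "EVERYTHING", random.Random(0)))
```

In this toy tree the exit probabilities over the five nodes are 0.10, 0.14, 0.20, 0.35 and 0.21, which sum to 1, so the walk defines a proper distribution over concepts.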
Note that for a concept tree with $C$ nodes, there are exactly $C$ distinct ways to select a path and exit the tree, one for each concept.

In the hierarchical concept-topic model, a document is represented as a weighted combination of mixtures of $T$ topics and $C$ paths through the concept tree and the conditional probability of a word $w$ given a document $d$ is given by

$$p(w|d) = P(x=0|d) \sum_t p(w|t)\, p(t|d) + P(x=1|d) \sum_c p(w|c)\, p(c|d) \tag{3}$$

where $p(c|d) = p(\mathrm{exit}|c)\, p(c|\mathrm{parent}(c)) \cdots p(\cdot|\mathrm{root})$.

The generative process for a document collection with $D$ documents under the hierarchical concept-topic model is as follows:

1. For each topic $t \in \{1, ..., T\}$, select a word distribution $\phi_t \sim \mathrm{Dir}(\beta_\phi)$
2. For each concept $c \in \{1, ..., C\}$, select a word distribution $\psi_c \sim \mathrm{Dir}(\beta_\psi)$ (see footnote 2)
3. For each document $d \in \{1, ..., D\}$
   (a) Select a switch distribution $\xi_d \sim \mathrm{Beta}(\gamma)$
   (b) Select a distribution over topics $\theta_d \sim \mathrm{Dir}(\alpha)$
   (c) For each concept $c \in \{1, ..., C\}$
       i. Select a distribution over children of $c$, $\zeta_{cd} \sim \mathrm{Dir}(\tau_c)$
   (d) For each word $w$ of document $d$
       i. Select a binary switch variable $x \sim \mathrm{Bernoulli}(\xi_d)$
       ii. If $x = 0$
           A. Select a topic $z \sim \mathrm{Mult}(\theta_d)$
           B. Generate a word from topic $z$, $w \sim \mathrm{Mult}(\phi_z)$
       iii. Otherwise, create a path starting at the root concept node, $\lambda_1 = 1$
           A. Repeat: select a child of node $\lambda_j$, $\lambda_{j+1} \sim \mathrm{Mult}(\zeta_{\lambda_j d})$, until $\lambda_{j+1}$ is an exit node
           B. Generate a word from concept $c = \lambda_j$, $w \sim \mathrm{Mult}(\psi_c)$; set $z$ to $T + c$

Footnote 2: Note that $\psi_c$ is a constrained word distribution defined over only the words that are members of the human-defined concept $c$.

where $\phi_t$, $\psi_c$, $\beta_\phi$ and $\beta_\psi$ are analogous to the corresponding symbols in the concept-topic model described in the previous section.
$\xi_d$ represents the $p(x|d)$ switch distribution for document $d$, $\theta_d$ represents the $p(t|d)$ distribution over topics for document $d$, $\zeta_{cd}$ represents the multinomial distribution over children of concept node $c$ for document $d$ and $\gamma$, $\alpha$, $\tau_c$ are the parameters of the priors on $\xi_d$, $\theta_d$, $\zeta_{cd}$ respectively. As before, all elements above are unknown except words and the word-concept memberships in the generative process. Details of the inference technique based on collapsed Gibbs sampling (Griffiths and Steyvers, 2004) and fixed point update equations to optimize the Dirichlet parameters (Minka, 2000) are provided in Appendix A.

The generative process above is quite flexible and can handle any directed-acyclic concept graph. The model cannot, however, handle cycles in the concept structure as the walk of the concept graph starting at the root node is not guaranteed to terminate at an exit node.

The word generation mechanism via the concept route in the hierarchical concept-topic model is related to the Hierarchical Pachinko Allocation model 2 (HPAM 2) as described in Mimno et al. (2007). In the HPAM 2 model, topics are arranged in a 3-level hierarchy with root, super-topics and sub-topics at levels 1, 2 and 3 respectively and words are generated by traversing the topic hierarchy and exiting at a specific level and node. In our model, we use a similar mechanism but only for word generation via the concept route. There is additional machinery in our model to incorporate $T$ data-driven topics (in addition to the hierarchy of concepts) and a switching mechanism to choose the word generation process via the concept route or the topic route.

In our experiments, we refer to the hierarchical concept-topic model as HCTM and the version of the model without topics, which we use for illustrative purposes, as HCM.
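Equation (3) can be evaluated directly once the switch probability, the topic mixture and the concept path probabilities of a document are known. The sketch below does this for a toy document; all numbers, the three-word vocabulary and the function name `hctm_word_prob` are hypothetical, and the concept path probabilities $p(c|d)$ are simply given rather than derived from a tree walk.

```python
def hctm_word_prob(w, switch_concept, topic_word, topic_doc, concept_word, concept_doc):
    """Equation (3): mix the topic route (x = 0) and the concept route (x = 1).

    switch_concept: P(x = 1 | d)
    topic_word:     list over topics of dicts word -> p(w|t)
    topic_doc:      list over topics of p(t|d)
    concept_word:   dict concept -> dict word -> p(w|c); missing words have probability 0
    concept_doc:    dict concept -> p(c|d), the path-and-exit probability
    """
    topic_route = sum(p_w.get(w, 0.0) * p_t
                      for p_w, p_t in zip(topic_word, topic_doc))
    concept_route = sum(concept_word[c].get(w, 0.0) * p_c
                        for c, p_c in concept_doc.items())
    return (1.0 - switch_concept) * topic_route + switch_concept * concept_route


# Toy document: one topic, two concepts, invented probabilities.
vocab = ["ion", "atom", "water"]
topic_word = [{"ion": 0.1, "atom": 0.1, "water": 0.8}]
topic_doc = [1.0]
concept_word = {"PHYSICS": {"ion": 0.6, "atom": 0.4}, "ROOT": {"water": 1.0}}
concept_doc = {"PHYSICS": 0.75, "ROOT": 0.25}

p = {w: hctm_word_prob(w, 0.4, topic_word, topic_doc, concept_word, concept_doc)
     for w in vocab}
print({w: round(v, 3) for w, v in p.items()})
```

With $P(x=1|d) = 0.4$, the word "ion" receives 0.6 × 0.1 from the topic route and 0.4 × 0.6 × 0.75 from the concept route, for a total of 0.24; the three word probabilities sum to 1.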
Note that the models we described earlier in Section 3 (CM, CTM etc) ignore any hierarchical information.

There are several advantages of modeling the concept hierarchy. We learn the correlations between the children of a concept via its Dirichlet parameters ($\tau_c$ in the generative process). This enables the model to a priori prefer certain paths in the concept hierarchy given a new document. For example, when trained on scientific documents the model can automatically adjust the Dirichlet parameters to give more weight to the child node "science" of root than say to node "society". We experimentally investigate this aspect of the model by comparing HCM with CM (more details later). Secondly, by selecting a path along the concept hierarchy, the learning algorithm of the hierarchical model also reinforces the probability of the other concept nodes that lie along the path. This is desirable since we expect the concepts to be arranged in the hierarchy by their "semantic proximity". We measured the average minimum path length of 5 high probability concept nodes for 1000 randomly selected science documents from the TASA corpus for both HCM and CM using the CALD concept set. HCM has an average value of 3.92 and CM has an average value of 4.09; the difference across the 1000 documents is significant under a t-test at the 0.05 level. This result indicates that the hierarchical model prefers semantically similar concepts to describe documents. We show some illustrative examples in the next section to demonstrate the usefulness of the hierarchical model.

5. Illustrative Examples

In this section, we provide two illustrative examples from the hierarchical concept model trained on the science genre of the TASA document set. Figure 2 shows the 20 highest probability concepts (along with the ancestors of those nodes) for a random subset of 200 documents.
The concepts are from the CALD concept set. For each concept, the name of the concept is shown in all caps and the number represents the marginal probability for the concept. The marginal probability is computed based on the product of probabilities along the path of reaching the node as well as the probability of exiting at the node and producing the word, marginalized (averaged) across 200 documents.

.10427 ROOT: known, times, developed, human, found
.01149 SCIENCE: process, wastes, cell, carry, chemical
.02236 LIFE, DEATH AND THE LIVING WORLD: food, live, living, grow, eat
.01182 USING THE MIND: nature, find, study, understand, idea
.01061 MOVEMENT AND LOCATION: move, place, ways, hold, quickly
.00464 COMMUNICATION: answer, question, observe, say, words
.00216 FARMING AND FORESTRY: soil, ground, grow, topsoil, humus
.00961 CHEMISTRY: oxygen, carbon, dioxide, nitrogen, hydrogen
.00670 THE EARTH AND OUTER SPACE: earth, atmosphere, sun, erosion, space
.00355 MEASURES AND QUANTITIES: measure, unit, gram, scale, kilogram
.00228 PHYSICS: molecule, molecular, ion, atom, fission
.00417 TECHNOLOGY: current, electrical, voltage, electronic, electric
.00109 ANIMAL FARMING: water, thanks, vaccination, field, politics
.00364 ASTRONOMY: planet, earth, mars, jupiter, saturn
.00587 ELECTRICITY: electricity, electric, current, wire, flow
.00571 CHEMICAL ELEMENTS: hydrogen, sodium, element, chlorine, helium
.00128 GEOGRAPHY: sea, ocean, coast, waters, marine
.00382 WEATHER AND CLIMATE: cold, warm, dry, cool, hot
.00635 ATOMS, MOLECULES AND SUB-ATOMIC PARTICLES: atom, nucleus, electron, proton, neutron
.00343 THE STATE OF MATTER: liquid, solid, gas, molten, melted
.00391 CHEMISTRY - GENERAL WORDS: chemical, atomic, chemistry, chemist, chemically

Figure 2: Illustrative example of marginal concept distributions from the hierarchical concept model learned on science documents using CALD concepts.

Many of the most likely concepts as inferred by the model relate to specific science concepts (e.g. GEOGRAPHY, ASTRONOMY, CHEMISTRY, etc.). These concepts all fall under the general SCIENCE concept, which is also one of the most likely concepts for this document collection. Therefore, the model is able to summarize the semantic themes in a set of documents at multiple levels of granularity. The figure also shows the 5 most likely words associated with each concept. In the original CALD concept set, each concept consists of a set of words and no knowledge is provided about the prominence, frequency or representativeness of words within the concept. In the hierarchical concept model, for each concept a distribution over words is inferred that is tuned to the specific collection of documents. For example, for the concept ASTRONOMY (second from left, bottom row), the word "planet" receives much higher probability than the word "saturn" or "equinox" (not shown), all of which are members of the concept. This highlights the ability of the model to adapt to variations in word usage across document collections.

Figure 3 shows the result of inferring the hierarchical concept mixture for an individual document using both the CALD and the ODP concept sets (Figures 3(b) and 3(c) respectively). For the hierarchy visualization, we selected the 8 concepts with the highest probability and included all ancestors of these concepts when visualizing the tree. This illustration shows that the model is able to give interpretable results for an individual document at multiple levels of granularity. For example, the CALD subtree (Figure 3(b)) highlights the specific semantic themes of FORESTRY, LIGHT, and PLANT ANATOMY along with the more general themes of SCIENCE and LIFE AND DEATH. For the ODP concept set (Figure 3(c)), the likely concepts focus specifically on CANOPY RESEARCH, CONIFEROPHYTA and more general themes such as ECOLOGY and FLORA AND FAUNA. This shows that different concept sets can each produce interpretable and useful document summaries focusing on different aspects of the document.

(a) Forest biomes in the temperate zone are characterized by ample rainfall, seasonal temperature changes, and day length that varies with the season. There are two types of forest biomes in North America: deciduous forest and evergreen forest biomes. Trees that lose their leaves in response to shortening periods of daylight are called deciduous trees. The deciduous forest biome contains many trees, such as maple, oak, and hickory, that lose their leaves each autumn. The fallen leaves form a thick layer of forest litter on the ground, which is slowly broken down by decomposers. Trees use large amounts of water during photosynthesis. Some water escapes through openings in the leaves. During the winter, when the ground is frozen and cannot absorb water, the leafless trees use and lose very little moisture. Losing leaves is an adaptation that helps deciduous trees stay alive through the winter. A variety of wildflowers and shrubs grow in the deciduous forest. These plants grow and bloom early each spring, before the tree leaves have grown back. The canopy of trees shades much of the sunlight from the forest floor in late spring and summer. In the deciduous forest, each layer of plant life has different adaptations. The adaptations enable plants to survive the given amounts of sunlight and moisture in each layer of the forest. For example, mosses and ferns have structures that allow them to grow successfully on the damp, shady, forest floor. The large number of producers in the deciduous forest provide food for a large number of consumers. Deer, mice, pheasants, and quail feed on the leaves, berries, and seeds of plants on the forest floor.

(b) .04897 ROOT; .01377 SCIENCE; .06290 LIFE, DEATH AND THE LIVING WORLD; .00159 LIGHT AND COLOUR; .00053 FARMING AND FORESTRY; .00050 ANIMAL AND PLANT BIOLOGY; .01237 PLANTS AND ANIMALS; .01418 LIGHT; .00015 ANATOMY; .12350 FORESTRY; .00037 PLANTS IN GENERAL; .01049 PLANT ANATOMY; .01014 TREES

(c) .00787 ROOT; .04164 BIOLOGY; .00001 ECOLOGY; .00002 FLORA AND FAUNA; .00002 ECOSYSTEMS; .00457 PLANTAE; .00002 FOREST; .00007 CONIFEROPHYTA; .00004 MAGNOLIOPHYTA; .00012 CANOPY RESEARCH; .00228 CUPRESSACEAE; .00007 PINACEAE; .00005 MAGNOLIOPSIDA

Figure 3: Example of a single TASA document from the science genre (a). The concept distribution inferred by the hierarchical concept model using the CALD concepts (b) and the ODP concepts (c).

6. Experiments

We assess the predictive performance of the topic model, concept-topic model and the hierarchical concept-topic model by comparing their perplexity on unseen words in test documents using concepts from CALD and ODP. Perplexity is a quantitative measure to compare language models (Brown et al., 1992) and is widely used to compare the predictive performance of topic models (e.g. Blei et al. (2003); Griffiths and Steyvers (2004); Chemudugunta et al. (2007); Blei et al. (2007)).
While perplexity does not necessarily directly measure aspects of a model such as interpretability or coverage, it is nonetheless a useful general predictive metric for assessing the quality of a language model. In simulated experiments (not described in this paper) where we swap word pairs randomly across concepts to gradually introduce noise, we found a positive correlation of the quality of concepts with perplexity. In the experiments below, we randomly split documents from science and social studies genres into disjoint train and test sets with 90% of the documents included in the train set and the remaining 10% in the test set. This resulted in training and test sets with $D_{\text{train}} = 4{,}820$ and $D_{\text{test}} = 536$ documents for the science genre and $D_{\text{train}} = 9{,}450$ and $D_{\text{test}} = 1{,}051$ documents for the social studies genre respectively.

6.1 Perplexity

Perplexity is equivalent to the inverse of the geometric mean of per-word likelihood of the heldout data. It can be interpreted as being proportional to the distance (cross entropy to be precise) between the word distribution learned by a model and the word distribution in an unobserved test document. Lower perplexity scores indicate that the model predicted distribution of heldout data is closer to the true distribution. More details about the perplexity computation are provided in Appendix B. For each test document, we use a random 50% of words of the document to estimate document specific distributions and measure perplexity on the remaining 50% of words using the estimated distributions.

6.2 Perplexity Comparison across Models

We compare the perplexity of the topic model (TM), concept-topic model (CTM) and the hierarchical concept-topic model (HCTM) trained on document sets from the science and social studies genres of the TASA collection and using concepts from CALD and ODP concept sets.
For the models using concepts, we indicate the concept set used by appending the name of the concept set to the model name, e.g. HCTM-CALD to indicate that HCTM was trained using concepts from the CALD concept set. Figure 4 shows the perplexity of TM, CTM and HCTM using training documents from the science genre in TASA and testing on documents from the science (top) and social studies (bottom) genres in TASA respectively as a function of number of data-driven topics $T$. The point $T = 0$ indicates that there are no topics used in the model, e.g. for HCTM this point refers to HCM. The results clearly indicate that incorporating concepts greatly improves the perplexity of the models. This difference is even more significant when the model is trained on one genre of documents and tested on documents from a different genre (e.g. see bottom plot of Figure 4), indicating that the models using concepts are robust and can handle noise. TM, on the other hand, is completely data-driven and does not use any human knowledge, so it is not as robust. One important point to note is that this improved performance by the concept models is not due to the high number of effective topics ($T + C$). In fact, even with $T = 2{,}000$ topics TM does not improve its perplexity and even shows signs of deterioration in quality in some cases. In contrast, CTM-ODP and HCTM-ODP, using over 10,000 effective topics, are able to achieve significantly lower perplexity than TM.

Figure 4: Comparing perplexity for TM, CTM and HCTM using training documents from science and testing on science (top) and social studies (bottom) as a function of number of topics. (Horizontal axis: number of topics $T$, from 0 to 2000; curves: TM, CTM-CALD, HCTM-CALD, CTM-ODP, HCTM-ODP.)

Figure 5: Comparing perplexity for TM, CTM and HCTM using training documents from social studies and testing on social studies (top) and science (bottom) as a function of number of topics. (Horizontal axis: number of topics $T$, from 0 to 2000; curves: TM, CTM-CALD, HCTM-CALD, CTM-ODP, HCTM-ODP.)

The corresponding plots for models using training documents from the social studies genre in TASA and testing on documents from the social studies (top) and science (bottom) genres in TASA respectively are shown in Figure 5, with similar qualitative results as in Figure 4. The CALD and ODP concept sets mainly contain science-related concepts and do not contain many social studies related concepts. This is reflected in the results, where the perplexity values of TM and CTM/HCTM trained on documents from the social studies genre are relatively closer (e.g. as shown in the top plot of Figure 5). This is, of course, not true for the bottom plot, as in this case TM again suffers due to the disparity in themes in train and test documents.

Figures 4 and 5 also allow us to compare the advantages of modeling the hierarchy of the concept sets. In both these figures when $T = 0$, the performance of HCTM is always better than the performance of CTM for all cases and for both concept sets. This effect can be attributed to modeling the correlations of the child concept nodes. Note that the one-to-one comparison of concept models with and without the hierarchy to assess the utility of modeling the hierarchy is not straightforward when $T > 0$ because of the differences in the ways the models mix with data-driven topics (e.g. CTM could choose to generate 30% of words using topics whereas HCTM may choose a different fraction).
We next look at the effect of varying the amount of training data for all models. Figure 6 shows the perplexity of the models as a function of varying amount of training data using documents from the science genre in TASA for training and testing on documents from the science (top) and social studies (bottom) genres respectively. Figure 7 shows the corresponding plots for models using training documents from the social studies genre in TASA and testing on documents from the social studies (top) and science (bottom) genres in TASA respectively. In both these figures when there is insufficient training data, the models using concepts significantly outperform the topic model. Among the concept models, HCTM consistently outperforms CTM. Both the concept models take advantage of the restricted word associations used for modeling the concepts that are manually selected on the basis of the semantic similarity of the words. That is, CTM and HCTM make use of prior human knowledge in the form of concepts and the hierarchical structure of concepts (in the case of HCTM) whereas TM relies solely on the training data to learn topics. Prior knowledge is very important when there is insufficient training data (e.g. in the extreme case where there is no training data available, topics of TM will just be uniform distributions and will not perform well for prediction tasks. Concepts, on the other hand, can still use their restricted word associations to make reasonable predictions). This effect is more pronounced when we train on one genre of documents and test on a different genre (bottom plots in both Figures 6 and 7), i.e. prior knowledge becomes even more important for this case. The gap between the concept models and the topic model narrows as we increase the amount of training data. Even at the 100% training data point CTM and HCTM have lower perplexity values than TM.
Figure 6: Comparing perplexity for TM, CTM and HCTM using training documents from science and testing on science (top) and social studies (bottom) as a function of percentage of training documents. (Horizontal axis: percentage of training documents, from 0 to 100; vertical axis: perplexity; curves: TM, CTM-CALD, HCTM-CALD, CTM-ODP, HCTM-ODP.)

Figure 7: Comparing perplexity for TM, CTM and HCTM using training documents from social studies and testing on social studies (top) and science (bottom) as a function of percentage of training documents. (Horizontal axis: percentage of training documents, from 0 to 100; vertical axis: perplexity; curves: TM, CTM-CALD, HCTM-CALD, CTM-ODP, HCTM-ODP.)

7. Future Directions

There are several potentially useful directions in which the hierarchical concept-topic model can be extended. One interesting extension to try is to substitute the Dirichlet prior on the concepts with a Dirichlet Process prior. Under this variation, each concept will now have a potentially infinite number of children, a finite number of which are observed at any given instance (e.g. see Teh et al. (2006)). When we do a random walk through the concept hierarchy to generate a word, we now have an additional option to create a child topic and generate a word from that topic. There would be no need for the switching mechanism as data-driven topics are now part of the concept hierarchy. This model would allow us to add new topics to an existing concept set hierarchy and could potentially be useful in building a recommender system for updating concept ontologies.
An alternative direction to pursue would be to introduce additional machinery in the generative model to handle different aspects of transitions through the concept hierarchy. In HCTM, we currently learn one set of path correlations for the entire corpus (captured by the Dirichlet parameters $\tau$ in HCTM). It would be interesting to introduce another latent variable to model multiple path correlations. Under this extension, documents from different genres can learn different path correlations (similar to Boyd-Graber et al. (2007)). For example, scientific documents could prefer to utilize paths involving scientific concepts and humanities documents could prefer to utilize a different set of path correlations when they are modeled together. This model would also be able to make use of class labels of documents if available. Other potential future directions involve modeling multiple corpora and multiple concept sets and so forth.

8. Conclusions

We have proposed a probabilistic framework for combining data-driven topics and semantically-rich human-defined concepts. We first introduced the concept-topic model, which is a straightforward extension of the topic model, to utilize semantic concepts in the topic modeling framework. This model represents documents as a mixture of topics and concepts, thereby allowing us to describe documents using the semantically rich concepts. We further extended this model with the hierarchical concept-topic model, where we incorporate the concept-set hierarchy into the generative model by modeling the parent-child relationship in the concept hierarchy. Experimental results, using two document collections and two concept sets with approximately 2,000 and 10,000 concepts, indicate that using the semantic concepts significantly improves the quality of the resulting language models.
This improvement is more pronounced when the training documents and test documents belong to different genres. Modeling concepts and their associated hierarchies appears to be particularly useful when there is limited training data; the hierarchical concept-topic model has the best predictive performance overall in this regime. We view the current set of models as a starting point for exploring more expressive generative models that can potentially have wide-ranging applications, particularly in areas of document modeling and tagging, ontology modeling and refining, information retrieval, and so forth.

Acknowledgements

The work of Chaitanya Chemudugunta, Padhraic Smyth, and Mark Steyvers was supported in part by the National Science Foundation under Award Number IIS-0082489. The work of Padhraic Smyth was also supported by a Research Award from Google.

References

H. Alani and C. Brewster. Metrics for ranking ontologies. In 4th Int'l. EON Workshop, 15th Int'l World Wide Web Conf., 2006.

D. M. Blei, A. Y. Ng, and M. I. Jordan. Latent Dirichlet allocation. J. Mach. Learn. Res., 3:993–1022, 2003.

David M. Blei and John D. Lafferty. Correlated topic models. In NIPS, 2005.

David M. Blei, Thomas L. Griffiths, and Michael I. Jordan. The nested Chinese restaurant process and Bayesian inference of topic hierarchies, 2007.

D. Boyd-Graber, D. Blei, and X. Zhu. A topic model for word sense disambiguation. In Proc. 2007 Joint Conf. Empirical Methods in Nat'l. Lang. Processing and Compt'l. Nat'l. Lang. Learning, pages 1024–1033, 2007.

C. Brewster, H. Alani, S. Dasmahapatra, and Y. Wilks. Data driven ontology evaluation. In Int'l. Conf. Language Resources and Evaluation, 2004.

P. F. Brown, P. V. deSouza, R. L. Mercer, V. J. Della Pietra, and J. C. Lai. Class-based n-gram models of natural language. Compt'l. Linguistics, pages 467–479, 1992.
Chaitanya Chemudugunta, Padhraic Smyth, and Mark Steyvers. Modeling general and specific aspects of documents with a probabilistic topic model. In Advances in Neural Information Processing Systems 19, pages 241–248, Cambridge, MA, 2007.

C. Fellbaum, editor. WordNet: An Electronic Lexical Database (Language, Speech and Communication). MIT Press, May 1998.

T. L. Griffiths and M. Steyvers. Finding scientific topics. In Proc. of Nat'l. Academy of Science, volume 101, pages 5228–5235, 2004.

T. Hofmann. Unsupervised learning by probabilistic latent semantic analysis. Mach. Learn. J., 42(1):177–196, 2001.

Georgiana Ifrim, Martin Theobald, and Gerhard Weikum. Learning word-to-concept mappings for automatic text classification. In Luc De Raedt and Stefan Wrobel, editors, Proceedings of the 22nd International Conference on Machine Learning - Learning in Web Search (LWS 2005), pages 18–26, Bonn, Germany, 2005. ISBN 1-59593-180-5.

T. K. Landauer and S. T. Dumais. A solution to Plato's problem: The latent semantic analysis theory of the acquisition, induction and representation of knowledge. Psychological Review, 104:211–240, 1997.

Alexander Maedche and Steffen Staab. Ontology learning for the semantic web. IEEE Intelligent Systems, 16(2):72–79, 2001. ISSN 1541-1672. doi: http://dx.doi.org/10.1109/5254.920602.

David M. Mimno, Wei Li, and Andrew McCallum. Mixtures of hierarchical topics with pachinko allocation. In ICML, pages 633–640, 2007.

Thomas P. Minka. Estimating a Dirichlet distribution. Technical report, Massachusetts Institute of Technology, 2000.

Kamal Nigam, Andrew K. McCallum, Sebastian Thrun, and Tom M. Mitchell. Text classification from labeled and unlabeled documents using EM. Machine Learning, 39(2/3):103–134, 2000.

Mark Steyvers, Padhraic Smyth, Michal Rosen-Zvi, and Thomas L. Griffiths. Probabilistic author-topic models for information discovery. In KDD, pages 306–315, 2004.

Y. W. Teh, M. I. Jordan, M. J. Beal, and D. M. Blei. Hierarchical Dirichlet processes. Journal of the American Statistical Association, 101(476):1566–1581, 2006.

Appendix A. Inference for the Hierarchical Concept-Topic Model

In this section, we provide more details on inference using collapsed Gibbs sampling and parameter estimation for the hierarchical concept-topic model. For all the models used in the paper (TM, CTM, HCTM, etc.), we run Gibbs sampling chains for 500 iterations and estimate the expected values of the model distributions by averaging over samples from 5 independent chains, collecting one sample from the last iteration of each chain. We use a symmetric Dirichlet prior of 0.01 for the multinomial distributions over words (i.e. $\beta_\phi = \beta_\psi = 0.01$ where they are defined) and use asymmetric Dirichlet priors for all the other multinomial distributions (correspondingly, we use an asymmetric Beta prior $\gamma$ for the Bernoulli switch distribution $\xi$ of HCTM) and optimize these parameters using the fixed point update equations given in Minka (2000). We update the Dirichlet parameters after each sweep of the Gibbs sampler through the corpus.

In the hierarchical concept-topic model, $\phi$ and $\psi$ correspond to the set of $p(w|t)$ word-topic and $p(w|c)$ word-concept multinomial distributions with Dirichlet priors $\beta_\phi$ and $\beta_\psi$ respectively. $\xi$ is the set of $p(x|d)$ document-specific Bernoulli switch distributions with Beta prior $\gamma$. $\theta$ corresponds to the set of $p(t|d)$ topic-document multinomial distributions with Dirichlet prior $\alpha$. $\zeta_{cd}$ represents the multinomial distribution over the children of concept node $c$ for document $d$ with Dirichlet prior $\tau_c$; for a data set with $C$ concepts and $D$ documents, there are $C \times D$ such distributions.
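The fixed-point hyperparameter update mentioned above can be sketched in a few lines. This is a minimal pure-Python illustration of one update of an asymmetric Dirichlet parameter vector from per-document counts, following the standard form of Minka's fixed-point iteration. The function names, the toy counts, and the hand-rolled `digamma` approximation (recurrence plus asymptotic series) are assumptions made for illustration, not the authors' implementation.

```python
import math


def digamma(x):
    """Approximate the digamma function for x > 0.

    Uses the recurrence psi(x) = psi(x + 1) - 1/x to shift x to at least 6,
    then an asymptotic series in 1/x.
    """
    result = 0.0
    while x < 6.0:
        result -= 1.0 / x
        x += 1.0
    inv = 1.0 / x
    inv2 = inv * inv
    series = inv2 * (1.0 / 12 - inv2 * (1.0 / 120 - inv2 / 252))
    return result + math.log(x) - 0.5 * inv - series


def update_alpha(alpha, counts):
    """One fixed-point update of Dirichlet parameters.

    alpha:  list of current parameters, one per topic
    counts: list of per-document count vectors, counts[d][t]
    """
    alpha_sum = sum(alpha)
    denom = sum(digamma(sum(c_d) + alpha_sum) - digamma(alpha_sum) for c_d in counts)
    new_alpha = []
    for t, a_t in enumerate(alpha):
        numer = sum(digamma(c_d[t] + a_t) - digamma(a_t) for c_d in counts)
        new_alpha.append(a_t * numer / denom)
    return new_alpha


# Toy counts for 4 documents and 3 topics (made-up numbers).
counts = [[8, 1, 1], [2, 6, 2], [9, 0, 1], [1, 7, 2]]
alpha = [1.0, 1.0, 1.0]
for _ in range(100):
    alpha = update_alpha(alpha, counts)
```

In a sampler, the `counts` would be the current topic-document assignment counts, and an update like this would run once after each sweep through the corpus.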
Using the collapsed Gibbs sampling procedure, the component variables $z_i$ and binary switch variables $x_i$ can be efficiently sampled (after marginalizing the distributions $\phi$, $\psi$, $\xi$, $\theta$ and $\zeta$). The Gibbs sampling equations for the hierarchical concept-topic model are as follows.

Case (i): $x_i = 0$ and $1 \le z_i \le T$

$$
P(x_i = 0, z_i = t \mid w_i = w, \mathbf{w}_{-i}, \mathbf{x}_{-i}, \mathbf{z}_{-i}, \gamma, \alpha, \tau, \beta_\phi, \beta_\psi) \propto
(N_{0d,-i} + \gamma_0) \times \frac{C_{td,-i} + \alpha_t}{\sum_{t'} (C_{t'd,-i} + \alpha_{t'})} \times \frac{C_{wt,-i} + \beta_\phi}{\sum_{w'} (C_{w't,-i} + \beta_\phi)} \tag{4}
$$

Case (ii): $x_i = 1$, $z_i = T + c$ and $1 \le c \le C$

$$
P(x_i = 1, z_i = T + c \mid w_i = w, \mathbf{w}_{-i}, \mathbf{x}_{-i}, \mathbf{z}_{-i}, \gamma, \alpha, \tau, \beta_\phi, \beta_\psi) \propto
(N_{1d,-i} + \gamma_1) \times \prod_{j=2}^{|\lambda|} \frac{C_{\lambda_{j-1}\lambda_j d,-i} + \tau_{\lambda_{j-1}\lambda_j}}{\sum_{k} (C_{\lambda_{j-1}k d,-i} + \tau_{\lambda_{j-1}k})} \times \frac{C_{wc,-i} + \beta_\psi}{\sum_{w' \in c} (C_{w'c,-i} + \beta_\psi)} \tag{5}
$$

where $C_{wt}$ and $C_{wc}$ are the number of times word $w$ is assigned to topic $t$ and concept $c$ respectively. $N_{0d}$ and $N_{1d}$ are the number of times words in document $d$ are generated by topics and by concepts respectively. $C_{td}$ is the number of times topic $t$ is associated with document $d$. $\lambda$ is a vector representing the path from the root to the sampled concept node $c$ and exiting at $c$ (i.e. $\lambda_1$ is the root, $\lambda_{|\lambda|-1} = c$ and $\lambda_{|\lambda|}$ is the exit child of concept node $c$). $C_{\lambda_{j-1}\lambda_j d}$ is the number of times concept node $\lambda_j$ was visited from its parent concept node $\lambda_{j-1}$ in document $d$. Subscript $-i$ denotes that the effect of the current word $w_i$ being sampled is removed from the counts.

As mentioned earlier, we use the fixed point update equations described in Minka (2000) to optimize the asymmetric Dirichlet and Beta distribution parameters. In the hierarchical concept-topic model, the Dirichlet distribution parameters $\alpha$ are updated as follows:

$$
\alpha_t^{\text{new}} = \alpha_t^{\text{old}} \, \frac{\sum_d \left( \Psi(C_{td} + \alpha_t) - \Psi(\alpha_t) \right)}{\sum_d \left( \Psi\!\left(\sum_{t'} C_{t'd} + \sum_{t'} \alpha_{t'}\right) - \Psi\!\left(\sum_{t'} \alpha_{t'}\right) \right)} \tag{6}
$$

where $\Psi(\cdot)$ denotes the digamma function (logarithmic derivative of the Gamma function). Dirichlet distribution parameters $\tau_c$ for $c \in \{1, \ldots, C\}$ and Beta distribution parameters $\gamma$ are updated in a similar fashion.

Point estimates for the distributions marginalized for Gibbs sampling can be obtained by using the counts of assignment variables $z_i$ and $x_i$. The point estimates for $\phi$, $\psi$, $\xi$, $\theta$ and $\zeta_c$ are given by

$$
\begin{aligned}
E[\phi_{wt} \mid \mathbf{w}, \mathbf{z}, \beta_\phi] &= \frac{C_{wt} + \beta_\phi}{\sum_{w'} (C_{w't} + \beta_\phi)} \\
E[\psi_{wc} \mid \mathbf{w}, \mathbf{z}, \beta_\psi] &= \frac{C_{wc} + \beta_\psi}{\sum_{w' \in c} (C_{w'c} + \beta_\psi)} \\
E[\xi_{xd} \mid \mathbf{x}, \gamma] &= \frac{N_{xd} + \gamma_x}{\sum_{x'} (N_{x'd} + \gamma_{x'})} \\
E[\theta_{td} \mid \mathbf{z}, \alpha] &= \frac{C_{td} + \alpha_t}{\sum_{t'} (C_{t'd} + \alpha_{t'})} \\
E[\zeta_{ckd} \mid \mathbf{z}, \tau_c] &= \frac{C_{ckd} + \tau_{ck}}{\sum_{k'} (C_{ck'd} + \tau_{ck'})}
\end{aligned} \tag{7}
$$

Inference using Gibbs sampling and point estimates for the topic model and the concept-topic model can be done in a similar fashion.

Appendix B. Perplexity

Perplexity of a collection of test documents given the training set is defined as:

$$
\text{Perp}(\mathbf{w}_{\text{test}} \mid \mathcal{D}_{\text{train}}) = \exp\left( - \frac{\sum_{d=1}^{D_{\text{test}}} \log p(\mathbf{w}_d \mid \mathcal{D}_{\text{train}})}{\sum_{d=1}^{D_{\text{test}}} N_d} \right)
$$

where $\mathbf{w}_{\text{test}}$ are the words in the test documents, $\mathbf{w}_d$ are the words in document $d$ of the test set, $\mathcal{D}_{\text{train}}$ is the training set, and $N_d$ is the number of words in document $d$.

For the hierarchical concept-topic model, we generate sample-based approximations to $p(\mathbf{w}_d \mid \mathcal{D}_{\text{train}})$ as follows:

$$
p(\mathbf{w}_d \mid \mathcal{D}_{\text{train}}) \approx \frac{1}{S} \sum_{s=1}^{S} p(\mathbf{w}_d \mid \{\xi^s, \theta^s, \zeta^s, \phi^s, \psi^s\})
$$

where $\xi^s$, $\theta^s$, $\zeta^s$, $\phi^s$ and $\psi^s$ are point estimates from $s = 1:S$ different Gibbs sampling runs as defined in Appendix A.
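Because the likelihood of a document is a product over many words, an average over S samples like the one above is usually computed in log space to avoid underflow. The following is a small sketch of that averaging step, assuming the per-sample log-likelihoods of a document have already been computed; the function name `log_mean_exp` and the example numbers are hypothetical.

```python
import math


def log_mean_exp(log_values):
    """Return log((1/S) * sum_s exp(log_values[s])) without underflow."""
    m = max(log_values)
    total = sum(math.exp(v - m) for v in log_values)
    return m + math.log(total) - math.log(len(log_values))


# Hypothetical values of log p(w_d | sample s) for S = 5 chains.
per_chain_loglik = [-1502.3, -1498.7, -1505.1, -1499.9, -1501.0]
log_p_doc = log_mean_exp(per_chain_loglik)
```

Subtracting the maximum before exponentiating keeps every term in a representable range; a direct `math.exp(-1500.0)` would evaluate to zero in double precision.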
Given these point estimates from $S$ chains for document $d$, the probability of the words $\mathbf{w}_d$ in document $d$ can be computed as follows:

$$
p(\mathbf{w}_d \mid \{\xi^s, \theta^s, \zeta^s, \phi^s, \psi^s\}) = \prod_{i=1}^{N_d} \left[ \xi^s_{0d} \sum_{t=1}^{T} \phi^s_{w_i t} \, \theta^s_{td} + \xi^s_{1d} \sum_{c=1}^{C} \psi^s_{w_i c} \prod_{j=2}^{|\lambda|} \zeta^s_{\lambda_{j-1}\lambda_j d} \right]
$$

where $N_d$ is the number of words in the test document $d$, $w_i$ is the $i$th word being predicted in the test document, and $\lambda$ represents a path to the exit child of concept node $c$, starting at the root concept node. Perplexity can be computed for the topic model and the concept-topic model by following a similar procedure.
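As a rough illustration of the computation above for a single sample, the sketch below evaluates the per-word mixture of the topic route and the concept route and converts a held-out log-likelihood into a perplexity. All data structures, names and numbers are assumptions made for illustration; in particular, `path_prob[c]` stands for the precomputed product of $\zeta$ terms along the path from the root to the exit child of concept `c`.

```python
import math


def word_prob(w, xi0, xi1, theta_d, phi, psi, path_prob):
    """p(w | d) under one sample: topic route plus concept route."""
    topic_part = sum(phi[t].get(w, 0.0) * theta_d[t] for t in theta_d)
    concept_part = sum(psi[c].get(w, 0.0) * path_prob[c] for c in path_prob)
    return xi0 * topic_part + xi1 * concept_part


def perplexity(doc_logliks, doc_lengths):
    """exp(-(sum_d log p(w_d)) / (sum_d N_d))."""
    return math.exp(-sum(doc_logliks) / sum(doc_lengths))


# Toy single-document example (all numbers are made up).
phi = {0: {"tree": 0.5, "leaf": 0.5}}               # p(w | t)
psi = {"FORESTRY": {"tree": 0.25, "forest": 0.75}}  # p(w | c)
theta_d = {0: 1.0}                                  # p(t | d)
path_prob = {"FORESTRY": 1.0}                       # path probability to exit at c
doc = ["tree", "forest", "leaf", "tree"]
loglik = sum(
    math.log(word_prob(w, 0.5, 0.5, theta_d, phi, psi, path_prob)) for w in doc
)
ppl = perplexity([loglik], [len(doc)])
```

A model that assigned every word a probability of 1/V would score a perplexity of exactly V, which gives a convenient sanity check for an implementation.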
