Local Procrustes for Manifold Embedding: A Measure of Embedding Quality and Embedding Algorithms

We present the Procrustes measure, a novel measure based on Procrustes rotation that enables quantitative comparison of the output of manifold-based embedding algorithms (such as LLE (Roweis and Saul, 2000) and Isomap (Tenenbaum et al, 2000)). The me…

Authors: Y. Goldberg, Y. Ritov

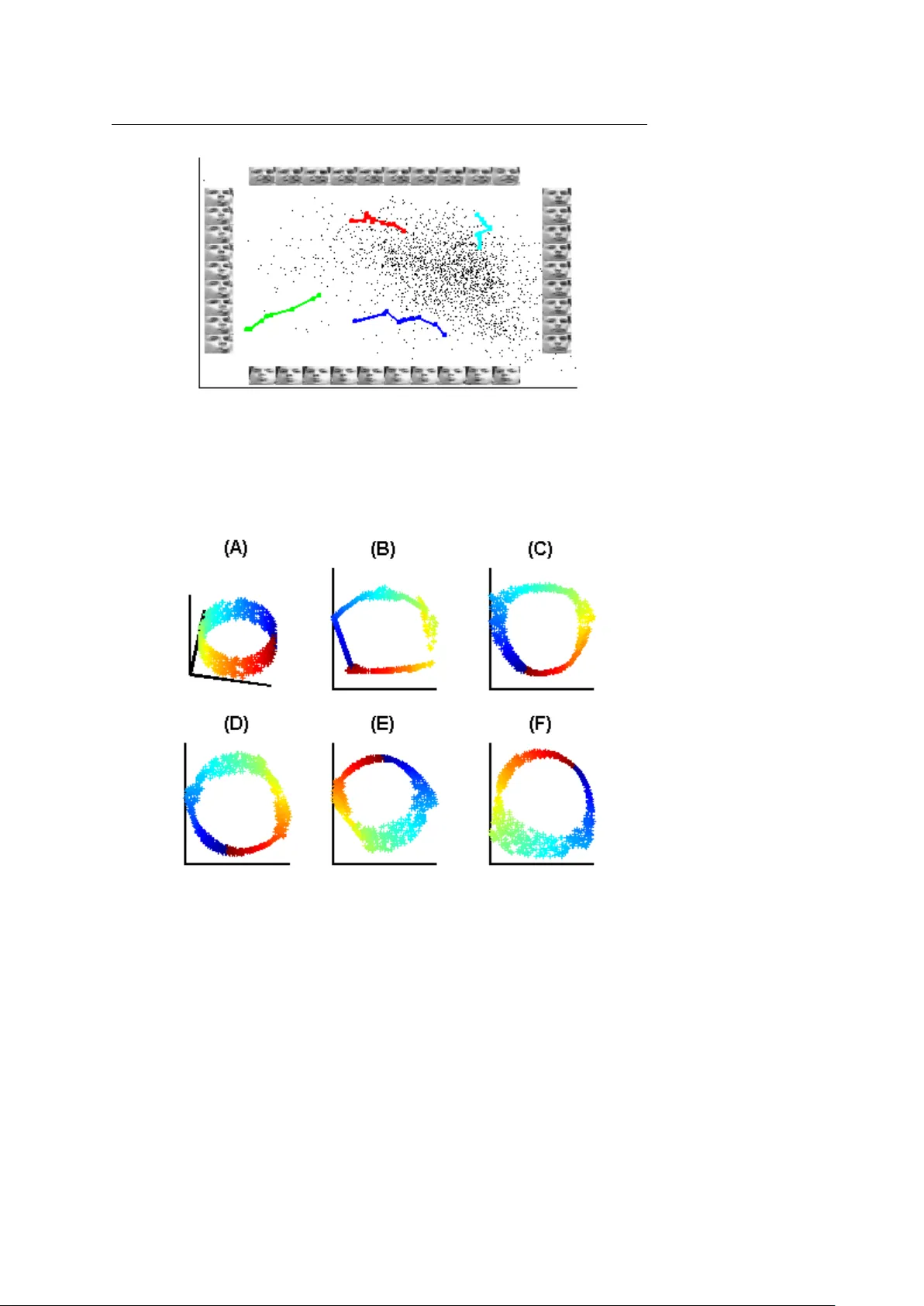

Machine Learning manuscript No. (will be inserted by the editor) Local Procrustes f or Manif old Embedding: A Measur e of Embedding Quality and Embedding Algorithms Y air Goldber g · Y a’acov Rito v Receiv ed: date / Accepted: date Abstract W e present the Procrustes measure, a no vel measure based on Procrustes rotation that enables quantitati ve comparison of the output of manifold-based embedding algorithms (such as LLE (Roweis and Saul, 2000) and Isomap (T enenbaum et al, 2000)). The measure also serv es as a natural tool when choosing dimension-reduction parameters. W e also present two nov el dimension-reduction techniques that attempt to minimize the suggested measure, and compare the results of these techniques to the results of existing algorithms. Finally , we suggest a simple iterativ e method that can be used to improv e the output of existing algorithms. Keyw ords Dimension reducing · Manifold learning · Procrustes analysis, · Local PCA · Simulated annealing 1 Introduction T echnological advances constantly improv e our ability to collect and store large sets of data. The main difficulty in analyzing such high-dimensional data sets is, that the number of observations required to estimate functions at a set le vel of accuracy grows exponentially with the dimension. This problem, often referred to as the curse of dimensionality , has led to various techniques that attempt to reduce the dimension of the original data. Historically , the main approach to dimension reduction is the linear one. This is the ap- proach used by principle component analysis (PCA) and factor analysis (see Mardia et al, 1979, for both). While these algorithms are largely successful, the assumption that a linear projection describes the data well is incorrect for many data sets. A more realistic assump- tion than that of an underlying linear structure is that the data is on, or next to, an embedded manifold of low dimension in the high-dimensional space. Here a manifold is defined as a This research was supported in part by Israeli Science Foundation grant. Y . Goldberg Department of Statistics, The Hebrew Uni versity , 91905 Jerusalem, Israel E-mail: yair .goldberg@mail.huji.ac.il Y . Ritov E-mail: yaacov .ritov@huji.ac.il 2 topological space that is locally equiv alent to a Euclidean space. Locally , the manifold can be estimated by linear approximations based on small neighborhoods of each point. Many algorithms were developed to perform embedding for manifold-based data sets, including the algorithms suggested by Roweis and Saul (2000); T enenbaum et al (2000); Belkin and Niyogi (2003); Zhang and Zha (2004); Donoho and Grimes (2004); W einber ger and Saul (2006); Dollar et al (2007). Indeed, these algorithms have been shown to succeed e ven where the assumption of linear structure does not hold. Howe ver , to date there exists no good tool to estimate the quality of the result of these algorithms. Ideally , the quality of an output embedding could be judged based on a comparison to the structure of the original manifold. Indeed, a measure based on the idea that the manifold structure is known to a good de gree was recently suggested by Dollar et al (2007). Ho wev er, in the general case, the manifold structure is not given, and is difficult to estimate accurately . As such ideal measures of quality cannot be used in the general case, an alternate quantitati ve measure is required. In this work we suggest an easily computed function that measures the quality of any giv en embedding. W e belie ve that a faithful embedding is an embedding that preserves the structure of the local neighborhood of each point. Therefore the quality of an embedding is determined by the success of the algorithm in preserving these local structures. The function we present, based on the Procrustes analysis, compares each neighborhood in the high- dimensional space and its corresponding low-dimensional embedding. Theoretical results regarding the con ver gence of the proposed measure are presented. W e further suggest two ne w algorithms for discovering the low-dimensional embedding of a high-dimensional data set, based on minimization of the suggested measure function. The first algorithm performs the embedding one neighborhood at a time. This algorithm is extremely fast, b ut may suffer from incremental distortion. The second algorithm, based on simulated annealing (Kirkpatrick et al, 1983), performs the embedding of all local neigh- borhoods simultaneously . A simple iterativ e procedure that improves on an existing output is also presented. The paper is or ganized as follows. The problem of manifold learning is presented in Section 2. A discussion regarding the quality of embeddings in general and the suggested measure of quality are presented in Section 3. The embedding algorithms are presented in Section 4. In Section 5 we present numerical examples. All proofs are presented in the Appendix. 2 Manifold-lear ning problem setting and definitions In this section we pro vide a formal definition of the manifold-learning dimension-reduction problem. Let M be a d -dimensional manifold embedded in R q . Assume that a sample is taken from M . The goal of manifold-learning is to find a faithful embedding of the sample in R d . The assumption that the sample is taken from a manifold is translated to the fact that small distances on the manifold M can be approximated well by the Euclidian distance in R q . Therefore, to find an embedding, one first needs to approximate the structure of small neighborhoods on the manifold using the Euclidian metric in R q . Then one must find a unified embedding of the sample in R d that preserves the structure of local neighborhoods on M . In order to adhere to this scheme, we need two more assumptions. First, we assume that M is isometrically embedded in R q . By definition, an isometric mapping between two man- 3 ifolds preserves the inner product on the tangent bundle at each point. Less formally , this means that distances and angles are preserved by the mapping. This assumption is needed because we are interested in an embedding that e verywhere preserves the local structure of distances and angles between neighboring points. If this assumption does not hold, close points on the manifold may originate from distant points in R d and vice versa. In this case, the structure of the local neighborhoods on the manifold will not reveal the structure of the original neighborhoods in R d . W e remark here that the assumption that the embedding is isometric is strong but can be relaxed. One may assume instead that the embedding map- ping is conformal. This means that the inner products on the tangent bundle at each point are preserved up to a scalar c that may change continuously from point to point. Note that the class of isometric embeddings is included in the class of conformal embeddings. While our main discussion regards isometric embeddings, we will also discuss the conformal em- bedding problem, which is the frame work of algorithms such as c-Isomap (de Silv a and T enenbaum, 2003) and Conformal Embeddings (CE) (Sha and Saul, 2005). The second assumption is that the sample taken from the manifold M is dense . W e need to prev ent the situation in which the local neighborhood of a point, which is computed ac- cording to the Euclidian metric in R q , includes distant geodesic points on the manifold. This can happen, for example, if the manifold is twisted. The result of having distant geodesic points in the same local neighborhood is that these distant points will be embedded close to each other instead of preserving the true geodesic distance between them. T o define the problem formally , we require some definitions. The neighborhood of a point x i ∈ M is a set of points X i that consists of points close to x i with respect to the Euclidean metric in R q . For example, neighbors can be K -nearest neighbors or all the points in an ε -ball around x i . The minimum radius of curvatur e r 0 = r 0 ( M ) is defined as follows: 1 r 0 = max γ , t n ¨ γ ( t ) o where γ varies ov er all unit-speed geodesics in M and t is in a domain of γ . The minimum branch separation s 0 = s 0 ( M ) is defined as the largest positive number for which k x − ˜ x k < s 0 implies d M ( x , ˜ x ) ≤ π r 0 , where x , ˜ x ∈ M and d M ( x , ˜ x ) is the geodesic distance between x and ˜ x (see Bernstein et al, 2000., for both definitions). W e define the radius r ( i ) of neighborhood i to be r ( i ) = max j ∈{ 1 ,..., k ( i ) } x i − x i j where x i j is the j -th out of the k ( i ) neighbors of x i . Finally , we define r max to be the maximum ov er r ( i ) . W e say that the sample is dense with respect to the chosen neighborhoods if r max < s 0 . Note that this condition depends on the manifold structure, the given sample, and the choice of neighborhoods. Howev er, for a giv en compact manifold, if the distribution that produces the sample is supported throughout the entire manifold, this condition is valid with probability increasing tow ards 1 as the size of the sample is increased and the radius of the neighborhoods is decreased. W e now state the manifold-learning problem more formally . Let D ⊂ R d be a com- pact set and let φ : D → R q be a smooth and in vertible isometric mapping. Let M be the d -dimensional image of D in R q . Let x 1 , . . . , x n be a sample taken from M . Define neighbor- hoods X i for each of the points x i . Assume that the sample x 1 , . . . , x n is dense with respect to the choice of X i . Find y 1 , . . . , y n ∈ R d that approximate φ − 1 ( x 1 ) , . . . , φ − 1 ( x n ) up to rotation and translation. 4 3 Faithful embedding As discussed in Section 2, a faithful embedding should preserve the structure of local neigh- borhoods on the manifold, while finding a global embedding mapping. In this section, we will attempt to answer the following tw o questions: 1. How do we define “preserv ation of the local structure of a neighborhood”? 2. How do we find a global embedding that preserv es the local structure? W e now address the first question. Under the assumption of isometry , it seems reason- able to demand that neighborhoods on the manifold and their corresponding embeddings be closely related. A neighborhood on the manifold and its embedding can be compared using the Pr ocrustes statistic . The Procrustes statistic measures the distance between tw o configu- rations of points. The statistic computes the sum of squares between pairs of corresponding points after one of the configurations is rotated and translated to best match the other . In the remainder of this paper we will represent any set of k points x 1 , . . . , x k ∈ R q as a matrix X k × q = [ x 0 1 , . . . , x 0 k ] ; i.e., the j -th row of the matrix X corresponds to x j . Let X be a k -point set in R q and let Y be a k -point set in R d , where d ≤ q . W e define the Pr ocrustes statistic G ( X , Y ) as G ( X , Y ) = inf { A , b : A 0 A = I , b ∈ R q } k ∑ i = 1 k x i − A y i − b k 2 (1) = inf { A , b : A 0 A = I , b ∈ R q } tr ( X − Y A 0 − 1 b 0 ) 0 ( X − Y A 0 − 1 b 0 ) where the rotation matrix A is a columns-orthogonal q × d matrix, A 0 is the adjoint of A , and 1 is a k -dimensional vector of ones. The Pr ocrustes rotation matrix A and the Pr ocrustes translation vector b that minimize G ( X , Y ) can be computed explicitly , as follows. Let Z = X 0 HY where H ≡ I − 1 k 11 0 is the centering matrix. Let U L V 0 be the singular-v alue decomposition (svd) of Z , where U is an orthogonal q × d matrix, L is a non-negati ve d × d diagonal matrix, and V is a d × d orthogonal matrix (Mardia et al, 1979). Then, the Procrustes r otation matrix A is giv en by U V 0 (Sibson, 1978). The Pr ocrustes translation vector b is giv en by ¯ x − A ¯ y , where ¯ x and ¯ y are the sample means of X and Y , respectively . Due to the last fact, we may write G ( X , Y ) without the translation vector b as G ( X , Y ) = inf { A : A 0 A = I } tr ( X − Y A 0 ) 0 H ( X − Y A 0 ) = inf { A : A 0 A = I } H ( X − Y A 0 ) 2 F , where k k F is the Frobenius norm. Giv en X , the minimum of G ( X , Y ) can be computed explicitly and is achieved by the first d principal components of X . This result is a consequence of the following lemma. Lemma 1 Let X = X k × q be a center ed matrix of rank q and let d ≤ q. Then inf { ˜ X : rank ( ˜ X )= d } k X − ˜ X k 2 F (2) is obtained when ˜ X equals the pr ojection of X on the subspace spanned by the first d prin- cipal components of X . 5 For proof, see Mardia et al (1979, page 220). Returning to the questions posed at the beginning of this section, we will define how well an embedding preserves the local neighborhoods using the Procrustes statistic G ( X i , Y i ) of each neighborhood-embedding pair ( X i , Y i ) . Therefore, a global embedding that preserves the local structure can be found by minimizing the sum of the Procrustes statistics of all neighborhood-embedding pairs. More formally , let X be the q -dimensional sample from the manifold and let Y be a d -dimensional embedding of X . Let X i be the neighborhood of x i ( i = 1 , . . . , n ) and Y i its embedding. Define R ( X , Y ) = 1 n n ∑ i = 1 G ( X i , Y i ) . (3) The function R measures the average quality of the neighborhood embeddings. Embed- ding Y is considered better than embedding ˜ Y in the local-neighborhood-preserving sense if R ( X , Y ) < R ( X , ˜ Y ) . This means that on the average, Y preserves the structure of the local neighborhoods better than ˜ Y . The function R ( X , Y ) is sensitive to scaling, therefore normalization should be consid- ered. A reasonable normalization is R N ( X , Y ) = 1 n n ∑ i = 1 G ( X i , Y i ) / k H X i k 2 F . (4) The i -th summand of R N ( X , Y ) examines how well the rotated and translated Y i “explains” X i , independent of the size of X i . This normalization solv es the problem of increased weight- ing for lar ger neighborhoods that e xists in the unnormalized R ( X , Y ) . It also allo ws compar- ison of embeddings for data sets of different sizes. Hence, this normalized version is used to compare the results of different outputs (see Section 5). In the remainder of this section, we will justify our choice of the Procrustes measure R for a quantitati ve comparison of embeddings. W e will also present two additional, closely related measures. One measure is R P C A , which can ease computation when the input space is of high dimension. The second measure is R C , which is a statistic designed for conformal mappings (see Section 2). Finally , we will discuss the relation between the measures sug- gested in this w ork to the objecti ve functions of other algorithms, namely L TSA (Zhang and Zha, 2004) and SDE (W einber ger and Saul, 2006). W e now justify the use of the Procrustes statistic G ( X i , Y i ) as a measure of the quality of the local embedding. First, G ( X i , Y i ) estimates the relation between the entire input neigh- borhood and its embedding as one entity , instead of comparing angles and distances within the neighborhood with those within its embedding. Second, the Procrustes statistic is not highly sensitiv e to small perturbations of the embedding. More formally , G ( X , Y ) = O ( ε 2 ) , where Y = X + ε Z and Z is a general matrix (see Sibson, 1979). Finally , the function G is l 2 -norm-based and therefore prefers small differences at many points to big differences at fewer points. This is preferable in our context, as the local embedding of the neighborhood should be compatible with the embeddings of nearby neighborhoods. The usage of R as a measure of the quality of the global embedding of the manifold is justified by Theorem 1. Theorem 1 claims that when the number of input points X increases, the low-dimensional points Z = φ − 1 ( X ) of the input data tend to zero R . This implies that the minimizer Y of R should be close to the original data set Z (up to rotation and translation). 6 Theorem 1 Let D be a compact connected set. Let φ : D → R q be an isometry . Let X ( n ) be an n-point sample taken fr om φ ( D ) , and let Z ( n ) = φ − 1 ( X ( n ) ) . Assume that the sample X ( n ) is dense with r espect to the choice of neighborhoods for all n ≥ N 0 . Then for all n ≥ N 0 R X ( n ) , Z ( n ) = O ( r 4 max ) . (5) See Appendix A.1 for proof. Replacing R ( X , Y ) with the normalized version R N ( X , Y ) (see Eq. 4) and noting that k H X i k 2 F = O ( r 2 i ) , we obtain Corollary 1 R N ( X ( n ) , Z ( n ) ) = O ( r 2 max ) . T o a void heavy computations, a slightly different version of R ( X , Y ) can be considered. Instead of measuring the dif ference between the original neighborhoods on the manifold and their embeddings, one can compare the local PCA projections (Mardia et al, 1979) of the original neighborhoods with their embeddings. W e therefore define R P C A ( X , Y ) = 1 n n ∑ i = 1 G ( X i P i , Y i ) , (6) where P i is the d -dimensional PCA projection matrix of X i . The con ver gence theorem for R P C A is similar to Theorem 1, but the con ver gence is slower . Theorem 2 Let D be a compact connected set. Let φ : D → R q be an isometry . Let X ( n ) be an n-point sample taken fr om φ ( D ) , and let Z ( n ) = φ − 1 ( X ( n ) ) . Assume that the sample X ( n ) is dense with r espect to the choice of neighborhoods for all n ≥ N 0 . Then for all n ≥ N 0 R P C A X ( n ) , Z ( n ) = O ( r 3 max ) , See Appendix A.2 for proof. W e now present another version of the Procrustes measure R C ( X , Y ) , suitable for con- formal mappings. Here we want to compare between each original neighborhood X i and its corresponding embedding Y i , where we allow Y i not only to be rotated and translated but also to be rescaled. Define G C ( X , Y ) = inf { A : A 0 A = I , 0 < c ∈ R } tr X − Y ( cA 0 ) 0 H X − Y ( cA 0 ) . Note that the scalar c was introduced here to allow scaling of Y . Let Z = X 0 HY and let U L V 0 be the svd of Z . The minimizer rotation matrix A is gi ven by U V 0 . The minimizer con- stant c is gi ven by tr ( L ) / tr ( Y 0 Y ) (see Sibson, 1978). The (normalized) conformal Procrustes measure is giv en by R C ( X , Y ) = 1 n n ∑ i = 1 G C ( X i , Y i ) / k H X i k 2 F . (7) Note that R C ( X , Y ) ≤ R N ( X , Y ) since the constraints are relaxed in the definition of R C ( X , Y ) . Howe ver , the lower bound in both cases is equal (see Lemma 1). W e present a con ver gence theorem, similar to that of R and R P C A . 7 Theorem 3 Let D be a compact connected set. Let ˜ φ : D → R q be a conformal mapping. Let X ( n ) be an n-point sample taken fr om ˜ φ ( D ) , and let Z ( n ) = ˜ φ − 1 ( X ( n ) ) . Assume that the sample X ( n ) is dense with r espect to the choice of neighborhoods for all n ≥ N 0 . Then for all n ≥ N 0 we have R C X ( n ) , Z ( n ) = O ( r 2 max ) . See Appendix A.3 for proof. Note that this result is of the same con vergence rate as of R N (see Corollary 1). A cost function somewhat similar to the measure R P C A was presented by Zhang and Zha (2004) in a slightly different context. The local PCA projection of neighborhoods was used as an approximation of the tangent plane at each point. The resulting algorithm, local tangent subspaces alignment (L TSA), is based on minimizing the function n ∑ i = 1 ( I − P i P 0 i ) HY i 2 F , where the k ( i ) × d matrices P i are as in R P C A . The minimization performed by L TSA is under a normalization constraint. This means that as a measure, L TSA ’ s objective function is designed to compare normalized outputs Y (otherwise Y = 0 would be the trivial minimizer) and is therefore unsuitable as a measure. Another algorithm worth mentioning here is SDE (W einberger and Saul, 2006). The constraints on the output required by this algorithm are that all the distances and angles within each neighborhood be preserved. Therefore the output of this algorithm should al- ways be close to the minimum of R ( X , Y ) . The maximization of the objective function of this algorithm ∑ i , j y i − y j 2 is reasonable when the aforementioned constraints are enforced. Howe ver , it is not rele vant as a measure for comparison of general outputs that do not fulfill these constraints. In summary , we return to the questions posed at the beginning of this section. W e choose to define preservation of local neighborhoods as minimization of the Procrustes measure R (see Eq. 3). W e therefore find a global embedding that best preserves local structure by min- imizing R . For computational reasons, minimization of R P C A (see Eq. 6) may be preferred. For conformal maps we suggest the measure R C (see Eq. 7), which allows separate scaling of each neighborhood. 4 Algorithms In Section 3 we sho wed that a faithful embedding should yield low R ( X , Y ) and R P C A ( X , Y ) values. Therefore we may attempt to find a faithful embedding by minimizing these func- tions. Ho wev er, R ( X , Y ) and R P C A ( X , Y ) are not necessarily con vex functions and may ha ve more than one local minimum. In this section we present two algorithms for minimization of R ( X , Y ) or R P C A ( X , Y ) . In addition, we present an iterativ e method that can improve the output of the two algorithms, as well as other existing algorithms. The first algorithm, greedy Procrustes (GP), performs the embeddings one neighborhood at a time. The first neighborhood is embedded using the PCA projection (see Lemma 1). At each stage, the following neighborhood is embedded by finding the best embedding with respect to the embeddings already found. This greedy algorithm is presented in Section 4.1. 8 The second algorithm, Procrustes subspaces alignment (PSA), is based on an align- ment of the local PCA projection subspaces. The use of local PCA produces a good low- dimensional description of the neighborhoods, but the description of each of the neighbor- hoods is in an arbitrary coordinate system. PSA performs the global embedding by finding the local embeddings and then aligning them. PSA is described in Section 4.2. Simulated annealing (SA) is used to find the alignment of the subspaces (see Section 4.3). After an initial solution is found using either GP or PSA, the solution can be improved using an iterativ e procedure until there is no improvement (see Section 4.4). 4.1 Greedy Procrustes (GP) GP finds the neighborhoods’ embeddings one by one. The flo w of the algorithm is described below . 1. Initialization: – Find the neighbors X i of each point x i and let Neighbors ( i ) be the indices of the neighbors X i . – Initialize the list of all embedded points’ indices to N : = / 0. 2. Embedding of the first neighborhood: – Choose an index i (randomly). – Calculate the embedding Y i = X i P i , where P i is the PCA projection matrix of X i . – Update the list of indices of embedded points N = Neighbors ( i ) . 3. Find all other embeddings (iteratively): – Find j , where x j is the unembedded point with the largest number of embedded neighbors, j = argmax p / ∈ N | { Neighbors ( p ) ∩ N } | . – Define X j = x p | p ∈ Neighbors ( j ) ∩ N , the points in X j that are already embed- ded. Define Y j as the (previously calculated) embedding of X j . Define e X j = x p | p ∈ Neighbors ( j ) \ N , the points in X j that are not embedded yet. – Compute the Procrustes rotation matrix A j and translation vector b j between X j and Y j . – Define the embedding of the points in e X j as e Y j = e X j A j + b j . – Update the list of indices of embedded points N N : = N ∪ Neighbors ( j ) . 4. Stopping condition: Stop when | N | = n , i.e., when all points are embedded. The main advantage of GP is that it is fast. The embedding for X i is computed in O ( k ( i ) 3 ) where k ( i ) is the size of X i . Therefore, the overall computation time is O ( nK 3 ) , where K = max i k ( i ) . While GP does not claim to find the global minimum, it does find an embedding that preserv es the local neighborhood’ s structure. The main disadv antage of GP is that it has incremental errors. 9 4.2 Procrustes Subspaces Alignment (PSA) R ( X , Y ) can be written in terms of the Procrustes rotation matrices A i as R ( X , Y ) = 1 n n ∑ i = 1 inf { A i : A 0 i A i = I } H ( X i − Y i A 0 i ) 2 F , where H is the centering matrix. A i can be calculated, giv en X and Y . Howe ver , as Y is not giv en, one way to find Y is by first guessing the matrices A i and then finding the Y that minimizes R ( X , Y | A 1 , . . . , A n ) = 1 n n ∑ i = 1 H ( X i − Y i A 0 i ) 2 F . (8) Y can be found by taking deri vati ves of R ( X , Y | A 1 , . . . , A n ) . W e therefore need to choose A i wisely . The choice of the Jacobian matrices J i ≡ J φ ( z i ) as a guess for A i is justified by the following, as shown in the proof of Theorem 1 (see Eq. 14). As the size of the sample is increased, 1 n ∑ n i = i k H ( X i − Z i J 0 i ) k → 0. This means that choosing J i will lead to a solution Y that is close to the minimizer of R ( X , Y ) . The PCA projection matrices P i approximate the unknown Jacobian matrices J i up to a rotation. T o use them in place of J i , we must first align the projections correctly . Therefore, our guess for A i is of the form A i = P i O i , where O i are d × d rotation matrices that minimize f ( A 1 , . . . , A n ) = n ∑ i = 1 ∑ j ∈ Neighbors ( i ) A i − A j 2 F . (9) The rotation matrices O i can be found using simulated annealing, as described in Section 4.3. Once the matrices A i are found, we need to minimize R ( X , Y | A 1 , . . . , A n ) . W e first write R ( X , Y | A 1 , . . . , A n ) in matrix notation as R ( X , Y | A 1 , . . . , A n ) = 1 n n ∑ i = 1 tr ( X − Y A 0 i ) 0 H i ( X − Y A 0 i ) . Here H i is the centering matrix of neighborhood X i H i ( k , l ) = 1 − 1 k ( i ) k = l and k ∈ Neighbors ( i ) − 1 k ( i ) k 6 = l and k , l ∈ Neighbors ( i ) 0 elsewhere . The ro ws of the matrix H i X at Neighbors ( i ) indices are x i ’ s centered neighborhood, where all the other rows equal zero, and similarly for H i Y . . T aking the deriv ativ e of R ( X , Y | A 1 , . . . , A n ) (Eq. 8) with respect to Y (see Mardia et al, 1979) and using the fact that A 0 i A i = I , we obtain ∂ ∂ Y R ( X , Y | A 1 , . . . , A n ) = 2 n n ∑ i = 1 H i X A i − 2 n n ∑ i = 1 H i Y . (10) Using the general in verse of ∑ n i = 1 H i we can write Y as Y = n ∑ i = 1 H i ⊥ n ∑ i = 1 H i X A i . (11) Summarizing, we present the PSA algorithm. 10 1. Initialization: – Find the neighbors X i of each point x i . – Find the PCA projection matrices P i of the neighborhood X i . 2. Alignment of the projection matrices: Find A i that minimize Eq. 9 using, for example, simulated annealing (see Section 4.3). 3. Find the embedding: Compute Y according to Eq. 11. The advantage of this algorithm is that it is global. The computation time of this al- gorithm (assuming that the matrices A i are already known) depends on multiplying by the in verse of the sparse symmetric semi-positive definite matrix ∑ n i = 1 H i , which can be very costly . Howe ver , this matrix need not be computed explicitly . Instead, one may solve d - linear-equation systems of the form ( ∑ n i = 1 H i ) x = b , which can be computed much faster (see, for example, Munksgaard, 1980). 4.3 Simulated Annealing (SA) alignment procedure In step 2. of PSA (see Section 4.2), it is necessary to align the PCA projection matri- ces P i . In the follo wing we suggest an alignment method based on simulated annealing (SA) (Kirkpatrick et al, 1983). The aim of the suggested algorithm is to find a set of columns- orthonormal matrices A 1 , . . . , A n that minimize Eq. 9. A number of closely-related algo- rithms, designed to find embeddings using alignment of some local dimensionally-reduced descriptions, were previously suggested. Roweis et al (2001) and V erbeek et al (2002) intro- duced algorithms based on probabilistic mixtures of local F A and PCA structures, respec- tiv ely . As Eq. 9 and these two algorithms suffer from local minima, the use of simulated annealing may be beneficial. Another algorithm, suggested by T eh and Roweis (2003), uses a con ve x objectiv e function to find the alignment. The output matrices of this algorithm are not necessarily columns-orthonormal, as is required in our case . Minimizing Eq. 9 is similar to the Ising model problem (see, for example, Cipra, 1987). The Ising model consists of a neighbor-graph and a configuration space that is the set of all possible assignments of + 1 or − 1 to each vertex of the graph. A low-ener gy state is one in which neighboring points have the same sign. Our problem consists of a neighbor-graph with a configuration space that includes all of the rotations of the projection matrices P i at each point x i . Minimizing the function f is similar to finding a low-ener gy state of the Ising model. As solutions to the Ising model usually inv olve algorithms such as simulated annealing , we take the same path here. W e present the SA algorithm, following the algorithm suggested by Siarry et al (1997), modified for our problem. 1. Initialization: – Choose an initial configuration (for example, using GP). – Compute the initial temperature (see Siarry et al, 1997). – Define the cooling scheme (see Siarry et al, 1997). 2. Single SA step: – Choose i randomly . Generate a random d × d rotation matrix O i (see Stew art, 1980). Define A New i ≡ A i O i . – Compute f ( A 1 , . . . , A New i , . . . , A n ) . Note that it is enough to compute ∑ Neighbors ( i ) A New i − A j 2 F . 11 – Accept A New i if either f ( A 1 , . . . , A New i , . . . , A n ) < f ( A 1 , . . . , A i , . . . , A n ) or with some probability depending on the current temperature. – Decrease the temperature and stop if the lowest temperature is reached. 3. Outer iterations: – First iteration: Perform a run of SA on all matrices A 1 , . . . , A n . Find the largest cluster of aligned matrices (for example, use BFS (Corman et al, 1990) and define an alignment criterion). – Other iterations: Apply SA to the matrices that are not in the largest cluster . Update the largest cluster after each run. – Repeat until the size of the largest cluster includes almost all of the matrices A i . Using SA is complicated. The cooling scheme requires the estimation of many parame- ters, and the run-time depends hea vily on the correct choice of these parameters. For output of large dimension, the alignment is difficult, and the output of SA can be poor . Although SA is a time-consuming algorithm, each iteration is v ery simple, in volving only O ( K qd 3 ) oper- ations, where K is the maximum number of neighbors, and q and d are the input and output dimensions, respecti vely . In addition, the memory requirements are modest. Therefore, SA can run ev en when the number of points is large. 4.4 Iterativ e procedure Giv en a solution of GP , PSA, or any other technique, it is usually possible to modify Y so that R ( X , Y ) is further decreased. The idea of the iterativ e procedure we present here was suggested independently by Zhang and Zha (2004), but no details were supplied. In Section 4.2, we sho wed that giv en Y , the improved matrices A i are obtained by finding the Procrustes matrices between Y i and X i . Giv en the matrices A i , the embedding Y can be found using Eq. 11. An iterativ e procedure would require finding first the new matrices A i and then a new embedding Y at each stage. This would be repeated until the change in the value of R ( X , Y ) was small. The problem with this scheme is that it in volv es the computation of the in verse of the matrix ∑ n i = 1 H i (see end of Section 4.2). W e therefore suggest a modified v ersion of this iterativ e procedure, which is easier to compute. Recall that R ( X , Y ) = n ∑ i = 1 ∑ j ∈ Neighbors ( i ) x j − A i y j − b i 2 . The least-squares solution for b i is 1 |{ Neighbors ( i ) }| ∑ j ∈ Neighbors ( i ) ( x j − A i y j ) . (12) The least-squares solution for y j is 1 |{ i : j ∈ Neighbors ( i ) }| ∑ { i : j ∈ Neighbors ( i ) } A 0 i ( x j − b i ) . (13) Note that we get a different solution than that in Eq. 10. The reason is that here we consider b i as constants when we look for a solution for y j . In fact, y j appear in the definition of 12 the b i . Howe ver , as y j appear there multiplied by 1 / k ( i ) , these terms make only a small contribution. W e suggest performing the iterations as follows. First, find the Procrustes rotation ma- trices A i and the translation vectors b i using Eq. 12. Then find y j using Eq. 13. Repeat until R ( X , Y ) no longer decreases significantly . 5 Numerical Examples In this section we ev aluate the algorithms GP and PSA on data sets that we assumed to be sampled from underlying manifolds. W e compare the results to those obtained by LLE (Ro weis and Saul, 2000), Isomap (T enenbaum et al, 2000), and L TSA (Zhang and Zha, 2004), both visually and using the measures R N ( X , Y ) and R C ( X , Y ) (see T able 2). The algorithms GP and PSA were implemented in the Matlab en vironment, running on a Pentium 4 with a 3 Ghz CPU and 0.98 GB of RAM. The alignment stage of PSA was implemented using SA (see Section 4.3). The runs of both GP and PSA were followed by the iterative procedure described in Section 4.4 to improve the minimization of R ( X , Y ) . LLE, Isomap, and L TSA were ev aluated using the Matlab code taken from the sites of the respectiv e authors. The algorithm SDE (W einber ger and Saul, 2006), whose minimization is closest in spirit to ours, was also tested; howev er, it suffers from heavy computational demands, and the results of this algorithm could not be obtained using the code provided in the site. The data sets are described in T able 1. W e ran all five algorithms using k = 6 , 9 , 12 , 15 and 18 nearest neighbors. The minimum v alues for R N ( X , Y ) and R C ( X , Y ) are presented in T able 2. The results in all cases were qualitativ ely the same, therefore in the images we show the results for k = 12 only . Name n q d Description Figure Swissroll 1600 3 2 isometrically embedded in R 3 Fig 1 Hemisphere 2500 3 2 not isometrically embedded Fig 2 in R 3 Cylinder 800 3 2 locally isometric to R 2 , Fig 3 has no global embedding in R 2 Faces 1965 560 3 20 × 28 pix el grayscale face images Fig 4 (see Roweis, retrie ved Nov . 2006) T wos 638 256 10 images of handwritten “2”s None,due from the USPS data set to output of handwritten digits (Hull, 1994) dimension T able 1 Description of fiv e data sets used to compare the dif ferent algorithms. n is the sample size and q and d are the input and output dimensions, respectiv ely . Overall, GP and PSA perform satisfactorily as sho wn both in the figures and in T able 2. The fact that in most of the examples GP and PSA get lower values than LLE, Isomap, and L TSA is perhaps not surprising, as GP and PSA are designed to minimize R ( X , Y ) . The run-times of the algorithms excluding PSA is on the order of seconds while it takes PSA a few hours to run. Memory requirements of GP and PSA are lower than those of the other algorithms. As a consequence of the memory requirements, results could not be obtained for LLE, Isomap and L TSA for n > 3000. 13 Fig. 1 A 1600-point sample taken from the three-dimensional Swissroll input is presented in (A). (B)-(F) show the output of GP , PSA, LLE, Isomap, and L TSA, respecti vely , for k = 12. Both GP and PSA, like Isomap, succeed in finding the proportions of the original data. Use of the measure R ( X , Y ) allows a quantitati ve comparison of visually similar outputs. Regarding the output of the cylinder (see Fig. 3), for example, PSA and Isomap both giv e topologically sound results; howe ver , R ( X , Y ) shows that locally , PSA does a better job . In addition, R ( X , Y ) can be used to optimize embedding parameters such as neighborhood size (see Fig.5). Swissroll Hemisphere Cylinder Faces T wos GP 0.00 [0.00] 0.02 [0.01] 0.13 [0.01] 0.45 [0.36] 0.00 [0.00] PSA 0.00 [0.00] 0.03 [0.01] 0.02 [0.01] 0.35 [0.30 0.00 [0.00] LLE 0.81 [0.23] 0.60 [0.00] 0.73 [0.13] 0.99 [0.79] 0.82 [0.23] Isomap 0.01 [0.01] 0.03 [0.02] 0.34 [0.25] 0.5 [0.38] 0.02 [0.01] L TSA 0.99 [0.22] 0.93 [0.04] 0.59 [0.48] 0.99 [0.53] 0.98 [0.37] Lower Bound 0.00 0.00 0.00 0.11 0.00 T able 2 Comparison of the output of the different algorithms using R N ( X , Y ) [ R C ( X , Y )] as the measures of the quality of the embeddings. These values are the minima of both measures as a function of neighborhood size k , for k = 6 , 9 , 12 , 15 , 18. The lower bound was computed using local PCA at each neighborhood (see Lemma 1). 14 Fig. 2 The input of a 2500-point sample taken from a hemisphere is presented in (A). (B)-(F) sho w the output of GP , PSA, LLE, Isomap, and L TSA, respecti vely , for k = 12. Both GP and PSA, like the other algorithms, perform the embedding, although the assumption of isometry does not hold for the hemisphere. 6 Discussion In this section, we emphasize the main results of this work and indicate possible directions for future research. W e demonstrated that overall, the measure R ( X , Y ) provides a good estimation of the quality of the embedding. It allows a quantitative comparison of the outputs of various embedding algorithms. Moreover , it is quickly and easily computed. Howe ver , two points should be noted. First, R ( X , Y ) measures only the local quality of the embedding. As emphasized in Fig. 3, ev en outputs that do not preserve the global structure of the input may yield rela- tiv ely low R -v alues if the local neighborhood structure is generally preserved. This problem is shared by all manifold-embedding techniques that try to minimize only local attrib utes of the data. The problem can be circumvented by adding a penalty for outputs that embed dis- tant geodesic points close to each other . Distant geodesic points can be defined, for example, as points at least s -distant on the neighborhood graph, with s > 1. Second, R ( X , Y ) is not an ideal measure of the quality of embedding for algorithms that normalize their output, such as LLE (Roweis and Saul, 2000), Laplacian Eigenmap (Belkin and Niyogi, 2003), and L TSA (Zhang and Zha, 2004). This is because normalization of the output distorts the structure of the local neighborhoods and therefore yields high R - values e ven if the output seems to find the underlying structure of the input. This point (see also discussion in Sha and Saul, 2005, Section 2) raises the questions, which qualities are preserved by these algorithms and ho w can one quantify these qualities. Ho wever , it is clear that these algorithms do not perform faithful embedding in the sense defined in Section 3. The measure R C ( X , Y ) addresses this problem to some degree, by allo wing separate scaling 15 Fig. 3 The input of an 800-point sample taken from a cylinder is presented in (A). (B)-(F) show the output of GP , PSA, LLE, Isomap, and L TSA, respectively , for k = 12. Note that the cylinder has no embedding in R 2 and it is not clear what is the best embedding in this case. While PSA, Isomap, and LLE succeeded in finding the topological ring structure of the cylinder , only PSA and LLE succeed in preserving the width of the cylinder . GP and L TSA collapse the cylinder and therefore fail to find the global structure, though they preform well for most of the neighborhoods (see T able 2). of each neighborhood (see T able 2). One could consider an even more relaxed measure which allows not only rotation, translations and scaling but a general linear transformation of each neighborhood. Ho wev er , it is not clear what e xactly such measure would quantify . T wo new embedding algorithms were introduced. W e discuss some aspects of these algorithms below . PSA, in the form we suggested in this work, uses SA to align the tangent subspaces at all points. While PSA works reasonably well for small input sets and low output dimension spaces, it is not suitable for large data sets. Howe ver , the algorithm should not be rejected as a whole. Rather, a different or modified technique for subspaces alignment, for example the use of landmarks (de Silva and T enenbaum, 2003), is required in order to make this algorithm truly useful. GP is very fast ( O ( n ) where n is the number of sample points), can work on very large input sets (even 100 , 000 in less than an hour), and obtains good results as shown both in Figs. 1-4 and in T able 2. This algorithm is therefore an efficient alternativ e to the existing algorithms. It may also be used to choose optimal parameters, such as neighborhood size and output dimension, before other algorithms are applied. R ( X , Y ) can be used for the comparison of GP outputs for varied parameters. An important issue that was not considered in depth in this paper is that of noisy in- put data. The main problem with noisy data is that, locally , the data seems q -dimensional, ev en if the manifold is d -dimensional, d < q . T o o vercome this problem, one should choose neighborhoods that are large relative to the magnitude of the noise, but not too large with 16 Fig. 4 The projection of the three-dimensional output, as computed by PSA, on the first two coordinates (small points). The input used was a 1965-point sample of grayscale images of faces (see T able 1). The boxes connected by lines are nearby points in the output set. The images are the corresponding face images from the input, in the same order. W e see that nearby images in the input space correspond to nearby points in the output space. Fig. 5 The input of an 800-point sample taken from a cylinder is presented in (A). (B)-(F) show the output of LLE for k = 6 , 9 , 12 , 15 and 18, respectively . The respective values of R C ( X , Y ) are 0 . 25 , 0 . 15 , 0 . 13 , 0 . 19 , 0 . 17. While qualitati vely the results are similar, R C ( X , Y ) indicates that k = 12 is opti- mal. 17 respect to the curv ature of the manifold. If the neighborhood size is chosen wisely , both PSA and GP should overcome the noise and perform the embedding well (see Fig. 6). This is due to the fact that both algorithms are based on Procrustes analysis and PCA, which are relativ ely robust ag ainst noise. Further study is required to define a method for choosing the optimal neighborhood size. Fig. 6 The profile of noisy input of a 2500-point sample taken from a swissroll is presented in (A). (B)-(F) show the output of GP , PSA, LLE, Isomap and L TSA respectively . Note that only GP and PSA succeed to find the structure of the swissroll. A Proofs A.1 Proof of Theorem 1 In this section, we denote the points of neighborhood X i as x i 1 , . . . , x i k ( i ) , where k ( i ) is the number of neighbors in X i . Pr oof In order to prove that R ( X , Z ) = 1 n ∑ n i = 1 G ( X i , Z i ) is O ( r 4 max ) , it is enough to show that for each i ∈ 1 , . . . , n , G ( X i , Z i ) = O ( r 4 i ) , where r i is the radius of the i -th neighborhood. The proof consists of replacing the Procrustes rotation matrix A i by J i ≡ J φ ( z i ) , the Jacobian of the mapping φ at z i . Note that the fact that φ is an isometry ensures that J 0 i J i = I . The Procrustes translation vector b i is replaced by x i − J i z i . 18 Recall that by definition x j − x i = φ ( z j ) − φ ( z i ) ; therefore x j − x i = J i ( z i − z j ) + O z j − z i 2 . Hence, G ( X i , Z i ) = inf A i , b i k ( i ) ∑ j = 1 x i j − A i z i j − b i 2 (14) ≤ k ( i ) ∑ j = 1 x i j − J i z i j − ( x i − J i z i ) 2 = k ( i ) ∑ j = 1 ( x i j − x i ) − J i ( z i j − z i ) 2 = k ( i ) ∑ j = 1 O z i j − z i 4 . φ is an isometry , therefore d M ( x i j , x i ) = z i j − z i , where d M is the geodesic metric. The sample is assumed to be dense, hence x i j − x i < s 0 , where s 0 is the minimum branc h separation (see Section 2). Using Bernstein et al (2000., Lemma 3) we conclude that z i j − z i = d M ( x i j , x i ) < π 2 x i j − x i . W e can therefore write O z i j − z i 4 = O ( r 4 i ) , which completes the proof. A.2 Proof of Theorem 2 The proof of Theorem 2 is based on the idea that the PCA projection matrix P i is usually a good approximation of the span of the Jacobian J i . The structure of the proof is as follo ws. First we quantify the relations between X i P i and X i J i , the projections of the i -th neighborhood using the PCA projection matrix and the Jacobian, respectiv ely . Then we follow the lines of the proof of Theorem 1, using the bounds obtained pre viously . T o compare the PCA projection subspace and tangent subspace at x i we use the notation of angle between subspaces. Note that both subspaces are d -dimensional and are embedded in the Euclidian space R q . The columns of the matrices P i and J i consist of orthonormal bases of the PCA projection space and of the tangent space, respectiv ely . Denote these subspaces by P i and J i , respectiv ely . Surprisingly , the angle between P i and J i can be arbitrarily large. Howe ver , in the following we show that even if the angle between the subspaces is large, the projected neighborhoods are close. W e start with some definitions. The principal angles σ 1 , . . . , σ d between J i and P i may be defined recursiv ely for p = 1 , . . . , d as (see Golub and Loan, 1983) cos ( σ p ) = max v ∈ P i max w ∈ J i v 0 w , subject to k v k = k w k = 1 , v 0 v k = 0 , w 0 w k = 0 ; k = 1 , . . . , p − 1 . The vectors v 1 , . . . , v d and w 1 , . . . , w d are called principal vectors . The fact that P i and J i hav e orthogonal columns leads to a simple way to calculate the principal vectors and angles explicitly . Let U L V 0 be the svd of J 0 i P i . Then (see Golub and Loan, 1983) 1. v 1 , . . . , v d are giv en by the columns of P i V . 2. w 1 , . . . , w d are giv en by the columns of J i U . The relations between the two sets of vectors plays an important role in our computations. Write w p = α p v p + β p v ⊥ p , where α p = w 0 p v p , β p = w p − α p v p and v ⊥ p = w p − α p v p k w p − α p v p k . Note that α p is the cosine of the p -th principal angle between P i and J i . The angle between the subspaces is defined as arccos ( α d ) and the distance between the two subspaces is defined to be sin ( α d ) . W e no w prove some basic claims related to the principal v ectors. Lemma 2 Let P i be the pr ojection matrix of the neighborhood X i and let J i be the Jacobian of φ at z i . Let U L V 0 be the svd of J 0 i P i and v 1 , . . . , v d and w 1 , . . . , w d be the columns of P i V and J i U , r espectively . Then 19 1. v 1 , . . . , v d ar e an orthonormal vector system. 2. w 1 , . . . , w d ar e an orthonormal vector system. 3. v p ⊥ w q for p 6 = q. 4. v ⊥ p ⊥ v ⊥ q for p 6 = q. 5. v ⊥ p ⊥ v q for q = 1 , . . . , d . Pr oof 1. T rue, since P i V is an orthonormal matrix. 2. T rue, since J i U is an orthonormal matrix. 3. Note that ( J i U ) 0 ( P i V ) = U 0 ( J 0 i P i ) V = L where L is a diagonal non-negativ e matrix. 4. ( β p v ⊥ p ) 0 ( β q v ⊥ q ) = ( w p − α p v p ) 0 ( w q − α p v q ) = w 0 p w q − v p 0 w q − v 0 q w p + v p 0 v q = 0 . 5. ( β p v ⊥ p ) 0 v q = ( w p − α p v p ) 0 v q = w 0 p v q − α p v p 0 v q = δ pq α p − δ pq α p = 0 . Using the relation between the principal vectors, we can compare the description of the neighborhood X i in the local PCA coordinations and its description in the tangent space coordinations. Here we need to exploit two main f acts. The first fact is that the local PCA projection of a neighborhood is the best approximation, in the l 2 sense, to the original neighborhood. Specifically , it is a better approximation than the tangent space in the l 2 sense. The second is that in a small neighborhood of x i , the tangent space itself is a good approximation to the original neighborhood. Formally , the first assertion means that k ( i ) ∑ j = 1 ( x i j − ¯ x i ) 2 ≥ k ( i ) ∑ j = 1 P 0 i ( x i j − ¯ x i ) 2 ≥ k ( i ) ∑ j = 1 J 0 i ( x i j − ¯ x i ) 2 (15) while the second assertion means that k ( i ) ∑ j = 1 ( x i j − ¯ x i ) 2 − k ( i ) ∑ j = 1 J 0 i ( x i j − ¯ x i ) 2 = O ( r 4 i ) . (16) The proof of Eq. 16 is straightforward. First note that ( x i j − ¯ x i ) = ( x i j − x i ) − ( ¯ x i − x i ) = J i ( z i j − z i ) − J i ( ¯ z i − z i ) + O ( r 2 i ) = J i ( z i j − ¯ z i ) + O ( r 2 i ) . Hence ( x i j − ¯ x i ) 2 − J 0 i ( x i j − ¯ x i ) 2 = q ∑ p = d + 1 ( w 0 p ( x i j − ¯ x i )) 2 = ( x i j − ¯ x i ) − J i J 0 i ( x i j − ¯ x i ) 2 = J i ( z i j − ¯ z i ) − J i J 0 i ( J i ( z i j − ¯ z i )) + O ( r 2 i ) 2 = O ( r 2 i ) 2 = O ( r 4 i ) , where w d + 1 , . . . , w q are a completion of w 1 , . . . , w d to an orthonormal basis of R q and we used the fact that J 0 i J i = I . The following is a lemma regarding the relations between the PCA projection matrix and the Jacobian projection. It is a consequence of Eq. 15. 20 Lemma 3 1. ∑ k ( i ) j = 1 ( x i j − ¯ x i ) 2 − ∑ k ( i ) j = 1 V 0 P 0 i ( x i j − ¯ x i ) 2 = O ( r 4 i ) . 2. ∑ k ( i ) j = 1 V 0 P 0 i ( x i j − ¯ x i ) 2 − ∑ k ( i ) j = 1 U 0 J 0 i ( x i j − ¯ x i ) 2 = O ( r 4 i ) . 3. ( x i j − ¯ x i ) 0 v p = O ( r i ) . 4. ( x i j − ¯ x i ) 0 v ⊥ p = O ( r 2 i ) . Pr oof 1. and 2. follow from Eqs. 15 and 16. 3. follows from the definition of r i . 4. is a consequence of 1., indeed, k ( i ) ∑ j = 1 d ∑ p = 1 v ⊥ p 0 ( x i j − ¯ x i ) 2 ≤ k ( i ) ∑ j = 1 ( x i j − ¯ x i ) 2 − k ( i ) ∑ j = 1 V 0 P 0 i ( x i j − ¯ x i ) 2 = O ( r 4 i ) . W e now prove Theorem 2. Similarly to the proof of Theorem 1, it is enough to show that G ( X i P i , Z i ) = O ( r 3 i ) . G ( X i P i , Z i ) = inf A i , b i k ( i ) ∑ j = 1 P 0 i x i j − A i z i j − b i 2 ≤ k ( i ) ∑ j = 1 P 0 i ( x i j − ¯ x i ) − O i ( z i j − ¯ z i ) 2 = k ( i ) ∑ j = 1 P 0 i ( x i j − ¯ x i ) − O i J 0 i ( x i j − ¯ x i ) + O i J 0 i ( x i j − ¯ x i ) − O i ( z i j − ¯ z i ) 2 ≤ k ( i ) ∑ j = 1 P 0 i ( x i j − ¯ x i ) − O i J 0 i ( x i j − ¯ x i ) 2 + k ( i ) ∑ j = 1 O i J 0 i ( x i j − ¯ x i ) − O i ( z i j − ¯ z i ) 2 ≡ Exp1 + Exp2 , where O i is some d × d orthogonal matrix. Note that due to its orthogonality , Exp2 is independent of the specific choice of O i . W e choose O i = V U 0 . Rewriting Exp1 we obtain Exp1 = k ( i ) ∑ j = 1 P 0 i ( x i j − ¯ x i ) − O i J 0 i ( x i j − ¯ x i ) 2 = k ( i ) ∑ j = 1 P 0 i ( x i j − ¯ x i ) − V U 0 J 0 i ( x i j − ¯ x i ) 2 = k ( i ) ∑ j = 1 V 0 P 0 i ( x i j − ¯ x i ) − ( V 0 V ) U 0 J 0 i ( x i j − ¯ x i ) 2 = k ( i ) ∑ j = 1 d ∑ p = 1 v p 0 ( x i j − ¯ x i ) − w 0 p ( x i j − ¯ x i ) 2 . 21 This last expression brings out the difference between the description of the neighborhood X i in the local PCA coordinations and its description in the tangent space coordinates. Using Lemma 2, we can write Exp1 = k ( i ) ∑ j = 1 d ∑ p = 1 ( v p − w p ) 0 ( x i j − ¯ x i ) 2 (17) = k ( i ) ∑ j = 1 d ∑ p = 1 v p − α p v p − β p v ⊥ p ) 0 ( x i j − ¯ x i ) 2 = k ( i ) ∑ j = 1 d ∑ p = 1 ( 1 − α p ) 2 v p 0 ( x i j − ¯ x i ) 2 − k ( i ) ∑ j = 1 d ∑ p = 1 2 ( 1 − α p ) β p v p 0 ( x i j − ¯ x i ) v ⊥ p 0 ( x i j − ¯ x i ) + k ( i ) ∑ j = 1 d ∑ p = 1 β 2 p v ⊥ p 0 ( x i j − ¯ x i ) 2 . W e will use Lemma 3 to bound the first expression of the RHS. O ( r 4 i ) = k ( i ) ∑ j = 1 V 0 P 0 i ( x i j − ¯ x i ) 2 − k ( i ) ∑ j = 1 U 0 J 0 i ( x i j − ¯ x i ) 2 = k ( i ) ∑ j = 1 d ∑ p = 1 n v p 0 ( x i j − ¯ x i ) 2 − ( α p v p + β p v ⊥ p ) 0 ( x i j − ¯ x i ) 2 o = k ( i ) ∑ j = 1 d ∑ p = 1 ( 1 − α 2 p ) v p 0 ( x i j − ¯ x i ) 2 + 2 k ( i ) ∑ j = 1 d ∑ p = 1 α p β p v p 0 ( x i j − ¯ x i ) v ⊥ p 0 ( x i j − ¯ x i ) − k ( i ) ∑ j = 1 d ∑ p = 1 β 2 p v ⊥ p 0 ( x i j − ¯ x i ) 2 . Note also that ( 1 − α p ) 2 ≤ 1 − α 2 p . Hence, k ( i ) ∑ j = 1 d ∑ p = 1 ( 1 − α p ) 2 v p 0 ( x i j − ¯ x i ) 2 ≤ k ( i ) ∑ j = 1 d ∑ p = 1 β 2 p v ⊥ p 0 ( x i j − ¯ x i ) 2 − 2 k ( i ) ∑ j = 1 d ∑ p = 1 α p β p v p 0 ( x i j − ¯ x i ) v ⊥ p 0 ( x i j − ¯ x i ) + O ( r 4 i ) . Plugging it into Eq. 17 we get Exp1 ≤ k ( i ) ∑ j = 1 d ∑ p = 1 2 β 2 p v ⊥ p 0 ( x i j − ¯ x i ) 2 − k ( i ) ∑ j = 1 d ∑ p = 1 2 β p v p 0 ( x i j − ¯ x i ) v ⊥ p 0 ( x i j − ¯ x i ) + O ( r 4 i ) ≤ O ( r 4 i ) + O ( r 3 i ) + O ( r 4 i ) = O ( r 3 i ) , where the last inequality is due to Lemma 3. 22 Proving that Exp2 is O ( r 4 i ) is straightforward. Exp2 = k ( i ) ∑ j = 1 O i J 0 i ( x i j − ¯ x i ) − O i ( z i j − ¯ z i ) 2 = k ( i ) ∑ j = 1 J 0 i ( x i j − ¯ x i ) − ( z i j − ¯ z i ) 2 = O ( r 4 i ) , which concludes the proof of Theorem 2. A.3 Proof of Theorem 3 The proof is similar to the proof of Theorem 1 (see Section A.1). The proof consists of replacing the Pro- crustes rotation matrix A i and the constant c i by J i ≡ J ˜ φ ( z i ) , the Jacobian of the mapping ˜ φ at z i . Note that as ˜ φ is an conformal mapping which ensures that J 0 i J i = cI . The Procrustes translation vector b i is replaced by x i − J i z i . Recall that by definition x j − x i = ˜ φ ( z j ) − ˜ φ ( z i ) ; therefore x j − x i = J i ( z i − z j ) + O z j − z i 2 . Hence, G ( X i , Z i ) = inf A i , b i , c i k ( i ) ∑ j = 1 x i j − c i A i z i j − b i 2 (18) ≤ k ( i ) ∑ j = 1 x i j − J i z i j − ( x i − J i z i ) 2 = k ( i ) ∑ j = 1 O z i j − z i 4 . As ˜ φ is an conformal mapping, we have c min z i j − z i ≤ d M ( x i j , x i ) , where d M is the geodesic metric and c min > 0 is the minimum of the scale function c ( z ) measures the scaling change of φ at z . The minimum c min is attained as D is compact. The last inequality holds true since the geodesic distance d M ( x i j , x i ) equals to the integral ov er c ( z ) for some path between z i j and z i . The sample is assumed to be dense, hence x i j − x i < s 0 , where s 0 is the minimum branch separation (see Section 2). Using again Bernstein et al (2000., Lemma 3) we conclude that z i j − z i ≤ 1 c min d M ( x i j , x i ) < π 2 c min x i j − x i . W e can therefore write O z i j − z i 4 = O ( r 4 i ) . Dividing by the normalization k H X i k 2 F for each neighbor- hood we obtain R C ( X , Y ) = O ( r 2 max ) which completes the proof. Acknowledgements W e would like to thank S. Kirkpatrick and J. Goldberger for meaningful discussions. W e are grateful to the anonymous revie wers of an earlier version of this manuscript for their helpful sugges- tions. References Belkin M, Niyogi P (2003) Laplacian eigenmaps for dimensionality reduction and data representation. Neural Comp 15(6):1373–1396 Bernstein M, de Silva V , Langford JC, T enenbaum JB (2000.) Graph approximations to geodesics on embed- ded manifolds, technical report, Stanford Univ ersity , Stanford, A vailable at http://isomap.stanford.edu Cipra B (1987) An introduction to the I sing model. Am Math Monthly 94(10):937–959 23 Corman T , Leiserson C, Riv est R (1990) Introduction to Algorithms. MIT Press Dollar P , Rabaud V , Belongie SJ (2007) Non-isometric manifold learning: analysis and an algorithm. In: Ghahramani Z (ed) Proceedings of the 24th Annual International Conference on Machine Learning (ICML), Omnipress, pp 241–248 Donoho D, Grimes C (2004) Hessian eigenmaps: Locally linear embedding techniques for high-dimensional data. Proc Natl Acad Sci USA 100(10):5591–5596 Golub GH, Loan CFV (1983) Matrix Computations. Johns Hopkins Univ ersity Press, Baltimore, Maryland Hull JJ (1994) A database for handwritten text recognition research. IEEE Trans Pattern Anal Mach Intell 16(5):550–554 Kirkpatrick S, Gelatt CD, V ecchi MP (1983) Optimization by simulated annealing. Science 220, 4598:671– 680 Mardia K, Kent J, Bibby J (1979) Multi variate Analysis. Academic Press Munksgaard N (1980) Solving sparse symmetric sets of linear equations by preconditioned conjugate gradi- ents. A CM Trans Math Softw 6(2):206–219 Roweis S (retrie ved No v . 2006) Frey face on sam roweis’ page, http://www.cs.toronto.edu/ ~ roweis/ data.html Roweis ST , Saul LK (2000) Nonlinear dimensionality reduction by locally linear embedding. Science 290(5500):2323–2326 Roweis ST , Saul LK, Hinton GE (2001) Global coordination of local linear models. In: Advances in Neural Information Processing Systems 14, MIT Press, pp 889–896 Sha F , Saul LK (2005) Analysis and extension of spectral methods for nonlinear dimensionality reduction. In: Machine Learning, Proceedings of the T wenty-Second International Conference (ICML), pp 784–791 Siarry P , Berthiau G, Durdin F , Haussy J (1997) Enhanced simulated annealing for globally minimizing functions of many-continuous v ariables. ACM T rans Math Softw 23(2):209–228 Sibson R (1978) Studies in robustness of multidimensional-scaling: Procrustes statistics. J Roy Statist Soc 40(2):234–238 Sibson R (1979) Studies in the robustness of multidimensional-scaling: Perturbational analysis of classical scaling. J Roy Statist Soc 41(2):217–229 de Silva V , T enenbaum JB (2003) Global versus local methods in nonlinear dimensionality reduction. In: Advances in Neural Information Processing Systems 15, MIT Press Stew art GW (1980) The efficient generation of random orthogonal matrices with an application to condition estimators. SIAM Journal on Numerical Analysis 17(3):403–409 T eh YW , Roweis S (2003) Automatic alignment of local representations. In: Becker S, Thrun S, Obermayer K (eds) Advances in Neural Information Processing Systems 15, MIT Press T enenbaum JB, de Silva V , Langford JC (2000) A global geometric framework for nonlinear dimensionality reduction. Science 290(5500):2319–2323 V erbeek J, Vlassis N, Kr ¨ ose B (2002) Coordinating principal component analyzers. In: Proceedings of Inter- national Conference on Artificial Neural Networks W einberger K, Saul L (2006) Unsupervised learning of image manifolds by semidefinite programming. Int J Comput V ision 70(1):77–90 Zhang Z, Zha H (2004) Principal manifolds and nonlinear dimensionality reduction via tangent space align- ment. SIAM J Sci Comp 26(1):313–338

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment