Significant Diagnostic Counterexamples in Probabilistic Model Checking

Miguel E. Andrés¹ ⋆, Pedro D'Argenio² ⋆⋆, Peter van Rossum¹

¹ Institute for Computing and Information Sciences, The Netherlands. {mandres, petervr}@cs.ru.nl
² FaMAF, Universidad Nacional de Córdoba, CONICET, Argentina. dargenio@famaf.unc.edu.ar

Abstract. This paper presents a novel technique for counterexample generation in probabilistic model checking of Markov Chains and Markov Decision Processes. (Finite) paths in counterexamples are grouped together in witnesses that are likely to provide similar debugging information to the user. We list five properties that witnesses should satisfy in order to be useful as debugging aid: similarity, accuracy, originality, significance, and finiteness. Our witnesses contain paths that behave similarly outside strongly connected components. This paper shows how to compute these witnesses by reducing the problem of generating counterexamples for general properties over Markov Decision Processes, in several steps, to the easy problem of generating counterexamples for reachability properties over acyclic Markov Chains.

1 Introduction

Model checking is an automated technique that, given a finite-state model of a system and a property stated in an appropriate logical formalism, systematically checks the validity of this property. Model checking is a general approach and is applied in areas like hardware verification and software engineering.

Nowadays, the interaction geometry of distributed systems and network protocols calls for probabilistic, or more generally, quantitative estimates of, e.g., performance and cost measures. Randomized algorithms are increasingly utilized to achieve high performance at the cost of obtaining correct answers only with high probability.
For all this, there is a wide range of models and applications in computer science requiring quantitative analysis. Probabilistic model checking allows us to check whether or not a probabilistic property is satisfied in a given model, e.g., "Is every message sent successfully received with probability greater than or equal to 0.99?".

A major strength of model checking is the possibility of generating diagnostic information in case the property is violated. This diagnostic information is provided through a counterexample showing an execution of the model that invalidates the property under verification. Apart from the immediate feedback in model checking, counterexamples are also used in abstraction-refinement techniques [CGJ+00], and provide the foundations for schedule derivation (see, e.g., [BLR05]).

Although counterexample generation was studied from the very beginning in most model checking techniques, this has not been the case for probabilistic model checking. Only recently attention was drawn to this subject [AHL05,AL06,HK07a,HK07b,AL07], fifteen years after the first studies on probabilistic model checking. Contrary to other model checking techniques, counterexamples in this setting are not given by a single execution path. Instead, they are sets of executions of the system satisfying a certain undesired property whose probability mass is higher than a given bound. Since counterexamples are used as a diagnostic tool, previous works on counterexamples have presented them as sets of finite paths of large enough probability. We refer to these sets as representative counterexamples.

⋆ Supported by NWO project 612.000.526
⋆⋆ Supported by the ANPCyT project PICT 26135 and CONICET project PIP 6391
Elements of representative counterexamples with high probability have been considered the most informative since they contribute most to the refutation of the property.

A challenge in counterexample generation for probabilistic model checking is that (1) representative counterexamples are very large (often infinite), (2) many of their elements have very low probability, and (3) elements can be extremely similar to each other (consequently providing similar diagnostic information). Even worse, (4) sometimes the finite paths with highest probability do not indicate the most likely violation of the property under consideration.

For example, look at the Markov chain $M$ in Figure 1. The property $M \models_{\le 0.5} \Diamond\psi$, stating that execution reaches a state satisfying $\psi$ (i.e., reaches $s_3$ or $s_4$) with probability lower than or equal to $0.5$, is violated (since the probability of reaching $\psi$ is 1). The left hand side of the table in Figure 2 lists finite paths reaching $\psi$ ranked according to their probability. Note that the finite paths with highest probability take the left branch in the system, whereas the right branch in itself has higher probability, illustrating Problem 4. To adjust the model so that it does satisfy the property (bug fixing), it is not sufficient to modify the left hand side of the system alone; no matter how one changes the left hand side, the probability of reaching $\psi$ remains at least $0.6$. Furthermore, the first six finite paths provide similar diagnostic information: they just make extra loops in $s_1$. This is an example of Problem 3. Also, the probability of every single finite path is far below the bound $0.5$, making it unclear if a particular path is important; see Problem 2 above. Finally, the (unique) counterexample for the property $M \models_{< 1} \Diamond\psi$ consists of infinitely many finite paths (namely all finite paths of $M$); see Problem 1.
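The numbers in this example can be reproduced with a short calculation. The sketch below is only an illustration: the transition probabilities are read off Figures 1 and 2 (an assumption, since the figure itself is not reproduced here), and the helper names `path_prob` and `branch_mass` are hypothetical.

```python
# Markov chain of Fig. 1, as read off the figure and the table of Fig. 2
# (assumed values): s0 -> s1 with 0.4 and s0 -> s2 with 0.6; s1 loops with
# 0.5 and leaves to s3 with 0.5; s2 loops with 0.99 and leaves to s4 with 0.01.

def path_prob(first, loop, leave, k):
    """Probability of the finite path s0 (s_i)^k s_target, for k >= 1."""
    return first * loop ** (k - 1) * leave

def branch_mass(first, loop, leave):
    """Total probability of a branch: the geometric series over all k >= 1."""
    return first * leave / (1.0 - loop)

# The six most probable paths (all on the left) and the first three right ones.
left = [path_prob(0.4, 0.5, 0.5, k) for k in range(1, 7)]
right = [path_prob(0.6, 0.99, 0.01, k) for k in range(1, 4)]

left_mass = branch_mass(0.4, 0.5, 0.5)
right_mass = branch_mass(0.6, 0.99, 0.01)

print("left paths :", left)
print("right paths:", right)
print("branch masses:", left_mass, right_mass)
```

Every individual path stays far below the bound 0.5, while the two branches as a whole carry all of the probability, which is the observation behind Problems 2 and 4.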
Fig. 1: Markov chain (states $s_0, \ldots, s_4$; $s_3$ and $s_4$ satisfy $\psi$; transition probabilities 0.4, 0.6, 0.5, 0.5, 0.99, 0.01)

Fig. 2: Comparison table

| Rank | Finite path (single paths) | Prob | Witness | Mass |
|------|----------------------------|------|---------|------|
| 1 | $s_0 (s_1)^1 s_3$ | 0.2 | $[s_0 s_2 s_4]$ | 0.6 |
| 2 | $s_0 (s_1)^2 s_3$ | 0.1 | $[s_0 s_1 s_3]$ | 0.4 |
| 3 | $s_0 (s_1)^3 s_3$ | 0.05 | | |
| 4 | $s_0 (s_1)^4 s_3$ | 0.025 | | |
| 5 | $s_0 (s_1)^5 s_3$ | 0.0125 | | |
| 6 | $s_0 (s_1)^6 s_3$ | 0.00625 | | |
| 7 | $s_0 (s_2)^1 s_4$ | 0.006 | | |
| 8 | $s_0 (s_2)^2 s_4$ | 0.0059 | | |
| 9 | $s_0 (s_2)^3 s_4$ | 0.0058 | | |
| ... | ... | ... | | |

To overcome these problems, we partition a representative counterexample into sets of finite paths that follow a similar pattern. We call these sets witnesses. To ensure that witnesses provide valuable diagnostic information, we desire that the set of witnesses that form a counterexample satisfies several properties: two different witnesses should provide different diagnostic information (solving Problem 3) and elements of a single witness should provide similar diagnostic information; as a consequence, witnesses have a high probability mass (solving Problems 2 and 4); and the number of witnesses of a representative counterexample should be finite (solving Problem 1).

In our setting, witnesses consist of paths that behave the same outside strongly connected components. In the example of Figure 1, there are two witnesses: the set of all finite paths going right, represented by $[s_0 s_2 s_4]$, whose probability (mass) is $0.6$, and the set of all finite paths going left, represented by $[s_0 s_1 s_3]$, with probability (mass) $0.4$.

In this paper, we show how to obtain such sets of witnesses for bounded probabilistic LTL properties on Markov decision processes (MDP). In fact, we first show how to reduce this problem to finding witnesses for upper bounded probabilistic reachability properties on discrete time Markov chains (MCs). The major technical matters lie in this last problem, to which most of the paper is devoted.
In a nutshell, the process to find witnesses for the violation of $M \models_{\le p} \Diamond\psi$, with $M$ being a MC, is as follows. We first eliminate from the original MC all the "uninteresting" parts. This proceeds as the first steps of the model checking process: make absorbing all states satisfying $\psi$ and all states that cannot reach $\psi$, obtaining a new MC $M_\psi$. Next, reduce this last MC to an acyclic MC $\mathrm{Ac}(M_\psi)$ in which all strongly connected components have been conveniently abstracted with a single probabilistic transition. The original and the acyclic MCs are related by a mapping that, to each finite path in $\mathrm{Ac}(M_\psi)$ (that we call rail), assigns a set of finite paths behaving similarly in $M$ (that we call torrent). This map preserves the probability of reaching $\psi$ and hence relates counterexamples in $\mathrm{Ac}(M_\psi)$ to counterexamples in $M$. Finally, counterexamples in $\mathrm{Ac}(M_\psi)$ are computed by reducing the problem to a $k$ shortest path problem, as in [HK07a]. Because $\mathrm{Ac}(M_\psi)$ is acyclic, the complexity is lower than that of the corresponding problem in [HK07a].

It is worth mentioning that our technique can also be applied to simple pCTL formulas without nested path quantifiers.

Organization of the paper. Section 2 presents the necessary background on Markov chains (MC), Markov Decision Processes (MDP), and Linear Temporal Logic (LTL). Section 3 presents the definition of counterexamples and discusses the reduction from general LTL formulas to upper bounded probabilistic reachability properties, and the extraction of the maximizing MC in a MDP. Section 4 discusses desired properties of counterexamples. In Sections 5 and 6, we introduce the fundamentals on rails and torrents, the reduction of the original MC to the acyclic one, and our notion of significant diagnostic counterexamples. Section 7 then presents the techniques to actually compute counterexamples.
In Section 8 we discuss related work and give final conclusions.

2 Preliminaries

2.1 Markov Decision Processes and Markov chains

Markov Decision Processes (MDPs) constitute a formalism that combines nondeterministic and probabilistic choices. They are the dominant model in corporate finance, supply chain optimization, system verification and optimization. There are many slightly different variants of this formalism, such as action-labeled MDPs [Bel57,FV97] and probabilistic automata [SL95,SdV04]; we work with the state-labeled MDPs from [BdA95].

Definition 2.1. Let $S$ be a set. A discrete probability distribution on $S$ is a function $p : S \to [0,1]$ with countable or finite carrier and such that $\sum_{s \in S} p(s) = 1$. We denote the set of all discrete probability distributions on $S$ by $\mathrm{Distr}(S)$. Additionally, we define the Dirac distribution on an element $s \in S$ as $1_s$, i.e., $1_s(s) = 1$ and $1_s(t) = 0$ for all $t \in S \setminus \{s\}$.

Definition 2.2. A Markov Decision Process (MDP) is a four-tuple $D = (S, s_0, \tau, L)$, where
• $S$ is the finite state space of the system;
• $s_0 \in S$ is the initial state;
• $\tau : S \to \wp(\mathrm{Distr}(S))$ is a function that associates to each $s \in S$ a non-empty and finite subset of $\mathrm{Distr}(S)$ of probability distributions;
• $L$ is a labeling function that associates to each state $s \in S$ a set $L(s)$ of propositional variables that are valid in $s$.

Definition 2.3. Let $D = (S, s_0, \tau, L)$ be a MDP. We define a successor relation $\delta \subseteq S \times S$ by $\delta \triangleq \{(s,t) \mid \exists \pi \in \tau(s).\ \pi(t) > 0\}$ and for each state $s \in S$ we define the sets

$$\mathrm{Paths}(D, s) \triangleq \{ s_0 s_1 s_2 \ldots \in S^\omega \mid s_0 = s \wedge \forall n \in \mathbb{N}.\ \delta(s_n, s_{n+1}) \}$$

and

$$\mathrm{Paths}^\star(D, s) \triangleq \{ s_0 s_1 \ldots s_n \in S^\star \mid s_0 = s \wedge \forall\, 0 \le i < n.\ \delta(s_i, s_{i+1}) \}$$

of paths and finite paths respectively beginning at $s$.
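Definitions 2.1 to 2.3 translate fairly directly into a data structure. The following is a minimal sketch under stated assumptions: the class `MDP`, the helper names, and the three-state toy model are hypothetical and are not taken from the paper; distributions are represented as dictionaries from states to probabilities.

```python
from dataclasses import dataclass

@dataclass
class MDP:
    """State-labeled MDP (S, s0, tau, L) in the sense of Definition 2.2."""
    states: set
    initial: str
    tau: dict      # state -> non-empty list of distributions (dict: state -> prob)
    labels: dict   # state -> set of propositional variables

def is_distribution(p, tol=1e-9):
    """Definition 2.1: values in [0, 1] that sum to 1."""
    return all(0.0 <= v <= 1.0 for v in p.values()) and abs(sum(p.values()) - 1.0) <= tol

def successor_relation(mdp):
    """delta = {(s, t) | some pi in tau(s) has pi(t) > 0} (Definition 2.3)."""
    return {(s, t)
            for s, dists in mdp.tau.items()
            for pi in dists
            for t, v in pi.items() if v > 0}

def is_finite_path(mdp, sigma, start):
    """Check that sigma is a finite path beginning at `start`."""
    delta = successor_relation(mdp)
    return (len(sigma) > 0 and sigma[0] == start
            and all((sigma[i], sigma[i + 1]) in delta for i in range(len(sigma) - 1)))

# Hypothetical toy MDP: one nondeterministic choice in state "a".
toy = MDP(
    states={"a", "b", "c"},
    initial="a",
    tau={"a": [{"b": 1.0}, {"b": 0.5, "c": 0.5}],
         "b": [{"b": 1.0}],
         "c": [{"c": 1.0}]},
    labels={"a": set(), "b": {"v"}, "c": set()},
)
```

A Markov chain in the sense of Definition 2.9 is then simply an `MDP` in which every list in `tau` has exactly one element.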
We usually omit $D$ from the notation; we also abbreviate $\mathrm{Paths}(D, s_0)$ as $\mathrm{Paths}(D)$ and $\mathrm{Paths}^\star(D, s_0)$ as $\mathrm{Paths}^\star(D)$. For $\omega \in \mathrm{Paths}(s)$, we write the $(n+1)$-st state of $\omega$ as $\omega_n$. As usual, we let $B_s \subseteq \wp(\mathrm{Paths}(s))$ be the Borel $\sigma$-algebra on the cones $\langle s_0 \ldots s_n \rangle \triangleq \{ \omega \in \mathrm{Paths}(s) \mid \omega_0 = s_0 \wedge \ldots \wedge \omega_n = s_n \}$. Additionally, for a set of finite paths $\Lambda \subseteq \mathrm{Paths}^\star(s)$, we define $\langle \Lambda \rangle \triangleq \bigcup_{\sigma \in \Lambda} \langle \sigma \rangle$.

Fig. 3: Markov Decision Process (states $s_0, \ldots, s_{14}$, labeled with subsets of $\{v, w\}$; distributions $\pi_1$ and $\pi_2$ in $s_0$)

Figure 3 shows a MDP. Absorbing states (i.e., states $s$ with $\tau(s) = \{1_s\}$) are represented by double lines. This MDP features a single nondeterministic decision, to be made in state $s_0$, namely between $\pi_1$ and $\pi_2$.

Definition 2.4. Let $D = (S, s_0, \tau, L)$ be a MDP and $A \subseteq S$. We define the sets of paths and finite paths reaching $A$ as

$$\mathrm{Reach}(D, s, A) \triangleq \{ \omega \in \mathrm{Paths}(D, s) \mid \exists i \ge 0.\ \omega_i \in A \}$$

and

$$\mathrm{Reach}^\star(D, s, A) \triangleq \{ \sigma \in \mathrm{Paths}^\star(D, s) \mid \mathrm{last}(\sigma) \in A \wedge \forall i \le |\sigma| - 1.\ \sigma_i \notin A \}$$

respectively. Note that $\mathrm{Reach}^\star(D, s, A)$ consists of those finite paths $\sigma$ reaching $A$ exactly once, at the end of the execution. It is easy to check that these sets are prefix free, i.e., contain finite paths such that none of them is a prefix of another one.

2.2 Schedulers

Schedulers (also called strategies, adversaries, or policies) resolve the nondeterministic choices in a MDP [PZ93,Var85,BdA95].

Definition 2.5. Let $D = (S, s_0, \tau, L)$ be a MDP. A scheduler $\eta$ on $D$ is a function from $\mathrm{Paths}^\star(D)$ to $\mathrm{Distr}(\wp(\mathrm{Distr}(S)))$ such that for all $\sigma \in \mathrm{Paths}^\star(D)$ we have $\eta(\sigma) \in \mathrm{Distr}(\tau(\mathrm{last}(\sigma)))$. We denote the set of all schedulers on $D$ by $\mathrm{Sch}(D)$.
Note that our schedulers are randomized, i.e., in a finite path $\sigma$ a scheduler chooses an element of $\tau(\mathrm{last}(\sigma))$ probabilistically. Under a scheduler $\eta$, the probability that the next state reached after the path $\sigma$ is $t$ equals $\sum_{\pi \in \tau(\mathrm{last}(\sigma))} \eta(\sigma)(\pi) \cdot \pi(t)$. In this way, a scheduler induces a probability measure on $B_s$ as usual.

Definition 2.6. Let $D$ be a MDP, $s \in S$, and $\eta$ an $s$-scheduler on $D$. We define the probability measure $\Pr_{s,\eta}$ as the unique measure on $B_s$ such that for all $s_0 s_1 \ldots s_n \in \mathrm{Paths}^\star(s)$

$$\Pr_{s,\eta}(\langle s_0 s_1 \ldots s_n \rangle) = \prod_{i=0}^{n-1} \sum_{\pi \in \tau(s_i)} \eta(s_0 s_1 \ldots s_i)(\pi) \cdot \pi(s_{i+1}).$$

We now recall the notions of deterministic and memoryless schedulers.

Definition 2.7. Let $D$ be a MDP, $s \in S$, and $\eta$ a scheduler of $D$. We say that $\eta$ is deterministic if $\eta(\sigma)(\pi_i)$ is either 0 or 1 for all $\pi_i \in \tau(\mathrm{last}(\sigma))$ and all $\sigma \in \mathrm{Paths}^\star(D)$. We say that a scheduler is memoryless if for all finite paths $\sigma_1, \sigma_2$ of $D$ with $\mathrm{last}(\sigma_1) = \mathrm{last}(\sigma_2)$ we have $\eta(\sigma_1) = \eta(\sigma_2)$.

Definition 2.8. Let $D$ be a MDP, $s \in S$, and $\Delta \in B_s$. Then the maximal and minimal probabilities of $\Delta$, $\Pr^+_s(\Delta)$ and $\Pr^-_s(\Delta)$, are defined by

$$\Pr^+_s(\Delta) \triangleq \sup_{\eta \in \mathrm{Sch}_s(D)} \Pr_{s,\eta}(\Delta) \quad\text{and}\quad \Pr^-_s(\Delta) \triangleq \inf_{\eta \in \mathrm{Sch}_s(D)} \Pr_{s,\eta}(\Delta).$$

A scheduler that attains $\Pr^+_s(\Delta)$ or $\Pr^-_s(\Delta)$ is called a maximizing or minimizing scheduler respectively.

A Markov chain (MC) is a MDP associating exactly one probability distribution to each state. In this way nondeterministic choices are no longer allowed.

Definition 2.9 (Markov chain). Let $D = (S, s_0, \tau, L)$ be a MDP. If $|\tau(s)| = 1$ for all $s \in S$, then we say that $D$ is a Markov chain (MC).

2.3 Linear Temporal Logic

Linear temporal logic (LTL) [MP91] is a modal temporal logic with modalities referring to time.
In LTL it is possible to encode formulas about the future of paths: a condition will eventually be true, a condition will be true until another fact becomes true, etc.

Definition 2.10. LTL is built up from the set of propositional variables $V$, the logical connectives $\neg$, $\wedge$, and a temporal modal operator $\mathcal{U}$ by the following grammar:

$$\phi ::= V \mid \neg\phi \mid \phi \wedge \phi \mid \phi\, \mathcal{U}\, \phi.$$

Using these operators we define $\vee$, $\to$, $\Diamond$, and $\Box$ in the standard way.

Definition 2.11. Let $D = (S, s_0, \tau, L)$ be a MDP. We define satisfiability for paths $\omega$ in $D$ and LTL formulas $\phi, \psi$ inductively by

$\omega \models_D v \Leftrightarrow v \in L(\omega_0)$
$\omega \models_D \neg\phi \Leftrightarrow \text{not}(\omega \models_D \phi)$
$\omega \models_D \phi \wedge \psi \Leftrightarrow \omega \models_D \phi$ and $\omega \models_D \psi$
$\omega \models_D \phi\, \mathcal{U}\, \psi \Leftrightarrow \exists i \ge 0.\ \omega{\downarrow}i \models_D \psi$ and $\forall\, 0 \le j$

p φ in a similar way. In case the MDP is fully probabilistic, i.e., a MC, the satisfiability problem is reduced to $D \models_{\bowtie p} \phi \Leftrightarrow \Pr_D(\mathrm{Sat}(\phi)) \bowtie p$, where $\bowtie\ \in \{<, \le, >, \ge\}$.

3 Counterexamples

In this section, we define what counterexamples are and how the problem of finding counterexamples for a general LTL property over Markov Decision Processes reduces to finding counterexamples to reachability problems over Markov chains.

Definition 3.1 (Counterexamples). Let $D$ be a MDP and $\phi$ an LTL formula. A counterexample to $D \models_{\le p} \phi$ is a measurable set $C \subseteq \mathrm{Sat}(\phi)$ such that $\Pr^+_D(C) > p$.

Counterexamples to $D \models_{> p} \phi$ and $D \models_{\ge p} \phi$ cannot be defined straightforwardly, as it is always possible to find a set $C \subseteq \mathrm{Sat}(\phi)$ such that $\Pr^-_D(C) \le p$ or $\Pr^-_D(C) < p$; note that the empty set trivially satisfies it. Therefore, the best way to find counterexamples to lower bounded probabilities is to find counterexamples to the dual properties $D \models_{< 1-p} \neg\phi$ and $D \models_{\le 1-p} \neg\phi$. That is, while for upper bounded probabilities a counterexample is a set of paths satisfying the property beyond the bound, for lower bounded probabilities the counterexample is a set of paths that does not satisfy the property with sufficient probability.

Fig. 4: (MDP with states $s_0, \ldots, s_5$; $s_4$ and $s_5$ labeled $\{v\}$; distributions $\pi_1$ and $\pi_2$ in $s_0$)

Example 1. Consider the MDP $D$ of Figure 4 and the LTL formula $\Diamond v$. It is easy to check that $D \not\models_{< 1} \Diamond v$. The set $C = \mathrm{Sat}(\Diamond v) = \{ \gamma \in \mathrm{Paths}(s_0) \mid \exists i \ge 0.\ \gamma = s_0 (s_1)^i (s_4)^\omega \} \cup \{ \gamma \in \mathrm{Paths}(s_0) \mid \exists i \ge 0.\ \gamma = s_0 (s_3)^i (s_5)^\omega \}$ is a counterexample. Note that $\Pr_{s_0,\eta}(C) = 1$ where $\eta$ is any deterministic scheduler of $D$ satisfying $\eta(s_0) = \pi_1$.

LTL formulas are actually checked by reducing the model checking problem to a reachability problem [dAKM97].
For checking upper bounded probabilities, the LTL formula is translated into an equivalent deterministic Rabin automaton and composed with the MDP under verification. On the obtained MDP, the set of states forming accepting end components (maximal components that trap accepting conditions with probability 1) is identified. The maximum probability of the LTL property on the original MDP is the same as the maximum probability of reaching a state of an accepting end component in the final MDP. Hence, from now on we will focus on counterexamples to properties of the form $D \models_{\le p} \Diamond\psi$ or $D \models$

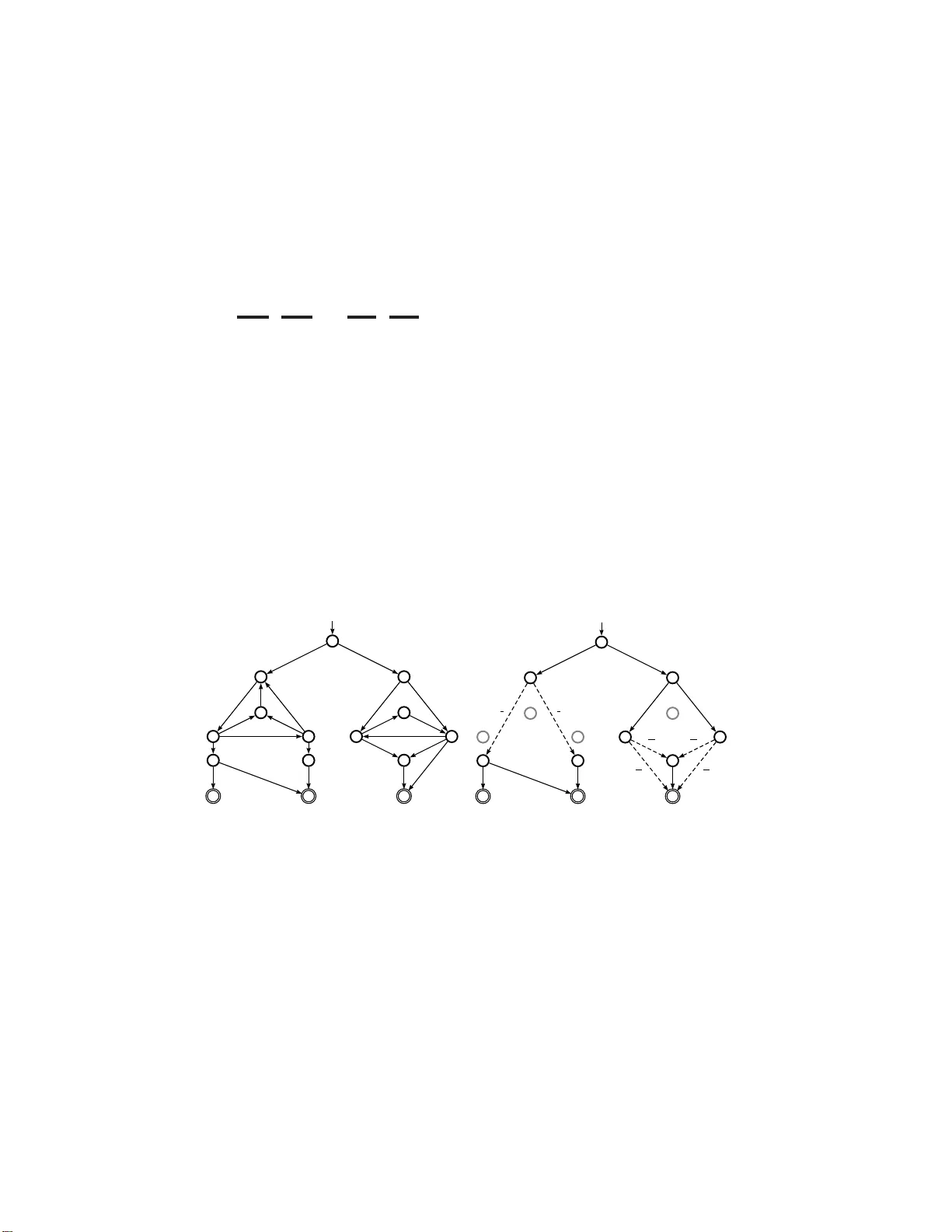

p. We denote the set of all representative counterexamples to $M \models_{\le p} \Diamond\psi$ by $R(M, p, \psi)$.

Theorem 4.2. Let $D$ be a MDP, $\psi$ a propositional formula and $p \in [0,1]$. If $C$ is a representative counterexample to $D \models_{\le p} \Diamond\psi$, then $\langle C \rangle$ is a counterexample to $D \models_{\le p} \Diamond\psi$. Furthermore, there exists a counterexample to $D \models_{\le p} \Diamond\psi$ if and only if there exists a representative counterexample to $D \models_{\le p} \Diamond\psi$.

Following [HK07a], we present the notions of minimum counterexample, strongest evidence and most indicative counterexamples.

Definition 4.3 (Minimum counterexample). Let $M$ be a MC, $\psi$ a propositional formula and $p \in [0,1]$. We say that $C \in R(M, p, \psi)$ is a minimum counterexample if $|C| \le |C'|$ for all $C' \in R(M, p, \psi)$.

Definition 4.4 (Strongest evidence). Let $M$ be a MC, $\psi$ a propositional formula and $p \in [0,1]$. A strongest evidence to $M \not\models_{\le p} \Diamond\psi$ is a finite path $\sigma \in \mathrm{Reach}^\star(M, \mathrm{Sat}(\psi))$ such that $\Pr_M(\langle\sigma\rangle) \ge \Pr_M(\langle\rho\rangle)$ for all $\rho \in \mathrm{Reach}^\star(M, \mathrm{Sat}(\psi))$.

Definition 4.5 (Most indicative counterexample). Let $M$ be a MC, $\psi$ a propositional formula and $p \in [0,1]$. We call $C \in R(M, p, \psi)$ a most indicative counterexample if it is minimum and $\Pr(\langle C \rangle) \ge \Pr(\langle C' \rangle)$ for all minimum counterexamples $C' \in R(M, p, \psi)$.

Unfortunately, very often most indicative counterexamples are very large (even infinite), many of their elements have insignificant measure, and elements can be extremely similar to each other (consequently providing the same diagnostic information). Even worse, sometimes the finite paths with highest probability do not exhibit the way in which the system accumulates higher probability to reach the undesired property (and consequently where an error occurs with higher probability).
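To make the size issue concrete, the sketch below collects finite paths of the chain of Figure 1 in order of decreasing probability until their total mass exceeds the bound, which yields a counterexample with the fewest possible paths. It is only an illustration under assumptions: the transition probabilities are those read off Figures 1 and 2, and the function names are hypothetical.

```python
import heapq

def branch_paths(first, loop, leave):
    """Yield the probabilities of s0 (s_i)^k s_target for k = 1, 2, ...

    The sequence is decreasing because each extra loop multiplies by loop < 1.
    """
    prob = first * leave
    while True:
        yield prob
        prob *= loop

def minimum_counterexample(streams, bound):
    """Take paths in decreasing probability order until the mass exceeds bound."""
    chosen, mass = [], 0.0
    # heapq.merge is lazy, so the infinite generators are only consumed as needed.
    for prob in heapq.merge(*streams, reverse=True):
        chosen.append(prob)
        mass += prob
        if mass > bound:
            return chosen, mass

# Fig. 1 (assumed values): left branch 0.4 / loop 0.5 / leave 0.5,
# right branch 0.6 / loop 0.99 / leave 0.01; bound p = 0.5.
chosen, mass = minimum_counterexample(
    [branch_paths(0.4, 0.5, 0.5), branch_paths(0.6, 0.99, 0.01)], 0.5)
print(len(chosen), "paths are needed, with total mass", mass)
```

Since the six most probable paths only add up to 0.39375, the result necessarily mixes many near-identical paths from both branches, even though the example has only two essentially different behaviours.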
For these reasons, we are of the opinion that representative counterexamples are still too general to be useful as feedback information. We approach this problem by splitting the representative counterexample into sets of finite paths following a "similarity" criterion (introduced in Section 5). These sets are called witnesses of the counterexample.

Recall that a set $Y$ of nonempty sets is a partition of $X$ if the elements of $Y$ cover $X$ and the elements of $Y$ are pairwise disjoint. We define counterexample partitions in the following way.

Definition 4.6 (Counterexample partitions and witnesses). Let $D$ be a MDP, $\psi$ a propositional formula, $p \in [0,1]$, and $C$ a representative counterexample to $D \models_{\le p} \Diamond\psi$. A counterexample partition $W_C$ is a partition of $C$. We call the elements of $W_C$ witnesses.

Since not every partition generates useful witnesses (from the debugging perspective), we now state properties that witnesses must satisfy in order to be valuable as diagnostic information. In Section 7 we show how to partition the representative counterexample in order to obtain useful witnesses.

Similarity: Elements of a witness should provide similar debugging information.
Accuracy: Witnesses with higher probability should show evolution of the system with higher probability of containing errors.
Originality: Different witnesses should provide different debugging information.
Significance: The probability of a witness should be close to the probability bound $p$.
Finiteness: The number of witnesses of a counterexample partition should be finite.

5 Rails and Torrents

As argued before, we consider that representative counterexamples are excessively general to be useful as feedback information. Therefore, we group finite paths of a representative counterexample in witnesses if they are "similar enough".
We will consider finite paths that behave the same outside SCCs of the system as providing similar feedback information.

In order to formalize this idea, we first reduce the original Markov chain to an acyclic one that preserves reachability probabilities. We do so by removing all SCCs $K$ of $M$, keeping just the input states of $K$. In this way, we get a new acyclic MC denoted by $\mathrm{Ac}(M)$. The probability matrix of this Markov chain relates the input states of each SCC to its output states via the reachability probability between these states in $M$. Secondly, we establish a map between finite paths $\sigma$ in $\mathrm{Ac}(M)$ (rails) and sets of finite paths $W_\sigma$ in $M$ (torrents). Each torrent contains finite paths that are similar, i.e., behave the same outside SCCs. Additionally we show that the probability of $\sigma$ is equal to the probability of $W_\sigma$.

Reduction to Acyclic Markov Chains

Consider a MC $M = (S, s_0, P, L)$. Recall that a subset $K \subseteq S$ is called strongly connected if for every $s, t \in K$ there is a finite path from $s$ to $t$. Additionally $K$ is called a strongly connected component (SCC) if it is a maximal (with respect to $\subseteq$) strongly connected subset of $S$.

Note that every state is a member of exactly one SCC of $M$ (even those states that are not involved in cycles, since the trivial finite path $s$ connects $s$ to itself). From now on we let $\mathrm{SCC}^\star$ be the set of nontrivial strongly connected components of a MC, i.e., those composed of more than one state. A Markov chain is called acyclic if it does not have nontrivial SCCs. Note that an acyclic Markov chain still has absorbing states.

Definition 5.1. Let $M = (S, s_0, P, L)$ be a MC.
Then, for each $\mathrm{SCC}^\star$ $K$ of $M$, we define the sets $\mathrm{Inp}_K \subseteq S$ of all states in $K$ that have an incoming transition from a state outside of $K$ and $\mathrm{Out}_K \subseteq S$ of all states outside of $K$ that have an incoming transition from a state of $K$ in the following way

$$\mathrm{Inp}_K \triangleq \{ u \in K \mid \exists s \in S \setminus K.\ P(s, u) > 0 \}, \qquad \mathrm{Out}_K \triangleq \{ s \in S \setminus K \mid \exists u \in K.\ P(u, s) > 0 \}.$$

We also define for each $\mathrm{SCC}^\star$ $K$ a MC related to $K$ as $M_K \triangleq (K \cup \mathrm{Out}_K, s_K, P_K, L_K)$ where $s_K$ is any state in $\mathrm{Inp}_K$, $L_K(s) \triangleq L(s)$, and $P_K(s, t)$ is equal to $P(s, t)$ if $s \in K$ and equal to $1_s(t)$ otherwise. Additionally, for every state $s$ involved in nontrivial SCCs we define $\mathrm{SCC}^+_s$ as $M_K$, where $K$ is the $\mathrm{SCC}^\star$ of $M$ such that $s \in K$.

Now we are able to define an acyclic MC $\mathrm{Ac}(M)$ related to $M$.

Definition 5.2. Let $M = (S, s_0, P, L)$ be a MC. We define $\mathrm{Ac}(M) \triangleq (S', s_0, P', L')$ where

• $S' \triangleq \overbrace{S \setminus \bigcup_{K \in \mathrm{SCC}^\star} K}^{S_{com}} \ \cup\ \overbrace{\bigcup_{K \in \mathrm{SCC}^\star} \mathrm{Inp}_K}^{S_{inp}}$,
• $L' \triangleq L|_{S'}$,
• $P'(s, t) \triangleq \begin{cases} P(s, t) & \text{if } s \in S_{com}, \\ \Pr_{M,s}(\mathrm{Reach}(\mathrm{SCC}^+_s, s, \{t\})) & \text{if } s \in S_{inp} \wedge t \in \mathrm{Out}_{\mathrm{SCC}^+_s}, \\ 1_s(t) & \text{if } s \in S_{inp} \wedge \mathrm{Out}_{\mathrm{SCC}^+_s} = \emptyset, \\ 0 & \text{otherwise.} \end{cases}$

Note that $\mathrm{Ac}(M)$ is indeed acyclic.

Example 2. Consider the MC $M$ of Figure 5(a). The strongly connected components of $M$ are $K_1 \triangleq \{s_1, s_3, s_4, s_7\}$, $K_2 \triangleq \{s_5, s_6, s_8\}$ and the singletons $\{s_0\}$, $\{s_2\}$, $\{s_9\}$, $\{s_{10}\}$, $\{s_{11}\}$, $\{s_{12}\}$, $\{s_{13}\}$, and $\{s_{14}\}$. The input states of $K_1$ are $\mathrm{Inp}_{K_1} = \{s_1\}$ and its output states are $\mathrm{Out}_{K_1} = \{s_9, s_{10}\}$. For $K_2$, $\mathrm{Inp}_{K_2} = \{s_5, s_6\}$ and $\mathrm{Out}_{K_2} = \{s_{11}, s_{14}\}$. The reduced acyclic MC of $M$ is shown in Figure 5(b).

Fig. 5: Rails and Torrents. (a) Original MC; (b) Derived acyclic MC (the derived transition probabilities include 2/3, 1/3, 35/41 and 6/41)

We now relate (finite) paths in $\mathrm{Ac}(M)$ (rails) to sets of (finite) paths in $M$ (torrents).

Definition 5.3 (Rails). Let $M$ be a MC. A finite path $\sigma \in \mathrm{Paths}^\star(\mathrm{Ac}(M))$ will be called a rail of $M$.

Consider a rail $\sigma$, i.e., a finite path of $\mathrm{Ac}(M)$. We will use $\sigma$ to represent those paths $\omega$ of $M$ that behave "similar to" $\sigma$ outside SCCs of $M$. Naively, this means that $\sigma$ is a subsequence of $\omega$. There are two technical subtleties to deal with: every input state in $\sigma$ must be the first state in its SCC in $\omega$ (freshness) and every SCC visited by $\omega$ must also be visited by $\sigma$ (inertia) (see Definition 5.5). We need these extra conditions to make sure that no path $\omega$ behaves "similar to" two distinct rails (see Lemma 5.7).

Recall that given a finite sequence $\sigma$ and a (possibly infinite) sequence $\omega$, we say that $\sigma$ is a subsequence of $\omega$, denoted by $\sigma \sqsubseteq \omega$, if and only if there exists a strictly increasing function $f : \{0, 1, \ldots, |\sigma| - 1\} \to \{0, 1, \ldots, |\omega| - 1\}$ such that $\forall\, 0 \le i < |\sigma|.\ \sigma_i = \omega_{f(i)}$. If $\omega$ is an infinite sequence, we interpret the codomain of $f$ as $\mathbb{N}$. In case $f$ is such a function we write $\sigma \sqsubseteq_f \omega$. Note that finite paths and paths are sequences.

Definition 5.4. Let $M = (S, s_0, P, L)$ be a MC. On $S$ we consider the equivalence relation $\sim_M$ satisfying $s \sim_M t$ if and only if $s$ and $t$ are in the same strongly connected component. Again, we usually omit the subscript $M$ from the notation.

The following definition refines the notion of subsequence, taking care of the two technical subtleties noted above.

Definition 5.5. Let $M = (S, s_0, P, L)$ be a MC, $\omega$ a (finite) path of $M$, and $\sigma \in \mathrm{Paths}^\star(\mathrm{Ac}(M))$ a finite path of $\mathrm{Ac}(M)$.
Then we write σ ω if there exists $f : \{0, 1, \ldots, |\sigma| - 1\} \to \mathbb{N}$ such that $\sigma \sqsubseteq_f \omega$ and for all $0 \le i < |\sigma|$ we have $\forall\, 0 \le j$ 0 } is the set of states reaching $\psi$ in $M$.

The following theorem shows the relation between paths, finite paths, and probabilities of $M$, $M_\psi$, and $\mathrm{Ac}(M_\psi)$. Most importantly, the probability of a rail $\sigma$ (in $\mathrm{Ac}(M_\psi)$) is equal to the probability of its associated torrent (in $M$) (item 5 below) and the probability of $\Diamond\psi$ is not affected by reducing $M$ to $\mathrm{Ac}(M_\psi)$ (item 6 below).

Note that a rail $\sigma$ is always a finite path in $\mathrm{Ac}(M_\psi)$, but that we can talk about its associated torrent $\mathrm{Torr}(M_\psi, \sigma)$ in $M_\psi$ and about its associated torrent $\mathrm{Torr}(M, \sigma)$ in $M$. The former exists for technical convenience; it is the latter that we are ultimately interested in. The following theorem also shows that for our purposes, viz. the definition of the generators of the torrent and the probability of the torrent, there is no difference (items 3 and 4 below).

Theorem 6.2. Let $M = (S, s_0, P, L)$ be a MC and $\psi$ a propositional formula. Then for every $\sigma \in \mathrm{Paths}^\star(M_\psi)$
1. $\mathrm{Reach}^\star(M_\psi, s_0, \mathrm{Sat}(\psi)) = \mathrm{Reach}^\star(M, s_0, \mathrm{Sat}(\psi))$,
2. $\Pr_{M_\psi}(\langle\sigma\rangle) = \Pr_M(\langle\sigma\rangle)$,
3. $\mathrm{GenTorr}(M_\psi, \sigma) = \mathrm{GenTorr}(M, \sigma)$,
4. $\Pr_{M_\psi}(\mathrm{Torr}(M_\psi, \sigma)) = \Pr_M(\mathrm{Torr}(M, \sigma))$,
5. $\Pr_{\mathrm{Ac}(M_\psi)}(\langle\sigma\rangle) = \Pr_M(\mathrm{Torr}(M, \sigma))$,
6. $\mathrm{Ac}(M_\psi) \models_{\le p} \Diamond\psi$ if and only if $M \models_{\le p} \Diamond\psi$, for any $p \in [0,1]$.

Definition 6.3 (Torrent-Counterexamples). Let $M = (S, s_0, P, L)$ be a MC, $\psi$ a propositional formula, and $p \in [0,1]$ such that $M \not\models_{\le p} \Diamond\psi$. Let $C$ be a representative counterexample to $\mathrm{Ac}(M_\psi) \models_{\le p} \Diamond\psi$. We define the set

$$\mathrm{TorRepCount}(C) \triangleq \{ \mathrm{GenTorr}(M, \sigma) \mid \sigma \in C \}.$$

We call the set $\mathrm{TorRepCount}(C)$ a torrent-counterexample of $C$. Note that this set is a partition of a counterexample to $M \models_{\le p} \Diamond\psi$.
Additionally, we denote by $R_t(M, p, \psi)$ the set of all torrent-counterexamples to $M \models_{\le p} \Diamond\psi$, i.e., $\{ \mathrm{TorRepCount}(C) \mid C \in R(M, p, \psi) \}$.

Theorem 6.4. Let $M = (S, s_0, P, L)$ be a MC, $\psi$ a propositional formula, and $p \in [0,1]$ such that $M \not\models_{\le p} \Diamond\psi$. Take $C$ a representative counterexample to $\mathrm{Ac}(M_\psi) \models_{\le p} \Diamond\psi$. Then the set of finite paths $\biguplus_{W \in \mathrm{TorRepCount}(C)} W$ is a representative counterexample to $M \models_{\le p} \Diamond\psi$.

Note that for each $\sigma \in C$ we get a witness $\mathrm{GenTorr}(M, \sigma)$. Also note that the number of rails is finite, so there are also only finitely many witnesses.

Following [HK07a], we extend the notions of minimum counterexample, strongest evidence and most indicative counterexample to torrents.

Definition 6.5 (Minimum torrent-counterexample). Let $M$ be a MC, $\psi$ a propositional formula and $p \in [0,1]$. We say that $C_t \in R_t(M, p, \psi)$ is a minimum torrent-counterexample if $|C_t| \le |C'_t|$ for all $C'_t \in R_t(M, p, \psi)$.

Definition 6.6 (Strongest torrent-evidence). Let $M$ be a MC, $\psi$ a propositional formula and $p \in [0,1]$. A strongest torrent-evidence to $M \not\models_{\le p} \Diamond\psi$ is a torrent $W_\sigma \in \mathrm{Torr}(M, \mathrm{Sat}(\psi))$ such that $\Pr_M(W_\sigma) \ge \Pr_M(W_\rho)$ for all $W_\rho \in \mathrm{Torr}(M, \mathrm{Sat}(\psi))$.

Now we define our notion of significant diagnostic counterexamples. It is the generalization of most indicative counterexample from [HK07a] to our setting.

Definition 6.7 (Most indicative torrent-counterexample). Let $M$ be a MC, $\psi$ a propositional formula and $p \in [0,1]$. We call $C_t \in R_t(M, p, \psi)$ a most indicative torrent-counterexample if it is a minimum torrent-counterexample and $\Pr(\bigcup_{W \in C_t} \langle W \rangle) \ge \Pr(\bigcup_{W \in C'_t} \langle W \rangle)$ for all minimum torrent-counterexamples $C'_t \in R_t(M, p, \psi)$.
By Theorem 6.4 it is possible to obtain strongest torrent-evidence and most indicative torrent-counterexamples of a MC $M$ by obtaining strongest evidence and most indicative counterexamples of $\mathrm{Ac}(M_\psi)$ respectively.

7 Computing Counterexamples

In this section we show how to compute most indicative torrent-counterexamples. We also discuss what information to present to the user: how to present witnesses and how to deal with overly large strongly connected components.

7.1 Maximizing Schedulers

The calculation of a maximal probability on a reachability problem can be performed by solving a linear minimization problem [BdA95,dA97]. This minimization problem is defined on a system of inequalities that has a variable $x_i$ for each different state $s_i$ and an inequality $\sum_j \pi(s_j) \cdot x_j \le x_i$ for each distribution $\pi \in \tau(s_i)$. The maximizing (deterministic memoryless) scheduler $\eta$ can be easily extracted from such a system of inequalities after obtaining the solution. If $p_0, \ldots, p_n$ are the values that minimize $\sum_i x_i$ in the previous system, then $\eta$ is such that, for all $s_i$, $\eta(s_i) = \pi$ whenever $\sum_j \pi(s_j) \cdot p_j = p_i$. In the following we denote $P_{s_i}[\Diamond\psi] \triangleq x_i$.

7.2 Computing most indicative torrent-counterexamples

We divide the computation of most indicative torrent-counterexamples to $D \models_{\le p} \Diamond\psi$ into three stages: pre-processing, SCC analysis, and searching.

Pre-processing stage. We first modify the original MC $M$ by making all states in $\mathrm{Sat}(\psi) \cup S \setminus \mathrm{Sat}_\Diamond(\psi)$ absorbing. In this way we obtain the MC $M_\psi$ from Definition 6.1. Note that we do not have to spend additional computational resources to compute this set, since $\mathrm{Sat}_\Diamond(\psi) = \{ s \in S \mid P_s[\Diamond\psi] > 0 \}$ and hence all required data is already available from the LTL model checking phase.

SCC analysis stage.
We remove all SCCs $K$ of $M_\psi$, keeping just the input states of $K$, and thereby obtain the acyclic MC $\mathrm{Ac}(M_\psi)$ according to Definition 5.2. To compute this, we first need to find the SCCs of $M_\psi$. There exist well-known algorithms for this: Kosaraju's, Tarjan's, and Gabow's algorithms (among others). We also have to compute the reachability probability from input states to output states of every SCC. This can be done by using steady state analysis techniques [Cas93].

Searching stage. To find most indicative torrent-counterexamples in $M$, we find most indicative counterexamples in $\mathrm{Ac}(M_\psi)$. For this we use the same approach as [HK07a], turning the MC into a weighted digraph in order to exchange the problem of finding the finite path with highest probability for a shortest path problem. The nodes of the digraph are the states of the MC and there is an edge between $s$ and $t$ if $P(s, t) > 0$. The weight of such an edge is $-\log P(s, t)$.

Finding the most indicative counterexample in $\mathrm{Ac}(M_\psi)$ is now reduced to finding $k$ shortest paths. As explained in [HK07a], our algorithm has to compute $k$ on the fly. Eppstein's algorithm [Epp98] produces the $k$ shortest paths in general in $O(m + n \log n + k)$, where $m$ is the number of edges and $n$ the number of nodes. In our case, since $\mathrm{Ac}(M_\psi)$ is acyclic, the complexity decreases to $O(m + k)$.

7.3 Debugging issues

Representative finite paths. What we have computed so far is a most indicative counterexample to $\mathrm{Ac}(M_\psi) \models_{\le p} \Diamond\psi$. This is a finite set of rails, i.e., a finite set of paths in $\mathrm{Ac}(M_\psi)$. Each of these paths $\sigma$ represents a witness $\mathrm{GenTorr}(M, \sigma)$. Note that this witness itself usually has infinitely many elements.

In practice, one somehow has to display a witness to the user. The obvious way would be to show the user the rail $\sigma$. This, however, may be confusing to the user, as $\sigma$ is not a finite path of the original Markov Decision Process.
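The searching stage of Section 7.2 can be illustrated by the following sketch. It is not Eppstein's algorithm and does not reach the complexity bounds quoted above; it is a plain best-first enumeration of paths, swapped in for brevity, that relies on the same $-\log P(s, t)$ edge weights and determines $k$ on the fly by stopping as soon as the accumulated probability exceeds the bound. The function name `most_probable_rails`, the dictionary encoding of the transition probabilities, and the example chain are assumptions made for this illustration only. The input is assumed to be acyclic apart from self-loops on absorbing states, which are skipped.

```python
import heapq
import math


def most_probable_rails(P, start, targets, bound):
    """Enumerate paths from `start` to a target state, most probable first,
    until their accumulated probability exceeds `bound`.

    P:       dict state -> dict successor -> probability (acyclic chain).
    targets: set of goal states (treated as absorbing).
    Returns (list of (path, probability), accumulated probability).
    """
    # Heap entries are (cost, path), where cost = -log(probability of path).
    heap = [(0.0, (start,))]
    found, mass = [], 0.0
    while heap and mass <= bound:
        cost, path = heapq.heappop(heap)
        state = path[-1]
        if state in targets:
            # All weights are non-negative, so complete paths are popped
            # in order of non-decreasing cost, i.e. decreasing probability.
            prob = math.exp(-cost)
            found.append((path, prob))
            mass += prob
            continue
        for succ, prob in P.get(state, {}).items():
            if prob > 0 and succ != state:  # skip self-loops of absorbing states
                heapq.heappush(heap, (cost - math.log(prob), path + (succ,)))
    return found, mass


# Hypothetical acyclic chain: two rails reach "goal".
chain = {
    "s": {"a": 0.6, "b": 0.4},
    "a": {"goal": 0.5, "sink": 0.5},
    "b": {"goal": 1.0},
}
rails, total = most_probable_rails(chain, "s", {"goal"}, 0.5)
```

For the bound 0.5 the enumeration returns the rail through `b` (probability 0.4) followed by the rail through `a` (probability 0.3) and then stops, because the accumulated mass 0.7 exceeds the bound.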
Instead of presenting the user with $\sigma$, we therefore show the user the element of $\mathrm{GenTorr}(M, \sigma)$ with highest probability.

Definition 7.1. Let $M$ be a MC, and $\sigma \in \mathrm{Paths}^\star(\mathrm{Ac}(M_\psi))$ a rail of $M$. We define the representant of $\mathrm{Torr}(M, \sigma)$ as

$$\mathrm{repTorr}(M, \sigma) = \mathrm{repTorr}\Big(\biguplus_{\rho \in \mathrm{GenTorr}(M, \sigma)} \langle \rho \rangle\Big) \triangleq \arg\max_{\rho \in \mathrm{GenTorr}(M, \sigma)} \Pr(\langle \rho \rangle).$$

Note that given $\mathrm{repTorr}(M, \sigma)$, one can easily recover $\sigma$. Therefore, no information is lost by presenting torrents as a single element of the torrent instead of as a rail.

Expanding SCC. It is possible that the system contains some very large strongly connected components. In that case, a single witness could have a very large probability mass and one could argue that the information presented to the user is not detailed enough. For instance, consider the Markov chain of Figure 6, in which there is a single large SCC with input state $t$ and output state $u$.

[Fig. 6: Markov chain with a single large SCC $K_1$; labeled states $s$, $t$, $u$.]

The most indicative torrent-counterexample to the property $M \models_{\le 0.9} \Diamond\psi$ is simply $\{\mathrm{GenTorr}(stu)\}$, i.e., a single witness with probability mass 1 associated to the rail $stu$. Although this may seem uninformative, we argue that it is more informative than listing several paths of the form $st \cdots u$ with probability summing up to, say, 0.91. Our single witness counterexample suggests that the outgoing edge to a state not reaching $\psi$ was simply forgotten; the listing of paths still allows the possibility that one of the probabilities in the whole system is simply wrong.

Nevertheless, if the user needs more information to tackle bugs inside strongly connected components, note that there is more information available at this point.
In particular, for every strongly connected component $K$, every input state $s$ of $K$ (even for every state in $K$), and every output state $t$ of $K$, the probability of reaching $t$ from $s$ is already available from the computation of $\mathrm{Ac}(M_\psi)$ during the SCC analysis stage of Section 7.2.

8 Final Discussion

We have presented a novel technique for representing and computing counterexamples for nondeterministic and probabilistic systems. We partition a counterexample into witnesses and state five properties that we believe good witnesses should satisfy in order to be useful as a debugging tool: (similarity) elements of a witness should provide similar debugging information; (originality) different witnesses should provide different debugging information; (accuracy) witnesses with higher probability should indicate system behavior more likely to contain errors; (significance) the probability of a witness should be relatively high; (finiteness) there should be finitely many witnesses. We achieve this by grouping finite paths in a counterexample together in a witness if they behave the same outside the strongly connected components.

Presently, some work has been done on counterexample generation techniques for different variants of probabilistic models (discrete-time and continuous-time Markov chains) [AHL05,AL06,HK07a,HK07b]. In our terminology, these works consider witnesses consisting of a single finite path. We have already discussed in the Introduction that the single path approach does not meet the properties of accuracy, originality, significance, and finiteness.

Instead, our witness/torrent approach provides a high level of abstraction of a counterexample. By grouping together finite paths that behave the same outside strongly connected components in a single witness, we can achieve these properties to a higher extent.
Behaving the same outside strongly connected components is a reasonable way of formalizing the concept of providing similar debugging information. This grouping also makes witnesses significantly different from each other: each witness comes from a different rail and each rail provides a different way to reach the undesired property. Each witness therefore provides original information. Of course, our witnesses are more significant than single finite paths, because they are sets of finite paths. This also gives us more accuracy than the approach with single finite paths, as a collection of finite paths behaving the same and reaching an undesired condition with high probability is more likely to show how the system reaches this condition than just a single path. Finally, because there is a finite number of rails, there is also a finite number of witnesses.

Another key difference between our work and previous ones is that our technique allows us to generate counterexamples for probabilistic systems with nondeterminism. However, a recent report [AL07] also considers counterexample generation for MDPs. This work is limited to upper bounded pCTL formulas without nested temporal operators. Besides, their technique differs significantly from ours.

Finally, among the related work, we would like to stress the result of [HK07a], which provides a systematic characterization of counterexample generation in terms of shortest paths problems. We use this result to generate counterexamples for the acyclic Markov chains.

In the future we intend to implement a tool to generate our significant diagnostic counterexamples; a very preliminary version has already been implemented. There is still work to be done on improving the visualization of the witnesses, in particular when a witness captures a large strongly connected component.
Another direction is to investigate how this work can be extended to timed systems, either modeled with continuous time Markov chains or with probabilistic timed automata.

References

AHL05. Husain Aljazzar, Holger Hermanns, and Stefan Leue. Counterexamples for timed probabilistic reachability. In Formal Modeling and Analysis of Timed Systems (FORMATS '05), volume 3829, pages 177–195, 2005.
AL06. Husain Aljazzar and Stefan Leue. Extended directed search for probabilistic timed reachability. In Formal Modeling and Analysis of Timed Systems (FORMATS '06), pages 33–51, 2006.
AL07. Husain Aljazzar and Stefan Leue. Counterexamples for model checking of Markov decision processes. Computer Science Technical Report soft-08-01, University of Konstanz, December 2007.
BdA95. Andrea Bianco and Luca de Alfaro. Model checking of probabilistic and nondeterministic systems. In G. Goos, J. Hartmanis, and J. van Leeuwen, editors, Foundations of Software Technology and Theoretical Computer Science (FSTTCS '95), volume 1026, pages 499–513, 1995.
Bel57. Richard E. Bellman. A Markovian decision process. J. Math. Mech., 6:679–684, 1957.
BLR05. Gerd Behrmann, Kim G. Larsen, and Jacob I. Rasmussen. Optimal scheduling using priced timed automata. SIGMETRICS Perform. Eval. Rev., 32(4):34–40, 2005.
Cas93. Christos G. Cassandras. Discrete Event Systems: Modeling and Performance Analysis. Richard D. Irwin, Inc., and Aksen Associates, Inc., 1993.
CGJ+00. Edmund M. Clarke, Orna Grumberg, Somesh Jha, Yuan Lu, and Helmut Veith. Counterexample-guided abstraction refinement. In Computer Aided Verification, pages 154–169, 2000.
dA97. Luca de Alfaro. Formal Verification of Probabilistic Systems. PhD thesis, Stanford University, 1997.
dAKM97. Luca de Alfaro, Arjun Kapur, and Zohar Manna. Hybrid diagrams: A deductive-algorithmic approach to hybrid system verification.
In Symposium on Theoretical Aspects of Computer Science, pages 153–164, 1997.
Epp98. David Eppstein. Finding the k shortest paths. SIAM Journal on Computing, pages 652–673, 1998.
FV97. J. Filar and K. Vrieze. Competitive Markov Decision Processes. 1997.
HK07a. Tingting Han and Joost-Pieter Katoen. Counterexamples in probabilistic model checking. In Tools and Algorithms for the Construction and Analysis of Systems: 13th International Conference (TACAS '07), volume 4424, pages 60–75, 2007.
HK07b. Tingting Han and Joost-Pieter Katoen. Providing evidence of likely being on time: Counterexample generation for CTMC model checking. In International Symposium on Automated Technology for Verification and Analysis (ATVA '07), volume 4762, pages 331–346, 2007.
MP91. Z. Manna and A. Pnueli. The Temporal Logic of Reactive and Concurrent Systems: Specification. Springer, 1991.
PZ93. Amir Pnueli and Lenore D. Zuck. Probabilistic verification. Information and Computation, 103(1):1–29, 1993.
SdV04. Ana Sokolova and Erik P. de Vink. Probabilistic automata: System types, parallel composition and comparison. In Christel Baier, Boudewijn R. Haverkort, Holger Hermanns, Joost-Pieter Katoen, and Markus Siegle, editors, Validation of Stochastic Systems: A Guide to Current Research, volume 2925, pages 1–43. 2004.
SL95. Roberto Segala and Nancy Lynch. Probabilistic simulations for probabilistic processes. Nordic Journal of Computing, 2(2):250–273, 1995.
Var85. M. Y. Vardi. Automatic verification of probabilistic concurrent finite-state systems. In Proc. 26th IEEE Symp. Found. Comp. Sci., pages 327–338, 1985.

Appendix: Proofs

In this appendix we give proofs of the results that were omitted from the paper for space reasons.

Observation 8.1. Let $M$ be a MC.
Since $\mathrm{Ac}(M)$ is acyclic we have $\sigma_i \not\sim \sigma_j$ for every $\sigma \in \mathrm{Paths}^\star(\mathrm{Ac}(M))$ and $i \ne j$ (with the exception of absorbing states).

Observation 8.2. Let $\sigma$, $\omega$ and $f$ be such that $\sigma \sqsubseteq_f \omega$. Then $\forall i : \exists j : \omega_i \sim \sigma_j$. This follows from $\sigma \sqsubseteq_f \omega$ and the inertia property.

Lemma 8.3. Let $M$ be a MC, and $\sigma ts \in \mathrm{Paths}^\star(\mathrm{Ac}(M))$. Additionally let $\Delta_{\sigma ts} \triangleq \{ \rho\, \mathrm{tail}(\pi) \mid \rho \in \mathrm{GenTorr}(\sigma t),\ \pi \in \mathrm{Paths}^\star(\mathrm{SCC}^+_t, t, \{s\}) \}$. Then $\Delta_{\sigma ts} = \mathrm{GenTorr}(\sigma ts)$.

Proof. ($\supseteq$) Let $\rho_0 \rho_1 \cdots \rho_k \in \mathrm{GenTorr}(\sigma ts)$ and $n_t$ the lowest subindex of $\rho$ such that $\rho_{n_t} = t$. Take $\rho \triangleq \rho_0 \rho_1 \cdots \rho_{n_t}$ and $\pi \triangleq \rho_{n_t} \cdots \rho_k$ (note that $\rho_0 \rho_1 \cdots \rho_k = \rho\, \mathrm{tail}(\pi)$). In order to prove that $\rho_0 \rho_1 \cdots \rho_k \in \Delta_{\sigma ts}$ we need to prove that

(1) $\rho \in \mathrm{GenTorr}(\sigma t)$, and
(2) $\pi \in \mathrm{Paths}^\star(\mathrm{SCC}^+_t, t, \{s\})$.

(1) Let $f$ be such that $\sigma ts \sqsubseteq_f \rho_0 \rho_1 \cdots \rho_k$ and $f(|\sigma ts| - 1) = k$. Take $g : \{0, 1, \dots, |\sigma t| - 1\} \to \mathbb{N}$ to be the restriction of $f$. It is easy to check that $\sigma t \sqsubseteq_g \rho$. Additionally $f(|\sigma t| - 1) = n_t$ (otherwise $f$ would not satisfy the freshness property for $i = |\sigma t| - 1$). Then, by definition of $g$, we have $g(|\sigma t| - 1) = n_t$.

(2) It is clear that $\pi$ is a path from $t$ to $s$. Therefore we only have to show that every state of $\pi$ is in $\mathrm{SCC}^+_t$. By definition of $\mathrm{SCC}^+_t$, $\pi_0 = t \in \mathrm{SCC}^+_t$ and $s \in \mathrm{SCC}^+_t$ since $s \in \mathrm{Out}_{\mathrm{SCC}^+_t}$. Additionally, since $f$ satisfies the inertia property we have that $\forall\, f(|\sigma t| - 1)$
