Fair and Efficient TCP Access in the IEEE 802.11 Infrastructure Basic Service Set

When the stations in an IEEE 802.11 infrastructure Basic Service Set (BSS) employ Transmission Control Protocol (TCP) in the transport layer, this exacerbates per-flow unfair access which is a direct result of uplink/downlink bandwidth asymmetry in t…

Authors: Feyza Keceli, Inanc Inan, Ender Ayanoglu

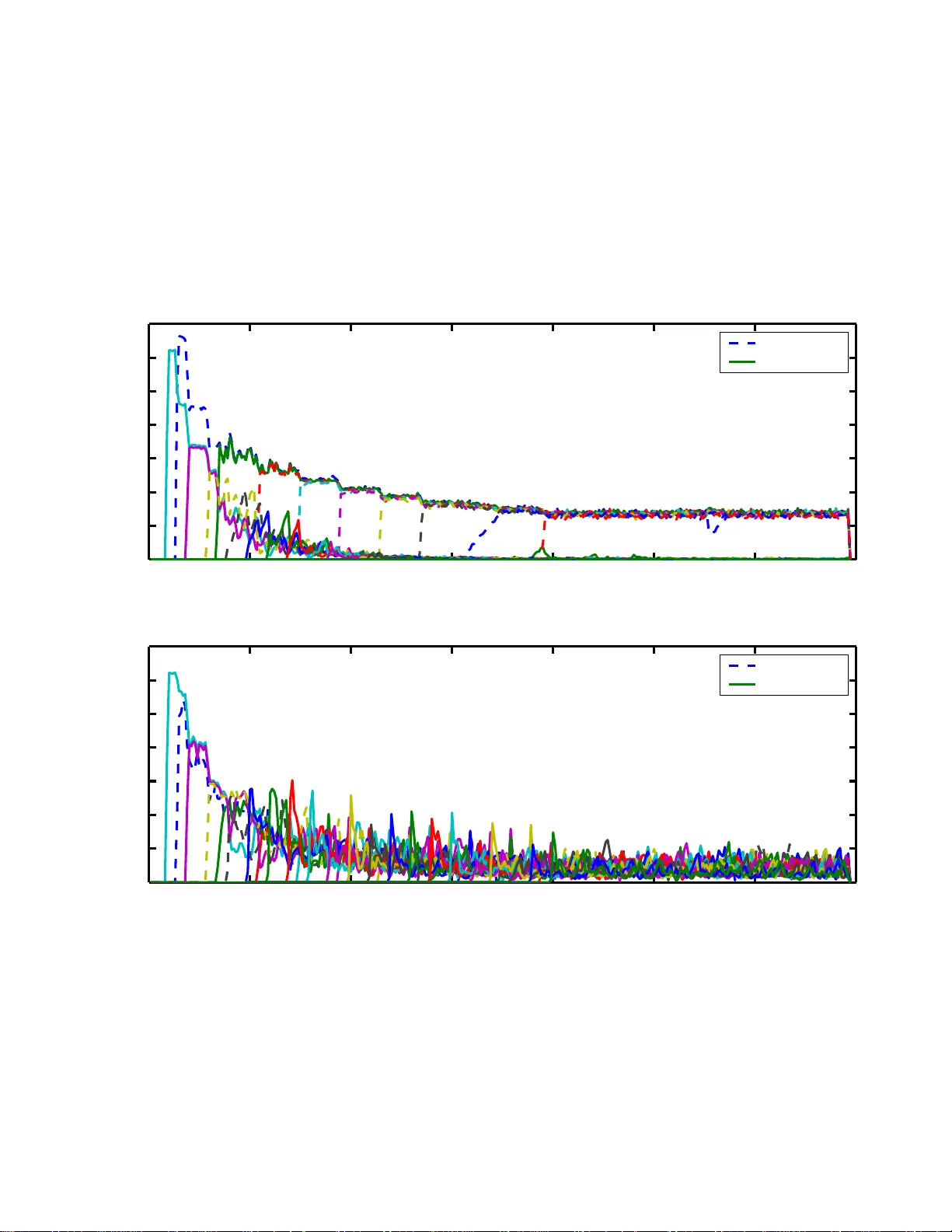

Center for Pervasive Communications and Computing, Department of Electrical Engineering and Computer Science, The Henry Samueli School of Engineering, University of California, Irvine, 92697-2625. Email: {fkeceli, iinan, ayanoglu}@uci.edu

This work is supported by the Center for Pervasive Communications and Computing, and by National Science Foundation under Grant No. 0434928. Any opinions, findings, and conclusions or recommendations expressed in this material are those of authors and do not necessarily reflect the view of the National Science Foundation.

Abstract

When the stations in an IEEE 802.11 infrastructure Basic Service Set (BSS) employ Transmission Control Protocol (TCP) in the transport layer, this exacerbates per-flow unfair access which is a direct result of uplink/downlink bandwidth asymmetry in the BSS. We propose a novel and simple analytical model to approximately calculate the per-flow TCP congestion window limit that provides fair and efficient TCP access in a heterogeneous wired-wireless scenario. The proposed analysis is unique in that it considers the effects of a varying number of uplink and downlink TCP flows, differing Round Trip Times (RTTs) among TCP connections, and the use of the delayed TCP Acknowledgment (ACK) mechanism. Motivated by the findings of this analysis, we design a link layer access control block to be employed only at the Access Point (AP) in order to resolve the unfair access problem. The novel and simple idea of the proposed link layer access control block is employing a congestion control and filtering algorithm on TCP ACK packets of uplink flows, thereby prioritizing the access of TCP data packets of downlink flows at the AP. Via simulations, we show that short- and long-term fair access can be provisioned with the introduction of the proposed link layer access control block to the protocol stack of the AP while improving channel utilization and access delay.

I. INTRODUCTION

In the IEEE 802.11 Wireless Local Area Networks (WLANs), the Medium Access Control (MAC) layer employs the Distributed Coordination Function (DCF), which is a contention-based channel access scheme [1]. The DCF adopts a Carrier Sense Multiple Access with Collision Avoidance (CSMA/CA) scheme using a binary exponential backoff procedure. In DCF, the wireless stations, all using equal contention parameters, have equal opportunity to access the channel. Over a sufficiently long interval, this results in station-based fair access, which can also be referred to as MAC layer fair access. On the other hand, per-station MAC layer fair access does not simply translate into per-flow transport layer fair access in the commonly deployed infrastructure Basic Service Set (BSS), where an Access Point (AP) serves as a gateway between the wired and wireless domains. Since the AP has the same access priority as the wireless stations, the AP's share of the bandwidth, which is approximately equal to the share of a single uplink 802.11 station, is divided among all downlink traffic. This results in a considerable asymmetry between per-flow uplink and downlink bandwidth.

The network traffic is currently dominated by data traffic mainly using Transmission Control Protocol (TCP) in the transport layer. TCP employs a reliable bi-directional communication scheme. The TCP receiver returns TCP ACK packets to the TCP transmitter in order to confirm the successful reception of the data packets.
In the case of multiple uplink and downlink flows in the WLAN, returning TCP ACK packets of upstream TCP data are queued at the AP together with the downstream TCP data packets. When the bandwidth asymmetry in the forward and reverse path builds up the queue in the AP, the dropped packets impair the TCP flow and congestion control mechanisms, which assume equal transmission rate both in the forward and the reverse path [2]. As will be described in more detail in Section II-B, unfair bandwidth allocation is observed not only between uplink and downlink TCP flows but also among individual uplink TCP flows.

A solution for resolving the unfair access problem in the 802.11 BSS for TCP is limiting the TCP packet source rate for all flows such that no packet drops occur at the AP. This simply translates into limiting the maximum congestion window size of each TCP connection. In this paper, we propose a simple analytical method to calculate the TCP congestion window limit that prevents packet drops from the AP queue. The proposed analysis shows that this window limit can be approximated by a simple linear function of the bandwidth of the 802.11 WLAN, the number of uplink and downlink flows, the wired link delay of the TCP connection, the MAC buffer size of the AP, and the number of TCP data packets each TCP ACK packet acknowledges. The proposed analysis is generic in that it considers a varying number of uplink and downlink TCP flows, the use of the delayed TCP ACK algorithm, and varying Round Trip Times (RTTs) among TCP connections. Via simulations, we show that the analytically calculated congestion window setting provides fair access and high channel utilization. As we will also describe, the proposed analysis framework can also be used for buffer sizing at the AP in order to provision fair TCP access.

Motivated by the findings of the proposed analysis and pointing out the potential practical limitations of implementation, we also design a novel link layer access control block to be employed at the AP. The control block manages the limited AP bandwidth intelligently by prioritizing the access of the TCP data packets of downlink flows over the TCP ACK packets of uplink flows. This is achieved by employing a congestion control and filtering algorithm on the TCP ACK packets of uplink flows. The specific algorithm parameters are quantified based on the measured average downlink data transmission rate. We test the performance of the protocol stack enhanced with the proposed access control block in terms of transport layer fairness and throughput via simulations. The simulation results show that fairness and high channel utilization can be maintained in a wide range of scenarios.

The rest of this paper is organized as follows. We illustrate the TCP unfairness problem and provide a brief literature review on the subject in Section II. Section III describes the proposed analytical method to calculate the TCP congestion window limit that prevents packet drops from the AP queue and provides per-flow fair access in the WLAN. Section IV describes the proposed link layer access control block which uses ACK congestion control and filtering for fair access provisioning and evaluates its performance. We provide our concluding remarks in Section V.

II. BACKGROUND

A. TCP Fairness

The congestion avoidance mechanism adopted in TCP can be characterized by an Additive Increase Multiplicative Decrease (AIMD) algorithm [3]. In the congestion avoidance phase, the congestion window is increased by one at every RTT and is decreased by half (multiplicative decrease) when a packet loss is detected.

Consider a simple scenario where two flows share a single bottleneck link whose capacity is $C$ and they have the same RTT. Assume that both flows are operating in the congestion avoidance phase. Let $R_i$ denote the throughput of TCP flow $i$, $i = 1, 2$. In Fig. 1, the x- and y-axes denote the throughput each flow achieves. Fig. 1 also shows the system capacity limit and the fair throughput lines. Initially, suppose that the values of the congestion windows for both flows are such that the throughput pair $(R_1, R_2)$ is achieved, as shown by point $x_0$ in Fig. 1. Note that the process described below is independent of where this initial point lies. Since $R_1 + R_2 < C$, packet losses rarely occur and both flows increase their congestion windows in an additive increase manner. This increase corresponds to the line in Fig. 1 which connects point $x_0$ to point $x_1$. As both flows increase their congestion windows at the same rate, the slope of this line is 1. At point $x_1 = (R'_1, R'_2)$, since $R'_1 + R'_2 > C$, packet losses occur. On detecting the packet loss, both flows decrease their congestion windows in a multiplicative manner, moving to point $x_2 = (R'_1/2, R'_2/2)$. This process of alternating increases and decreases continues. Eventually, the point $(R_1, R_2)$ reaches the fair throughput line and stays on this line. The fluctuation along this line continues without converging to $(0.5C, 0.5C)$. Therefore, the AIMD algorithm can provide fair bandwidth sharing at the expense of oscillations in the throughput.

The AIMD algorithm can be represented in the following generalized form:

$$W[n] = \begin{cases} W[n-1] + \alpha, & \text{if AI} \\ \beta \, W[n-1], & \text{if MD} \end{cases} \tag{1}$$

where $W[n]$ denotes the congestion window value at the $n$th transmission round, $\alpha > 0$, and $0 < \beta < 1$. For TCP, the additive increase and the multiplicative decrease factors, $\alpha$ and $\beta$ in (1), are set to 1 and 0.5, respectively, as described previously.

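
The two-flow trajectory described for Fig. 1 can be reproduced with a few lines of Python. This is only an illustrative sketch, not the simulation setup of the paper: the function name `aimd_two_flows`, the synchronized loss model (both flows back off in the same round whenever the sum of their rates exceeds the capacity), and the starting rates are assumptions made for the example.

```python
def aimd_two_flows(r1, r2, capacity, alpha=1.0, beta=0.5, rounds=200):
    """Iterate the generalized AIMD rule of (1) for two flows.

    Assumption (not from the paper): losses are perfectly synchronized,
    i.e. both flows apply the multiplicative decrease in any round in
    which r1 + r2 exceeds the bottleneck capacity.
    Returns the list of (r1, r2) points visited, starting with x0.
    """
    history = [(r1, r2)]
    for _ in range(rounds):
        if r1 + r2 > capacity:
            # Multiplicative decrease: the gap between the flows shrinks by beta.
            r1, r2 = beta * r1, beta * r2
        else:
            # Additive increase: both rates grow equally, the gap is unchanged.
            r1, r2 = r1 + alpha, r2 + alpha
        history.append((r1, r2))
    return history


if __name__ == "__main__":
    # Hypothetical, deliberately unfair starting point x0.
    trace = aimd_two_flows(r1=5.0, r2=60.0, capacity=100.0)
    initial_gap = abs(trace[0][0] - trace[0][1])
    final_gap = abs(trace[-1][0] - trace[-1][1])
    print(f"gap between the flows: {initial_gap:.2f} at start, {final_gap:.4f} at end")
```

Each additive step leaves the difference between the two rates unchanged, while each multiplicative step scales it by $\beta$, which is why the operating point drifts toward the fair throughput line while still oscillating along it.
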
A higher value of $\alpha$ increases the convergence rate to the fair throughput. Similarly, a higher value of $\beta$ reduces the oscillations in the throughput after the fair share is achieved.

If our assumption that all the flows have the same RTT does not hold, the AIMD algorithm cannot assure fairness. A flow with a smaller RTT updates its congestion window quickly and tends to get more bandwidth compared to a flow with a larger RTT. Thus, the TCP congestion control shows unfairness among flows with different RTTs.

B. TCP Unfairness in the 802.11 WLAN

In the 802.11 WLAN, a bandwidth asymmetry exists between contending upload and download flows. This is due to the fact that the MAC layer contention parameters are all equal for the AP and the stations. If $N$ stations and an AP are always contending for access to the wireless channel (saturation¹), each host ends up having approximately a $1/(N+1)$ share of the total transmit opportunities over a long time interval. This results in $N/(N+1)$ of the transmissions being in the uplink, while only $1/(N+1)$ of the transmissions belong to the downlink flows.

¹ Saturation is the limit reached by the system when each station always has a packet to transmit. Conversely, in nonsaturation, the (nonsaturated) stations experience idle times since they sometimes have no packet to send.

This bandwidth asymmetry in the forward and reverse path may build up the AP queue, resulting in packet drops. As previously stated, upstream TCP ACKs and downstream TCP data are queued at the AP together. Any TCP data packet that is dropped from the AP buffer is retransmitted by the TCP sender following a timeout or the reception of duplicate ACKs. Conversely, any received TCP ACK can cumulatively acknowledge all the data packets sent before the data packet for which the ACK is intended, i.e., a subsequent TCP ACK can compensate for the loss of the previous TCP ACK.
When the packet loss is severe in the AP buffer, the downstream flows will experience frequent timeouts and their congestion window sizes decrease, resulting in significantly low throughput. On the other hand, due to the cumulative property of the TCP ACK mechanism, upstream flows with large congestion windows will not experience such frequent timeouts. In the latter case, it is unlikely that many consecutive TCP ACK losses occur for the same flow. Conversely, the upstream flows with small congestion windows (fewer packets currently in flight) may also experience timeouts and decrease their congestion windows even more. Therefore, a number of upstream flows may starve in terms of throughput while some other upstream flows enjoy a high throughput. In summary, the uplink/downlink bandwidth asymmetry creates congestion at the AP buffer, which results in unfair TCP access.

In the first set of experiments, we show that the AIMD congestion avoidance algorithm of TCP leads to fair access when the bandwidth asymmetry problem between the forward and backward links does not exist. We consider a scenario consisting of 15 downlink TCP connections. Each connection is initiated between a separate wireless station and a separate wired station where an AP is the gateway between the WLAN and the wired network. Each station runs a File Transfer Protocol (FTP) session over TCP. Each station uses the 802.11g physical layer (PHY) with the PHY data rate set to 54 Mbps, while the wired link data rate is 100 Mbps. The default DCF MAC parameters are used [1]. The AP buffer size is 100 packets. The receiver advertised congestion window limits are set to 42 packets for each flow. Note that the scale on the buffer size and TCP congestion window limit is inherited from [14].
Although the practical limits may be larger, the unfairness problem exists as long as the ratio of the buffer size to the congestion window limit is not arbitrarily large (which is not the case in practice). The packet size is 1500 bytes for all flows. Fig. 2 shows the average throughput for each downlink TCP connection. The results show that the unfair access problem does not exist if there are no coexisting uplink connections (which limit the forward link bandwidth for downlink connections due to the 802.11 uplink/downlink bandwidth asymmetry as described previously). The results illustrate the fair behavior of TCP's AIMD congestion avoidance algorithm.

When a similar experiment is repeated for the scenario consisting of only uplink TCP connections, the outcome is very different. Fig. 3 shows the average throughput for each uplink TCP connection in a scenario consisting of 15 uplink TCP connections. The results illustrate the unfairness in the throughput achieved by the uplink FTP flows when the backward link bandwidth for TCP ACKs is limited. Eight of the TCP flows starve in terms of throughput as a result of frequent ACK packet losses in the backward link at the AP buffer. As described previously in this section, an ACK packet drop at the AP buffer more likely results in a congestion window decrease when the flow has a small congestion window (i.e., a new connection, or a connection recovering from a recent timeout, etc.). Conversely, a flow with a larger congestion window size may not be affected because of the cumulative ACK feature of TCP. As the comparison of Fig. 2 and Fig. 3 clearly shows, the cumulative nature of TCP ACKs affects the fair share of the bandwidth significantly when the bandwidth asymmetry between the forward and backward links exists.

In the second set of experiments, we show the uplink/downlink bandwidth asymmetry for DCF and how this is exacerbated if TCP is employed.
We use the same simulation parameters as in the previous experiment. Fig. 4 shows the total TCP throughput in the downlink and the uplink when there are 10 download TCP connections and the number of upload TCP connections is varied from 0 to 10. The unfairness problem between upstream and downstream TCP flows is evident from the results. For example, in the case of 2 upload connections, 10 download TCP connections share a total bandwidth of 6.09 Mbps, while 2 upload TCP connections enjoy a larger total bandwidth of 9.62 Mbps. As the number of upload connections increases, the download TCP connections are almost shut down.

C. Literature Overview

The studies in the literature on the unfair access problem in the 802.11 WLAN can mainly be classified into two groups.

The first group mainly proposes access parameter differentiation between the AP and the stations to combat the problem. Distributed algorithms for achieving MAC layer fairness in 802.11 WLANs are proposed in [4], [5]. Several studies propose using the traffic category-based MAC prioritization schemes of the IEEE 802.11e standard [6], mainly designed for Quality-of-Service (QoS) provisioning, for uplink/downlink direction-based differentiation in order to improve fairness and channel utilization [7]–[11]. Algorithms that study enhancements on the backoff procedure for fairness provisioning are proposed in [12], [13]. Although MAC parameter differentiation, adaptation, and backoff procedure enhancements can be effective in fair access provisioning, 802.11 hardware (Network Interface Cards (NICs), APs, etc.) without these capabilities is still widely deployed. Therefore, in this paper, we focus on techniques that do not require any changes in the 802.11 standard or in the non-AP stations and can directly be implemented via simple software modules in the AP protocol stack.

The second group focuses on designing higher layer solutions such as employing queue management, packet filtering schemes, etc., especially for TCP. The TCP uplink and downlink asymmetry problem in the IEEE 802.11 infrastructure BSS is first studied in [14]. The proposed solution of [14] is to manipulate advertised receiver windows of the TCP packets at the AP. In this paper, we propose a simple analytical model to calculate the congestion window limit of TCP flows for the generic case of delayed TCP ACK schemes and varying RTTs among TCP connections. The results of the proposed analysis can be used in the same way as proposed in [14] for fair and efficient access provisioning. Per-flow queueing [15] and per-direction queueing [16] algorithms where distinct queues access the medium with different probabilities are designed for fair access provisioning. A rate-limiter block which filters data packets both in the uplink and the downlink using instantaneous WLAN bandwidth estimations is proposed in [17]. Differing from all of these techniques, in our previous work, we proposed using congestion control and filtering techniques on top of the MAC queue to solve the TCP uplink unfairness problem [18]. The work presented in this paper proposes a novel congestion control and filtering technique which also considers the TCP downlink traffic. Note that since TCP downlink traffic load is expected to be larger than the uplink traffic load, this enhancement is vital for a practical implementation.

An extensive body of work exists relating to the impact of asymmetric paths on TCP performance [19], [20] in the wired link context. The effects of ACK congestion control on the performance of an asymmetric network are analyzed in [21] for wired scenarios consisting of only one or two simultaneous flows.
The effects of forward and backward link bandwidth asymmetry have been analyzed in [22] for a wired scenario consisting of only one flow. Similar effects are also observed in practical broadband satellite networks [23]. The effects of delayed acknowledgements and byte counting on TCP performance are studied in [24]. Several schemes are analyzed in [25] for improving the performance of two-way TCP traffic over asymmetric links where the bandwidths in the two directions differ substantially. The ACK compression phenomenon that occurs due to the dynamics of two-way traffic using the same buffer is presented in [26].

In this paper, we design a novel ACK congestion control and filtering algorithm to be implemented as a link layer access control block in the protocol stack at an 802.11 AP. The congestion control and filtering algorithm is unique in that the parameters of the algorithm are quantified according to the TCP access characteristics in an 802.11 infrastructure BSS.

III. TCP FAIRNESS ANALYSIS

The TCP unfairness problem originating from the uplink/downlink access asymmetry can be resolved if packet drops at the AP buffer are prevented, such as in the unrealistic case of an infinitely long AP queue. In this case, the congestion windows of all TCP flows, whether in the downlink or the uplink, reach the receiver advertised congestion window limit and stay at this value. This results in fair access despite the fact that the MAC layer access is asymmetric in the 802.11 infrastructure BSS, as described in Section II-B. As the infinitely long queue assumption is unrealistic, the exact same result of no packet drops can be achieved if TCP senders are throttled by limiting the number of packets in flight, i.e., the TCP congestion windows are assigned according to the available AP bandwidth in the downlink.

In this section, we propose a simple and novel analytical model to calculate the maximum congestion window limit of each TCP flow that prevents packet losses at the AP buffer and therefore provides fair and efficient TCP access in the BSS.

Each random access system exhibits cyclic behavior. The cycle time is defined as the duration in which an arbitrary tagged station successfully transmits one packet on average. Our analytical method for calculating the TCP congestion window limit that achieves fair and efficient access is based on the cycle time analysis previously proposed for 802.11 MAC performance modeling [27], [28]. The simple cycle time analysis assesses the asymptotic performance of the DCF accurately (when each contending AC always has a packet in service). We use the approach in [27] to derive the explicit mathematical expression for the average DCF cycle time when necessary. In Section III-B, we will describe the necessary extensions to employ the cycle time analysis in the proposed analysis. Due to space limitations, the reader is referred to [27], [28] for details on the derivation of the cycle time.

We consider a typical network topology where a TCP connection is initiated between a wireless station and a wired station either in the downlink or the uplink of the WLAN. The WLAN traffic is relayed to the wired network through the AP and vice versa. Let Round Trip Time (RTT) denote the average length of the interval from the time a TCP data packet is generated until the corresponding TCP ACK packet arrives. RTT is composed of three main components as follows.

• Wired Link Delay ($LD$): The flow-specific average propagation delay of the packet between the AP and the wired node.
• Queueing Delay ($QD$): The average delay experienced by a packet at the wireless station buffer until it reaches the head of the queue. Note that due to the unequal traffic load at the AP and the stations, $QD_{AP}$ and $QD_{STA}$ may differ substantially.
• Wireless Medium Access Delay ($AD$): The average access delay experienced by a packet from the time it reaches the head of the MAC queue until the transmission is completed successfully.

Then, RTT is calculated as follows².

$$RTT = 2 \cdot LD + QD_{AP} + QD_{STA} + AD_{AP} + AD_{STA} \tag{2}$$

² RTT is calculated as in (2) irrespective of the direction of the TCP connection. On the other hand, specific values of $AD$ and $QD$ depend on the packet size, the number of contending stations, etc. Therefore, the RTT of an uplink connection may differ from the RTT of a downlink connection.

For the first part of the analysis, each TCP data packet is assumed to be acknowledged by a TCP ACK packet. This assumption is later relaxed and the delayed TCP ACK algorithm is considered.

We claim that if the system is to be stabilized at a point such that no packet drops occur at the AP queue, then the following conditions should hold.

• All the non-AP stations are in nonsaturated condition. Let us assume a station has $X$ packets (TCP data or ACK) in its queue. A new packet is generated only if the station receives packets (TCP ACK or data) from the AP (as a result of the ACK-oriented rate control of TCP). Let $Y > 1$ users be active. Every station (including the AP) sends one packet successfully every cycle time [27]. In the stable case, while the tagged station sends $Y$ packets every $Y$ cycle times, it receives only one packet. Note that the AP also sends $Y$ packets during $Y$ cycle times, but on average, $Y - 1$ of these packets are destined to stations other than the tagged one. Therefore, after $Y$ cycle times, the tagged station's queue size will drop to $X - Y + 1$. Since $Y > 1$, the tagged station's queue will eventually become empty. A new packet will only be created when the AP sends a TCP packet to the tagged station, and this packet will be served before the station receives another packet (on average). This proves that all the non-AP stations are in nonsaturated condition if no packet losses occur at the AP.
• The AP contends with at most one station at a time on average. Following the previous claim, a non-AP station (which is nonsaturated) can have a packet ready for transmission only if the AP has previously sent a packet to the station. There may be transient cases where the instantaneous number of active stations becomes larger than 1. On the other hand, as we have previously shown, when $Y > 1$, the queue at any non-AP station eventually empties. If we assume the transient duration is very short, the number of actively contending stations on average is one. Therefore, at each DCF cycle time, the AP and a distinct station will transmit a packet successfully.

We define $CT_{AP}$ as the duration of the average cycle time during which the AP sends an arbitrary packet (TCP data or ACK) successfully. We will derive $CT_{AP}$ in Section III-B. Let the average duration between two successful packet transmissions of an arbitrary flow at the AP (or at the non-AP station) be $CT_{flow}$. Assuming there are $n_{up}$ upload and $n_{down}$ download TCP connections, we make the following approximation based on our claims that the AP contends with one station on average and that the TCP access will be fair if no packet drops are observed at the AP buffer:

$$CT_{flow} \cong (n_{up} + n_{down}) \cdot CT_{AP}. \tag{3}$$

As will be shown by comparison with simulation results in Section III-E, the approximation in (3) leads to accurate analytical results. Then, the throughput of each station (whether it is running an uplink or a downlink TCP connection) is limited by $1/CT_{flow}$ (in terms of packets per second).
We can also write the TCP throughput as $W_{lim}/RTT$, where we define $W_{lim}$ as the TCP congestion window limit for a TCP connection. Following our previous claims, $QD_{STA} = 0$ (the stations are nonsaturated), $QD_{AP} = (BS_{AP} - 1) \cdot CT_{AP}$ (we consider the limiting case when the AP buffer is full, but no packet drop is observed), and $AD_{AP} + AD_{STA} = CT_{AP}$ (the AP contends with one station on average), where $BS_{AP}$ is the buffer size of the AP MAC queue. Substituting these terms into (2) gives $RTT = 2 \cdot LD + BS_{AP} \cdot CT_{AP}$. Using $1/CT_{flow} = W_{lim}/RTT$ together with (3), we find

$$W_{lim} = \frac{2 \cdot LD}{CT_{flow}} + \frac{BS_{AP}}{n_{up} + n_{down}}. \tag{4}$$

Note that $CT_{flow}$ is an indication of the bandwidth at the bottleneck (at the AP). If the data rate exceeds this bandwidth, the excess data will be queued at the AP, eventually overflowing the AP buffer. We calculate $W_{lim}$ considering a full AP buffer; therefore, $W_{lim}$ is the maximum congestion window limit for a TCP connection that prevents packet drops at the AP queue of size $BS_{AP}$.

We can make the following observations from (4).

• $W_{lim}$ is a function of $LD$. Therefore, $W_{lim}$ is flow-specific and varies among connections with different $LD$.
• The first term is the effective number of packets that are in flight in the wired link for any flow, while the second term is the number of packets that are in the AP buffer for the same flow.

A. Delayed TCP Acknowledgements

In the delayed TCP ACK mechanism, the TCP receiver acknowledges every $b$ TCP data packets ($b > 1$). A typical value (widely used in practice) is $b = 2$. The use of the delayed TCP ACK mechanism changes the system dynamics. Nevertheless, we still employ our assumption that the AP contends with one station at a time on average to calculate $CT_{AP}$. As will be shown by comparison with simulation results in Section III-E, this assumption still leads to accurate analytical results. We update (3) and (4) accordingly for delayed TCP acknowledgments.
Let the average duration between two successful packet transmissions of the flow at the non-AP station be $CT_{flow,del}$ when the delayed TCP acknowledgment mechanism is used. Each uplink flow completes the successful transmission of $b$ packets in an interval of average length $b \cdot CT_{flow,del}$. When the access is fair, the AP transmits $b$ data packets for each downlink flow (i.e., a total of $b \cdot n_{down}$ data packets), and one ACK packet for each uplink flow (i.e., a total of $n_{up}$ ACK packets) during the same interval. Then,

$$CT_{flow,del} \cong \left( \frac{n_{up}}{b} + n_{down} \right) \cdot CT_{AP} \tag{5}$$

$$W_{lim} = \frac{2 \cdot LD}{CT_{flow,del}} + \frac{BS_{AP}}{n_{up}/b + n_{down}} \tag{6}$$

B. Calculating $CT_{AP}$

We are interested in the case when there are two active (saturated) stations (as the AP contends with one station at a time). The average cycle time in this scenario can easily be calculated using the model in [27]. In our case, the AP sends the TCP ACK packets of the uplink TCP connections and the TCP data packets of the downlink TCP connections, which contend with the TCP ACK packets of the downlink TCP connections and the TCP data packets of the uplink connections that are generated at the stations. Note that the cycle time varies according to the packet size of the contending stations. Then,

$$CT_{AP} = \sum_{p_1 \in S} \Pr(p_{AP} = p_1) \sum_{p_2 \in S} \Pr(p_{STA} = p_2) \, CT_{p_1,p_2} \tag{7}$$

where $S = \{ACK, DATA\}$ is the set of different types of packets, $\Pr(p_{AP} = p_1)$ is the probability that the AP is sending a packet of type $p_1$, $\Pr(p_{STA} = p_2)$ is the probability that the non-AP station is sending a packet of type $p_2$, and $CT_{p_1,p_2}$ is the average cycle time when one station is using a packet of type $p_1$ and the other is using a packet of type $p_2$. We differentiate between the data and the ACK packets because the size of the packets, and thus the cycle time duration, depends on the packet type.
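
As a numerical illustration of (5) and (6), the following Python sketch evaluates the window limit for a given AP cycle time. It is a minimal sketch under stated assumptions: the function names are hypothetical, and the value of `ct_ap` used in the example (0.5 ms) is a placeholder chosen for readability, not a value taken from the paper or from the model in [27].

```python
def flow_cycle_time(ct_ap, n_up, n_down, b=1):
    """Eq. (5): average time between two successful packets of one flow.

    With b = 1 (every data packet is acknowledged) this reduces to Eq. (3).
    ct_ap is the average AP cycle time in seconds.
    """
    return (n_up / b + n_down) * ct_ap


def window_limit(link_delay, ct_ap, bs_ap, n_up, n_down, b=1):
    """Eq. (6): W_lim = 2*LD / CT_flow,del + BS_AP / (n_up/b + n_down).

    link_delay is the one-way wired link delay LD in seconds and bs_ap
    is the AP buffer size in packets. With b = 1 this reduces to Eq. (4).
    """
    ct_flow = flow_cycle_time(ct_ap, n_up, n_down, b)
    in_flight_on_wire = 2.0 * link_delay / ct_flow      # first term of (6)
    share_of_ap_buffer = bs_ap / (n_up / b + n_down)    # second term of (6)
    return in_flight_on_wire + share_of_ap_buffer


if __name__ == "__main__":
    # Hypothetical inputs: LD = 50 ms, BS_AP = 100 packets, 5 uplink and
    # 5 downlink flows, and an assumed (not measured) CT_AP of 0.5 ms.
    for b in (1, 2):
        w = window_limit(link_delay=0.05, ct_ap=0.0005, bs_ap=100,
                         n_up=5, n_down=5, b=b)
        print(f"b = {b}: W_lim = {w:.2f} packets")
```

The result is generally not an integer; how it is rounded before being used as a window limit is a design choice that the sketch leaves open.
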
Using simple probability theory, we can calculate $\Pr(p_{AP})$ and $\Pr(p_{STA})$ as follows:

$$\Pr(p_{AP} = p_1) = \begin{cases} \dfrac{n_{down}}{n_{up}/b + n_{down}}, & \text{if } p_1 = DATA \\[2ex] \dfrac{n_{up}/b}{n_{up}/b + n_{down}}, & \text{if } p_1 = ACK, \end{cases} \tag{8}$$

$$\Pr(p_{STA} = p_2) = \begin{cases} \dfrac{n_{up}}{n_{down}/b + n_{up}}, & \text{if } p_2 = DATA \\[2ex] \dfrac{n_{down}/b}{n_{down}/b + n_{up}}, & \text{if } p_2 = ACK. \end{cases} \tag{9}$$

C. Fair Congestion Window Assignment (FCWA)

A control block located at the AP can overwrite the advertised receiver window field of the ACK packets, all of which are relayed through the AP, with the $W_{lim}$ value calculated using the proposed model. Therefore, we call this procedure Fair Congestion Window Assignment (FCWA).

The analysis requires accurate estimates of $LD$ and $b$. The control block may distinguish among TCP connections via the IP addresses and the ports they use. An averaging algorithm can be used to calculate the average time that passes between sending a data (ACK) packet into the wired link and receiving the ACK (data) packet which is generated by the reception of the former packet (which is $2 \cdot LD$). The TCP header of consecutive ACK packets may be parsed to figure out the value of $b$.

It is also worth noting that although the analytical calculation uses a simple cycle time method in calculating $CT_{AP}$ and $CT_{flow}$, the AP may use a measurement-based technique rather than the model-based technique used in this paper.

D. Buffer Sizing

The proposed analysis can also directly be used for buffer sizing purposes. The 802.11 vendors may use the proposed method with statistics of TCP connections and WLAN traffic to decide on a good size of the AP buffer that would provide fair TCP access.

$$BS_{AP} = \left( W_{lim} - \frac{2 \cdot LD}{CT_{flow}} \right) \cdot \left( \frac{n_{up}}{b} + n_{down} \right) \tag{10}$$

E. Performance Evaluation

We validate the analytical results obtained from the proposed model by comparing them with the simulation results obtained from ns-2 [29].

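
Before turning to the simulation setup, the packet-type mixture of (7)-(9) and the buffer sizing rule (10) can be sketched in Python as follows. This is a hedged illustration only: the per-pair cycle times $CT_{p_1,p_2}$ come from the model in [27], which is not reproduced here, so the sketch takes them as an input dictionary `ct`, and all function names are hypothetical.

```python
def ap_probs(n_up, n_down, b=1):
    """Eq. (8): probability that the packet sent by the AP is DATA or ACK."""
    denom = n_up / b + n_down
    return {"DATA": n_down / denom, "ACK": (n_up / b) / denom}


def sta_probs(n_up, n_down, b=1):
    """Eq. (9): probability that the packet sent by the station is DATA or ACK."""
    denom = n_down / b + n_up
    return {"DATA": n_up / denom, "ACK": (n_down / b) / denom}


def average_ap_cycle_time(ct, n_up, n_down, b=1):
    """Eq. (7): CT_AP as the probability-weighted mix of per-pair cycle times.

    ct maps (AP packet type, station packet type) to CT_{p1,p2} in seconds.
    These values must be supplied by the caller (e.g. from the model in [27]
    or from measurements); they are not computed here.
    """
    p_ap = ap_probs(n_up, n_down, b)
    p_sta = sta_probs(n_up, n_down, b)
    return sum(p_ap[p1] * p_sta[p2] * ct[(p1, p2)]
               for p1 in p_ap for p2 in p_sta)


def buffer_size(w_lim, link_delay, ct_flow, n_up, n_down, b=1):
    """Eq. (10): AP buffer size (in packets) that supports a given W_lim."""
    return (w_lim - 2.0 * link_delay / ct_flow) * (n_up / b + n_down)


if __name__ == "__main__":
    # Placeholder cycle times in seconds, for illustration only.
    ct = {("DATA", "DATA"): 9e-4, ("DATA", "ACK"): 6e-4,
          ("ACK", "DATA"): 6e-4, ("ACK", "ACK"): 3e-4}
    ct_ap = average_ap_cycle_time(ct, n_up=10, n_down=5, b=2)
    print(f"CT_AP = {ct_ap * 1e3:.3f} ms")
```

Note that (10) is simply (4) or (6) solved for $BS_{AP}$, so a buffer size obtained this way reproduces the window limit it was derived from.
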
We obtained $W_{lim}$ via simulations in such a way that increasing the TCP congestion window limit of TCP connections by one results in a packet loss ratio larger than 1% at the AP buffer. As previously stated, the network topology is such that each wireless station initiates a connection with a wired station and the traffic is relayed to/from the wired network through the AP. The TCP traffic uses a File Transfer Protocol (FTP) agent which models bulk data transfer. TCP NewReno with its default parameters in ns-2 is used. All the stations have 802.11g PHY [30] with 54 Mbps and 6 Mbps as the data and the basic rate, respectively. The wired link data rate is 100 Mbps. The default DCF MAC parameters are used [1]. The packet size is 1500 bytes for all flows. The MAC buffer size at the stations and the AP is set to 100 packets.

In the first set of experiments, we set the wired link delay of each connection to 50 ms. Each TCP data packet is acknowledged by an ACK packet ($b = 1$). In Fig. 5, we compare the estimation of (4) on the congestion window limit with the values obtained from the simulation results and the proposed method of [14] for an increasing number of TCP connections. The number of upload flows is equal to the number of download flows. As Fig. 5 implies, the analytical results for FCWA and the simulation results are in good agreement. The analysis in [14] calculates the congestion window limit by $BS_{AP} / (n_{up} + n_{down})$ and underestimates the actual fair TCP congestion window limit. The total throughput of the system when the TCP connections employ analytically calculated congestion window limits in simulation for an increasing number of TCP connections is shown in Fig. 6. As the comparison with [14] reveals, the congestion window limits calculated via FCWA result in approximately 35%-50% higher channel utilization for the specific scenario.
Although the corresponding results are not displayed, both methods achieve perfect fairness in terms of per-connection FTP throughput (Jain's fairness index [31], $f > 0.9999$, where 1 shows perfect fairness).

In the second set of experiments, we consider a scenario where wired link delays ($LD$) among TCP connections differ. The first TCP connection has a 1 ms wired link delay, and the $n$th connection has a wired link delay $n$ ms larger than that of the $(n-1)$th connection. Fig. 7 shows the individual throughput of each TCP connection for FCWA and [14] for 4 different scenarios. In Fig. 7, for any scenario, the first half are upload flows and the rest are download flows. As the results show, the congestion window limits calculated by the proposed model maintain fair access even in the case of varying wired link delays. On the other hand, the method proposed in [14] fails to do so.

In the third set of experiments, we consider a scenario where the TCP connections use the delayed ACK mechanism with $b = 2$. We consider 9 different scenarios, where in each scenario the number of uplink and downlink TCP connections varies. In the first three scenarios, the number of downlink flows is set to 5 and the number of uplink flows is varied among 5, 10, and 15, respectively. Varying the number of uplink flows in the same range, the next three scenarios use 10, and the following three scenarios use 15 downlink flows. In Fig. 8, we compare the estimation of (6) on the congestion window limit with the values obtained from the simulation results and the proposed method of [14]. The analytical results for the proposed model and the simulation results are in good agreement. The total throughput of the system when the TCP connections employ the analytically calculated congestion window limits is shown in Fig. 9.
As the comparison with [14] reveals, the congestion window limits calculated via our method result in approximately 90%-105% higher channel utilization. Although the corresponding results are not presented, the congestion window limits calculated by both the proposed method and the method of [14] achieve perfectly fair resource allocation in terms of throughput (Jain's fairness index, $f > 0.998$). On the other hand, the proposed FCWA method results in a significantly higher channel utilization.

IV. LINK LAYER ACCESS CONTROL BLOCK

As illustrated in Section II-B, the unfair access problem originates from the uplink/downlink bandwidth asymmetry in the 802.11 BSS. As our analysis in Section III shows, fair access can be achieved if the congestion window limits of the downlink and the uplink TCP sources are set according to the network bandwidth so that no packet drops occur at the AP buffer. In fact, for the default DCF scenario when only downlink flows are present, the data packet drops at the AP buffer implicitly and effectively throttle the downlink TCP sources (i.e., TCP access is fair among downlink flows in this case, as we also show in [32] via simulations). Conversely, the coexistence of uplink flows shuts down the downlink, as some uplink flows are fortunate enough to reach a high congestion window by making use of the cumulative property of TCP ACKs. The TCP ACKs of uplink flows occupy most of the AP buffer, which results in data packet drops for downlink flows.

These observations motivate the approach of our novel idea: Prioritize TCP data packets of downlink flows over TCP ACK packets of uplink flows at the AP MAC buffer. We design a novel link layer access control block which employs an ACK Congestion Control and Filtering (ACCF) scheme.
The proposed ACCF scheme delays the TCP ACK packets of uplink flows (using a separate control block buffer) according to the measured average packet interarrival time of the downlink TCP data packets. In other words, the downlink data to uplink ACK prioritization ratio is quantified by estimating what the uplink ACK transmission rate should be for the given average downlink TCP data transmission rate. The rationale behind the proposed method is sending the TCP ACKs of uplink connections only as often as the TCP data of downlink connections are sent.

The proposed ACCF algorithm uses the cumulative property of TCP ACKs by employing ACK filtering. If another ACK packet of flow $i$ is received while there is an ACK packet of flow $i$ in the control block buffer, the previous ACK in the buffer is replaced with the new one. Our rationale behind introducing ACK filtering is to reduce the number of ACK packets transmitted by the AP. This creates more room in the AP buffer for TCP data packets of downlink flows (which in turn decreases the TCP data packet loss ratio). Moreover, filtering ACK packets also slows the growth rate of the TCP congestion windows of uplink flows (since the TCP senders receive ACK packets less frequently), which further limits the share of the uplink bandwidth.

We define the following notation for the description of the algorithm provided in the sequel. Let $num_{cum,i}$ be the current number of accumulated ACKs for flow $i$ in the access control block buffer. Let $t_{buf,i}$ denote the total time that has passed since the last TCP ACK for flow $i$ was sent to the MAC queue. Let $\beta$ be a constant weighing factor and $\gamma$ be a variable weighing factor which is a function of $num_{cum,i}$. Let $AvgInt_i$ be the measured average packet interarrival time for flow $i$.³
Let $AvgDataInt$ be the average downlink data interarrival duration, which we use in deciding how frequently the ACKs of uplink flows should be sent down to the MAC queue for transmission. We calculate $AvgDataInt$ by taking the mean of $AvgInt$ of the downlink flows with $AvgInt < \alpha \cdot \min(AvgInt_j : \forall j \text{ in downlink})$, where $\alpha > 1$ is a constant. Note that this averaging calculation excludes the TCP sources with packet interarrivals higher than a threshold (as quantified by $\alpha$) in order to prevent slow downlink flows from unnecessarily limiting the frequency of uplink ACKs, and therefore the uplink bandwidth.

³ $AvgInt_i$ denotes the average TCP data packet interarrival time if flow $i$ is a downlink TCP flow and the average TCP ACK packet interarrival time if flow $i$ is an uplink TCP flow. $AvgInt_i$ can be calculated by employing simple averaging methods (such as the exponential moving averaging that we employed for uplink measurements in [18]) on periodic measurement results.

According to the proposed ACCF algorithm, the TCP ACKs are scheduled for transmission (sent down to the MAC queue) such that the average per-flow ACK rate does not exceed the average per-flow TCP downlink packet rate. Using this idea, we quantify the control queue buffering time for each ACK packet of uplink flow $i$ as $D_i = \gamma \cdot num_{cum,i} \cdot AvgDataInt - t_{buf,i}$. The rationale behind this equation is as follows.
• We consider the cumulative number of ACK packets that the currently buffered ACK packet represents. The transmission of an accumulated ACK packet is expected to trigger the generation of $num_{cum,i}$ data packets in the uplink. Therefore, any accumulated TCP ACK packet is delayed until that many TCP downlink data transmissions can be made on average ($num_{cum,i} \cdot AvgDataInt$).
• If a few consecutive timeouts are experienced when the TCP congestion window is small, the uplink TCP flow may hardly recover, and consequently may suffer from low throughput (as we also observed via simulations). Therefore, we introduce an adaptive weighing factor $\gamma_{min} \leq \gamma \leq 1$ in the minimum buffering duration. We use the value of $num_{cum,i}$ as an indication of the current size of the TCP congestion window of the corresponding flow. The value of $\gamma$ is set smaller than 1 when $num_{cum,i}$ is smaller than a threshold, $num_{thresh}$. The idea is to prevent longer delays at the control block buffer, and thus possible timeouts at the TCP agent at the station, if the uplink TCP connection is expected to have a small instantaneous congestion window (e.g., a recently initiated TCP connection).
• We subtract $t_{buf,i}$ from $\gamma \cdot num_{cum,i} \cdot AvgDataInt$ in order to make the duration of the interval between two consecutive ACKs sent down to the MAC buffer approximately equal to $num_{cum,i} \cdot AvgDataInt$ (in the case $num_{cum,i} > num_{thresh}$).

As we have also observed via simulations, the ACK filtering scheme makes the ACK arrivals to the AP queue bursty [2]. For an arbitrary uplink flow, this behavior corresponds to alternating idle times with no packet arrivals and active times consisting of a bunch of highly frequent ACK arrivals to the AP queue. This bursty behavior may result in $t_{buf,i} > \gamma \cdot num_{cum,i} \cdot AvgDataInt$ (probably when the corresponding idle duration is long), and therefore $D_i < 0$, especially for the first few ACK arrivals at the AP queue following an idle time for the corresponding flow. Note that the case of $D_i < 0$ actually translates into the case of the ACK being already due for transmission. In this case, our design takes one of two alternative actions depending on the value of $D_i$ as follows.
• $D_i + \beta \cdot AvgInt_i < 0$: This serves as an indication that the last ACK pass to the MAC queue was probably done within the previous burst. Although the ACK transmission is due ($D_i < 0$), an immediate pass to the MAC queue punishes uplink throughput unnecessarily, as $t_{buf,i}/num_{cum,i}$ (which is an indication of the average data transmission interval for uplink flow $i$) is much larger than $AvgDataInt$. In this case, the ACK packet of flow $i$ is delayed for a duration equal to $D'_i = \beta \cdot AvgInt_i$ in the control block queue (counting on the high probability of further ACK arrivals in the current burst). Our intuition behind the calculation of $D'_i$ is the possibility of the next ACK of the same flow arriving within an average interarrival time $AvgInt_i$. We also introduce the constant weighing factor $\beta > 1$ in order to compensate for the potential variance of the instantaneous ACK interarrival time. A new ACK arrival will probably decrease $t_{buf,i}/num_{cum,i}$, taking it closer to $AvgDataInt$.
• $D_i + \beta \cdot AvgInt_i > 0$: The relayed ACK packet is sent down to the MAC queue, as the ACK is already due for transmission and $t_{buf,i}/num_{cum,i}$ is close to $AvgDataInt$.

As previously stated, if a new TCP ACK packet arrives before the timer that was initially set to $D_i$ (or $D'_i$) at the arrival of the previous ACK expires, the new ACK replaces the previously buffered ACK. The link layer access control block parses the TCP header to calculate $num_{cum,i}$ and restarts the timer with the new $D_i$ (or $D'_i$) for the accumulated ACK. When the timer expires, the TCP ACK is sent down to the MAC queue and both $t_{buf,i}$ and $num_{cum,i}$ are reset to 0.

ACK filtering may slow down the congestion window growth rate, negatively impact the performance during loss recovery and slow start, and increase the round trip time [21].
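The timer rules described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' ns-2 implementation: the function names are hypothetical, the constants are the values reported in the evaluation section, and the linear ramp used for $\gamma$ is an assumption, since the text only requires $\gamma_{min} \leq \gamma \leq 1$ with $\gamma < 1$ below $num_{thresh}$. The boundary case $D_i + \beta \cdot AvgInt_i = 0$, which the text leaves open, is treated here as "send".

```python
from statistics import mean

# Parameter values reported in the evaluation section of the paper.
ALPHA, BETA, GAMMA_MIN, NUM_THRESH = 1.5, 2.0, 0.5, 10


def avg_data_interval(downlink_avg_ints):
    """AvgDataInt: mean AvgInt over downlink flows faster than ALPHA * min.

    Assumes a non-empty list of strictly positive interarrival times.
    """
    threshold = ALPHA * min(downlink_avg_ints)
    return mean(x for x in downlink_avg_ints if x < threshold)


def gamma(num_cum):
    """Hypothetical linear ramp from GAMMA_MIN up to 1 at NUM_THRESH."""
    return min(1.0, max(GAMMA_MIN, num_cum / NUM_THRESH))


def accf_decision(num_cum, t_buf, avg_int_i, avg_data_int):
    """Return ("wait", delay) or ("send", 0.0) for the buffered ACK of flow i."""
    d_i = gamma(num_cum) * num_cum * avg_data_int - t_buf
    if d_i >= 0:
        return ("wait", d_i)               # ACK is not yet due: hold for D_i
    if d_i + BETA * avg_int_i < 0:
        return ("wait", BETA * avg_int_i)  # overdue after a long idle time: D'_i
    return ("send", 0.0)                   # overdue and close to AvgDataInt
```

For example, with an assumed $AvgDataInt$ of 1.0 time unit, `accf_decision(10, 2.0, 0.5, 1.0)` holds the ACK for 8.0 more units, while `accf_decision(2, 2.0, 1.0, 1.0)` releases it to the MAC queue immediately.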
However, since our idea is to slow down uplink TCP flows in order to prioritize downlink TCP flows, most of these ACK filtering issues do not negatively affect fairness and overall channel utilization. Still, the proposed algorithm does not filter the TCP ACKs with flags set, such as duplicate ACKs, which are directly enqueued to the MAC queue.

The proposed ACCF algorithm introduces a number of configurable variables. As pointed out in Section IV-B, we decided the values for these variables through extensive simulations.

A. Fairness Measure

Most of the studies in the literature quantify fairness by employing Jain's fairness index [31] or by providing the ratio of the throughput achieved by individual or all flows in the specific directions. Such measures, however, implicitly assume that each flow or station demands asymptotically high bandwidth (i.e., it is in saturation and always has a packet ready for transmission). As these measures quantify, perfectly fair access translates into each flow or station receiving an equal bandwidth. In a practical scenario of flows with finite and different bandwidth requirements (i.e., some stations in nonsaturation and experiencing frequent idle times with no packets to transmit), these measures cannot directly be used to quantify the fairness of the system.

We define fair access in a scenario where flows with different bandwidth requirements coexist as follows.
• The flows with a total bandwidth requirement lower than the fair per-flow channel capacity in the specific direction receive the necessary bandwidth.
• The flows with a total bandwidth requirement higher than the fair per-flow channel capacity receive an equal bandwidth.
In order to quantify fair access, we propose to use the MAC queue packet loss rate for the flows whose bandwidth requirement is lower than the fair per-flow channel capacity (a packet loss rate of 0 for all such flows corresponds to fair access), together with a comparison of the channel access rate for the flows whose bandwidth requirement is higher (equal channel access rate corresponds to fair access). Note that the latter comparison can employ Jain's fairness index, $f$, which is defined in [31] as follows: if there are $n$ concurrent connections in the network and the throughput achieved by connection $i$ is equal to $x_i$, $1 \leq i \leq n$, then

$$f = \frac{\left( \sum_{i=1}^{n} x_i \right)^2}{n \sum_{i=1}^{n} x_i^2}. \tag{11}$$

B. Performance Evaluation

We implemented the proposed link layer access control block employing ACCF in ns-2 [29]. The network topology and the parameters stated in Section III-E are used. The TCP traffic uses either a File Transfer Protocol (FTP) agent, which models bulk data transfer, or a Telnet agent, which simulates the behavior of a user with a terminal emulator or web browser. Unless otherwise stated, flows are considered to last throughout the simulation duration and are called long-lived in the sequel. In some experiments, we also use short-lived TCP flows, which consist of 31 packets and leave the system after all the data is transferred. The receiver advertised congestion window limits are set to 42 packets for each flow. Note that the scale of the buffer size and the TCP congestion window limit is inherited from [14]. Although the practical limits may be larger, the unfairness problem exists as long as the ratio of the buffer size to the congestion window limit is not arbitrarily large (which is not the case in practice). We found $\alpha = 1.5$, $\beta = 2$, $\gamma_{min} = 0.5$, and $num_{thresh} = 10$ to be appropriate through extensive simulations. The simulation duration is 350 ms.

We investigate the system performance when wired link delays ($LD$) differ among TCP connections.
The wired link delay of the first upload or download TCP connection is always set to 10 ms. Then, any newly generated upload or download TCP connection has a wired link delay 2 ms larger than that of the previous one in the same direction.

a) The Basic Scenario: In the first set of experiments, we generate 3, 5, or 10 upload FTP connections and vary the number of download FTP connections from 5 to 30. The wireless channel is assumed to be errorless. Fig. 10 shows the fairness index among all connections. We compare the default DCF results with the results obtained when the AP employs the proposed i) FCWA or ii) ACCF. As the results imply, with the introduction of either of the proposed control blocks at the AP, an almost perfectly fair resource allocation can be achieved in both cases.

In Fig. 11, Fig. 12, and Fig. 13, we plot the uplink, the downlink, and the total TCP throughput in the infrastructure BSS when there are 3, 5, and 10 upload TCP connections, respectively. As the results show, using the proposed ACCF scheme, the downlink flows (which starve in the default DCF case) can achieve reasonable throughput. If we employ FCWA instead, the total throughput observed is slightly lower. In this case, the proposed ACCF scheme makes use of the ACK filtering scheme to achieve a higher channel utilization. The comparison with the performance of the default DCF algorithm implies that the proposed methods do not sacrifice channel utilization while providing fair access.

b) Delayed TCP ACKs: In the second set of experiments, we use a scenario where TCP connections use the delayed TCP ACK mechanism ($b = 2$). We start download and upload FTP connections in 10 s and 20 s intervals, respectively. Fig. 14 shows the instantaneous throughput for individual TCP flows over the simulation duration.
As the results imply, in the default case, TCP download connections starve in terms of throughput as the number of TCP upload connections increases. In the meantime, some upload flows experience long delays in starting and achieving high throughput while some do not. On the other hand, using the proposed ACCF scheme, all uplink and downlink TCP flows enjoy fair access. The results are important in showing the proposed algorithm's effectiveness even when the delayed TCP ACK mechanism is used.

c) Wireless Channel Errors: In the third set of experiments, we assume the wireless channel to be an Additive White Gaussian Noise (AWGN) channel. On top of the energy-based PHY model of ns-2, we implemented a BER-based PHY model according to the framework presented in [33], following the realization in [34]. Our model considers the channel noise power in the Signal-to-Noise Ratio (SNR). We set wireless channel noise levels such that each station experiences a finite data packet error rate (PER). We repeat the tests for AWGN channel SNR values when PER is 0.001 or 0.01. We only present the results on fairness index for the case when PER is 0.01, since the results differ only slightly and a similar discussion holds for the case when PER is 0.001.

As in the first set of experiments, we generate 3, 5, or 10 upload TCP connections and vary the number of download TCP connections from 5 to 30. Fig. 15 shows that the proposed ACCF scheme provides fair access. The performance of ACCF is resilient to wireless channel errors, i.e., fair access is preserved even when there are errors in the wireless channel. As shown in Fig. 16, the throughput drops slightly when compared to the errorless wireless channel case due to the MAC retransmissions. Still, high channel utilization is maintained. DCF has slightly higher channel utilization at the expense of fair access.
d) Varying source packet rates among TCP connections: In the fourth set of experiments, we test the performance when half of the stations use the FTP agent, while the other half use the Telnet agent with packet rates between 150 Kbps and 550 Kbps. Fig. 17 and Fig. 18 compare the performance in terms of fair access and total throughput, respectively, for default DCF and ACCF for an increasing number of TCP stations in each direction. In Fig. 17, the right y-axis denotes the fairness index, $f$, among the FTP (saturated) flows, while the left y-axis denotes the average Packet Loss Rate (PLR) for Telnet (nonsaturated) flows. As the results show, the proposed ACCF scheme can provide fair access (i.e., $f = 1$ and $PLR = 0$) irrespective of the number of stations. As Fig. 18 shows, high channel utilization is also maintained.

e) Short-lived TCP flows: In the fifth set of experiments, we test the performance in terms of short-term fairness. First, we generate 5 uplink and 10 downlink long-lived FTP flows. Then, 15 short-lived uplink and downlink FTP flows are generated consecutively with 5 s intervals. Fig. 19 shows the total transmission duration for individual short-lived FTP flows for the proposed ACCF algorithm and the default DCF. Note that the flow indices from 1 to 15 represent uplink FTP flows, while flow indices from 16 to 30 represent downlink FTP flows. As the results imply, the short-lived file transfer can be completed in a significantly shorter time when the proposed algorithm is used. We can conclude that the proposed ACCF algorithm is short-term fair. Although not explicitly presented, most of the downlink connections experience connection timeouts and cannot even complete the whole transaction within the simulation duration for the default case.
f) Varying TCP Congestion Windows among Connections: In the sixth set of experiments, we generate TCP connections with receiver advertised congestion window sizes of 12, 20, 42, or 84. We vary the number of FTP connections from 4 to 24 and the wired link delay from 0 to 50 ms. For each scenario, the number of flows using a specific congestion window size is uniformly distributed among the connections (i.e., when there are 12 upload and 12 download TCP flows, 3 of the upload/download TCP connections use the congestion window size $W$, where $W$ is selected from the set $S = \{12, 20, 42, 84\}$). The wireless channel is assumed to be errorless. The TCP delayed ACK mechanism is enabled.

Fig. 20 shows the fairness index among all connections. We compare the default DCF results with the results obtained when the AP employs the proposed ACCF. As the results imply, with the introduction of the proposed control block at the AP, a fairer resource allocation can be achieved. However, perfect fairness is not observed when the link delay is larger and the number of flows is smaller. In these cases, the bandwidth-delay product is larger than the receiver advertised TCP congestion window size for connections with small congestion windows. As a result, the throughput is limited by the congestion window itself, not by the network bandwidth.

In Fig. 21, we plot the total TCP throughput. As the results show, the default DCF has higher channel utilization than ACCF. On the other hand, this comes at the expense of fair access as shown in Fig. 20. Although not explicitly shown in Fig. 21, all TCP downlink flows are shut down when default DCF is employed (see Section II-B). In this case, the shared channel is mainly utilized by data packets of uplink TCP connections.
In a fair scenario, as for ACCF, the TCP ACKs of downlink connections sharing the channel are considerably higher in number than in the default DCF. As MAC efficiency decreases when packets of shorter length access the channel, the ACCF channel utilization efficiency is slightly lower than that of DCF. The difference is more notable for a higher number of flows, since the AP, which is the main source of short TCP ACK packet transmissions for the default DCF, has a smaller share of the bandwidth.

V. CONCLUSION

In this paper, we focused on the unfair TCP access problem in an IEEE 802.11 infrastructure BSS. We have presented a novel and simple analytical model to calculate the TCP congestion window limit that provides fair TCP access in a wired/wireless scenario. The key contribution of this study is that the proposed analytical model considers varying wired link delays among connections, a varying number of uplink and downlink connections, and the use of the delayed ACK mechanism. Via simulations, we have shown that the congestion window limits calculated via the proposed analysis (FCWA) provide fair TCP access and high channel utilization. The same model can also be used to decide on the required AP buffer size for fair TCP access given the TCP congestion window limits used by the connections. The cycle time analysis can be extended for IEEE 802.11e WLANs [6] as in [28]; therefore, the analysis in this paper can also be extended for the case when MAC parameter differentiation is used.

We have also designed a novel link layer access control block for the AP that provides fair TCP access in an 802.11 infrastructure BSS. Our simple idea for resolving the unfairness problem in the WLAN is prioritizing TCP data packets of downlink flows over TCP ACK packets of uplink flows at the AP.
This idea originates from the main finding of the proposed analytical model, which shows that fair access can be achieved by throttling TCP traffic (i.e., limiting congestion windows). The proposed link layer access control block employs an ACK congestion control and filtering (ACCF) algorithm. The proposed ACCF algorithm is unique in that the specific algorithm parameters are based on the measured average data transmission rate at the AP. Via simulations, we show that fair resource allocation for uplink and downlink TCP flows can be provided in a wide range of practical scenarios when the proposed ACCF method is used. A key insight that can be obtained from this study is that fair and efficient TCP access in a WLAN can simply be achieved by intelligently scheduling TCP ACK transmissions at the AP. As an attractive feature, ACCF does not require any changes in the 802.11 standard, nor any enhancement at the stations.

REFERENCES

[1] IEEE Standard 802.11: Wireless LAN medium access control (MAC) and physical layer (PHY) specifications, IEEE 802.11 Std., 1999.
[2] H. Balakrishnan, V. Padmanabhan, and R. H. Katz, "The Effects of Asymmetry on TCP Performance," ACM Baltzer Mobile Networks and Applications (MONET), 1999.
[3] D. Chiu and R. Jain, "Analysis of the Increase/Decrease Algorithms for Congestion Avoidance in Computer Networks," Journal of Computer Networks and ISDN, pp. 1–14, June 1989.
[4] N. H. Vaidya, P. Bahl, and S. Gupta, "Distributed Fair Scheduling in a Wireless LAN," in Proc. ACM Mobicom '00, August 2000.
[5] T. Nandagopal, T. Kim, X. Gao, and V. Bharghavan, "Achieving MAC Layer Fairness in Wireless Packet Networks," in Proc. ACM Mobicom '00, August 2000.
[6] IEEE Standard 802.11: Wireless LAN medium access control (MAC) and physical layer (PHY) specifications: Medium access control (MAC) Quality of Service (QoS) Enhancements, IEEE 802.11e Std., 2005.
[7] C. Casetti and C. F. Chiasserini, "Improving Fairness and Throughput for Voice Traffic in 802.11e EDCA," in Proc. IEEE PIMRC '04, September 2004.
[8] D. J. Leith, P. Clifford, D. Malone, and A. Ng, "TCP Fairness in 802.11e WLANs," IEEE Commun. Lett., pp. 964–966, November 2005.
[9] J. Freitag, N. L. S. da Fonseca, and J. F. de Rezende, "Tuning of 802.11e Network Parameters," IEEE Commun. Lett., pp. 611–613, August 2006.
[10] I. Tinnirello and S. Choi, "Efficiency Analysis of Burst Transmissions with Block ACK in Contention-Based 802.11e WLANs," in Proc. IEEE ICC '05, May 2005.
[11] F. Keceli, I. Inan, and E. Ayanoglu, "Weighted Fair Uplink/Downlink Access Provisioning in IEEE 802.11e WLANs," in IEEE ICC '08, Beijing, China, May 2008.
[12] S. W. Kim, B.-S. Kim, and Y. Fang, "Downlink and Uplink Resource Allocation in IEEE 802.11 Wireless LANs," IEEE Trans. Veh. Technol., pp. 320–327, January 2005.
[13] J. Jeong, S. Choi, and C.-K. Kim, "Achieving Weighted Fairness between Uplink and Downlink in IEEE 802.11 DCF-based WLANs," in Proc. IEEE QSHINE '05, August 2005.
[14] S. Pilosof, R. Ramjee, D. Raz, Y. Shavitt, and P. Sinha, "Understanding TCP Fairness over Wireless LAN," in Proc. IEEE Infocom '03, April 2003.
[15] Y. Wu, Z. Niu, and J. Zheng, "Study of the TCP Upstream/Downstream Unfairness Issue with Per-flow Queueing over Infrastructure-mode WLANs," Wireless Commun. and Mobile Comp., pp. 459–471, June 2005.
[16] J. Ha and C.-H. Choi, "TCP Fairness for Uplink and Downlink Flows in WLANs," in Proc. IEEE Globecom '06, November 2006.
[17] N. Blefari-Melazzi, A. Detti, I. Habib, A. Ordine, and S. Salsano, "TCP Fairness Issues in IEEE 802.11 Networks: Problem Analysis and Solutions Based on Rate Control," IEEE Trans. Wireless Commun., pp. 1346–1355, April 2007.
[18] F. Keceli, I. Inan, and E. Ayanoglu, "TCP ACK Congestion Control and Filtering for Fairness Provision in the Uplink of IEEE 802.11 Infrastructure Basic Service Set," in Proc. IEEE ICC '07, June 2007.
[19] "RFC3449 - TCP Performance Implications of Network Path Asymmetry," 2002.
[20] "RFC2760 - Ongoing TCP Research Related to Satellites," 2000.
[21] H. Balakrishnan, V. N. Padmanabhan, and R. H. Katz, "The Effects of Asymmetry on TCP Performance," in Proc. ACM/IEEE MobiCom '97, November 1997.
[22] T. V. Lakshman, U. Madhow, and B. Suter, "Window-based Error Recovery and Flow Control with a Slow Acknowledgement Channel: A Study of TCP/IP Performance," in Proc. IEEE Infocom '97, April 1997.
[23] G. Fairhurst, N. K. G. Samaraweera, M. Sooriyabandara, H. Harun, K. Hodson, and R. Donadio, "Performance Issues in Asymmetric TCP Service Provision using Broadband Satellite," IEE Proc. Commun., pp. 95–99, 2001.
[24] M. Allman, "On the Generation and Use of TCP Acknowledgements," ACM Comp. Comm. Review, October 1998.
[25] L. Kalampoukas, A. Varma, and K. K. Ramakrishnan, "Improving TCP Throughput over Two-Way Asymmetric Links: Analysis and Solutions," in Proc. ACM Sigmetrics '98, 1998.
[26] L. Zhang, S. Shenker, and D. D. Clark, "Observations on the Dynamics of a Congestion Control Algorithm: The Effects of Two-Way Traffic," ACM Comp. Comm. Review, pp. 133–147, 1991.
[27] K. Medepalli and F. A. Tobagi, "Throughput Analysis of IEEE 802.11 Wireless LANs using an Average Cycle Time Approach," in Proc. IEEE Globecom '05, November 2005.
[28] I. Inan, F. Keceli, and E. Ayanoglu, "Performance Analysis of the IEEE 802.11e Enhanced Distributed Coordination Function using Cycle Time Approach," in Proc. IEEE Globecom '07, November 2007.
[29] (2006) The Network Simulator, ns-2. [Online]. Available: http://www.isi.edu/nsnam/ns
[30] IEEE Standard 802.11: Wireless LAN medium access control (MAC) and physical layer (PHY) specifications: Further Higher Data Rate Extension in the 2.4 GHz Band, IEEE 802.11g Std., 2003.
[31] R. Jain, The Art of Computer Systems Performance Analysis: Techniques for Experimental Design, Measurement, Simulation, and Modeling. John Wiley and Sons, 1991.
[32] F. Keceli, I. Inan, and E. Ayanoglu, "Fair TCP Access Provisioning in the IEEE 802.11 Infrastructure Basic Service Set," Tech. Rep., May 2008.
[33] D. Qiao and S. Choi, "Goodput Enhancement of IEEE 802.11a Wireless LAN via Link Adaptation," in Proc. IEEE ICC '01, June 2001.
[34] M. Lacage. (2006) Ns-2 802.11 Support. INRIA Sophia Antipolis. France. [Online]. Available: http://spoutnik.inria.fr/code/ns-2

Fig. 1. AIMD convergence to fair share of the bandwidth. (Axes: $R_1$, $R_2$; fairness line $R_1 = R_2$; capacity line $R_1 + R_2 = C$; operating points $x_0$ to $x_3$.)

Fig. 2. The throughput of each TCP connection when there are 15 downlink TCP connections. (Axes: flow index; throughput in Mbps.)

Fig. 3. The throughput of each TCP connection when there are 15 uplink TCP connections. (Axes: flow index; throughput in Mbps.)

Fig. 4. The total TCP throughput in the downlink and the uplink when there are 10 download TCP connections and the number of upload TCP connections varies from 1 to 10. (Axes: number of upload TCP flows; total throughput in Mbps. Series: upload, download.)

Fig. 5. Congestion window limits calculated by FCWA, the simulation, and [14]. (Axes: number of TCP flows; $W_{lim}$.)

Fig. 6. Total throughput of the system when the TCP connections employ analytically calculated congestion window limits by FCWA and [14]. (Axes: number of TCP flows; total throughput in Mbps.)

Fig. 7. Individual throughput of TCP connections when the TCP connections with different wired link delays employ the analytically calculated congestion window limits by FCWA and [14]. (Panels: [14], FCWA. Axes: number of TCP flows at each direction; throughput in Mbps.)

Fig. 8. Congestion window limits calculated by FCWA, the simulation, and [14] when TCP connections employ the delayed ACK mechanism with $b = 2$. (Axes: scenario; $W_{lim}$.)

Fig. 9. Total throughput of the system when the TCP connections employ analytically calculated congestion window limits by FCWA and [14] in the case the delayed ACK mechanism is used ($b = 2$). (Axes: scenario; total throughput in Mbps.)

Fig. 10. Fairness index among all TCP flows when 3, 5, or 10 upload TCP connections are generated and the number of download TCP connections is varied from 5 to 30. (Axes: number of download TCP flows; fairness index $f$. Series: Default, ACCF, and FCWA, each with 3, 5, and 10 upload flows.)

Fig. 11. Throughput of upload and download TCP connections when 3 upload TCP connections are generated and the number of download TCP connections is varied from 5 to 30. (Axes: number of download TCP flows; total throughput in Mbps. Series: total, upload, and download for Default, ACCF, and FCWA.)
35 5 10 15 20 25 30 0 5 10 15 20 25 Number of download TCP flows Total throughput (Mbps) Default, total Default, upload Default, download ACCF, total ACCF, upload ACCF, download FCWA, total FCWA, upload FCWA, download Fig. 12. Thro ughput of upload and downloa d T CP connections when 5 upload TCP connections are generated and the number of do wnload TCP con nections are v aried from 5 to 30 . 36 5 10 15 20 25 30 0 5 10 15 20 25 Number of download TCP flows Total throughput (Mbps) Default, total Default, upload Default, download ACCF, total ACCF, upload ACCF, download FCWA, total FCWA, upload FCWA, download Fig. 13. Throughput of upload and do wnload T CP connections when 10 uplo ad TCP connections are generated and the number of do wnload TCP con nections are v aried from 5 to 30 . 37 0 50 100 150 200 250 300 350 0 2 4 6 8 10 12 14 Default Time (s) Instantaneous Throughput (Mbps) 0 50 100 150 200 250 300 350 0 2 4 6 8 10 12 14 Upload Download Upload Download ACCF Fig. 14. Individual i nstantaneous throughput for upload and do wnload TCP flo ws when TCP receiv ers emplo y the delay ed A CK mechanism. 38 5 10 15 20 25 0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 Number of download TCP flows Fairness Index ( f ) Default, 3 upload Default, 5 upload Default, 10 upload ACCF, 3 upload ACCF, 5 upload ACCF, 10 upload Fig. 15. Fairness inde x among all TCP flo ws ov er an A WGN channel with 0.1% PER,when 3, 5, or 10 upload TCP connections are generated and the number of download TCP connections are v aried from 5 to 3 0. 39 5 10 15 20 25 19 20 21 22 23 24 25 Number of download TCP flows Total Throughput (Mbps) Default, 3 upload Default, 5 upload Default, 10 upload ACCF, 3 upload ACCF, 5 upload ACCF, 10 upload Fig. 1 6. T otal throug hput of TCP flows ove r an A WGN channel with 0.1% P ER, when 3, 5, or 10 upload TCP connections are generated and the number of do wnloa d TCP conne ctions are v aried from 5 to 30. 
40 4 8 12 16 20 24 0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 Number of TCP flows at each direction Fairness Index ( f ) 4 8 12 16 20 24 0 0.2 0.4 0.6 0.8 1 4 8 12 16 20 24 0 0.2 0.4 0.6 0.8 1 PLR Default ( f ) ACCF ( f ) Default (PLR) ACCF (PLR) Fig. 17. Fairness index f for saturated and Packet L oss Rate (PLR) for unsaturated TC P flows when the default EDCA or ACCF is employed. 41 4 8 12 16 20 24 18 19 20 21 22 23 24 25 Number of TCP flows at each direction Total TCP Throughput (Mbps) Default ACCF Fig. 1 8. T otal TCP throughput when th e default EDCA or A CCF is employed . 42 0 5 10 15 20 25 30 0 10 20 30 40 50 60 70 80 90 100 Flow index Total transmission time (s) Default ACCF Fig. 1 9. The total transmission du ration for indi vidual sho rt-live d TCP flo ws. 43 4 6 8 10 12 14 16 18 20 22 24 0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 Number of TCP flows at each direction Fairness Index ( f ) Default, LD=0ms Default, LD=15ms Default, LD=50ms ACCF, LD=0ms ACCF, LD=15ms ACCF, LD=50ms Fig. 2 0. Fairne ss inde x among all TCP flo ws with different co ngestion windo w sizes. 44 4 6 8 10 12 14 16 18 20 22 24 18 19 20 21 22 23 24 25 26 27 Number of TCP flows at each direction Total throughput (Mbps) Default, LD=0ms Default, LD=15ms Default, LD=50ms ACCF, LD=0ms ACCF, LD=15ms ACCF, LD=50ms Fig. 21. T hroughpu t of TCP conn ections when the y use different cong estion windo w sizes.
