Energy and Time Efficient Scheduling of Tasks with Dependencies on Asymmetric Multiprocessors


Authors: Ioannis Chatzigiannakis, Georgios Giannoulis, Paul G. Spirakis

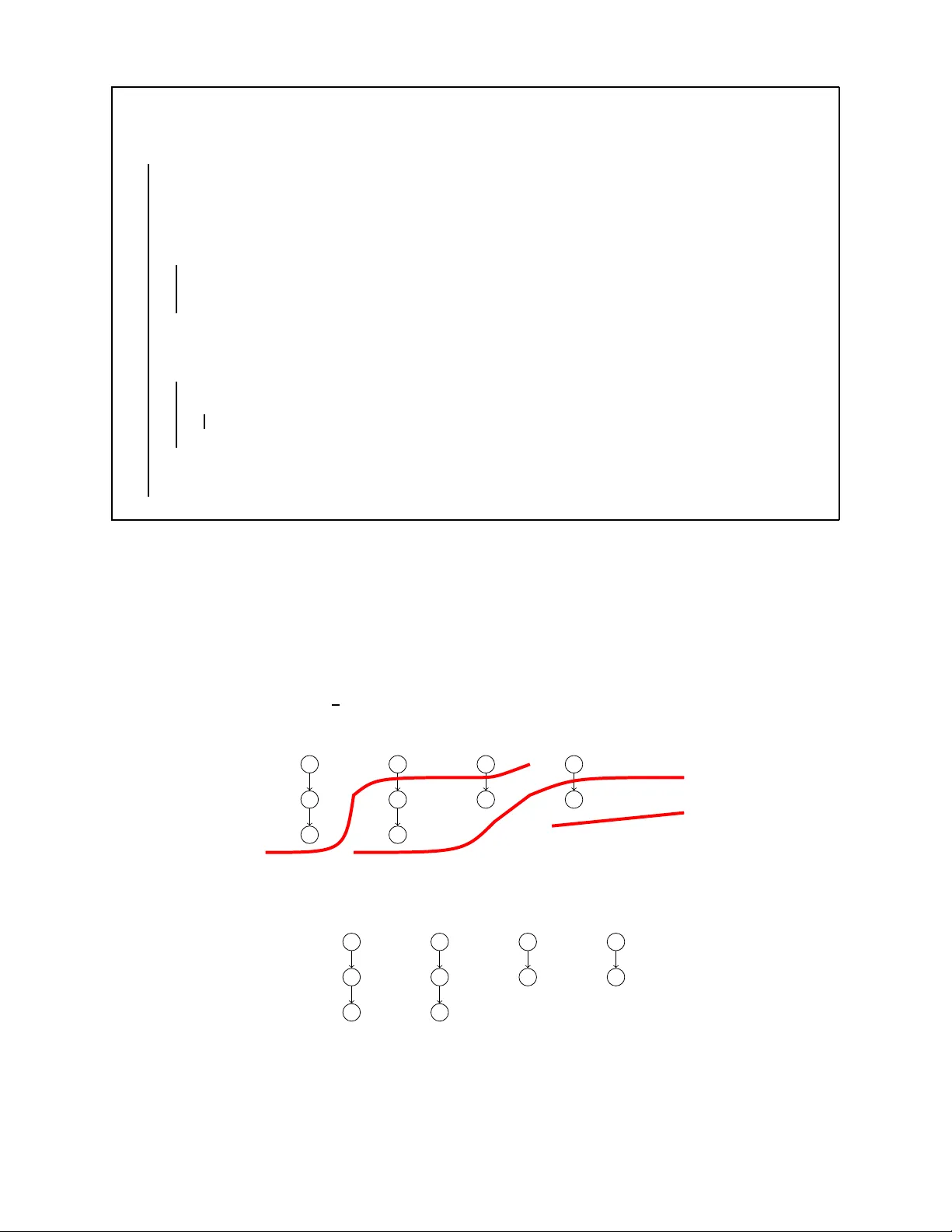

Ioannis Chatzigiannakis (1,2), Georgios Giannoulis (2), and Paul G. Spirakis (1,2)

(1) Research Academic Computer Technology Institute (CTI), P.O. Box 1382, N. Kazantzaki Str., 26500 Patras, Greece
(2) Department of Computer Engineering and Informatics (CEID), University of Patras, 26500, Patras, Greece
Email: {ichatz,spirakis}@cti.gr, giannulg@ceid.upatras.gr

Abstract. In this work we study the problem of scheduling tasks with dependencies in multiprocessor architectures where processors have different speeds. We present the preemptive algorithm "Save-Energy". Given a schedule of tasks, it post-processes the schedule to improve its energy efficiency without any deterioration of the makespan. In terms of time efficiency, we show that preemptive scheduling in an asymmetric system can achieve the same or a better optimal makespan than in a symmetric system. Motivated by real multiprocessor systems, we investigate architectures that exhibit limited asymmetry: there are two essentially different speeds. Interestingly, this special case has not been studied in the field of parallel computing and scheduling theory. Only the general case, where processors have K essentially different speeds, has been studied. We present the non-preemptive algorithm "Remnants" that achieves almost optimal makespan. We provide a refined analysis of a recent scheduling method. Based on this analysis, we specialize the scheduling policy and provide an algorithm with a $(3+o(1))$ expected approximation factor. This improves the previous best factor (6 for two speeds). We believe that our work will convince researchers to revisit this well-studied scheduling problem for these simple, yet realistic, asymmetric multiprocessor architectures.
1 Introduction

Processor technology is clearly undergoing a vigorous shake-up that allows one processor socket to provide access to multiple logical cores. Current technology already allows multiple processor cores to be contained inside a single processor module. Such chip multiprocessors seem to overcome the thermal and power problems that limit the performance single-processor chips can deliver. Recently, researchers have proposed multiprocessor platforms where individual processors have different computation capabilities (e.g., see [6]). Such architectures are attractive because a few high-performance complex processors can provide good serial performance, while many low-performance simple processors can provide high parallel performance. Such asymmetric platforms can also achieve energy efficiency, since the lower the processing speed, the lower the power consumption [10]. Reducing energy consumption is an important issue not only for battery-operated mobile computing devices but also for desktop computers and servers.

As the number of chip multiprocessors grows tremendously, so does the need for algorithmic solutions that use such platforms efficiently. A key assumption in these platforms is that processors may have different speeds and capabilities, but these speeds and capabilities do not change. We consider multiprocessor architectures $P = \{P_k : k = 1, \dots, m\}$, where $c(k)$ is the speed of processor $P_k$. The total processing capability of the platform is denoted by $p = \sum_{k=1}^{m} c(k)$. One of the key challenges of asymmetric computing is the scheduling problem.
Given a parallel program of n tasks represented as a dependence graph, the scheduling problem deals with mapping each task onto the available asymmetric resources. The goal is to minimize the makespan, that is, the maximum completion time of the jobs. In this work we also look into how to reduce energy consumption without affecting the makespan of the schedule. Energy efficiency for speed scaling of parallel processors was considered in [1]; speed scaling is not assumed in this work.

⋆ This work has been partially supported by the IST Programme of the European Union under contract number IST-2005-15964 (AEOLUS) and by the ICT Programme of the European Union under contract number ICT-2008-215270 (FRONTS).
⋆⋆ An early version of some of the ideas of our work will appear as a brief announcement in the 27th ACM Symposium on Principles of Distributed Computing (PODC 2008).

Our notion of a parallel program to be executed is a set of n tasks represented by $G = (V, E)$, a Directed Acyclic Graph (DAG). The set V represents $n = |V|$ simple tasks, each of unit processing time. If task i precedes task j (denoted $i \prec j$), then j cannot start until i is finished. The set E of edges represents the precedence constraints among the tasks. We assume that the whole DAG is presented as input to the multiprocessor architecture. Our objective is to give schedules that complete the processing of the whole DAG in a short time. In scheduling-theory terms, the problem is that of scheduling precedence-constrained tasks on related processors to minimize the makespan. Speed asymmetry is the basic characteristic of our model. We assume that the overhead of (re)assigning processors to the tasks of a parallel job is negligible. The special case in which the DAG is just a collection of chains is important, because general DAGs can be scheduled via the maximal chain decomposition technique of [4].
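The decomposition step can be made concrete with a short Python sketch. A chain is a set of tasks totally ordered by $\prec$, so chains are taken in the transitive closure of E. The sketch assumes the usual greedy reading of a maximal chain decomposition: extract a longest chain, then recurse on the remaining tasks. The function `maximal_chain_decomposition` is our own illustration, not the procedure of [4]. Tasks are assumed to be numbered $0, \dots, n-1$, and each edge is a pair (i, j) meaning $i \prec j$. Computing the closure and running one $O(n^2)$ longest-chain pass per extracted chain keeps the sketch within $O(n^3)$ time.

```python
from graphlib import TopologicalSorter

def maximal_chain_decomposition(n, edges):
    """Greedy longest-chain peeling of a DAG of unit tasks 0..n-1 (illustrative sketch).

    edges: iterable of pairs (i, j) meaning task i must finish before task j starts.
    Returns a list of chains (task ids in precedence order), longest first.
    """
    edges = list(edges)
    preds = {v: set() for v in range(n)}
    succ = {v: [] for v in range(n)}
    for i, j in edges:
        preds[j].add(i)
        succ[i].append(j)
    order = list(TopologicalSorter(preds).static_order())  # every i comes before j

    # reach[u]: all tasks that transitively depend on u (transitive closure of E)
    reach = {v: set() for v in range(n)}
    for u in reversed(order):
        for w in succ[u]:
            reach[u].add(w)
            reach[u] |= reach[w]

    remaining, chains = set(range(n)), []
    while remaining:
        # Longest chain among the remaining tasks: DP over the topological order
        best_len, prev, seen = {}, {}, []
        for v in order:
            if v not in remaining:
                continue
            best_len[v], prev[v] = 1, None
            for u in seen:
                if v in reach[u] and best_len[u] + 1 > best_len[v]:
                    best_len[v], prev[v] = best_len[u] + 1, u
            seen.append(v)
        end = max(seen, key=best_len.__getitem__)
        chain = []
        while end is not None:  # walk back along the predecessor links
            chain.append(end)
            end = prev[end]
        chain.reverse()
        chains.append(chain)
        remaining -= set(chain)
    return chains
```

Take the "diamond" DAG with edges (0,1), (0,2), (1,3), (2,3). The sketch returns one chain of length 3 that starts at task 0 and ends at task 3, and then the leftover task as a chain of length 1.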
Let $L = \{L_1, L_2, \dots, L_r\}$ be a program of r chains of tasks to be processed. We denote the length of chain $L_i$ by $l_i = |L_i|$, the number of jobs in $L_i$; without loss of generality $l_1 \ge l_2 \ge \dots \ge l_r$. Clearly $n = \sum_{i=1}^{r} l_i$. In this case the problem is also known as Chains Scheduling. The decomposition technique of [4] requires $O(n^3)$ time. The maximal chain decomposition depends only on the jobs of the given instance and is independent of the machine environment.

The problem is NP-hard [9] even when all processors have the same speed, so the scheduling community has concentrated on developing approximation algorithms for the makespan. Early papers introduce $O(\sqrt{m})$-approximation algorithms [7, 8], and more recent papers propose $O(\log m)$-approximation algorithms [5, 4]. Numerous asymmetric processor organizations have been proposed for power and performance efficiency. The behavior of multi-programmed, single-threaded applications on them has also been investigated. [2] investigate the impact of performance asymmetry in emerging multiprocessor architectures. They follow an experimental methodology on multiprocessor systems whose individual processors have different performance. They report that the running times of commercial applications may benefit from such performance asymmetry. Previous research assumed the general case where multiprocessor platforms have K distinct speeds. Yet recent technological advances (e.g., see [6, 10]) build systems with two essential speeds. Unfortunately, the scheduling literature has given little attention to the case of just 2 distinct processor speeds. In fact, the best results so far, those of [4], reduce instances of arbitrary (but related) speeds to at most $K = O(\log m)$ distinct speeds.
The same work then gives schedules whose makespan is at most $O(K)$ times the optimal makespan, where the $O(K)$ factor is $6K$ for general DAGs.

We consider chip multiprocessor architectures consisting of m processors: $m_s$ fast processors of speed $s > 1$ and $m - m_s$ slow processors of speed 1. The energy consumption per unit time is a convex function of the processor speed. Thus, our model is a special case of uniformly related machines with only two distinct speeds. In fact, the notion of distinct speeds used in [5] and [4] allows several speeds in our model, but not differing much from each other. So, for the case of 2 speeds considered here, this gives a 12-factor approximation for general DAGs. Our goal is to improve on this and, under this simple model, provide schedules with better makespan. We also focus on the special case where the multiprocessor system is composed of a single fast processor and multiple slow processors, like the one designed in [6]. Note that [3] has recently worked on a different model that assumes asynchronous processors with time-varying speeds.

2 Energy Efficiency of Scheduling on Asymmetric Multiprocessors

Asymmetric platforms can achieve energy efficiency, since the lower the processing speed, the lower the power consumption [10]. Reducing energy consumption is important for battery-operated mobile computing devices but also for desktop computers and servers. To examine the energy usage of multiprocessor systems we adopt the model of [1]: the energy consumption per unit time is a convex function of the processor speed. In particular, the energy consumption of processor k running for time t is proportional to $c(k)^{\alpha} \cdot t$, where $\alpha > 1$ is a constant. Clearly, by increasing the makespan of a schedule we can reduce the energy usage.
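A short Python sketch of this energy model shows the trade-off directly. The proportionality constant is set to 1, an assumption made for illustration. Processing w units of work at speed c takes $w/c$ time and costs $c^{\alpha} \cdot w/c = w\,c^{\alpha-1}$ energy. So for $\alpha > 1$ a slower processor finishes later but spends less. The helper names are hypothetical.

```python
def energy(speed, duration, alpha):
    """Energy of one processor running at `speed` for `duration`
    under the convex model c^alpha * t (proportionality constant taken as 1)."""
    return speed ** alpha * duration

def energy_for_work(work, speed, alpha):
    """Energy needed to finish `work` units at `speed`; the run takes work / speed time."""
    return energy(speed, work / speed, alpha)

# The same 4 units of work with alpha = 2: slower means longer but cheaper
for c in (4, 2, 1):
    print(f"speed={c}: time={4 / c}, energy={energy_for_work(4, c, 2)}")
```

With $\alpha = 2$ and 4 units of work, speeds 4, 2 and 1 give times 1, 2 and 4 and energies 16, 8 and 4.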
We design the preemptive algorithm "Save-Energy" (see Alg. 1). It post-processes a schedule of tasks to processors and improves its energy efficiency by reassigning tasks to processors of slower speed. We place no restriction on the number of distinct processor speeds, and we rearrange tasks so that the makespan is not affected. This reduces the energy consumption because, in our model, the energy spent per unit time is proportional to the processor speed raised to the power α (where α > 1). Hence a fixed amount of work processed at speed c costs energy proportional to $c^{\alpha-1}$. In this sense, our algorithm optimizes a given schedule so that maximum energy efficiency is achieved.

Algorithm 1: "Save-Energy"
Input: An assignment of tasks to processors
Output: An assignment of tasks to processors with reduced energy consumption
  Split the schedule into intervals $t_j$, where $j \in [1 \dots \tau_0]$
  Sort the times in ascending order
  $\tau \leftarrow \tau_0$
  for $c = c(2)$ to $c(m)$ do
    for $i \leftarrow 1$ to $\tau$ do
      H ← holes at lower speeds into which the processing of $t_i$ can fit without conflicting with other assignments
      Fit task h into as many slower speeds as possible, starting from the holes at $c(1)$ up to c; but if at $\tau_i$ h fits into 2 or more speeds, fill the hole closest to $\sqrt[\alpha-1]{1/\alpha} \cdot c$
      if h does not fit exactly then
        Create a new $t'$ at the time the preemption happens
        Fit h into the extended slot
      end
    end
    $\tau \leftarrow \tau_{previous}$ + set of times at which preemption occurred
  end

We start by sorting the processors according to their processing capability $p_1, \dots, p_m$, so that $c(1) \ge c(2) \ge \dots \ge c(m)$. We then split time into intervals $t_j$, where $j \in [1 \dots \tau_0]$. Here $\tau_0$ is chosen so that within each interval there is no preemption, no task completes and no change is made to the precedence constraints. Furthermore, we let $x_i^j = 1$ if at $t_j$ we use $c(i)$, and $x_i^j = 0$ otherwise. So the total energy consumption of the schedule is
$$E = \sum_{i=1}^{m} \sum_{j=1}^{\tau_0} x_i^j \, c(i)^{\alpha} \, t_j.$$

Theorem 1 (Condition of optimality).
If E is the optimal energy consumption of a schedule (i.e., no further energy savings can be achieved), then the following holds. There do not exist $t_i, t_j$, with $i, j \in [1 \dots \tau_0]$, such that a list l initially assigned to speed $c(u)$ at time $t_i$ can be rescheduled to $t_j$ with speed $c(v) \neq c(u)$ and reduce the energy.

Proof. Suppose that we can reduce the energy E of the schedule; we obtain a contradiction. Without loss of generality, at time $t_i$ a core u processes a list at speed $c(u)$, and there is a $t_j$ at which we can reschedule it to processor v with speed $c(v) < c(u)$. We may assume $c(v) < c(u)$ because if $c(v) \ge c(u)$ there is no energy reduction. Since such $t_i, t_j$ exist, the new energy $E'$ must be lower than E. When we try to reschedule a list l from $t_i$ at speed $c(u)$ to $t_j$ at speed $c(v) < c(u)$, only three cases exist:

(1) The processing of l at $t_i$ fits exactly into $t_j$. This is the case when $t_i \cdot c(u) = t_j \cdot c(v)$. Then $E' = E - c(u)^{\alpha} t_i + c(v)^{\alpha} t_j$, and $E' < E$ because
$$\frac{c(v)^{\alpha} t_j}{c(u)^{\alpha} t_i} = \frac{c(v)^{\alpha}}{c(u)^{\alpha}} \cdot \frac{c(u)}{c(v)} = \left(\frac{c(v)}{c(u)}\right)^{\alpha-1} < 1$$
(recall that α > 1). This contradicts the optimality of E.

(2) The processing of l at $t_i$ fits into $t_j$ and some time remains at $t_j$. This is the case when $t_i \cdot c(u) < t_j \cdot c(v)$. Again we reach a contradiction. There exists $t'_j < t_j$ with $t_i \cdot c(u) = t'_j \cdot c(v)$, and using only $t'_j$ the new energy is the same as in the previous case.

(3) The processing of l at $t_i$ does not fit completely into $t_j$. This is the case when $t_i \cdot c(u) > t_j \cdot c(v)$. Now we cannot move all of the processing of l from $t_i$ to $t_j$. So there exists $t'_i$ such that $c(u) \cdot t'_i = c(v) \cdot t_j$. The processing then splits in two: it stays at time $t_i$ for $t_i - t'_i$, and it fills $t_j$ completely.
The change in energy is
$$c(u)^{\alpha}(t_i - t'_i) + c(v)^{\alpha} t_j - c(u)^{\alpha} t_i = c(v)^{\alpha} t_j - c(u)^{\alpha} t'_i < 0,$$
because
$$\frac{c(v)}{c(u)} < 1 \Rightarrow \frac{c(v)^{\alpha}}{c(u)^{\alpha}} \cdot \frac{c(u)}{c(v)} < 1 \Rightarrow \frac{c(v)^{\alpha}}{c(u)^{\alpha}} \cdot \frac{t_j}{t'_i} < 1.$$
This proves the theorem. □

Theorem 2. Suppose the processing of list l at $t_i$ at speed $c(u)$ fits completely into $t_j$ at two or more different speeds. Then we save more energy by rescheduling the list to the speed closest to $\sqrt[\alpha-1]{1/\alpha} \cdot c(u)$. When α = 2, this simplifies to $c(u)/2$.

Proof. The total processing of l must not change. So $t'_i$, the time that the list remains at speed $c(u)$, is given by $t'_i \cdot c(u) + t_j \cdot c(v) = t_i \cdot c(u)$. If we do not use $c(v)$, the energy we spend is $E_{start} = c(u)^{\alpha} \cdot t_i$. If we use $c(v)$, it is $E_{c(v)} = c(u)^{\alpha} \cdot t'_i + c(v)^{\alpha} \cdot t_j$. So
$$E_{c(v)} = t'_i \cdot c(u)^{\alpha} + t_j \cdot c(v)^{\alpha} = E_{start} - c(v) \cdot t_j \left(c(u)^{\alpha-1} - c(v)^{\alpha-1}\right).$$
Because $c(u) > c(v)$, we always save energy when we reschedule any task to a lower speed. The energy is minimized when the derivative of the saving with respect to $c(v)$ equals zero. That happens when
$$\frac{d}{d\,c(v)}\left(t_j \cdot c(v) \cdot c(u)^{\alpha-1} - t_j \cdot c(v)^{\alpha}\right) = 0 \Rightarrow t_j \cdot c(u)^{\alpha-1} = \alpha\, t_j \cdot c(v)^{\alpha-1} \Rightarrow c(v) = \sqrt[\alpha-1]{\frac{1}{\alpha}} \cdot c(u).$$
If the fragment of the list can be reassigned to an even smaller $c(i)$, we obtain a schedule with even less energy. Thus we try to fill all the holes starting from the lower speeds and going upwards. This prevents total fragmentation of the whole schedule and gives a schedule of nearly optimal energy consumption, provided the makespan is unharmed. □

The algorithm "Save-Energy" clearly does not increase the makespan, since it does not delay the processing of any task. It may even reduce the makespan.
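The quantities in the proof of Theorem 2 can be checked numerically with the Python sketch below; the function names are ours, for illustration only. The sketch evaluates three things. The first is the energy change $E_{c(v)} - E_{start} = -c(v)\,t_j\,(c(u)^{\alpha-1} - c(v)^{\alpha-1})$. The second is the stationary speed $\sqrt[\alpha-1]{1/\alpha} \cdot c(u)$. The third is the "closest hole" rule used by Algorithm 1. The sketch assumes that at least one candidate speed is slower than $c(u)$.

```python
def energy_change(cu, cv, tj, alpha):
    """E_{c(v)} - E_start from the proof of Theorem 2: the work c(v) * t_j that fits
    into a hole of length t_j at speed cv is moved off speed cu. Negative = saving."""
    return -cv * tj * (cu ** (alpha - 1) - cv ** (alpha - 1))

def best_target_speed(cu, alpha):
    """Stationary point of the saving: c(v) = (1/alpha)^(1/(alpha-1)) * c(u)."""
    return (1.0 / alpha) ** (1.0 / (alpha - 1)) * cu

def pick_hole_speed(cu, hole_speeds, alpha):
    """Among slower speeds offering a fitting hole, pick the one closest to the
    stationary point. Assumes at least one speed in hole_speeds is below cu."""
    target = best_target_speed(cu, alpha)
    slower = [c for c in hole_speeds if c < cu]
    return min(slower, key=lambda c: abs(c - target))

# c(u) = 4, alpha = 2, hole length t_j = 1: compare the candidate slower speeds
for cv in (1, 2, 3):
    print(cv, energy_change(4, cv, 1.0, 2))
print(pick_hole_speed(4, [1, 2, 3], 2))
```

Take $c(u) = 4$, α = 2 and a hole of length 1. Moving to speeds 1, 2 and 3 saves 3, 4 and 3 energy units respectively, and the rule picks speed $2 = c(u)/2$.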
The new hole has size $c(v)/c(u) < 1$ times the previous size, and in every execution a hole that can be filled goes to a faster processor. In arbitrary DAGs, precedence constraints prevent us from swapping two time intervals. To overcome this problem we proceed as follows. We define the supported set ($ST_i$) to be all tasks completed up to time $t_i$, together with those currently running and those ready to run. Between two intervals with the same ST we can swap or reschedule any assignment. So we run the above algorithm separately between each pair of these marked time intervals to create local optima. In this case the complexity of the algorithm reduces to $O(m^2 \cdot \sum_i \theta_i^2)$, where $\theta_i$ is the time between two time intervals with different ST. For a list of tasks, the time complexity is $O(\tau_0^2 \cdot m^2)$. We note that in general DAGs the best scheduling algorithms for distinct speeds produce an $O(\log K)$-approximation (where K is the number of essential speeds). When schedules are far from tight, the achievable energy reduction is high.

3 Time Efficiency of Scheduling on Asymmetric Multiprocessors

We continue by providing some arguments for using asymmetric multiprocessors in terms of time efficiency. We show that preemptive scheduling on an asymmetric multiprocessor platform achieves the same or a better optimal makespan than on a symmetric multiprocessor platform. The basic characteristic of our approach is speed asymmetry. We assume that the overhead of (re)assigning processors to the tasks of a parallel job is negligible.

Theorem 3. Consider any list L of r chains of tasks to be scheduled on preemptive machines, and an asymmetric and a symmetric multiprocessor system with the same average speed ($s'$) and the same total number of processors (m). The asymmetric system always has a better or equal optimal makespan.
Equality holds if all processors are busy during the whole schedule.

Proof. Again we start by sorting the processors according to their processing capability $p_1, \dots, p_m$, so that $c(1) \ge c(2) \ge \dots \ge c(m)$. We then split time into intervals $t_j$, where $j \in [1 \dots m]$. Within these intervals there is no preemption, no task completes and no change is made to the precedence constraints. This is feasible since the optimal schedule is feasible and has finitely many preemptions. Let $OPT_\sigma$ be the optimal schedule for the symmetric multiprocessor system. Now consider an interval $(t_i, t_{i+1})$ in which all processors process a list, and divide it into m time intervals. We assign each list to each of the m active asymmetric processors in turn. In other words, each task of the original schedule $OPT_\sigma$ is assigned sequentially to all processors. So each task is processed by each processor for $\frac{1}{m}(t_{i+1} - t_i)$ time. Thus every task is processed during $(t_i, t_{i+1})$ at an average speed of $\frac{1}{m}\sum_{k=1}^{m} c(k)$, which is the speed of every symmetric processor. Hence, from an optimal schedule for the symmetric system, we can produce a schedule with at most the same makespan on the asymmetric set of processors. The above holds when all processors are processing a list at all times; then the processing in both cases is the same. Of course, some sets of lists cannot keep all processors running at all times. For such schedules the optimal makespan on the asymmetric platform is better. Recall that we have sorted all speeds. Since the system is asymmetric, it must have at least 2 speeds. Suppose that at some time of $OPT_\sigma$ we process fewer lists than processors. Following the analysis above, we must divide the time by the number of lists being processed, denoted by $\lambda < m$.
So during the time interval $(t_i, t_{i+1})$, the processing of any list on the symmetric system is $s' \cdot (t_{i+1} - t_i)$. On the asymmetric system, the processing over the same interval is $\frac{1}{\lambda}\sum_{k=1}^{\lambda} c(k) \cdot (t_{i+1} - t_i)$. The average speed in the second expression is larger than in the first, because we use only the fastest processors. More formally,
$$\frac{c(1)}{1} \ge \frac{c(1)+c(2)}{2} \ge \dots \ge \frac{c(1)+\dots+c(\lambda)}{\lambda} \ge \dots > \frac{c(1)+\dots+c(m)}{m}.$$
So we have produced a schedule with a better makespan than $OPT_\sigma$. In other words, suppose the optimal schedule for a symmetric system has at least one interval in which a processor is idle. Then we can produce a schedule for the asymmetric multiprocessor platform with a smaller makespan, so its optimal makespan is smaller. □

Theorem 4. Consider any DAG G of tasks to be scheduled on preemptive machines, and an asymmetric and a symmetric multiprocessor system with the same average speed ($s'$) and the same total number of processors (m). The asymmetric system always has a better or equal optimal makespan. Equality holds if all processors are busy during the whole schedule.

Proof. We proceed as above. The difference is that we split time at points $(t_1, t_2, \dots, t_m)$ with the following property. Between any two consecutive points $(t_i, t_{i+1})$, there is no preemption on processors and no completion of a list. Nor does any list become supported that we could not process at $t_i$ because of precedence constraints. When all processors are processing a list at all times, the processing in the asymmetric and symmetric systems is the same, namely $m \cdot s' \cdot (t_{i+1} - t_i)$. Of course, some DAGs cannot keep all processors running at all times, because of precedence constraints or lack of tasks. In such DAGs the optimal makespan on the asymmetric system is better than on the symmetric one.
Suppose that at some time of $OPT_\sigma$ we process fewer lists than processors. Following the analysis of Theorem 3, the total processing on the symmetric system is $\sum_{j=1}^{\lambda} s' \cdot (t_{i+1} - t_i) = \lambda \cdot s' \cdot (t_{i+1} - t_i)$. The processing during the same interval on the asymmetric system is $\sum_{k=1}^{\lambda} c(k) \cdot (t_{i+1} - t_i)$, which is larger. □

4 Multiprocessor Systems of Limited Asymmetry

We now focus on the case where the multiprocessor system is composed of a single fast processor and multiple slow ones, like the one designed in [6]. Let the fast processor have speed s and the remaining m − 1 processors have speed 1. From now on, preemption of tasks is not allowed. We design the non-preemptive algorithm "Remnants" (see Alg. 2), which always gives schedules with makespan $T \le T_{opt} + \frac{1}{s}$. In each round we first assign the fast processor greedily. Then we try to maximize parallelism using the slow processors. At the beginning of round k, we denote by $rem_k(i)$ the suffix of list $L_i$ not yet done. Let $R_k(i) = |rem_k(i)|$. For n tasks, the algorithm can be implemented to run in $O(\frac{1}{s} n^2 \log n)$ time. The slow processors whose "list" is taken by the speedy processor in round k can be reassigned to free remnants. Remark: in the speed assignment produced by "Remnants" we can even name the processors assigned to tasks, in contrast to general speed assignment methods (see e.g. [8, 5, 4]). This makes the actual scheduling of tasks much easier and reduces overhead.

As an example, consider a system with 3 processors (m = 3) where the speedy processor has s = 4; in other words, one fast processor and two slow ones. We wish to schedule 4 lists with $l_1 = 3$, $l_2 = 3$, $l_3 = 2$ and $l_4 = 2$. The "Remnants" algorithm produces the following assignment, with a makespan of T = 2:

| | L1 | L2 | L3 | L4 |
|---|---|---|---|---|
| Round 1 | s s s | s | 1 | 1 |
| Round 2 | | s s | s | s |

Algorithm 2: "Remnants"
Input: Lists $L_1, \dots, L_r$ of tasks
Output: An assignment of tasks to processors
  k ← 1
  while there are nonempty lists do
    for i ← 1 to r do $rem_k(i) = L_i$
    $g_k$ ← number of nonempty lists
    Sort and rename the remnants so that $R_k(1) \ge R_k(2) \ge \dots \ge R_k(g_k)$
    u ← s, v ← 1
    /* Assign the fast processor sequentially to s tasks */
    while u > 0 and v ≤ $g_k$ do
      p ← min(u, $R_k(v)$)
      Assign p tasks of $rem_k(v)$ to the fast processor and remove them from $rem_k(v)$
      u ← u − p, v ← v + 1
    end
    /* Assign the m − 1 slow processors to the first task of each remnant list not touched by the fast-speed assignment */
    if v ≤ $g_k$ then
      q ← min($g_k$, v + m − 2)
      for w ← v to q do
        Assign the first task of $rem_k(w)$ to a slow processor and remove it from $rem_k(w)$
      end
    end
    Remove the assigned tasks from the lists
    k ← k + 1
  end

Notice that the slow processors whose "list" is taken by the speedy processor in round k can be reassigned to free remnants (one per free remnant). So our assignment tries to use all available parallelism in each round. Now consider the case where the fast processor has s = 3, i.e., it is slower than the fast processor of the above example. For the same lists of tasks, the algorithm now produces a schedule with a makespan of $T = 2 + \frac{1}{s}$:

| | L1 | L2 | L3 | L4 |
|---|---|---|---|---|
| Round 1 | s s s | 1 | | 1 |
| Round 2 | | s s | s | 1 |
| Round 3 | | | s | |

Notice that for this configuration, the following schedule achieves a makespan of 2:

| | L1 | L2 | L3 | L4 |
|---|---|---|---|---|
| Speed | s s s | s s s | 1 1 | 1 1 |

In the following theorem we show that the performance of Remnants is actually very close to optimal: we argue that the above counter-example is essentially the only one.

Theorem 5. Consider any set of lists L and a multiprocessor platform with one fast processor of speed s and m − 1 slow processors of speed 1. If T is the makespan of Algorithm Remnants, then $T \le T_{opt} + \frac{1}{s}$.

Proof. We apply the construction of Graham, as modified by [5], to see whether T can be improved.
Let $j_1$ be a task that completes last in Remnants. By the way Remnants works, we can assume without loss of generality that $j_1$ was executed by the speedy processor. We now consider the logical chain ending with $j_1$, defined as follows: iteratively, $j_{t+1}$ is the predecessor of $j_t$ that completes last among all predecessors of $j_t$ in Remnants. Writing $t_1$ for $j_1$, there are two cases for this chain. (a) All its tasks were done at speed s. Since the fast processor works all the time, the makespan T of Remnants is then optimal. (b) There is a task $t^*$ at distance at most s − 1 from $t_1$ that was done at speed 1 in Remnants. In the latter case, let x be the start time of $t_1$. Then all speed-1 processors are busy before x, or else $t_1$ could have been scheduled earlier. (b.1) If no other task in the chain is done at speed 1 before $t_1$, then T is again optimal, since all processors of all speeds are busy before $t_1$. (b.2) Otherwise, let $t_2$ be another task in the chain done at speed 1, with $t_2 < t_1$. Then $t_2$ must be an immediate predecessor of $t_1$ in a chain, because of the way Remnants works. During the execution of $t_2$, speed s is busy, but some processor of speed 1 could be available. Define $t_3, \dots, t_j$ similarly: tasks of the last chain, all done at speed 1, with $t_k < t_{k-1}$ for $k = j, \dots, 2$. This can go back to the start of the chain. That first task could have been done earlier by another speed-1 processor, and it is the only task that an available processor could do, just one step before. So the makespan T of algorithm Remnants can be compressed by only one task, after which it becomes optimal. Hence $T \le T_{opt} + \frac{1}{s}$. The extra task is the start of the last list: it has no predecessor and could run at speed 1 together with nodes of the previous list. □

4.1 An LP-relaxation approach for a schedule of good expected makespan

In this section we relax the limitations on asymmetry.
We work on the more general case of $m_s$ fast processors of speed s and $m - m_s$ slow processors of speed 1. We still have two distinct speeds, and preemption of tasks is not allowed. We follow the basic ideas of [4] and specialize its general lower bounds on the makespan to this case. Clearly, the maximum rate at which the multiprocessor system of limited asymmetry can process tasks is $m_s \cdot s + (m - m_s) \cdot 1$, which is achieved if and only if all machines are busy. Therefore, finishing all n tasks requires time at least
$$A = \frac{n}{m_s \cdot s + (m - m_s)}.$$
Now let
$$B = \max_{1 \le j \le \min(r, m)} \frac{\sum_{i=1}^{j} l_i}{\sum_{i=1}^{j} c(i)},$$
where $c(1) = \dots = c(m_s) = s$ and $c(m_s+1) = \dots = c(m) = 1$ are the individual processor speeds, from fast to slow. It follows that
$$\frac{l_1}{s} \ge \frac{l_1 + l_2}{2s} \ge \dots \ge \frac{l_1 + \dots + l_j}{js} \qquad (j = m_s),$$
so among the terms with $j \le m_s$ only $j = 1$ matters. The interesting case is $m_s < r$. So we assume $m_s < r$ and let $l_s = l_1 + l_2 + \dots + l_{m_s}$. Since $\sum_{i=1}^{j} c(i) = m_s s + (j - m_s) = m_s (s-1) + j$ for $j > m_s$,
$$B = \max\left(\frac{l_1}{s},\ \max_{m_s+1 \le j \le \min(r,m)} \frac{l_s + \sum_{i=m_s+1}^{j} l_i}{m_s (s-1) + j}\right).$$
From [4] we then have:

Lemma 1. Let $T_{opt}$ be the optimal makespan of r chains. Then $T_{opt} \ge \max(A, B)$.

Since the average load is also a lower bound for preemptive schedules, we get:

Corollary 1. $\max(A, B)$ is also a lower bound for preemptive schedules.

Now consider the case of only one fast processor, i.e. $m_s = 1$. In each step at most $s + \min(m, r-1)$ tasks can be done, since no two processors can work in parallel on the same list. This gives $T_{opt} \ge \frac{n}{s + \min(r-1, m)}$. The bound $T_{opt} \ge B$ of course still holds.

[5] developed a natural variant of list scheduling without preemption, called speed-based list scheduling. It is constrained to schedule according to the speed assignments of the jobs. In classical list scheduling, whenever a machine is free, the first available job in the list is scheduled on it.
In speed-based list scheduling, an available task is scheduled on a free machine only if the speed of that machine matches the speed assignment of the task. Good schedules require the speed assignments of tasks to be chosen cleverly. In the sequel, let $D_s = \frac{n_s}{s \cdot m_s}$, where $n_s < n$ is the number of tasks assigned to speed s. Let $D_1 = \frac{n - n_s}{m - m_s}$. Finally, let $c(j)$ be the speed assigned to task j. For each chain $L_i$, compute $q_i = \sum_{j \in L_i} \frac{1}{c(j)}$, and let $C = \max_{L_i \in L} q_i$. The proof of the following theorem is an easy generalization of Graham's analysis of list scheduling.

Theorem 6 (specialization of Theorem 2.1, [5]). For any speed assignment ($c(j) = s$ or 1) to tasks $j = 1 \dots n$, the non-preemptive speed-based list scheduling method produces a schedule of makespan $T \le C + D_s + D_1$.

Based on the above specializations, we wish to provide a non-preemptive schedule (i.e., a speed assignment) that achieves a good makespan. We assign each task either to speed s or to speed 1 so that $C + D_s + D_1$ is not too large. For task j, let
$$x_j = \begin{cases} 1 & \text{when } c(j) = s \\ 0 & \text{otherwise} \end{cases} \qquad y_j = \begin{cases} 1 & \text{when } c(j) = 1 \\ 0 & \text{otherwise.} \end{cases}$$
Since each task j must be assigned to some speed, we get
$$\forall j = 1 \dots n:\quad x_j + y_j = 1 \tag{1}$$
Within time D, the fast processors must complete the $\sum_{j=1}^{n} x_j$ tasks assigned to them, and the slow processors the $\sum_{j=1}^{n} y_j$ tasks assigned to them. So
$$\frac{\sum_{j=1}^{n} x_j}{m_s \cdot s} \le D \tag{2}$$
and
$$\frac{\sum_{j=1}^{n} y_j}{m - m_s} \le D \tag{3}$$
Let $t_j$ be the completion time of task j:
$$t_j \ge 0 \tag{4}$$
If $j' \prec j$, then clearly
$$\frac{x_j}{s} + y_j \le t_j - t_{j'} \tag{5}$$
Also
$$\forall j:\quad t_j \le D \tag{6}$$
and
$$\forall j:\quad x_j, y_j \in \{0, 1\} \tag{7}$$
Based on the above constraints, consider the following mixed integer program:

MIP: min D subject to (1) to (7)

The optimal value of the MIP is clearly a lower bound on $T_{opt}$. Note that (2) ⇒ $D_s \le D$ and (3) ⇒ $D_1 \le D$. Also, since $t_{j'} \ge 0$, (5) gives $\frac{x_j}{s} + y_j \le t_j$. Summing these times along each chain then gives $C \le D$.
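The three quantities that Theorem 6 adds up, and the lower bound $\max(A, B)$ of Lemma 1, are easy to evaluate for a concrete instance. The Python sketch below does so. The function names `theorem6_bound` and `lower_bound` are hypothetical helpers of ours. The sketch assumes an integer fast speed s (so that exact `Fraction` arithmetic can be used), at least one fast and one slow processor ($1 \le m_s < m$), and tasks given as chains of task ids.

```python
from fractions import Fraction

def theorem6_bound(chains, speed_of, s, m_s, m):
    """Upper bound C + D_s + D_1 of Theorem 6 for one speed assignment (sketch).

    chains:   list of chains, each a list of task ids
    speed_of: dict task id -> assigned speed (s or 1); s assumed to be an integer
    Assumes 1 <= m_s < m, i.e. at least one fast and one slow processor.
    """
    n = sum(len(chain) for chain in chains)
    n_s = sum(1 for chain in chains for j in chain if speed_of[j] == s)
    d_s = Fraction(n_s, s * m_s)      # D_s: load of the fast processors
    d_1 = Fraction(n - n_s, m - m_s)  # D_1: load of the slow processors
    # C = max_i q_i, where q_i sums 1 / c(j) over the tasks of chain L_i
    c = max(sum(Fraction(1, speed_of[j]) for j in chain) for chain in chains)
    return c + d_s + d_1

def lower_bound(lengths, s, m_s, m):
    """max(A, B) of Lemma 1 for chain lengths l_i (sketch)."""
    l = sorted(lengths, reverse=True)               # l_1 >= l_2 >= ... >= l_r
    a = Fraction(sum(l), m_s * s + (m - m_s))       # A: total work / total speed
    speeds = [s] * m_s + [1] * (m - m_s)            # c(1) >= ... >= c(m)
    b = max(Fraction(sum(l[:j]), sum(speeds[:j]))   # B: j longest chains on j fastest processors
            for j in range(1, min(len(l), m) + 1))
    return max(a, b)

# Section 4 example: s = 3, one fast and two slow processors, lists 3, 3, 2, 2
chains = [[0, 1, 2], [3, 4, 5], [6, 7], [8, 9]]
speed_of = {j: (3 if j < 6 else 1) for j in range(10)}
print(lower_bound([3, 3, 2, 2], 3, 1, 3), theorem6_bound(chains, speed_of, 3, 1, 3))
```

Consider the s = 3 example of Section 4, with $L_1, L_2$ assigned to speed s and $L_3, L_4$ to speed 1. The sketch prints a lower bound of 2 and a Theorem 6 bound of 6. So here the bound is exactly three times $\max(A, B)$, even though the schedule shown in Section 4 for this assignment achieves makespan 2.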
So, if we could solve the MIP, Theorem 6 would give a schedule of makespan $T \le 3 \cdot T_{opt}$. Suppose we relax (7) as follows:
$$x_j, y_j \in [0, 1], \quad j = 1 \dots n \tag{8}$$
Consider the following linear program:

LP: min D subject to (1) to (6) and (8)

This LP can be solved in polynomial time. Its optimal solution $x_j, y_j, t_j$, $j = 1 \dots n$, gives an optimal D with $D \le T_{opt}$, because D is at most the best D of the MIP. We now use randomized rounding to obtain a speed assignment $A_1$: for every task j, $c(j) = s$ with probability $x_j$, and $c(j) = 1$ with probability $1 - x_j = y_j$.

Let $T_{A_1}$ be the makespan of $A_1$. Since $T_{A_1} \le C + D_s + D_1$, we have $E(T_{A_1}) \le E(C) + E(D_s) + E(D_1)$. But note that
$$E(n_s) = \sum_{j=1}^{n} x_j \quad \text{and} \quad E(n - n_s) = \sum_{j=1}^{n} y_j,$$
so $E(D_s), E(D_1) \le D$ by (2) and (3). Moreover, for each list $L_i$,
$$E\left(\sum_{j \in L_i} \frac{1}{c(j)}\right) = \sum_{j \in L_i} E\left(\frac{1}{c(j)}\right) = \sum_{j \in L_i} \left(\frac{1}{s} x_j + 1 \cdot y_j\right) \le D \quad \text{by (5), (6)},$$
i.e., $E(C) \le D$. So we get the following theorem:

Theorem 7. Our speed assignment $A_1$ gives a non-preemptive schedule of expected makespan at most $3 \cdot T_{opt}$.

Our MIP formulation also holds for general DAGs and 2 speeds when all tasks are of unit length. Theorem 6 (from [5]) and the lower bound of [4] also hold for general DAGs, so we get:

Corollary 2. For general DAGs of unit tasks, our speed assignment $A_1$ gives a non-preemptive schedule of expected makespan at most $3 \cdot T_{opt}$.

We continue with some special considerations for lists of tasks, that is, for DAGs decomposed into sets of lists. Since all tasks have unit processing time, $A_1$ can then be greedily improved as follows. Suppose the assignment experiment for the nodes of a list $L_i$ puts $l_i^1$ nodes on the fast processors and $l_i^2$ nodes on the slow processors. We then reassign the first $l_i^1$ nodes of $L_i$ to the fast processors and the remaining nodes of $L_i$ to the slow processors.
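The rounding step $A_1$ and the per-list greedy reordering just described can be sketched in Python as follows. The LP itself is not solved here. The dictionary `x` of fractional values $x_j$ is assumed to come from some LP solver, and `round_and_reorder` is a hypothetical name. The reordering changes only which positions of a list run fast, not how many. So the number of fast tasks per list, and hence $n_s$, has the same distribution as under $A_1$.

```python
import random

def round_and_reorder(chains, x, s, rng):
    """Randomized rounding A_1 followed by the greedy per-list reordering (sketch).

    chains: list of lists of task ids, each in precedence order
    x:      dict task id -> fractional LP value x_j in [0, 1] (assumed given)
    rng:    a random.Random instance, so runs are reproducible
    Returns a dict task id -> assigned speed (s or 1).
    """
    speed_of = {}
    for chain in chains:
        # A_1: each task independently goes to speed s with probability x_j
        fast_count = sum(1 for j in chain if rng.random() < x[j])
        # Reordering: the first fast_count tasks of the list run fast, the rest slow
        for pos, j in enumerate(chain):
            speed_of[j] = s if pos < fast_count else 1
    return speed_of

rng = random.Random(1)
chains = [list(range(0, 6)), list(range(6, 10))]
x = {j: 0.5 for j in range(10)}
assignment = round_and_reorder(chains, x, 3, rng)
print([[assignment[j] for j in chain] for chain in chains])
```

Whatever the random draws, each printed list shows a run of fast speeds (3) followed by a run of unit speeds.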
Clearly, this reassignment does not change any of the expectations of $D_s$, $D_1$ and $C$. Let $\tilde{A}_1$ be this modified (improved) schedule. Also, because all tasks are of equal length (unit processing time), any reordering of them within the same list will not change the optimal solution of LP. But then, for each list $L_i$ and for each task $j \in L_i$, $x_j$ is the same (call it $x_i$), and the same holds for $y_j$. Then the processing time of $L_i$ is just $\frac{f_i}{s} + (l_i - f_i)$, where $l_i$ is the length of $L_i$ and $f_i$ is distributed as the binomial $B(l_i, x_i)$.

In the sequel, let $l_i \ge \gamma \cdot n$ for all $i$, for some $\gamma \in (0, 1)$, and let $s \cdot m = o(n)$, namely $s \cdot m = n^{\epsilon}$ with $\epsilon < 1$. Then from $\tilde{A}_1$ we produce the speed assignment $\tilde{A}_2$ as follows:

foreach list $L_i$, $i = 1, \dots, r$ do
if $x_i < \frac{\log n}{n}$ then assign all the nodes of $L_i$ to unit speed
else for $L_i$, $\tilde{A}_2 = \tilde{A}_1$
end
end

Since the makespan $T_{\tilde{A}_1}$ of $\tilde{A}_1$ satisfies $E(T_{\tilde{A}_1}) = E(T_{A_1}) \le 3 \cdot T_{\mathrm{opt}}$, we get

$$E(T_{\tilde{A}_2}) \le 3 \cdot T_{\mathrm{opt}} + s \log n.$$

But

$$T_{\mathrm{opt}} \ge \frac{n}{s \cdot m_s + (m - m_s)} \ge \frac{n}{s m} = n^{1 - \epsilon}.$$

Thus $E(T_{\tilde{A}_2}) \le (3 + o(1))\, T_{\mathrm{opt}}$.

However, in $\tilde{A}_2$, the probability that $T_{\tilde{A}_2} > (1 + \beta)\, E(T_{\tilde{A}_2})$, where $\beta \in (0, 1)$ is a constant, is at most $\frac{1}{\gamma} \exp\big(-\frac{\beta^2}{2} \cdot l_i \cdot x_i\big)$ (by Chernoff bounds), i.e., at most $\frac{1}{\gamma} \big(\frac{1}{n}\big)^{\beta^2/2}$. This implies that it is enough to repeat the randomized assignment of speeds at most a polynomial number of times to get a schedule of actual makespan at most $(3 + o(1))\, T_{\mathrm{opt}}$. So, we get our next theorem:

Theorem 8. When each list has length $l_i \ge \gamma \cdot n$ (where $\gamma \in (0, 1)$) and $s \cdot m = n^{\epsilon}$ (where $\epsilon < 1$), we get a (deterministic) schedule of actual makespan at most $(3 + o(1))\, T_{\mathrm{opt}}$ in expected polynomial time.

5 Conclusions and Future work

Processor technology is undergoing a vigorous shake-up to enable low-cost multiprocessor platforms where individual processors have different computation capabilities. We examined the energy consumption of such asymmetric architectures.
We presented the preemptive algorithm "Save-Energy" that post-processes a schedule of tasks to reduce the energy usage without any deterioration of the makespan. Then we examined the time efficiency of such asymmetric architectures. We showed that preemptive scheduling in an asymmetric multiprocessor platform can achieve the same or better optimal makespan than in a symmetric multiprocessor platform. Motivated by real multiprocessor systems developed in [6, 10], we investigated the special case where the system is composed of a single fast processor and multiple slow processors. We say that these architectures have limited asymmetry. Interestingly, although the problem of scheduling has been studied extensively in the fields of parallel computing and scheduling theory, it was considered only for the general case where multiprocessor platforms have K distinct speeds. Our work attempts to bridge the assumptions in these fields and recent advances in multiprocessor systems technology. In our simple, yet realistic, model where K = 2, we presented the non-preemptive algorithm "Remnants" that achieves almost optimal makespan. We then generalized limited asymmetry to systems that have more than one fast processor while K = 2. We refined the scheduling policy of [5] and gave a randomized non-preemptive speed-based list scheduling of DAGs whose makespan $T$ has expectation $E(T) \le 3 \cdot OPT$. This improves the previous best factor (6 for two speeds). We then showed how to convert the schedule into a deterministic one (in polynomial expected time) in the case of long lists. Regarding future work, we wish to examine trade-offs between makespan and energy, and we also wish to investigate extensions of our model that allow other aspects of heterogeneity as well.

References

1. Susanne Albers, Fabian Müller, and Swen Schmelzer. Speed scaling on parallel processors. In SPAA '07: Proceedings of the nineteenth annual ACM symposium on Parallel algorithms and architectures, pages 289–298, New York, NY, USA, 2007. ACM.
2. Saisanthosh Balakrishnan, Ravi Rajwar, Michael Upton, and Konrad K. Lai. The impact of performance asymmetry in emerging multicore architectures. In 32nd International Symposium on Computer Architecture (ISCA), pages 506–517. IEEE Computer Society, 2005.
3. Michael A. Bender and Cynthia A. Phillips. Scheduling dags on asynchronous processors. In SPAA '07: Proceedings of the nineteenth annual ACM symposium on Parallel algorithms and architectures, pages 35–45, New York, NY, USA, 2007. ACM.
4. Chandra Chekuri and Michael Bender. An efficient approximation algorithm for minimizing makespan on uniformly related machines. J. Algorithms, 41(2):212–224, 2001.
5. Fabian A. Chudak and David B. Shmoys. Approximation algorithms for precedence-constrained scheduling problems on parallel machines that run at different speeds. Journal of Algorithms, 30:323–343, 1999.
6. Jianjun Guo, Kui Dai, and Zhiying Wang. A heterogeneous multi-core processor architecture for high performance computing. In Advances in Computer Systems Architecture (ACSA), pages 359–365, 2006. Lecture Notes in Computer Science (LNCS 4186).
7. Edward C. Horvath, Shui Lam, and Ravi Sethi. A level algorithm for preemptive scheduling. J. ACM, 24(1):32–43, 1977.
8. Jeffrey M. Jaffe. An analysis of preemptive multiprocessor job scheduling. Mathematics of Operations Research, 5(3):415–421, August 1980.
9. Jeffrey D. Ullman. NP-complete scheduling problems. Journal of Computer and System Sciences, 10:384–393, 1975.
10. Mark Weiser, Brent Welch, Alan Demers, and Scott Shenker. Scheduling for reduced CPU energy. In Mobile Computing, pages 449–471. The International Series in Engineering and Computer Science. Springer US, 1996.