Least angle and $\ell_1$ penalized regression: A review

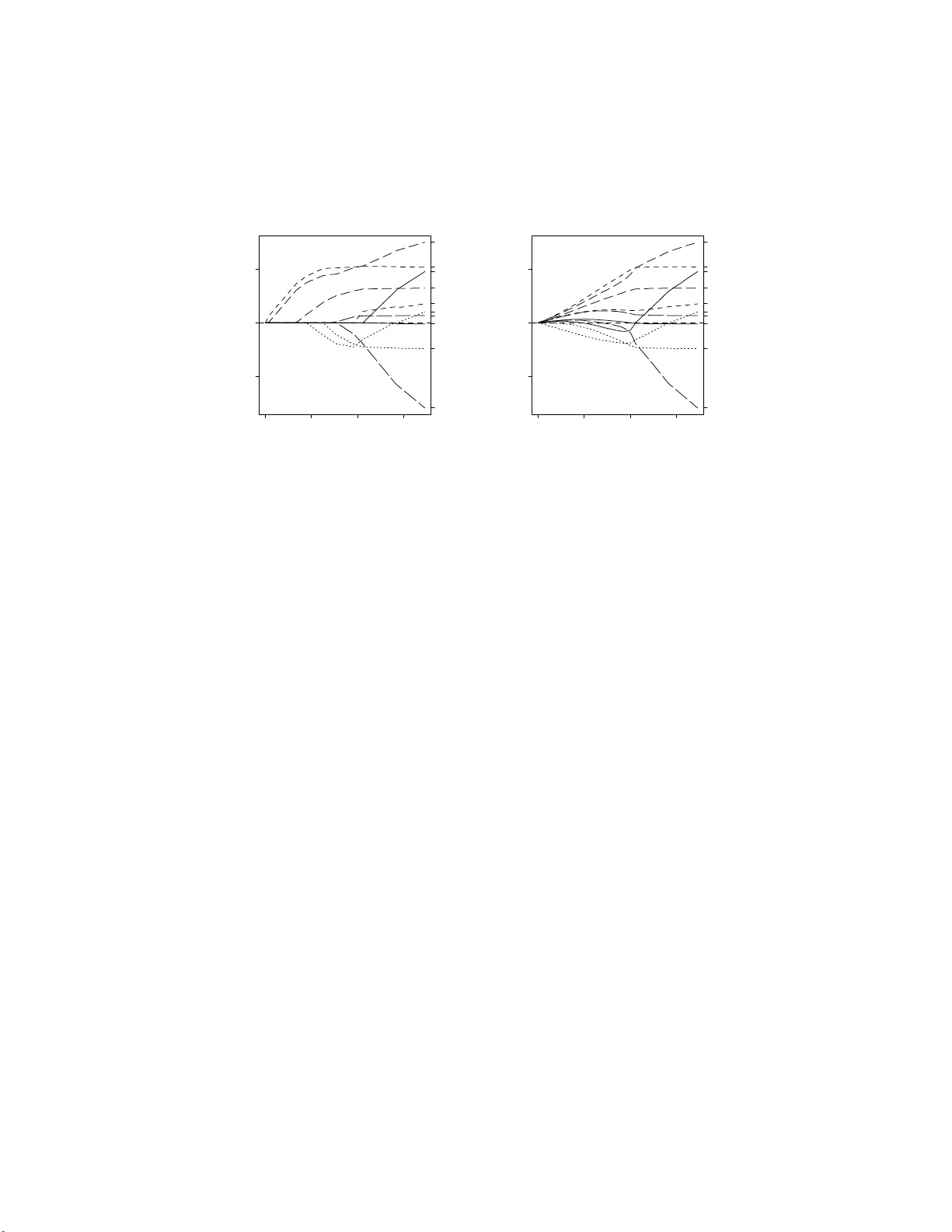

Least Angle Regression is a promising technique for variable selection applications, offering an attractive alternative to stepwise regression. It provides an explanation for the similar behavior of LASSO ($\ell_1$-penalized regression) and forward stagewis…
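To make the basic LAR step concrete, here is a minimal NumPy sketch, not the authors' code. It assumes that the predictor matrix `X` is centered with unit-norm columns, that the response `y` is centered, and that `X` has more rows than columns and full column rank. At each step the fit moves along an equiangular direction `u`, which keeps the absolute correlations of all active predictors equal, until an inactive predictor's correlation catches up. After `p` steps the fit reaches the ordinary least-squares solution. The function name `lar_path` is hypothetical. The lasso and forward-stagewise modifications of LAR are not implemented here.

```python
import numpy as np


def lar_path(X, y):
    """Plain Least Angle Regression (no lasso or stagewise modification).

    Assumes (hypothetical setup): X is centered with unit-norm columns,
    y is centered, n > p, and X has full column rank.
    Returns an array of shape (p + 1, p): the coefficients at each breakpoint,
    starting from the all-zero model.
    """
    n, p = X.shape
    beta = np.zeros(p)
    mu = np.zeros(n)                      # current fitted values X @ beta
    path = [beta.copy()]

    # The first active variable is the one most correlated with y.
    active = [int(np.argmax(np.abs(X.T @ y)))]

    for _ in range(p):
        c = X.T @ (y - mu)                # current correlations with the residual
        C = np.max(np.abs(c[active]))     # shared max |correlation| of active set
        s = np.sign(c[active])
        XA = X[:, active] * s             # sign-adjusted active columns
        ones = np.ones(len(active))
        Ginv1 = np.linalg.solve(XA.T @ XA, ones)
        AA = 1.0 / np.sqrt(ones @ Ginv1)
        w = AA * Ginv1
        u = XA @ w                        # unit equiangular direction
        a = X.T @ u                       # how fast each correlation changes

        inactive = [j for j in range(p) if j not in active]
        if not inactive:
            # Last step: drive all correlations to zero (least-squares fit).
            gamma, j_new = C / AA, None
        else:
            # Smallest positive step at which an inactive variable ties the active ones.
            gamma, j_new = np.inf, None
            for j in inactive:
                for cand in ((C - c[j]) / (AA - a[j]), (C + c[j]) / (AA + a[j])):
                    if 0 < cand < gamma:
                        gamma, j_new = cand, j

        mu = mu + gamma * u
        beta[active] = beta[active] + gamma * s * w
        path.append(beta.copy())
        if j_new is None:
            break
        active.append(j_new)

    return np.array(path)
```

For example, with standardized random data, `lar_path(X, y)[-1]` should agree with `np.linalg.lstsq(X, y, rcond=None)[0]` up to rounding, and each intermediate row adds one more nonzero coefficient. For real work, scikit-learn's `sklearn.linear_model.lars_path` provides both plain LAR (`method='lar'`) and the lasso variant (`method='lasso'`).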

Authors: Tim Hesterberg, Nam Hee Choi, Lukas Meier