Causal inference using the algorithmic Markov condition

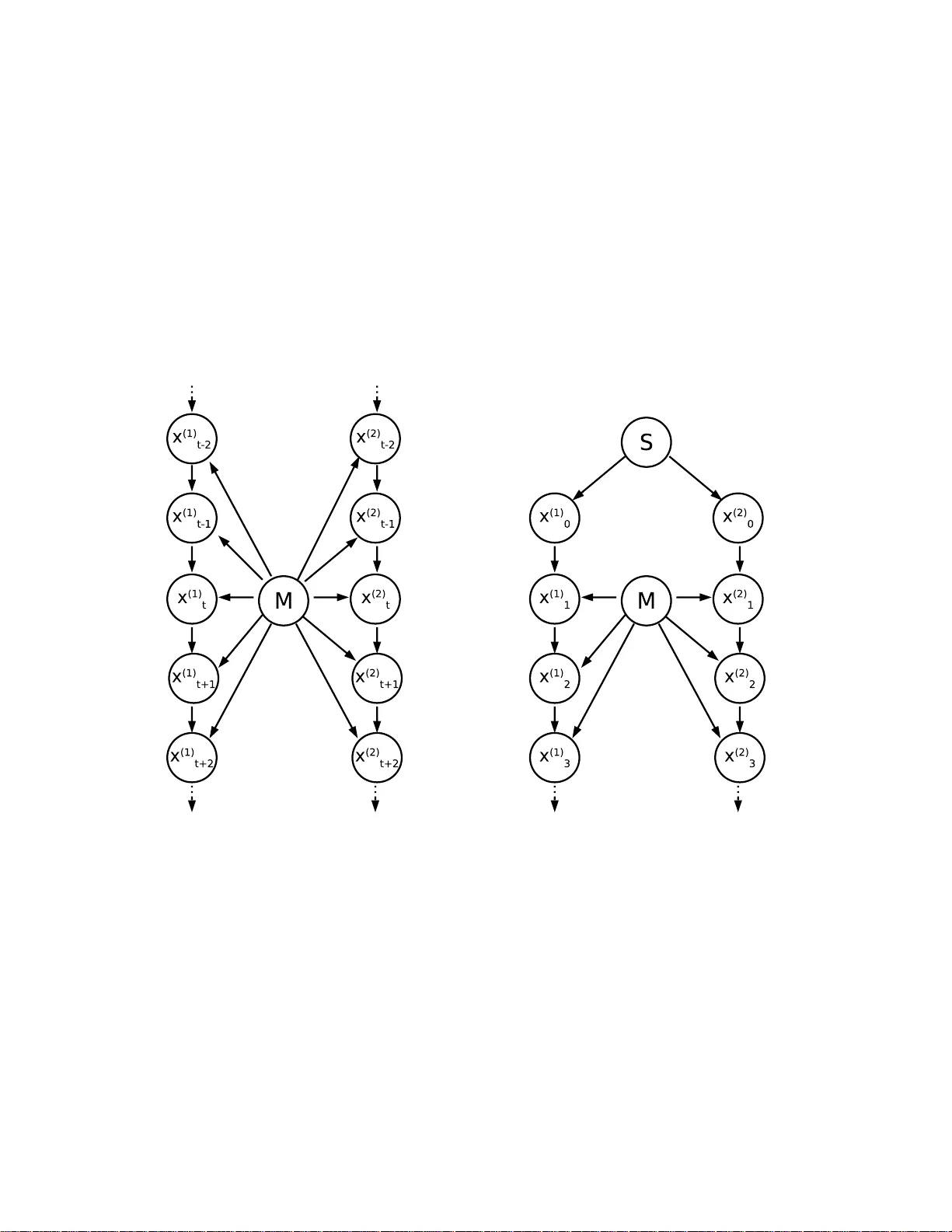

Inferring the causal structure that links n observables is usually based upon detecting statistical dependences and choosing simple graphs that make the joint measure Markovian. Here we argue why causal inference is also possible when only single obs…

Authors: Dominik Janzing, Bernhard Schoelkopf