Low-Complexity LDPC Codes with Near-Optimum Performance over the BEC

Recent works showed how low-density parity-check (LDPC) erasure correcting codes, under maximum likelihood (ML) decoding, are capable of tightly approaching the performance of an ideal maximum-distance-separable code on the binary erasure channel. Su…

Authors: Enrico Paolini, Gianluigi Liva, Michela Varrella

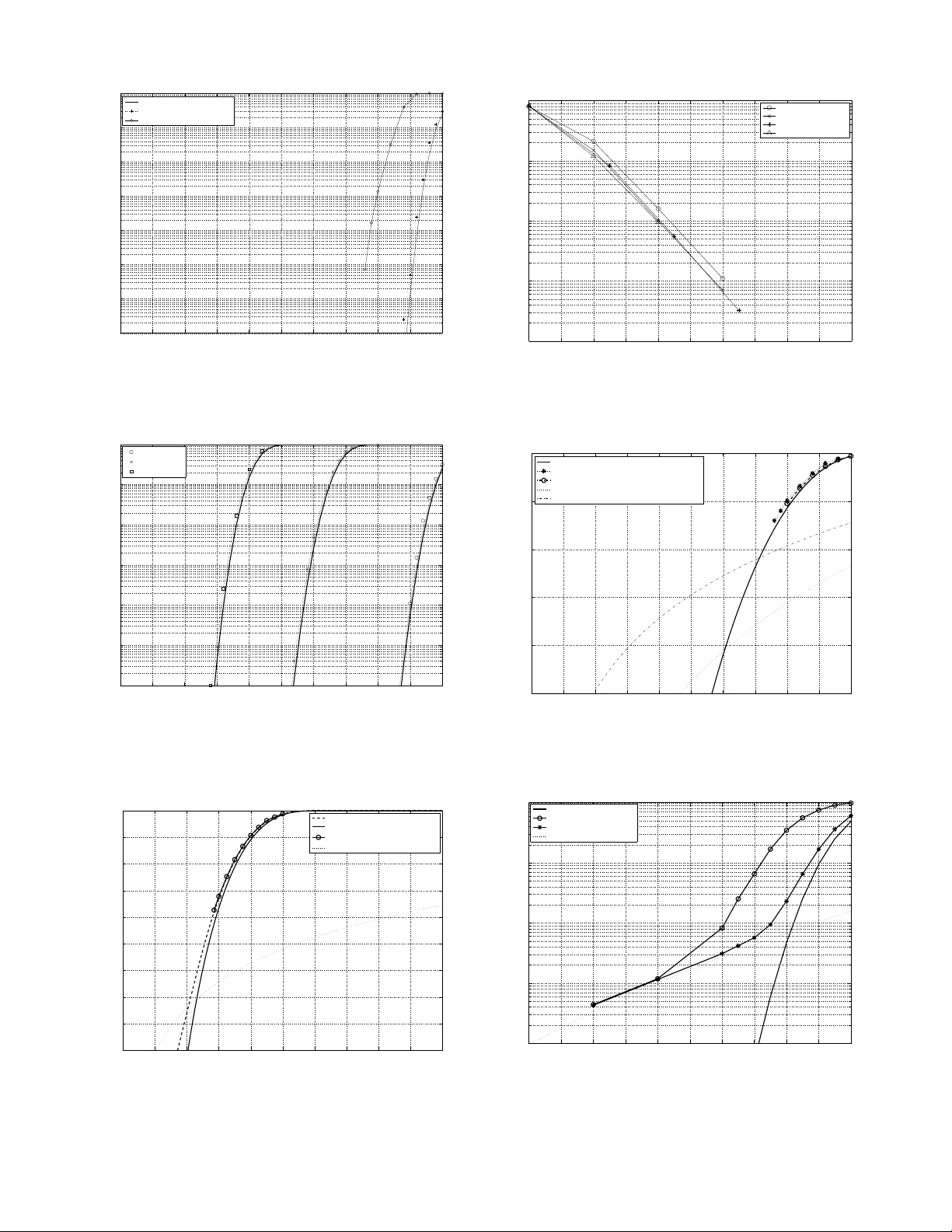

Enrico Paolini, Michela Varrella and Marco Chiani
DEIS, WiLAB, University of Bologna
via Venezia 52, 47023 Cesena (FC), Italy
{e.paolini,michela.varrella,marco.chiani}@unibo.it

Balazs Matuz and Gianluigi Liva
Institute of Communication and Navigation
Deutsches Zentrum für Luft- und Raumfahrt (DLR)
82234 Wessling, Germany
{Balazs.Matuz,Gianluigi.Liva}@dlr.de

Abstract: Recent works showed how low-density parity-check (LDPC) erasure correcting codes, under maximum likelihood (ML) decoding, are capable of tightly approaching the performance of an ideal maximum-distance-separable code on the binary erasure channel. Such a result is achievable down to low error rates, even for small and moderate block sizes, while keeping the decoding complexity low, thanks to a class of decoding algorithms which exploits the sparseness of the parity-check matrix to reduce the complexity of Gaussian elimination (GE). In this paper the main concepts underlying ML decoding of LDPC codes are recalled. A performance analysis among various LDPC code classes is then carried out, including a comparison with fixed-rate Raptor codes. The results show that LDPC and Raptor codes provide almost identical performance in terms of decoding failure probability vs. overhead.

Index Terms: LDPC codes, Raptor codes, binary erasure channel, maximum likelihood decoding, ideal codes, MBMS, packet-level coding.

I. INTRODUCTION

Low-density parity-check codes [1] exhibit extraordinary performance under iterative (IT) decoding over a wide range of communication channels. It was even proved that some classes of LDPC code ensembles can asymptotically approach, under IT decoding, the binary erasure channel (BEC) capacity with an arbitrarily small gap [2].
However, problems arise when using these asymptotically optimal constructions in conjunction with a finite-length $(n, k)$ LDPC code. In fact, the corresponding IT performance curve, though quite good at high error rates, exhibits a coding gain loss with respect to that of an ideal code matching the Singleton bound. Furthermore, at low error rates the performance curve deviates even more from the ideal behavior due to the error floor phenomenon caused, for IT decoding, by small-size stopping sets. In general, lowering the IT error floor implies a sacrifice in terms of coding gain with respect to the Singleton bound at high error rates.

It is well known that failures of the IT decoder over the BEC are due to stopping sets. Since there exist sets of variable nodes (VNs) representing stopping sets for the IT decoder, but not for the ML decoder, a decoding strategy consists in performing IT decoding and, upon an IT decoder failure, employing the ML decoder to try to resolve the residual stopping set. This hybrid decoder achieves the same performance as ML. The performance curve obtained after the ML step approaches the Singleton bound curve more closely than that relative to IT decoding, and down to lower error rates. In fact, the error floor under ML decoding only depends on the distance spectrum.

If the communication channel is a BEC, ML decoding is equivalent to solving the linear equation

$$\mathbf{x}_{\bar{K}} \mathbf{H}_{\bar{K}}^{T} = \mathbf{x}_{K} \mathbf{H}_{K}^{T}, \tag{1}$$

where $\mathbf{x}_{\bar{K}}$ ($\mathbf{x}_{K}$) denotes the set of erased (correctly received) encoded bits and $\mathbf{H}_{\bar{K}}$ ($\mathbf{H}_{K}$) the submatrix composed of the corresponding columns of the parity-check matrix $\mathbf{H}$. Then, ML decoding for the BEC can be implemented as a Gaussian elimination performed on the binary matrix $\mathbf{H}_{\bar{K}}^{T}$: its complexity is in general $O(n^3)$, where $n$ is the codeword length.
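As an illustration of (1), the following minimal sketch solves for the erased bits by plain (non-structured) Gauss-Jordan elimination over GF(2). The function name `ml_erasure_decode`, the list-of-lists matrix format and the small (7,4) Hamming parity-check matrix are assumptions made for this example; they are not taken from the paper or from [4].

```python
def ml_erasure_decode(H, y):
    """ML decoding over the BEC by Gaussian elimination over GF(2).

    H: parity-check matrix as a list of rows (lists of 0/1).
    y: received word, with None marking an erased position.
    Returns the decoded codeword, or None if the erased bits are not
    uniquely determined (ML decoding failure).
    """
    n = len(y)
    erased = [j for j in range(n) if y[j] is None]
    known = [j for j in range(n) if y[j] is not None]
    # Augmented system [H_erased | syndrome of the known bits], cf. Eq. (1).
    rows = []
    for h in H:
        rhs = 0
        for j in known:
            rhs ^= h[j] & y[j]
        rows.append([h[j] for j in erased] + [rhs])
    rank = 0
    for col in range(len(erased)):
        piv = next((r for r in range(rank, len(rows)) if rows[r][col]), None)
        if piv is None:
            return None  # dependent column: the erasure pattern is not resolvable
        rows[rank], rows[piv] = rows[piv], rows[rank]
        for r in range(len(rows)):
            if r != rank and rows[r][col]:
                rows[r] = [a ^ b for a, b in zip(rows[r], rows[rank])]
        rank += 1
    x = list(y)
    for i, j in enumerate(erased):
        x[j] = rows[i][-1]
    return x


# Toy (7,4) Hamming code, used only for illustration.
H = [[1, 1, 0, 1, 1, 0, 0],
     [1, 0, 1, 1, 0, 1, 0],
     [0, 1, 1, 1, 0, 0, 1]]
print(ml_erasure_decode(H, [None, None, 1, 1, 0, 1, 0]))
print(ml_erasure_decode(H, [None, 0, 1, 1, None, None, 0]))
```

In the second call the erased positions cover the support of a weight-3 codeword, so the corresponding columns are linearly dependent and the decoder reports a failure.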
For long block lengths ML decoding becomes impractical, so that IT decoding is preferred. For LDPC codes, it is indeed possible to take advantage of both the ML and the IT approach. To keep complexity low, a first decoding attempt is done in an iterative manner [3]. If not successful, the residual set of unknowns is processed by an ML decoder. Efficient ways of implementing ML decoders for LDPC codes can be found in [4], whose approach basically takes advantage of the sparse nature of the parity-check matrix of the code.

A thorough performance analysis of LDPC codes under reduced-complexity ML decoding is provided in this paper. We use a class of fixed-rate Raptor codes as a benchmark for our performance evaluations. These fixed-rate Raptor codes are obtained from the rate-less codes recommended for the Multimedia Broadcast Multicast Service (MBMS) by selecting a priori the codeword length $n$. Raptor codes are widely recognized as the state-of-the-art codes for the BEC, and they are currently under investigation for fixed-rate applications within the Digital Video Broadcasting (DVB) standards family [5]. As for LDPC codes, also for Raptor codes efficient ML decoders are available [6].

The outcomes presented in this paper are of interest for many different applications, such as those listed next.

• Wireless video/audio streaming. Link-layer coding is currently applied to the video streams in the framework of the DVB-H/SH standards. In such a context, erasure correcting codes take care of the fading mitigation, which is crucial especially in the case of mobile users, in challenging propagation environments (urban/suburban and land-mobile-satellite channels). Capacity-approaching performance is here highly desired in order to increase the service availability. Mobile applications require low-complexity decoders as well.
• File delivery in broadcasting/multicasting networks. Reliable file delivery in broadcasting/multicasting networks finds a near-optimal solution in erasure correcting codes. In such a scenario, reliability cannot be guaranteed by any automatic repeat request (ARQ) mechanism, due to the broadcast nature of the channel. Erasure correcting codes would limit (or avoid) the usage of packet retransmissions.

• File delivery in point-to-point communications. Also in point-to-point links, file delivery may require further protection at upper layers. This is true especially if retransmissions are impossible (due to the absence of a return channel or due to long round-trip delays).

• Deep space communications. Deep space communication has always been an ideal application field for error correcting codes. The Consultative Committee for Space Data Systems (CCSDS) is currently investigating the adoption of erasure correcting codes to further protect the telemetry down-link, especially for deep-space missions, which are not suitable for ARQ. In such a context, the possibility of processing the data off-line, together with the relatively low data rates (up to some Mbps), makes ML decoding of linear block codes a concrete solution, even in the absence of low-complexity decoders. A mandatory feature is instead represented by low-complexity encoder implementations.

The paper is organized as follows. In Section II reduced-complexity ML decoding of LDPC codes on the binary erasure channel is reviewed, including some insights on the code design for ML. In Section III a class of fixed-rate Raptor codes is introduced, together with a summary of their ML encoder/decoder implementations. Section IV provides simulation results for both LDPC and fixed-rate Raptor codes. Conclusions follow in Section V.

II. LDPC CODES AND ML DECODING

This section is organized in two parts.
First, the main concepts of the ML decoder of [4] will be explained. Second, new code designs for ML will be presented and evaluated with regard to complexity and performance.

A. Efficient maximum-likelihood decoding for LDPC codes over the erasure channel

The problem of GE over large sparse binary matrices has been widely investigated in the past. A common approach relies on structured GE with the purpose of converting the given system of sparse linear equations to a new smaller system that can be solved afterwards by brute-force GE [4], [7], [8]. Here, we provide a simplified overview of the approach presented in [4]. For the sake of clarity, let us apply column permutations to arrange the parity-check matrix $\mathbf{H}$ as in (1): the left part shall contain all the columns related to known variable nodes ($\mathbf{H}_{K}$), whereas the right part shall be made up of all the columns related to erased variable nodes ($\mathbf{H}_{\bar{K}}$). Thus, to solve for the unknowns, we proceed as follows:

• Perform diagonal extension steps on $\mathbf{H}_{\bar{K}}$. This results in the sub-matrices $\mathbf{B}$ and $\mathbf{P}$, the latter being in lower triangular form, and in columns that cannot be put in lower triangular form (columns of matrices $\mathbf{A}$ and $\mathbf{S}$). The variable nodes corresponding to these non-triangularizable columns build up the so-called pivots (see Figure 1(a)). Note that all remaining unknown variables can be obtained by linear combination of the pivots and of the known variables.

• Zero the matrix $\mathbf{B}$, whose elements can be expressed by the sum of the pivots (Figure 1(b)).

• Resolve the system by performing Gaussian elimination only on $\mathbf{A}'$ (Figure 1(c)). Out of the pivots, the unknown variables can be obtained quite easily due to the lower triangular structure of $\mathbf{P}$.

The main strength of this algorithm lies in the fact that GE is only performed on $\mathbf{A}'$ and not on the entire set of unknown variables.
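The role of the pivots can be sketched with a few lines of code. The function below is a strongly simplified illustration and not the algorithm of [4]: it works only on the structure of the equations (which unknowns appear in which check), resolves an unknown whenever an equation contains exactly one unresolved unknown, and otherwise declares a pivot. The pivot selection rule (the unknown involved in most equations) and the name `count_pivots` are assumptions made for this example.

```python
def count_pivots(rows, num_unknowns):
    """Return the list of unknowns declared as pivots.

    rows: iterable of sets; each set holds the indices of the erased
          variable nodes taking part in one parity-check equation.
    The number of pivots is the number of unknowns left to brute-force GE.
    """
    rows = [set(r) for r in rows]
    unresolved = set(range(num_unknowns))
    pivots = []
    while unresolved:
        # An equation with a single unresolved unknown extends the triangular part.
        row = next((r for r in rows if len(r) == 1), None)
        if row is not None:
            col = next(iter(row))
        else:
            # Stuck: declare as pivot the unknown involved in most equations
            # (hypothetical rule; ties are broken by the smallest index).
            col = max(sorted(unresolved), key=lambda c: sum(c in r for r in rows))
            pivots.append(col)
        unresolved.discard(col)
        for r in rows:
            r.discard(col)
    return pivots


# Four unknowns, four equations, no equation of degree one at the start.
print(count_pivots([{0, 1, 2}, {1, 2}, {0, 2}, {2, 3}], 4))
```

In this toy system a single pivot is enough: once it is treated as known, all other unknowns follow by back-substitution, so brute-force GE would act on one unknown instead of four. Whether the system is actually solvable is decided only by that final GE step, which this sketch does not perform.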
It is therefore of great interest to keep the dimensions of $\mathbf{A}'$ small. This can be obtained by sophisticated ways of choosing the pivots [4] and by a judicious code design [3]. Besides, to reduce the complexity further, the brute-force Gaussian elimination step on $\mathbf{A}'$ could be replaced by other algorithms. Note that the ML decoder for an $(n, k)$ LDPC code operates on a sparse matrix with at most $n - k$ columns and $n - k$ rows. The relevance of this consideration will become clearer after the description of the ML Raptor decoder [6] provided in Section III.

Fig. 1. ML decoder as in [4]. (a) Pivots selection within $\mathbf{H}_{\bar{K}}$. (b) Zeroing of matrix $\mathbf{B}$. (c) Gaussian elimination on $\mathbf{A}'$.

B. On the code design

The usual code design employed for LDPC codes over the BEC deals with the selection of proper degree distributions (or protographs) achieving high iterative decoding thresholds $\epsilon_{IT}$ (as close as possible to the limit given by $1 - R$). An $(n, k)$ LDPC code is then picked from the ensemble defined by the above-mentioned degree distributions. The selection may be performed following some girth optimization techniques. Such an iterative-decoding-based design criterion does not answer the need of finding good codes for ML decoding. Namely, a different figure shall be put in the focus of the degree distribution optimization. In our code design, the figure driving the optimization is the counterpart of $\epsilon_{IT}$ under ML decoding, i.e., the ML decoding threshold $\epsilon_{ML}$. A method for deriving a tight upper bound on the ML threshold for an LDPC ensemble can be found in [9]. The upper bound on $\epsilon_{ML}$ can be derived as follows.

• Consider an $(n, k)$ LDPC code and its corresponding IT decoder. The extrinsic information transfer (EXIT) curve of the code (under IT decoding) can be derived in terms of extrinsic erasure probability at the output of the decoder ($p_E$) as a function of the a priori erasure probability (input of the decoder, $p_A$). For $n \to +\infty$, the EXIT curve of the ensemble defined by $\lambda(x)$ and $\rho(x)$ is a function of the degree distributions, and can be obtained in parametric form as

$$p_A = \frac{x}{\lambda(1 - \rho(1 - x))} \tag{2}$$

$$p_E = \Lambda(1 - \rho(1 - x)) \tag{3}$$

with $x \in [x_{BP}, 1]$, $x_{BP}$ being the value of $x$ for which $p_A = \epsilon_{BP}$, and $\Lambda(x) = \sum_i \Lambda_i x^i$, $\Lambda_i$ being the fraction of variable nodes with degree $i$. EXIT functions of regular LDPC ensembles are displayed in Figure 2 (dashed lines).

• Due to the Area Theorem [10], the area below the EXIT function of the code, under ML decoding, must equal the code rate $R$. Note that the EXIT function defined by (2), (3) is IT-decoder-based. Hence, the area below the EXIT curve might be larger than the code rate.

• Consider the extrinsic erasure probability at the output of an ML and of an IT decoder. Obviously, $p_E^{ML} \leq p_E^{IT}$.

• Therefore, by drawing a vertical line on the EXIT function plot of the ensemble, in correspondence with $p_A = p_A^*$, such that

$$\int_{p_A^*}^{1} p_E(p_A)\, dp_A = R,$$

we obtain an upper bound on the ML threshold, i.e., $\epsilon_{ML} \leq p_A^*$. For regular LDPC ensembles, see the example in Figure 2.

Fig. 2. EXIT functions ($p_E$ vs. $p_A$) for the (3,6) and the (5,10) regular LDPC ensembles. Dashed lines represent the iterative decoder EXIT function. Solid lines are placed in correspondence of the ML threshold upper bounds, where the area equals $R$. Values reported in the plots: (3,6) ensemble, $\epsilon_{IT} = 0.42944$, $\epsilon_{ML} = 0.48811$; (5,10) ensemble, $\epsilon_{IT} = 0.34155$, $\epsilon_{ML} = 0.49944$.

In [9] it was shown that this bound is extremely tight for regular LDPC ensembles, and for ensembles whose IT EXIT curve presents one jump (for further details, see [9]).
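The area construction can be checked numerically for a regular $(d_v, d_c)$ ensemble, for which $\lambda(x) = x^{d_v - 1}$, $\rho(x) = x^{d_c - 1}$ and $\Lambda(x) = x^{d_v}$. The sketch below samples the parametric curve (2), (3), takes the minimum of $p_A$ as the IT threshold, and integrates from $p_A = 1$ leftwards with the trapezoidal rule until the area reaches $R = 1 - d_v/d_c$. The function name, the uniform grid in $x$ and the number of steps are choices made for this example, not part of [9].

```python
def regular_exit_thresholds(dv, dc, steps=100_000):
    """Return (eps_it, p_star) for the regular (dv, dc) LDPC ensemble.

    eps_it is the minimum of p_A(x) along the parametric curve (2);
    p_star is the point where the area below the IT EXIT curve, measured
    from p_A = 1 leftwards, reaches the design rate R = 1 - dv/dc.
    """
    rate = 1.0 - dv / dc

    def p_a(x):  # Eq. (2) with lambda(x) = x^(dv-1), rho(x) = x^(dc-1)
        return x / (1.0 - (1.0 - x) ** (dc - 1)) ** (dv - 1)

    def p_e(x):  # Eq. (3) with Lambda(x) = x^dv
        return (1.0 - (1.0 - x) ** (dc - 1)) ** dv

    xs = [i / steps for i in range(1, steps + 1)]
    i_bp = min(range(len(xs)), key=lambda i: p_a(xs[i]))
    eps_it = p_a(xs[i_bp])

    area = 0.0
    p_star = eps_it
    for i in range(len(xs) - 1, i_bp, -1):  # from x = 1 down to x_BP
        x_hi, x_lo = xs[i], xs[i - 1]
        area += 0.5 * (p_e(x_hi) + p_e(x_lo)) * (p_a(x_hi) - p_a(x_lo))
        if area >= rate:
            p_star = p_a(x_lo)
            break
    return eps_it, p_star


eps_it, p_star = regular_exit_thresholds(3, 6)
print(f"(3,6): eps_IT ~ {eps_it:.4f}, ML threshold upper bound ~ {p_star:.4f}")
```

For the (3,6) ensemble the two values should agree, up to discretization error, with the thresholds reported for Figure 2.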
Slightly different (but still rather simple) techniques to obtain tight bounds are applicable also in the other cases [9]. Extensions of the above-mentioned techniques can be applied to other code ensembles, once the IT EXIT curve is provided. For protograph LDPC ensembles [11], a rather simple approach would then be the application of the protograph EXIT analysis of [12] to obtain the IT EXIT curve for a given protograph ensemble. The upper bound on the maximum-likelihood threshold can then be obtained as for conventional $(\lambda, \rho)$ ensembles. An example of the IT EXIT curve for an accumulate-repeat-accumulate (ARA) ensemble [13] is provided in Figure 3, as well as the derivation of the related ML threshold upper bound. Proofs of the tightness of the bound for protograph ensembles are currently missing and are not in the scope of this paper.

Fig. 3. EXIT function ($p_E$ vs. $p_A$) for the accumulate-repeat-accumulate ensemble (protograph AR3A ensemble). Dashed lines represent the iterative decoder EXIT function. Solid lines are placed in correspondence of the ML threshold upper bounds, where the area equals $R$. Values reported in the plot: $\epsilon_{IT} = 0.477$, $\epsilon_{ML} = 0.496$.

For the regular ensembles, the improvement given by the ML decoder is usually large (see Table I).

A rule of thumb for the design of capacity-approaching LDPC codes under ML consists in the selection of sufficiently dense parity-check matrices, by keeping for instance a relatively large average check node degree. To give an idea, we found that for rate-1/2 LDPC ensembles an average check node degree $d_c \geq 9$ is sufficient to provide ML thresholds close to the Shannon limit [3]. This heuristic rule seems to work for both regular and irregular ensembles.

TABLE I. ML and IT decoding thresholds for regular LDPC ensembles vs. the Shannon limit, $\epsilon_{Sh}$.

| Ensemble | $\epsilon_{ML}$ | $\epsilon_{IT}$ | $\epsilon_{Sh}$ |
|----------|--------|--------|--------|
| (3,6)    | 0.4881 | 0.4294 | 0.5000 |
| (4,8)    | 0.4977 | 0.3834 | 0.5000 |
| (5,10)   | 0.4994 | 0.3416 | 0.5000 |
| (6,12)   | 0.4999 | 0.3075 | 0.5000 |
| (3,9)    | 0.3196 | 0.2828 | 0.3333 |
| (4,12)   | 0.3302 | 0.2571 | 0.3333 |
| (5,15)   | 0.3324 | 0.2303 | 0.3333 |

C. GeIRA codes with low-complexity ML decoder

In [3], it is shown that good iterative decoding thresholds are indeed highly desirable for ML decoding, since they allow reducing the decoder complexity. More specifically, in [3] some simple design rules are provided, leading to codes with good IT thresholds, near-Shannon-limit ML thresholds and low error floors, with simple (turbo-code-like) encoders [14]. The proposed code design leads to a class of generalized irregular repeat accumulate (GeIRA) codes tailor-made for efficient ML decoding. In Section IV, numerical results on GeIRA codes with different coding rates/block lengths will be provided.

III. FIXED-RATE RAPTOR CODES

Raptor codes were introduced by Shokrollahi in [15]. They are an instance of the concept of fountain code$^1$ [16] and, thanks to the large degrees of freedom in parameter choice, they can be applied to several systems, increasing their reliability. Recently, a fully specified version of Raptor codes has been approved as a means to efficiently disseminate data over a broadcast network [6, Annex B]. An $(n, k)$ fixed-rate Raptor code can be obtained by limiting to $n$ the amount of symbols produced by the Raptor encoder. Fixed-rate Raptor codes derived from the MBMS standard [6, Annex B] are currently under investigation for the multi-protocol encapsulation (MPE) level protection within the DVB standards family [5].

In the following, we first provide a description of the Raptor codes specified in [6, Annex B], including some insights on their encoding and decoding algorithms. The Raptor code can be viewed as the concatenation of several codes.
For example, the Raptor encoder specified in [6] is depicted in Fig. 4. The innermost code is a non-systematic Luby-transform (LT) code [17] with $L$ input symbols $\mathbf{F}$, producing the encoded symbols $\mathbf{E}$. The symbols $\mathbf{F}$ are known as intermediate symbols, and are generated through a pre-coding, made up of some outer high-rate block coding, effected on the $k$ symbols $\mathbf{D}$. The $s$ intermediate symbols $\mathbf{D}_s$ are known as LDPC symbols, while the $h$ intermediate symbols $\mathbf{D}_h$ are known as half symbols. The combination of pre-code and LT code produces a non-systematic Raptor code. The parameters $s$ and $h$ are functions of $k$, according to [6]. Some pre-processing is to be put before the non-systematic Raptor encoding to obtain a systematic one. Such a pre-processing consists in a rate-1 linear code generating the $k$ symbols $\mathbf{D}$ from the $k$ information symbols $\mathbf{C}$.

Footnote 1: Commonly, the expression "fountain code" is used to refer to a code which can produce on-the-fly any desired number of encoded symbols from $k$ information symbols.

Fig. 4. Block diagram of the systematic Raptor encoder specified in [6].

LT codes are the first practical implementation of fountain codes. A unique encoded symbol ID (ESI) is assigned to each encoded symbol. Starting from an ESI $i$, the encoded symbol $E_i$ is computed by xor-ing a subset $\Theta_i$ of $d_i$ intermediate symbols. The number $d_i$, known as the degree associated with the encoded symbol $E_i$, is a random integer between 1 and $L$: the $d_i$ intermediate symbols are chosen at random according to a specific probability distribution. As a consequence, in order to recover the information symbols the decoder needs both the set of encoded symbols $E_i$ and the corresponding sets $\Theta_i$.
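The LT encoding rule can be sketched as follows. This is an illustration only: the pseudo-random generator (Python's `random.Random` seeded with the ESI), the toy degree distribution and the uniform choice of the neighbours are assumptions made for the example and do not reproduce the generators specified in [6].

```python
import random


def lt_encode_symbol(esi, intermediate, degree_dist):
    """Return (E_i, Theta_i) for the encoded symbol with identifier `esi`.

    intermediate: list of L intermediate symbols, each stored as an int
                  (a packet seen as a bit string), so that ^ is the xor.
    degree_dist:  list of (degree, probability) pairs (hypothetical values).
    """
    rng = random.Random(esi)  # the same seed gives the same Theta_i at the decoder
    L = len(intermediate)
    degrees, weights = zip(*degree_dist)
    d = min(rng.choices(degrees, weights=weights)[0], L)
    theta = sorted(rng.sample(range(L), d))  # d distinct intermediate symbols
    value = 0
    for j in theta:
        value ^= intermediate[j]
    return value, theta


F = [0x3A, 0x5C, 0x11, 0xF0, 0x0F, 0x77]             # toy intermediate symbols
dist = [(1, 0.1), (2, 0.5), (3, 0.2), (4, 0.2)]      # toy degree distribution
for esi in range(3):
    print(esi, lt_encode_symbol(esi, F, dist))
```

Because the neighbour set is a deterministic function of the ESI, a decoder that knows the ESI and runs the same generator can rebuild $\Theta_i$ without receiving it explicitly.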
The sets $\Theta_i$ can either be explicitly transmitted or obtained by the decoder through the same pseudo-random generator used for the encoding, starting from the ESIs, which therefore have to be sent together with the corresponding encoded symbols. Some of the main properties of LT codes are that the encoder can generate as many encoded symbols as desired and that the decoder is able to recover the block of source symbols from any set of received encoded symbols whose number is only slightly greater than that of the source symbols (i.e., the code has a low overhead). A Raptor code, whose core consists of an LT code, inherits such properties. The addition of a pre-coding phase is used to obtain an encoding/decoding complexity linear in $k$, a feature which is missing in the mere LT code.

A. Fixed-rate Raptor generator matrix

Considering a systematic Raptor code as a finite-length $(n, k)$ linear block code (fixed-rate Raptor code), we can ask what the structure of its generator matrix is. This problem is addressed next for the Raptor code specified in [6]$^2$. The generator matrix of the first pre-coding stage is given by $[\mathbf{I}_k \,|\, \mathbf{G}_{LDPC}^T]^T$. According to the specifications in [6], $\mathbf{G}_{LDPC}$ consists of columns all of weight equal to 3, regardless of the value of $k$. On the other hand, the generator matrix of the second pre-coding stage is given by $[\mathbf{I}_{s+k} \,|\, \mathbf{G}_{H}^T]^T$, where $\mathbf{G}_{H}$ is an $(h \times (s + k))$ matrix consisting of columns all of constant weight: each column is an element of the Gray sequence of weight $h'$, where $h' = \lceil h/2 \rceil$. Finally, let us denote by $\mathbf{G}_{LT}$ the $(n \times L)$ LT code generator matrix (regarded as a finite-length $n$ linear block code).

Footnote 2: Throughout this section, the vectors are intended as column vectors (unless explicitly mentioned) and the generator matrix of an $(n, k)$ linear block code is expressed as an $(n \times k)$ matrix.
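It is built in such a way that the row of index $i$ has $d_i$ ones in the positions given by $\Theta_i$, where $d_i$ and $\Theta_i$ are derived from the ESI $i$ through the pseudo-random algorithms described in [6]. Next, we use the notation $\mathbf{G}_{LT}(i_1, i_2, \ldots, i_r)$ to denote the $(r \times L)$ submatrix of $\mathbf{G}_{LT}$ composed of the rows with indexes $(i_1, i_2, \ldots, i_r)$. The notation $\mathbf{G}_{LT}$ is equivalent to $\mathbf{G}_{LT}(1, \ldots, n)$.

The $L = k + s + h$ intermediate symbols $\mathbf{F}$ are obtained from $\mathbf{D}$ as

$$\mathbf{F} = \begin{bmatrix} \mathbf{D} \\ \mathbf{D}_s \\ \mathbf{D}_h \end{bmatrix}$$

through the relations

$$\mathbf{D}_s = \mathbf{G}_{LDPC} \cdot \mathbf{D} \tag{4}$$

$$\mathbf{D}_h = \mathbf{G}_{H} \cdot \begin{bmatrix} \mathbf{D} \\ \mathbf{D}_s \end{bmatrix}. \tag{5}$$

The intermediate symbols $\mathbf{F}$ are the inputs to the LT encoder for deriving the $n$ encoded symbols $\mathbf{E}$ as

$$\mathbf{E} = \mathbf{G}_{LT} \cdot \mathbf{F}. \tag{6}$$

Let us subdivide $\mathbf{G}_{LT}$ as

$$\mathbf{G}_{LT} = \left[ \mathbf{G}_{LT}^{I} \,|\, \mathbf{G}_{LT}^{II} \,|\, \mathbf{G}_{LT}^{III} \right],$$

where the sizes of the three submatrices are $(n \times k)$, $(n \times s)$ and $(n \times h)$, respectively. If also $\mathbf{G}_{H}$ is subdivided as

$$\mathbf{G}_{H} = \left[ \mathbf{G}_{H}^{I} \,|\, \mathbf{G}_{H}^{II} \right],$$

that is, into two submatrices whose sizes are $(h \times k)$ and $(h \times s)$, respectively, then the non-systematic Raptor code generator matrix can be expressed as

$$\mathbf{G}_{R,n\text{-}sys} = \mathbf{G}_{LT}^{I} + \mathbf{G}_{LT}^{II} \cdot \mathbf{G}_{LDPC} + \mathbf{G}_{LT}^{III} \left( \mathbf{G}_{H}^{I} + \mathbf{G}_{H}^{II} \cdot \mathbf{G}_{LDPC} \right),$$

which satisfies the relation $\mathbf{E} = \mathbf{G}_{R,n\text{-}sys} \cdot \mathbf{D}$. Let us now subdivide $\mathbf{G}_{R,n\text{-}sys}$ into the two submatrices $\mathbf{G}_{R,n\text{-}sys}^{I}$ and $\mathbf{G}_{R,n\text{-}sys}^{II}$, whose sizes are $(k \times k)$ and $((n - k) \times k)$, respectively:

$$\mathbf{G}_{R,n\text{-}sys} = \begin{bmatrix} \mathbf{G}_{R,n\text{-}sys}^{I} \\ \mathbf{G}_{R,n\text{-}sys}^{II} \end{bmatrix}.$$

For a systematic code the following must hold:

$$E_i \equiv C_i \quad \forall\, i = 1, \ldots, k,$$

and therefore

$$\begin{bmatrix} \mathbf{G}_{R,n\text{-}sys}^{I} \\ \mathbf{G}_{R,n\text{-}sys}^{II} \end{bmatrix} \cdot \mathbf{D} = \begin{bmatrix} \mathbf{E}_{[1,\ldots,k]} \\ \mathbf{E}_{[k+1,\ldots,n]} \end{bmatrix} \tag{7}$$

$$= \begin{bmatrix} \mathbf{C} \\ \mathbf{E}_{[k+1,\ldots,n]} \end{bmatrix}. \tag{8}$$

We have introduced in (7) the notations $\mathbf{E}_{[1,\ldots,k]}$ and $\mathbf{E}_{[k+1,\ldots,n]}$ to denote the first $k$ and the last $n - k$ encoded symbols, respectively.

Fig. 5. Structure of the encoding matrix $\mathbf{A}$ for an $(n, k)$ Raptor code specified in [6] ($L = k + s + h$). Blocks shown: $\mathbf{G}_{LDPC}$, $\mathbf{I}_s$, $\mathbf{Z}$, $\mathbf{G}_{H}$, $\mathbf{I}_h$, $\mathbf{G}_{LT}(1, \ldots, n)$.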
From (7) and (8), the pre-processing matrix generating $\mathbf{D}$ from $\mathbf{C}$ can be obtained as

$$\mathbf{G}_{T}^{-1} = \left( \mathbf{G}_{R,n\text{-}sys}^{I} \right)^{-1}$$

and, as a consequence, since $\mathbf{E} = \mathbf{G}_{R,n\text{-}sys} \cdot \mathbf{G}_{T}^{-1} \cdot \mathbf{C}$, the systematic Raptor code generator matrix is

$$\mathbf{G}_{R,sys} = \begin{bmatrix} \mathbf{I}_k \\ \mathbf{G}_{R,n\text{-}sys}^{II} \cdot \mathbf{G}_{T}^{-1} \end{bmatrix}. \tag{9}$$

In (9), $\mathbf{I}_k$ denotes the $(k \times k)$ identity matrix. $\mathbf{G}_{R,n\text{-}sys}^{I}$ can be inverted if and only if it has full rank $k$. By initializing the random generator of the inner LT code through the so-called systematic index (defined in [6]), this property is fulfilled for all $k = 4, \ldots, 8192$.

B. Raptor Encoding

The relations (4), (5) and (6) can conveniently be represented as

$$\mathbf{A} \cdot \mathbf{F} = \begin{bmatrix} \mathbf{0} \\ \mathbf{E}_{[1,\ldots,n]} \end{bmatrix},$$

where $\mathbf{A}$ is an $((s + h + n) \times (s + h + k))$ binary matrix called encoding matrix, whose structure is shown in Fig. 5. Explicitly, (4)-(6) give

$$\mathbf{A} = \begin{bmatrix} \mathbf{G}_{LDPC} \;\; \mathbf{I}_s \;\; \mathbf{Z} \\ \mathbf{G}_{H} \;\; \mathbf{I}_h \\ \mathbf{G}_{LT}(1, \ldots, n) \end{bmatrix},$$

where $\mathbf{I}_s$ is the $(s \times s)$ identity matrix, $\mathbf{I}_h$ is the $(h \times h)$ identity matrix and $\mathbf{Z}$ is the $(s \times h)$ all-zero matrix. The matrix $\mathbf{A}$ is not the Raptor code generator matrix (which is defined in (9) instead), but it includes the set of constraints imposed by the pre-coding and the LT coding together. We use next the notation $\mathbf{A}(i_1, i_2, \ldots, i_r)$ to indicate the $((s + h + r) \times L)$ submatrix of $\mathbf{A}$ obtained by selecting only the rows of $\mathbf{G}_{LT}$ with indexes $(i_1, i_2, \ldots, i_r)$. Again, $\mathbf{A}$ is equivalent to $\mathbf{A}(1, \ldots, n)$.

A possible Raptor encoding algorithm exploits a submatrix of $\mathbf{A}$. Such a matrix, consisting of the first $L$ rows of $\mathbf{A}$, is used to obtain $\mathbf{F}$ by solving the system of linear equations

$$\mathbf{A}(1, \ldots, k) \cdot \mathbf{F} = \begin{bmatrix} \mathbf{0} \\ \mathbf{C} \end{bmatrix}.$$

At this point it is sufficient to multiply $\mathbf{F}$ by the LT generator matrix to produce the encoded symbols $\mathbf{E}$, according to (6).

C. Raptor Decoding

The most direct way to decode the received sequence lies in inverting each encoding step of Fig. 4; in this case one works on the individual sub-codes.
When using ML decoding at each sub-code, such a method requires the inversion of a matrix for each code, so it does not appear to be the best solution from the computational viewpoint [18]. Moreover, if the number of received encoded symbols is not sufficiently larger (which in many cases may mean much larger) than the number of source symbols $k$, it shows a high failure probability. For example, let us assume that only a subset of encoded symbols, with ESIs $(i_1, i_2, \ldots, i_r)$, is available at the decoder. The first step the decoder should perform is to solve the system of linear equations

$$\mathbf{G}_{LT}(i_1, i_2, \ldots, i_r) \cdot \mathbf{F} = \mathbf{E}_{[i_1, i_2, \ldots, i_r]}.$$

The matrix $\mathbf{G}_{LT}(i_1, i_2, \ldots, i_r)$ has size $(r \times L)$ and the necessary condition to solve the system is that $r \geq L$. If such a condition is not fulfilled, the decoding fails. It means that to recover the source symbols the decoder requires at least $L$ encoded symbols (recall that $L = k + s + h$).

Such a method does not exploit the fact that the $L$ intermediate symbols are not independent of each other, but subject to the pre-coding constraints. Therefore, obtaining the intermediate symbols $\mathbf{F}$ by using a submatrix of $\mathbf{A}$ (which takes such constraints into account) turns out to be a far more efficient solution. According to the above-mentioned assumption, the first decoding step turns into

$$\mathbf{A}(i_1, i_2, \ldots, i_r) \cdot \mathbf{F} = \begin{bmatrix} \mathbf{0} \\ \mathbf{E}_{[i_1, i_2, \ldots, i_r]} \end{bmatrix},$$

where $\mathbf{A}(i_1, i_2, \ldots, i_r)$ is an $((s + h + r) \times L)$ matrix, as defined above. The system can be solved by Gaussian elimination (ML decoding) only if $s + h + r \geq L$, that is $r \geq k$ (note that this is a necessary condition for successful ML decoding, not a sufficient one). In this way the number of encoded symbols required at the decoder is definitely lower compared to that in the previous case and, notably, is close to the number of source symbols $k$.
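The difference between the two decoding strategies can be seen on a toy example. The sketch below builds the constraint matrix for a hypothetical pre-code with $k = 2$, $s = 1$, $h = 1$ (so $L = 4$) and two received LT symbols; all matrices are made up for illustration and are unrelated to the ones specified in [6]. The dimensions follow from (4) and (5): $\mathbf{G}_{LDPC}$ is $(s \times k)$ and $\mathbf{G}_{H}$ is $(h \times (s + k))$.

```python
def solve_gf2(A, b):
    """Solve A x = b over GF(2) by Gauss-Jordan elimination.

    Returns x, or None if A does not have full column rank. Consistency of
    redundant equations is not checked, since on the BEC the received
    symbols are never wrong.
    """
    rows = [list(r) + [v] for r, v in zip(A, b)]
    ncols = len(A[0])
    rank = 0
    for col in range(ncols):
        piv = next((r for r in range(rank, len(rows)) if rows[r][col]), None)
        if piv is None:
            return None
        rows[rank], rows[piv] = rows[piv], rows[rank]
        for r in range(len(rows)):
            if r != rank and rows[r][col]:
                rows[r] = [x ^ y for x, y in zip(rows[r], rows[rank])]
        rank += 1
    return [rows[i][-1] for i in range(ncols)]


def constraint_matrix(G_ldpc, G_h, lt_rows):
    """Stack [G_LDPC | I_s | Z], [G_H | I_h] and the received rows of G_LT."""
    s, h = len(G_ldpc), len(G_h)
    top = [g + [int(i == j) for j in range(s)] + [0] * h
           for i, g in enumerate(G_ldpc)]
    mid = [g + [int(i == j) for j in range(h)] for i, g in enumerate(G_h)]
    return top + mid + [list(r) for r in lt_rows]


# Hypothetical toy pre-code: D_s = D_0 + D_1, D_h = D_0 + D_s.
G_ldpc = [[1, 1]]
G_h = [[1, 0, 1]]
F_true = [1, 0, 1, 0]                      # [D_0, D_1, D_s, D_h]
lt_rows = [[1, 0, 0, 1], [0, 1, 1, 0]]     # r = 2 = k received LT equations
E = [sum(a & f for a, f in zip(row, F_true)) % 2 for row in lt_rows]

print(solve_gf2(lt_rows, E))                                   # LT rows alone
A = constraint_matrix(G_ldpc, G_h, lt_rows)
print(solve_gf2(A, [0] * (len(G_ldpc) + len(G_h)) + E))        # with constraints
```

With the LT equations alone the system has $r = 2 < L = 4$ rows and cannot be solved, whereas adding the $s + h$ pre-coding constraints makes the same two received symbols sufficient in this example.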
Once $\mathbf{F}$ is known, the source symbols $\mathbf{C}$ are easily recovered by

$$\mathbf{C} = \mathbf{G}_{LT}(1, \ldots, k) \cdot \mathbf{F}.$$

To sum up, when the described encoding and decoding algorithms are employed, both the encoding and the decoding are performed by making use of operations which are analogous in the two cases (Fig. 6).

D. Some remarks on the decoding complexity of LDPC and fixed-rate Raptor codes

If we take into consideration the first decoding step, an algorithm to perform GE in a more efficient way on $\mathbf{A}(i_1, i_2, \ldots, i_r)$ has been proposed in [6, Annex E]. This algorithm shares some similarities with that proposed in [4] for LDPC codes. In both cases, the erased symbols are solved by means of a structured GE, exploiting the sparse nature of the equations to reduce the size of the matrix on which brute-force GE is performed. The targets of the structured GE are $\mathbf{H}_{\bar{K}}$ for LDPC codes and $\mathbf{A}$ for Raptor codes. Consider now an $(n, k)$ LDPC code and its fixed-rate Raptor counterpart. Suppose also an erasure pattern (introduced by the communication channel) leading to a small overhead $\delta$, i.e., that the amount of correctly received symbols is $k + \delta$. On the LDPC code side, the structured GE will be performed on $\mathbf{H}_{\bar{K}}$, with size $(n - k) \times (n - k - \delta)$. For the Raptor code, the structured GE will work on $\mathbf{A}$, with size $(k + \delta + s + h) \times (k + s + h)$. Hence, while for the LDPC code the complexity of the ML decoder is driven by $(n - k)$ (i.e., the amount of redundancy, thus by the code rate $R$), for the Raptor code the complexity depends just on $k$ (i.e., it is code-rate independent). The result is that for high rates ($R > 1/2$) LDPC codes have an inherent advantage in complexity. On the other hand, for lower rates Raptor codes shall be preferable.

IV. NUMERICAL RESULTS

In this section, some numerical results will be provided for LDPC and fixed-rate Raptor codes under ML decoding over the BEC.
The performance is provided in terms of codeword error rate (CER) vs. the channel erasure probability $\epsilon$. The section is organized as follows. First, some performance bounds for an $(n, k)$ linear block code over the BEC are reviewed. Then the performance of some moderate-block-length LDPC codes is provided. The comparison with fixed-rate Raptor codes is presented in a dedicated subsection. Finally, some results for a protograph-based ARA code are given.

A. Bounds on the code performance

A lower bound for the CER on the BEC is given by the well-known Singleton bound, which is matched just by an $(n, k)$ ideal maximum distance separable (MDS) code:

$$P_e \geq \sum_{i=n-k+1}^{n} \binom{n}{i} \epsilon^{i} (1 - \epsilon)^{n-i}. \tag{10}$$

There exist only a few binary codes achieving (10) with equality. An upper bound on the CER for the random code ensembles was introduced by Berlekamp [19]. The bound can be expressed as

$$\bar{P}_e \leq \sum_{i=0}^{n-k} \binom{n}{i} \epsilon^{i} (1 - \epsilon)^{n-i}\, 2^{-(n-k-i)} + \sum_{i=n-k+1}^{n} \binom{n}{i} \epsilon^{i} (1 - \epsilon)^{n-i}, \tag{11}$$

where $\bar{P}_e$ represents the average error probability for the $(n, k)$ random codes ensemble. Even if (11) constitutes an upper bound on the error probability, for sufficiently large block lengths such a bound can be considered as a good benchmark for the code performance [20].

Fig. 6. Overview of the encoding and decoding process for the systematic Raptor code specified in [6].

B. Moderate block-size LDPC codes

The performance of some moderate-length LDPC codes is provided in Figures 7, 8 and 9. In Figure 7, the CER for a $(2048, 1024)$ GeIRA code from [3] is presented. The code is picked from an LDPC ensemble with $\epsilon_{IT} = 0.480$ and $\epsilon_{ML} = 0.496$.
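For reference, the bounds (10) and (11) are easy to evaluate numerically. The sketch below computes each binomial term in the log domain to avoid overflow for block lengths such as $n = 2048$; the function names and the choice of sample erasure probabilities are made for this example only.

```python
from math import exp, lgamma, log


def log_binom_term(n, i, eps):
    """log of C(n, i) * eps^i * (1 - eps)^(n - i), for 0 < eps < 1."""
    return (lgamma(n + 1) - lgamma(i + 1) - lgamma(n - i + 1)
            + i * log(eps) + (n - i) * log(1.0 - eps))


def singleton_bound(n, k, eps):
    """Right-hand side of Eq. (10): probability of more than n - k erasures."""
    return sum(exp(log_binom_term(n, i, eps)) for i in range(n - k + 1, n + 1))


def berlekamp_bound(n, k, eps):
    """Right-hand side of Eq. (11) for the (n, k) random code ensemble."""
    head = sum(exp(log_binom_term(n, i, eps) - (n - k - i) * log(2.0))
               for i in range(0, n - k + 1))
    return head + singleton_bound(n, k, eps)


for eps in (0.40, 0.45, 0.48):
    print(eps, singleton_bound(2048, 1024, eps), berlekamp_bound(2048, 1024, eps))
```

Since the second sum in (11) coincides with the right-hand side of (10), the Berlekamp bound is never below the Singleton bound, and the gap between the two is the first sum in (11).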
The code performance, under ML decoding, tightly approaches the Singleton bound and practically matches the Berlekamp bound. The iterative decoding curve, although not far from the state of the art for iteratively decoded codes, lies quite far from the bound. The sub-optimality of the IT curve is therefore not due to the code itself, but to the sub-optimality of the decoder. The result is confirmed for a family of rate-compatible GeIRA codes with code rates ranging from $1/2$ to $4/5$ and input block size $k = 502$ (Figure 8). The higher rates are obtained by puncturing the mother $R = 1/2$ code, which has been derived from the construction proposed in [3]. For the code rates under investigation, the performance is uniformly close to the corresponding Singleton bound, down to low codeword error rates (CER $\simeq 10^{-6}$).

In Figure 9, the codeword error rate for a $(1160, 1044)$, $R = 9/10$ code is shown. The code is a near-regular GeIRA code with almost constant column weight $w_c = 5$ and feedback polynomial given by $1 + D + D^{4} + D^{10} + D^{20}$. The ML threshold is $\epsilon_{ML} = 0.0994$, while $\epsilon_{IT} = 0.0699$. Also in this case, the error performance curve matches the Berlekamp bound down to low error rates. The minimum distance of this code (and its corresponding multiplicity) has been evaluated with the algorithm of [21]. An error floor estimation has been carried out by means of the truncated union bound on the codeword error probability, which is given by

$$P_e \simeq A_{\min}\, \epsilon^{d_{\min}}, \qquad (12)$$

where $A_{\min}$ represents the minimum distance multiplicity. Four codewords at $d_{\min} = 11$ have been found, leading to the error floor estimation provided in Figure 9. Even though such results represent only an estimate of the actual error floor, they are quite remarkable. The code performance would in fact deviate appreciably from the Singleton bound only at error rates below $10^{-14}$.

C. Comparisons with fixed-rate Raptor codes

A comparison with the fixed-rate Raptor codes specified in the MBMS standard is provided next. In Figure 10, the decoding failure probability (i.e., the CER) as a function of the overhead is depicted for the codes specified in [6] and for some GeIRA codes. The overhead $\delta$ is here defined as the number of codeword symbols that are correctly received in excess of $k$ (recall that $k$ represents the minimum number of correctly received bits allowing successful decoding with an ideal MDS code). The comparison is carried out for various block sizes. There is basically no difference in performance between the MBMS Raptor codes and properly designed LDPC codes under ML decoding. As already pointed out in [22], the decoding failure probability vs. overhead does not seem to depend on the input block size.

A comparison between a $(512, 256)$ fixed-rate Raptor code and a near-regular GeIRA code from [3] with constant column weight $w_c = 4$ is provided in Figure 11. In the waterfall region the two codes exhibit almost the same performance. A minimum distance estimation according to [21] was conducted on the two codes. For the $(512, 256)$ fixed-rate Raptor code, the minimum distance is given by $d_{\min} = 25$, with $A_{\min} = 2$. The lowest Hamming-weight codewords can be obtained by feeding the encoder with the $k$-bit input sequences $u^{(1)}$, $u^{(2)}$, where the non-null bits are $u^{(1)}_j$ for $j \in \{13, 21, 32, 39, 63, 90, 91, 95, 98, 102, 115, 118, 133, 142, 181, 230, 243, 247\}$ and $u^{(2)}_j$ for $j \in \{6, 13, 18, 75, 88, 101, 123, 131, 140, 143, 176, 220, 231, 243\}$, with $u^{(1)}_0$ and $u^{(2)}_0$ being the first bits of $u^{(1)}$ and $u^{(2)}$, respectively.
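The truncated union bound (12) is simple enough to evaluate directly. The sketch below, with hypothetical function names, computes the error floor estimate for the $(A_{\min}, d_{\min})$ pairs reported above for the $(1160, 1044)$ GeIRA code and the $(512, 256)$ Raptor code, and inverts (12) to find the erasure probability at which the estimate reaches a given CER. It only reproduces the estimate itself; locating where a simulated curve departs from the Berlekamp bound would additionally require evaluating (11).

```python
def error_floor(a_min, d_min, eps):
    """Truncated union bound (12): P_e ~ A_min * eps**d_min."""
    return a_min * eps ** d_min


def eps_at_floor(a_min, d_min, target_cer):
    """Erasure probability at which the estimate (12) equals target_cer."""
    return (target_cer / a_min) ** (1.0 / d_min)


# (A_min, d_min) pairs as reported in the text; the dictionary keys are just labels.
CODES = {
    "GeIRA (1160,1044)": (4, 11),
    "Raptor (512,256)": (2, 25),
}

if __name__ == "__main__":
    for name, (a_min, d_min) in CODES.items():
        for eps in (0.05, 0.10, 0.20):
            print(f"{name}  eps={eps:.2f}  floor~{error_floor(a_min, d_min, eps):.3e}")
```

Because the estimate decays as $\epsilon^{d_{\min}}$, a larger minimum distance lowers the floor far more than a smaller multiplicity does.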
For the GeIRA code, the estimated minimum distance is $d_{\min} = 40$, with multiplicity $A_{\min} = 2$. In both cases, the estimated minimum distance is quite large, and would allow very low error floors to be achieved. For the Raptor code, the error floor estimation predicts a deviation from the Berlekamp bound at CER $\simeq 10^{-11}$, while for the GeIRA code the error floor would appear at CER $\simeq 10^{-20}$. The latter result is quite astonishing, and would suggest the use of the near-regular GeIRA construction for applications³ requiring very low error floors.

A final remark concerns the minimum distance evaluation for fixed-rate Raptor codes. The minimum distance evaluation has been applied to fixed-rate MBMS Raptor codes with various block lengths. For a $(128, 64)$ Raptor code, the lowest-weight codeword found with the algorithm of [21] has weight 14 ($A_{\min} = 2$). In the case of a $(2048, 1024)$ Raptor code, $d_{\min} = 26$ ($A_{\min} = 2$). Recalling the result for the $(512, 256)$ Raptor code ($d_{\min} = 25$), it appears from this preliminary analysis that for fixed-rate Raptor codes the minimum distance might scale sub-linearly with the block length.

³ Almost all current wireless systems adopting erasure correcting codes have requirements which are usually much above the error floor of the Raptor code.

D. ML decoding of a (1024, 512) ARA code

In this subsection we provide some numerical results dealing with ML decoding of a $(1024, 512)$ ARA code. The ARA protograph ensemble is defined by the base matrix [23]

$$B = \begin{pmatrix} 2 & 1 & 1 & 1 & 0 \\ 1 & 2 & 1 & 1 & 0 \\ 2 & 0 & 0 & 0 & 1 \end{pmatrix}$$

where the first column corresponds to punctured variable nodes. Its iterative decoding threshold is $\epsilon_{IT} = 0.477$. The upper bound on the ML threshold is $\epsilon_{ML} \leq 0.496$ (see Figure 3). The code performance is shown in Figure 12, for both iterative and ML decoding.
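As a consistency check on the base matrix, the sketch below computes the protograph design rate and expands the protograph into a larger parity-check matrix. Several points are assumptions rather than statements from the paper: the matrix is read as 3 rows by 5 columns, the design rate formula presumes a full-rank parity-check matrix, the lifting factor 256 is inferred from $n = 1024$ with four transmitted columns, and the random circulant lifting is a generic copy-and-permute illustration, not the construction actually used by the authors. All function names are hypothetical.

```python
import random
from fractions import Fraction

# Base matrix as read from the text (3 check nodes, 5 variable nodes).
BASE = [
    [2, 1, 1, 1, 0],
    [1, 2, 1, 1, 0],
    [2, 0, 0, 0, 1],
]
PUNCTURED = {0}  # first column: punctured variable nodes


def design_rate(base, punctured):
    """Design rate (n_v - n_c) / (n_v - n_punctured), assuming full-rank H."""
    n_checks, n_vars = len(base), len(base[0])
    return Fraction(n_vars - n_checks, n_vars - len(punctured))


def lift(base, q, seed=0):
    """Copy-and-permute expansion with random circulant shifts (illustrative).

    Returns H as a list of sets: rows[r] holds the column indices of the ones
    in row r. An entry m > 1 in the base matrix is expanded into m circulants
    with distinct shifts, so no two parallel edges collapse onto each other.
    """
    rng = random.Random(seed)
    rows = [set() for _ in range(len(base) * q)]
    for i, base_row in enumerate(base):
        for j, mult in enumerate(base_row):
            for shift in rng.sample(range(q), mult):
                for t in range(q):
                    rows[i * q + t].add(j * q + (t + shift) % q)
    return rows


if __name__ == "__main__":
    q = 256  # assumed lifting factor: 4 transmitted columns * 256 = 1024
    h_rows = lift(BASE, q)
    n_tx = (len(BASE[0]) - len(PUNCTURED)) * q
    print("design rate:", design_rate(BASE, PUNCTURED))
    print("transmitted bits:", n_tx, "check equations:", len(h_rows))
```

Under these assumptions the design rate is $1/2$ and the transmitted block length is 1024, which agrees with the $(1024, 512)$ parameters quoted for the code.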
The gain obtained by the ML decoder in the waterfall region (the error rate performance is actually quite close to the Singleton bound) indicates that the bound on the ML threshold is quite tight. Both the iterative and the ML curves present an evident error floor at low error rates, due to the presence in the codeword set of 16 codewords with Hamming weight 10.

V. CONCLUDING REMARKS

In this paper we provided some insights on the code design for ML-decoded LDPC codes on the erasure channel, together with an overview of efficient ML decoding algorithms. The complexity on the decoder side can be kept low with a proper code design. Such an approach allows codes to be designed with great flexibility in terms of block lengths and code rates. A comparison with ML-decoded fixed-rate Raptor codes (derived from the MBMS specification) has been carried out as well. The results show that LDPC codes under ML decoding can tightly approach the bounds down to very low error rates, even for short block sizes, like their Raptor counterparts. In some cases, the estimated error floor for the LDPC code is much lower than the estimated error floor of the corresponding fixed-rate Raptor code. Since for fixed-rate Raptor codes the error floors are usually very low, the results achieved with the proposed LDPC codes are astonishing. ML-decoded LDPC codes therefore represent a practical tool to approach the performance of ideal MDS codes in many wireless communication contexts, down to very low error rates, and with limited decoding complexity.

VI. ACKNOWLEDGMENTS

This research was supported, in part, by the University of Bologna Grant Internazionalizzazione, and by the EC-IST SatNEx-II project (IST-27393).

REFERENCES

[1] R. G. Gallager, Low-Density Parity-Check Codes. Cambridge, MA: M.I.T. Press, 1963.
[2] H. D. Pfister, I. Sason, and R. Urbanke, "Capacity-achieving ensembles for the binary erasure channel with bounded complexity," IEEE Trans. Inform. Theory, vol. 51, no. 7, pp. 2352–2379, July 2005.
[3] E. Paolini, G. Liva, B. Matuz, and M. Chiani, "Generalized IRA erasure correcting codes for hybrid iterative/maximum likelihood decoding," IEEE Commun. Lett., 2008, accepted for publication.
[4] D. Burshtein and G. Miller, "An efficient maximum likelihood decoding of LDPC codes over the binary erasure channel," IEEE Trans. Inform. Theory, vol. 50, no. 11, Nov. 2004.
[5] "Framing structure, channel coding and modulation for Satellite Services to Handheld devices (SH) below 3 GHz," Digital Video Broadcasting (DVB), Blue Book, 2007.
[6] 3GPP TS 26.346 V7.4.0, "Technical specification group services and system aspects; multimedia broadcast/multicast service; protocols and codecs," June 2007.
[7] A. M. Odlyzko, "Discrete logarithms in finite fields and their cryptographic significance," in Theory and Application of Cryptographic Techniques, 1984, pp. 224–314.
[8] T. Richardson and R. Urbanke, "Efficient encoding of low-density parity-check codes," IEEE Trans. Inform. Theory, vol. 47, pp. 638–656, Feb. 2001.
[9] C. Measson, A. Montanari, T. Richardson, and R. Urbanke, "Life above threshold: From list decoding to area theorem and MSE," in Proc. 2004 IEEE Information Theory Workshop, San Antonio, USA, October 2004.
[10] A. Ashikhmin, G. Kramer, and S. ten Brink, "Extrinsic information transfer functions: Model and erasure channel properties," IEEE Trans. Inform. Theory, vol. 50, no. 11, pp. 2657–2673, Nov. 2004.
[11] J. Thorpe, "Low-density parity-check (LDPC) codes constructed from protographs," JPL IPN, Tech. Rep. 42-154, Aug. 2003.
[12] G. Liva and M. Chiani, "Protograph LDPC codes design based on EXIT analysis," in Proc. IEEE Global Communications Conference (GLOBECOM), Washington, D.C., USA, Nov. 2007.
[13] A. Abbasfar, K. Yao, and D. Divsalar, "Accumulate repeat accumulate codes," in Proc. IEEE Globecom, Dallas, Texas, Nov. 2004.
[14] G. Liva, E. Paolini, and M. Chiani, "Simple reconfigurable low-density parity-check codes," IEEE Commun. Lett., vol. 9, no. 3, pp. 258–260, Mar. 2005.
[15] M. Shokrollahi, "Raptor codes," IEEE Trans. Inform. Theory, vol. 52, no. 6, pp. 2551–2567, June 2006.
[16] J. Byers, M. Luby, and M. Mitzenmacher, "A digital fountain approach to reliable distribution of bulk data," IEEE J. Select. Areas Commun., vol. 20, no. 8, pp. 1528–1540, Oct. 2002.
[17] M. Luby, "LT codes," in Proc. of the 43rd Annual IEEE Symposium on Foundations of Computer Science, Vancouver, Canada, Nov. 2002, pp. 271–282.
[18] M. Luby, M. Watson, T. Gasiba, T. Stockhammer, and W. Xu, "Raptor codes for reliable download delivery in wireless broadcast systems," in Proc. of 2006 IEEE Consumer Communications and Networking Conf., vol. 1, Jan. 2006, pp. 192–197.
[19] E. Berlekamp, "The technology of error-correcting codes," Proc. IEEE, vol. 68, pp. 564–593, 1980.
[20] S. MacMullan and O. M. Collins, "A comparison of known codes, random codes, and the best codes," IEEE Trans. Inform. Theory, vol. 44, Nov. 1998.
[21] X.-Y. Hu, M. P. C. Fossorier, and E. Eleftheriou, "On the computation of the minimum distance of low-density parity-check codes," in Proc. ICC'04, June 2004, pp. 767–771.
[22] M. Luby, T. Gasiba, T. Stockhammer, and M. Watson, "Reliable multimedia download delivery in cellular broadcast networks," IEEE Transactions on Broadcasting, vol. 53, pp. 235–246, Mar. 2007.
[23] G. Liva, S. Song, L. Lan, Y. Zhang, W. Ryan, and S. Lin, "Design of LDPC codes: A survey and new results," J. Comm. Software and Systems, Sept. 2006.
[Figure 7: CER vs. $\epsilon$; curves: Singleton bound, GeIRA ML, GeIRA IT] Fig. 7. Codeword error rate for a (2048,1024) GeIRA code. The solid line represents the Singleton bound on the CER, while the dotted line represents the Berlekamp random coding bound.

[Figure 8: CER vs. $\epsilon$; curves: GeIRA R ≈ 1/2, R ≈ 2/3, R ≈ 4/5] Fig. 8. Codeword error rates for a family of GeIRA codes with input block size k = 502 and code rates spanning from 1/2 to 4/5. The solid lines represent the respective Singleton bounds on the CER, while dotted lines represent the respective Berlekamp random coding bounds.

[Figure 9: CER vs. $\epsilon$; curves: Berlekamp bound, Singleton bound, GeIRA ML ($1 + D + D^{4} + D^{10} + D^{20}$, $w_c = 5$), GeIRA error floor prediction] Fig. 9. Codeword error rate for a (1160,1044) GeIRA code. The performance is compared to the Berlekamp bound and to the Singleton bound.

[Figure 10: CER vs. overhead $\delta$; curves: MBMS Raptor k = 2048, MBMS Raptor k = 256, GeIRA k = 1044, GeIRA k = 247] Fig. 10. Codeword error rate vs. overhead $\delta$ for the MBMS Raptor code [22] and for some GeIRA codes, for various input block sizes.

[Figure 11: CER vs. $\epsilon$; curves: Singleton bound, regular GeIRA, MBMS Raptor, and the two error floor predictions] Fig. 11. Codeword error rates and error floor predictions for (512,256) Raptor and GeIRA codes.

[Figure 12: CER vs. $\epsilon$; curves: Singleton bound, AR3A IT, AR3A ML, error floor prediction] Fig. 12. Codeword error rates for a (1024,512) accumulate-repeat-accumulate code under iterative and maximum-likelihood decoding.
