Testing Consistency of Two Histograms

Several approaches to testing the hypothesis that two histograms are drawn from the same distribution are investigated. We note that single-sample continuous distribution tests may be adapted to this two-sample grouped data situation. The difficulty …

Authors: Frank C. Porter

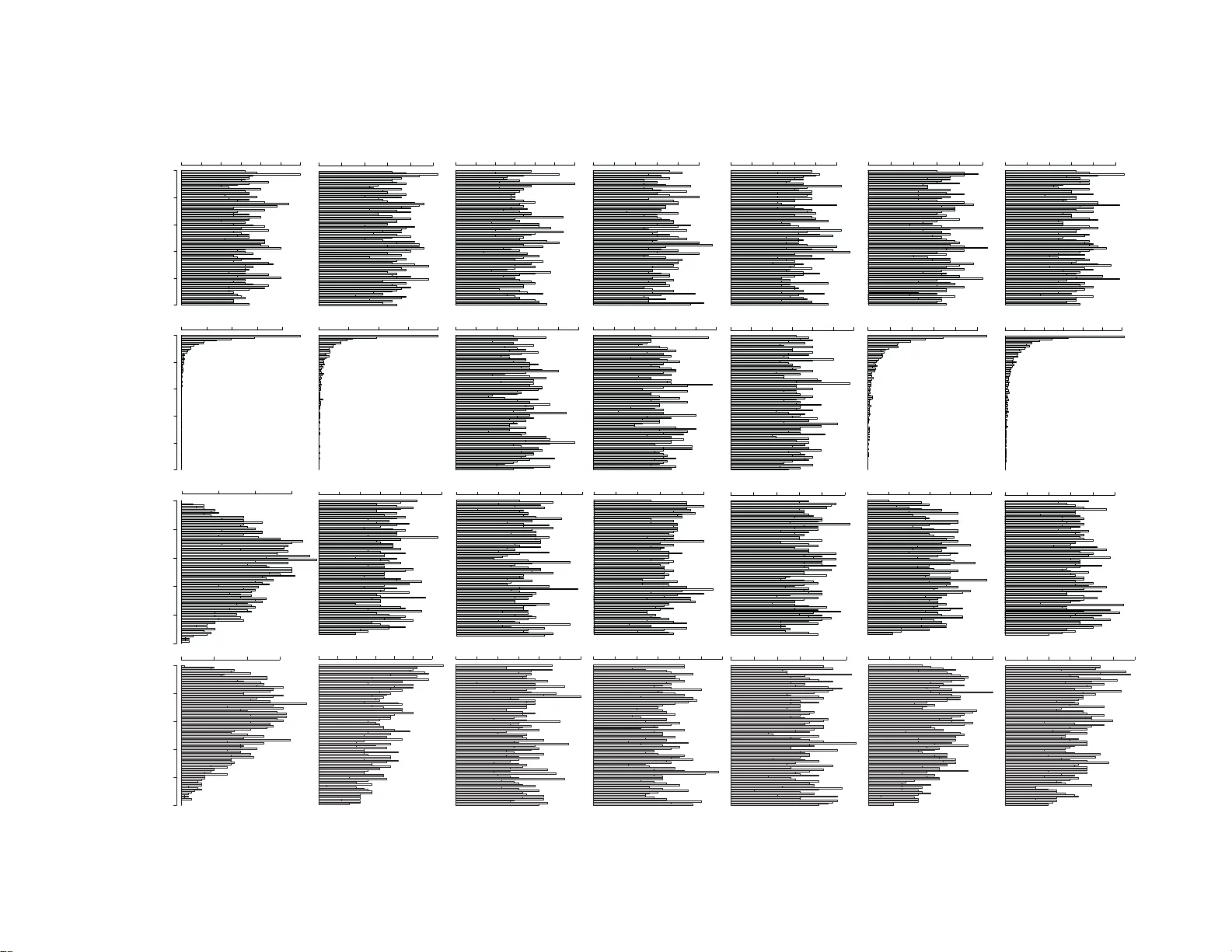

T esting Consis tency of Tw o Histograms * F rank P orter California Institute of T ec hnology Lauritsen Lab or a tory for High Energy Ph ysics P asadena, California 91125 Marc h 7, 20 08 Abstract Sev eral approac hes to testing the h yp othesis that tw o histograms are dra wn from the same distribution are in v estigated. W e note that single-sample contin uous distribution tests ma y b e adapted to this tw o-sample group ed data situation. The difficult y of not ha ving a fully-sp ecified n ull h yp othesis is an imp ortant consid eration in the general case, and car e is required in estimating probabilities with “toy” Mon te Carlo sim ulations. The p erformance of sev eral common tests is compared; no single test p erforms b est in all situations. 1. In tro duction Sometimes w e ha v e t w o histograms a nd are faced with the question: “ Are they consisten t?” That is, are our t w o histograms consisten t with ha ving b een sampled from the s ame paren t distribution. F or example, w e migh t hav e a kinematic distri- bution in tw o similar c hannels that w e think should be consisten t, and wish to tes t this hypothesis. Each histogram represen ts a sampling from a multiv ariate P oisson distribution. The question is whether the means are bin-b y-bin equal b etw e en the t w o distributions. Or, if w e are only interes ted in “ shap e”, ar e the means related b y the same scale factor for all bins? W e in ve stigate this question in the con text of frequency statistics. F or example, consider Fig. 1. Are the tw o histograms consisten t or can we conclude that they are dr awn from differen t distributions? * This work supp orted in part by the U.S. Departmen t of Energy under g r an t D E-F G02 - 92-ER4070 1 . 1 0 10 20 30 40 0 5 10 15 20 25 30 bin index u or v 0 10 20 30 40 0 5 10 15 20 25 30 bin index u or v Fig. 1. Tw o histograms (blue squares and red circles) to b e compared for consistency . There are at least tw o v arian ts of in terest to t his question: 1. W e wish to test the hypothesis: H 0 : The means of the t wo histograms are bin-by-bin equal, against H 1 : The means of the t wo histograms are not bin-by -bin equal. 2. W e wish to test the hypothesis: H ′ 0 : The densities of t he t w o histogra ms are bin-b y-bin equal, a g ainst H ′ 1 : The densities of t he t w o histogra ms are not bin- b y-bin equal. In the second case, the relative normalization of the tw o histograms is not an issue: w e o nly compare the shap es. It ma y b e not ed that there a re a large v ariet y o f tests that attempt to answ er the question of whethe r a give n dataset is consisten t with having b een drawn from some sp ecified con tin uous distribution. These tests ma y t ypically b e adapted to address the question of whether t w o datasets hav e b een draw n from the same con tinuous distribution, often referred to as “tw o-sample” tests. These tests ma y furt her b e adapted to the pres ent problem, that of determining whethe r t w o histograms hav e 2 the same shap e. This situation is also referred to as comparing whether t w o (or more) rows of a “ t a ble” are consisten t. The datasets of this form are a lso referred to as “group ed data”. Although w e k eep the discussion fo cussed on the comparison of tw o histograms, it is worth remarking that many o f the observ ations apply also to other situations, suc h as the comparison of a histog ram with a mo del prediction. 2. Notation W e assume that w e ha ve formed our t w o histogr a ms with the same n um b er of bins, k , with iden tical bin b o undar ies. The bin conte nts of t he “first” histogram are giv en by realization u of random v ariable U , and of the second by realization v of random v ariable V . Thus , the sampling distributions are: P ( U = u ) = k Y i =1 µ u i i u i ! e − µ i , P ( V = v ) = k Y i =1 ν v i i v i ! e − ν i , (1) where the vec tors µ a nd ν a r e the mean bin con tents of the resp ectiv e histogra ms. W e define: N u ≡ k X i =1 U i , total con tents of first histogram, N v ≡ k X i =1 V i , total con tents of second histogram, µ T ≡ h N u i = k X i =1 µ i , ν T ≡ h N v i = k X i =1 ν i , t i ≡ u i + v i , i = 1 , . . . , k. W e are in terested in the p ow er of a test, at any giv en confidence lev el. The p o w er is the proba bilit y that the n ull h yp othesis is rejected when it is false. Of course, the 3 p o we r depends on the true sampling distribution. In other w ords, the p ow er is one min us the probabilit y of a Type I I error. The confidence lev el is the probability that the n ull h yp othesis is accepted, if the n ull h yp othesis is correct. Th us, the confidence lev el is o ne min us the probability of a T yp e I error. In ph ysics, we usually don’t sp ecify the confidence leve l of a t est in a dv ance, a t least not formally . Instead, w e quote the P -v alue f o r our result. This is the probabilit y , under the n ull h yp othesis, of obta ining a result as “ba d” or w orse than our observ ed v alue. This w ould b e the probabilit y of a T yp e I error if our observ ation w ere used to define the critical region of the test. Note that we are dealing with discrete distributions here, and exact statemen ts of frequency are problematic, tho ugh not imp ossible. Instead of attempting to construct exact statemen ts, our treatmen t of the discrete ness will b e suc h as to err on the “conserv at ive” side. By “conserv at ive”, w e mean that w e will tend to accept the n ull h yp othesis with greater than the stated probabitlity . It is imp ortant to understand that this is not alwa ys the “conserv ativ e” direction, f or example it could mislead us in to a ccepting a mo del when it should b e rejected. W e will drop the distinction betw een the random v ar iable (upp er case sym b ols U and V ) and a realization (low e r case u and v ) in the following, but will p oin t out where this infor malit y may yield confusion. The computations in this note are carr ied out in the framew ork of the R statistics pac k age [1]. 2.1 Large Statistics Case If a ll of the bin con tents of b oth histograms a re larg e, then w e ma y use the appro ximation that the bin conte nts are normally distributed. Under H 0 , h u i i = h v i i ≡ µ i , i = 1 , . . . , k . More prop erly , it is h U i i = µ i , etc., but w e are p ermitting u i to stand for the random v ariable as w ell as its realization, as noted ab ov e. Let the difference in the con tents 4 of bin i b et w een the t w o histograms b e: ∆ i ≡ u i − v i , and let the standard deviation for ∆ i b e denoted σ i . Then t he sampling distribution of the difference b et w een the t w o histograms is: P (∆) = 1 (2 π ) k/ 2 k Y i =1 1 σ i ! exp − 1 2 k X i =1 ∆ 2 i σ 2 i ! . This suggests t he test statistic: T = k X i =1 ∆ 2 i σ 2 i . If t he σ i w ere kno wn, this w ould simply b e distributed according to the c hi-square distribution with k degrees of freedom. The maxim um-lik eliho o d estimator for the mean of a Poiss on is just the sampled num ber. The mean of the P oisson is also its v ariance, and w e will use the sampled n um b er also as the estimate of the v ariance in the normal appro ximation. W e suggest t he followin g a lg orithm for t his test: 1. F or σ 2 i form the estimate ˆ σ 2 i = ( u i + v i ) . 2. Statistic T is th us ev aluated according to: T = k X i =1 ( u i − v i ) 2 u i + v i . If u i = v i = 0 f or bin i , the con tribution to the sum from that bin is zero. 3. Estimate the P -v alue according to a chi-square with k degrees of freedom. Note that this is not an exact result. If it is desired to only compare shap es, then the suggested algorithm is to scale b oth histogram bin conte nts: 5 1. Let N = 0 . 5 ( N u + N v ) . Scale u and v acc ording to: u i → u ′ i = u i ( N/ N u ) v i → v ′ i = v i ( N/ N v ) . 2. Estimate σ 2 i with: ˆ σ 2 i = N N u 2 u i + N N v 2 v i . 3. Statistic T is th us ev aluated according to: T = k X i =1 u i N u − v i N v 2 u i N 2 u + v i N 2 v . 3. Estimate the P -v alue according to a chi-sq uare with k − 1 degrees of freedom. Note that this is not an exact result. Due to the presence of bins with small bin counts, we migh t not expect this metho d to b e esp ecially go o d for the data in Fig. 1, but w e can try it an yw ay . T able I gives the results of applying this test, b oth including the nor malizat io n and only comparing shap es. T able I. Results of tests for consistency of the tw o datasets in Fig. 1. The t ests b elo w the χ 2 lines are described in Section 3. T yp e o f test T NDOF P ( χ 2 > T ) P -v alue χ 2 Absolute comparison 29.8 40 0.88 0.86 χ 2 Shap e comparison 24.9 39 0.96 0.95 Lik eliho o d Ratio Shap e comparison 25.3 39 0.96 0.96 Kolmogorov-Smirno v Shap e comparison 0.043 39 NA 0.61 Bhattac haryy a Shap e comparison 0.986 39 NA 0.97 Cram ´ er-V on-Mises Shap e comparison 0.132 39 NA 0.45 Anderson-Darling Shap e comparison 0.849 39 NA 0.45 Lik eliho o d v alue shap e comparison 79 39 NA 0.91 6 In the column lab eled “ P -v alue” a n attempt is made to compute (b y sim ulation) a more reliable estimate of the probabilit y , under the n ull h ypothesis, that a v alue for T will b e as la r ge as tha t observ ed. This ma y b e compared with the P ( χ 2 > T ) column, whic h is the pro ba bilit y assuming T follows a χ 2 distribution with NDOF degress of freedom. Note that the absolute comparison yields sligh tly p o o rer agreemen t b et w een the histograms tha n the shap e comparison. The total n um b er of coun ts in one dataset is 492; in the other it is 424. T reating these as samplings from a nor mal distribution with v ariances 492 a nd 424, w e find a difference of 2 .2 standard deviations or a tw o- tailed P -v alue of 0 . 025 . This lo w probability is diluted by the bin-b y-bin test. Using a bin-b y-bin test to chec k whether the tota ls a re consisten t is not a p o w erful approac h. In fact, the tw o histograms w ere generated with a 10% difference in exp ected coun ts. The ev aluation by sim ulation of the probability under the n ull h yp othesis is in fact problematic, since the n ull hypothesis actually isn’t completely sp ecified. The problem is the dep endence of P oisson probabilities on the absolute n um b ers of coun ts. Probabilities for differences in Poiss on counts are not inv ariant under the total n um b er of coun ts. Unfortunately , w e don’t know the true mean n umbers o f coun ts in eac h bin. Thus , we m ust estimate these means. The pro cedure adopted here is to use the maximum lik eliho o d estimators (see b elow) f or the mean num bers, in the n ull hypothesis. W e’ll ha v e further discussion o f this pro cedure b elo w – it do es not alw ay s yield v alid results. In our example, the pro babilities estimated according to our simulation and the probabilities according to a χ 2 distribution are close to eac h other. This suggests the p ossibilit y o f using the χ 2 probabilities – if we can do this, the problem that we ha v en’t completely sp ecified the n ull hypothesis is av oided. W e o ffer the following conjecture: Conjecture: Let T b e the test statistic describ ed a b o v e, for either the absolute or the shape comparison, as desired. Let T c b e a p ossible v alue of T (p erhaps the critical v alue to b e used in a h yp othesis test). Then, for large v alues of T c : P ( T < T c ) ≥ P T < T c | χ 2 ( T , ndof) , where P T < T c | χ 2 ( T , ndof) is the probabilit y that T < T c according to a χ 2 dis- 7 tribution with ndof degrees of freedom (either k or k − 1, according to which test is b eing p erfo rmed). W e’ll only suggest an approa c h to a pro of, whic h could presumably also b e used to dev elop a for mal condition for T c to b e “la rge”. The conjecture also app ears to b e true anecdotally , and for in teresting v alues of T c , noting that it is la rge v alues of T c that w e care most a b out for an in teresting h yp othesis test. W e provide some intuition for the conjecture b y considering the case of one bin. F or sim plicity w e’ll also supp o se t hat ν = µ and that µ is small ( ≪ 1, sa y). Since w e a re in terested in large v alues of the statistic, w e are in terested in the situation where one of u, v is lar g e, and the other small (since µ is small). Supp ose it is u that is large. Then T = ( u − v ) 2 u + v ≈ u. F or giv en v (0, sa y), the probabilit y o f T is thus P ( T ) ≈ µ T T ! e − µ . This may b e compared with the chi-sq uare probabilit y distribution for one degree o f freedom: P ( T = χ 2 ) = 1 √ 2 π e − T / 2 √ T . The ratio is, dropping t he constan ts: P ( T ) P ( T = χ 2 ) ∝ µ T e T / 2 √ T T ! = exp T 1 2 + ln µ √ T Γ( T ) , whic h a pproac hes zero for large T , for any g iv en µ . W e conclude that t he conjecture is v alid in t he case of o ne bin, and strongly suspect that the argumen t generalizes to m ultiple bins. According to the conjecture, if w e use the probabilities from a χ 2 distribution in our test, the error that w e make is in the “conserv ativ e” direction (as long as T c is larg e). That is, we’ll reject the n ull h yp othesis less of t en than w e would with the correct probabilit y . It should b e emphasized that this conjecture is indep enden t of the statistics of the sample, bins with zero coun ts are fine. In the limit of la rge statistics, the inequality approaches equalit y . 8 Lest w e conclude that it is acce ptable to just use this great simplification in all situations, w e hasten to p oin t out that it isn’t as nice as it sounds. The problem is that, in low statistics situations, the p o w er of the test according to this appro a c h can b e dismal. That is, we might not reject the null hypothesis in situations where it is o bviously implausible. W e may illustrate t hese considerations with some simple examples, see Fig. 2. The plot for high statistics on t he left sho ws excelle nt agreemen t betw een the actual distribution and the χ 2 distribution. The lo w er statistics plots in the middle and righ t, for tw o differen t models, sho w that the chi-square approximation is ve ry con- serv ative in general. Th us, using the c hi-square probabilit y la c ks p ow er in this case, and is not a recommended a ppro ximation. Fig. 2. Comparison of the a ctual (cumulativ e) probabilit y distribution for T with the c hi-square distribution. The solid blue curve s sho w the actual distributions, and the dashed red curv es the chi-square distributions. All plots are for 100 bin histograms. (a) Eac h bin has mean 100. (b) Each bin has mean 1. (c) Bin j has mean 30 /j . 3. G e neral Case If the bin con tents are not necessarily la r g e, then the normal appro ximation may not b e go o d enough. There are v arious approac hes w e could take in this case. W e’ll discuss and compare sev eral p ossibilities. 9 3.1 Com bining Bins A simple approa c h is to com bine bins un til the nor ma l approximation is go o d enough. In man y cases this do esn’t lose to o m uc h statistical p ow er. It may be necessary to che c k with sim ulations that probability statemen ts are v alid. Figure 3 sho ws the results of this appro ac h on t he data in Figure 1, as a function of the minim um num ber of ev en ts p er bin. The comparison b eing made is f or the shap es. The algorithm is to com bine corresp onding bins in b oth histograms un til b oth hav e at least “ minBin ” coun ts in each bin. Fig. 3 . Left: The blue dots sho w the v alue of the test statistic T , and the red pluses sho ws the num ber of histogram bins for the data in Fig. 1, as a function of the minim um num ber of coun ts per his tog r am bin. Right: The P - v alue for consistency of the tw o da t a sets in F ig. 1. The red pluses sho w the pro ba bilit y for a ch i-square distribution, and the blue circles sho w the probability for the actual distribution, with an estimated nu ll h yp othesis. 3.2 T esting for Equal Normalization An alternative is to w ork with the P oisson distributions. Let us separate the problem of the shap e from the problem of the o v erall norma lizat io n. In the case o f testing equality of o v erall normization, there is a w ell-motiv ated c hoice for the test statistic, ev en for low statistics. 10 T o test the normalization, w e simply compare totals ov er all bins b et w een the t w o histograms. Our distribution is P ( N u , N v ) = µ N u T ν N v T N u ! N v ! e − ( µ T + ν T ) . The null hy p ot hesis is H 0 : µ T = ν T , to b e tested a g ainst a lt ernativ e H 1 : µ T 6 = ν T . W e are th us intere sted in the difference b et we en the t w o means; the sum is effectiv ely a n uisance parameter. That is, w e are inte rested in P ( N v | N u + N v = N ) = P ( N | N v ) P ( N v ) P ( N ) = µ N − N v T e − µ T ( N − N v )! ν N v T e − ν T N v ! ( µ T + ν T ) N e − ( µ T + ν T ) N ! = N N v ! ν T µ T + ν T N v µ T µ T + ν T N − N v . This proba bility now p ermits us to construct a uniformly most p ow erful test of our hypothesis (Ref. 2). Note that it is simply a bino mia l distribution, for giv en N . The uniformly most p o w erful prop ert y holds indep enden tly of N , although the probabilities cannot b e computed without N . The null h yp o thesis corresp o nds to µ T = ν T , that is: P ( N v | N u + N v = N ) = N N v ! 1 2 N . F or our example, with N = 916 and N v = 42 4, the P - v alue is 0 . 027, assuming a t w o-ta iled probability is desired. This may b e compared with our earlier estimate of 0 . 025 in the normal approximation. Note that for our binomial calculation we ha v e “conserv a tiv ely” included the endp oints (424 and 4 9 2). If w e try to mimic more closely the normal estimate by subtracting one-half the probability at the endp oin ts, w e obtain 0 . 025, essen tially the normal n um b er w e found earlier. The dbinom function R ef. 3 in the R pack age has b een used for this computation. 11 3.3 Shap e Comparison Statistics There are man y different p ossible statistics for comparing the shap es of the histograms. W e in v estigate sev eral c hoices. T able I summarizes the result of eac h of these t ests a pplied to the example in Fig. 1 . W e list the statistics here, and discuss p erformance in the following sections. 3.3.1 Chi-squ are test for shape Ev en though w e don’t exp ect it to follo w a χ 2 distribution, w e ma y ev aluate t he test statistic: χ 2 = k X i =1 u i N u − v i N v 2 u i N 2 u + v i N 2 v . If u i = v i = 0, the contribution to the sum from that bin is zero. W e ha v e a lr eady discusse d application of this statistic to t he example of F ig. 1. 3.3.2 Geometric test for shape Another test statistic w e could try ma y b e motiv ated from a geometric persp ec- tiv e. W e consider the bin con ten ts of a histogram to define a v ector in a k -dimensional space. If tw o such vectors are drawn from the s ame distribution (the n ull h ypothe- sis), then t hey will tend to p oin t in t he same direction (we are not in terested in the lengths of t he ve ctors here). Th us, if w e represe nt eac h histogram as a unit v ector with comp onen ts: { u 1 / N u , . . . , u k / N u } , and { v 1 / N v , . . . , v k / N v } , w e may form the test statistic: T BDM = r u N u · v N v = k X i =1 u i v i N u N v ! 1 / 2 . This is known a s the “Bhattac haryy a distance measure”. W e’ll refer to it as the “BDM” statistic for short. W e assume tha t neither histogram is empt y for this statistic. All v ectors lie in the p ositive direction in all co ordniates, so there is no issue with taking the square ro ot. 12 It may be noticed t ha t t his statistic is related to the χ 2 statistic – the u N u · v N v dot pro duct is close to the cross term in t he χ 2 expression. W e apply t his formalism to the example in Fig. 1. The resulting terms in the sum o v er bins are sho wn in Fig. 4. The sum ov er bins give s 0.986 (See T able I for a summary). According to our estimated distribution of this statistic under the null h yp othesis, this gives a P -v alue o f 0.97, similar to the χ 2 test result. 0 10 20 30 40 0.00 0.01 0.02 0.03 0.04 0.05 Bin index BDM per bin BDM statistic Frequency 0.95 0.96 0.97 0.98 0.99 0 5000 10000 15000 Fig. 4. Left: Bin-b y-bin contributions to the g eometric (“BDM”) test statistic fo r the example of Fig. 1. Righ t: Estimated distribution of the BD M statistic for the n ull hypothesis in t he example of Fig. 1. 3.3.3 Kolmogoro v-Smirno v test Another approach to a shap e test may b e based on the Kolmogorov-Smirno v (KS) idea. Recall that the idea o f the KS test is to estimate the maxim um differ- ence b et w een observ ed and predicted cum ulativ e distribution f unctions (CDFs) and compare with expectations. W e may adapt this idea to the presen t case. It should b e remark ed that if w e hav e the a ctual data points fro m whic h the histograms are deriv ed, then w e may use the K olmogorov -Smirnov (“KS”) pro cedure directly on those p oin ts. This would incorp orat e additional information and yield a p otentially more p ow erful test. Ho w ev er, if the bin widths are small compared with p ossible structure it may b e exp ected to not mak e muc h difference. 13 W e mo dify the KS statistic to apply to comparison of histograms as follo ws. W e assume that neither histogram is empty . F orm the “cum ulative distribution histograms” according to: u ci = i X j =1 u j / N u v ci = i X j =1 v j / N v . Then compute the test statistic: T KS = max i | u ci − v ci | . T est statistics ma y also b e formed for one-tail tests, but w e consider o nly the tw o-tail test here. W e apply this formalism t o the example in Fig. 1. The bin-by -bin distances are sho wn in Fig. 5. The maxim um ov er bins gives 0.0 43 (See T able I f or a summary). According to our estimated distribution of this statistic under the n ull h yp o thesis, this giv es a P -v alue of 0.61, somewh at smaller than for the χ 2 test resu lt, but still indicating consistency o f the t wo histograms. Note that the KS test tends to empha- size the region near the p eak of the distribution, that is the region where the largest fluctuations ar e exp ected in P oisson statistics. 0 10 20 30 −0.10 0.00 0.10 Bin index KS distance KS statistic Frequency 0.02 0.08 0.14 0 1000 2500 Fig. 5. Left: Bin-b y-bin distances for the Kolmogorov-Smirno v test statistic for the example of Fig. 1. Right: Estimated distribution of the Kolmogorov-Smirno v distance for the n ull h yp othesis in the example of F ig. 1. 14 3.3.4 Cram ´ er-v on-Mises t est Somewhat similar to the Ko lmogorov -Smirnov test is the Cram ´ er-v on-Mises (CVM) test. The idea in this test is t o add up the squared differences betw een the cum ulativ e distributions b eing compared. Again, this test is usually thought of as a test to compare an observ ed distribution with a presumed paren t contin uous probabilit y distribution. How ev er, the algo rithm can b e ada pted to t he t w o-sample comparison, a nd t o the case of comparing t w o histograms. The test statistic for comparing the tw o samples x 1 , x 2 , . . . , x N and y 1 , y 2 , . . . , y M is [4]: T = N M ( N + M ) 2 N X i =1 [ E x ( x i ) − E y ( x i )] 2 + M X j =1 [ E x ( y j ) − E y ( y j )] 2 , where E x is the empirical cumulativ e distribution for sampling x . That is, E x ( x ) = n/ N if n of the sampled x i are less t ha n or equal to x . W e adapt this fo r the presen t application of comparing histograms with bin con ten ts u 1 , u 2 , . . . , u k and v 1 , v 2 , . . . , v k with iden tical bin b oundaries: Let z be a p oin t in bin i , and define the empirical cum ulativ e distribution function for histogram u as: E u ( z ) = i X j =1 u i / N u . Then the test statistic is: T CVM = N u N v ( N u + N v ) 2 k X j =1 ( u j + v j ) [ E u ( z j ) − E v ( z j )] 2 . W e apply this formalism to the example in Fig. 1, finding T CVM = 0 . 132. The resulting estimated distribution under the n ull hy p o thesis is sho wn in Fig. 6. Ac- cording to our estimated distribution of this statistic under the null hypothesis, this giv es a P - v alue of 0.45 ( See T able I for a summary), somewhat smaller than the χ 2 test result. 15 CVM statistic Frequency 0.0 0.5 1.0 1.5 0 1000 2000 3000 4000 AD statistic Frequency 0 2 4 6 0 1000 2000 3000 4000 Fig. 6 . Estimated distributions of the test statistic for the null h yp othesis in the ex- ample of Fig. 1 Left: The Cram ´ er-von-Mis es statistic. Rig h t: The Anderson-Darling statistic. 3.3.5 Anderson-Darlin g test for shap e The Anderson-Darling test is anot her v ar ian t on the theme of non-parametric comparison of cum ulativ e distributions. It is similar to the Cram ´ er-v on-Mises statis- tic, but is designed to b e sensitiv e to the tails of the CDF. The original statistic w as, once again, designed to compare a dataset dra wn from a contin uous distribution, with CDF F 0 ( x ) under the n ull h yp othesis: A 2 m = m Z ∞ −∞ [ F m ( x ) − F 0 ( x )] 2 F 0 ( x ) [1 − F 0 ( x )] dF 0 ( x ) , where F m ( x ) is the empirical CDF of dataset x 1 , . . . x m . Sch olz and Stephens [5] pro vide a form of this statistic f o r a k -sample test on group ed data (e.g., as might b e used to compare k histograms). Based on the result expres sed in their Eq. 6 , the expression of interest to us for tw o histograms is: T AD = 1 N u + N v k max − 1 X j = k min t j Σ j ( N u + N v − Σ j ) [( N u + N v )Σ uj − N u Σ j ] 2 / N u + [( N u + N v )Σ v j − N v Σ j ] 2 / N v , 16 where k min is the first bin where either histogram has non-zero coun ts, k max is the n um b er of bins coun ting up the the last bin where either histogram has non- zero coun ts, and Σ uj ≡ j X i =1 u i , Σ v j ≡ j X i =1 v i , and Σ j ≡ j X i =1 t i = Σ uj + Σ v j . W e apply this formalism to the example in Fig. 1. The resulting estimated distribution under the null hy p ot hesis is s hown in Fig. 6. The sum o v er bins giv es 0.849 (See T able I for a summary). According to our estimated distribution of this statistic under the null h yp othesis, this giv es a P -v alue of 0.45, somewhat smaller than the χ 2 test result, but similar with the CVM result. 3.3.6 Lik eliho o d r at io t est for shape W e may base a test whether the histograms are sampled from the same shap e distribution on the same binomial idea a s we used for the no rmalization test. In this case, how ev er, there is a binomial asso ciated with eac h bin of the histogram. W e start with the n ull h yp othesis, that the t w o histograms a re sampled from the join t distribution: P ( u, v ) = k Y i =1 µ u i i u i ! e − µ i ν v i i v i ! e − ν i , where ν i = aµ i for i = 1 , 2 , . . . , k . That is, the “shap es” of the t w o histograms are the same, although the tot al con ten ts ma y differ. With t i = u i + v i , and fixing the t i at the obse rve d v alues, w e ha v e the multi- binomial form: P ( v | u + v = t ) = k Y i =1 t i v i ! ν i ν i + µ i v i µ i ν i + µ i t i − v i . The n ull h ypo t hesis is that ν i = aµ i for all v alues of i . W e w ould lik e to test this, but there are no w t w o complications: 17 1. The v alue of “ a ” is not sp ecified; 2. W e still hav e a m ultiv ariate distribution. F or a , w e will substitute an estimate from the data, namely the maxim um like- liho o d estimator: ˆ a = N v N u . Note that this estimate is a random v ariable; its use will reduce the effectiv e n um b er of degrees of f reedom b y one. W e prop ose to use a lik eliho o d ratio statistic to reduce the problem to a single v ariable. This will be the like liho o d under the n ull h yp othesis (with a give n b y its maxim um lik eliho o d estimator), divided b y the maxim um o f the like liho o d under the alternativ e h yp othesis. Thus, w e f o rm the ra tio: λ = max H 0 L ( a | v ; u + v = t ) max H 1 L ( { a i ≡ ν i /µ i }| v ; u + v = t ) = k Y i =1 ˆ a 1+ˆ a v i 1 1+ˆ a t i − v i ˆ a i 1+ˆ a i v i 1 1+ˆ a i t i − v i . The maxim um lik eliho o d estimator, under H 1 , for a i is just ˆ a i = v i /u i . Th us, we rewrite our test statistic according to: λ = k Y i =1 1 + v i /u i 1 + N v / N u t i N v N u u i v i v i . In practice, w e’ll w ork with − 2 ln λ = − 2 k X i =1 t i ln 1 + v i /u i 1 + N v / N u + v i ln N v N u u i v i . Before attempting to apply this, w e inv e stigate how to handle zero bin conten ts. It is p ossible that u i = v i = 0 for some bin. In this case, P ( v i | u i + v i = t i ) = 1, 18 under b oth H 0 and H 1 , and this bin con tributes zero to the sum. It is also p ossible that t i 6 = 0, but v i = 0 o r u i = 0. If v i = 0, t hen P (0 | t i ) = µ i ν i + µ i t i . Under H 0 , this is 1 1 + a t i , and under H 1 it is 1 1 + a i t i . The maxim um lik eliho o d estimator f o r a i is ˆ a i = 0. Thus , the lik eliho o d ratio for bin i is λ i = 1 1 + ˆ a t i , and this contributes to the sum an amount: − 2 ln λ i = − 2 t i ln N u N u + N v . If instead u i = 0, t hen P ( t i | t i ) = ν i ν i + µ i t i . and the contribution to the sum is − 2 ln λ i = − 2 t i ln N v N u + N v . W e apply t his formalism to the example in Fig. 1. The resulting terms in the sum ov er bins are shown in Fig. 7. The sum ov er bins giv es 25.3 (See T able I for a summary). This statistic should asymptotically b e distributed a ccording to a χ 2 distribution with the num ber of degrees of freedom equal to one less than the n um b er of bins, or N DOF = 39 in this case. If v a lid, this giv es a P - v alue of 0.96 in t his ex ample. This ma y b e compared with a probability of 0.9 6 according to the estimated actual distribution. In this example we obtain nearly t he same a nsw er as the naiv e application of the chi-sq uare calculation with no bins com bined. 19 0 10 20 30 0 1 2 3 4 Bin index −2ln(lambda) 0 10 20 30 0 1 2 3 4 Fig. 7. V alue of − 2 ln λ i or χ 2 i as a function of histog r a m bin in the comparison of the tw o distributions of Fig. 1. Blue circles are − 2 ln λ i ; red squares are χ 2 i . W e may see that this close agreemen t is a result of nearly bin-by-bin equality of the t w o statistics, see Fig. 7. T o in v estigate when this migh t hold more generally , we compare the v a lues of − 2 ln λ i and χ 2 i as a function of u i and v i , Fig. 8 . W e observ e that the t w o statistics agree when u i = v i with increasing difference aw a y from that p oin t. This observ ation is readily ve rified analytically . This agreemen t ho lds ev en for lo w statistics. Ho w ev er, we shouldn’t conclude that the chi-sq uare approx imation ma y b e used fo r lo w statistics – fluctuations aw a y from equal n umbers lead to quite differen t results when we get into the tails at low statistics. Our example do esn’t really sample t hese tails. The precise v alue of the probability should not b e take n to o seriously , exce pt to conclude that the t w o distributions a re consisten t according to these tests. F or example, when we combine bins to improv e exp ected χ 2 b eha vior, w e see fairly large fluctuations in the probability estimate just due to the re-binning (Fig. 3). 20 Fig. 8. V alue of − 2 ln λ i or χ 2 i as a function of u i and v i bin conten ts. This plot assumes N u = N v . The i subscript is dropp ed, with the understanding that this comparison is for a single bin. 3.3.7 Lik eliho o d v alue test for shap e An of ten-used but contro v ersial go o dness-of-fit statistic is the v alue of the lik e- liho o d at its maxim um v alue under the null h yp othesis. It can b e demonstrated that this statistic carries little or no infor mation in some situations. Ho w ev er, in the limit of lar g e statistics it is essen tially the chi-squ are statistic, so there are kno wn situations were it is a plausible statistic to use. W e thus lo ok at it here. Using the results in the previous section, the test statistic is: T = − ln L = − k X i =1 " ln t i v i ! + t i ln N u N u + N v + v i ln N v N u # . If either N u = 0 o r N v = 0, t hen T = 0. W e apply this formalism to the example in Fig. 1. The resulting estimated distribution under the null hy p ot hesis is s hown in Fig. 9. The sum o v er bins giv es 90 (See T able I fo r a summary). Acc ording to our estimated distribution of this statistic under the null hy p o thesis, this giv es a P - v alue o f 0.29, similar t o the χ 2 test result. The fa ct that it is similar may b e exp ected from the f act that our example is reasonably we ll-a ppro ximated b y the large statistics limit. 21 ln Likelihood Frequency 75 80 85 90 95 100 105 0 500 1000 1500 Fig. 9 . Estimated distribution of the ln L test statistic for the null h yp othesis in the example of Fig . 1. There are many ot her p o ssible tests that could b e considered, for example, sc hemes that “partitio n” the χ 2 to select sensitivit y t o differen t c haracteristics [6]. 3.4 Distributions Under the Null Hyp othesis F or the situatio n where the asymptotic distribution may not b e go o d enough, w e w ould like to kno w the probability distribution of our test statistic under the n ull h yp othesis. Ho we v er, we encoun ter a difficult y: our nu ll h yp othesis is not completely sp ecified! The problem is that the distribution dep ends on the v alues of ν i = aµ i . Our n ull h ypo thesis only sa ys ν i = aµ i , but says nothing ab out what µ i migh t b e. Note that it also do esn’t sp ecify a , but we hav e already discu ssed that complication, whic h app ears manageable (althoug h in extreme situations one migh t need to c hec k for dep endence on a ). W e turn once again to the data to make an estimate for µ i , to b e used in es ti- mating the distribution of our test statistics. The straig htforw ard approach is to use the maxim um lik eliho o d para meter estimators (under H 0 ): ˆ µ i = 1 1 + ˆ a ( u i + v i ) , ˆ ν i = ˆ a 1 + ˆ a ( u i + v i ) , 22 where ˆ a = N v / N u . The data is then rep eatedly sim ulated using these v alues fo r the parameters o f the sampling distribution. F or eac h sim ulation, a v alue of the test statistic is o btained. The distribution so obtained is then an estimate of the distribution of the test statistic under the n ull h yp o thesis, and P - v alues ma y b e computed from this. V aria t io ns in the estimates for ˆ µ i and ˆ a may b e used to chec k robustness of the probability estimates obtained in this wa y . W e hav e just described the approach that w as used to compute the estimated probabilities fo r the example o f Fig. 1. The bin con tents in this case are reasonably large, and this approac h w orks well enough for t his case. Unfortunately , this approach do es ve ry p o orly in t he lo w-statistics realm. W e consider a simple test case: Supp ose o ur data is sampled from a flat distribution with a mean o f 1 coun t in eac h of 100 bins. W e test ho w w ell our estimated n ull h yp othesis w orks for any giv en test statistic, T , as f o llo ws: 1. Generate a pair of histogra ms according to the distribution just describ ed. (a) Compute T for this pa ir of histogra ms. (b) Giv en t he pa ir of histograms, compute the estimated null hy p ot hesis accord- ing to the sp cified prescription ab ov e. (c) Generate many pairs of histograms according to the estimated null h yp othesis in order to obtain an estimated distribution for T . (d) Using the estimated distribution f or T , determine the estimated P - v alue for the v alue of T found in step 1a. 2. Rep eat step 1 many times and mak e a histogram of the estimated P - v alues . Note that this histogram should be uniform if the estimated P - v alues are g o o d estimates. 23 The distributions of the estimated pro babilities for the sev en test statistics under the n ull hypothesis are shown in t he second column of F ig. 10 . If t he null h yp othesis w ere to b e rejected at the estimated 0.0 1 probabilit y , this algo r tihm would actually reject H 0 19% o f the time fo r the χ 2 statistic, 16% of the time for the BD M statistic, 24% of the time fo r the ln λ stat istic, a nd 29% of the time for the L statistics, all unacceptably la r ger tha n the des ired 1%. The KS, CVM, and AD s tatistics are a ll consisten t with the desired 1%. F or comparison, the first column of Fig. 10 sho ws the distribution for a “large stat istics” case, where sampling is from histograms with a mean of 100 counts in eac h bin. W e find that a ll test statistics displa y the desired flat distribution in this case. T able I I summarizes t hese results. T able I I. Probabilit y that the null hy p o thesis will b e rejected with a cut at 1% on the estimated distribution (see text). H 0 is estimated with the bin-by -bin algorithm in the first t w o columns, by the unifor m histogram algo rithm in the third column, and with a Gaussian k ernel estimation in the fourth column. T est statistic Probability (%) Probability (%) Probability (%) Probability (%) Bin mean = 1 00 1 1 (uniform) 1 (ke rnel) H 0 estimate bin-b y-bin bin-b y-bin uniform k ernel χ 2 0 . 97 ± 0 . 2 4 18 . 5 ± 1 . 0 1 . 2 ± 0 . 3 1 . 33 ± 0 . 28 BDM 0 . 91 ± 0 . 2 3 16 . 4 ± 0 . 9 0 . 30 ± 0 . 14 0 . 79 ± 0 . 22 KS 1 . 12 ± 0 . 2 6 0 . 97 ± 0 . 24 1 . 0 ± 0 . 2 1 . 21 ± 0 . 27 CVM 1 . 09 ± 0 . 2 6 0 . 85 ± 0 . 23 0 . 8 ± 0 . 2 1 . 27 ± 0 . 28 AD 1 . 15 ± 0 . 2 6 0 . 85 ± 0 . 23 1 . 0 ± 0 . 2 1 . 39 ± 0 . 29 ln λ 0 . 97 ± 0 . 2 4 24 . 2 ± 1 . 1 1 . 5 ± 0 . 3 2 . 0 ± 0 . 34 ln L 0 . 97 ± 0 . 2 4 28 . 5 ± 1 . 1 0 . 0 ± 0 . 0 0 . 061 ± 0 . 0 61 24 0 5 10 15 20 25 0 5 10 15 20 25 30 0 5 10 15 20 25 0 5 1 0 1 5 2 0 2 5 0 5 10 15 20 25 30 0 5 10 15 20 25 30 Estimated probability 0.0 0.2 0.4 0.6 0.8 1.0 0 1 0 2 0 3 0 F requency 0 5 10 15 20 25 0 5 0 150 250 F requency 0 5 10 15 20 25 0 5 0 100 200 F requency 0 5 10 15 20 25 0 5 10 15 20 25 30 F requency 0 5 10 1 5 2 0 2 5 0 5 1 0 1 5 2 0 2 5 3 0 F requency 0 5 10 15 20 25 30 0 5 10 15 20 25 30 F requency 0 5 10 15 20 25 0 100 200 300 400 Estimated probability F requency 0.0 0.2 0.4 0.6 0.8 1.0 0 5 10 15 20 25 30 Estimated probability 0.0 0.2 0.4 0.6 0.8 1.0 0 100 200 300 400 2 χ BDM KS CVM AD ln ln λ L 0 5 10 15 20 25 30 0 5 10 15 20 25 30 0 5 10 15 20 25 0 5 10 15 20 25 30 0 5 10 15 20 25 30 0 5 10 15 20 25 30 Estimated probability 0.0 0.2 0.4 0.6 0.8 1.0 0 10 20 30 Fig. 10. See caption on next page 25 Fig. 10. (Figure on previous pag e) Distribution of the estimated probabilit y that the test statistic is w orse than that o bserv ed, f or sev en differen t test statistics. The data are generated according to the n ull hypothesis, consisting of 100 bin histograms with a mean of 100 coun ts (left column) o r one count (other columns). The first and second columns are fo r an estimated H0 computed as the w eighted bin-b y-bin a v erage. The third column is fo r an es timated H0 where eac h bin is the a v erage o f the total con ten ts of b oth histograms, divided b y the nu mber of bins. The rig h tmost column is for an estimated H0 estimated with a Gaussian kerne l estimator using the con ten ts of b oth histograms. The χ 2 is computed without com bining bins. It may b e noted that the issue really is one app earing at lo w statistics. W e can giv e some intuition for the observ ed effect. Consider the lik ely scenario at lo w statistics t ha t some bins will ha v e ze ro coun ts in b oth histograms. In this case our algorithm for the estimated n ull h ypothesis yields a zero mean for these bins. The sim ulation used to determine the probabilit y distribution f o r the test statistic will alw a ys ha v e zero coun ts in these bins, that is, there will alwa ys b e ag r eemen t b etw ee n the t w o histograms in thes e bins. Th us, the sim ulation will find that v alues o f t he test statistic are more probable than it should. If w e tried the same study with, sa y , a mean of 1 0 0 counts p er bin, w e would find that the probabilit y estimates are v alid, at least this f ar in to the tails. The left column of Fig. 10 shows that more sensible b eha vior is a chiev ed with la rger statistics. The χ 2 , ln λ , a nd ln L statistics perfo rm essen tially iden tically at hig h statistics, as exp ected, since in the normal approxim atio n they are equiv alent. The AD, CVM, and KS tests are more robust under our estimates of H 0 than the others, as they tend to emphasize the largest differences and are not so sens itiv e to bins that alw ay s agree. F or these statistics, w e see that our pro cedure for estimating H 0 do es w ell ev en for lo w statistics, although w e caution aga in that w e are not examining the far tails o f the distribution. There a re v arious p ossible approache s to salv aging the situation in t he low statis- tics regime. Pe rhaps the simplest is to rely o n the t ypically v alid ass umption that the underlying H 0 distribution is “smo oth”. Then instead o f hav ing an unkno wn parameter for eac h bin, w e o nly need to estimate a f ew parameters t o describ e the smo oth distribution, and effectiv ely more statistics are a v ailable. 26 F or example, w e may rep eat the algorithm for o ur example of a mean of one coun t p er bin, but no w assuming a smo o th background represen ted b y a uniform distribution. This is che ating a bit, sinc e w e p erhaps aren’t supp osed to kno w t ha t this is really what we ar e sampling from, but w e’ll pretend that w e lo ok ed at the data and decided that this w as plausible. As usual, w e would in practice wan t to try other p ossibilities to ev aluate systematic effects. Th us, we estimate: ˆ µ i = N u /k , i = 1 , 2 , . . . , k ˆ ν i = N v /k , i = 1 , 2 , . . . , k . The resulting distributions for the estimated probabilities are shown in the third column of Fig. 10. These distributions are m uc h more reasonable, at least at the lev el of a p er cen t (1 650 sample exp erimen ts are generated in eac h case, and the estimated P v alue is estimated for eac h exp erimen t with 1650 ev aluations of the null h yp othesis for that exp eriment). It should b e remark ed that the ln L and, p erhaps, to a muc h lesser exten t the BDM statistic, do not give the desired 1% result, but now err on the “conserv ative ” side. It ma y b e p ossible to mitig ate this with a differen t algo rithm, but this ha s not b een in v estigated. W e ma y expect the p o w er of these statistics to s uffer under the approac h t ak en here. Since w e aren’t supp o sed t o kno w that our null distribution is uniform, w e also try anot her approac h to get a feeling for whether w e can really do a legitimate analysis. Thus , w e try a k ernel estimator for the n ull distribution, using the sum of the observ ed histograms as input. In this case, w e ha v e chos en a Gaussian k ernel, with a standar d deviation of 2. The “densit y” pac k age in R [1 ] is used for t his. An example of suc h a kerne l estimated distribution is sho wn in Fig. 11. The resulting estimated probability distributions of our test statistics are show n in the righ tmost column of Fig. 10 . In general, this w orks prett y w ell. The bandwidth w as chosen here to b e rather small; a larger bandwidth w ould presumably impro v e the results. 27 0 20 40 60 80 100 0.004 0.008 0.012 0.016 bin Density Fig. 11. Sample Ga ussian k ernel density estimate of the n ull hypothesis ( for sampling from a tr ue n ull). 3.5 Comparison of P o w er of T ests The p o w er dep ends on what the a lternativ e h yp othesis is. Here, w e mostly inv es - tigate adding a Gaussian comp onen t on top of a uniform bac kground distribution. This c hoice is motiv ated b y the scenario where one distribution app ears to sho w some p eaking structure, while the other do es not. W e also lo o k briefly at a differen t extreme, that of a rapidly v arying alternat ive. The data for this study are generated as follo ws: The bac kground (n ull distri- bution) has a mean of one ev en t p er histogram bin. The Gaussian has a mean of 50 and a standard deviation of 5, in units of bin n um b er. W e v ary the amplitude of the Gaussian and coun t how often the null hy p o thesis is rejected at t he 1% confidenc e lev el. The amplitude is measured in p ercen t, for example a 25% Gaussian has a tota l amplitude corresp onding to an av erage of 25 % of the total coun ts in t he histogra m, including the (small) tails extending b ey ond the histogram b oundaries. The G a us- sian coun ts are a dded to the coun ts fr o m the n ull distribution. An example is sho wn in Fig. 12 . 28 Fig. 12. Left: The mean bin con tents for a 2 5 % Gaussian on a flat bac kground of one count/bin (note the suppressed zero). Rig h t: Example sampling fro m the 25% Gaussian (filled blue dots) and from the uniform bac kground (op en red squares). The distribution of estimated probabilit y , under H 0, that the test statistic is w orse tha n that observ ed (i.e., the distribution of P -v alues) is shown in Fig. 13 for sev en differen t test statistics. Three differen t magnitudes o f the Gaussian amplitude are displa y ed. The p ow e r of the tests to reject the n ull hy p o thesis at the 99% confi- dence lev el is summarized in T able I I I and in Fig. 14 fo r sev eral differen t alternativ e h yp othesis amplitudes. T able I I I. Estimates of p ow er for sev en different test statistics, a s a function of H 1 . The comparison histogram ( H 0 ) is g enerated with a ll k = 100 bins Pois son of mean 1. The selection is at the 99% confidence level, t ha t is, the null h yp othesis is accepted with (an estimated) 99% pro ba bilit y if it is true. H0 12.5 25 37.5 50 -25 Statistic % % % % % % χ 2 1 . 2 ± 0 . 3 1 . 3 ± 0 . 3 4 . 3 ± 0 . 5 12 . 2 ± 0 . 8 34 . 2 ± 1 . 2 1 . 6 ± 0 . 3 BDM 0 . 30 ± 0 . 14 0 . 5 ± 0 . 2 2 . 3 ± 0 . 4 10 . 7 ± 0 . 8 40 . 5 ± 1 . 2 0 . 9 ± 0 . 2 KS 1 . 0 ± 0 . 2 3 . 6 ± 0 . 5 13 . 5 ± 0 . 8 48 . 3 ± 1 . 2 91 . 9 ± 0 . 7 7 . 2 ± 0 . 6 CVM 0 . 8 ± 0 . 2 1 . 7 ± 0 . 3 4 . 8 ± 0 . 5 35 . 2 ± 1 . 2 90 . 9 ± 0 . 7 2 . 7 ± 0 . 4 AD 1 . 0 ± 0 . 2 1 . 8 ± 0 . 3 6 . 5 ± 0 . 6 42 . 1 ± 1 . 2 94 . 7 ± 0 . 6 2 . 8 ± 0 . 4 ln λ 1 . 5 ± 0 . 3 1 . 9 ± 0 . 3 6 . 4 ± 0 . 6 22 . 9 ± 1 . 0 67 . 1 ± 1 . 2 2 . 4 ± 0 . 4 ln L 0 . 0 ± 0 . 0 0 . 1 ± 0 . 1 0 . 8 ± 0 . 2 6 . 5 ± 0 . 6 34 . 8 ± 1 . 2 0 . 0 ± 0 . 0 29 F requency 0 5 10 15 20 25 3 0 0 1 0 3 0 5 0 7 0 0 100 200 300 400 500 12.5% 25% 50% 2 χ F requency 0 5 10 15 20 25 30 0 1 0 2 0 3 0 4 0 0 100 300 500 BDM F requency 0 1 0 2 0 3 0 4 0 5 0 6 0 0 5 0 100 150 200 0 5 00 1000 1500 KS F requency 0 1 0 2 0 3 0 4 0 0 2 0 4 0 6 0 8 0 0 500 1000 1500 CVM 0 5 00 1000 1500 F requency 0 1 0 2 0 3 0 4 0 0 2 0 4 0 6 0 8 0 100 AD 0 200 400 600 800 F requency 0 1 0 2 0 3 0 0 2 0 4 0 6 0 8 0 100 ln λ Estimated probability 0.0 0.2 0.4 0.6 0.8 1.0 0 100 200 300 400 500 Estimated probability F requency 0.0 0.2 0.4 0.6 0.8 1.0 0 5 10 20 30 Estimated probability 0.0 0.2 0.4 0.6 0.8 1.0 0 1 0 2 0 3 0 ln L Fig. 13. See caption, next pa g e. 30 Fig. 13. (Figure on previous pa g e) Distribution of estimated probabilit y , under H 0, that the test statistic is w orse than tha t observ ed, for sev en differen t test statistics. The data are generated a ccording to a uniform distribution, consisting o f 100 bin histograms with a mean of 1 count, for one histogra m, and for the other histogram with a uniform distribution plus a Gaussian of strength 12.5% (left column), 25% (middle column), and 50% (right column). The χ 2 is computed without com bining bins. Fig. 14. Summary of p ow er of sev en differen t test statistics, for the alternativ e h yp othesis with a G aussian bump. Left: linear vertical scale; Righ t: logarithmic v ertical scale. [Best view ed in color. A t a n amplitude of 3 5%, the ordering, from to p to b ottom, of the curves is: KS, AD, CVM, ln λ , χ 2 , BDM, ln L .] In T a ble IV we ta k e a lo ok at the p erformance of our sev en statistics for his- tograms with la rge bin con ten ts. It is intere sting that in this large-statistics case, for the χ 2 and similar tests, the p ow er to reject a dip is greater tha n the p ow e r to reject a bump o f the same area. This is presumably b ecause the “error estimates” for the χ 2 are based on the square ro ot of the observ ed counts , and hence give smaller errors for smaller bin conten ts. W e also o bserv e that the comparativ e strength of the KS, CVM, and AD tests v ersus the χ 2 , BD M, ln λ , a nd ln L tests in the small statistics situation is la rgely rev ersed in the large statistics case. 31 T able IV. Estimates of p ow er fo r sev en differe nt test stat istics, as a function of H 1 . The comparison histogram ( H 0 ) is g enerated with a ll k = 100 bins Pois son of mean 100. The selection is at the 99% confidence lev el. H0 5 -5 Statistic % % % χ 2 0 . 91 ± 0 . 23 79 . 9 ± 1 . 0 92 . 1 ± 0 . 7 BDM 0 . 97 ± 0 . 24 80 . 1 ± 1 . 0 92 . 2 ± 0 . 7 KS 1 . 03 ± 0 . 25 77 . 3 ± 1 . 0 77 . 6 ± 1 . 0 CVM 0 . 91 ± 0 . 23 69 . 0 ± 1 . 1 62 . 4 ± 1 . 2 AD 0 . 91 ± 0 . 23 67 . 5 ± 1 . 2 5 7 . 8 ± 1 . 2 ln λ 0 . 91 ± 0 . 23 79 . 9 ± 1 . 0 92 . 1 ± 0 . 7 ln L 0 . 97 ± 0 . 24 7 9 . 9 ± 1 . 0 91 . 9 ± 0 . 7 T o get an idea o f what happ ens for a radically differen t alternativ e to the n ull distribution, w e consider sensitivit y to sampling fr o m the “sa wto ot h” distribution as sho wn in figure 15. This is to b e compared once again to samplings from the unifor m histogram. The results are tabulated in T able V. The “p ercen tage” sawtooth here refers to the fraction of the n ull h yp othesis mean. That is, a 1 00% sa wto oth on a 1 coun t/bin background oscillates b et we en a mean of 0 coun ts/bin and 2 counts /bin. The p erio d of the saw to oth is a lwa ys t wo bins. 0 20 40 60 80 100 0.0 0.5 1.0 1.5 2.0 Bin number H0 or H1 mean 0 20 40 60 80 100 0.0 0.5 1.0 1.5 2.0 Fig. 15. Left: The mean bin con ten ts for a 50% sa wto oth on a flat back ground of one count/bin (blue), compared with the flat bac kground means (red). Righ t: Example sampling from the 50% sawtooth (filled blue dots) and from the unifor m bac kground (op en red squares). 32 In this example, the χ 2 and lik eliho o d ratio tests are the clear winners, with BDM ne xt. The KS, CVM, and AD tests reject the null h yp othesis with the same probabilit y as for sampling from a true n ull distribution. This v ery p o or p erformance for these tests is readily understoo d, as these tests a r e all based on the cum ulativ e distributions, whic h av erage out lo cal oscillations. T able V. Estimates of p ow er for sev en differen t test statistics, for a “sa wto o t h” alternativ e distribution. 50 1 00 Statistic % % χ 2 3 . 7 ± 0 . 5 47 . 8 ± 1 . 2 BDM 1 . 9 ± 0 . 3 33 . 6 ± 1 . 2 KS 0 . 85 ± 0 . 23 1 . 0 ± 0 . 2 CVM 0 . 91 ± 0 . 23 1 . 0 ± 0 . 2 AD 0 . 91 ± 0 . 23 1 . 2 ± 0 . 3 ln λ 4 . 5 ± 0 . 5 49 . 6 ± 1 . 2 ln L 0 . 30 ± 0 . 14 1 0 . 0 ± 0 . 7 4. C onclus i o ns These studies hav e demonstrated some imp ortant lessons in “go o dness-of-fit” testing: 1. There is no single “b est” test for all applications. Statemen ts suc h as “t est X is b etter than test Y” are empt y without giving more con text. F or example, the Anderson-Darling test is often v ery pow erful in t esting normalit y o f da ta against alternativ es with non-nor mal tails (suc h as the Cauc h y distribution) [7 ]. Ho w ev er, w e ha v e seen that it is not alwa ys especially p ow erful in ot her situations. The more w e kno w ab out what w e wish to test for, the more reliably w e can c ho ose a p o we rful test. Eac h of the tests in ve stigated here may b e reasonable to use , dep ending on the circu mstance. Ev en the con tro v ersial L tes t w orks as w ell as the others sometimes. Ho w ev er, there is no kno wn situation where it actually p erforms b etter than all of the others, and indeed the situatio ns where 33 it is observ ed t o p erfor m as w ell are here limited to those where it is equiv alen t to another test. 2. Computing probabilities via s imulations is a very useful tec hnique. How ev er, it m ust b e done with care. The issue of tests with an incompletely sp ecified n ull h yp othesis is particularly insidious. Simply generating a distribution according to some assumed null distribution can lead to badly w rong results. Where t his could o ccur, it is imp ortan t to v erify the v alidit y of the pro cedure. Note that we ha v e only lo ok ed into the tails to the 1% lev el. The v alidit y m ust b e c hec k ed to whatev er lev el of probability is nee ded for the results. Th us, w e cannot blindly assume the r esults quoted here at t he 1% lev el will still b e true at, say , the 0.1% lev el. W e hav e concen trated in this pap er on the sp ecific question of comparing t w o histograms. Ho w ev er, the general considerations apply more generally , to testing whether tw o datasets are consisten t with b eing drawn from the same distribution, and to testing whether a dataset is consisten t with a predicted distribution. The KS, CVM, AD, ln L , and L tests may all b e constructed for t hese other situatio ns (as w ell as the χ 2 and BDM, if we bin the data ). References 1. R Deve lopmen t Core T eam, R: A La ng uage and Environmen t for Statistical Com- puting, R F oundation for Statistical Computing, Vienna 2007, ISBN 3-900051- 07-0, http://www .r-pr o ject.org/. 2. E. L. Lehmann and Joseph P . Romano, T esting Statistical Hyp otheses , Third edition, Springer, New Y ork (2 0 05), Theorem 4.4.1. 3. h ttp://www.herine.net/stat/soft w are/dbinom.html. 4. T. W. Anderson, On the Distribution of the Tw o-Sample Cram ´ er-V on Mises Criterion , Annals Math. Stat., 33 (1962 ) 1148. 5. F. W. Sc holz and M. A. Stephe ns, k - Sample Anderson-Darling T ests , J. Amer. Stat. Asso c. 82 (1987 ) 918. 34 6. D. J. Best, No npa r a metric Comparison of Tw o Histograms , Biometrics 50 (1994) 538. 7. M. A. Stephens, EDF Statistics for Go o dness of Fit and Some Comparisons , Jour. Amer. Stat. Asso c. 69 (1974) 730. 35

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment