Tight Bounds on the Capacity of Binary Input Random CDMA Systems

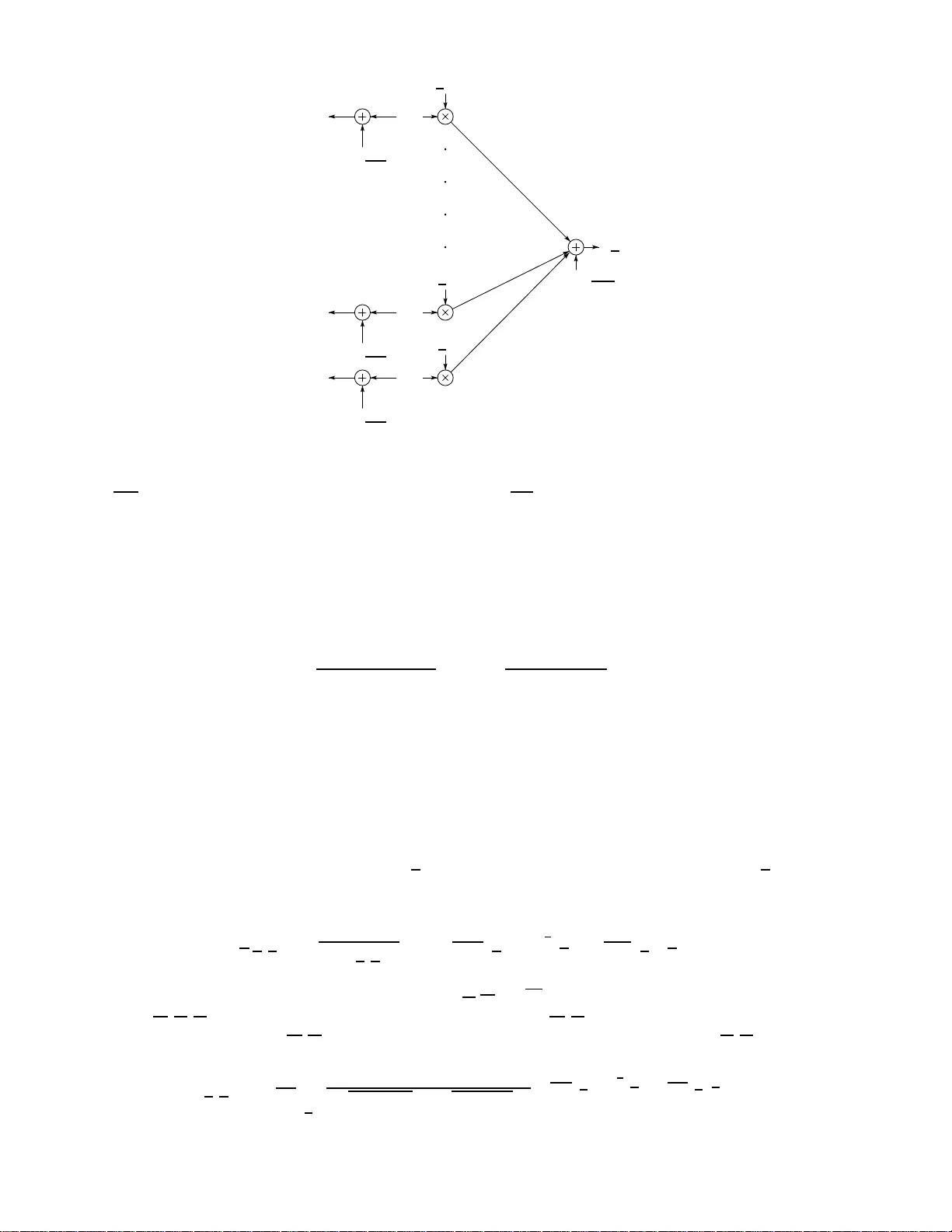

We consider multiple access communication on a binary input additive white Gaussian noise channel using randomly spread code division. For a general class of symmetric distributions for spreading coefficients, in the limit of a large number of users,…

Authors: Satish Babu Korada, Nicolas Macris