Sketch-Based Estimation of Subpopulation-Weight

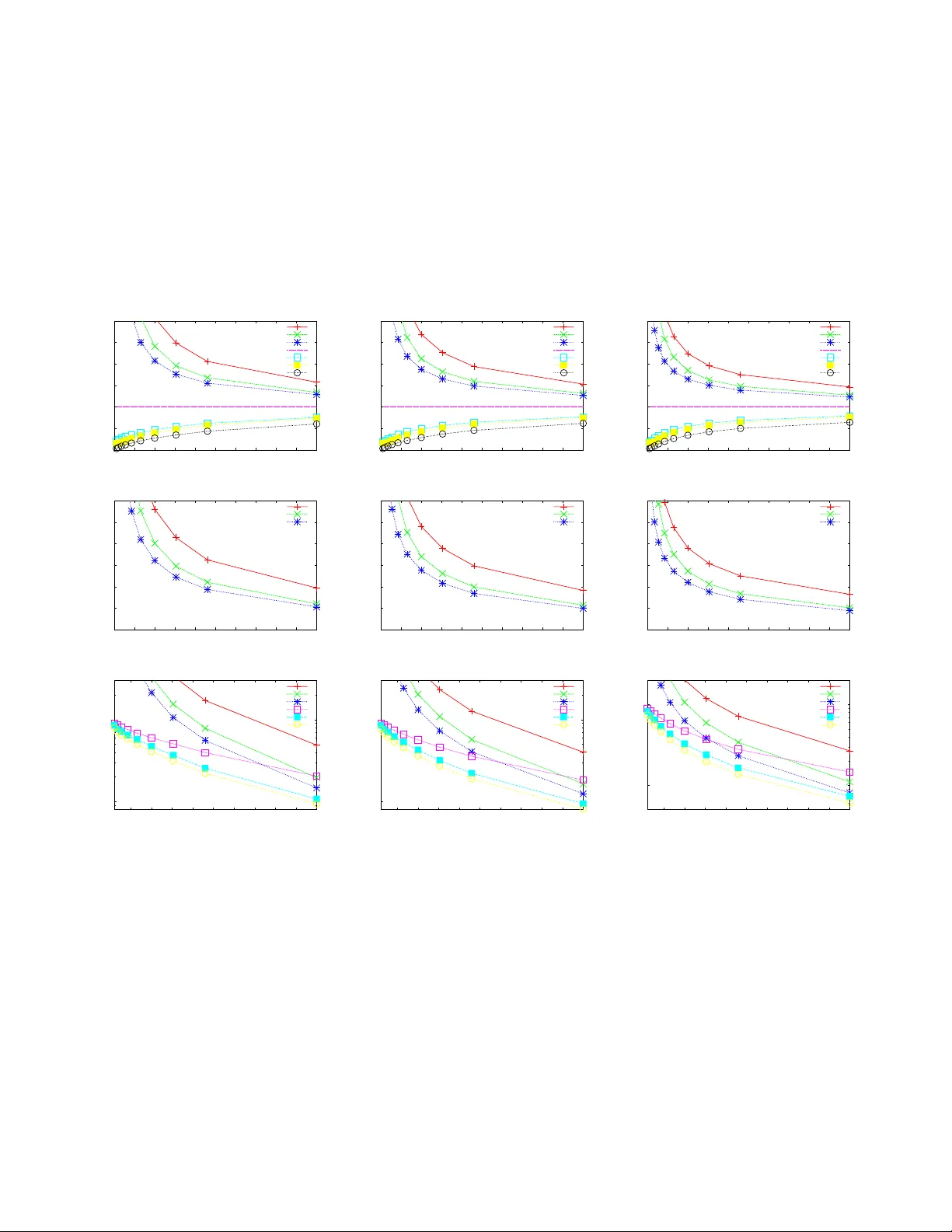

Summaries of massive data sets support approximate query processing over the original data. A basic aggregate over a set of records is the weight of subpopulations specified as a predicate over records' attributes. Bottom-k sketches are a powerful su…
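To make the notion of a subpopulation-weight query concrete, here is a minimal sketch of a bottom-k summary and a weight estimate computed from it. It is an illustration under stated assumptions, not necessarily the estimator studied in the paper. It assumes priority-style ranks (a uniform draw divided by the record's weight) and the standard priority-sampling adjusted weight `max(w, 1/tau)`, where `tau` is the (k+1)-th smallest rank. The function names `bottom_k_sketch` and `estimate_weight` and the `(key, weight, attrs)` record layout are hypothetical.

```python
import heapq
import random


def bottom_k_sketch(records, k, seed=0):
    """Keep the k records with the smallest ranks.

    records: iterable of (key, weight, attrs) with weight > 0 (assumed layout).
    Returns (sample, tau), where tau is the (k+1)-th smallest rank,
    or None when every record fits in the sketch.
    """
    rng = random.Random(seed)
    ranked = []
    for key, weight, attrs in records:
        u = 1.0 - rng.random()          # uniform draw in (0, 1]
        ranked.append((u / weight, key, weight, attrs))  # priority-style rank
    smallest = heapq.nsmallest(k + 1, ranked, key=lambda r: r[0])
    if len(smallest) <= k:
        return smallest, None           # sketch holds all records
    return smallest[:k], smallest[k][0]


def estimate_weight(sample, tau, predicate):
    """Estimate the total weight of records whose attrs satisfy predicate."""
    total = 0.0
    for _rank, _key, weight, attrs in sample:
        if predicate(attrs):
            # Adjusted weight: exact if nothing was dropped, else max(w, 1/tau).
            total += weight if tau is None else max(weight, 1.0 / tau)
    return total


if __name__ == "__main__":
    # Hypothetical data: 20 records with weights 1..20 and one boolean attribute.
    recs = [(i, float(i), {"even": i % 2 == 0}) for i in range(1, 21)]
    sample, tau = bottom_k_sketch(recs, k=5, seed=1)
    print(estimate_weight(sample, tau, lambda a: a["even"]))
```

The predicate is supplied only at query time, so one sketch can serve many different subpopulation queries; when `k` is at least the number of records, the estimate reduces to the exact weight.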

Authors: Edith Cohen, Haim Kaplan