Multiparty Communication Complexity of Disjointness

We obtain a lower bound of n^Omega(1) on the k-party randomized communication complexity of the Disjointness function in the `Number on the Forehead' model of multiparty communication when k is a constant. For k=o(loglog n), the bounds remain super-p…

Authors: Arkadev Chattopadhyay, Anil Ada

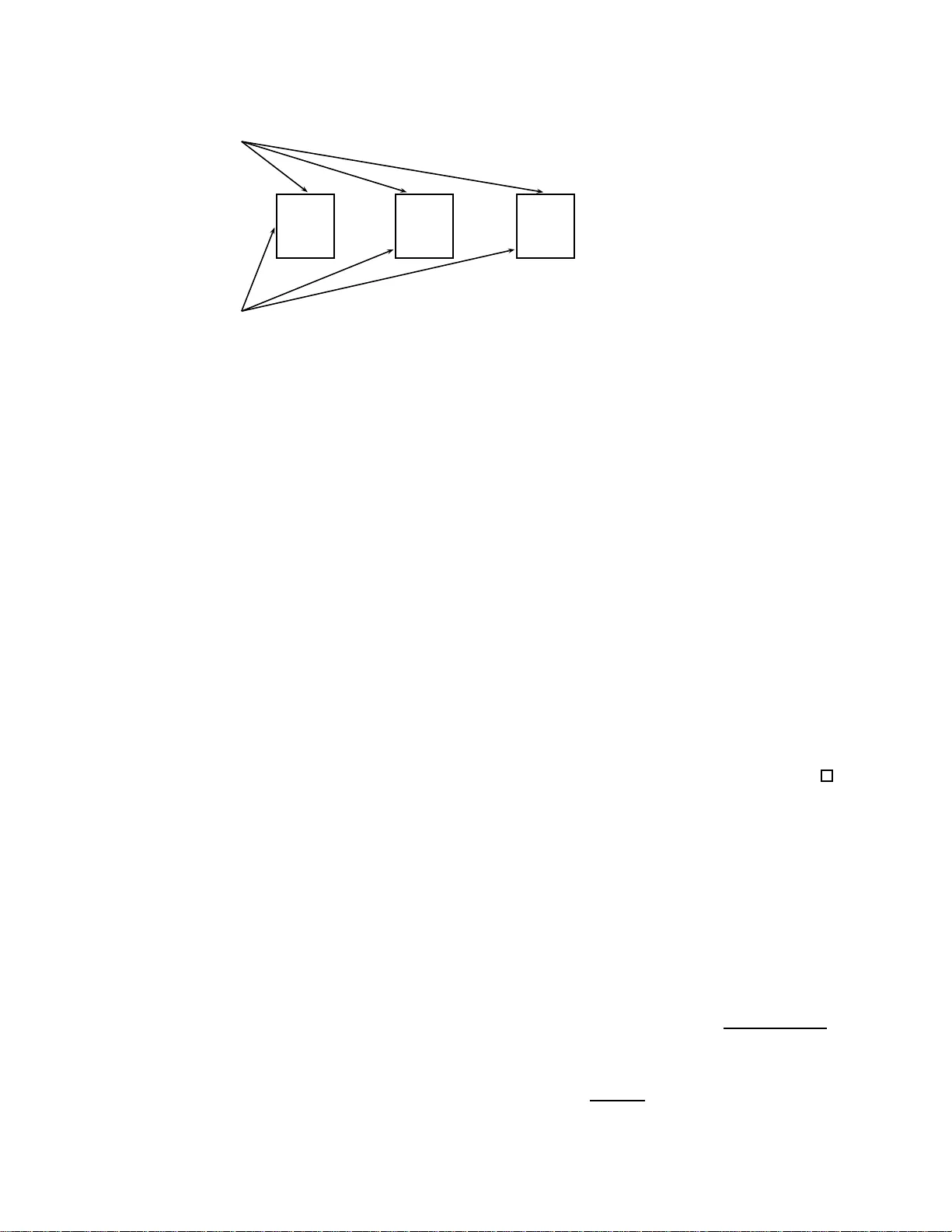

Arkadev Chattopadhyay and Anil Ada∗
School of Computer Science, McGill University, Montreal, Canada
achatt3,aada@cs.mcgill.ca

Abstract

We obtain a lower bound of $\Omega\left(\frac{n^{1/(k+1)}}{2^{2^k}(k-1)2^{k-1}}\right)$ on the $k$-party randomized communication complexity of the Disjointness function in the 'Number on the Forehead' model of multiparty communication. In particular, this yields a bound of $n^{\Omega(1)}$ when $k$ is a constant. The previous best lower bound for three players until recently was $\Omega(\log n)$. Our bound separates the communication complexity classes $\mathrm{NP}^{CC}_k$ and $\mathrm{BPP}^{CC}_k$ for $k = o(\log\log n)$. Furthermore, by the results of Beame, Pitassi and Segerlind [4], our bound implies proof size lower bounds for tree-like, degree $k-1$ threshold systems and superpolynomial size lower bounds for Lovász-Schrijver proofs.

Sherstov [16] recently developed a novel technique to obtain lower bounds on two-party communication using the approximate polynomial degree of boolean functions. We obtain our results by extending his technique to the multi-party setting using ideas from Chattopadhyay [8].

A similar bound for Disjointness has been recently and independently obtained by Lee and Shraibman.

1 Introduction

Chandra, Furst and Lipton [7] introduced the 'Number on the Forehead' model of multiparty communication as an extension of Yao's [20] two-party communication model. This model, besides being interesting in its own right, has found numerous connections with circuit complexity, proof complexity, branching programs, pseudo-random generators and other areas of theoretical computer science. Proving both upper and lower bounds for this model remains a very challenging task, as it is known that the overlap of information accessible to players provides significant power to it.
In fact, proving a super-polylogarithmic lower bound on the communication needed by a poly-logarithmic number of players for computing a function $f$ in the restricted setting of simultaneous deterministic communication is enough to show that $f$ is not in $\mathrm{ACC}^0$, a class for which no strong bounds are known. Although several efforts [2, 9, 14, 10] have been made, this goal currently remains out of reach, as no superlogarithmic lower bounds exist for even $\log n$ players.

More modestly, one would like to be able to determine the communication complexity of simple functions for at least a constant number of players. However, despite intensive research (see for example [5, 6, 19, 18]), the best known lower bound on the communication complexity of simple functions like Disjointness and Pointer Jumping was $\Omega(\log n)$ even for three players. The root cause of this problem is that there was essentially only one method that was the backbone of almost all strong lower bounds. This method is known as the discrepancy method and was introduced in the seminal work of Babai, Nisan and Szegedy [2]. It is however known that for functions like Disjointness this method at best yields $\Omega(\log n)$ lower bounds.

∗ Authors are supported by research grants of Prof. D. Thérien. The first author thanks M. David and T. Pitassi for several discussions.

Razborov [15] introduced the multi-dimensional discrepancy method to establish a tight relationship between the quantum communication complexity of functions induced by a symmetric base function and the approximation degree of the base function. Recently, Sherstov [16] developed an elegant technique that is simpler and generalizes the results of Razborov by obviating the need for the base function to be symmetric.
More importantly for us, the technique in [16] shows that the classical discrepancy method can be modified in a natural way that allows one to obtain strong bounds on two-party quantum communication with bounded error even for functions like Disjointness that have large discrepancy. In this work, we suitably modify this technique to extend it to the multi-party setting. In order to achieve this, we use tools developed in Chattopadhyay [8], extending the earlier work of Sherstov [17], for estimating discrepancy under certain non-uniform distributions.

Our result has interesting consequences for communication complexity classes and proof complexity. It provides the first example of an explicit function that has small non-deterministic communication complexity, but exponentially high randomized complexity. In the language of complexity classes, this separates $\mathrm{BPP}^{CC}_k$ and $\mathrm{NP}^{CC}_k$ for $k = o(\log\log n)$. In fact, the separation is exponential when $k$ is any constant. Although such a separation was already known from the work of [3], before our work no explicit function was known to separate these classes.

By the work of Beame, Pitassi and Segerlind [4], our lower bounds on the $k$-party complexity of Disjointness imply strong lower bounds on the proof size for a family of proof systems known as tree-like, degree $k-1$ threshold systems. Proving lower bounds for these systems was a major open problem in propositional proof complexity.

1.1 Our Main Result

Let $y_1, \dots, y_{k-1}$ be $k-1$ $n$-bit binary strings. Define the $(k-1) \times n$ boolean matrix $A$ obtained by placing $y_i$ in the $i$-th row of $A$. For $x \in \{0,1\}^n$, let $x \Leftarrow y_1, \dots, y_{k-1}$ be the $n$-bit string $x_{i_1} x_{i_2} \dots x_{i_t} 0^{n-t}$, where $i_1, \dots, i_t$ are the indices of the all-one columns of $A$. Let $g : \{0,1\}^n \to \{-1,1\}$ be a base function.
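
The selection operator $x \Leftarrow y_1, \dots, y_{k-1}$ just defined can be illustrated with a minimal Python sketch. The function names `mask`, `nor` and `parity`, the 0-based indexing, and the convention that $-1$ encodes "true" are assumptions made for this illustration only; they are not fixed by the text.

```python
from typing import Sequence

Bits = Sequence[int]


def mask(x: Bits, ys: Sequence[Bits]) -> list[int]:
    """Return x <= y_1,...,y_{k-1}: the bits of x at the all-one columns of the
    matrix whose rows are the y_i, in index order, padded with zeros to length n."""
    n = len(x)
    assert all(len(y) == n for y in ys)
    all_one_columns = [j for j in range(n) if all(y[j] == 1 for y in ys)]
    selected = [x[j] for j in all_one_columns]
    return selected + [0] * (n - len(selected))


def nor(z: Bits) -> int:
    # Assumed convention: -1 encodes "true", +1 encodes "false".
    return -1 if not any(z) else 1


def parity(z: Bits) -> int:
    # Same assumed convention: -1 when the number of ones is odd.
    return -1 if sum(z) % 2 == 1 else 1


x = [1, 0, 1, 1, 0]
y1 = [1, 1, 0, 1, 0]
y2 = [0, 1, 1, 1, 1]
z = mask(x, [y1, y2])  # all-one columns are 1 and 3, so z = [0, 1, 0, 0, 0]
print(z, nor(z), parity(z))
```

In this example the three strings share index 3, so the sets they encode are not disjoint and `nor(z)` evaluates to $+1$ under the assumed encoding. Composing a base function $g$ with `mask` in this way is exactly the pattern used for the functions studied in the paper.
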
We define $G^g_k : (\{0,1\}^n)^k \to \{-1,1\}$ by $G^g_k(x, y_1, \dots, y_{k-1}) := g(x \Leftarrow y_1, \dots, y_{k-1})$. Observe that $G^{\mathrm{PARITY}}_k$ is the Generalized Inner Product function and $G^{\mathrm{NOR}}_k$ is the Disjointness function. Our main result shows how to use the high approximation degree of a base function to generate a function with high randomized communication complexity. Let $R^\epsilon_k(f)$ denote the randomized $k$-party communication complexity of $f$ with advantage $\epsilon$. Then,

Theorem 1.1. Let $f : \{0,1\}^m \to \{-1,1\}$ have $\delta$-approximate degree $d$. Let $n \ge \left(\frac{2^{2^k}(k-1)e}{d}\right)^{k-1} m^k$, and $f' : \{0,1\}^n \to \{-1,1\}$ be such that $f(z) = f'(z0^{n-m})$. Then

$$R^\epsilon_k(G^{f'}_k) \ge \frac{d}{2^{k-1}} + \log(\delta + 2\epsilon - 1).$$

As a corollary we show that $R^\epsilon_k(\mathrm{DISJ}_k) = \Omega\left(\frac{n^{1/(k+1)}}{2^{2^k}(k-1)2^{k-1}}\right)$ for every constant $\epsilon > 0$. In brief, this follows from the following facts. Let $\mathrm{NOR}_n$ denote the NOR function for inputs of length $n$. Then $f' = \mathrm{NOR}_n$ and $f = \mathrm{NOR}_m$ satisfy $f(z) = f'(z0^{n-m})$, and by a result of Paturi [13], we know that the $1/3$-approximate degree of $\mathrm{NOR}_m$ is $\Theta(\sqrt{m})$.

A similar bound for the Disjointness function has been recently and independently obtained by Lee and Shraibman [12].

1.2 Proof Overview

Sherstov [16] devised a novel strategy to make a passage from approximation degree of boolean functions to lower bounds on two-party communication complexity. We adapt this strategy for our purpose. This adaptation is outlined in Figure 1. We use three main ingredients, the first of which is the Generalized Discrepancy Method. The classical discrepancy method states that if a function has low discrepancy, then it has high randomized communication complexity.
In the generalized discrepancy method this idea is extended as follows: if a function $g$ correlates well with $f$ and has low discrepancy, then $f$ has high randomized communication complexity. The second ingredient is the "Approximation/Orthogonality Principle" of Sherstov [16]. It states that given a function $f$ with high approximation degree, we can find a function $g$ that correlates well with $f$, and a distribution $\mu$ such that $g$ is orthogonal to every low degree polynomial under $\mu$. The third ingredient, called the Orthogonality-Discrepancy Lemma, is derived from the work of Chattopadhyay [8]. This takes a function that is orthogonal with low degree polynomials and constructs a new masked function that has low discrepancy.

We can then summarize the strategy as follows. We start with a function $f : \{0,1\}^n \to \{-1,1\}$ with high approximation degree. By the Approximation/Orthogonality Principle, we obtain $g$ that highly correlates with $f$ and is orthogonal with low degree polynomials. From $f$ and $g$ we construct new masked functions $F^f_k$ and $F^g_k$, similar to the construction of $G^f_k$. Since $g$ is orthogonal to low degree polynomials, by the Orthogonality-Discrepancy Lemma we deduce that $F^g_k$ has low discrepancy under an appropriate distribution. Under this distribution $F^g_k$ and $F^f_k$ are highly correlated and therefore, applying the Generalized Discrepancy Method, we conclude that $F^f_k$ has high randomized communication complexity. This implies, by the construction of $F^f_k$, that the randomized communication complexity of $G^f_k$ is high.

[Figure 1: Proof outline. Nodes: $f$ with high approximation degree; $g$ with $\mathbb{E}_\mu\, g(x)p(x) = 0$ for low $\deg(p)$ (Approximation/Orthogonality); $F^f_k$ and $F^g_k$ with high correlation; $\mathrm{disc}(F^g_k)$ is low (Orthogonality-Discrepancy); $R^\epsilon_k(F^f_k)$ is high (Generalized Discrepancy Method); $R^\epsilon_k(G^f_k)$ is high.]

2 Preliminaries

2.1 Multiparty Communication Model

In the multiparty communication model introduced by [7], $k$ players $P_1, \dots, P_k$ wish to collaborate to compute a function $f : \{0,1\}^n \to \{-1,1\}$. The $n$ input bits are partitioned into $k$ sets $X_1, \dots, X_k \subseteq [n]$ and each participant $P_i$ knows the values of all the input bits except the ones of $X_i$. This game is often referred to as the "Number/Input on the forehead" model since it is convenient to picture that player $i$ has the bits of $X_i$ written on its forehead, available to everyone but itself. Players exchange bits, according to an agreed upon protocol, by writing them on a public blackboard. The protocol specifies whose turn it is to speak, and what the player broadcasts as a function of the communication history and the input the player has access to. The protocol's output is a function of what is on the blackboard after the protocol's termination. We denote by $D_k(f)$ the deterministic $k$-party communication complexity of $f$, i.e. the number of bits exchanged in the best deterministic protocol for $f$ on the worst case input.

By allowing the players to access a public random string and the protocol to err, one defines the randomized communication complexity of a function. We say that a protocol $P$ computes $f$ with $\epsilon$ advantage if the probability that $P$ and $f$ agree is at least $1/2 + \epsilon$ for all inputs. We denote by $R^\epsilon_k(f)$ the cost of the best protocol that computes $f$ with advantage $\epsilon$.

One further introduces non-determinism in protocols by allowing 'God' to help the players by furnishing a proof string. As is usual with non-determinism in other models, a correct non-deterministic protocol $P$ for $f$ has the following property: on every input $x$ at which $f(x) = -1$, $P(x, y) = -1$ for some proof string $y$, and whenever $f(x) = 1$, $P(x, y) = 1$ for all proof strings $y$.
The length of the proof string $y$ is now included in the cost of $P$ on an input and $N_k(f)$ denotes the cost of the best non-deterministic protocol for $f$ on the worst input.

Communication complexity classes were introduced for two players in [1], in which an "efficient" protocol was defined to have cost no more than $\mathrm{polylog}(n)$. This idea naturally extends to the multiparty model, giving rise to the following classes: $\mathrm{P}^{CC}_k := \{f \mid D_k(f) = \mathrm{polylog}(n)\}$, $\mathrm{BPP}^{CC}_k := \{f \mid R^{1/3}_k(f) = \mathrm{polylog}(n)\}$ and $\mathrm{NP}^{CC}_k := \{f \mid N_k(f) = \mathrm{polylog}(n)\}$. Determining the relationship among these classes is an interesting research theme within the broader area of understanding the relative power of determinism, non-determinism and randomness in computation. While Beame et al. [3] show that $\mathrm{BPP}^{CC}_k \ne \mathrm{NP}^{CC}_k$, no explicit function was known that separated these classes.

2.2 Cylinder Intersections and Discrepancy

The key combinatorial object that arises in the study of multiparty communication is a cylinder-intersection. A $k$-cylinder in the $i$-th dimension is a subset $S$ of $Y_1 \times \cdots \times Y_k$ with the property that membership in $S$ is independent of the $i$-th coordinate. A set $S$ is called a cylinder-intersection if $S = \cap_{i=1}^k S_i$, where $S_i$ is a cylinder in the $i$-th dimension. One can represent a $k$-cylinder in the $i$-th dimension by its characteristic function $\phi_i : (\{0,1\}^n)^k \to \{0,1\}$. Here $\phi_i(y_1, \dots, y_k)$ does not depend on $y_i$. A cylinder intersection is represented as the product $\phi(y_1, \dots, y_k) = \phi_1(y_1, \dots, y_k) \cdots \phi_k(y_1, \dots, y_k)$. It is well known that a protocol that computes $f$ with cost $c$ partitions the input space of $f$ into at most $2^c$ monochromatic cylinder intersections.

An important measure, defined for a function $f : Y_1 \times \cdots \times Y_k \to \{-1,1\}$, is its discrepancy.
With respect to any probability distribution $\mu$ over $Y_1 \times \cdots \times Y_k$ and cylinder intersection $\phi$, define

$$\mathrm{disc}^\phi_{k,\mu}(f) = \left|\Pr_\mu\left[f(y_1, \dots, y_k) = 1 \wedge \phi(y_1, \dots, y_k) = 1\right] - \Pr_\mu\left[f(y_1, \dots, y_k) = -1 \wedge \phi(y_1, \dots, y_k) = 1\right]\right|.$$

Since $f$ is $-1/1$ valued, it is not hard to verify that equivalently:

$$\mathrm{disc}^\phi_{k,\mu}(f) = \left|\mathbb{E}_{(y_1, \dots, y_k) \sim \mu}\, f(y_1, \dots, y_k)\,\phi(y_1, \dots, y_k)\right|. \tag{1}$$

The discrepancy of $f$ w.r.t. $\mu$, denoted by $\mathrm{disc}_{k,\mu}(f)$, is $\max_\phi \mathrm{disc}^\phi_{k,\mu}(f)$. To reduce notational clutter, we often drop $\mu$ from the subscript when the distribution is clear from the context.

We now state the discrepancy method, which connects the discrepancy and the randomized communication complexity of a function.

Theorem 2.1 (see [2, 11]). Let $0 < \epsilon \le 1/2$ be any real and $k \ge 2$ be any integer. For every function $f : Y_1 \times \cdots \times Y_k \to \{1,-1\}$ and distribution $\mu$ on inputs from $Y_1 \times \cdots \times Y_k$,

$$R^\epsilon_k(f) \ge \log\left(\frac{2\epsilon}{\mathrm{disc}_{k,\mu}(f)}\right). \tag{2}$$

2.3 Fourier Expansion

We consider the vector space of functions from $\{0,1\}^n \to \mathbb{R}$. Equip this space with the standard inner product

$$\langle f, g \rangle = \mathbb{E}_{x \sim U}\, f(x) g(x). \tag{3}$$

For each $S \subseteq [n]$, define $\chi_S(x) = (-1)^{\sum_{i \in S} x_i}$. Then it is easy to verify that the set of functions $\{\chi_S \mid S \subseteq [n]\}$ forms an orthonormal basis for this inner product space, and so every $f$ can be expanded in terms of its Fourier coefficients

$$f(x) = \sum_{S \subseteq [n]} \hat{f}(S)\, \chi_S(x) \tag{4}$$

where $\hat{f}(S)$ is defined as $\langle f, \chi_S \rangle$. This expansion is unique and the exact degree of $f$ is defined to be the largest $d$ such that there exists $S \subseteq [n]$ with $|S| = d$ and $\hat{f}(S) \ne 0$.

2.4 Approximation Degree

A natural question is the following. How large a degree is needed if we want to simply approximate $f$ well?
Define the $\epsilon$-approximate degree of $f$, denoted by $\deg_\epsilon(f)$, to be the smallest integer $d$ for which there exists a function $\phi$ of exact degree $d$ such that

$$\max_{x \in \{0,1\}^n} \left|f(x) - \phi(x)\right| \le \epsilon.$$

For any $D : \{0, 1, \dots, n\} \to \{1,-1\}$, define

$$\ell_0(D) \in \{0, 1, \dots, \lfloor n/2 \rfloor\}, \qquad \ell_1(D) \in \{0, 1, \dots, \lceil n/2 \rceil\}$$

such that $D$ is constant over the interval $[\ell_0(D), n - \ell_1(D)]$ and $\ell_0(D)$ and $\ell_1(D)$ are the smallest possible values for which this happens. Paturi's theorem provides bounds on the approximate degree of symmetric functions.

Theorem 2.2 (Paturi [13]). Let $f : \{0,1\}^n \to \{1,-1\}$ be any symmetric function induced from the predicate $D : \{0, \dots, n\} \to \{1,-1\}$. Then,

$$\deg_{1/3}(f) = \Theta\left(\sqrt{n(\ell_0(D) + \ell_1(D))}\right). \tag{5}$$

In particular, the $1/3$-approximate degree of NOR is $\Theta(\sqrt{n})$.

3 The Generalized Discrepancy Method

Babai, Nisan and Szegedy [2] estimated the discrepancy of functions like $\mathrm{GIP}_k$ w.r.t. $k$-wise cylinder intersections and the uniform distribution. These estimates resulted in the first strong lower bounds in the $k$-party model via Theorem 2.1. Unfortunately, the applicability of Theorem 2.1 is limited to those functions that have small discrepancy. Disjointness is a classical example of a function that does not have small discrepancy.

Lemma 3.1 (Folklore). Under every distribution $\mu$ over the inputs, $\mathrm{disc}_{k,\mu}(\mathrm{DISJ}_k) = \Omega(1/n)$.

Proof. Let $X^+$ and $X^-$ be the set of disjoint and non-disjoint inputs respectively. The first thing to observe is that if $|\mu(X^+) - \mu(X^-)| = \Omega(1/n)$, then we are done immediately by considering the discrepancy over the intersection corresponding to the entire set of inputs. Hence, we may assume $|\mu(X^+) - \mu(X^-)| = o(1/n)$. Thus, $\mu(X^-) \ge 1/2 - o(1/n)$.
However, $X^-$ can be covered by the following $n$ monochromatic cylinder intersections: let $C_i$ be the set of inputs in which the $i$-th column is an all-one column. Then $X^- = \cup_{i=1}^n C_i$. By averaging, there exists an $i$ such that $\mu(C_i) \ge 1/(2n) - o(1/n^2)$. Taking the discrepancy of this $C_i$, we are done.

It is therefore impossible to obtain better than $\Omega(\log n)$ bounds on the communication complexity of Disjointness by a direct application of the discrepancy method. In fact, the above argument shows that Theorem 2.1 fails to give better than polylogarithmic lower bounds for every function that is in $\mathrm{NP}^{CC}_k$ or $\mathrm{co\text{-}NP}^{CC}_k$.

Sherstov [16, Sec 2.4] provides a nice reinterpretation of Razborov's discrepancy method for two-party quantum communication complexity by pointing out the following: in order to prove a lower bound on the communication complexity of a function $f$ in any bounded error model, it is sufficient to find a function $g$ that correlates well with $f$ under some distribution but has large communication complexity. Based on this observation, we modify the discrepancy method to the following:

Lemma 3.2 (Generalized Discrepancy Method). Denote $X = Y_1 \times \cdots \times Y_k$. Let $f : X \to \{-1,1\}$ and $g : X \to \{-1,1\}$ be such that under some distribution $\mu$ we have $\mathrm{Corr}_\mu(f, g) \ge \delta$. Then

$$R^\epsilon_k(f) \ge \log\left(\frac{\delta + 2\epsilon - 1}{\mathrm{disc}_{k,\mu}(g)}\right). \tag{6}$$

Proof. Let $P$ be a $k$-party randomized protocol that computes $f$ with advantage $\epsilon$ and cost $c$. Then for every distribution $\mu$ over the inputs, we can derive a deterministic $k$-player protocol $P'$ for $f$ that errs only on at most a $1/2 - \epsilon$ fraction of the inputs (w.r.t. $\mu$) and has cost $c$. Take $\mu$ to be a distribution satisfying the correlation inequality. We know $P'$ partitions the input space into at most $2^c$ monochromatic (w.r.t. $P'$) cylinder intersections.
Let $\mathcal{C}$ denote this set of cylinder intersections. Then,

$$\delta \le \mathbb{E}_{x \sim \mu}\, f(x) g(x) = \sum_x f(x) g(x) \mu(x) \le \left|\sum_x P'(x) g(x) \mu(x)\right| + \left|\sum_x \left(f(x) - P'(x)\right) g(x) \mu(x)\right|.$$

Since $P'$ is constant over every cylinder intersection $S$ in $\mathcal{C}$, we have

$$\delta \le \sum_{S \in \mathcal{C}} \left|\sum_{x \in S} P'(x) g(x) \mu(x)\right| + \sum_x |g(x)|\,\left|f(x) - P'(x)\right| \mu(x) \le \sum_{S \in \mathcal{C}} \left|\sum_{x \in S} g(x) \mu(x)\right| + \sum_x \left|f(x) - P'(x)\right| \mu(x) \le 2^c\, \mathrm{disc}_{k,\mu}(g) + 2(1/2 - \epsilon).$$

This gives us immediately (6).

Observe that when $f = g$, i.e. $\mathrm{Corr}_\mu(f, g) = 1$, we get the classical discrepancy method (Theorem 2.1).

4 Generating Functions With Low Discrepancy

4.1 Masking Schemes

We have already defined one masking scheme through the notation $x \Leftarrow y_1, \dots, y_{k-1}$. This allowed us to define $G^g_k$ for a base function $g$. Well-known functions such as $\mathrm{GIP}_k$ and $\mathrm{DISJ}_k$ are representable in this notation by $G^{\mathrm{PARITY}}_k$ and $G^{\mathrm{NOR}}_k$ respectively.

We now define a second masking scheme which plays a crucial role in lower bounding the communication complexity of $G^g_k$. This masking scheme is obtained by first slightly simplifying the pattern matrices in [16] and then generalizing the simplified matrices to higher dimension for dealing with multiple players.

[Figure 2: Illustration of the masking scheme $x \leftarrow S_1, S_2$. The parameters are $\ell = 3$, $m = 3$, $n = 27$. In the depicted example, $x \leftarrow S_1, S_2 = 001$.]

Let $S_1, \dots, S_{k-1} \in [\ell]^m$ for some positive $\ell$ and $m$. Let $x \in \{0,1\}^n$ where $n = \ell^{k-1} m$. Here it is convenient to think of $x$ as divided into $m$ equal blocks where each block is a $(k-1)$-dimensional array with each dimension having size $\ell$. Each $S_i$ is a vector of length $m$ with each co-ordinate being an element from $\{1, \dots, \ell\}$. The $k-1$ vectors $S_1, \dots, S_{k-1}$ jointly unmask $m$ bits of $x$, denoted by $x \leftarrow S_1, \dots, S_{k-1}$, precisely one from each block of $x$, i.e.

$$x[1]\left[S_1[1], S_2[1], \dots, S_{k-1}[1]\right],\ \dots,\ x[m]\left[S_1[m], S_2[m], \dots, S_{k-1}[m]\right],$$

where $x[i]$ refers to the $i$-th block of $x$. See Figure 2 for an illustration of this masking scheme.

For a given base function $f : \{0,1\}^m \to \{-1,1\}$, we define $F^f_k : \{0,1\}^n \times ([\ell]^m)^{k-1} \to \{-1,1\}$ as $F^f_k(x, S_1, \dots, S_{k-1}) = f(x \leftarrow S_1, \dots, S_{k-1})$.

Lemma 4.1. If $f : \{0,1\}^m \to \{-1,1\}$ and $f' : \{0,1\}^n \to \{-1,1\}$ have the property that $f(z) = f'(z0^{n-m})$ (here $n = \ell^{k-1} m$ as described in the construction of $F^f_k$), then

$$R^\epsilon_k(F^f_k) \le R^\epsilon_k(G^{f'}_k). \tag{7}$$

Proof Sketch. Observe that there are functions $\Gamma_i : [\ell]^m \to \{0,1\}^n$ such that $F^f_k(x, S_1, \dots, S_{k-1}) = G^{f'}_k(x, \Gamma_1(S_1), \dots, \Gamma_{k-1}(S_{k-1}))$ for all $x, S_1, \dots, S_{k-1}$. Therefore the players can privately convert their inputs and apply the protocol for $G^{f'}_k$.

Note that the proof shows (7) holds not just for randomized but for any model of communication.

4.2 Orthogonality and Discrepancy

Now we prove that if the base function $f$ in our masking scheme has a certain nice property, then the masked function $F^f_k$ has small discrepancy. To describe the nice property, let us define the following: for a distribution $\mu$ on the inputs, $f$ is $(\mu, d)$-orthogonal if $\mathbb{E}_{x \sim \mu}\, f(x) \chi_S(x) = 0$ for all $|S| < d$. Then,

Lemma 4.2 (Orthogonality-Discrepancy Lemma). Let $f : \{-1,1\}^m \to \{-1,1\}$ be any $(\mu, d)$-orthogonal function for some distribution $\mu$ on $\{-1,1\}^m$ and some integer $d > 0$. Derive the probability distribution $\lambda$ on $\{-1,1\}^n \times ([\ell]^m)^{k-1}$ from $\mu$ as follows:

$$\lambda(x, S_1, \dots, S_{k-1}) = \frac{\mu(x \leftarrow S_1, \dots, S_{k-1})}{\ell^{m(k-1)}\, 2^{n-m}}.$$
Then,

$$\left(\mathrm{disc}_{k,\lambda}\left(F^f_k\right)\right)^{2^{k-1}} \le \sum_{j=d}^{(k-1)m} \binom{(k-1)m}{j} \left(\frac{2^{2^{k-1}-1}}{\ell - 1}\right)^j. \tag{8}$$

Hence, for $\ell - 1 \ge \frac{2^{2^k}(k-1)em}{d}$ and $d > 2$,

$$\mathrm{disc}_{k,\lambda}\left(F^f_k\right) \le \frac{1}{2^{d/2^{k-1}}}. \tag{9}$$

Remark. The Lemma above appears very similar to the Multiparty Degree-Discrepancy Lemma in [8], which is an extension of the two-party Degree-Discrepancy Theorem of [17]. There, the magic property on the base function is high voting degree. It is worth noting that $(\mu, d)$-orthogonality of $f$ is equivalent to the voting degree of $f$ being at least $d$. Indeed the proof of the above Lemma is almost identical to the proof of the Degree-Discrepancy Lemma, save for the minor details of the difference between our masking scheme and the one used in [8].

Proof of Lemma 4.2. The starting point is to write the expression for discrepancy w.r.t. an arbitrary cylinder intersection $\phi$,

$$\mathrm{disc}^\phi_k(F^f_k) = \left|\sum_{x, S_1, \dots, S_{k-1}} F^f_k(x, S_1, \dots, S_{k-1})\, \phi(x, S_1, \dots, S_{k-1}) \cdot \lambda(x, S_1, \dots, S_{k-1})\right|. \tag{10}$$

This changes to the more convenient expected value notation as follows:

$$\mathrm{disc}^\phi_k(F^f_k) = 2^m \left|\mathbb{E}_{x, S_1, \dots, S_{k-1}}\, F^f_k(x, S_1, \dots, S_{k-1}) \times \phi(x, S_1, \dots, S_{k-1})\, \mu(x \leftarrow S_1, \dots, S_{k-1})\right| \tag{11}$$

where $(x, S_1, \dots, S_{k-1})$ is now uniformly distributed over $\{0,1\}^{\ell^{k-1} m} \times ([\ell]^m)^{k-1}$. Then, we use the trick of repeatedly combining the triangle inequality with Cauchy-Schwarz exactly as done in Chattopadhyay [8] (or even before by Raz [14]) to obtain the following:

$$\left(\mathrm{disc}^\phi_k(F^f_k)\right)^{2^{k-1}} \le 2^{2^{k-1} m}\, \mathbb{E}_{S^1_0, S^1_1, \dots, S^{k-1}_0, S^{k-1}_1}\, H^f_k\left(S^1_0, S^1_1, \dots, S^{k-1}_0, S^{k-1}_1\right) \tag{12}$$

where

$$H^f_k\left(S^1_0, S^1_1, \dots, S^{k-1}_0, S^{k-1}_1\right) = \left|\mathbb{E}_{x \in \{0,1\}^{\ell^{k-1} m}} \prod_{u \in \{0,1\}^{k-1}} F^f_k\left(x, S^1_{u_1}, \dots, S^{k-1}_{u_{k-1}}\right) \mu\left(x \leftarrow S^1_{u_1}, \dots, S^{k-1}_{u_{k-1}}\right)\right|. \tag{13}$$

We look at a fixed $S^i_0, S^i_1$, for $i = 1, \dots, k-1$.
Let $r_i = |S^i_0 \cap S^i_1|$ and $r = \sum_i r_i$ for $1 \le i \le k-1$. We now make two claims that are analogous to Claim 15 and Claim 16 respectively in [8].

Claim 4.3.

$$H^f_k\left(S^1_0, S^1_1, \dots, S^{k-1}_0, S^{k-1}_1\right) \le \frac{2^{(2^{k-1}-1) r}}{2^{2^{k-1} m}}. \tag{14}$$

Claim 4.4. Let $r < d$. Then,

$$H^f_k\left(S^1_0, S^1_1, \dots, S^{k-1}_0, S^{k-1}_1\right) = 0. \tag{15}$$

We prove these claims in the next section. Claim 4.3 simply follows from the fact that $\mu$ is a probability distribution and $f$ is $1/{-1}$ valued, while Claim 4.4 uses the $(\mu, d)$-orthogonality of $f$.

We now continue with the proof of the Orthogonality-Discrepancy Lemma assuming these claims. Applying them, we obtain

$$\left(\mathrm{disc}^\phi_k(F^f_k)\right)^{2^{k-1}} \le \sum_{j=d}^{(k-1)m} 2^{(2^{k-1}-1) j} \sum_{j_1 + \cdots + j_{k-1} = j} \Pr\left[r_1 = j_1 \wedge \cdots \wedge r_{k-1} = j_{k-1}\right]. \tag{16}$$

Substituting the value of the probability, we further obtain:

$$\left(\mathrm{disc}^\phi_k(F^f_k)\right)^{2^{k-1}} \le \sum_{j=d}^{(k-1)m} 2^{(2^{k-1}-1) j} \sum_{j_1 + \cdots + j_{k-1} = j} \frac{\binom{m}{j_1} \cdots \binom{m}{j_{k-1}} (\ell-1)^{m-j_1} \cdots (\ell-1)^{m-j_{k-1}}}{\ell^{(k-1)m}}. \tag{17}$$

The following simple combinatorial identity is well known:

$$\sum_{j_1 + \cdots + j_{k-1} = j} \binom{m}{j_1} \cdots \binom{m}{j_{k-1}} = \binom{(k-1)m}{j}.$$

Plugging this identity into (17) immediately yields (8) of the Orthogonality-Discrepancy Lemma. Recalling $\binom{(k-1)m}{j} \le \left(\frac{e(k-1)m}{j}\right)^j$, and choosing $\ell - 1 \ge 2^{2^k}(k-1)em/d$, we get (9).

4.3 Proofs of Claims

We identify the set of all assignments to boolean variables in $X = \{x_1, \dots, x_n\}$ with the $n$-ary boolean cube $\{0,1\}^n$. For any $u \in \{0,1\}^{k-1}$, let $Z_u$ represent the set of $m$ variables indexed jointly by $S^1_{u_1}, \dots, S^{k-1}_{u_{k-1}}$. There is precisely one variable chosen from each block of $X$. Denote by $Z_u[\alpha]$ the unique variable in $Z_u$ that is in the $\alpha$-th block of $X$, for each $1 \le \alpha \le m$. Let $Z = \cup_u Z_u$.
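
The sets $Z_u$ and their union $Z$ can be made concrete with a small Python sketch. It enumerates $Z_u$ for given index vectors and compares $|Z|$ with $2^{k-1}m - (2^{k-1}-1)r$, the quantity appearing in the exponent of Claim 4.3. The function names, the encoding of a variable as a pair (block, coordinates), and the use of 0-based values in `range(ell)` instead of $\{1, \dots, \ell\}$ are assumptions of this illustration, not part of the paper.

```python
from itertools import product
import random


def variable_sets(S0, S1, m):
    """S0[i] and S1[i] are length-m index vectors for i = 0..k-2.
    Returns a dict u -> Z_u, each variable encoded as (block, coordinates)."""
    k1 = len(S0)  # this is k - 1
    Z = {}
    for u in product((0, 1), repeat=k1):
        chosen = [S1[i] if u[i] else S0[i] for i in range(k1)]
        Z[u] = {(a, tuple(chosen[i][a] for i in range(k1))) for a in range(m)}
    return Z


def check_bound(S0, S1, m):
    """Return (|Z|, r, 2^{k-1} m - (2^{k-1} - 1) r)."""
    k1 = len(S0)
    Z = variable_sets(S0, S1, m)
    union = set().union(*Z.values())
    # r_i counts the blocks where S0[i] and S1[i] pick the same index.
    r = sum(sum(1 for a in range(m) if S0[i][a] == S1[i][a]) for i in range(k1))
    lower = 2 ** k1 * m - (2 ** k1 - 1) * r
    return len(union), r, lower


random.seed(0)
ell, m, k1 = 3, 4, 2
for _ in range(200):
    S0 = [[random.randrange(ell) for _ in range(m)] for _ in range(k1)]
    S1 = [[random.randrange(ell) for _ in range(m)] for _ in range(k1)]
    size, r, lower = check_bound(S0, S1, m)
    assert size >= lower  # |Z| is never below the stated quantity
```

For instance, with a single pair of vectors `[[0, 1, 2]]` and `[[0, 2, 2]]` and `m = 3`, the two sets overlap in blocks 0 and 2, so `check_bound` returns `(4, 2, 4)` and the comparison holds with equality.
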
We abuse notation for the sake of clarity and use $Z_u$ in the context of expected value calculations to also mean a uniformly chosen random assignment to the variables in the set $Z_u$.

Proof of Claim 4.4.

$$H^f_k\left(S^1_0, S^1_1, \dots, S^{k-1}_0, S^{k-1}_1\right) = \left|\mathbb{E}_{Z_{0^{k-1}}}\, f\left(Z_{0^{k-1}}\right) \mu\left(Z_{0^{k-1}}\right)\, \mathbb{E}_{X - Z_{0^{k-1}}} \prod_{\substack{u \in \{0,1\}^{k-1} \\ u \ne 0^{k-1}}} f(Z_u)\, \mu(Z_u)\right|. \tag{18}$$

Observe that for any block $\alpha$ and any $u \ne 0^{k-1}$, $Z_u[\alpha] = Z_{0^{k-1}}[\alpha]$ iff for each $i$ such that $u_i = 1$, $S^i_0[\alpha] = S^i_1[\alpha]$. Recall that $r_i$ is the number of indices $\alpha$ such that $S^i_0[\alpha] = S^i_1[\alpha]$. Therefore, there are at most $r = \sum_{i=1}^{k-1} r_i$ many indices $\alpha$ such that $Z_u[\alpha] = Z_{0^{k-1}}[\alpha]$ for some $u \ne 0^{k-1}$. This means the inner expectation in (18) is a function that depends on at most $r$ variables. Since $f$ is orthogonal under $\mu$ with every polynomial of degree less than $d$ and $r < d$, we get the desired result.

Proof of Claim 4.3. Observe that since $F^f_k$ is $1/{-1}$ valued, we get the following:

$$H^f_k\left(S^1_0, S^1_1, \dots, S^{k-1}_0, S^{k-1}_1\right) \le \mathbb{E}_x \prod_{u \in \{0,1\}^{k-1}} \mu\left(x \leftarrow S^1_{u_1}, \dots, S^{k-1}_{u_{k-1}}\right) = \mathbb{E}_{X - Z}\, \mathbb{E}_Z \prod_{u \in \{0,1\}^{k-1}} \mu(Z_u) = \mathbb{E}_{X - Z}\, \frac{1}{2^{|Z|}} \sum_{Z \in \{0,1\}^{|Z|}} \prod_{u \in \{0,1\}^{k-1}} \mu(Z_u) \tag{19}$$

$$\le \mathbb{E}_{X - Z}\, \frac{1}{2^{|Z|}} \sum_{y_1, \dots, y_{2^{k-1}} \in \{0,1\}^m} \prod_{i=1}^{2^{k-1}} \mu(y_i) \tag{20}$$

where the last inequality holds because every product in the inner sum of (19) appears in the inner sum of (20). Using the fact that $\mu$ is a probability distribution, we get:

$$\text{RHS of (20)} = \mathbb{E}_{X - Z}\, \frac{1}{2^{|Z|}} \prod_{i=1}^{2^{k-1}} \sum_{y_i \in \{0,1\}^m} \mu(y_i) = \mathbb{E}_{X - Z}\, \frac{1}{2^{|Z|}} = \frac{1}{2^{|Z|}}.$$

We now find a lower bound on $|Z|$. Let $t_u$ denote the Hamming weight of the string $u$ and $\{j_1, \dots, j_{t_u}\}$ denote the set of indices in $[k-1]$ at which $u$ has a 1. Define

$$Y_u = \left\{ Z_u[\alpha] \;\middle|\; S^{j_s}_1[\alpha] \ne S^{j_s}_0[\alpha];\ 1 \le s \le t_u;\ 1 \le \alpha \le m \right\}. \tag{21}$$

The following facts follow from the above definition.
• $|Y_{0^{k-1}}| = m$ and $|Y_u| \ge m - \sum_{1 \le s \le t_u} r_{j_s} \ge m - r$ for all $u \ne 0^{k-1}$.

• $Y_u \cap Y_v = \emptyset$ for $u \ne v$. This follows from the following argument: wlog assume there is an index $\beta$ where $u$ has a one but $v$ has a zero. Consider any block $\alpha$ such that $Z_u[\alpha]$ is in $Y_u$. It must be true that $S^\beta_1[\alpha] \ne S^\beta_0[\alpha]$. This means that $Z_u[\alpha] \ne Z_v[\alpha]$. Therefore $Z_u[\alpha]$ is not in $Y_v$ and we are done.

• $Y := \cup_{u \in \{0,1\}^{k-1}} Y_u = Z$. This is because if $Z_u[\alpha]$ is not in $Y_u$ then there are indices $j_1, \dots, j_s$ where $u$ contains a one and $S^{j_i}_0[\alpha] = S^{j_i}_1[\alpha]$. Let $v$ be the string that contains a zero at positions $j_1, \dots, j_s$ and at other positions corresponds to $u$. Then by definition, $Z_u[\alpha] = Z_v[\alpha] \in Y_v$.

Thus, $|Z| = |Y| = \sum_u |Y_u| \ge m + \sum_{u \ne 0} (m - r) = 2^{k-1} m - (2^{k-1} - 1) r$ and the result follows.

5 The Main Result

Before proving the main result, we borrow from Sherstov [16] a beautiful duality between approximability and orthogonality. The intuition is that if a function is at a large distance from the linear space spanned by the characters of degree less than $d$, then its projection on the dual space spanned by characters of degree at least $d$ is large. More precisely,

Lemma 5.1. Let $f : \{-1,1\}^m \to \mathbb{R}$ be given with $\deg_\delta(f) = d \ge 1$. Then there exists $g : \{-1,1\}^m \to \{-1,1\}$ and a distribution $\mu$ on $\{-1,1\}^m$ such that $g$ is $(\mu, d)$-orthogonal and $\mathrm{Corr}_\mu(f, g) > \delta$.

We do not prove this Lemma, but the interested reader can read its short proof in [16], which is based on an application of linear programming duality.

Theorem 5.2 (Main Theorem). Let $f : \{0,1\}^m \to \{-1,1\}$ have $\delta$-approximate degree $d$. Let $n \ge \left(\frac{2^{2^k}(k-1)e}{d}\right)^{k-1} m^k$, and $f' : \{0,1\}^n \to \{-1,1\}$ be such that $f(z) = f'(z0^{n-m})$. Then

$$R^\epsilon_k(G^{f'}_k) \ge \frac{d}{2^{k-1}} + \log(\delta + 2\epsilon - 1). \tag{22}$$

Proof. Applying Lemma 5.1 we obtain a function $g$ and a distribution $\mu$ such that $\mathrm{Corr}_\mu(f, g) > \delta$ and $\mathbb{E}_{x \sim \mu}\, g(x) \chi_S(x) = 0$ for $|S| < d$. These $g$ and $\mu$ satisfy the conditions of Lemma 4.2, therefore we have

$$\mathrm{disc}_{k,\lambda}\left(F^g_k\right) \le \frac{1}{2^{d/2^{k-1}}} \tag{23}$$

where $\lambda$ is obtained from $\mu$ as stated in Lemma 4.2 and $\ell \ge 2^{2^k}(k-1)em/d$. Since $n = \ell^{k-1} m$, (23) holds for $n \ge \left(\frac{2^{2^k}(k-1)e}{d}\right)^{k-1} m^k$. It can be easily verified that $\mathrm{Corr}_\lambda(F^f_k, F^g_k) = \mathrm{Corr}_\mu(f, g) > \delta$. Thus, by plugging the value of $\mathrm{disc}_{k,\lambda}(F^g_k)$ in (6) of the generalized discrepancy method we get

$$R^\epsilon_k(F^f_k) \ge \frac{d}{2^{k-1}} + \log(\delta + 2\epsilon - 1).$$

The desired result is obtained by applying Lemma 4.1.

5.1 Disjointness Separates $\mathrm{BPP}^{CC}_k$ and $\mathrm{NP}^{CC}_k$

As a corollary to our main theorem, we obtain the following lower bound for the Disjointness function.

Corollary 5.3. $R^\epsilon_k(\mathrm{DISJ}_k) = \Omega\left(\frac{n^{1/(k+1)}}{2^{2^k}(k-1)2^{k-1}}\right)$ for any constant $\epsilon > 0$.

Proof. Let $f = \mathrm{NOR}_m$ and $f' = \mathrm{NOR}_n$. We know $\deg_{1/3}(\mathrm{NOR}_m) = \Theta(\sqrt{m})$ by Theorem 2.2. Setting $n = \left(\frac{2^{2^k}(k-1)e}{\deg_{1/3}(\mathrm{NOR}_m)}\right)^{k-1} m^k$, and writing (22) in terms of $n$ gives the result for any constant $\epsilon > 1/6$ (for fixed $k$, this choice makes $n$ proportional to $m^{(k+1)/2}$, so $d = \Theta(\sqrt{m})$ grows as $n^{1/(k+1)}$). The bound can be made to work for every constant $\epsilon$ by a standard boosting argument.

Observe that we get the same bound for the function $G^{\mathrm{OR}}_k$. It is not difficult to see that there is an $O(\log n)$-bit non-deterministic protocol for $G^{\mathrm{OR}}_k$ and therefore this function separates the communication complexity classes $\mathrm{BPP}^{CC}_k$ and $\mathrm{NP}^{CC}_k$ for all $k = o(\log\log n)$.

5.2 Other Symmetric Functions

Theorem 5.2 does not immediately provide strong bounds on the communication complexity of $G^f_k$ for every symmetric $f$. For instance, if $f$ is the MAJORITY function then one has to work a little more to derive strong lower bounds.
In this section, using the main result and Paturi's Theorem (Theorem 2.2), we obtain a lower bound on the communication complexity of $G^{f}_k$ for each symmetric $f$. Let $f : \{0,1\}^n \to \{1,-1\}$ be the symmetric function induced from a predicate $D : \{0, 1, \ldots, n\} \to \{1,-1\}$. We denote by $G^{D}_k$ the function $G^{f}_k$. For $t \in \{0, 1, \ldots, n-1\}$, define $D_t : \{0, 1, \ldots, n-t\} \to \{1,-1\}$ by $D_t(i) = D(i+t)$. Observe that the communication complexity of $G^{D}_k$ is at least the communication complexity of $G^{D_t}_k$.

Corollary 5.4. Let $D : \{0, 1, \ldots, n\} \to \{1,-1\}$ be any predicate with $\deg_{1/3}(D) = d$. Let $\ell_0 = \ell_0(D)$ and $\ell_1 = \ell_1(D)$. Define $T : \mathbb{N} \to \mathbb{N}$ by

$$T(n) = \left(\frac{n}{\left(2^{2^k}(k-1)e/d\right)^{k-1}}\right)^{1/k}.$$

Then for any constant $\epsilon > 0$,

$$R^{\epsilon}_k(G^{D}_k) = \Omega\left(\Psi(\ell_0) + \frac{T(\ell_1)}{2^{k-1}}\right)$$

where $\Psi(\ell_0) = \min\left\{\Omega\left(\frac{\sqrt{T(n)\ell_0}}{2^{k-1}}\right), \Omega\left(\frac{T(n-\ell_0)}{2^{k-1}}\right)\right\}$.

Proof. There are three cases to consider.

Case 1: Suppose $\ell_0 \le T(n)/2$. Let $D' : \{0, 1, \ldots, T(n)\} \to \{1,-1\}$ be such that for any $z \in \{0,1\}^{T(n)}$, we have $D(|z|) = D'(|z|)$. By Theorem 5.2, the complexity of $G^{D}_k$ is $\Omega(d/2^{k-1})$ where $d = \deg_{1/3}(D')$. By Paturi's Theorem, $\deg_{1/3}(D') \ge \sqrt{T(n)\ell_0(D')} = \sqrt{T(n)\ell_0}$ and so

$$R^{\epsilon}_k(G^{D}_k) = \Omega\left(\frac{\sqrt{T(n)\ell_0}}{2^{k-1}}\right).$$

Case 2: Suppose $T(n)/2 < \ell_0 \le n/2$. We find a lower bound on the communication complexity of $G^{D_t}_k$ where $t = \ell_0 - T(n-\ell_0)/2$. Let $D'_t : \{0, 1, \ldots, T(n-\ell_0)\} \to \{1,-1\}$ be such that $D'_t(|z|) = D_t(|z|)$. So by Theorem 5.2, the complexity of $G^{D_t}_k$ is $\Omega(d/2^{k-1})$ where $d$ is the approximation degree of $D'_t$. We know

$$D'_t\big(T(n-\ell_0)/2\big) = D_t\big(T(n-\ell_0)/2\big) = D\big(T(n-\ell_0)/2 + \ell_0 - T(n-\ell_0)/2\big) = D(\ell_0) \ne D(\ell_0 - 1) = D'_t\big(T(n-\ell_0)/2 - 1\big).$$

Thus by Paturi's Theorem, $\deg_{1/3}(D'_t) \ge \sqrt{T(n-\ell_0)^2/2}$.
This implies

$$R^{\epsilon}_k(G^{D}_k) = \Omega\left(\frac{T(n-\ell_0)}{2^{k-1}}\right).$$

Case 3: Suppose $\ell_0 = 0$ and $\ell_1 \ne 0$. The argument is similar to the one for Case 2. Consider $D_t$ where $t = n - \ell_1 - T(\ell_1)/2$. Let $D'_t : \{0, 1, \ldots, T(\ell_1)\} \to \{1,-1\}$ be such that $D'_t(|z|) = D_t(|z|)$. As in Case 2, one sees that $D'_t(T(\ell_1)/2) \ne D'_t(T(\ell_1)/2 + 1)$, so $\deg_{1/3}(D'_t) \ge \sqrt{T(\ell_1)^2/2}$. Therefore,

$$R^{\epsilon}_k(G^{D}_k) = \Omega\left(\frac{T(\ell_1)}{2^{k-1}}\right).$$

Combining these three cases, we get the desired result.

References

[1] L. Babai, P. Frankl, and J. Simon. Complexity classes in communication complexity theory. In FOCS, pages 337–347, 1986.
[2] L. Babai, N. Nisan, and M. Szegedy. Multiparty protocols, pseudorandom generators for logspace, and time-space trade-offs. J. Comput. Syst. Sci., 45(2):204–232, 1992.
[3] P. Beame, M. David, T. Pitassi, and P. Woelfel. Separating deterministic from non-deterministic NOF multiparty communication complexity. In ICALP, pages 134–145, 2007.
[4] P. Beame, T. Pitassi, and N. Segerlind. Lower bounds for Lovász–Schrijver systems and beyond follow from multiparty communication complexity. SIAM Journal on Computing, 37(3):845–869, 2007.
[5] P. Beame, T. Pitassi, N. Segerlind, and A. Wigderson. A strong direct product theorem for corruption and the multiparty communication complexity of Disjointness. Computational Complexity, 15(4):391–432, 2006.
[6] A. Chakrabarti. Lower bounds for multi-player pointer jumping. In IEEE Conf. Computational Complexity, pages 33–45, 2007.
[7] A. Chandra, M. Furst, and R. Lipton. Multi-party protocols. In STOC, pages 94–99, 1983.
[8] A. Chattopadhyay. Discrepancy and the power of bottom fan-in in depth-three circuits. In FOCS, 2007.
[9] F. Chung and P. Tetali. Communication complexity and quasi-randomness. SIAM J. Discrete Math., 6(1):110–123, 1993.
[10] J. Ford and A. Gál. Hadamard tensors and lower bounds on multiparty communication complexity. In ICALP, pages 1163–1175, 2005.
[11] E. Kushilevitz and N. Nisan. Communication Complexity. Cambridge University Press, 1997.
[12] T. Lee and A. Shraibman. Disjointness is hard in the multi-party number in the forehead model. In Electronic Colloquium on Computational Complexity, number TR08-003, 2008.
[13] R. Paturi. On the degree of polynomials that approximate symmetric boolean functions. In STOC, pages 468–474, 1992.
[14] R. Raz. The BNS-Chung criterion for multi-party communication complexity. Computational Complexity, 9(2):113–122, 2000.
[15] A. Razborov. Quantum communication complexity of symmetric predicates. Izvestiya: Mathematics, 67(1):145–159, 2003.
[16] A. Sherstov. The pattern matrix method for lower bounds on quantum communication. In Electronic Colloquium on Computational Complexity, number TR07-100, 2007.
[17] A. Sherstov. Separating AC^0 from depth-2 majority circuits. In STOC, pages 294–301, 2007.
[18] P. Tesson. Computational complexity questions related to finite monoids and semigroups. PhD thesis, McGill University, 2003.
[19] E. Viola and A. Wigderson. One-way multi-party communication lower bound for pointer jumping with applications. In FOCS, pages 427–437, 2007.
[20] A. C.-C. Yao. Some complexity questions related to distributive computing. In STOC, pages 209–213, 1979.
