Combining Expert Advice Efficiently

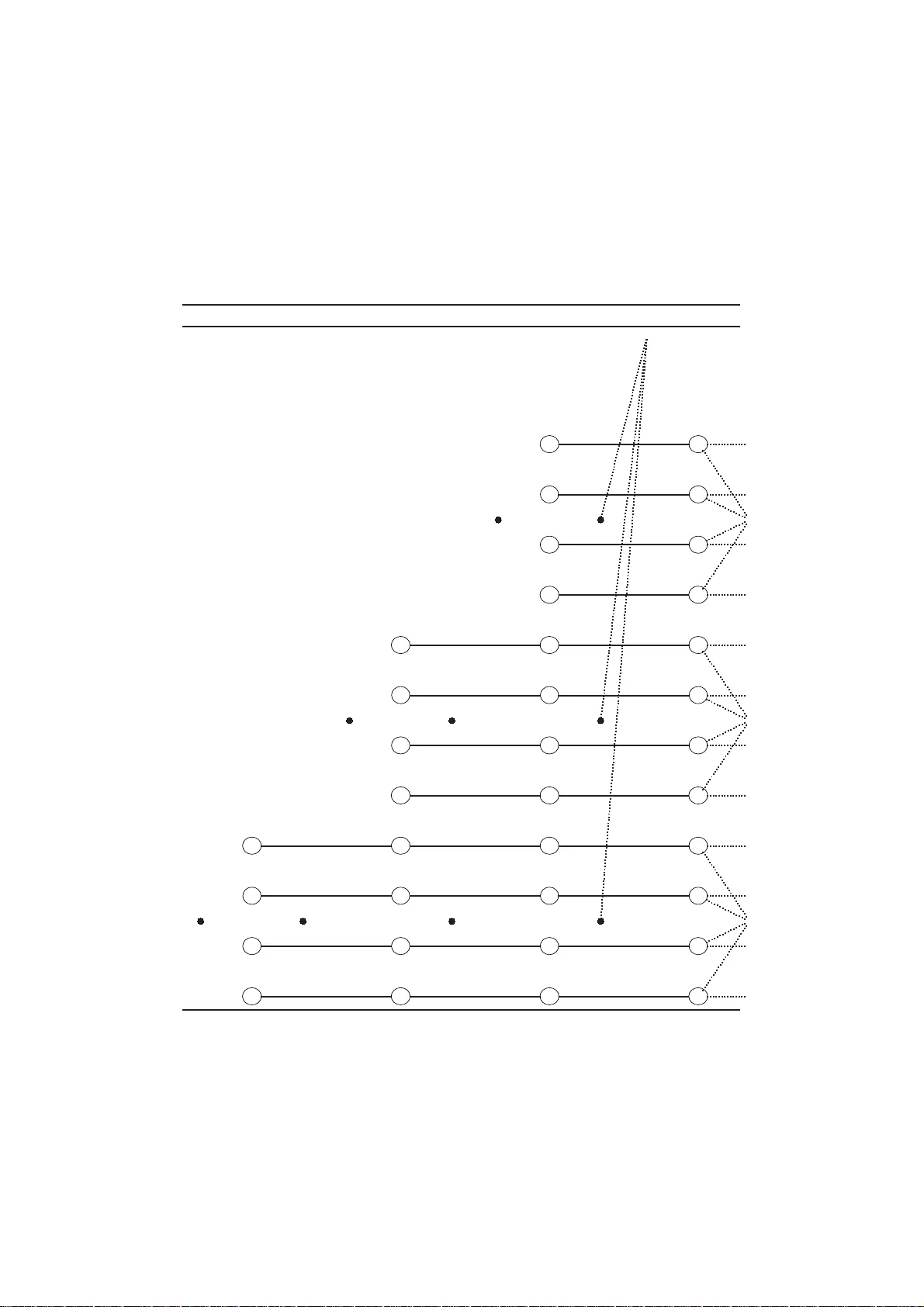

We show how models for prediction with expert advice can be defined concisely and clearly using hidden Markov models (HMMs); standard HMM algorithms can then be used to efficiently calculate, among other things, how the expert predictions should be w…

Authors: Wouter Koolen, Steven de Rooij
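To make the HMM view concrete, here is a minimal sketch of the forward algorithm applied to a two-step "expert in charge" model. The hidden state is the index of the expert currently predicting. Each round, the mixture predicts with the weighted average of the experts. Afterwards, the weights are conditioned on the observed outcome and then passed through a transition. We assume a fixed-share-style transition: keep the current expert with probability `1 - alpha`, otherwise redraw uniformly, which may pick the same expert again. With `alpha = 0` this reduces to the plain Bayesian mixture. The helper `forward_expert_weights` and its input convention are hypothetical illustrations, not the authors' code. The input convention is that `expert_probs[t][i]` is the probability expert `i` gave the outcome actually observed at round `t`.

```python
from typing import List, Sequence, Tuple


def forward_expert_weights(
    expert_probs: Sequence[Sequence[float]],
    alpha: float,
) -> Tuple[List[List[float]], List[float]]:
    """Forward algorithm for a fixed-share-style expert HMM (hypothetical helper).

    expert_probs[t][i]: probability expert i assigned to the observed outcome at round t.
    alpha: assumed per-round probability of redrawing the expert in charge.
    Returns the predictive weights over experts before each round, and the
    mixture's probability for each observed outcome.
    """
    n = len(expert_probs[0])
    w = [1.0 / n] * n  # uniform prior over which expert starts in charge
    weights: List[List[float]] = []
    mix_probs: List[float] = []
    for probs in expert_probs:
        weights.append(list(w))
        # Emission step: joint probability of "expert i in charge" and the observed outcome.
        joint = [wi * pi for wi, pi in zip(w, probs)]
        z = sum(joint)  # mixture probability of this outcome given all earlier outcomes
        mix_probs.append(z)
        posterior = [j / z for j in joint]  # P(expert i in charge | outcomes so far)
        # Transition step: stay with prob 1 - alpha, else redraw uniformly (possibly the same expert).
        w = [(1.0 - alpha) * p + alpha / n for p in posterior]
    return weights, mix_probs


if __name__ == "__main__":
    # Expert 0 consistently gives the observed outcome probability 0.9, expert 1 gives 0.1.
    weights, mix = forward_expert_weights([[0.9, 0.1]] * 3, alpha=0.0)
    print(weights)
    print(mix)
```

Because each round costs one pass over the experts, the cost is linear in the number of rounds times the number of experts. This is the kind of efficiency standard HMM algorithms provide. A positive `alpha` keeps every weight at least `alpha / n`, so the mixture can recover quickly when a different expert starts predicting better.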