A multiple covariance approach to PLS regression with several predictor groups: Structural Equation Exploratory Regression

A variable group $Y$ is assumed to depend upon $R$ thematic variable groups $X_1, \ldots, X_R$. We assume that components in $Y$ depend linearly upon components in the $X_r$'s. In this work, we propose a multiple covariance criterion which extends that of PLS re…

Authors: Xavier Bry (I3M), Thomas Verron (CEFE), Pierre Cazes (CEREMADE)

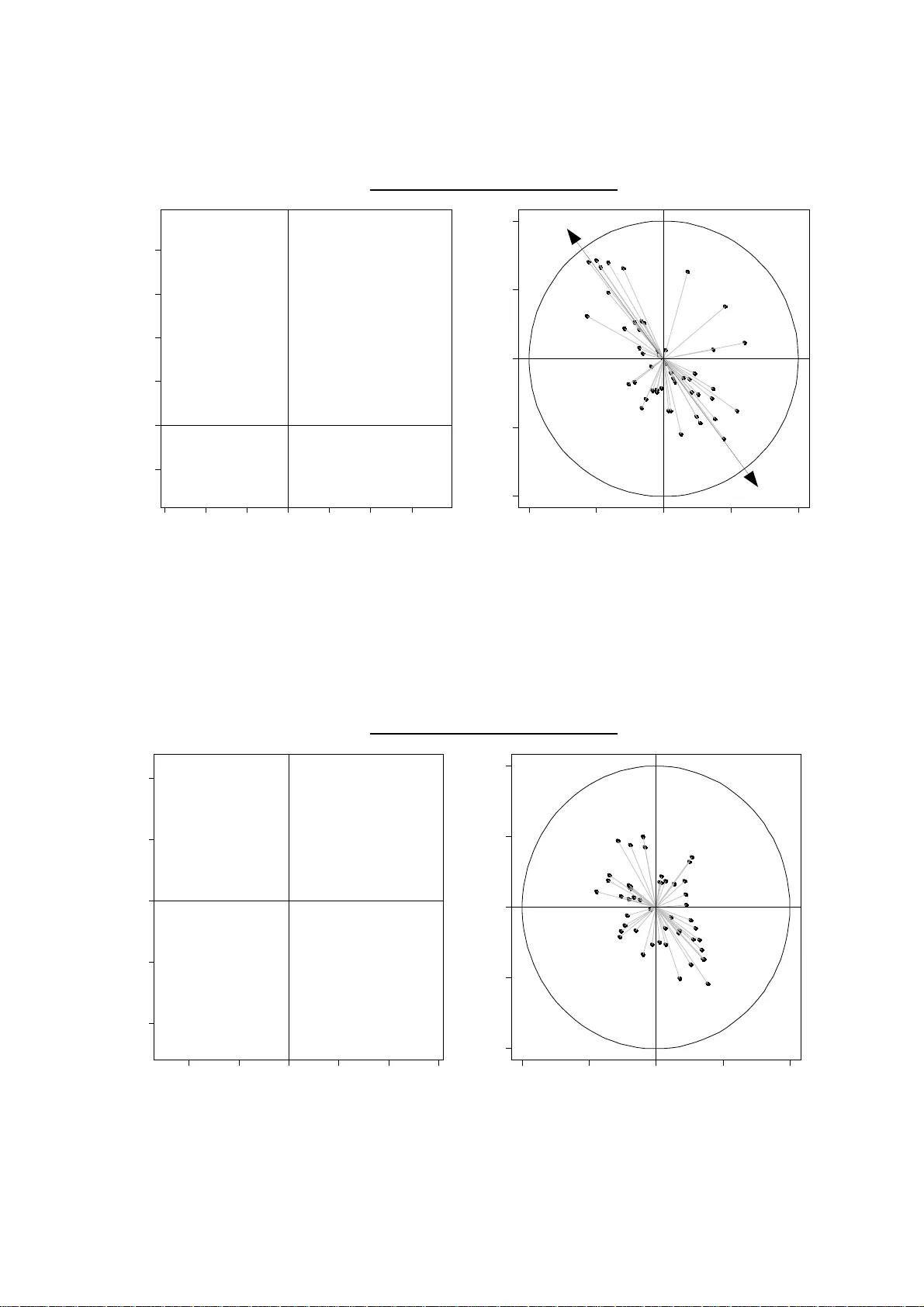

X. Bry*, T. Verron**, P. Cazes***

* I3M, Université Montpellier 2, Place Eugène Bataillon, 34090 Montpellier
** ALTADIS, Centre de recherche SCR, 4 rue André Dessaux, 45000 Fleury lès Aubrais
*** LISE CEREMADE, Université Paris IX Dauphine, Place de Lattre de Tassigny, 75016 Paris

Abstract: A variable group $Y$ is assumed to depend upon $R$ thematic variable groups $X_1, \ldots, X_R$. We assume that components in $Y$ depend linearly upon components in the $X_r$'s. In this work, we propose a multiple covariance criterion which extends that of PLS regression to this multiple predictor groups situation. On this criterion, we build a PLS-type exploratory method, Structural Equation Exploratory Regression (SEER), that allows one to simultaneously perform dimension reduction in groups and investigate the linear model of the components. SEER uses the multidimensional structure of each group. An application example is given.

Keywords: Linear Regression, Latent Variables, PLS Path Modelling, PLS Regression, Structural Equation Models, SEER.

Notations:

• Lowercase Latin letters generally stand for column vectors ($a, b, \ldots, x, y, \ldots$) or current index values ($j, k, \ldots, s, t, \ldots$).
• Greek lowercase letters ($\alpha, \beta, \ldots, \lambda, \mu, \ldots$) stand for scalars.
• $\langle u_1, \ldots, u_n \rangle$ is the subspace spanned by vectors $u_1, \ldots, u_n$.
• $e_n$ stands for the vector in $\mathbb{R}^n$ having all components equal to 1.
• Uppercase letters generally stand for matrices ($A, B, \ldots, X, Y, \ldots$) or maximal index values ($J, K, \ldots, S, T, \ldots$).
• $\Pi_E\, y$ = orthogonal projection of $y$ onto subspace $E$, with respect to a Euclidean metric to be specified.
• $X$ being an $(I,J)$ matrix: $x_i^j$ is the value in row $i$ and column $j$; $x_i$ stands for vector $(x_i^j)_{j=1 \text{ to } J}$; $x^j$ stands for vector $(x_i^j)_{i=1 \text{ to } I}$.
• $\langle X \rangle$ refers to the subspace spanned by the column vectors of $X$.
• $\Pi_X$ is a shorthand for $\Pi_{\langle X \rangle}$.
• $st(x)$ = standardized $x$ variable.
• $a(k)$ = the current value of element $a$ in step $k$ of an algorithm.
• $(a_s)_s$ = column vector of elements $a_s$; $[a_s]_s$ = row vector of elements $a_s$.
• $A'$ = transpose of matrix $A$.
• $\mathrm{diag}(a, \ldots, b)$: if $a, \ldots, b$ are scalars, refers to the diagonal matrix with diagonal elements $a, \ldots, b$. If $a, \ldots, b$ are square matrices, refers to the block-diagonal matrix with block-diagonal elements $a, \ldots, b$.
• $\langle X, \ldots, Z \rangle$, where $X, \ldots, Z$ are matrices having the same number of rows, refers to the subspace spanned by the column vectors of $X, \ldots, Z$.
• $\langle x \mid y \rangle_M$ is the scalar product of vectors $x$ and $y$ with respect to the Euclidean metric matrix $M$.
• $\|x\|_M$ is the norm of vector $x$ with respect to metric $M$.
• $PC^k(X, M, P)$ refers to the $k$th principal component of matrix $X$ with columns (variables) weighed by metric matrix $M$, and rows (observations) weighed by matrix $P$.
• $In_E(X, M, P)$ = inertia of $(X, M, P)$ along subspace $E$.
• $\lambda_1(X, M, P)$ = largest eigenvalue of $(X, M, P)$'s PCA.

Bry X., Verron T., Cazes P. (2007): Structural Equation Exploratory Regression

Other conventions:

• Variables describe the same set of $n$ observations. The value of variable $x$ for observation $i$ is $x_i$. A variable $x$ is identified with the column vector $x = (x_i)_{i=1 \text{ to } n} \in \mathbb{R}^n$.
• All variables are taken centred. Moreover, original numerical variables are taken standardized.
• Observation $i$ has weight $p_i$. Let $P = \mathrm{diag}(p_i)_{i=1 \text{ to } n}$.
• Variable space $\mathbb{R}^n$ has the Euclidean $P$-scalar product. So, we have: $\langle x \mid y \rangle_P = x'Py = \mathrm{cov}(x, y)$.
• A variable group $X$ containing $J$ variables $x^1, \ldots, x^J$ is identified with the $(n,J)$ matrix $X = [x^1, \ldots, x^J]$. From $X$'s point of view, observation $i$ is identified with the $i$th row vector $x_i'$ of $X$.
• A variable group $X = [x^1, \ldots, x^J]$ is currently "weighed" by a $(J,J)$ definite positive matrix $M$. This matrix acts as a Euclidean metric in the observation space $\mathbb{R}^J$ attached to $X$. The scalar product between observations $i$ and $k$ is: $\langle x_i \mid x_k \rangle_M = x_i' M x_k$.

Acronyms:

• IVPCA = Instrumental Variables PCA, also known as MRA
• MRA = Maximal Redundancy Analysis, also known as IVPCA
• OLS = Ordinary Least Squares
• PC = Principal Component
• PCA = Principal Components Analysis
• PCR = Principal Component Regression
• PLS = Partial Least Squares
• PLSPM = PLS Path Modelling
• SEER = Structural Equation Exploratory Regression
• SE(M) = Structural Equation (Model)

Introduction

In this paper, we build up a multidimensional exploration technique that takes into account a single equation conceptual model of data: Structural Equation Exploratory Regression (SEER). The situation we deal with is the following: $n$ individuals are described through a dependent variable group $Y$ and $R$ predictor groups $X_1, \ldots, X_R$. Each group has enough conceptual unity to advocate the grouping of its variables apart from the others. This is why these groups will be referred to as "thematic groups". For example's sake, consider $n$ wines described through 3 variable groups: $X_1$ being that of olfaction sensory variables, $X_2$ that of palate sensory variables, and $Y$ that of hedonic judgments (all variables may for instance be averaged marks given by a jury).

Now, these groups are linked through a dependency network, just as variables are in an explanatory model. This model, called the thematic model, may be pictured by a dependency graph where groups $Y$ and $X_r$ are nodes and $X_r \to Y$ arcs indicate that "the structural pattern exhibited by $Y$ depends, to a certain extent and amongst other things, on that exhibited by $X_r$" (cf. fig. 1).
In our example, it is not irrelevant to assume that the pattern of hedonic judgements depends on both olfaction and palate perceptions. It must be clear that an $X_r \to Y$ arc means that dimensions in $X_r$ bear a relation to variations of dimensions in $Y$, controlling for the variations of dimensions in all other predictor groups $X_s$. Therefore, we consider relations between groups to be partial relations, and must deal with them accordingly.

One important feature of data is that every thematic group may contain several important underlying dimensions, without us knowing how many and which. What we need is a method digging out these dimensions. PCA performed separately on each thematic group certainly digs out hierarchically ordered and non-redundant principal dimensions in the theme, but regardless of the role they may have to play according to the available conceptual model of the situation. What we would like is to be able to extract from every theme a hierarchy of dimensions that are reasonably "strong in the group" and "fit for the dependency model" (the precise meaning of these expressions is given later).

Thus, we stand near the starting point of the modelling process: we have a conceptual model built up from qualitative and logical considerations, but this model involves concepts that are fuzzy, insofar as they may include several unidentified underlying aspects, each of which may in turn lead to miscellaneous measures. This fuzziness bars the way to usual statistical modelling, because such modelling requires that the measures be conceptually precise and the model parsimonious. To make our way to such a model, we need to explore each theme in relation to the others. This means a multidimensional exploration tool (as PCA is) that seeks thematic structures that are linked through the conceptual model.
The purpose of SEER has connections to that of the PLS Path Modelling technique or, more generally, Structural Equation Estimation techniques such as LISREL. But there are fundamental differences, in approach as well as in computation:

- Unlike PLSPM, SEER really takes partial relations into account in regression models.
- Contrary to PLSPM and LISREL, SEER allows one to extract several dimensions in every thematic group (as many as one wishes and the group may provide). This makes it closer to an exploration tool than to a latent variable estimation technique. Indeed, latent variables are a handy way to model hypothetical dimensions. But, like in PCA, they may be viewed as a mere intermediate tool to extract principal $p$-dimensional subspaces that provide useful variable projection opportunities. Allowing one to visualize the variable correlation patterns on "thematic planes", SEER proves helpful in predictor selection.

When there is but one predictor group $X$, PLS regression digs out strong dimensions in $X$ that best model $Y$. SEER seeks to extend PLS regression to situations where $Y$ depends on several predictor groups $X_1, \ldots, X_R$. Of course, in such a situation, one could consider performing PLS regression of $Y$ on group $X = (X_1, \ldots, X_R)$. But doing so would lead to components that may be, first, conceptually hybrid and, second, constrained to be mutually orthogonal, which may drive them away from significant variable bundles. Both are likely to make components more difficult to interpret.

1. The Thematic Model

1.1. Thematic groups and components

$X_1, \ldots, X_r, \ldots, X_R$ and $Y$ are thematic groups. Group $Y$ has $K$ variables, and is weighed by a $(K,K)$ definite positive matrix $N$. Group $X_r$ has $J_r$ variables, and is weighed by a $(J_r, J_r)$ definite positive matrix $M_r$.
We assume that every group $X_r$ (respectively $Y$) may be summed up using a given number $J'_r$ (respectively $K'$) of components. Let $F_r^1, \ldots, F_r^j, \ldots, F_r^{J'_r}$ (resp. $G^1, \ldots, G^{K'}$) be these components. We impose that $\forall j, r: F_r^j \in \langle X_r \rangle$ and $\forall k: G^k \in \langle Y \rangle$.

1.2. Thematic model

The thematic model is the dependency pattern assumed between thematic groups. We term it a single equation model in that there is but one dependent group. It is graphed in figure 1a.

[Figure 1a: Single equation thematic model. Figure 1b: The univariate case. Legend: components, variables ($x_r^j$, $y^k$), thematic groups ($X_r$, $Y$).]

When the dependent group $Y$ is reduced to a single variable, we get the particular case of the univariate model (fig. 1b).

1.3. General demands

When extracting the thematic components, we have a double demand:

➢ We demand that the statistical model expressing the dependency of the $y^k$'s onto the predictor components $F_r^j$ have a good fit;
➢ We demand that a group's components have some "structural strength", i.e. be far from the group's residual (noise) dimensions.

1.3.1. Goodness of fit

It will be measured using the classical $R^2$ coefficient.

1.3.2. Structural strength

• Consider a group of numeric variables $X = (x^1, \ldots, x^J)$ weighed by a $(J,J)$ symmetric definite positive matrix $M$, and let $u \in \mathbb{R}^J$, with $\|u\|_M^2 = u'Mu = 1$. Let $F = XMu$ be the coordinate of observations on axis $\langle u \rangle$. The inertia of $\{x_i, i = 1 \text{ to } n\}$ along $\langle u \rangle$ in metric space $(\mathbb{R}^J, M)$ is:

$$\|F\|_P^2 = F'PF = u'MX'PXMu$$

It is one possible measure of structural strength for direction $\langle u \rangle$ in space $(\mathbb{R}^J, M)$.

• The possibility of choosing $M$ makes this measure rather flexible. Let us review important examples.
1) If all variables in $X$ are numeric and standardized, and $M = I$, the criterion is that of standard PCA. Its extrema correspond to principal components.

2) If we do not want to consider structural strength in the group, i.e. consider that all variables in $\langle X \rangle$ are to have equal strength, then we may take $M = (X'PX)^{-1}$. Indeed, we have then:

$$\forall u: \ u'(X'PX)^{-1}u = 1 \ \Rightarrow\ \|XMu\|_P^2 = u'(X'PX)^{-1}(X'PX)(X'PX)^{-1}u = 1$$

This choice leads to taking group $X$ as mere subspace $\langle X \rangle$.

3) Suppose group $X$ is made of $K$ categorical variables $C_1, \ldots, C_K$. Each categorical variable $C_k$ is coded through a matrix $X_k$ set up, as follows, from the dummy variables corresponding to its values: all dummy variables are centred, and one of them is removed to avoid singularity. Now, equating $M$ to the block-diagonal matrix $\mathrm{Diag}\big((X_k'PX_k)^{-1}\big)_{k=1 \text{ to } K}$ yields a structural strength criterion whose maximization leads to Multiple Correspondence Analysis, which extends PCA to categorical variables.

4) More generally, when group $X$ is partitioned into $K$ subgroups $X_1, \ldots, X_K$, such that inter-subgroup correlations are of interest, but not within-subgroup correlations, then each subgroup $X_k$ is considered as mere subspace $\langle X_k \rangle$. Equating $M$ to the block-diagonal matrix $\mathrm{Diag}\big((X_k'PX_k)^{-1}\big)_{k=1 \text{ to } K}$ allows one to neutralize every within-subgroup correlation structure, and yields a criterion whose maximization leads to generalized canonical correlation analysis.

2. A single predictor group X: PLS regression

2.1. Group Y is reduced to a single variable y: PLS1

Consider a numeric variable $y$ and a predictor group $X$ containing $J$ variables and weighed by metric $M$. The component we are looking for is $F = XMu$. Under constraint $u'Mu = 1$, $\|F\|_P^2$ is the inertia measure of $F$'s structural strength.

2.1.1. Program

The criterion that is classically maximized under the constraint $u'Mu = 1$ is:

$$C_1(X, M, P; y) = \langle XMu \mid y \rangle_P = \|XMu\|_P \, \cos_P(XMu, y) \, \|y\|_P \quad (1)$$

It leads to the following program:

$$Q_1(X, M, P; y): \ \max_{u'Mu = 1} \langle XMu \mid y \rangle_P$$

N.B.: $y$ being standardized, $\|y\|_P = 1$. Then: $M = (X'PX)^{-1} \Rightarrow \|XMu\|_P = 1 \Rightarrow C_1 = \cos_P(XMu, y)$.

2.1.2. Solution: rank 1 PLS1 component

$$L = \langle XMu \mid y \rangle_P - \frac{\lambda}{2}(u'Mu - 1) = y'PXMu - \frac{\lambda}{2}(u'Mu - 1)$$

$$\frac{\partial L}{\partial u} = 0 \ \Leftrightarrow\ MX'Py = \lambda Mu \quad (2)$$

$$(2) \Rightarrow XMX'Py = \lambda XMu \quad (3)$$

$(2) \Rightarrow u = \frac{1}{\lambda} X'Py$ and then, $u'Mu = 1 \Rightarrow \lambda = \|X'Py\|_M$.

We shall write $R_{X,M,P} = XMX'P$ and term $R_{X,M,P}\, y$ the "linear resultant of $y$ onto triplet $(X, M, P)$".

Let $u_1 = \arg\max_{u'Mu=1} \langle XMu \mid y \rangle_P$ and $F^1 = XMu_1$, which we shorthand: $F^1 = \mathrm{Arg}_F\, Q_1(X, M, P; y)$. According to (3), component $F^1$ is collinear to $R_{X,M,P}\, y$:

$$F^1 = \frac{1}{\lambda} R_{X,M,P}\, y = \frac{1}{\|X'Py\|_M} R_{X,M,P}\, y$$

N.B.: $M = (X'PX)^{-1} \Rightarrow R_{X,M,P}\, y = X(X'PX)^{-1}X'Py = \Pi_X\, y$. Ignoring $X$'s principal correlation structures leads to classical regression.

2.1.3. Rank k PLS1 Components

Let generally $X^k$ be the matrix of residuals of $X$ regressed onto PLS components up to rank $k$: $F^1, \ldots, F^k$. The rank $k$ PLS component is defined as the component solution of $Q_1(X^{k-1}, M, P; y)$. Computing it that way ensures that $F^k$ is orthogonal to $F^1, \ldots, F^{k-1}$.

2.2. Y contains several dependent variables

Consider now two variable groups $X$ ($J$ variables, weighed by metric $M$) and $Y$ ($K$ variables, weighed by metric $N$). We may want to perform dimensional reduction in $X$ only (looking for component $F = XMu$) or in both $X$ and $Y$ (then looking for component $G = YNv$ as well).

2.2.1. Dimensional reduction in X only

a) Criterion and Program:

Let $\{n_k\}_{k=1 \text{ to } K}$ be a set of weights associated to the $K$ variables in $Y$ and let $N = \mathrm{diag}(\{n_k\}_k)$.
Then, consider criterion $C_2$:

$$C_2(X, M; Y, N; P) = \sum_{k=1}^K n_k \langle XMu \mid y^k \rangle_P^2 = \sum_{k=1}^K n_k\, C_1^2(X, M, P; y^k)$$

It leads to the following program:

$$Q_2(X, M; Y, N; P): \ \max_{u'Mu = 1} C_2(X, M; Y, N; P)$$

b) Rank 1 solution:

$$C_2 = u'MX'P \Big(\sum_{k=1}^K n_k\, y^k y^{k\prime}\Big) PXMu = u'MX'PYNY'PXMu$$

N.B.: Note that according to this matrix expression of $C_2$, $N$ need not be diagonal.

$$L = C_2 - \lambda(u'Mu - 1)$$

$$\frac{\partial L}{\partial u} = 0 \ \Leftrightarrow\ MX'PYNY'PXMu = \lambda Mu \quad (4)$$

$u'(4) \Rightarrow \lambda = C_2 \Rightarrow \lambda$ is the largest eigenvalue.

$$X(4) \ \Leftrightarrow\ R_{X,M,P}\, R_{Y,N,P}\, F = \lambda F \ \text{ with } \lambda \text{ maximum} \quad (5)$$

2.2.2. Dimensional reduction in X and Y

a) Criterion and program:

We are now looking for components $F = XMu$ and $G = YNv$. The criterion that compounds structural strength of components and goodness of fit is:

$$C_3 = \langle XMu \mid YNv \rangle_P = \|XMu\|_P \, \|YNv\|_P \, \cos_P(XMu, YNv) \quad (6)$$

It leads to the program:

$$Q_3(X, M; Y, N; P): \ \max_{u'Mu = 1,\ v'Nv = 1} \langle XMu \mid YNv \rangle_P$$

b) Rank 1 Solutions:

There is an obvious link between programs $Q_3$ and $Q_1$:

$$(F, G) = \arg_{F,G} Q_3(X, M; Y, N; P) \ \Leftrightarrow\ F = \arg_F Q_1(X, M, P; G) \ \text{ and } \ G = \arg_G Q_1(Y, N, P; F) \quad (7)$$

This leads us to the characterization of the solutions. Given $v$, program $Q_3(X, M; Y, N; P)$ boils down to $Q_1(X, M, P; YNv)$. Therefore:

$$(2) \Rightarrow MX'PYNv = \lambda Mu \quad (8a)$$

$$(8a) \Rightarrow XMX'PYNv = \lambda XMu \ \Leftrightarrow\ R_{X,M,P}\, G = \lambda F \quad (9a)$$

Symmetrically, given $u$, program $Q_3(X, M; Y, N; P)$ boils down to $Q_1(Y, N, P; XMu)$. Therefore:

$$(2) \Rightarrow NY'PXMu = \mu Nv \quad (8b)$$

$$(8b) \Rightarrow YNY'PXMu = \mu YNv \ \Leftrightarrow\ R_{Y,N,P}\, F = \mu G \quad (9b)$$

$u'(8a)$ and $v'(8b)$ imply that $\lambda = \mu$. Let $\eta = \lambda^2 = \mu^2$. We have: $\lambda = \mu = v'NY'PXMu = C_3$, which must be maximized. (9a) and (9b) imply that $F$ and $G$ can be characterized as eigenvectors:

$$R_{X,M,P}\, R_{Y,N,P}\, F = \eta F \quad (10a) \ ; \qquad R_{Y,N,P}\, R_{X,M,P}\, G = \eta G \quad (10b)$$

$\eta$ being the largest eigenvalue of operators $R_{X,M,P} R_{Y,N,P}$ and $R_{Y,N,P} R_{X,M,P}$.

N.B.: Component $F$'s characterization (10a) is none other than (5).
So, as far as $F$ is concerned, programs $Q_2(X, M; Y, N; P)$ and $Q_3(X, M; Y, N; P)$ are equivalent.

c) Choice of metrics M and N, and consequences

• When $M = I$ and $N = I$, we get the first step of Tucker's inter-battery analysis, as well as Wold's PLS regression.

• Take $M = (X'PX)^{-1}$. Program $Q_3$ is equivalent to:

$$\max_{\|XMu\|_P^2 = 1,\ v'Nv = 1} \langle XMu \mid YNv \rangle_P$$

Correlation structures in $X$ are no longer taken into account. To reflect that, program $Q_3(X, M; Y, N; P)$ will then be short-handed $Q_3(\langle X \rangle; Y, N; P)$. In such cases, the method is called Maximal Redundancy Analysis, or Instrumental Variables PCA.

• If we have both $M = (X'PX)^{-1}$ and $N = (Y'PY)^{-1}$, we get canonical correlation analysis.

d) Rank 2 and above:

• Our basic aim is to model $Y$ using strong dimensions in $X$. Once the first $X$-component $F^1$ is extracted, we look for a strong dimension $F^2$ in $X$ that is orthogonal to $F^1$ and may best help model $Y$. To achieve that, we regress $X$ onto $F^1$, which leads to residuals $X^1$. Rank 2 component $F^2$ is then sought in $X^1$ so as to be structurally strong and predict $Y$ as well as possible (together with $F^1$, which is orthogonal, so that predictive powers can be separated). According to these requirements, one wants to solve:

$$\max_F \sum_{k=1}^K n_k\, C_1^2(X^1, M, P; y^k) \ \Leftrightarrow\ Q_3(X^1, M; Y, N; P)$$

It is easy to see that this approach leads to solving $Q_3(X^{k-1}, M; Y, N; P)$ to compute component $F^k$. Hereby, we get dimension reduction in $X$, in order to predict $Y$.

• Now, given $F = (F^1, \ldots, F^K)$, if we also want dimension reduction in $Y$ with respect to the regression model, we should look for strong structures in $Y$ best predicted using the $F^k$'s. To achieve that, we consider the following program: $Q_3(\langle F \rangle; Y, N; P)$. Solving the program yields $G^1$. As dimension reduction is now wanted in $Y$, $Y$ is regressed onto $G^1$, which leads to residuals $Y^1$.
Generally, $Y^{k-1}$ being the residuals of $Y$ regressed onto $G^1, \ldots, G^{k-1}$, component $G^k$ will be obtained solving $Q_3(\langle F \rangle; Y^{k-1}, N; P)$.

3. Structural Equation Exploratory Regression

In this section, we review multiple covariance criteria proposed in [Bry 2004], and use them in structural equation model estimation.

3.1. Multiple covariance criteria

3.1.1. The univariate case

• Consider the situation described in §1.1 and §1.2 and depicted in fig. 1b. Consider now the following criterion:

$$C_4(y; X_1, \ldots, X_R) = \|y\|_P^2 \, \cos_P^2\big(y, \langle F_1, \ldots, F_R \rangle\big) \prod_{r=1}^R \|F_r\|_P^2 = \cos_P^2\big(y, \langle F_1, \ldots, F_R \rangle\big) \prod_{r=1}^R \|F_r\|_P^2$$

where: $\forall r,\ F_r = X_r M_r u_r$ with $u_r' M_r u_r = 1$.

$C_4$ clearly compounds structural strength of components in groups ($\|F_r\|_P^2$) and the regression's goodness of fit ($\cos_P^2(y, \langle F_1, \ldots, F_R \rangle)$). It obviously extends criterion $(C_1)^2$ to the case of multiple predictor groups.

• If one chooses to ignore structural strength of components in groups by taking $M_r = (X_r' P X_r)^{-1}\ \forall r$, we have:

$$\|F_r\|_P^2 = 1 \ \forall r \ \Rightarrow\ C_4 = \cos_P^2\big(y, \langle F_1, \ldots, F_R \rangle\big)$$

So, we get back plain linear regression's criterion.

3.1.2. The multivariate case

• If $N$ were diagonal ($N = \mathrm{diag}(n_k)_{k=1 \text{ to } K}$), and dimensional reduction in $Y$ were secondary, we might consider the following criterion based on $C_4$:

$$C_5 = \sum_{k=1}^K n_k\, C_4(y^k; X_1, \ldots, X_R) = \sum_{k=1}^K n_k \cos_P^2\big(y^k, \langle F_1, \ldots, F_R \rangle\big) \prod_{r=1}^R \|F_r\|_P^2 \quad (11)$$

• If we want to primarily perform dimensional reduction in $Y$ as well as in the $X_r$'s, as pictured in fig. 1a, we should consider the following criterion:

$$C_6 = \|G\|_P^2 \, \cos_P^2\big(G, \langle F_1, \ldots, F_R \rangle\big) \prod_{r=1}^R \|F_r\|_P^2 \quad (12)$$

where: $G = YNv$ with $v'Nv = 1$; $\forall r,\ F_r = X_r M_r u_r$ with $u_r' M_r u_r = 1$.

$C_6$ is a compound of structural strength of components in groups ($\|F_r\|_P^2$ and $\|G\|_P^2$) and the regression's goodness of fit ($\cos_P^2(G, \langle F_1, \ldots, F_R \rangle)$).

• Once again, if one chooses to ignore structural strength of components in groups by taking $M_r = (X_r' P X_r)^{-1}\ \forall r$ and $N = (Y'PY)^{-1}$, we have:

$$\|G\|_P^2 = 1,\ \|F_r\|_P^2 = 1 \ \forall r \ \Rightarrow\ C_6 = \cos_P^2\big(G, \langle F_1, \ldots, F_R \rangle\big)$$

3.2. Rank 1 Components

3.2.1. The univariate case

a) A simple case

• Consider figure 3: an observed variable $y$ is dependent upon component $F$ in group $X$, along with other explanatory variables grouped in $Z = [z^1, \ldots, z^S]$. Each $z^s$ is taken as a unidimensional group having obvious component $F_s = z^s$.

[Figure 3: variable $y$ depending on an $X$-component $F$ and a $Z$ group]

$F$ is found maximizing the multiple covariance criterion which, in this case, leads to:

$$\max_{F = XMu,\ u'Mu = 1} C_4 \ \Leftrightarrow\ Q_4^*(y; X, M; Z): \ \max_{F = XMu,\ u'Mu = 1} \cos_P^2\big(y, \langle F, Z \rangle\big)\, \|F\|_P^2$$

Property: If one ignores structures in $X$ by taking $M = (X'PX)^{-1}$, program $Q_4^*$ boils down to:

$$\max_{F \in \langle X \rangle,\ \|F\|_P = 1} \cos_P^2\big(y, \langle F, Z \rangle\big)$$

Then, let $y_X^Z = \Pi_X^Z\, y$ be the $X$-component of $y_{\langle X, Z \rangle} = \Pi_{\langle X, Z \rangle}\, y$; the obvious solution of the program is: $F = st(y_X^Z)$.

• Let us rewrite program $Q_4^*$.

$$\cos^2\big(y, \langle F, Z \rangle\big) = \langle y \mid \Pi_{\langle F, Z \rangle}\, y \rangle_P = y'P\, \Pi_{\langle F, Z \rangle}\, y$$

Now, consider figure 4. We have $\Pi_{\langle F, Z \rangle}\, y = \Pi_Z\, y + \Pi_{\Pi_{Z^\perp} F}\, y$.

[Figure 4: decomposition of $\Pi_{\langle F, Z \rangle}\, y$ into $\Pi_Z\, y$ and $\Pi_t\, y$, with $t = \Pi_{Z^\perp} F$]

Let $t = \Pi_{Z^\perp} F$. We have:

$$\Pi_{\langle F, Z \rangle}\, y = \Pi_Z\, y + \Pi_t\, y = \Pi_Z\, y + \frac{\langle t \mid y \rangle_P}{\langle t \mid t \rangle_P}\, t = \Pi_Z\, y + \frac{t'Py}{t'Pt}\, t$$

$\Pi_{Z^\perp}$ is $P$-symmetric, so: $\Pi_{Z^\perp}' P = P\, \Pi_{Z^\perp}$.
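As a consequence:

$$\Pi_{\langle F, Z \rangle}\, y = \Pi_Z\, y + \frac{F' \Pi_{Z^\perp}' P y}{F' \Pi_{Z^\perp}' P\, \Pi_{Z^\perp} F}\, \Pi_{Z^\perp} F = \Pi_Z\, y + \frac{F' \Pi_{Z^\perp}' P y}{F' P\, \Pi_{Z^\perp} F}\, \Pi_{Z^\perp} F$$

$$\Rightarrow\ y'P\, \Pi_{\langle F, Z \rangle}\, y = y'P\, \Pi_Z\, y + \frac{F' \Pi_{Z^\perp}' P y}{F' P\, \Pi_{Z^\perp} F}\, y'P\, \Pi_{Z^\perp} F$$

$$\Leftrightarrow\ \cos^2\big(y, \langle F, Z \rangle\big) = y'P\, \Pi_Z\, y + \frac{F' \Pi_{Z^\perp}' P y\, y' P\, \Pi_{Z^\perp} F}{F' P\, \Pi_{Z^\perp} F} = \frac{(y'P\, \Pi_Z\, y)\, F' P\, \Pi_{Z^\perp} F + F' \Pi_{Z^\perp}' P y y' P\, \Pi_{Z^\perp} F}{F' P\, \Pi_{Z^\perp} F}$$

$$= \frac{F' \big[ (y'P\, \Pi_Z\, y)\, P\, \Pi_{Z^\perp} + \Pi_{Z^\perp}' P y y' P\, \Pi_{Z^\perp} \big] F}{F' P\, \Pi_{Z^\perp} F}$$

So:

$$C_4 = F'PF \ \frac{F' \big[ (y'P\, \Pi_Z\, y)\, P\, \Pi_{Z^\perp} + \Pi_{Z^\perp}' P y y' P\, \Pi_{Z^\perp} \big] F}{F' P\, \Pi_{Z^\perp} F} \quad (13)$$

We can write it:

$$C_4 = \frac{(F'PF)\,(F'A(y)F)}{F'BF}$$

with $P$, $A(y)$ and $B$ symmetric matrices:

$$B = P\, \Pi_{Z^\perp} = P - PZ(Z'PZ)^{-1}Z'P \ ; \qquad A(y) = (y'P\, \Pi_Z\, y)\, B + B' y y' B$$

N.B.: When unambiguous, $A(y)$ will be short-handed $A$.

Replacing $F$ with $XMu$, we get the program:

$$Q_4^*(y; X, M; Z): \ \max_{u'Mu = 1} \frac{(u'MX'PXMu)\,(u'MX'AXMu)}{u'MX'BXMu}$$

• Let us now try to characterize the solution of $Q_4^*(y; X, M; Z)$.

$$L = \frac{(u'MX'PXMu)\,(u'MX'AXMu)}{u'MX'BXMu} - \lambda(u'Mu - 1)$$

$$\frac{\partial L}{\partial u} = 0 \ \Leftrightarrow\ \big[ \gamma(u)\, MX'A + \beta(u)\, MX'P - \beta(u)\gamma(u)\, MX'B \big] XMu = \lambda Mu \quad (14)$$

with:

$$\beta(u) = \frac{u'MX'AXMu}{u'MX'BXMu} \ ; \qquad \gamma(u) = \frac{u'MX'PXMu}{u'MX'BXMu}$$

Notice that $\beta(u)$ and $\gamma(u)$ are homogeneous functions of $u$ with degree 0. Besides, let us calculate $u'(14)$ and use constraint $u'Mu = 1$, which gives:

$$u' \big[ \gamma(u)\, MX'A + \beta(u)\, MX'P - \beta(u)\gamma(u)\, MX'B \big] XMu = \lambda$$

$$\Leftrightarrow\ \gamma(u)\, u'MX'AXMu + \beta(u)\, u'MX'PXMu - \beta(u)\gamma(u)\, u'MX'BXMu = \lambda$$

$$\Leftrightarrow\ \lambda = \frac{(u'MX'PXMu)\,(u'MX'AXMu)}{u'MX'BXMu} = C_4$$

As a consequence, $\lambda$ must be maximum. To characterize component $F = XMu$ directly, we calculate:

$$X(14) \ \Leftrightarrow\ \big[ \gamma(u)\, XMX'A + \beta(u)\, XMX'P - \beta(u)\gamma(u)\, XMX'B \big] XMu = \lambda XMu$$

$$\Leftrightarrow\ XMX' \big[ \gamma(F)\, A + \beta(F)\, P - \beta(F)\gamma(F)\, B \big] F = \lambda F \quad (15)$$

with

$$\beta(F) = \frac{F'AF}{F'BF} \ ; \qquad \gamma(F) = \frac{F'PF}{F'BF} \quad (16)$$

N.B.1: These coefficients are homogeneous functions of $F$ with degree 0, which allows one to seek solution $F$ of (15) up to a multiplicative constant.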
N.B.2: It is easy to show that at the fixed point, $\beta$ and $\gamma$ receive interesting substantial interpretations. Take $F = F_r$ and $Z = [F_s, s \neq r]$, and write $E_r = \langle F_s, s \neq r \rangle$ for short. Then:

$$\gamma(F_r) = \frac{F_r' P F_r}{F_r' B F_r} = \frac{\|F_r\|_P^2}{\|\Pi_{E_r^\perp} F_r\|_P^2} = \frac{1}{1 - \cos_P^2(F_r, E_r)}$$

Besides:

$$\beta(F_r) = \frac{F_r' A F_r}{F_r' B F_r} = \frac{F_r' \big[ (y'P\, \Pi_{E_r} y)\, P\, \Pi_{E_r^\perp} + \Pi_{E_r^\perp}' P y y' P\, \Pi_{E_r^\perp} \big] F_r}{F_r' P\, \Pi_{E_r^\perp} F_r} = y'P\, \Pi_{E_r} y + \frac{(F_r' \Pi_{E_r^\perp}' P y)^2}{F_r' P\, \Pi_{E_r^\perp} F_r}$$

$$= \|\Pi_{E_r} y\|_P^2 + \frac{\langle \Pi_{E_r^\perp} F_r \mid y \rangle_P^2}{\|\Pi_{E_r^\perp} F_r\|_P^2} = \|\Pi_{E_r} y\|_P^2 + \|\Pi_{\langle \Pi_{E_r^\perp} F_r \rangle}\, y\|_P^2 = \|\Pi_{E_r \oplus \langle \Pi_{E_r^\perp} F_r \rangle}\, y\|_P^2$$

$$= \|\Pi_{E_r \oplus \langle F_r \rangle}\, y\|_P^2 = \|\Pi_{\langle F_s,\ s = 1 \text{ to } R \rangle}\, y\|_P^2 = \cos^2\big(y; \langle F_r, r = 1 \text{ to } R \rangle\big) \ \text{ if } y \text{ is standardized}$$

• As coefficients $\gamma$ and $\beta$ depend on the solution, solving equations (15) and (16) analytically with $\lambda$ maximum is not straightforward. As an alternative, we propose to look for $Q_4^*$'s solution as the fixed point of the following algorithm:

Algorithm A0:

Iteration 0 (initialization):
- Choose an arbitrary initial value $F(0)$ for $F$ in $\langle X \rangle$, for example one of $X$'s columns, or $X$'s first PC. Standardize it.

Current iteration $k > 0$:
- Calculate coefficients $\gamma = \gamma(F(k-1))$ and $\beta = \beta(F(k-1))$ through (16).
- Extract the eigenvector $f$ associated with the largest eigenvalue of matrix $XMX' [\gamma A + \beta P - \beta\gamma B]$.
- Take $F(k) = st(f)$.
- If $F(k)$ is close enough to $F(k-1)$, stop.

This algorithm has been empirically tested on matrices exhibiting miscellaneous patterns. It has shown rather quick convergence in most cases (fewer than 30 iterations to reach a relative difference between two consecutive values of one component lower than $10^{-6}$).
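The iteration of Algorithm A0 can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names `standardize` and `algorithm_a0`, the arguments `tol` and `max_iter`, the choice of $X$'s first column as $F(0)$ and the simulated data are all hypothetical. Instead of the $(n,n)$ matrix $XMX'[\gamma A + \beta P - \beta\gamma B]$, the sketch diagonalizes the symmetric $(J,J)$ matrix $(XL)'[\gamma A + \beta P - \beta\gamma B](XL)$, where $M = LL'$ is a Cholesky factorization; if $w$ is an eigenvector of the latter, $XLw$ is an eigenvector of the former for the same eigenvalue.

```python
import numpy as np

def standardize(f, p):
    """Centre f and scale it to unit variance under observation weights p."""
    f = f - p @ f
    return f / np.sqrt(f @ (p * f))

def algorithm_a0(X, M, y, Z, p, tol=1e-6, max_iter=200):
    """Fixed-point iteration for Q4*(y; X, M; Z). Returns (F, number of iterations)."""
    n = X.shape[0]
    P = np.diag(p)
    if Z is None or Z.shape[1] == 0:
        H = np.zeros((n, n))                      # no Z group: P Pi_Z = 0
    else:
        PZ = P @ Z
        H = PZ @ np.linalg.solve(Z.T @ PZ, PZ.T)  # P Pi_Z = PZ (Z'PZ)^{-1} Z'P
    B = P - H                                     # B = P Pi_{Z-perp}
    By = B @ y
    A = (y @ H @ y) * B + np.outer(By, By)        # A(y) = (y'P Pi_Z y) B + B'yy'B
    XL = X @ np.linalg.cholesky(M)                # M = LL', so XMu = (XL) w with w = L'u
    F = standardize(X[:, 0], p)                   # F(0): first column of X (assumption)
    for k in range(1, max_iter + 1):
        den = F @ B @ F
        beta = (F @ A @ F) / den                  # equation (16)
        gamma = (F @ P @ F) / den
        S = gamma * A + beta * P - beta * gamma * B
        _, vecs = np.linalg.eigh(XL.T @ S @ XL)   # eigenvalues in ascending order
        F_new = standardize(XL @ vecs[:, -1], p)  # F(k) = st(f)
        if F_new @ (p * F) < 0:                   # eigenvectors are defined up to sign
            F_new = -F_new
        if np.linalg.norm(F_new - F) <= tol * np.linalg.norm(F):
            return F_new, k
        F = F_new
    return F, max_iter

# Hypothetical simulated data: uniform weights, centred X and Z, standardized y.
rng = np.random.default_rng(0)
n = 40
p = np.full(n, 1.0 / n)
X = rng.normal(size=(n, 4)); X = X - p @ X
Z = rng.normal(size=(n, 2)); Z = Z - p @ Z
y = standardize(rng.normal(size=n), p)
M = np.eye(4)

F, n_iter = algorithm_a0(X, M, y, Z, p)
F_noZ, _ = algorithm_a0(X, M, y, None, p)
```

When there is no $Z$ group, $B = P$ and $A = Pyy'P$, so the matrix to diagonalize has rank 1 and the sketch returns the rank 1 PLS1 component, collinear to $XMX'Py$.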
b) The general univariate case

The program to be solved in the general case is:

$$Q_4: \ \max_{\forall r:\ u_r' M_r u_r = 1} C_4 \ \Leftrightarrow\ \max_{\forall r:\ u_r' M_r u_r = 1} \cos_P^2\big(y, \langle F_1, \ldots, F_R \rangle\big) \prod_{r=1}^R \|F_r\|_P^2$$

where: $\forall r,\ F_r = X_r M_r u_r$.

We propose to maximize the criterion iteratively on each $F_r$ component, taking all other components $\{F_s, s \neq r\}$ as fixed and using algorithm A0. So, we get the following algorithm:

Algorithm A1:

Iteration 0 (initialization):
- For $r = 1$ to $R$: choose an arbitrary initial value $F_r(0)$ for $F_r$ in $\langle X_r \rangle$, for example one of $X_r$'s columns, or $X_r$'s first PC. Standardize it.

Current iteration $k > 0$:
- For $r = 1$ to $R$: set $F_r(k) = F_r(k-1)$.
- For $r = 1$ to $R$: use algorithm A0 to compute $F_r(k)$ as the solution of program $Q_4^*(y; X_r, M_r; [F_s(k), s \neq r])$.
- If $\forall r$, $F_r(k)$ is close enough to $F_r(k-1)$, stop.

3.2.2. The multivariate case

a) A simple case

Consider now $y^1, \ldots, y^K$ standardized, and suppose they depend upon $F = XMu$ together with other predictors $z^1, \ldots, z^S$, each considered as a unidimensional group as in §3.2.1. Let $Z = [z^1, \ldots, z^S]$.

Use of criterion $C_5$:

Let $N = \mathrm{diag}(n_k)_{k=1 \text{ to } K}$.
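In this case:

$$C_5 = \|F\|_P^2 \sum_{k=1}^K n_k \cos_P^2\big(y^k, \langle F, Z \rangle\big)$$

From (13) we draw:

$$C_5 = \frac{F'PF}{F'BF} \sum_{k=1}^K n_k\, F'A(y^k)F = \frac{(F'PF)\,(F'AF)}{F'BF} \quad \text{with: } A = \sum_{k=1}^K n_k\, A(y^k)$$

As a consequence, algorithm A0 may be used to solve program:

$$Q_5^*(Y, N; X, M; Z): \ \max_{F = XMu,\ u'Mu = 1} \|F\|_P^2 \sum_{k=1}^K n_k \cos_P^2\big(y^k, \langle F, Z \rangle\big)$$

Expression of matrix $A$:

$$A(y^k) = (y^{k\prime} P\, \Pi_Z\, y^k)\, P\, \Pi_{Z^\perp} + \Pi_{Z^\perp}' P y^k y^{k\prime} P\, \Pi_{Z^\perp}$$

$$\Rightarrow\ A = \sum_{k=1}^K n_k\, A(y^k) = P\, \Pi_{Z^\perp} \sum_{k=1}^K n_k\, y^{k\prime} P\, \Pi_Z\, y^k + \Pi_{Z^\perp}' P \Big( \sum_{k=1}^K n_k\, y^k y^{k\prime} \Big) P\, \Pi_{Z^\perp} = P\, \Pi_{Z^\perp} \sum_{k=1}^K n_k\, y^{k\prime} P\, \Pi_Z\, y^k + \Pi_{Z^\perp}' P\, YNY' P\, \Pi_{Z^\perp}$$

Now, $y^{k\prime} P\, \Pi_Z\, y^k = \mathrm{tr}(y^{k\prime} P\, \Pi_Z\, y^k) = \mathrm{tr}(y^k y^{k\prime} P\, \Pi_Z)$. So:

$$\sum_{k=1}^K n_k\, y^{k\prime} P\, \Pi_Z\, y^k = \mathrm{tr}\Big( \sum_{k=1}^K n_k\, y^k y^{k\prime} P\, \Pi_Z \Big) = \mathrm{tr}(YNY' P\, \Pi_Z)$$

And:

$$A = \mathrm{tr}(YNY' P\, \Pi_Z)\, P\, \Pi_{Z^\perp} + \Pi_{Z^\perp}' P\, YNY' P\, \Pi_{Z^\perp}$$

Use of criterion $C_6$:

• Let us show that maximizing $C_5$ and $C_6$ does not lead to the same $F$-solution. Let us rewrite both criteria in our simple case:

$$C_5 = \|F\|_P^2 \sum_{k=1}^K n_k \cos_P^2\big(y^k, \langle F, Z \rangle\big) = \|F\|_P^2 \sum_{k=1}^K n_k \langle y^k \mid \Pi_{\langle F, Z \rangle}\, y^k \rangle_P = \|F\|_P^2 \sum_{k=1}^K n_k\, \mathrm{tr}(y^{k\prime} P\, \Pi_{\langle F, Z \rangle}\, y^k)$$

$$= \|F\|_P^2\, \mathrm{tr}\Big( \sum_{k=1}^K n_k\, y^k y^{k\prime} P\, \Pi_{\langle F, Z \rangle} \Big) = \|F\|_P^2\, \mathrm{tr}(YNY' P\, \Pi_{\langle F, Z \rangle}) \quad (17)$$

Whereas:

$$C_6 = \|G\|_P^2 \cos_P^2\big(G, \langle F, Z \rangle\big)\, \|F\|_P^2 = \|F\|_P^2\, \langle G \mid \Pi_{\langle F, Z \rangle}\, G \rangle_P = \|F\|_P^2\, v'N'Y' P\, \Pi_{\langle F, Z \rangle}\, YNv \quad (18)$$

From (18) we know that, given $F$, program $\max_{v'Nv = 1} C_6$ has a $G$ solution characterized by:

$$NY' P\, \Pi_{\langle F, Z \rangle}\, YNv = \eta Nv \quad (19)$$

$$Y(19) \ \Leftrightarrow\ YNY' P\, \Pi_{\langle F, Z \rangle}\, G = \eta G$$

$v'(19) \Rightarrow \eta = v'N'Y' P\, \Pi_{\langle F, Z \rangle}\, YNv = C_6 / \|F\|_P^2$, which must be maximum. So:

$$C_6(F) = \|F\|_P^2\, \eta(F) \quad (20)$$

where $\eta(F)$ is the largest eigenvalue of $YNY' P\, \Pi_{\langle F, Z \rangle}$.

When there is no $Z$ group, $YNY' P\, \Pi_F$ has rank 1, and its trace is also its only non-zero eigenvalue which, being positive, is its largest one. So both criteria boil down to the same thing. But when there is a group $Z$, they no longer coincide.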
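The comparison between the trace in (17) and the largest eigenvalue in (20) can be checked numerically. The sketch below is only an illustration on simulated data: the helper names `p_projector` and `trace_and_top_eig`, the weights in `N` and the random groups are assumptions, not part of the method.

```python
import numpy as np

def p_projector(E, p):
    """P-orthogonal projector onto the column space of E: E (E'PE)^{-1} E'P."""
    PE = p[:, None] * E
    return E @ np.linalg.solve(E.T @ PE, PE.T)

# Hypothetical simulated data with uniform weights and centred columns.
rng = np.random.default_rng(1)
n, K = 30, 3
p = np.full(n, 1.0 / n)
P = np.diag(p)
Y = rng.normal(size=(n, K)); Y = Y - p @ Y
F = rng.normal(size=(n, 1)); F = F - p @ F
Z = rng.normal(size=(n, 2)); Z = Z - p @ Z
N = np.diag([0.5, 0.3, 0.2])

def trace_and_top_eig(E):
    """Trace and largest eigenvalue of Y N Y' P Pi_E."""
    T = Y @ N @ Y.T @ P @ p_projector(E, p)
    return np.trace(T), np.max(np.linalg.eigvals(T).real)

tr_F, eig_F = trace_and_top_eig(F)                     # no Z group: rank 1 operator
tr_FZ, eig_FZ = trace_and_top_eig(np.hstack([F, Z]))   # with a Z group

# Trace identity used in the expression of matrix A
lhs = sum(N[k, k] * Y[:, k] @ P @ p_projector(Z, p) @ Y[:, k] for k in range(K))
rhs = np.trace(Y @ N @ Y.T @ P @ p_projector(Z, p))
```

With `F` alone, `tr_F` and `eig_F` agree up to rounding, so $C_5$ and $C_6$ take the same value; once `Z` is added, the operator generically has several positive eigenvalues and `tr_FZ` exceeds `eig_FZ`.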
Of course, maximizing either criterion might possibly lead to the same $F$ component; in appendix 1, we show that it does not.

• We think that, in a multidimensional regressive approach, $C_5$ should be preferred to $C_6$, because the aim is to obtain, first, thematic dimensions that may help predict group $Y$ as a whole. Only then arises the secondary question of which dimensions in $Y$ are best predicted.

b) The general case

$$C_5 = \sum_{k=1}^K n_k \cos_P^2\big(y^k, \langle F_1, \ldots, F_R \rangle\big) \prod_{r=1}^R \|F_r\|_P^2$$

$$Q_5: \ \max_{\forall r:\ F_r = X_r M_r u_r;\ u_r' M_r u_r = 1} \ \sum_{k=1}^K n_k \cos_P^2\big(y^k, \langle F_1, \ldots, F_R \rangle\big) \prod_{r=1}^R \|F_r\|_P^2$$

We shall simply use an algorithm maximizing $C_5$ on each $F_r$ in turn:

Algorithm A2:

Iteration 0 (initialization):
- For $r = 1$ to $R$: choose an arbitrary initial value $F_r(0)$ for $F_r$ in $\langle X_r \rangle$, for example one of $X_r$'s columns, or $X_r$'s first PC. Standardize it.

Current iteration $k > 0$:
- For $r = 1$ to $R$: set $F_r(k) = F_r(k-1)$.
- For $r = 1$ to $R$: use algorithm A0 to compute $F_r(k)$ as the solution of program $Q_5^*(Y, N; X_r, M_r; [F_s(k), s \neq r])$.
- If $\forall r$, $F_r(k)$ is close enough to $F_r(k-1)$, stop.

3.3. Rank k Components

When we have more than one predictor group, a problem appears of hierarchy between components. Indeed, within a predictor group, the components must be ordered as they are for instance in PLS regression, but how should we relate the components between predictor groups? The solution that seems to us most consistent with regression's proper logic is to calculate sequentially (as in PLS) each predictor group's components controlling for all those of the other predictor groups. This implies that we state, ab initio, how many components we shall look for in each predictor group.

3.3.1. Predictor group component calculus

Let $J_r$ be the number of components $F_r^1, \ldots, F_r^{J_r}$ that are wanted in group $X_r$.
We shall use the following algorithm, extending algorithm A2:

Algorithm A3:

Iteration 0 (initialization):
- For $r = 1$ to $R$ and for $j = 1$ to $J_r$: set $F_r^j$'s initial value $F_r^j(0)$ as $X_r$'s $j$-th PC. Standardize it.

Current iteration $k > 0$:
- For $r = 1$ to $R$: set $F_r(k) = F_r(k-1)$.
- For $r = 1$ to $R$: For $j = 1$ to $J_r$:
  Let $X_r^0(k) = X_r$ and, if $j > 1$: $X_r^{j-1}(k) = \Pi_{\langle F_r^1(k), \dots, F_r^{j-1}(k)\rangle^\perp} X_r$.
  Let $\forall s, m$: $F_s(m) = [F_s^1(m), \dots, F_s^{J_s}(m)]$.
  Use algorithm A0 to compute $F_r^j(k)$ as the solution of program:
  $Q_5^*\big(Y,N; X_r^{j-1}(k), M_r; [\{F_s(k), s \neq r\} \cup \{F_r^h(k), 1 \le h \le j-1\}]\big)$
- If $\forall r, j$, $F_r^j(k)$ is close enough to $F_r^j(k-1)$, stop.

Consider $J'_1 \le J_1, \dots, J'_R \le J_R$. Let $M(J'_1, \dots, J'_R) = \big[[F_r^j]_{1 \le j \le J'_r}\big]_{1 \le r \le R}$.

The component set (or model) $M(J_1, \dots, J_R)$ produced by algorithm A3 contains sub-models. A sub-model is defined by any ordered pair $(r, J'_r)$ where $1 \le r \le R$ and $J'_r \le J_r$, as:

$$SM(r, J'_r) = M(J_1, \dots, J_{r-1}, J'_r, J_{r+1}, \dots, J_R)$$

The set of all sub-models is not totally ordered. But we have the following property, referred to as local nesting:

Every sequence $SM(r,.)$ of sub-models defined by $SM(r,.) = \big(SM(r, J'_r)\big)_{0 \le J'_r \le J_r}$ is totally ordered through the relation:

$$SM(r, J'_r) \le SM(r, J^*_r) \Leftrightarrow J'_r \le J^*_r$$

This order may be interpreted easily, considering that the component $F_r^j$ making the difference between $SM(r, j-1)$ and its successor $SM(r, j)$ is the $X_r$-component orthogonal to $[F_r^1, \dots, F_r^{j-1}]$ that "best" completes model $SM(r, j-1)$ (as meant in PLS) controlling for all other predictor components in $SM(r, j-1)$.

3.3.2. Predictor group component backward selection

• Let model $M = M(j_1, \dots, j_R)$.
When we remove predictor component $F_r^{j_r}$, going from model $M$ to its sub-model $SM_r = SM(r, j_r - 1)$, criterion $C_5$ is changed so that:

$$\frac{C_5(M)}{C_5(SM_r)} = \|F_r^{j_r}\|_P^2\, \frac{\sum_k \cos_P^2\big(y_k, \langle M\rangle\big)}{\sum_k \cos_P^2\big(y_k, \langle SM_r\rangle\big)}$$

But, to make norms $\|F_r^{j_r}\|_P^2$ comparable between groups, one should standardize all $X_r$'s inertia momenta using a proper weighting. For instance, $\|F_r^{j_r}\|_P^2$ might be divided by $In(X_r, M_r, P)$ or alternatively by $\lambda_1(X_r, M_r, P)$, so that it would be bounded above by 1 for every group.

• Practically, to select components in the $X_r$'s, one may initially set every $J_r$ to a value that is "too large", and then remove group components through the following backward procedure:

On current step $m$, having current model $M = M(j_1, \dots, j_R)$ where $\forall r$, $1 \le j_r \le J_r$:
- Find $s$ such that:

$$s = \arg\min_r\ \omega_r\, \|F_r^{j_r}\|_P^2\, \frac{\sum_k \cos_P^2\big(y_k, \langle M\rangle\big)}{\sum_k \cos_P^2\big(y_k, \langle SM(r, j_r - 1)\rangle\big)} \quad\text{with } \omega_r = \frac{1}{In(X_r, M_r, P)} \text{ or } \omega_r = \frac{1}{\lambda_1(X_r, M_r, P)}$$

- Set $j_s = j_s - 1$ and rerun the estimation procedure.

3.3.3. Calculating the dependent group components

Now, given the components in predictor groups, $F = M(J_1, \dots, J_R)$, we may want to achieve dimension reduction in $Y$ with respect to the regression model. Let us proceed as in section 2.2.2.d, and look for strong structures in $Y$ using the program of $(Y,N,P)$'s MRA onto $\langle F\rangle$:

$$Q_3(\langle F\rangle; Y, N; P)$$

Solving the program yields $G^1$. Generally, $Y^{k-1}$ being the residuals of $Y$ regressed onto $G^1, \dots, G^{k-1}$, component $G^k$ will be obtained by solving $Q_3(\langle F\rangle; Y^{k-1}, N, P)$.

3.4. Starting from C6: an alternative

What we want to do now is to perform dimension reduction in $Y$ and the $X_r$'s "at the same time". This means that the components $G$ in $Y$ and $F_r$ in the $X_r$'s are co-determined through the maximization of a single criterion.

3.4.1.
One component per thematic group

Suppose we want a single component in each thematic group. Let us look back at criterion $C_6$:

$$C_6:\ \|G\|_P^2 \cos_P^2\big(G, \langle F_1, \dots, F_R\rangle\big) \prod_{r=1}^{R} \|F_r\|_P^2$$

We shall use the same approach as for $C_5$'s maximization, i.e. iteratively maximize $C_6$ on each component in turn:

- Given $G$ and $F_1, \dots, F_{r-1}, F_{r+1}, \dots, F_R$:

$$\max_{F_r = X_rM_ru_r,\ u_r'M_ru_r = 1} C_6 \ \Leftrightarrow\ \max_{F_r = X_rM_ru_r,\ u_r'M_ru_r = 1} \|G\|_P^2 \cos_P^2\big(G, \langle F_1, \dots, F_R\rangle\big)\, \|F_r\|_P^2$$

This $Q_4^*$-type program is solved through algorithm A0.

- Given $F = [F_1, \dots, F_R]$:

$$\max_{G = YNv,\ v'Nv = 1} C_6 \ \Leftrightarrow\ \max_{G = YNv,\ v'Nv = 1} \|G\|_P^2 \cos_P^2\big(G, \langle F\rangle\big) \ \Leftrightarrow\ Q_3(Y, N, P; \langle F\rangle)$$

The $G$ solution is the rank 1 component of $(Y,N,P)$'s MRA onto $\langle F\rangle$. Finally, we get the following algorithm:

Algorithm B1:

Iteration 0 (initialization):
- For $r = 1$ to $R$: choose an arbitrary initial value $F_r(0)$ for $F_r$ in $\langle X_r\rangle$, for example one of $X_r$'s columns, or $X_r$'s first PC. Standardize it.
- Choose an arbitrary initial value $G(0)$ for $G$ in $\langle Y\rangle$, for example one of $Y$'s columns, or $Y$'s first PC. Standardize it.

Current iteration $k > 0$:
- Calculate $F(k) = [F_1(k), \dots, F_R(k)]$ as follows:
  - For $r = 1$ to $R$: set $F_r(k) = F_r(k-1)$.
  - For $r = 1$ to $R$: use algorithm A0 to compute $F_r(k)$ as the solution of program $Q_4^*(G; X_r, M_r; [F_s(k), s \neq r])$.
- Calculate $G(k)$ as the $G$-solution of $Q_3(Y, N, P; \langle F(k)\rangle)$.
- If $G(k)$ is close enough to $G(k-1)$ and, $\forall r$, $F_r(k)$ to $F_r(k-1)$, stop.

3.4.2. Several components per thematic group

What if we want $J_r$ components in group $X_r$ and $L$ components in group $Y$? Again, we may conveniently consider the local nesting approach to extend the rank 1 algorithm B1 of section 3.4.1.
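As a reminder of the building block being extended, the $G$-step of algorithm B1 has a closed form: by (19), with the projector taken onto $\langle F\rangle$, it is a generalized eigenproblem. The sketch below is an illustration under stated assumptions, not the authors' code: $N$ is diagonal with positive weights, $P = I/n$, the data are synthetic, and `g_step` is a hypothetical helper name. The change of variable $w = N^{1/2}v$ turns (19) into an ordinary symmetric eigenproblem.

```python
import numpy as np

def g_step(Y, N, P, F):
    """Return (G, v, eta) with G = Y N v maximizing ||G||_P^2 cos_P^2(G, <F>), v'Nv = 1."""
    Pi = F @ np.linalg.solve(F.T @ P @ F, F.T @ P)   # P-orthogonal projector onto <F>
    sqrtN = np.sqrt(N)                                # valid because N is diagonal
    T = sqrtN @ Y.T @ P @ Pi @ Y @ sqrtN              # symmetric form of (19)
    T = (T + T.T) / 2                                 # remove round-off asymmetry
    eigval, eigvec = np.linalg.eigh(T)                # ascending eigenvalues
    eta, w = eigval[-1], eigvec[:, -1]
    v = np.linalg.solve(sqrtN, w)                     # v = N^(-1/2) w, so v'Nv = 1
    return Y @ N @ v, v, eta

rng = np.random.default_rng(3)
n, K = 25, 3
P = np.eye(n) / n                                     # assumed uniform weights
N = np.diag([1.0, 2.0, 0.5])                          # assumed variable weights
Y = rng.standard_normal((n, K))
F = rng.standard_normal((n, 2))                       # current predictor components

G, v, eta = g_step(Y, N, P, F)
print(eta)
```

In a full B1 iteration this step would alternate with the A0-based updates of the $F_r$'s, which are not sketched here.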
Having to deal with several components in $Y$, we shall consider them as a new dependent variable group on each step, and use criterion $C_5$ to find predictor components that best predict them. Thus, we get:

Algorithm B2:

Iteration 0 (initialization):
- Set all $F_r^j$'s initial values to those given using algorithm A2. Let $F(0) = \big[[F_r^j(0)]_r\big]_j$.
- Set all $G^l$'s initial values to those calculated as in section 3.3.3: $G^l(0)$ is the solution of $Q_3(\langle F(0)\rangle; Y^{l-1}, N, P)$.

Current iteration $k > 0$:

Let:
$G(k-1) = [G^l(k-1)]_{l = 1 \text{ to } L}$
$\forall s, m$: $F_s(m) = [F_s^1(m), \dots, F_s^{J_s}(m)]$
$\forall m$: $F(m) = [F_1(m), \dots, F_R(m)]$

- For $r = 1$ to $R$: For $j = 1$ to $J_r$: set $F_r^j(k) = F_r^j(k-1)$.
- For $r = 1$ to $R$: For $j = 1$ to $J_r$:
  Let $X_r^0(k) = X_r$. If $j > 1$, let $X_r^{j-1}(k) = \Pi_{\langle F_r^1(k), \dots, F_r^{j-1}(k)\rangle^\perp} X_r$.
  Use algorithm A0 to compute $F_r^j(k)$ as the solution of program:
  $Q_5^*\big(G(k-1); X_r^{j-1}(k), M_r; [F_s(k), s \neq r]\big)$
- For $l = 1$ to $L$:
  Let $Y^0(k) = Y$, and if $l > 1$: $Y^{l-1}(k) = \Pi_{\langle G^1(k), \dots, G^{l-1}(k)\rangle^\perp} Y$.
  Compute $G^l(k)$ as the $G$-solution of $Q_3(Y^{l-1}, N, P; \langle F(k)\rangle)$.
- If $\forall l$, $G^l(k)$ is close enough to $G^l(k-1)$ and, $\forall r, j$, $F_r^j(k)$ to $F_r^j(k-1)$, stop.

4. Compared applications of PLS and SEER

4.1.
Data and goal

100 French cities have been described from various points of view through numeric variables¹, which may be thematically structured as shown in table 1:

Table 1: variables describing the French towns

| Theme | Sub-theme | Variable label | Variable description |
|---|---|---|---|
| Demographic dynamics | | PopGrowth | Population growth rate |
| | | Ageing | Nr of people over 75 / nr of people below 20 (in 1999) |
| | | PopAttract | Population attraction rate (nr of immigrants over 1990-1999 divided by population in 1999) |
| | | ActivePopAttract | Active population attraction rate (nr of active immigrants over 1990-1999 divided by population in 1999) |
| Economy | Work | Unemployt | Unemployment rate |
| | | YouthUnemployt | Unemployment rate of the under-25s |
| | | LongUnemployt | % of those unemployed for > 1 yr |
| | | VarJobCreat | Annual variation of the nr of jobs created in a year |
| | | Activity | Pct of active population |
| | | FemActivity | Pct of women in active population |
| | | ActiveInCity | Pct of active population working in the city |
| | | CieFailures | Pct of failures in companies created in a year |
| | | AvgWage | Average yearly net wage |
| | Wealth | IncomeTax | Average amount of the income tax |
| | | WealthTax | Pct of taxpayers having to pay the wealth tax |
| | | Taxpayers | Pct of persons having to pay the income tax |
| | Cost of living | SquaMeter | Average cost of 1 m² in old housing |
| | | InhabDuty | Average amount of the inhabited house duty |
| | | RealEsTax | Average amount of the real estate tax |
| | | WaterM3 | Cost of one cubic meter of water |
| | Housing | Owners | Pct of house owners |
| | | House4rooms | Pct of houses having 4 rooms or more |
| | | HouseInsal | Pct of insalubrious houses |
| | | HouseVacant | Pct of vacant houses |
| | | HouseNewBuilt | Nr of houses started in 2000 over total nr of houses |
| Risks | Crime, road | Criminality | Criminality rate (nr of crimes and offences per capita) |
| | | CrimVar | Criminality rate variation (%) |
| | | RoadRisk | Nr of inhabitants killed or injured owing to road traffic in 2000 |
| | Health | MortInfant | Infant mortality rate (nr of children deceased before 1 yr over nr of live births) |
| | | MortLungCancer | Standardized lung cancer-related mortality rate |
| | | MortAlcohol | Standardized alcohol-related mortality rate |
| | | MortCorThromb | Standardized coronary thrombosis-related mortality rate |
| | | MortSuicide | Standardized suicide-related mortality rate |
| | Environmental risks | Floods | Nr of floods between 1982 and 2001 |
| | | PollutedLand | Nr of polluted tracts of land |
| | | IndustRisk | Nr of factories classified 1 on the Seveso scale |
| | Educational risks | SchoolDelay1 | Pct of children over the normal age in the first year of secondary school |
| | | SchoolDelay4 | Pct of children over the normal age in the fourth year of secondary school |
| | | SchoolDelay7 | Pct of children over the normal age in the seventh and last year of secondary school |
| Resources | Natural | SeaSide | Seaside less than two hours away by car |
| | | Ski | Ski resort less than two hours away by car |
| | | Sun | Annual duration of sunshine |
| | | Rain | Annual nr of days with precipitation over 1 mm |
| | | Temperature | Average annual temperature from 1961 to 1990 |
| | | Walkers | Pct of employed going to work on foot |
| | Cultural | Museums | Nr of museums |
| | | Cinema | Nr of cinema admissions per inhabitant in 2000 |
| | | Monuments | Nr of listed historical monuments |
| | | BookLoan | Nr of loaned books per inhabitant in 2000 |
| | | Restaurants | Nr of restaurants graded with at least one star in the Michelin guide in 2001 |
| | | Press | Nr of magazine issues sold per inhabitant in 2000 |
| | | Students | Pct of students in population |
| | | PrimClassSize | Average size of primary school classes |

¹ Source: Le Point, issue nr 1530, 11/01/2002

What we want to achieve is to quickly, efficiently and understandably relate the demographic dynamics to structures in the other themes. We shall first try a non-thematic approach and then our SEER thematic approach, and see if the use of a thematic model helps. The question naturally arises of which thematic model to choose.
One may have a substantial socio-economic theory to back a specific thematic model, as in current structural equation modelling. But for want of such a theory, one may find it reasonable to start with a rather "poor" conceptual model, and gradually refine it by taking into account the empirical findings provided by its SEER estimation, in so far as these structural facts may receive satisfying conceptual interpretation. It is all the more necessary to proceed that way as conceptual partitioning is far from univocal.

4.2. Local nesting PLS regression (LN-PLS2)

Initially, we wanted to use standard PLS2 analysis as the non-thematic technique, taking the demographic dynamics as dependent group, and all other groups merged into one as the predictor group (the conceptual model can be seen in appendix 2, fig. 2a). But as PLS2 gives correlated components in the dependent group $Y$, it makes graphing of $Y$ awkward. Of course, there exists a variant of PLS2 dealing with groups $X$ and $Y$ identically² and thus yielding uncorrelated components in both of them, but the nesting of components would still be different in this variant and in SEER, making their results theoretically impossible to compare. Therefore, we chose to perform our local nesting variant of PLS2 analysis: LN-PLS2, which is merely what SEER boils down to when there is but one predictor group.

Dependent plane ($G^1$, $G^2$):

As shown by figure 5, demographic variables are very well projected on plane ($G^1$, $G^2$). Component $G^2$ is highly correlated with population growth rate. Component $G^1$ is positively correlated with ageing on one hand, and attraction rates on the other. Yet, as these are uncorrelated, $G^1$ is less clearly interpretable than $G^2$.

The R² column in table 2 shows that prediction of $G^2$ and population growth is poor, whereas that of $G^1$ and associated variables is much better.
Components $F^1$ and $F^3$ appear to be important to predict ageing, and $F^2$ and $F^4$ to predict population attraction.

² Canonical PLS [Tenenhaus 1998]; note that this symmetric PLS variant departs from the initial non-symmetric approach, which was to model $Y$ through $X$.

Figure 5: Demographic plane ($G^1$, $G^2$). [Plots on axes 1 and 2: the towns, and the variables PopGrowth, Ageing, PopAttract, ActivePopAttract.]

Table 2: Goodness of fit and importance of LN-PLS2 predictor components

| Modelling | R² | Coefficients of $F^1, \dots, F^6$ (non-empty cells, left to right) |
|---|---|---|
| $G^1$ | .634 | .393 ***, .569 ***, -.320 ***, -.168 **, .153 * |
| $G^2$ | .205 | .312 **, -.225 * |
| PopGrowth | .118 | -.286 ** |
| Ageing | .539 | .513 ***, -.403 ***, .187 ** |
| PopAttract | .442 | .578 ***, -.292 *** |
| ActivePopAttract | .534 | .657 ***, -.298 *** |

P-value coding: 0 < '***' < 0.001 < '**' < 0.01 < '*' < 0.05 < ' ' < 1

N.B.: The P-value of the standard linear model has been used to measure the importance of predictive components. It is of course not possible to view this indicator as a proper P-value, since the predictive components here are not exogenous. This also goes for all subsequent similar tables.

Let us now see whether the $F$-components may easily receive interpretation.

Predictor components planes:

Plane ($F^1$, $F^2$):

Figure 6: Predictor plane ($F^1$, $F^2$). [Plots on axes 1 and 2: the towns, and the predictor variables; annotated directions: Wealth / activity, Unemployment.]

Figure 6 shows that components $F^1$ and $F^2$ are not separately interpretable, whereas there is a clearly interpretable direction in plane ($F^1$, $F^2$): that of wealth / activity. Figure 7 shows that components $F^3$ and $F^4$ are poorly correlated to any predictor. This lack of interpretation of predictive components means failure of the PLS2-type non-thematic method for our exploratory modelling purpose.
Plane ($F^3$, $F^4$):

Figure 7: Predictor plane ($F^3$, $F^4$). [Plots on axes 3 and 4: the towns, and the predictor variables.]

4.3. SEER

4.3.1.
Rough thematic model of the data

Our initial thematic model must be rather coarse, yet conceptually defensible. Thus, we first partition predictors into three explanatory themes: Economy, Risks, Resources (see table 1 and appendix 2, fig. 2b). Merely to have a comparison basis for SEER, let us first perform "Thematic" Principal Components Regression. We extract the first two PCs of each theme: let $G^1$ and $G^2$ be those of the dependent theme $Y$, and $F_r^1$ and $F_r^2$ those of explanatory theme $X_r$. Then $G^1$, $G^2$ and all $y_k$'s are regressed onto $\{F_r^1, F_r^2\}_{r = 1 \text{ to } 3}$. Table 3 gives the goodness of fit (R²) of each model.

Table 3: Thematic PCR goodness of fit (3 themes model)

| Modelling | R² |
|---|---|
| $G^1$ | .303 |
| $G^2$ | .272 |
| PopGrowth | .067 |
| Ageing | .298 |
| PopAttract | .375 |
| ActivePopAttract | .417 |

4.3.2. SEER Results

Now, SEER is performed using the rough thematic model. Two components are extracted per theme. The convergence threshold for a unit norm vector was set to $10^{-9}$. Convergence was always reached in less than 30 iterations.

Dependent plane ($G^1$, $G^2$):

Figure 8 shows that plane ($G^1$, $G^2$) is very similar to that of LN-PLS2 (cf. figure 5).
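For reference, the Thematic PCR baseline of section 4.3.1 can be sketched as follows. This is an illustration on synthetic data (the town data are not reproduced here), assuming standardized variables, uniform weights and arbitrary block sizes; `standardize`, `first_pcs` and `r_squared` are hypothetical helper names.

```python
import numpy as np

def standardize(X):
    """Center and scale each column to unit variance."""
    return (X - X.mean(axis=0)) / X.std(axis=0)

def first_pcs(X, n_comp=2):
    """Scores of the first principal components of a standardized block."""
    U, s, _ = np.linalg.svd(X, full_matrices=False)
    return U[:, :n_comp] * s[:n_comp]

def r_squared(y, F):
    """R^2 of the least-squares regression of y on F plus an intercept."""
    A = np.column_stack([np.ones(len(y)), F])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    centered = y - y.mean()
    return 1 - (resid @ resid) / (centered @ centered)

rng = np.random.default_rng(2)
n = 100
# Three synthetic explanatory themes (sizes are arbitrary assumptions)
themes = [standardize(rng.standard_normal((n, p))) for p in (8, 6, 5)]
# Synthetic dependent group, loosely driven by the first theme
Y = standardize(themes[0][:, :4] + rng.standard_normal((n, 4)))

F = np.hstack([first_pcs(X) for X in themes])   # two PCs per explanatory theme
G = first_pcs(Y)                                # two PCs of the dependent theme
targets = {"G1": G[:, 0], "G2": G[:, 1]}
targets.update({f"y{k + 1}": Y[:, k] for k in range(Y.shape[1])})
r2 = {name: r_squared(y, F) for name, y in targets.items()}
for name, value in r2.items():
    print(f"{name}: R2 = {value:.3f}")
```

Each theme is summarized on its own, without reference to $Y$, which is precisely what distinguishes this baseline from SEER.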
Figure 8: Demographic plane ($G^1$, $G^2$). [Plots of the towns on axis 1 (30.53 %) and axis 2 (12.11 %), and of the variables PopGrowth, Ageing, PopAttract, ActivePopAttract on axes 1 and 2.]

The R² column in table 4 shows that, compared to Thematic PCR (table 3), SEER has markedly improved model adjustment (for instance, R² rises from .303 to .516 for $G^1$), except for population growth whose prediction is poor (R² = 0.07) for both techniques. Prediction of ageing is much better (R² = 0.42), and that of population attraction rates is relatively good (R² = 0.61). The first two economic components $F_1^1$ and $F_1^2$, together with the first risk component $F_2^1$, appear to be important to predict population attraction, and only the first risk component $F_2^1$ together with the first resource component $F_3^1$ to predict ageing.
Table 4: Goodness of fit and importance of SEER predictor components (3 themes model)

| Modelling | R² | Coefficients of $F_1^1, F_1^2, F_2^1, F_2^2, F_3^1, F_3^2$ (non-empty cells, left to right) |
|---|---|---|
| $G^1$ | .516 | .400 ***, -.629 ***, .509 ***, -.265 **, -.193 * |
| $G^2$ | .370 | -.545 ** |
| PopGrowth | .070 | .240 * |
| Ageing | .417 | .265 *, .375 ***, -.652 *** |
| PopAttract | .608 | .430 ***, -.589 ***, .376 ***, .180 * |
| ActivePopAttract | .608 | .521 ***, -.556 ***, .353 ***, -.181 * |

P-value coding: 0 < '***' < 0.001 < '**' < 0.01 < '*' < 0.05 < ' ' < 1

Predictor components planes:

Economic plane ($F_1^1$, $F_1^2$):

Figure 9 exhibits two easily interpretable economic components. $F_1^1$ is a wealth/activity component, the only structural direction dug up by LN-PLS2. $F_1^2$ looks close to a housing component, which has a negative partial effect on population attraction. This means that, controlling for everything else, towns with higher attraction rates have more vacant houses and a lower percentage of people owning their house.

Figure 9: Economic predictor plane ($F_1^1$, $F_1^2$). [Plots of the towns on axis 1 (30.53 %) and axis 2 (12.11 %), and of the economic variables on axes 1 and 2; annotated directions: Wealth, Activity, Unemployment, Owners, Houses vacant, Large houses.]

Risk plane ($F_2^1$, $F_2^2$):

Figure 10 exhibits a clear and interesting pattern: that of two distinct variable bundles which are also conceptually apart: one of social risks (school delays, criminality), and one of mortality risks owing to diseases related to alcohol and tobacco. First component $F_2^1$ being negatively correlated to both bundles, it may be interpreted as a global security component. Its partial effect on population attraction is positive (cf. table 4). Yet, through its intermediate position, this component clearly appears to be an unsatisfactory compromise between two distinct risk structures. This pleads in favour of splitting the risk theme into two sub-themes: that of social risks and that of health risks. We can see here all the benefit of graphing the themes in explanatory component planes: it allows one to investigate their structure from an explanatory viewpoint, and to further refine the thematic model appropriately.
Figure 10: Risk plane ($F_2^1$, $F_2^2$). [Plots of the towns on axis 1 (19.09 %) and axis 2 (14.59 %), and of the risk variables on axes 1 and 2; annotated bundles: Social risks, Health risks.]

Resource plane ($F_3^1$, $F_3^2$):

Figure 11 also exhibits a two-bundle structure in the resource theme, but this time, each of the first two components matches a bundle. $F_3^1$ is a climatic component opposing warm and sunny towns to cold and rainy ones. $F_3^2$ is a cultural component pointing at monuments, museums and luxury restaurants.
On the town plane, we notice the peculiar situation of Paris, which alone may account for the second component. Indeed, here is a second benefit of thematic planes: they allow one to explore the individuals' thematic structure with respect to the explanatory model. It appears necessary to later remove Paris from the data, or better, to replace the original variables by the corresponding rank variables, in order to shrink the influence of outliers. For the time being, it is not necessary to split the theme into two sub-themes (one of natural resources and one of cultural resources), since each of the two structures is satisfactorily reflected by a component. According to table 4, the effects of these components on population attraction are weak, but the partial effect of $F_3^1$ on ageing is important, and negative: warmer climes are linked to older populations, controlling for all other predictive components.

Figure 11: Resource plane ($F_3^1$, $F_3^2$). [Plots of the towns on axis 1 (21.38 %) and axis 2 (22 %), and of the resource variables on axes 1 and 2; annotated directions: Warmer, Colder, Museums, Monuments, Restaurants.]

4.3.3. Refining the thematic model

Splitting the Risk theme into two sub-themes (social risk and health risk), we get a 4 theme-model (graphed in appendix 2, fig. 2c). The SEER estimation of this model does not change the conclusions regarding the economy and resource factors (cf. fig. 13 and 16). But the risk factors are now twofold: as shown on figures 14 and 15, we now have a social risk component ($F_2^1$) as well as a health security component ($F_3^1$). According to table 5, the social risk component $F_2^1$ does not appear to be clearly partially correlated to population attraction, whereas the health security component is (with positive effect). On the other hand, $F_2^1$ is partially positively correlated to ageing: school delay is marginally more important in areas with older populations.
Figure 12: Demographic plane ($G_1$, $G_2$)
[Cities plotted on axis 1 (31.64 %) and axis 2 (11.59 %); correlation circle on axes 1 and 2 with variables PopGrowth, Ageing, PopAttract, ActivePopAttract.]

Table 5: Goodness of fit and importance of SEER predictor components (4-theme model)

Columns of the original table: R², $F^1_1$, $F^1_2$, $F^2_1$, $F^2_2$, $F^3_1$, $F^3_2$, $F^4_1$, $F^4_2$. Only significant coefficients are reported. The blank cells were lost in extraction, so for every row except $G_1$ the coefficients are listed in left-to-right order without column assignment.

| Dependent variable | R² | Significant coefficients |
|---|---|---|
| $G_1$ | .528 | $F^1_1$: .397 ***, $F^1_2$: -.547 ***, $F^2_1$: -.244 **, $F^2_2$: .181 *, $F^3_1$: -.363 ***, $F^3_2$: -.213 *, $F^4_1$: -.236 **, $F^4_2$: -.185 * |
| $G_2$ | .383 | -.586 *** |
| PopGrowth | .126 | .347 ** |
| Ageing | .426 | -.259 *, .290 **, -.630 *** |
| PopAttract | .607 | .427 ***, -.477 ***, .214 **, .371 ***, .260 ** |
| ActivePopAttract | .610 | .533 ***, -.457 ***, .160 *, .280 **, -.190 *, -.167 * |

P-value coding: 0 < '***' < 0.001 < '**' < 0.01 < '*' < 0.05 < ' ' < 1

Figure 13: Economic predictor plane ($F^1_1$, $F^1_2$)
[Cities plotted on axis 1 (31.64 %) and axis 2 (11.59 %); correlation circle on axes 1 and 2 with variables Unemployt, YouthUnemployt, LongUnemployt, VarJobCreat, Activity, FemActivity, ActiveInCity, CieFailures, AvgWage, IncomeTax, WealthTax, Taxpayers, SquaMeter, InhabDuty, RealEsTax, WaterM3, Owners, House4rooms, HouseInsal, HouseVacant, HouseNewBuilt; annotated directions: Wealth, Activity, Unemployment, Owners, Houses vacant, Large houses.]

Figure 14: Social risk plane ($F^2_1$, $F^2_2$)
[Cities plotted on axis 1 (38.88 %) and axis 2 (18.48 %); correlation circle on axes 1 and 2 with variables Criminality, CrimVar, RoadRisk, SchoolDelay1, SchoolDelay4, SchoolDelay7; annotated direction: School delay.]

Figure 15: Health risk plane ($F^3_1$, $F^3_2$)
[Cities plotted on axis 1 (27.52 %) and axis 2 (18.41 %); correlation circle on axes 1 and 2 with variables Floods, PollutedLand, IndustRisk, MortInfant, MortLungCancer, MortAlcohol, MortCorThromb, MortSuicide; annotated directions: Environmental risk, Alcohol & tobacco risks.]

Figure 16: Resource plane ($F^4_1$, $F^4_2$)
[Figure 16 panels: cities plotted on axis 1 (21.4 %) and axis 2 (22.02 %); correlation circle on axes 1 and 2 with variables SeaSide, Ski, Sun, Rain, Temperature, Walker, Museums, Cinema, Monuments, BookLoan, Restaurants, Press, Student, PrimClassSize; annotated directions: Warmer, Colder, Museums / Monuments / Restaurants.]

4.4. Conclusion: comparing PLS and SEER

Local nesting of components has allowed us to build models nested in an understandable way. This is imperative if one wants to produce multidimensional graphs of every variable group in relation to a model linking groups. Given a dependent group and a predictor group, we may then partition the latter thematically (SEER), or not (LN-PLS2). Compared to non-thematic LN-PLS2, the use of gradually refined thematic models has helped a good deal in outlining possibly important explanatory factors. SEER components are naturally easier to interpret, for three main reasons:
➢ Each component being local to a thematic subspace, it has conceptual unity.
➢ Components are constrained to be uncorrelated within each theme, but not between themes. Thus, they gain freedom to better adjust to the structures within themes.
➢ Thematic planes give a clearer view of thematic structures, which makes it possible to sub-partition themes according to noticeable substructures.

Appendix 1

Maximizing $C_5$ or $C_6$ does not lead to the same component. The situation we are dealing with is that pictured in fig. 1.

Figure 1: variable group $Y$ depending on an $X$-component $F$ and a group $Z$
[Path diagram: $X$ (variables $x^j$) with component $F$; $Z$ with component $G$; $Y$ (variables $y^k$).]

Let us consider the particular case where $M = (X'PX)^{-1}$ and see what becomes of the two maximizations.

● Max $C_5$:

$$\max_{F \in \langle X \rangle} C_5 \;\Leftrightarrow\; \max_{F \in \langle X \rangle} \mathrm{tr}\left( Y N Y' P \,\Pi_{F,Z} \right)$$

Using the idempotence of the projector, the cyclic invariance of the trace and $P\,\Pi_{F,Z} = \Pi_{F,Z}'\,P$:

$$\mathrm{tr}\left( YNY'P\,\Pi_{F,Z} \right) = \mathrm{tr}\left( YNY'P\,\Pi_{F,Z}^2 \right) = \mathrm{tr}\left( \Pi_{F,Z}\,YNY'P\,\Pi_{F,Z} \right) = \mathrm{tr}\left( \Pi_{F,Z}Y\,N\,(\Pi_{F,Z}Y)'P \right) = \mathrm{tr}\left( Y_{F,Z}\,N\,Y_{F,Z}'\,P \right) \quad (1)$$

where: $Y_{F,Z} = \Pi_{F,Z}\,Y$

So:

$$\mathrm{tr}\left( YNY'P\,\Pi_{F,Z} \right) = \mathrm{In}_{\langle F,Z \rangle}(Y,N,P) \quad (2)$$

Besides: $F \in \langle X \rangle \Leftrightarrow F = Xb$

And: $F = \Pi_Z F + \Pi_{Z^\perp} F$

So:

$$\langle F,Z \rangle = \langle \Pi_{Z^\perp}F,\, Z \rangle = \langle \Pi_{Z^\perp}Xb,\, Z \rangle = \langle \Pi_{Z^\perp}Xb \rangle \oplus \langle Z \rangle \quad (3)$$

From (2) and (3), and $\langle \Pi_{Z^\perp}Xb \rangle \perp \langle Z \rangle$, we draw:

$$\mathrm{tr}\left( YNY'P\,\Pi_{F,Z} \right) = \mathrm{In}_{\langle \Pi_{Z^\perp}Xb \rangle}(Y,N,P) + \mathrm{In}_{\langle Z \rangle}(Y,N,P)$$

Let: $X_Z = \Pi_{Z^\perp}X$

$\mathrm{In}_{\langle Z \rangle}(Y,N,P)$ being constant:

$$\max_{F \in \langle X \rangle} \mathrm{tr}\left( YNY'P\,\Pi_{F,Z} \right) \;\Leftrightarrow\; \max_{b \in \mathbb{R}^J} \mathrm{In}_{\langle X_Z b \rangle}(Y,N,P)$$

This latter program is none other than that of MRA (i.e. IVPCA) of $(Y,N,P)$ onto $\langle X_Z \rangle$. So, the solution $F$ is $Xb$, with $X_Z b$ being the first component of this MRA.

Figure 2
[Vector diagram showing $z$, $x_2$, $y_1 = x_1$ and $y_2 = y_3$.]

Let us now consider a very simple case, pictured in fig. 2, where $X = \{x_1, x_2\}$ with $x_1 \perp x_2$, and $Z = \{z\}$, with $z \perp X$. We then obviously have: $X_Z = \Pi_{Z^\perp}X = X$. So, $F$ is the first component of MRA of $(Y,N,P)$ onto $X$. Now, let $Y = \{y_1, y_2, y_3\}$ with $y_1 = x_1$ and $y_2 = y_3 = z + \varepsilon x_2$, where $\varepsilon \approx 0$, and $N = I$. MRA of $(Y,I,P)$ onto $X$ is PCA of $(\Pi_X Y, I, P)$. But $\Pi_X Y = \{x_1, \varepsilon x_2, \varepsilon x_2\}$. So this PCA leads to $F = x_1$.

● Max $C_6$:

$$\max_{F \in \langle X \rangle} C_6 \;\Leftrightarrow\; \max_{F \in \langle X \rangle} \lambda_1\left( YNY'P\,\Pi_{F,Z} \right)$$

where $\lambda_1(\Omega)$ denotes the largest eigenvalue of operator $\Omega$.

$$YNY'P\,\Pi_{F,Z}\,u = \lambda u \;\Rightarrow\; \Pi_{F,Z}\,YNY'P\,\Pi_{F,Z}\,u = \lambda\,\Pi_{F,Z}\,u \;\Leftrightarrow\; \Pi_{F,Z}\,YNY'P\,\Pi_{F,Z}\left( \Pi_{F,Z}\,u \right) = \lambda \left( \Pi_{F,Z}\,u \right)$$

So any eigenvalue of $YNY'P\,\Pi_{F,Z}$ is also one of $\Pi_{F,Z}\,YNY'P\,\Pi_{F,Z} = \Pi_{F,Z}\,YNY'\,\Pi_{F,Z}'\,P$. Since, according to (1), both operators have the same trace, we may state that they have identical eigenvalues. So, in particular:

$$\lambda_1\left( YNY'P\,\Pi_{F,Z} \right) = \lambda_1\left( \Pi_{F,Z}\,YNY'P\,\Pi_{F,Z} \right) = \lambda_1\left( Y_{F,Z}\,N\,Y_{F,Z}'\,P \right)$$

Besides:

$$\lambda_1\left( Y_{F,Z}\,N\,Y_{F,Z}'\,P \right) = \max_{v'Pv = 1} v'P\,Y_{F,Z}\,N\,Y_{F,Z}'\,P\,v = \mathrm{In}_{\langle v \rangle}(Y_{F,Z},N,P) \quad (4)$$

Note that $v$ is then the standardized first principal component of the PCA of $(Y_{F,Z},N,P)$, and so:

$$v \in \langle F,Z \rangle \quad (5)$$

From (4) and (5), we deduce:

$$\lambda_1\left( Y_{F,Z}\,N\,Y_{F,Z}'\,P \right) = \mathrm{In}_{\langle v \rangle}(Y_{F,Z},N,P) = \mathrm{In}_{\langle v \rangle}(Y,N,P)$$

provided that it has maximal value for $v \in \langle F,Z \rangle$.

So, we may write:

$$\max_{F \in \langle X \rangle} C_6 \;\Leftrightarrow\; \max_{F \in \langle X \rangle}\; \max_{v \in \langle F,Z \rangle} \mathrm{In}_{\langle v \rangle}(Y,N,P) \;\Leftrightarrow\; \max_{v \in \langle X,Z \rangle} \mathrm{In}_{\langle v \rangle}(Y,N,P) \quad (6)$$

(6) is none other than the program of the MRA of $(Y,N,P)$ onto subspace $\langle X,Z \rangle$. So, to get the $F$ maximizing $C_6$, one has to perform this MRA, get the rank 1 solution $v$, and then decompose $v$ onto $\langle X \rangle$ and $\langle Z \rangle$. The standardized $X$-component of this decomposition is the sought $F$.

Let us apply this to the case pictured in fig. 2: MRA of $(Y,I,P)$ onto subspace $\langle X,Z \rangle$ is PCA of $(\Pi_{X,Z}Y, I, P)$. But in this case: $\Pi_{X,Z}Y = Y$. And the PCA of $(Y,I,P)$ leads to first component $y_2 = y_3 = z + \varepsilon x_2$, whereby we get $F = x_2$.
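The counter-example of fig. 2 can be checked numerically. The sketch below is only an illustration under stated assumptions, not part of the original method: it assumes n = 4 observations with uniform weights P = I/4, picks three particular P-orthonormal vectors for x1, x2 and z, sets ε = 0.1, and scans F = cos θ · x1 + sin θ · x2 over a grid of angles. The helper names `projector` and `criteria` are hypothetical. For each F it evaluates the trace and the largest eigenvalue of Y N Y' P Π_{F,Z}, i.e. the two quantities to which Appendix 1 reduces the maximization of C5 and C6.

```python
import numpy as np

def projector(A, P):
    """P-orthogonal projector onto the column space of A: A (A'PA)^-1 A'P."""
    return A @ np.linalg.solve(A.T @ P @ A, A.T @ P)

def criteria(F, Z, Y, N, P):
    """Return (trace, largest eigenvalue) of the operator Y N Y' P Pi_{F,Z}."""
    Pi = projector(np.column_stack([F, Z]), P)
    op = Y @ N @ Y.T @ P @ Pi
    # op is not symmetric in general, but its eigenvalues are real;
    # .real only discards numerical noise in the imaginary parts.
    return np.trace(op), np.linalg.eigvals(op).real.max()

# Assumed toy data: uniform weights and three P-orthonormal, centred vectors.
n = 4
P = np.eye(n) / n
x1 = np.array([1.0, 1.0, -1.0, -1.0])
x2 = np.array([1.0, -1.0, 1.0, -1.0])
z = np.array([1.0, -1.0, -1.0, 1.0])

eps = 0.1                                   # assumed small value of epsilon
Y = np.column_stack([x1, z + eps * x2, z + eps * x2])   # y1 = x1, y2 = y3
N = np.eye(3)

# Scan unit-norm candidates F in <x1, x2>, from F = x1 to F = x2.
thetas = np.linspace(0.0, np.pi / 2, 91)
vals = np.array([criteria(np.cos(t) * x1 + np.sin(t) * x2, z, Y, N, P)
                 for t in thetas])

best_trace = thetas[vals[:, 0].argmax()]    # angle maximizing the trace
best_eig = thetas[vals[:, 1].argmax()]      # angle maximizing lambda_1

print("trace criterion maximal at theta (deg):", np.degrees(best_trace))
print("eigenvalue criterion maximal at theta (deg):", np.degrees(best_eig))
```

Under these assumptions the trace is largest at θ = 0 (F = x1), whereas the largest eigenvalue peaks at θ = π/2 (F = x2), which agrees with the two conclusions drawn above. A grid scan is used here instead of the MRA itself simply to keep the check independent of the derivation.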
Appendix 2: Thematic partitioning of predictors

Figure 1: Thematic hierarchy of predictor partitions for the city data
[Tree diagram. Dependent group: Demographic dynamics. Predictor levels, from root to leaves: All; Economy, Risks, Resources; Work, Wealth, Cost of Living, Housing, Crime / Road, Educational, Health, Environmental, Cultural, Natural; single variables (Unemployt, ..., IncomeTax, ..., SchoolDelay1, Floods, PollutedLand, ..., Monuments, ...).]

Figure 2: Some thematic models of the city data
[Path diagrams, each with predictor groups pointing to the dependent group (labelled Demographical dynamics or Demographic dynamics):
a: No thematic distinction between predictors (Economy, Nature, Culture, Health, Education ...).
b: Partition of predictors into 3 thematic groups (Economy, Risks, Resources).
c: Partition of predictors into 4 thematic groups (Economy, Social Risks, Physical Risks, Resources).
d: Partition of predictors into 6 thematic groups (labels shown: Work, Wealth, Social Risks, Natural Resources, Physical Risks, Cultural Resources, Cost of living, Housing, ...).
e: Partition of predictors into degenerate thematic groups, i.e. single variables (Unemployt, IncomeTax, Floods, ...).]

References

Bry X., Verron T. (2008): Modélisation factorielle des interactions entre deux ensembles d'observations: la méthode FILM (Factor Interaction Linear Modelling), to appear in Journal de la SFdS/RSA (Paris).

Bry X. (2006): Extension de l'Analyse en Composantes Thématiques univariée au modèle linéaire généralisé, RSA vol. 54, n°3.

Bry X. (2004a): Une méthodologie exploratoire pour l'analyse et la synthèse d'un modèle explicatif, PhD Thesis, Université de Paris IX Dauphine.

Bry X. (2004b): Estimation empirique d'un modèle à variables latentes comportant des interactions, RSA vol. 52, n°3.

Bry X. (2003): Une méthode d'estimation empirique d'un modèle à variables latentes : l'Analyse en Composantes Thématiques, RSA vol. 51, n°2, pp. 5-45.

Cazes P. (1997): Adaptation de la régression PLS au cas de la régression après Analyse des Correspondances Multiples, RSA vol. 45, n°2, pp. 89-99.

Derquenne Ch., Hallais C. (2003): Une méthode alternative à l'approche PLS : comparaison et application aux modèles conceptuels marketing, Revue de Statistique Appliquée, LII, pp. 37-72.

Durand J.-F. (2001): Local polynomial additive regression through PLS and splines: PLSS, Chemometrics and Intelligent Laboratory Systems 58, pp. 235-246.

Jöreskog K. G., Wold H. (1982): The ML and PLS techniques for modelling with latent variables: historical and competitive aspect, in Jöreskog K. G., Wold H. (Editors), Systems under indirect observation, Part 1, pp. 263-270, North-Holland, Amsterdam.

Lohmöller J.-B. (1989): Latent Variables Path Modelling with Partial Least Squares, Physica-Verlag, Heidelberg.

Stan V., Saporta G. (2005): Conjoint use of variables clustering and PLS structural equations modelling, PLS'05, 4th International Symposium on PLS and Related Methods, Barcelona.

Tenenhaus M. (1998): La régression PLS, théorie et pratique, Technip.

Tenenhaus M. et al. (2005): PLS path modelling, Computational Statistics & Data Analysis, vol. 48, pp. 159-205.

Vivien M. (2002): Approches PLS linéaires et non linéaires pour la modélisation de multi-tableaux : théorie et applications, Thèse de doctorat, Université Montpellier I.

Vivien M., Sabatier R. (2001): Une extension multi-tableaux de la régression PLS, Revue de Statistique Appliquée, vol. 49, n°1, pp. 31-54.

Wold H. (1985): Partial Least Squares, Encyclopedia of Statistical Sciences, John Wiley & Sons, pp. 581-591.
