Design and Analysis of LDGM-Based Codes for MSE Quantization

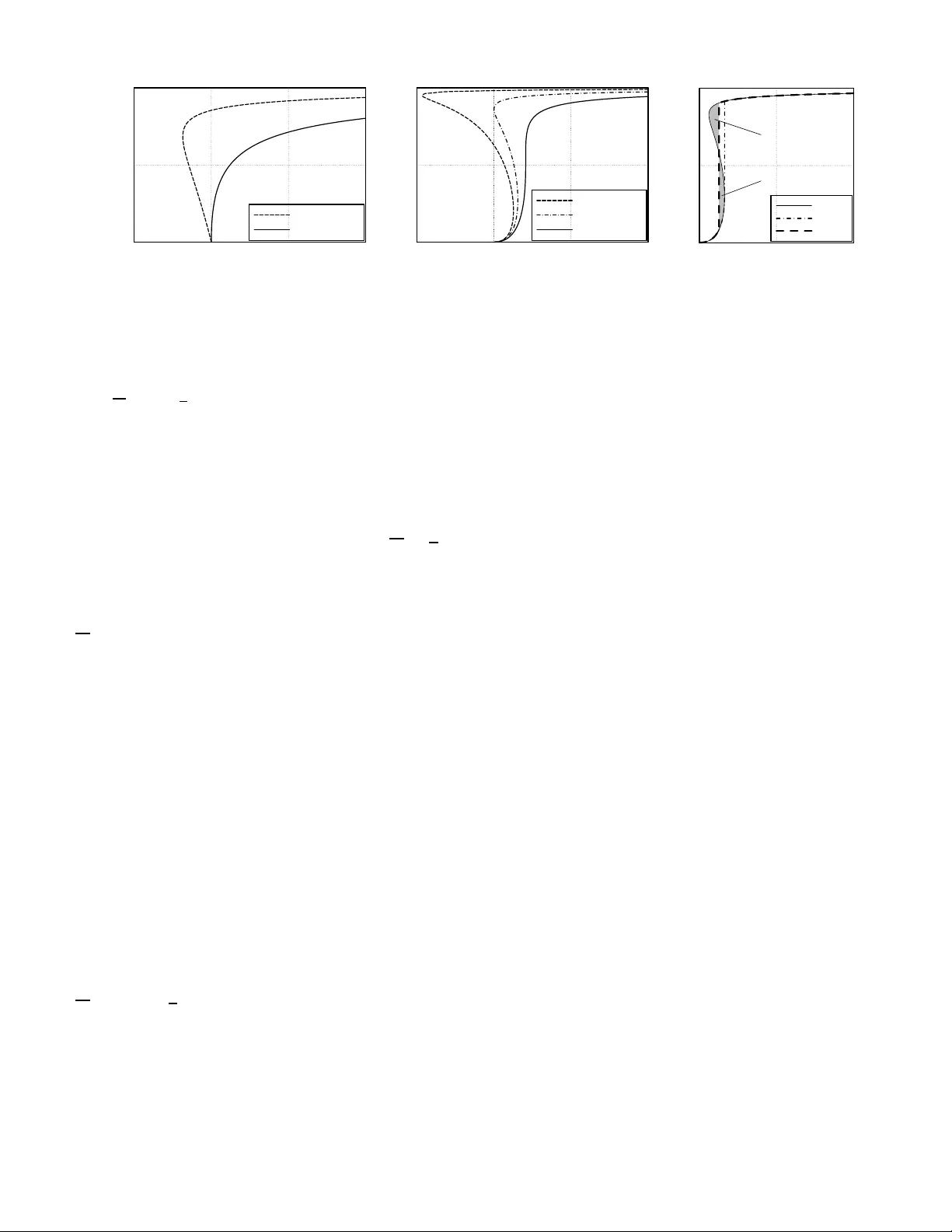

Approaching the 1.5329-dB shaping (granular) gain limit in mean-squared error (MSE) quantization of R^n is important in a number of problems, notably dirty-paper coding. For this purpose, we start with a binary low-density generator-matrix (LDGM) cod…
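
As a brief aside on where the 1.5329-dB figure comes from: it is the standard ratio between the normalized second moment of a hypercube, 1/12, and the limiting value for an n-dimensional sphere as n grows, 1/(2πe). The minimal sketch below just evaluates that ratio in decibels; the function and variable names are illustrative assumptions and are not taken from the paper.

```python
import math


def ultimate_shaping_gain_db() -> float:
    """Return the asymptotic shaping (granular) gain limit in dB.

    Assumes the textbook normalized second moments:
    hypercube G = 1/12, and sphere G -> 1/(2*pi*e) as n -> infinity.
    """
    g_cube = 1.0 / 12.0                               # scalar (cubic) quantizer
    g_sphere_limit = 1.0 / (2.0 * math.pi * math.e)   # limit for large n
    return 10.0 * math.log10(g_cube / g_sphere_limit)


print(f"{ultimate_shaping_gain_db():.4f} dB")  # prints "1.5329 dB"
```

The ratio simplifies to πe/6, so any practical quantizer's granular gain over the cubic lattice is bounded by this value.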

Authors: Qingchuan Wang, Chen He