Blind decoding of Linear Gaussian channels with ISI, capacity, error exponent, universality

A new, straightforward, universal blind detection algorithm for the linear Gaussian channel with ISI is given. A new error exponent is derived, which is better than Gallager's random coding error exponent.

Authors: Lorant Farkas

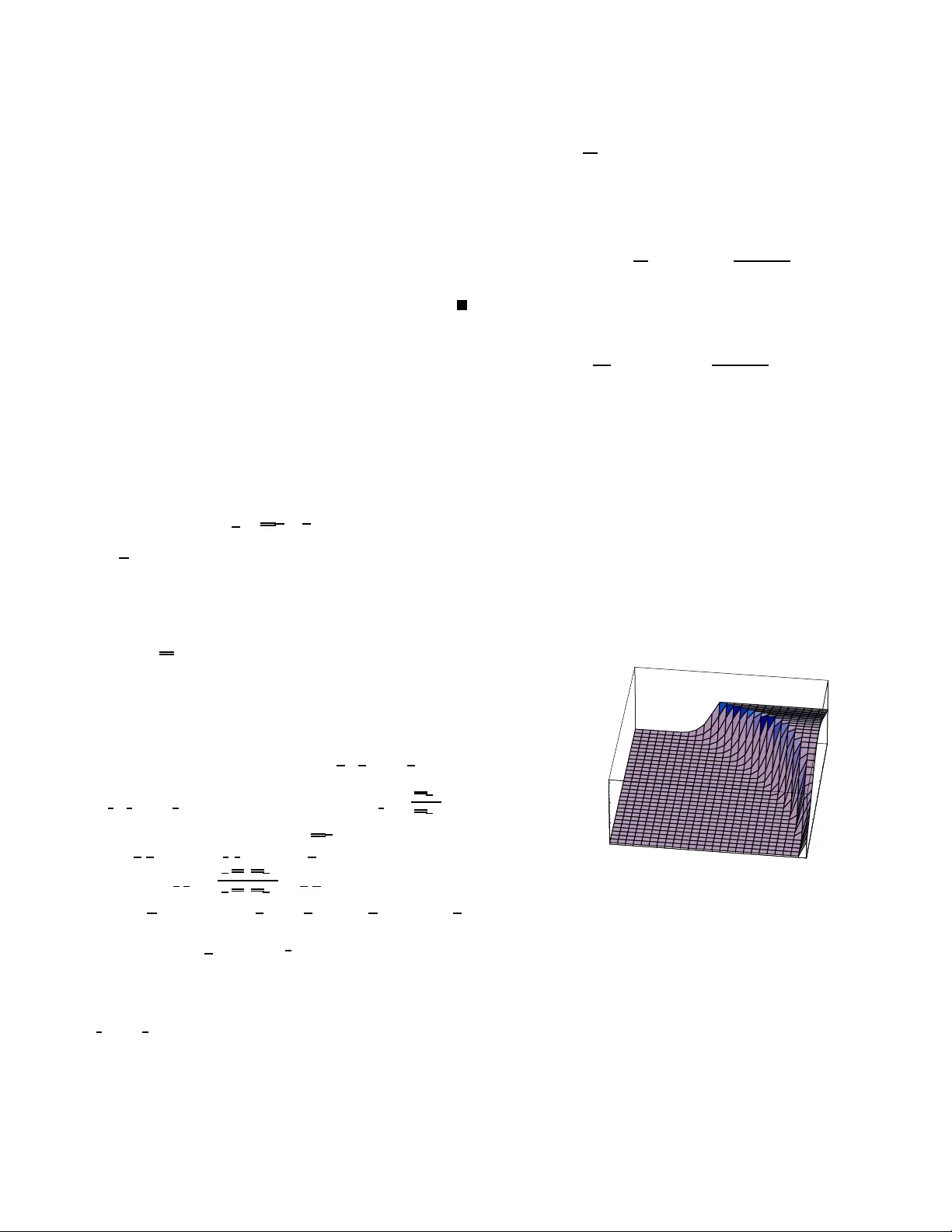

I. INTRODUCTION

In this paper, the discrete Gaussian channel with intersymbol interference (ISI)

$$y_i = \sum_{j=0}^{l} x_{i-j} h_j + z_i \tag{1}$$

will be considered, where the vector $h = (h_0, h_1, \dots, h_l)$ represents the ISI and $\{z_i\}$ is white Gaussian noise with variance $\sigma^2$.

A similar continuous-time model has been studied by Gallager [6]. He showed that it can be reduced to the form

$$y_n = v_n x_n + w_n \tag{2}$$

where the $v_n$ are eigenvalues of the correlation operator. The same is true for the discrete model (1), but the reduction requires knowledge of the covariance matrix $R(i,j) = \sum_{k=0}^{l} h(k-i)\,h(k-j)$, whose eigenvectors should be used as new basis vectors. Here, however, such knowledge will not be assumed; our goal is to study universal coding for the class of ISI channels of the form (1).

As a motivation, note that the alternative method of first identifying the channel by transmitting a known "training sequence" has some drawbacks. Because the length of the training sequence is limited, the estimate of the channel can be imprecise, and the data sequence is then decoded according to an incorrect likelihood function. This results in an increase in error rates [2], [3] and a decrease in capacity [4]. Since the training sequence carries no information, the longer it is, the fewer information bits can be carried.
One might think that this problem could be solved by choosing the training sequence long enough to ensure precise channel estimation and then choosing the data block sufficiently long, but this solution seldom works because of the delay constraint and the slow variation of the channel in time.

We therefore give a straightforward method of coding and decoding without any channel side information (CSI). To achieve this, we generalise the result of Csiszár and Körner [5] to the Gaussian channel with white noise and ISI, using an analogue of the Maximal Mutual Information (MMI) decoder in [5]. Thanks are due to Imre Csiszár for his numerous corrections to this work.

We will show that the new method is not only universal, that is, independent of the channel, but that its error exponent is in many cases better than Gallager's [6] lower bound for the case when complete CSI is available to the receiver. Previously, Gallager's error exponent has been improved for some channels using an MMI decoder, for example for discrete memoryless multiple-access channels [7].

We do not use generalised maximum likelihood decoding [9], but a generalised version of MMI decoding. This is done by first estimating the channel parameters by maximum likelihood, and then adopting the message whose mutual information with the estimated parameters is maximal. By using an extension of the powerful method of types, we can simply derive the capacity region and the random coding error exponent. In the end we obtain a more general result: we show how the method of types can be extended to a continuous, non-memoryless environment.

The structure of this correspondence is as follows. In Section II we generalise typical sequences to the ISI channel environment. The main goal of Section III is to give a new method of blind detection.
In Section IV we show by numerical results that, for some parameters, the new error exponent is better than Gallager's random coding error exponent. In Section V we discuss the result and give a general formula for channels with fading.

II. DEFINITIONS

Let $\gamma_n$ be a sequence of positive numbers with limit 0. The sequence $x \in \mathbb{R}^n$ is $\gamma_n$-typical to an $n$-dimensional continuous distribution $P$, denoted by $x \in \mathcal{T}_P$, if

$$\frac{\left| -\log(p(x)) - H(P) \right|}{n} < \gamma_n \tag{3}$$

where $p(x)$ denotes the density function and $H(P)$ the differential entropy of $P$. Similarly, sequences $x \in \mathbb{R}^n$, $y \in \mathbb{R}^{n+l}$ are jointly $\gamma_n$-typical to the $(2n+l)$-dimensional joint distribution $P_{X,Y}$, denoted by $(x, y) \in \mathcal{T}_{P_{X,Y}}$, if

$$\left| -\log(p_{X,Y}(y, x)) - H(P_{X,Y}) \right| < n\gamma_n$$

In the same way, a sequence $y$ is $\gamma_n$-typical to the conditional distribution $P_{Y|X}$, given that $X = x$, denoted by $y \in \mathcal{T}_{P_{Y|X}}(x)$, if

$$\left| -\log(p_{Y|X}(y|x)) - H(Y|X) \right| < n\gamma_n$$

To simplify the proofs, in the following $P_X = P$ is always the $n$-dimensional i.i.d. standard normal distribution, the optimal input distribution of a Gaussian channel with power constraint 1. The conditional distribution will be chosen as $P^{(n,\sigma)}_{Y|X}$ with density

$$\frac{1}{\sigma^n (2\pi)^{(n+l_n)/2}} \exp\left( -\frac{|y - h * x|^2}{2\sigma^2} \right)$$

where $h = (h_0, h_1, h_2, \dots, h_l)$ and $(h * x)_i = \sum_{j=0}^{l} x_{i-j} h_j$, where $x_k = 0$ is understood for $k < 0$. So in this case

$$H_{h,\sigma}(Y|X) = H(h * X + Z \mid X) = H(Z) = n\left[ \frac{\ln(2\pi e)}{2} + \ln(\sigma) \right]$$

The limit of the entropy of $Y = X * h + Z$ as $n \to \infty$ is

$$\lim_{n\to\infty} \frac{H_{h,\sigma}(Y)}{n} = \frac{1}{2}\ln(2\pi e) + \frac{1}{2\pi}\int_0^{2\pi} \ln(\sigma + f(\xi))\, d\xi$$

where $f(\lambda) = \sum_{k=-\infty}^{\infty} \left( \sum_{j=0}^{l-|k|} h_j h_{j+|k|} \right) e^{ik\lambda}$, see [1] (here $R_{m,n} = r(m-n) = r(k) = \sum_{j=0}^{l-|k|} h_j h_{j+|k|}$ is the correlation matrix).
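The correlation sequence $r(k)$ and the function $f$ defined above can be evaluated directly for a short ISI vector. The following is a minimal sketch, not part of the original paper: the function names, the two-tap vector and the midpoint integration rule are illustrative assumptions. It also checks the standard identity $f(\xi) = |\sum_j h_j e^{ij\xi}|^2$, which follows from the definition of $f$ and shows that $f$ is non-negative.

```python
import cmath
import math


def correlation(h, k):
    """r(k) = sum_{j=0}^{l-|k|} h_j * h_{j+|k|}; the sum is empty (zero) when |k| > l."""
    k = abs(k)
    return sum(h[j] * h[j + k] for j in range(len(h) - k))


def spectrum(h, xi):
    """f(xi) = sum_k r(k) e^{i k xi}, written with cosines because r(k) = r(-k)."""
    return correlation(h, 0) + 2.0 * sum(
        correlation(h, k) * math.cos(k * xi) for k in range(1, len(h))
    )


def integral_term(h, sigma, steps=4096):
    """(1/2pi) * integral over [0, 2pi] of ln(sigma + f(xi)), exactly as printed
    in the entropy-rate formula of the text; midpoint rule (an assumption)."""
    total = 0.0
    for m in range(steps):
        xi = 2.0 * math.pi * (m + 0.5) / steps
        total += math.log(sigma + spectrum(h, xi))
    return total / steps


h = [1.0, 0.5]  # hypothetical two-tap ISI vector, for illustration only
xi = 0.7
transfer = sum(hj * cmath.exp(1j * j * xi) for j, hj in enumerate(h))
print(spectrum(h, xi), abs(transfer) ** 2)  # the two values agree up to rounding
print(integral_term(h, 1.0))
```

For a single tap $h = (1)$ the function $f$ is constant, so the integral term reduces to $\ln(\sigma + 1)$, which gives a quick sanity check of the numerical integration.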
So the limit of the average mutual information per symbol, that is

$$\lim_{n\to\infty} I_n(h,\sigma) = \lim_{n\to\infty} \frac{H_{h,\sigma}(Y) - H_{h,\sigma}(Y|X)}{n}$$

is equal to

$$I(h,\sigma) \triangleq \frac{1}{2\pi}\int_0^{2\pi} \ln\left( 1 + \frac{f(\xi)}{\sigma} \right) d\xi$$

moreover, the sequence $I_n(h,\sigma)$ is non-increasing (see [1]).

We will consider a finite set of channels that grows subexponentially with $n$ and is, in the limit, dense in the set of all ISI channels. To this end, define the set of approximating ISI as

$$\mathcal{H}_n = \{ h \in \mathbb{R}^{l_n} : h_i = k_i\gamma_n,\ |h_i| < P_n,\ k_i \in \mathbb{Z},\ \forall i \in \{1, 2, \dots, l_n\} \}$$

where $l_n$ is the length of the ISI, $P_n$ is the power constraint per symbol, and $\gamma_n$ is the "coarse graining", intuitively the precision of detection. Similarly, we define the set of approximating variances as

$$\mathcal{V}_n = \{ \sigma \in \mathbb{R} : \sigma = k\gamma_n,\ 1/2 < |\sigma| < P_n,\ k \in \mathbb{Z}^+ \}$$

These two sets form the approximating set of parameters, denoted by $S_n = \mathcal{H}_n \times \mathcal{V}_n$. Below we set

$$l_n = [\log_2(n)], \qquad P_n = n^{1/16}, \qquad \gamma_n = n^{-1/4}$$

Definition 1. The ISI type of a pair $(x, y) \in \mathbb{R}^n \times \mathbb{R}^{n+l_n}$ is the pair $(h_n, \sigma_n) \in S_n$, written $(h(i), \sigma(i))$ when $x = x_i$ is the $i$-th codeword, defined by

$$h(i) = \operatorname*{argmin}_{h \in \mathcal{H}_n} \| y - h * x_i \|$$

$$\sigma(i) = \operatorname*{argmin}_{\sigma \in \mathcal{V}_n} \left| \sigma^2 - \frac{\min_{h \in \mathcal{H}_n} \| y - h * x_i \|^2}{n} \right|$$

Note that this type concept does not apply to separate input or output sequences, only to pairs $(x, y)$.

III. LEMMAS, THEOREM

We summarise the results of this section. The first lemma shows that the above definition of ISI type is consistent, in the sense that $y$ is conditionally $P^{h,\sigma}_{Y|X}$-typical given $x$, at least when $\|y - h * x\|^2$ is not too large. Lemma 2 gives the properties which we need for our method, and proves that almost all randomly generated sequences have these properties.
Lemma 4 gives an upper bound on the set of output signals that are "problematic", that is, typical to two codewords: they can result from two different codewords sent over different channels. Lemma 5 shows that if the channel parameters are estimated via maximum likelihood (ML), the codewords and the noise cannot be strongly correlated. Lemma 6 gives a formula for the probability of the event that an output sequence is typical with another codeword with respect to another channel. All lemmas are used in Theorem 1, which gives the main result and defines the detection method precisely.

Lemma 1. When

$$\left| \frac{\| y - h(i) * x_i \|^2}{n} - \sigma(i)^2 \right| < \gamma_n$$

so that the detected variance is in the interior of the set of approximating variances, then $y \in \mathcal{T}_{P^{h(i),\sigma(i)}_{Y|X}}(x_i)$.

Proof: Indeed, if $\left| \frac{\| y - h(i) * x_i \|^2}{n} - \sigma(i)^2 \right| < \gamma_n$ then

$$\left| \frac{\| y - h(i) * x_i \|^2}{2\sigma(i)^2} - \frac{n}{2} \right| < n\gamma_n .$$

With

$$-\log\left( P^{h(i),\sigma(i)}_{Y|X}(y|x_i) \right) = \frac{n}{2}\log(2\pi\sigma(i)^2) + \frac{\| y - h(i) * x_i \|^2}{2\sigma(i)^2}$$

we get

$$\left| -\log\left( P^{h(i),\sigma(i)}_{Y|X}(y|x_i) \right) - H_{P^{h(i),\sigma(i)}_{Y|X}}(Y|X) \right| = \left| \frac{\| y - h(i) * x_i \|^2}{2\sigma(i)^2} - \frac{n}{2} \right|$$

and by the definition $y \in \mathcal{T}_{P^{h(i),\sigma(i)}_{Y|X}}(x_i)$ if

$$\left| -\log\left( P^{h(i),\sigma(i)}_{Y|X}(y|x_i) \right) - H_{P^{h(i),\sigma(i)}_{Y|X}}(Y|X) \right| < n\gamma_n .$$

Lemma 2. For arbitrarily small $\delta > 0$, if $n$ is large enough, there exists a set $\mathcal{A} \subset \mathcal{T}^n_P$ with $P^n(\mathcal{A}) > 1 - \delta$, where $P$ is the $n$-dimensional standard normal distribution, such that for all $x \in \mathcal{A}$ and $k, l \in \{0, 1, \dots, l_n\}$, $k \neq l$,

$$\left| \frac{\sum_{j=0}^{n} x_{j-k} x_{j-l}}{n} \right| < \gamma_n \tag{4}$$

$$\left| \frac{\sum_{j=0}^{n} x_{j-k} x_{j-k}}{n} - 1 \right| < \gamma_n \tag{5}$$

$$\left| -\frac{1}{n}\ln p(x) - \frac{1}{n} H(P) \right| < \gamma_n \tag{6}$$

Proof: Take $n$ i.i.d. standard Gaussian random variables $X_1, X_2, \dots, X_n$. Fix $k, l$ with $1 < k, l < n$.
By Chebyshev's inequality,

$$\Pr\left( \left| \frac{\sum_{i=1}^{n} X_{i-k} X_{i-l}}{n} \right| > \xi\sqrt{\frac{1}{n}} \right) < \frac{1}{\xi^2}$$

From this, with $\xi = \gamma_n n^{1/2}$,

$$\Pr\left( \left| \frac{\sum_{i=1}^{n} X_{i-k} X_{i-l}}{n} \right| > \gamma_n \right) < \frac{1}{(\gamma_n n^{1/2})^2} = \delta_n$$

which means that there exists a set in $\mathbb{R}^n$ whose $P^n$-measure is at least $1 - \delta_n$ and such that for all sequences from this set

$$\left| \frac{\sum_{j=0}^{n} x_{j-k} x_{j-l}}{n} \right| < \gamma_n .$$

Similarly, there exist such sets for all $k \neq l$ in $\{0, 1, \dots, l_n\} \times \{0, 1, \dots, l_n\}$. By a completely analogous procedure we can construct sets which satisfy (5) and (6). The intersection of these sets has $P$-measure at least $1 - 2\delta_n(l_n^2 + 1)$. As $\delta_n l_n^2 \to 0$, this proves the Lemma.

The Lebesgue measure will be denoted by $\lambda$; its dimension is not specified, but it will always be clear from the context.

Lemma 3. If $n$ is large enough, then the set $\mathcal{A}$ in Lemma 2 satisfies

$$2^{H(P) - 2n\gamma_n} < \lambda(\mathcal{A}) < 2^{H(P) + n\gamma_n}$$

and for any $m$-dimensional continuous distribution $Q(\cdot)$

$$\lambda(\mathcal{T}_Q) < 2^{H(Q) + n\gamma_n}$$

where $\mathcal{T}_Q$ is the set of typical sequences to $Q$, see (3).

Proof: Since

$$1 > P(\mathcal{A}) = \int_{\mathcal{A}} p(x)\,\lambda(dx) > 1 - \delta$$

by the previous Lemma, by using

$$2^{-(H(P_X) - n\gamma_n)} > p(x) > 2^{-(H(P_X) + n\gamma_n)}$$

on $\mathcal{T}_{P_X}$, and $\mathcal{A} \subset \mathcal{T}_{P_X}$, we get

$$2^{H(P) + n\gamma_n} > \lambda(\mathcal{A}) > (1 - \delta)\,2^{H(P) - n\gamma_n} > 2^{H(P) - 2n\gamma_n}$$

if $n$ is large enough. Similarly, from

$$1 > Q(\mathcal{T}_Q) = \int_{\mathcal{T}_Q} q(x)\,\lambda(dx)$$

and the lower bound $q(x) > 2^{-(H(Q) + n\gamma_n)}$ on $\mathcal{T}_Q$ we get

$$2^{H(Q) + n\gamma_n} > \lambda(\mathcal{T}_Q) .$$

The next lemma is an analogue of the Packing Lemma in [5].

Lemma 4. For all $R > 0$, $\delta > 0$, there exist at least $2^{n(R-\delta)}$ different sequences in $\mathbb{R}^n$ which are elements of the set $\mathcal{A}$ from Lemma 2, such that for each pair of ISI channels with $h, \hat{h} \in \mathcal{H}_n$, $\sigma, \hat{\sigma} \in \mathcal{V}_n$, and for all $i \in \{1, 2, \dots, M\}$

$$\lambda\left( \mathcal{T}_{P^{(h,\sigma)}_{Y|X}}(x_i) \cap \bigcup_{j \neq i} \mathcal{T}_{P^{(\hat{h},\hat{\sigma})}_{Y|X}}(x_j) \right) \leq 2^{-\left[ n\left( |I(\hat{h},\hat{\sigma}) - R|^{+} \right) - H_{h,\sigma}(Y|X) \right]} \tag{7}$$

provided that $n \geq n_0(n, m, \delta)$.

Proof: We shall use the method of random selection.
For fixed constants $n, m$, let $\mathcal{C}_m$ be the family of all ordered collections $C = \{x_1, x_2, \dots, x_m\}$ of $m$ not necessarily different sequences in $\mathcal{A}$. Notice that if some $C = \{x_1, x_2, \dots, x_m\} \in \mathcal{C}_m$ satisfies (7) for every $i$ and every pair of Gaussian channels $(h,\sigma)$, $(\hat{h},\hat{\sigma})$, then the $x_i$ are necessarily distinct. For any collection $C \in \mathcal{C}_m$, denote the left-hand side of (7) by $u_i(C, h, \hat{h})$. Since for $x \in \mathcal{T}_P$

$$\lambda\{ \mathcal{T}_{P^{h,\sigma}_{Y|X}}(x) \} \leq 2^{n(H_{h,\sigma}(Y|X) - \gamma_n)}$$

from Lemma 3, a $C \in \mathcal{C}_m$ satisfies (7) if, for all $i, h, \hat{h}$,

$$u_i(C) \triangleq \sum_{h, \hat{h} \in \mathcal{H}} u_i(C, h, \hat{h}) \cdot 2^{n[I(\hat{h},\hat{\sigma}) - R] - H_{h,\sigma}(Y|X)}$$

is at most 1, for every $i$. Notice that if $C \in \mathcal{C}_m$ satisfies

$$\frac{1}{m}\sum_{i=1}^{m} u_i(C) \leq 1/2 \tag{8}$$

then $u_i(C) \leq 1$ for at least $m/2$ indices $i$. Further, if $C'$ is the subcollection consisting of the sequences with these indices, then $u_i(C') \leq u_i(C) \leq 1$ for every such index $i$. Hence the Lemma will be proved if, for an $m$ with

$$2 \cdot 2^{n(R-\delta)} \leq m \leq 2^{n(R - \frac{\delta}{2})} \tag{9}$$

we find a $C \in \mathcal{C}_m$ which satisfies (8).

Choose $C \in \mathcal{C}_m$ at random, according to the uniform distribution on $\mathcal{A}$. In other words, let $W^m = (W_1, W_2, \dots, W_m)$ be independent random variables, each uniformly distributed over $\mathcal{A}$. In order to prove that (8) is true for some $C \in \mathcal{C}_m$, it suffices to show that

$$E\,u_i(W^m) \leq \frac{1}{2}, \qquad i = 1, 2, \dots, m \tag{10}$$

To this end, we bound $E\,u_i(W^m, h, \hat{h})$.
Recalling that $u_i(C, h, \hat{h})$ denotes the left-hand side of (7), we have

$$E\,u_i(W^m, h, \hat{h}) = \tag{11}$$

$$\int_{y \in \mathbb{R}} \Pr\left\{ y \in \mathcal{T}_{P^{h,\sigma}_{Y|X}}(W_i) \cap \bigcup_{j \neq i} \mathcal{T}_{P^{\hat{h},\hat{\sigma}}_{Y|X}}(W_j) \right\} dy \tag{12}$$

As the $W_j$ are independent and identically distributed, the probability under the integral is bounded above by

$$\sum_{j : j \neq i} \Pr\left\{ y \in \mathcal{T}_{P^{h,\sigma}_{Y|X}}(W_i) \cap \mathcal{T}_{P^{\hat{h},\hat{\sigma}}_{Y|X}}(W_j) \right\} = \tag{13}$$

$$(m - 1) \cdot \Pr\left\{ y \in \mathcal{T}_{P^{h,\sigma}_{Y|X}}(W_i) \right\} \cdot \Pr\left\{ y \in \mathcal{T}_{P^{\hat{h},\hat{\sigma}}_{Y|X}}(W_j) \right\} \tag{14}$$

As the $W_j$ are uniformly distributed over $\mathcal{A}$, we have for all fixed $y \in \mathbb{R}^n$

$$\Pr\left\{ y \in \mathcal{T}_{P^{h,\sigma}_{Y|X}}(W_i) \right\} = \frac{\lambda\{ x : x \in \mathcal{T}_{P_X},\ y \in \mathcal{T}_{P^{h,\sigma}_{Y|X}}(x) \}}{\lambda\{\mathcal{A}\}}$$

The set in the numerator is non-void only if $y \in \mathcal{T}_{P^{h,\sigma}_{Y}}$. In this case it can be written as $\mathcal{T}_{\bar{P}_{X|Y}}(y)$, where $\bar{P}$ is the conditional distribution for which

$$P_X(a)\,P^{h,\sigma}_{Y|X}(b|a) = P^{h,\sigma}_{Y}(b)\,\bar{P}_{X|Y}(a|b)$$

Thus, by Lemma 3 and Lemma 2,

$$\Pr\left\{ y \in \mathcal{T}_{P^{h,\sigma}_{Y|X}}(W_i) \right\} \leq \frac{2^{H_{h,\sigma}(X|Y) + n\gamma_n}}{2^{H(X) - 2n\gamma_n}} = 2^{-n(I(h,\sigma) - 3\gamma_n)}$$

if $y \in \mathcal{T}_{P^{h,\sigma}_{Y}}$, and $\Pr\{ y \in \mathcal{T}_{P^{h,\sigma}_{Y|X}}(W_i) \} = 0$ otherwise. So, if we upper bound $\lambda(\mathcal{T}_{P^{h,\sigma}_{Y}})$ by $2^{H_{h,\sigma}(Y) + n\gamma_n}$ with the use of Lemma 3, from (14), (12) and (9) we get

$$E\,u_i(W^m, h, \hat{h}) \leq \lambda(\mathcal{T}_{P^{h,\sigma}_{Y}})\,(m - 1)\,2^{-n[I(h,\sigma) + I(\hat{h},\hat{\sigma}) - 6\gamma_n]} \leq 2^{-n[I(\hat{h},\hat{\sigma}) - R + \delta - 7\gamma_n] + H_{h,\sigma}(Y|X)}$$

Let $n$ be so large that $7\gamma_n < \delta/2$; then we get

$$E\,u_i(W^m) \leq |\mathcal{H}_n|^2\,|\mathcal{V}_n|^2\,2^{-n(\delta/2)}$$

which proves (10).

Lemma 5. For $x \in \mathcal{A}$ from Lemma 2, $y$ as in (1), $\tilde{h} = \operatorname*{argmin}_{h \in \mathcal{H}^{(n)}} \| y - h * x \|$, and $z = y - \tilde{h} * x$,

$$\frac{\sum_{j=1}^{n} z_j x_{j-k}}{n} < \gamma_n, \qquad k \in \{0, \dots, l_n\}$$

Proof: (Indirect) Suppose that

$$\frac{\sum_{j=1}^{n} z_j x_{j-k}}{n} = \lambda_k > \gamma_n$$

for some $k \in \{0, \dots, l_n\}$. Then let

$$\hat{h}_j = \begin{cases} \tilde{h}_j & \text{if } j \neq k \\ \tilde{h}_j + \gamma_n & \text{if } j = k \end{cases}$$

We will show that $\| y - \hat{h} * x_i \| < \| y - \tilde{h} * x_i \|$, which contradicts the definition of $\tilde{h}$.
Now,

$$\| y - \hat{h} * x_i \|^2 = \sum_{j=1}^{n} \left( y_j - \sum_{g=0}^{l_n} \hat{h}_g x_{j-g} \right)^2 = \sum_{j=1}^{n} \left( y_j - \sum_{g=0}^{l_n} \tilde{h}_g x_{j-g} - \gamma_n x_{j-k} \right)^2$$

$$= \sum_{j=1}^{n} ( z_j - \gamma_n x_{j-k} )^2 = \sum_{j=1}^{n} \left( z_j^2 - 2\gamma_n z_j x_{j-k} + \gamma_n^2 x_{j-k}^2 \right)$$

On account of (4),

$$\leq \| z \|^2 - 2n\gamma_n\lambda_k + \gamma_n^2 (n + \gamma_n) \leq \| z \|^2 - (n - \gamma_n)\gamma_n^2 = \| y - \tilde{h} * x_i \|^2 - n\gamma_n^2 + \gamma_n^3 < \| y - \tilde{h} * x_i \|^2$$

Lemma 6. Let $\delta > 0$ and $x \in \mathcal{A}$ from Lemma 2. Let $(h, \sigma) \in \mathcal{H} \times \mathcal{V}$ and $(h_o, \sigma_o) \in \mathcal{H} \times \mathcal{V}$ be two arbitrary (ISI function, variance) pairs. Let $y$ and $x$ be such that

$$y \in \mathcal{T}^{h,\sigma}_{Y|X}(x) \tag{15}$$

$$h = \operatorname*{argmin}_{h \in \mathcal{H}^{(n)}} \| y - h * x \| \tag{16}$$

$$\sigma^2 = \| y - h * x \|^2 / n \tag{17}$$

Then

$$p^{h_o,\sigma_o}_{Y|X}(y|x) \leq 2^{-n[d((h,\sigma)\|(h_o,\sigma_o)) - \delta] - H_{h,\sigma}(Y|X)}$$

Here

$$d((h,\sigma)\|(h_o,\sigma_o)) = -\frac{1}{2}\log\left( \frac{\sigma^2}{\sigma_o^2} \right) - \frac{1}{2} + \frac{\sigma^2 + \| h - h_o \|^2}{2\sigma_o^2}$$

is an information divergence for Gaussian distributions, positive if $(h,\sigma) \neq (h_o,\sigma_o)$.

Proof:

$$P^{h_o,\sigma_o}_{Y|X}(y|x_i) = 2^{-n\left[ -\frac{1}{n}\log\left( \frac{P^{h_o,\sigma_o}_{Y|X}(y|x_i)}{P^{h,\sigma}_{Y|X}(y|x_i)} \right) \right] + \log\left( P^{h,\sigma}_{Y|X}(y|x_i) \right)} \tag{18}$$

and $y \in \mathcal{T}^{h,\sigma}_{Y|X}(x)$ by the definition, so

$$-\log\left( P^{h,\sigma}_{Y|X}(y|x_i) \right) \geq H_{h,\sigma}(Y|X) - \gamma_n > H_{h,\sigma}(Y|X) - \frac{\delta}{3} \tag{19}$$

if $n$ is large enough. With this:

$$\log\left( \frac{P^{h_o,\sigma_o}_{Y|X}(y|x_i)}{P^{h,\sigma}_{Y|X}(y|x)} \right) = \log\left( \frac{\frac{1}{(2\pi)^{n/2}\sigma_o^n}\exp\left( -\frac{\| y - h_o * x \|^2}{2\sigma_o^2} \right)}{\frac{1}{(2\pi)^{n/2}\sigma^n}\exp\left( -\frac{\| y - h * x \|^2}{2\sigma^2} \right)} \right)$$

$$= n\log\left( \frac{\sigma}{\sigma_o} \right) + \frac{\| y - h * x \|^2}{2\sigma^2} - \frac{\| y - h_o * x \|^2}{2\sigma_o^2} \leq \frac{n}{2}\log\left( \frac{\sigma^2}{\sigma_o^2} \right) + \frac{n + n\gamma_n}{2} - \frac{\| y - h_o * x \|^2}{2\sigma_o^2} \tag{20}$$

Introduce the notation $z = y - h * x$. Then

$$\| y - h_o * x \|^2 = \| z + h * x - h_o * x \|^2 = \sum_{j=1}^{n} \left( z_j + \sum_{k=0}^{l_n} (h_k - h^o_k) x_{j-k} \right)^2$$

$$= \sum_{j=1}^{n} \left( z_j^2 + 2 z_j \sum_{k=0}^{l_n} (h_k - h^o_k) x_{j-k} + \left( \sum_{k=0}^{l_n} (h_k - h^o_k) x_{j-k} \right)^2 \right)$$

$$= \| z \|^2 + 2\sum_{k=0}^{l_n} (h_k - h^o_k) \sum_{j=1}^{n} z_j x_{j-k} + \sum_{j=1}^{n} \left( \sum_{k=0}^{l_n} (h_k - h^o_k) x_{j-k} \right)^2$$

using Lemma 5, $\sum_{j=0}^{n} z_j x_{i,j-k} < n\gamma_n$,

$$= \| z \|^2 + 2\sum_{k=0}^{l_n} (h_k - h^o_k)\, n\gamma_n + \sum_{k=0}^{l_n} (h_k - h^o_k)^2 \sum_{j=0}^{n} x_{i,j-k}^2 + \sum_{j=0}^{n} \sum_{k \neq m}^{l_n} (h_m - h^o_m)(h_k - h^o_k)\, x_{i,j-k}\, x_{i,j-m}$$

$$\geq \| z \|^2 - 4 n l_n P_n \gamma_n + (n - n\gamma_n)\| h - h_o \|^2 - n (l_n P_n)^2 \gamma_n$$

With this we can bound (20):

$$-\frac{1}{n}\log\left( \frac{P^{h_o,\sigma_o}_{Y|X}(y|x_i)}{P^{h,\sigma}_{Y|X}(y|x_i)} \right) = -\frac{1}{2}\log\left( \frac{\sigma^2}{\sigma_o^2} \right) - \frac{1}{2} + \frac{\sigma^2 + \| h - h_o \|^2}{2\sigma_o^2} - \frac{\gamma_n}{2} - 4 l_n P_n \gamma_n - 4\gamma_n l_n P_n^2 - (l_n P_n)^2 \gamma_n \tag{21}$$

while $\| z \|^2 = n\sigma^2$. If $n$ is large enough, then

$$\max\left( 4 l_n P_n \gamma_n,\ 4\gamma_n l_n P_n^2,\ (l_n P_n)^2 \gamma_n \right) < \frac{\delta}{6}$$

since $\lim_{n\to\infty} P_n^2 l_n^2 \gamma_n = 0$. Using the definition of $d$ in the statement of the Lemma, we continue from (21):

$$= d((h,\sigma)\|(h_o,\sigma_o)) - \frac{\gamma_n}{2} - \left( 4 l_n P_n \gamma_n + 4\gamma_n l_n P_n^2 + (l_n P_n)^2 \gamma_n \right) \geq d((h,\sigma)\|(h_o,\sigma_o)) - \delta/2$$

Substituting this and (19) into (18) gives the desired result.
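The divergence $d((h,\sigma)\|(h_o,\sigma_o))$ of Lemma 6 is easy to evaluate numerically. The sketch below is illustrative only: the function name and the parameter values are assumptions, and the natural logarithm is assumed for $\log$. Writing $t = \sigma^2/\sigma_o^2$, the expression equals $\frac{1}{2}(t - 1 - \ln t) + \|h - h_o\|^2/(2\sigma_o^2)$, which makes its non-negativity visible.

```python
import math


def divergence(h, sigma, h_o, sigma_o):
    """d((h, sigma) || (h_o, sigma_o)) as stated in Lemma 6 (natural log assumed)."""
    ratio = sigma ** 2 / sigma_o ** 2
    dist2 = sum((a - b) ** 2 for a, b in zip(h, h_o))  # ||h - h_o||^2
    return -0.5 * math.log(ratio) - 0.5 + (sigma ** 2 + dist2) / (2.0 * sigma_o ** 2)


# Hypothetical parameter values, chosen only for illustration.
true_h, true_sigma = [1.0, 0.5], 1.0
print(divergence([1.0, 0.5], 1.0, true_h, true_sigma))  # matched parameters
print(divergence([0.8, 0.5], 1.2, true_h, true_sigma))  # mismatched parameters
```

With matched parameters the three terms cancel, and any mismatch in either the ISI vector or the variance gives a strictly positive value, in line with the remark after the definition of $d$.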
Now we can state and prove our main theorem.

Theorem 1. For arbitrarily given $R > 0$, $\varepsilon > 0$, and blocklength $n > n_0(R, \varepsilon)$, there exists a code $(f, \varphi)$ (coding/decoding function pair) with rate $\geq R - \varepsilon$ such that for all ISI channels with parameters $h_o \in \mathbb{R}^{l_n}$, $|h^o_i| < P_n$, $\sigma_o < P_n$, $\sigma_o \neq 0$, the average error probability satisfies

$$P_e(h_o, \sigma_o, f, \varphi) \leq 2^{-n(E_r(R, h_o, \sigma_o) - \varepsilon)}$$

Here

$$E_r(R, h_o, \sigma_o) \triangleq \tag{22}$$

$$\min_{h,\sigma}\left\{ d((h,\sigma)\|(h_o,\sigma_o)) + |I(h,\sigma) - R|^{+} \right\} \tag{23}$$

where $d((h,\sigma)\|(h_o,\sigma_o))$ is the information divergence defined in Lemma 6.

Remark 1. The expression minimised above is a continuous function of $h_o, \sigma_o, h, \sigma, R$.

Proof: Let $\delta = \varepsilon/3$, and let $C = \{x_1, x_2, \dots, x_M\}$ be the set of code sequences from Lemma 4, so $M \geq 2^{n(R-\delta)}$. The coding function sends the $i$-th codeword for message $i$: $f(i) = x_i$. Decoding proceeds as follows. Denote the ISI type of $(y, x_i)$ by $(h(i), \sigma(i))$ for all $i \in \{1, 2, \dots, M\}$. Using these parameters we define the decoding rule as

$$\varphi(y) = i \iff i = \operatorname*{argmax}_{j} I(h(j), \sigma(j))$$

In case of non-uniqueness, we declare an error.

Now we bound the error:

$$P_e = P_{out} + \frac{1}{M}\sum_{i=1}^{M} P^{h_o,\sigma_o}_{Y|X}\left( \varphi(y) \neq i \mid x_i, E^c \right) \tag{24}$$

where $P_{out}$ denotes the probability of the event $E$ that the detected variance, for some $i \in \{1, 2, \dots, M\}$, does not satisfy

$$\left| \sigma(i)^2 - \frac{\| y - h(i) * x_i \|^2}{n} \right| < \gamma_n .$$

We bound the probability of this event. If $E$ occurs, then $\sigma(i)$ is an extremal point of the approximating set of parameters, so $\| y - h(i) * x_i \|^2 > nP_n$. Since $h = (0, 0, \dots, 0)$ is an element of the approximating set of ISI, this means that the power of the incoming sequence is greater than $nP_n$. The probability of this satisfies

$$P_{out} \leq \left( 2\int_{P_n l_n}^{\infty} \frac{1}{\sigma (2\pi)^{1/2}}\, e^{-\frac{|z|^2}{2\sigma^2}}\, dz \right)^{n} = \left( 2\operatorname{erfc}\left( \frac{P_n l_n}{\sigma} \right) \right)^{n} \ll e^{-n^{1+1/8}}$$

where $\operatorname{erfc}(\cdot)$ is the complementary normal error function.
This probability converges to 0 faster than exponentially, which, as we will see, means that in (24) the second term is the dominant one.

Consider the second term of (24). If message $i$ was sent, then $\varphi(y) \neq i$ occurs if and only if

$$I(h(j), \sigma(j)) \geq I(h(i), \sigma(i))$$

for some $j \neq i$. We know that

$$y \in \mathcal{T}_{P^{h(i),\sigma(i)}_{Y|X}}(x_i) \cap \mathcal{T}_{P^{h(j),\sigma(j)}_{Y|X}}(x_j)$$

since we assumed that we are not in the event $E$. So the probability in the second term of (24) is

$$P^{h_o,\sigma_o}_{Y|X}\left( \varphi(y) \neq i \mid x_i, E^c \right) = \sum_{\substack{(\hat{h},\hat{\sigma}),\,(h,\sigma) \in (\mathcal{H}_n \times \mathcal{V}_n)^2 \\ I(\hat{h},\hat{\sigma}) \geq I(h,\sigma)}} \int_{\mathcal{T}_{P^{h(i),\sigma(i)}_{Y|X}}(x_i) \cap \mathcal{T}_{P^{h(j),\sigma(j)}_{Y|X}}(x_j)} P^{h_o,\sigma_o}_{Y|X}(y|x_i)\, dy$$

With Lemma 6 (substituting $(h,\sigma) = (h(j),\sigma(j))$, $(\hat{h},\hat{\sigma}) = (h(i),\sigma(i))$, $\delta = \varepsilon/3$) and from Lemma 4 we get

$$P_e \leq \sum_{\substack{(\hat{h},\hat{\sigma}),\,(h,\sigma) \in (\mathcal{H}_n \times \mathcal{V}_n)^2 \\ I(h(j),\sigma(j)) \geq I(h(i),\sigma(i))}} 2^{-n\min_{h,\sigma}\left[ d((h(i),\sigma(i))\|(h_o,\sigma_o)) + |I(h(j),\sigma(j)) - R|^{+} - 2\varepsilon/3 \right]}$$

if $n$ is large enough. From this, since the number of approximating channel parameters grows subexponentially, we get

$$P_e \leq 2^{-n\min_{h,\sigma}\left[ d((h,\sigma)\|(h_o,\sigma_o)) + |I(h,\sigma) - R|^{+} - \varepsilon \right]}$$

IV. NUMERICAL RESULT

We compare the new error exponent with Gallager's error exponent. Gallager derived a method for sending digital information through a channel with continuous time and alphabet and a given channel function. This result can easily be modified to discrete time, as in e.g. [10], [8]. The linear Gaussian channel with discrete time parameter and fading vector $h = (h_0, h_1, \dots, h_l)$ can be formulated as follows:

$$y = Hx + z \tag{25}$$

where $x$ is the input vector and

$$H = \begin{pmatrix} h_0 & 0 & 0 & \cdots & 0 \\ h_1 & h_0 & 0 & \cdots & 0 \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ h_l & h_{l-1} & h_{l-2} & \cdots & 0 \\ 0 & h_l & h_{l-1} & \cdots & 0 \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & 0 & \cdots & h_l \end{pmatrix} \tag{26}$$

From [6] we get the idea of defining a right and a left eigenbasis for the matrix $H$. The right eigenbasis $r_1, r_2, \dots, r_n$ is nothing else than the eigenbasis of $H^T H$, where $T$ denotes the transpose. The left eigenbasis is $l_1, l_2, \dots, l_m$, where $l_i = \frac{H r_i}{|H r_i|}$ (or the eigenbasis of $H H^T$), and we denote $\lambda_i = |H r_i|$. As in the work of Gallager, $r_i r_j = \delta_{i,j} = l_i^* l_j$, because the $r_i$ form an orthonormal eigenbasis and $l_i l_j = \frac{r_i H^T H r_i}{|r_i H^T H r_i|} = r_i r_j$. Writing $x$ in the basis $r_1, \dots, r_n$ we get $x = \sum_{i=1}^{n} \tilde{x}_i r_i$; we know that the $\tilde{x}_i$ also form an i.i.d. Gaussian sequence. Writing the output as $y = \sum_{i=1}^{n} \tilde{y}_i l_i$, we get

$$\tilde{y}_i = \lambda_i \tilde{x}_i + \tilde{z}_i \tag{27}$$

for all $i$, where $\tilde{z}_i$ is the white Gaussian noise in the basis $l_1, \dots, l_m$ (in which it is also white). In many works this equation is used as a channel with fading, where the $\lambda_i$ are i.i.d. random variables. This is a false approach. If the receiver knows the ISI $h$, then these constants can be computed. If the ISI is a random vector of, e.g., i.i.d. random variables, then the $\lambda_i$ are not necessarily i.i.d. If we interlace our codeword, with this formula we get $n' = n + l$ parallel channels, each having SNR $\lambda_i/\sigma$.
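The matrix form (25)-(26) is the same map as the convolution in (1). A minimal sketch that builds the banded matrix $H$ and checks it against a direct convolution is given below; the function names and the numerical values of the taps and of the input block are hypothetical, chosen only for illustration.

```python
def convolution_matrix(h, n):
    """(n + l) x n banded Toeplitz matrix H of (26), with l = len(h) - 1."""
    l = len(h) - 1
    return [[h[r - c] if 0 <= r - c <= l else 0.0 for c in range(n)]
            for r in range(n + l)]


def convolve(h, x):
    """Direct evaluation of (h * x)_i = sum_j x_{i-j} h_j, with x_k = 0 outside the block."""
    n, l = len(x), len(h) - 1
    return [sum(h[j] * x[i - j] for j in range(l + 1) if 0 <= i - j < n)
            for i in range(n + l)]


def matvec(H, x):
    """Plain matrix-vector product for a matrix stored as a list of rows."""
    return [sum(a * b for a, b in zip(row, x)) for row in H]


h = [1.0, 0.5, 0.25]        # hypothetical ISI taps
x = [1.0, -2.0, 0.5, 3.0]   # hypothetical input block
H = convolution_matrix(h, len(x))
print(matvec(H, x))         # noiseless output H x
print(convolve(h, x))       # the same vector, computed as h * x
```

The first column of `H` holds $h_0, \dots, h_l$ followed by zeros and the last row ends in $h_l$, matching the layout of (26), so the output has $n + l$ components.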
We know that the error exponent given by Gallager for the $i$-th channel is

$$E_r(\rho, \lambda_i) = -\frac{1}{n'}\ln\left[ \int_y \left( \int_x q(x)\, p(y|x,\lambda_i)^{1/(1+\rho)}\, dx \right)^{1+\rho} dy \right]$$

If the input distribution $q(x)$ is the optimal Gaussian distribution, with the use of [8] the above expression can be rewritten as

$$E_r(\rho, \lambda_i) = -\frac{1}{n'}\sum_{i=1}^{n'} \ln\left[ \left( 1 + \frac{\lambda_i}{\sigma(1+\rho)} \right)^{-\rho} \right]$$

Now we can use the Szegő theorem from [1], and we get that the average of the exponents, that is, the exponent of the system, is

$$E_r(\rho) = \frac{1}{2\pi}\int_0^{2\pi} -\ln\left[ \left( 1 + \frac{f(x)}{\sigma(1+\rho)} \right)^{-\rho} \right] dx$$

where $f(x) = \sum_{k=-\infty}^{\infty} \left( \sum_{j=0}^{l-|k|} h_j h_{j+|k|} \right) e^{ikx}$, which is the same as in [10], [8].

In the simulation we used a 4-dimensional fading vector whose components were randomly generated with uniform distribution on $[0, 1]$. For other randomly generated vectors we get similar results. The two error exponents were positive in the same region, but surprisingly the new error exponent was better (higher) than Gallager's. Figure 1 shows that the new error exponent is always at least as good as Gallager's.

Fig. 1. Difference of the new error exponent and Gallager's error exponent (axes: SNR (dB) and R; plotted quantity: Difference).

The new method gives a better error exponent; however, it is hard to compute. We could estimate the difference only in 4 dimensions, because of the computational hardness of finding the global optimum of a 4-dimensional function.

V. DISCUSSION

Firstly, our result can be used as a new lower bound on error exponents with no CSI at the transmitter. Note that our error exponent (23) is positive if the rate is smaller than the capacity.

Secondly, it gives a new idea for decoding incoming signals without any CSI: it may be worthwhile to perform a more difficult maximisation instead of dealing with channel estimation.
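To make the maximisation mentioned above concrete, the decoding rule from the proof of Theorem 1 can be sketched on a toy scale: compute a grid version of the ISI type for every codeword and pick the codeword whose type has the largest $I(h,\sigma)$. Everything specific below is an assumption made for illustration and is not taken from the paper: two ISI taps, the grid step 0.25, the block length, the tiny codebook, the noise level and the fixed random seed. $I(h,\sigma)$ is evaluated with the formula as printed in Section II, using the identity $f(\xi) = |\sum_j h_j e^{ij\xi}|^2$, and ties are not declared as errors here.

```python
import itertools
import math
import random


def convolve(h, x):
    """(h * x)_i = sum_j x_{i-j} h_j, with x_k = 0 outside the block."""
    n, l = len(x), len(h) - 1
    return [sum(h[j] * x[i - j] for j in range(l + 1) if 0 <= i - j < n)
            for i in range(n + l)]


def isi_type(x, y, h_grid, sigma_grid):
    """Grid version of Definition 1: least-squares h, then the closest variance."""
    best_h, best_res = None, float("inf")
    for h in itertools.product(h_grid, repeat=2):  # two taps: an assumption
        res = sum((a - b) ** 2 for a, b in zip(y, convolve(h, x)))
        if res < best_res:
            best_h, best_res = h, res
    sigma = min(sigma_grid, key=lambda s: abs(s * s - best_res / len(x)))
    return best_h, sigma


def mutual_information(h, sigma, steps=256):
    """(1/2pi) * integral of ln(1 + f(xi)/sigma) over [0, 2pi], midpoint rule."""
    total = 0.0
    for m in range(steps):
        xi = 2.0 * math.pi * (m + 0.5) / steps
        f = abs(sum(hj * complex(math.cos(j * xi), math.sin(j * xi))
                    for j, hj in enumerate(h))) ** 2
        total += math.log(1.0 + f / sigma)
    return total / steps


def mmi_decode(codebook, y, h_grid, sigma_grid):
    """Index j maximising I(h(j), sigma(j)); the first maximiser wins on ties."""
    scores = [mutual_information(*isi_type(x, y, h_grid, sigma_grid))
              for x in codebook]
    return max(range(len(codebook)), key=scores.__getitem__)


rng = random.Random(0)                          # fixed seed, illustration only
n = 200
codebook = [[rng.gauss(0.0, 1.0) for _ in range(n)] for _ in range(4)]
h_grid = [0.25 * k for k in range(-6, 7)]       # -1.5 .. 1.5
sigma_grid = [0.25 * k for k in range(3, 9)]    # 0.75 .. 2.0, keeps sigma > 1/2
sent = 2
y = [v + rng.gauss(0.0, 1.0) for v in convolve([1.0, 0.5], codebook[sent])]
print(sent, mmi_decode(codebook, y, h_grid, sigma_grid))
```

The exhaustive search over the grid grows exponentially with the number of taps, which is consistent with the remark in Section IV that the new exponent is hard to compute; a practical decoder would need a smarter search.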
Decoding without channel estimation is possible because of the universality of the code, which means that the detection method does not depend on the channel.

We have proved that if the ISI fulfils some criteria (see Theorem 1), then the message can be detected with exponentially small error probability. However, these criteria can be relaxed, because in the Theorem $P_n \to \infty$ and $l_n \to \infty$, so any ISI with finite length and finite energy, and any finite white noise variance, can be approximated well via the approximating set of parameters.

It can easily be seen that the lemmas and the theorem remain true with small changes if the input distribution is an arbitrarily chosen i.i.d. (absolutely continuous or discrete) distribution. Only the functional form of the mutual information $I(\cdot)$ and of the entropy of the output variable $H_{(\cdot)}(Y)$ changes. So this result can be used for lower bounding the error exponent if non-Gaussian i.i.d. random variables are used for the random selection of the codebook. However, in these cases the entropy of the output can hardly be expressed in closed form.

With the result of Theorem 1, we can define channel capacity for compound fading channels. If the fading remains unchanged during the transmission, and the fading length satisfies $l_n \ll n$, we can state the following theorem:

Theorem 2. Let $F$ be an arbitrarily given, not necessarily finite, set of channel parameters. Then the capacity of the ISI channel without any CSI, with channel parameter from $F$, is

$$C(F) = \inf_{(h,\sigma) \in F} I(h,\sigma)$$

Proof: In the limit the set $\mathcal{H}_n$ is dense in the space of real sequences of any length, so for every $(h,\sigma) \in F$ there exists a sequence $(h_n, \sigma_n) \in \mathcal{H}_n \times \mathcal{V}_n$ such that $(h_n, \sigma_n) \to (h, \sigma)$. We know from Remark 1 that the error exponent in Theorem 1 is a continuous function, so Theorem 1 proves that $C(F)$ is an achievable rate.
Given a linear Gaussian channel with ISI $h$ and variance $\sigma$, the capacity is $I(h,\sigma)$ if the transmitter has no CSI.

For some $(h,\sigma)$ our error exponent gives a better numerical result than the random coding error exponent derived by Gallager [6] and improved by Kaplan and Shamai [8] (the random coding error exponent used here is deduced in Section IV). This result is not so surprising if we know that the Maximum Mutual Information (MMI) decoder gives a better exponent in some cases (as in the multiple-access environment) than the random coding error exponent derived by Gallager.

This work does not contradict [9]. We know that in the discrete case the MMI decoder is nothing else than the generalised maximum likelihood (GML) decoder [9], and it was also shown in [9] that the GML decoder is not optimal in the non-memoryless case. However, this is not so in the continuous case, where the entropy of the incoming signal depends on the parameter $(h,\sigma)$ used.

REFERENCES

[1] G. Szegő, "Beiträge zur Theorie der Toeplitzschen Formen I," Mathematische Zeitschrift, vol. 6, pp. 167, 1920.
[2] J.K. Omura and B.K. Levitt, "Coded error probability evaluation for antijam communication system," IEEE Trans. Commun., vol. COM-30, pp. 869-903, May 1982.
[3] A. Lapidoth and S. Shamai, "A lower bound on the bit-error-rate resulting from mismatched Viterbi decoding," Europ. Trans. Telecommun., ??
[4] N. Merhav, G. Kaplan, A. Lapidoth and S. Shamai, "On information rates on mismatched decoders," IEEE Trans. Inform. Theory, vol. 40, pp. 1953-1967, Nov. 1994.
[5] I. Csiszár and J. Körner, Information Theory: Coding Theorems for Discrete Memoryless Systems, Akadémiai Kiadó, 1986.
[6] R. Gallager, Information Theory and Reliable Communication, 1969.
[7] J. Pokorny and H. M. Wallmeier, "Random coding bound and codes produced by permutations for the multiple-access channel," IEEE Transactions on Information Theory, vol. IT-31, Nov. 1985.
[8] G. Kaplan and S. Shamai, "Achievable performance over the correlated Rician channel," IEEE Trans. Commun., vol. 42, pp. 2967-2978, Nov. 1994.
[9] A. Lapidoth and J. Ziv, "On the universality of the LZ-based decoding algorithm," IEEE Transactions on Information Theory, vol. 44, no. 5, Sep. 1998.
[10] W. Ahmed and P. McLane, "Random Coding Error Exponents for Two-Dimensional Flat Fading Channels with Complete Channel State Information," IEEE Transactions on Information Theory, vol. 45, no. 4, May 1999.
