The price of certainty: "waterslide curves" and the gap to capacity

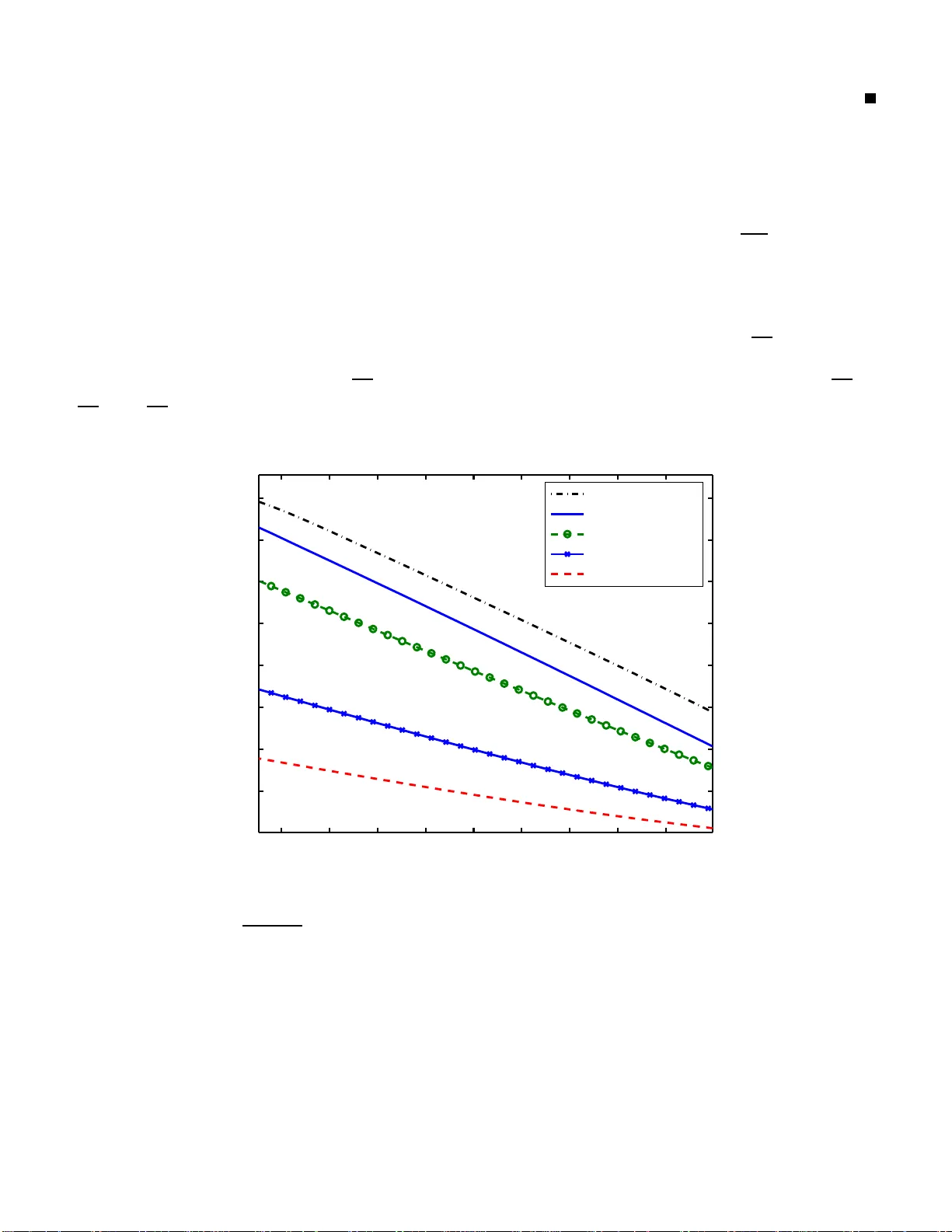

The classical problem of reliable point-to-point digital communication is to achieve a low probability of error while keeping the rate high and the total power consumption small. Traditional information-theoretic analysis uses "waterfall" curves to c…

Authors: Anant Sahai, Pulkit Grover