The Second Law as a Cause of the Evolution

It is a common belief that in any environment where life is possible, life will be generated. Here it is suggested that the cause for a spontaneous generation of complex systems is probability driven processes. Based on equilibrium thermodynamics, it…

Authors: Oded Kafri

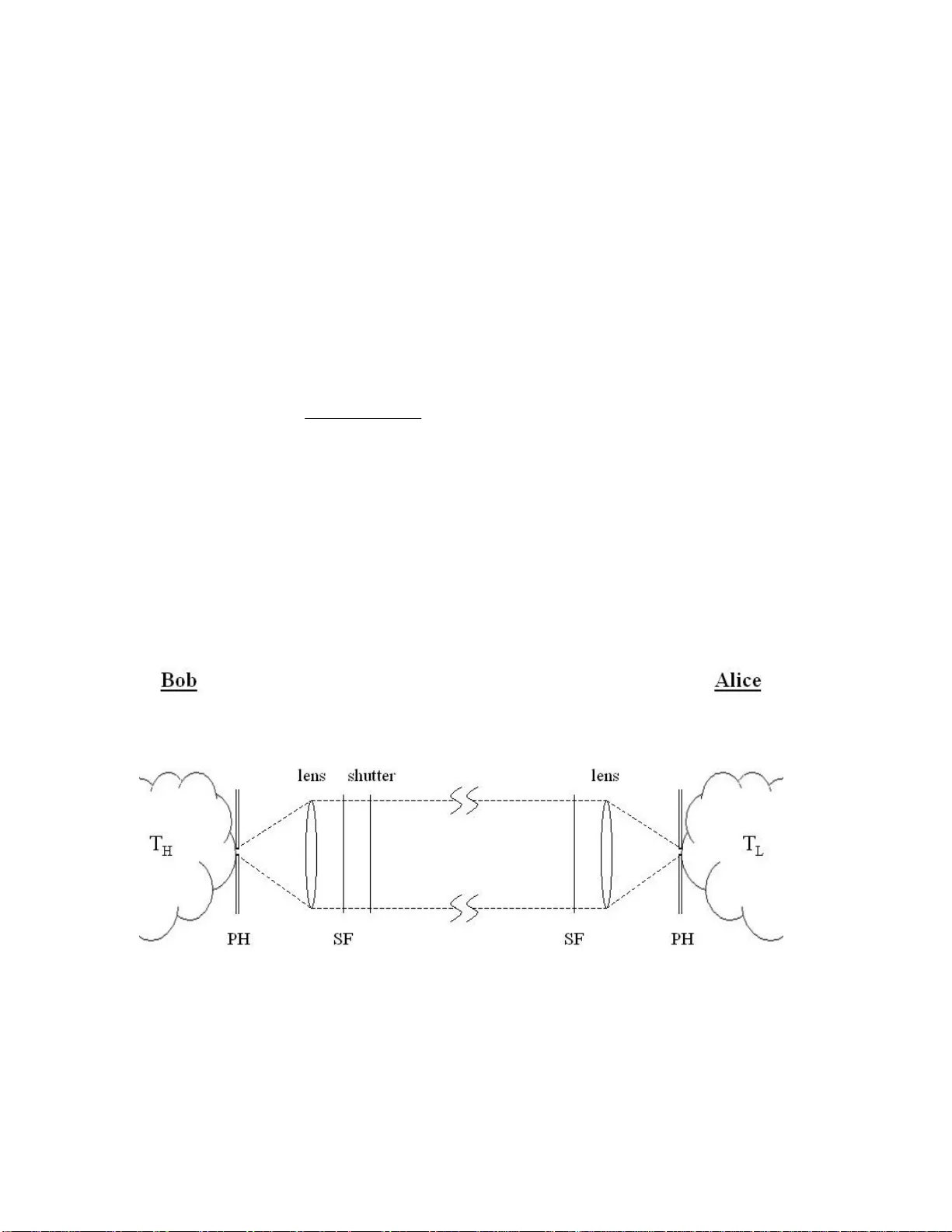

Oded Kafri, Varicom Communications, Tel Aviv 68165, Israel

Abstract

It is a common belief that in any environment where life is possible, life will be generated. Here it is suggested that the cause for a spontaneous generation of complex systems is probability-driven processes. Based on equilibrium thermodynamics, it is argued that in low occupation number statistical systems, the second law of thermodynamics yields an increase of thermal entropy and a canonic energy distribution. However, in high occupation number statistical systems, the same law, for the same reasons, yields an increase of information and a Benford's law/power-law energy distribution. It is therefore plausible that the heat death is not necessarily the end of the universe.

Introduction

Till the late 17th century the common hypothesis about the origin of life was abiogenesis, a spontaneous generation of life from organic substances. For example, if we put an orange on a table, after a while we will probably find worms in it. The conclusion that the worms are generated from the orange is only part of the truth. We know today that eggs of a fly called Drosophila melanogaster have to be present in the orange in the first place in order for the worms to be generated. Nevertheless, the "explanation" that the eggs are the reason for the generation of the worms in the orange does not diminish the appeal of the abiogenesis hypothesis. For an observer in space looking at the earth over billions of years, the formation of life, buildings, roads, cars, etc. cannot be explained in a similar way as the formation of worms in the orange. For him, the explanation for life and even buildings, books, etc. is a spontaneous generation. What are the "eggs" of these objects?

Contemporary science deals with evolution, namely how life evolved from the simple to the complex.
Nevertheless, evolution theories do not deal with, and do not explain, the reason for the spontaneous generation of complex objects such as life and artificial objects [1].

Sometimes life is regarded as a striving for order. It seems we are constantly fighting against the chaos invading our life, constantly looking for rules and laws, and if we don't find them we invent them. However, there is no scientific definition of order. It seems odd that order is not defined in science, while entropy, the entity that is conceived as a measure of disorder, is so widely used. According to the 2nd law of thermodynamics, any process that causes the entropy to increase is likely to happen. In other words, such processes are spontaneous. This is the reason for the bad reputation of the second law, "which fights our tendency to order". The Internet is loaded with theological material claiming that life is a violation of the second law of thermodynamics.

In this paper exactly the opposite is argued: the second law is responsible for life, because life is related to a spontaneous information increase, and information is a part of entropy. It is argued that entropy comprises two parts: the thermal entropy and the informatics entropy. While the thermal entropy and its connection to the 2nd law are well known, the connection between information and the 2nd law was only recently discussed [2,3,4].

Information is conceived (erroneously) as order. It was suggested that information contains null or even negative entropy [5]. This "intuitive reasoning" is logically sound, as many understand information as the requirement that Alice will obtain the same value for the i-th bit each time Bob sends her an identical file. Since the location of the bits is fixed, the file is a frozen entity and thus contains zero entropy. An even more popular intuition is that information is negative entropy, as was suggested by Brillouin [6,7].
The reasoning behind this conclusion is that the file, before it is received, is unknown, and thus contains entropy, which is reduced with every bit that Alice reads. Therefore, information is a reduction of entropy.

Nevertheless, Shannon, in his 1st theorem, defined the information as the maximum amount of data that can be transmitted in a noiseless channel. His expression, which is identical to the Gibbs entropy, represents the randomness of the distribution of the bits in a file [8]. The Shannon information tells us nothing about the actual content of a file and has no connection to it. When Alice receives a file of $\Lambda$ bits, all we know is that there are at most $2^{\Lambda}$ different possible contents (configurations). In some of these $2^{\Lambda}$ files the distribution of the bits is correlated. In others the distribution of the bits is random. When Alice receives a random file of $\Lambda$ bits, the amount of the Shannon information is $I = \Lambda$ bits. If the distribution of the bits is not random, Bob can compress the file and send a shorter file of length $I$ such that $I < \Lambda$, or in general $I \le \Lambda$.

How many random distributions are there as compared with correlated distributions in a file? Jaynes has shown [15,19,20] that if we have a statistical ensemble, the most honest guess about its distribution is the Shannon information. The finding of Jaynes, in simple words, is that there are many more random distributions than there are correlated distributions. The work of Jaynes suggests that for a statistical ensemble the 2nd law is a mere probabilistic effect. If we don't know anything about a statistical ensemble, our best bet is that it is random. If we reshuffle a distribution of something, it will become more random. Jaynes' work is applicable to information. If we add noise to a file, it will probably increase the amount of the Shannon information in the file. Nevertheless, the subjective meaning of the message in the file may be lost.
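The claim that noise tends to raise the Shannon information can be illustrated with a small sketch. It assumes, for simplicity, that the bits of a file are independent and differ only in the fraction of "1"s (correlations between bits are not modelled), and that noise flips each bit independently. The function names and the numbers (a 1000-bit file, 10% ones, 20% flip probability) are illustrative choices, not taken from the paper.

```python
import math

def shannon_information_bits(length, p_one):
    """Shannon information, in bits, of a file of `length` independent bits
    in which each bit equals 1 with probability `p_one` (assumed model)."""
    if p_one in (0.0, 1.0):
        return 0.0
    per_bit = -(p_one * math.log2(p_one) + (1 - p_one) * math.log2(1 - p_one))
    return length * per_bit

def fraction_of_ones_after_noise(p_one, flip_prob):
    """Fraction of 1s after every bit is flipped independently with `flip_prob`."""
    return p_one * (1 - flip_prob) + (1 - p_one) * flip_prob

LENGTH = 1000  # hypothetical file length
random_file = shannon_information_bits(LENGTH, 0.5)
biased_file = shannon_information_bits(LENGTH, 0.1)
noisy_file = shannon_information_bits(LENGTH, fraction_of_ones_after_noise(0.1, 0.2))

print(random_file, biased_file, noisy_file)
```

In this model the random file carries the full 1000 bits, the biased file carries less, and the noisy version of the biased file sits between the two: the noise has moved the file toward the random case.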
While the similarity between the Gibbs entropy and the Shannon information is clear, there is a distinctive difference between them. Information is a logical quantity; the Shannon information is a mathematical entity. It is neither a function of the file energy nor a function of the temperature, as it is not made of a material substance. A binary information file contains only "1"s and "0"s. Entropy, on the other hand, is a physical quantity and has a physical dimension. The physical meaning of the entropy is derived from the second law. The basic outcome of the second law is that heat flows spontaneously from a hot bath to a cold bath; it does so because in this process the entropy increases.

Another difference is that entropy is a dynamic quantity while information is a quenched quantity. Boltzmann obtained his approximation of the Clausius entropy for an ideal gas, which contains a large number of atoms exchanging energy constantly [12]. The Boltzmann statistics inherently contains the canonic non-deterministic Maxwell-Boltzmann distribution. This distribution is a cornerstone of statistical mechanics as well as of quantum mechanics. Nevertheless, the canonic distribution is not applicable to information, which is a quenched quantity.

I. The paper in a nutshell

In previous publications [2,3] it was shown that if we assign energy to the information bits, it follows from the 2nd law of thermodynamics that the Shannon information is entropy, a random file is a state of equilibrium, and the temperature is proportional to the bit's energy. In this paper a toy model is used to describe a generic file transmission from Bob to Alice using electromagnetic radiation. Bob is using a blackbody-based transmitter that enables him to control the temperature and the frequency of the radiation. In addition, Bob can modulate the radiation.
It is shown that when Bob transmits to Alice a low occupation number energy (the quantum limit), the thermodynamic functions of the energy transmission are the well-known canonic ones. However, when Bob increases the occupation number of the photons, a power-law distribution replaces the canonic distribution, information replaces the entropy, and the canonic statistical physics becomes a statistics of harmonic oscillators. In the high occupation limit the obtained normalized thermodynamic functions are independent of any physical quantity and/or physical constant and therefore become purely logical functions. A qualitative discussion about whether nature prefers generation of information or generation of canonic entropy yields the conclusion that the two are equally welcome.

In section II, a toy model, in which Bob sends a collimated light beam to Alice, is described. Bob is using a blackbody radiation source that delivers energy per mode according to the Bose-Einstein statistics. Bob can change at will (without any physical limitations) the temperature of his source and select a frequency or frequencies of the radiation with a spectral filter. In addition, Bob has a shutter that enables him to modulate the radiation within the limitations of the laws of optics. It is assumed that in equilibrium all the radiation modes that Alice receives have the same temperature.

In section III, the quantum limit, it is assumed that the energy of the photons is much higher than the average energy. The Bose-Einstein equation yields the familiar canonic distribution. The obtained entropy of the radiation is the Gibbs expression, and the ratio between the number of the photons and the number of empty modes is the Maxwell-Boltzmann distribution.
In section IV, the high occupation limit, Bob is using his toy model to reduce the energy of the photon as compared with the average energy of the mode, to the extent that energy can be added to or removed from the mode smoothly. In this limit the number of the photons is much larger than the number of the modes. The Bose-Einstein equation yields that each mode is a harmonic oscillator. This means that each mode's entropy is one Boltzmann constant, and the temperature is a linear function of the mode's energy.

In section IV-a, modulation and information, Bob is modulating the sequence of the harmonic oscillators into a binary file. The Shannon information is calculated. It is shown that in a random file, when the number of the harmonic oscillators (energetic modes) is equal to the number of the vacancies (empty modes), the Shannon information is equal to the length of the file. In other cases it is shown that the amount of the Shannon information is smaller.

In section IV-b, equilibrium and entropy, the entropy of the file, which consists of harmonic oscillators and vacancies, is calculated. It is shown that the Shannon information is the Gibbs mixing entropy. The Boltzmann H function is equivalent to the amount of information H in a correlated file.

In section IV-c, logical quantities, it is shown that the normalized entropy in Bob's transmission is a function that does not contain any physical variable or constant. This is in contradistinction to a canonic entropy transmission. Therefore the normalized entropy, in the high occupation limit, is a logical quantity.

In section IV-d, logical equilibrium – the Benford's law, Bob generates a set of modes in which each represents a digit. A possible way to construct such a set is to put in the mode that represents the digit N, N times more energy than in the mode that represents the digit 1.
To obtain equilibrium (equal temperature in the Bose-Einstein distribution) Bob has to use either a different frequency for each digit-mode or, alternatively, a different density for each digit in a file. The obtained normalized distribution function of the digit-modes in equilibrium is a purely logical function, which is not a function of the initial temperature and/or frequency chosen by Bob. The result is identical to the famous Benford's law.

In section IV-e, energy distribution, the power-law, the log of the occupation number vs. the log of the photon energy divided by the average energy is plotted for the Bose-Einstein distribution. It is seen that in the high occupation number regime a straight line of slope –1 is obtained. This behavior appears in many quantities in nature (a good example is the natural nets). By analogy with the Gaussian momentum distribution obtained from the canonic energy distribution, if we consider the electric field of the radiation instead of the energy, a –2 slope is obtained. Slopes around –2 appear in many sociological statistics [20].

In section V, mixed systems, the question whether information can survive side by side with the canonic thermal entropy in equilibrium is discussed.

In section V-a, Hooke's-law harmonic oscillator, a thermodynamic analysis of a mixed system consisting of a single Hooke's-law oscillator coupled to a heat bath at room temperature is presented. It is shown that the amplitude increase of a Hooke's-law oscillator has the Carnot efficiency, similar to that of the amplification of a file [3,4]. A Hooke's-law oscillator will relax its energy spontaneously to the heat bath, as the relaxation increases the entropy.

In section V-b, information vs. thermal entropy, it is shown that in the case of the blackbody emission, both the low occupation number photons and the high occupation number photons coexist in equilibrium. Therefore, it is concluded that information and thermal entropy are equally welcome.
In section VI, summary and discussion, a table that shows the differences between the thermodynamic functions in the canonic distribution and in the high-occupation harmonic distribution is presented. In view of these differences, the meaning of the logical quantities obtained in the thermodynamic theory of communication is discussed. It is concluded that, in equilibrium, inert quanta distributed in modes yield a power-law/Benford's-law distribution. It is suggested that the informatics aspect of life is a tendency for reproduction and a compressed communication.

II. The Toy Model

In Fig. 1 the setup in which Bob sends Alice a flux of photons is described. The analysis is based on the classical Carnot-Clausius thermodynamics. In classical thermodynamics the entropy is $S \ge Q/T$, where the equality sign stands for equilibrium, $Q$ is the heat that Bob is sending or Alice is receiving, and $T$ is the temperature of the transmitter or the receiver. This inequality is the Clausius inequality [13], derived directly from the efficiency of the Carnot cycle [12].

Bob is sending a sequence of photons in a single longitudinal mode to Alice, as described in Fig. 1. Bob has a blackbody at temperature $T_H$ that emits blackbody radiation. Bob attaches a pinhole filter (PH) of a diameter of $\lambda^2$ with a positive lens in order to obtain a collimated single longitudinal mode. After the pinhole spatial filter Bob attaches a spectral filter and a polarizer (SF) that passes only the frequency $\nu$, with a spectral width $\Delta\nu$. Here $\lambda$ and $\nu$ are the wavelength and the frequency of the transmitted signal. The spectral width determines the number of the temporal modes. Bob can modulate the photon beam by using a mechanical or electro-optical shutter. Photons are bosons with a zero chemical potential.
Therefore the number of photons $n$ in a single mode, obtained from the Bose-Einstein distribution [14], is given by

$$n_i = \frac{1}{e^{h\nu / k_B T_H} - 1} \tag{1}$$

where $i$ indexes the temporal modes, $h\nu$ is the energy of the photon, $T_H$ is the temperature of Bob's source and $k_B$ is the Boltzmann constant.

Fig. 1. A setup for a single transverse mode energy transmission from Bob to Alice.

Alice uses a detector to receive the message. In general, Alice uses a similar positive lens, a filter and a detector at the focal length of the lens. If the detector of Alice is at a temperature $T_L = T_H$, namely the temperature of Bob's transmitter, the noise emitted by the detector will be as strong as the signal, and Alice will not be able to read the signals. Therefore, a prerequisite for energy transmission from Bob to Alice is that $T_H > T_L$.

In practice, Bob can heat his blackbody to a temperature limited by the physical properties of the blackbody's material. However, we assume that Bob does not have such limitations and that he can produce a beam as hot as a laser beam at will. Also, there are no limitations on the frequencies that Bob can send. In practice, the wavelength of the radiation cannot exceed the diameter of the blackbody; nevertheless, we let Bob enjoy the benefit of a toy model. Hereafter, two limits are discussed: the quantum limit, in which $h\nu \gg k_B T$, and the high occupation limit, in which $h\nu \ll k_B T$.

III. The Quantum Limit

In the quantum limit, the energy of the photon $h\nu$ is much higher than the average energy, $k_B T$, of a mode emitted from a thermal bath (the blackbody). Therefore classically it is impossible to emit a photon. However, when many modes are collecting their energies together, they emit a single high-energy photon in an arbitrary (lucky) mode. In other words, when $n \ll 1$, it is assumed that a group of $1/n$ modes will emit a single photon in an unknown mode of the group.
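The two limits of Eq. (1) can be compared numerically. The sketch below is only an illustration: it writes the occupation number in terms of the dimensionless ratio $h\nu/k_B T$ (called `phi` here) and compares it with $e^{-\phi}$, the form expected when $h\nu \gg k_B T$, and with $1/\phi$, the form expected when $h\nu \ll k_B T$. The function names and sample values are assumptions made for the example.

```python
import math

def occupation_number(phi):
    """Bose-Einstein occupation number of Eq. (1), with phi = h*nu / (k_B*T)."""
    # expm1(phi) = exp(phi) - 1, computed accurately for small phi.
    return 1.0 / math.expm1(phi)

def quantum_limit(phi):
    """Approximation for h*nu >> k_B*T: n ~ exp(-phi)."""
    return math.exp(-phi)

def high_occupation_limit(phi):
    """Approximation for h*nu << k_B*T: n ~ 1/phi, i.e. n*h*nu ~ k_B*T."""
    return 1.0 / phi

for phi in (10.0, 0.001):  # one sample value for each regime
    print(phi, occupation_number(phi), quantum_limit(phi), high_occupation_limit(phi))
```

For `phi = 10` the exact value and the exponential form agree to better than one part in ten thousand, while for `phi = 0.001` the exact value is close to `1/phi`.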
Occupation numbers smaller than one exist in many systems in physics, e.g. in an ideal gas. In this case Eq. (1) yields

$$n_i = e^{-h\nu / k_B T_i} \tag{2}$$

which is the canonic distribution. Consider a sequence of $\Lambda$ temporal modes emitted from a radiation source which is not in equilibrium. A non-equilibrium state means that any mode may have its own temperature. The number of photons in the sequence is $\sum_{i=1}^{\Lambda} n_i$. The average energy of a single mode is $q_i = n_i h\nu$. The temperature is calculated from Eq. (2) to be $T_i = -h\nu / (k_B \ln n_i)$. The entropy of a single mode will be $S_i = q_i / T_i$, or $S_i = -k_B n_i \ln n_i$. Since the entropy is extensive, the total entropy of a sequence of $\Lambda$ modes is

$$S = \sum_{i=1}^{\Lambda} S_i = -k_B \sum_{i=1}^{\Lambda} n_i \ln n_i \tag{3}$$

When $n_i \ll 1$, $n_i = p_i$ and Eq. (3) is simply the Gibbs entropy. Assuming that all $n_i$ are equal to $n$ (which means an equilibrium state, as all the temperatures $T_i$ are equal to $T$), we obtain from Eqs. (2) and (3)

$$S = \sum_{i=1}^{\Lambda} S_i = \Lambda \frac{h\nu}{T} e^{-h\nu / k_B T} = \Lambda \frac{n h\nu}{T} = \Lambda \frac{q}{T} \tag{4}$$

The entropy of the sequence of $\Lambda$ temporal modes, in the quantum limit, is a function of the mode energy and its temperature. Any loss of a photon will change the entropy, as will any fluctuation of the source temperature.

IV. The High Occupation Limit

When the source is hot and/or the frequency is low such that $h\nu \ll k_B T$, Eq. (1) yields

$$n_i h\nu = q_i = k_B T_i \quad \text{or} \quad S_i = k_B \tag{5}$$

This is the well-known relation of a harmonic oscillator. In this limit the photon energy is negligible as compared with the average energy of the mode, and therefore energy can be removed or added in a continuous way. The uncertainty of $\tfrac{1}{2} h\nu$ is also negligible. To some extent it is a surprising result. Intuitively, we expect one mode, which contains many photons, to have zero entropy. Nevertheless, one $k_B$ is a very small amount of entropy, i.e.
a laser mode, which sometimes contains as much as $10^{20}$ photons, has the same amount of entropy as one vibration mode of a single molecule. The Gibbs, Boltzmann or Von Neumann entropies yield null entropy for single-mode radiation, as they are only an approximation of the entropy for a statistical ensemble [9]. Finite entropy for a single mode is a must, as entropy is an extensive quantity and the emission of entropy by blackbody radiation is the sum of the single-mode emissions. Therefore, if a single mode did not carry entropy, a blackbody would not emit entropy either.

When Bob is using his blackbody (in this case he will prefer a CW laser) and sending Alice $\Lambda$ classical modes, the total entropy that is removed from his source is

$$S = \sum_{i=1}^{\Lambda} S_i = \Lambda k_B \tag{6}$$

In the next two sections it will be shown that entropy in the high occupation limit is not a simple sum of the entropies of the modes. A sequence of $\Lambda$ oscillators is not always a state of equilibrium, since we can add as many empty modes as we wish. Therefore, Eq. (6) is a lower bound of the entropy. The entropy is defined only in equilibrium. The equation of the entropy can be used away from equilibrium; however, the obtained value (usually known as the Boltzmann $-H$ function) is not unique and is always smaller than the entropy [15].

The entropy of a single oscillator in the high occupation limit is not a function of the energy or of the temperature. In fact, when a mode is divided into $N$ fractions, each fraction, when received, carries the same amount of entropy as the undivided mode. It was shown previously [2,3] that this property is the basis of information transmission and is the cornerstone of IT.

IV-a. Modulation and Information

A possible way for Bob to modulate his source is to use a shutter (Fig. 1). Every temporal mode has the duration of its coherence time, namely $\Delta t = 1/\Delta\nu$, which corresponds to a coherence length $c/\Delta\nu$, where $c$ is the speed of light.
Therefore if the shutter is opened for a time interval $\Delta t$, an amount $k_B$ of entropy is transmitted.

When Bob is transmitting a file he possibly starts by sending a header to inform Alice about the file length $\Lambda$ that he intends to send and some other information about the kind of compression or the language he uses. Usually Alice replies to confirm the acceptance of the header and her consent or refusal to receive the data, and so on. However, although this handshaking process is vital to any communication, it will not be discussed here. The discussion here assumes that Bob and Alice have a pre-agreed language, compression, protocol and an open channel of communication.

If Bob sends $L$ energetic bits in $\Lambda$ modes, where $\Lambda > L$, there are several different messages that can be sent. The number of possible messages is the binomial coefficient $\Lambda! / ((\Lambda - L)!\, L!)$. The Shannon information is defined, in bits, as the length $I$ of the shortest file that has this number of messages. Therefore, $2^I = \Lambda! / ((\Lambda - L)!\, L!)$. Hereafter the information will be expressed in nats, namely $e^I = \Lambda! / ((\Lambda - L)!\, L!)$. Stirling's formula yields

$$I = \Lambda \ln \Lambda - L \ln L - (\Lambda - L) \ln(\Lambda - L) \tag{7}$$

Under the assumption that all the combinations have equal probability, it is seen that if $\Lambda = 2L$ then $I = \Lambda \ln 2$. Namely, a random file contains the maximum amount of information.

IV-b. Equilibrium and Entropy

The basic definition of equilibrium is obtained from the Clausius inequality, namely $S \ge Q/T$. When the heat transmitted divided by the temperature is equal to the entropy, the system is in equilibrium. This implies that when $Q/T$ is a maximum, the system is in equilibrium. If the system is not in equilibrium, the obtained temperature (that is always higher than $T$) is not unique and can yield different values for different histories of a system.
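Eq. (7) of section IV-a can be checked against the exact logarithm of the binomial coefficient. The following sketch is an illustration only; the file size (2000 modes, 1000 of them energetic) and the function names are assumptions made for the example.

```python
import math

def exact_information_nats(total_modes, energetic_modes):
    """ln of the binomial coefficient: the information, in nats, before
    Stirling's approximation is applied."""
    return math.log(math.comb(total_modes, energetic_modes))

def stirling_information_nats(total_modes, energetic_modes):
    """Eq. (7): I = Lambda ln Lambda - L ln L - (Lambda - L) ln(Lambda - L)."""
    lam, l = total_modes, energetic_modes
    return lam * math.log(lam) - l * math.log(l) - (lam - l) * math.log(lam - l)

LAMBDA, L = 2000, 1000  # hypothetical file with Lambda = 2L
exact = exact_information_nats(LAMBDA, L)
stirling = stirling_information_nats(LAMBDA, L)
print(exact, stirling, LAMBDA * math.log(2))
```

For $\Lambda = 2L$ the Stirling expression reduces to $\Lambda \ln 2$, and it overestimates the exact value only by a term that grows logarithmically with the file length, so the relative difference shrinks as the file gets longer.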
If we designate $p \equiv L/\Lambda$, then the RHS of Eq. (7) can be rewritten as

$$I = -\Lambda \{ p \ln p + (1 - p) \ln(1 - p) \} \tag{8}$$

Consider $p\Lambda$ oscillators, each carrying $k_B$ entropy, in a sequence of $\Lambda$ modes. In the setup of Fig. 1, each mode has the same frequency and temperature. That means that each mode is in equilibrium with the emitting blackbody and with the other modes. However, there is a mixing entropy of the energetic modes and the empty modes. The mixing entropy of the ensemble à la Gibbs is

$$H = \Lambda k_B \{ p \ln p + (1 - p) \ln(1 - p) \} \tag{9}$$

where $H$ is the Boltzmann H function. The $-H$ function is the entropy calculated away from equilibrium, such that $S \ge -H$. In equilibrium $p = 1/2$, $H$ has a minimum, and Eq. (8) yields that $S = k_B \Lambda \ln 2$. Therefore it is possible to conclude that entropy and information are identical and that a random sequence is a state of equilibrium. In the case that $p < 1/2$ one obtains

$$S \ge -H = k_B I \tag{10}$$

Eq. (10) is the Clausius inequality for informatics.

It is worth noting that Eq. (9) yields, in statistical physics, the canonic distribution [2,3]. Consider $p\Lambda$ energetic particles of energy $h\nu$ in $\Lambda$ microstates. The energy of the sequence is $Q = p \Lambda h\nu$. The temperature is defined à la Clausius as $T = \partial Q / \partial S$. Therefore, with $S = -H$, $\partial S / \partial p = \Lambda h\nu / T = -\Lambda k_B \{ \ln p - \ln(1 - p) \}$, or

$$\frac{p}{1 - p} = e^{-h\nu / k_B T}$$

which is the canonic distribution of Eq. (2) for a two-level system (see table 1) [2,3].

What is the reason for such different results, in statistical physics and in informatics, obtained from the same Eq. (9)? The explanation is that in statistical physics the canonic distribution prevails and the entropy of a mode is a function of the energy and the temperature, as is seen in Eq. (4). Therefore, equilibrium (a maximum entropy) may be obtained, for any value of $h\nu$ and $T$, for any $p \le 1/2$. In informatics the entropy of a mode is not a function of the energy or the temperature; therefore equilibrium exists only for a single value, $p = 1/2$.
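That $p = 1/2$ is the unique maximum of the information in Eq. (8) follows from a short calculation, written out here as a worked step using only the symbols already defined above:

```latex
\begin{aligned}
I(p) &= -\Lambda \{ p \ln p + (1 - p) \ln(1 - p) \}, \\
\frac{dI}{dp} &= -\Lambda \{ \ln p + 1 - \ln(1 - p) - 1 \}
              = -\Lambda \ln \frac{p}{1 - p} = 0
              \;\Longrightarrow\; p = \tfrac{1}{2}, \\
\frac{d^2 I}{dp^2} &= -\frac{\Lambda}{p (1 - p)} < 0
              \quad \text{for } 0 < p < 1, \\
I\!\left(\tfrac{1}{2}\right) &= \Lambda \ln 2 .
\end{aligned}
```

The second derivative is negative everywhere on the interval, so the stationary point is a maximum and there is no other one.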
IV-c. Logical Quantities

When Bob modulates the transmitted radiation of the setup of Fig. 1, he usually does not care about the coherence length of his radiation source. He transmits a sequence of pulses and vacancies of equal length. Therefore, each "1" bit will usually carry more entropy than one $k_B$. Practically it will carry $K = m k_B$ entropy units, where $m$ is some integer (see Eq. (6)); therefore Eq. (10) can be rewritten as

$$S \ge K I \tag{11}$$

When Bob transmits a file, all he wants is for Alice to receive correctly one of the $2^{\Lambda}$ possible files in a $\Lambda$-bit transmission. However, here we are interested in the amount of the Shannon information of this particular transmission. A possible way to calculate the amount of information in the transmission is to use Eq. (9) to calculate $-H/S$, which is the normalized information. This yields

$$\frac{-H}{S} = -\frac{p \ln p + (1 - p) \ln(1 - p)}{\ln 2} \le 1 \tag{12}$$

Eq. (12) is the logical Clausius inequality in informatics. It says that the maximum amount of information in a file that has a fraction $p$ of the bits "1" or "0" is not a function of $K$. In fact, Eq. (12) is a mere inequality, free from any physical quantity.

IV-d. Logical Equilibrium – The Benford's Law

Eq. (12) demonstrates that the fraction $p$ of the "1" or "0" bits determines how far a file is from equilibrium. If $p = 1/2$, it means that a file might be in equilibrium. Nevertheless, information transmission is not usually done in bits. In our everyday life, we are using a much larger number of symbols to communicate. An important set of symbols is the numerical digits. A possible way to form a set that represents the nine digits is to use nine kinds of modes, containing 1, 2, 3, 4, 5, 6, 7, 8 and 9 energy units respectively. What will be the relative distribution of these modes in equilibrium? If all the bosons have the same energy, each occupation number $n$ yields a different temperature, which means a non-equilibrium state.
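The last statement can be made concrete by inverting Eq. (1) for the temperature of a mode that holds $n$ bosons of one fixed energy $h\nu$. The sketch below is an illustration under that assumption; temperatures are expressed in units of $h\nu/k_B$ so that no physical constants are needed, and the function name is hypothetical.

```python
import math

def relative_temperature(n):
    """k_B*T / (h*nu) of a mode holding n bosons, from inverting Eq. (1):
    h*nu / (k_B*T) = ln(1 + 1/n)."""
    return 1.0 / math.log(1.0 + 1.0 / n)

# One mode for each digit 1..9, all bosons having the same energy h*nu.
temperatures = [relative_temperature(n) for n in range(1, 10)]
for digit, temperature in zip(range(1, 10), temperatures):
    print(digit, round(temperature, 3))
```

The nine temperatures are all different and grow with the occupation number, so a set of digit-modes built from equal-energy bosons cannot be at a common temperature.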
Eq. (1) is rewritten as

$$\Phi = \Phi(n) = \frac{h\nu}{k_B T} = \ln\left(1 + \frac{1}{n}\right) \tag{13}$$

It is seen that as $n$ increases, the temperature increases. A possible way to obtain equilibrium, namely an equal temperature for all the digits, is to use a spectral filter with nine different frequencies that can be obtained from Eq. (13). An alternative way to obtain an equilibrium state is to keep $\Phi$ constant and to distribute the nine symbols according to a density function $\rho(n)$ with $\Phi(n) = \Phi \rho(n)$, such that

$$\Phi \rho(n_i) = \ln\left(1 + \frac{1}{n_i}\right) \tag{14}$$

The relative distribution of digits in equilibrium is

$$\varphi(n_i) = \frac{\Phi \rho(n_i)}{\sum_{i=1}^{9} \Phi \rho(n_i)} = \frac{\rho(n_i)}{\sum_{i=1}^{9} \rho(n_i)} \tag{15}$$

From Eq. (15) it is seen that $\Phi$ disappears altogether, similarly to the way the normalized entropy is independent of the frequency and temperature and became information in the high occupation limit. Since $\sum_{i=1}^{9} \Phi \rho(n_i) = \sum_{i=1}^{9} \ln(1 + 1/n_i) = \ln(10)$, therefore

$$\varphi(n_i) = \log_{10}\left(1 + \frac{1}{n_i}\right) \tag{16}$$

Eq. (16) is the Benford's law [16,17,18] that was found empirically in many statistical ensembles of digits that originate from natural sources and are not produced by artificial randomizers.

Fig. 2. The Benford's law gives the probabilities of the digits in many numerical data files.

It is worth noting that with the cancellation of $\Phi$ we see that the normalized distribution function is independent not only of the temperature that was chosen arbitrarily in Eq. (13), but also of the energy of the boson. Moreover, it is free of any physical variable and/or physical parameter.

IV-e. Energy distribution – Power-law

For a canonic ensemble, the energy distribution obtained from the Bose-Einstein statistics is $n_i = e^{-h\nu_i / k_B T}$. The Bose-Einstein distribution gives us the number of photons for any ratio $\Phi_i = h\nu_i / k_B T$. $\Phi$ may be viewed as the relative energy of a photon with respect to the average energy.
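How the occupation number depends on the relative energy can be explored numerically by estimating the local slope of $\ln n$ against $\ln \Phi$ for the Bose-Einstein distribution. This is a minimal sketch; the finite-difference step and the sample values of $\Phi$ are arbitrary illustrative choices, and the function names are hypothetical.

```python
import math

def occupation_number(phi):
    """Bose-Einstein occupation number n = 1 / (exp(phi) - 1)."""
    return 1.0 / math.expm1(phi)

def log_log_slope(phi, rel_step=1e-4):
    """Central-difference estimate of d ln(n) / d ln(phi)."""
    upper = math.log(occupation_number(phi * (1.0 + rel_step)))
    lower = math.log(occupation_number(phi * (1.0 - rel_step)))
    return (upper - lower) / (math.log(1.0 + rel_step) - math.log(1.0 - rel_step))

for phi in (0.001, 0.01, 0.1, 1.0, 10.0):
    print(phi, occupation_number(phi), log_log_slope(phi))
```

For small $\Phi$ the slope stays close to $-1$, which is a straight line on a log-log plot; for $\Phi$ well above 1 the slope becomes steeper and steeper, which is the exponential cut-off of the canonic regime.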
Therefore, the occupation number $n$ of all the modes having the same temperature $T$ (in equilibrium) is given by

$$n_i = \frac{1}{e^{h\nu_i / k_B T} - 1} \tag{17}$$

The setup that Eq. (17) describes is the same one as in Fig. 1, but without the spectral filter; therefore all frequencies are transmitted. Eq. (17) can be rewritten as $\Phi_i = \ln(1 + 1/n_i)$. In the high occupation number regime we expand the logarithm around 1 and obtain $\ln(1 + 1/n_i) \approx 1/n_i$, or $\ln \Phi_i \approx -\ln n_i$; therefore $\partial \ln n / \partial \ln \Phi \approx -1$. In Fig. 3 a plot of $\ln n_i$ vs. $\ln \Phi_i$ is shown. In the classical regime a power-law-like distribution is obtained, and moreover, the exponential truncation in the quantum regime appears. It is worth noting that the only assumption behind this curve is equilibrium, namely, that all modes are at the same temperature [19].

Fig. 3. A log-log plot of the occupation number versus the relative boson energy.

Since many phenomena that are related to natural processes exhibit the power-law distribution [20], it attracts considerable attention. To mention a few: the frequency of use of words, the number of hits of web sites, the copies of books sold, the population of cities, etc. The slopes obtained from the empirical power-law distributions are around –2. This slope is obtained if we plot the log of the electric field $E$ instead of the log of the energy in the Bose-Einstein distribution (as $\Phi \propto \nu \propto \varepsilon \propto E^2$). This is similar to the Gaussian distribution of momentum that is obtained from the exponential distribution of energy in the canonic limit. A connection of the Bose–Einstein statistics to complex networks was discussed previously [21], and the similarity between the mapping of the Bose-Einstein gas and a network model was pointed out. A possible explanation, based on the present theory, for the reason why so many phenomena exhibit a power-law distribution is found in section VI.

V.
Combined Systems

In previous publications [3,4] it was shown that for a classical ensemble the Shannon information is entropy, an amplifier is a Carnot cycle, and broadcasting from one antenna to several antennas is a heat flow from a hot bath to a cold bath. In addition, an informatics perpetuum mobile of the second kind was defined. In this paper it is shown that a classical ensemble has a power-law energy distribution, while in the quantum limit, when $\Phi \gg 1$, the canonic distribution dominates and the regular Gibbs-Boltzmann thermal thermodynamics takes place. Therefore, the Shannon information and the thermal entropy are two faces of the same entropy.

Under what condition will thermal entropy be generated, and under what condition will information be generated? When there are two possible ways to generate entropy in a system, the sum entropy will be the combination of the two that maximizes the total entropy of the system [22]. Hereafter a simple example of such a combined system is considered.

V-a. Hooke's-law Harmonic Oscillator

Consider a Hooke's-law oscillator, at a room temperature $T$, having a spring constant $\kappa$ and an amplitude $A_L$. The total energy of the oscillator is $E_L = \tfrac{1}{2} \kappa A_L^2$. The temperature of this oscillator, from Eq. (5), is $T_L = E_L / k_B$. To increase the energy of the oscillator to a higher value $E_H$, a work $W$ should be applied. The new energy will be

$$E_H = \tfrac{1}{2} \kappa A_H^2 = k_B T_H \le E_L + W$$

The inequality stands for the situation in which the applied work is not in resonance with the frequency of the oscillator, and therefore part of the work is wasted as heat. It is seen that

$$\frac{W}{E_H} \ge 1 - \frac{T_L}{T_H} \tag{18}$$

where the equality holds when no work is wasted. Namely, the efficiency of the amplification of the oscillator is the Carnot efficiency. Increasing the energy of the bits in a file was shown [3,4] to be a classical Carnot cycle, which comprises two isotherms and two adiabats. Here it is shown that single oscillator amplification is also a Carnot cycle.
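The relation between the work of amplification and the two oscillator temperatures can be checked with a few lines of arithmetic. The sketch below assumes the ideal case in which all the applied work ends up in the oscillator (the equality in Eq. (18)); the two energies are arbitrary illustrative values, and the function names are hypothetical.

```python
K_B = 1.380649e-23  # Boltzmann constant in J/K

def oscillator_temperature(energy_joule):
    """Temperature of a single oscillator from Eq. (5): T = E / k_B."""
    return energy_joule / K_B

def minimum_work(energy_low, energy_high):
    """Work needed to raise the oscillator energy when none of it is wasted."""
    return energy_high - energy_low

E_LOW, E_HIGH = 1.0e-6, 4.0e-6  # hypothetical oscillator energies in joules
work = minimum_work(E_LOW, E_HIGH)
t_low = oscillator_temperature(E_LOW)
t_high = oscillator_temperature(E_HIGH)

print(work / E_HIGH, 1.0 - t_low / t_high)
```

Both printed numbers are 0.75 up to rounding: the ratio of the work to the final energy equals $1 - T_L/T_H$, and any work wasted as heat can only raise the left-hand side.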
The Hooke oscillator has a weight of finite mass that affects its frequency. The mass of the weight consists of a large number of particles, and each particle has its own degrees of freedom. Each of these particles carries entropy similar to that of the whole Hooke oscillator, which is a single oscillator. Therefore, the temperature of the Hooke oscillator is much higher than the thermal temperature of the weight, which is at room temperature. The Hooke oscillator temperature is similar to that of antennas [2] (for a typical cellular antenna it was shown to be ~$10^{15}$ K) or a laser (for a 0.7 µm laser with $10^{16}$ photons per mode it is ~$10^{20}$ K) and is of the same order of magnitude, namely ~$10^{20}$ K. Temperatures of this kind are impossible to obtain by heating up a blackbody by conventional means. Nevertheless, they can be obtained by non-thermal, resonance-pumped sources.

Removing energy from the Hooke oscillator does not change its entropy, because it is a harmonic oscillator and therefore has a constant entropy $k_B$. However, dumping the oscillator's energy into a canonic ensemble increases the entropy according to Eq. (4). Therefore, the Hooke oscillator will spontaneously dump its energy into its thermal bath. This example and similar phenomena are responsible for the common intuition that information energy is dumped spontaneously into thermal energy. In fact, this is an example of heat flow from a hot harmonic oscillator to a cold thermal bath.

V-b. Information versus Thermal Entropy

Does nature prefer the informatics systems or the thermal canonic systems? This is an interesting question, as we know that our world consists of a mixture of the two. The common intuition, which is based on canonical thermal physics, suggests a pessimistic end to any closed system, namely, a canonic thermal equilibrium (the heat death that was suggested by Kelvin).
The common intuition suggests that informatics is a non-equilibrium phenomenon [23]. Since a file is a sequence of harmonic oscillators, in the end the information's energy will relax into thermal equilibrium exactly as the Hooke oscillator transfers its energy to its bath. However, this is not what the Bose-Einstein statistics suggests. As we see in Fig. 3, there are many more low-energy bosons than high-energy bosons. Eq. (13) suggests that for a given temperature $T$, when $\Phi$ is decreased, $n$ is increased according to

$$\Phi_i = \ln\left(1 + \frac{1}{n_i}\right) \qquad (19)$$

When $\Phi < 1$, a boson has less energy than the average. When $\Phi > 1$, a boson has more energy than the average. Eq. (19) suggests that in equilibrium there are more energy-poor classical bosons than energy-rich (lucky) canonic bosons.

Blackbody radiation is a good example of a mixed system. The number of modes in the volume of a blackbody increases with the cube of the frequency; the wavelength of the light is limited by the diameter of the blackbody. Therefore the occupation number decreases with the frequency according to Eq. (17). The result is the familiar blackbody radiation spectrum curve, which gives a similar amount of energy to the poor photons and to the rich photons. Therefore in blackbody emission, the number of the poor photons is much higher than that of the rich photons.

VI. Summary and Discussion

Based on a toy model, it is shown that the Bose-Einstein distribution, in the quantum limit, yields the regular canonic thermodynamics. In the high occupation limit, the harmonic oscillator statistics replaces the canonic statistics. The harmonic oscillator statistics differs in several aspects from the canonic statistics, as is shown in Table 1. An important feature of this statistics is that the normalized thermodynamic functions, like the entropy and the particle distribution, do not contain physical quantities.
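The relation of Eq. (19) between the relative energy and the occupation number can be tabulated directly. The following is a minimal sketch, assuming $\Phi = h\nu / k_B T$ so that Eq. (17) reads $n = 1/(e^{\Phi} - 1)$; the function names and the sample values of $\Phi$ are illustrative choices. It estimates the local log-log slope $\partial \ln n / \partial \ln \Phi$ numerically.

```python
import math


def occupation(phi):
    """Bose-Einstein occupation number n = 1 / (exp(phi) - 1)."""
    return 1.0 / math.expm1(phi)


def relative_energy(n):
    """Inverse relation, Eq. (19): phi = ln(1 + 1/n)."""
    return math.log1p(1.0 / n)


def loglog_slope(phi, rel_step=1e-4):
    """Central-difference estimate of d ln(n) / d ln(phi)."""
    up = phi * (1.0 + rel_step)
    down = phi * (1.0 - rel_step)
    d_ln_n = math.log(occupation(up)) - math.log(occupation(down))
    d_ln_phi = math.log(up) - math.log(down)
    return d_ln_n / d_ln_phi


for phi in (1e-3, 1e-2, 1e-1, 1.0, 5.0, 10.0):
    print(f"phi = {phi:7.3f}   n = {occupation(phi):12.5e}   "
          f"slope = {loglog_slope(phi):8.3f}")
```

For small $\Phi$ (high occupation) the slope stays close to -1, the power-law-like regime, while for large $\Phi$ it becomes steeper roughly in proportion to $\Phi$, which is the exponential truncation of the canonic regime.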
In the canonic entropy the exponential term does not cancel out in the normalization process. Therefore, the canonic entropy is a function of the temperature and the frequency. Any fluctuation of the energy and/or the temperature affects its magnitude. In the high occupation limit entropy, all the physical variables and parameters disappear and we obtain the Shannon information. Therefore, the entropy is not sensitive to any fluctuation in the occupation number, the source temperature and/or the frequency. It is not even sensitive to the number of modes in a bit. This property of the entropy, in the high occupation limit, makes it appropriate to convey data.

| | High Occupation, $n \gg 1$ | Canonic, $n \ll 1$ |
|---|---|---|
| Temperature | $T = \dfrac{n h \nu}{k_B}$ | $T = -\dfrac{h \nu}{k_B \ln n}$ |
| Equilibrium | $p = 1/2$ | $\dfrac{p}{1 - p} = e^{-h\nu / k_B T}$ |
| Average mode entropy | $S = k_B \ln 2$ | $S = \dfrac{h\nu}{T} e^{-h\nu / k_B T}$ |
| Energy distribution | Power-law | Exponential |
| Carnot cycle | Amplifier | Heat engine |

Table 1 The thermodynamic properties of the Bose-Einstein gas in equilibrium at temperature $T$ for photons (with zero chemical potential) for the classical and the canonic distribution. $p$ is the probability of the energetic modes and $n$ is the occupation number.

The logical quantities, in the high occupation limit, are therefore applicable to many phenomena of our life. The Bose-Einstein distribution of photons is a simple combinatorics of states and particles without interactions, as the chemical potential $\mu = 0$. The only constraint encapsulated in it is the quantization. Namely, it is possible to add or to remove energy from any mode only in an integer amount of some undivided particle (a quantum). As is seen in Eq. (15), when we keep the quantum size fixed (a constant frequency) and we also assume equilibrium (equal temperature for all the modes), then the normalized distribution of the photons is not a function of the energy, the frequency, the average energy or the temperature.
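The two temperature expressions of Table 1 can be compared with the exact inversion of Eq. (17), $T = h\nu / (k_B \ln(1 + 1/n))$. The sketch below is a numerical check only; the function names are illustrative, the physical constants are the standard SI values, and the laser parameters (0.7 µm wavelength, $10^{16}$ photons per mode) are the ones quoted in Section V-a.

```python
import math

H = 6.62607015e-34   # Planck constant, J*s
K_B = 1.380649e-23   # Boltzmann constant, J/K
C = 299792458.0      # speed of light, m/s


def temperature_exact(n, nu):
    """Invert Eq. (17): T = h*nu / (k_B * ln(1 + 1/n))."""
    return H * nu / (K_B * math.log1p(1.0 / n))


def temperature_high_occupation(n, nu):
    """Table 1, n >> 1: T = n*h*nu / k_B."""
    return n * H * nu / K_B


def temperature_canonic(n, nu):
    """Table 1, n << 1: T = -h*nu / (k_B * ln n)."""
    return -H * nu / (K_B * math.log(n))


nu_laser = C / 0.7e-6   # frequency of a 0.7 micrometre laser, Hz

t_laser = temperature_high_occupation(1e16, nu_laser)
print(f"laser, n = 1e16: T = {t_laser:.3e} K "
      f"(exact: {temperature_exact(1e16, nu_laser):.3e} K)")

t_dilute = temperature_canonic(1e-6, nu_laser)
print(f"dilute, n = 1e-6: T = {t_dilute:.3e} K "
      f"(exact: {temperature_exact(1e-6, nu_laser):.3e} K)")
```

Under these assumptions the laser temperature comes out at a few times $10^{20}$ K, the order of magnitude stated in the text, and each limiting formula agrees closely with the exact expression in its own regime.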
The physics fades away, and we remain with a statistical system of inert quanta. Systems like these are very common in life. Consider the distribution of the population of cities. Each city may be considered as a mode. When we count the number of people in a city, the people are, by definition, indistinguishable. Since the number of people is quantized, this system is identical to that of Eq. (15). Similarly, the number of books sold in a certain period of time is a system homologous to that of the population of cities. In this case the number of titles is the number of modes and a single copy sold is a quantum. The number of hits on the Internet is also a system of this kind, as the number of sites is the number of modes and a hit is a quantum.

In the derivation of Benford's law, Eq. (14) was used, namely

$$\rho(n_i)\,\Phi = \ln\left(1 + \frac{1}{n_i}\right)$$

This equation yields a slope of -1. In the normalization process $\Phi$ disappears. A slope of -2 is obtained if we substitute $\psi^2(n) = \rho(n)$, in phenomenological analogy to the substitution of momentum for energy in canonic exponential distributions to obtain the Gaussian distribution.

The present model does not consider any interactions between the quantized particles. Nevertheless, interactions do exist. If we consider, for example, the distribution of hits among the sites on the Internet, it is obvious that there are interactions between the visitors of the sites. The interactions might be advertisements by the sites and/or viral spread of recommendations by the visitors. So why is a somewhat oversimplified model without interactions so effective? A possible explanation is that the distribution of the hits is independent of the interactions, while the specific rank of a certain site does depend on the interactions. Namely, the interactions are responsible only for the location of a specific site in the distribution.
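The statement that $\Phi$ disappears in the normalization can be checked with a few lines of code. This is a minimal sketch, assuming Eq. (14) is read as $\rho(n) \propto \ln(1 + 1/n) / \Phi$; the function name and the cutoff `n_max` are illustrative. Normalizing over $n = 1, \dots, 9$ reproduces Benford's first-digit law $\log_{10}(1 + 1/d)$, because the sum of $\ln(1 + 1/n)$ telescopes to $\ln 10$.

```python
import math


def normalized_distribution(n_max, phi=1.0):
    """Normalize rho(n) = ln(1 + 1/n) / phi over n = 1..n_max."""
    weights = [math.log1p(1.0 / n) / phi for n in range(1, n_max + 1)]
    total = sum(weights)
    return [w / total for w in weights]


# Benford's first-digit probabilities for comparison.
benford = [math.log10(1.0 + 1.0 / d) for d in range(1, 10)]

digits = normalized_distribution(9)
for d, (p_model, p_benford) in enumerate(zip(digits, benford), start=1):
    print(f"digit {d}: model = {p_model:.5f}   Benford = {p_benford:.5f}")

# The value of phi has no effect on the normalized result.
same = normalized_distribution(9, phi=0.3)
other = normalized_distribution(9, phi=2.5)
print(max(abs(a - b) for a, b in zip(same, other)))
```

Whatever value is chosen for `phi`, the normalized probabilities are the same, which is the sense in which the normalized distribution carries no physical quantities.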
If that is true, removing several popular sites will not change the normalized distribution. Other sites will take the place of the removed sites and the distribution will reach equilibrium again. Indeed, this is what is seen in almost any economic system, namely "there is no empty space". Unlike the derivation of Benford's law, the present model does not pretend to be a complete solution to the power-law distribution in social systems. Nevertheless, it is argued that extensive equilibrium thermodynamics may predict the qualitative behavior of social systems.

Another notable property of the logical equilibrium is the quenched randomness. For the receiver, a random file is content. However, within the context of IT, a random file, which is a compressed file, is an ensemble of harmonic oscillators in equilibrium, as is seen in Eq. (12). An outcome of this conclusion is that files should have a tendency to be compressed spontaneously. Aside from the natural spontaneous noise, we are obsessed with compression. In IT we compress files for economic reasons. However, an observer in space sees that most of the transmitted files on earth are compressed. This observer will rightly conclude that files have a tendency to be compressed. Our tendency to compress is seen also in art. We find ourselves impressed by an artist who can express a complex feeling with a few words, or by a painter who can represent a detailed picture with a few lines and colors. The artistic kind of compression is known in IT as lossy compression and is very popular in multimedia technology. Language is a most powerful compressor; sometimes the amount of information in a short sentence is enormous, considering the fact that it contains just a few bytes. A notable example is mathematics, which enables us to write relatively short formulas that describe complex logical processes.
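The remark that, within IT, a random file behaves like an already compressed file can be illustrated with a general-purpose compressor. The snippet below is only an illustration: it uses Python's standard `zlib` module as a stand-in for "compression", a hypothetical repetitive sample text, and the byte-histogram Shannon entropy as a rough measure of randomness. None of these choices come from the paper.

```python
import math
import os
import zlib
from collections import Counter


def byte_entropy(data):
    """Shannon entropy of the byte histogram, in bits per byte (max 8)."""
    counts = Counter(data)
    total = len(data)
    return -sum(c / total * math.log2(c / total) for c in counts.values())


# Hypothetical sample data: a highly redundant text and random bytes
# of the same length.
redundant = b"the second law of thermodynamics " * 2000
random_bytes = os.urandom(len(redundant))

packed = zlib.compress(redundant, 9)
repacked = zlib.compress(random_bytes, 9)

print(f"redundant: {len(redundant)} -> {len(packed)} bytes, "
      f"entropy {byte_entropy(redundant):.2f} bits/byte")
print(f"random:    {len(random_bytes)} -> {len(repacked)} bytes, "
      f"entropy {byte_entropy(random_bytes):.2f} bits/byte")
```

The redundant text shrinks by a large factor, while the random bytes, whose histogram entropy is already close to the 8 bits per byte maximum, cannot be reduced further.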
It is possible that our tendency for symbolism and mathematics is the natural tendency toward equilibrium.

The last issue, and the most intriguing one, is how the tendency of information to increase affects life. Conventional canonic thermodynamics explains how we decompose chemical compounds in order to produce mechanical work and heat, to enable our body to function properly. This paper suggests that we also want to increase information. The increase of information can be done by reproduction and by broadcasting. It is clear that the present evolution theories are in full agreement with the present theory [22]. The only modification required is that reproduction and evolution are spontaneous processes. It was shown previously [2,3] that information is multiplied in broadcasting. Therefore, it is not surprising that we are obsessed with a desire to broadcast ourselves. When Bob broadcasts a file with $I$ bits to $N$ receivers, he will increase the information by $NI$. A receiver will increase the information by $I$ bits. Therefore, it is better, thermodynamically, to broadcast than to receive.

It is an observable fact that information and life in their various forms increase with time; therefore, it is plausible that, aside from the chemistry necessary for existence, life means reproduction and compressed communication.

Acknowledgments: I thank J. Agassi, Y. B. Band, Y. Kafri, H. Kafri, the late O. Meir and R. D. Levine for many discussions throughout this work.

References

1. P. L. Simeonov, "A post Newtonian View into the Logos of Bios", arxiv:0703,002
2. O. Kafri, "Information and Thermodynamics", arxiv:0602,023
3. O. Kafri, "The second Law and Informatics", arxiv:0701,016
4. O. Kafri, "Informatics Carnot Cycle", arxiv:0705,2535
5. L. Brillouin, "The Negentropy Principle of Information", J. App. Phys. 24, 1152 (1953)
6. L. Brillouin, Science and Information Theory, Academic Press, NY, pp 161 (1962)
7. H. W. Woolhouse, "Negentropy, Information and the Feeding of Organisms", Nature 952 (1967)
8. C. E. Shannon, "A Mathematical Theory of Communication" (University of Illinois Press, Evanston, Ill., 1949)
9. E. T. Jaynes, "Gibbs vs. Boltzmann Entropies", Amer. J. Phys. 33, 391 (1965)
10. E. T. Jaynes, "Information Theory and Statistical Mechanics I", Phys. Rev. A 106, 620 (1957)
11. E. T. Jaynes, "Information Theory and Statistical Mechanics II", Phys. Rev. A 108, 171 (1957)
12. E. T. Jaynes, "The Evolution of Carnot's Principle", in Maximum-Entropy and Bayesian Methods in Science and Engineering, 1, G. J. Erickson and C. R. Smith eds., Kluwer, Dordrecht, pp 267 (1988)
13. J. Kestin, ed., "The Second Law of Thermodynamics", Dowden, Hutchinson and Ross, Stroudsburg, pp 312 (1976)
14. N. Gershenfeld, "The Physics of Information Technology", Cambridge University Press, pp 143 (2000)
15. K. Huang, "Statistical Mechanics" (John Wiley, New York), pp. 68 (1987)
16. T. P. Hill, "A statistical derivation of the significant-digit law", Statistical Science 10, 354 (1996)
17. F. Benford, "The law of anomalous numbers", Proc. Amer. Phil. Soc. 78, 551 (1938)
18. T. P. Hill, "The first digit phenomenon", American Scientist 4, 358 (1986)
19. H. M. Gupta et al., "Power-law distribution for the citation index of scientific publications and Scientists", Braz. J. Phys. 35, 4 (2005)
20. M. E. Newman, "Power-law, Pareto Distribution and Zipf's law", arxiv:0412,00421
21. G. Bianconi and A. L. Barabasi, "Bose-Einstein Condensation in Complex Networks", Phys. Rev. Letters 86, 5632 (2001)
22. R. Dawkins, "The Selfish Gene", Oxford University Press (1976)
23. X. Xiu-San, "Spontaneous Entropy Decrease and its Statistical Physics", arxiv:0710,4624
