Dualities Between Entropy Functions and Network Codes

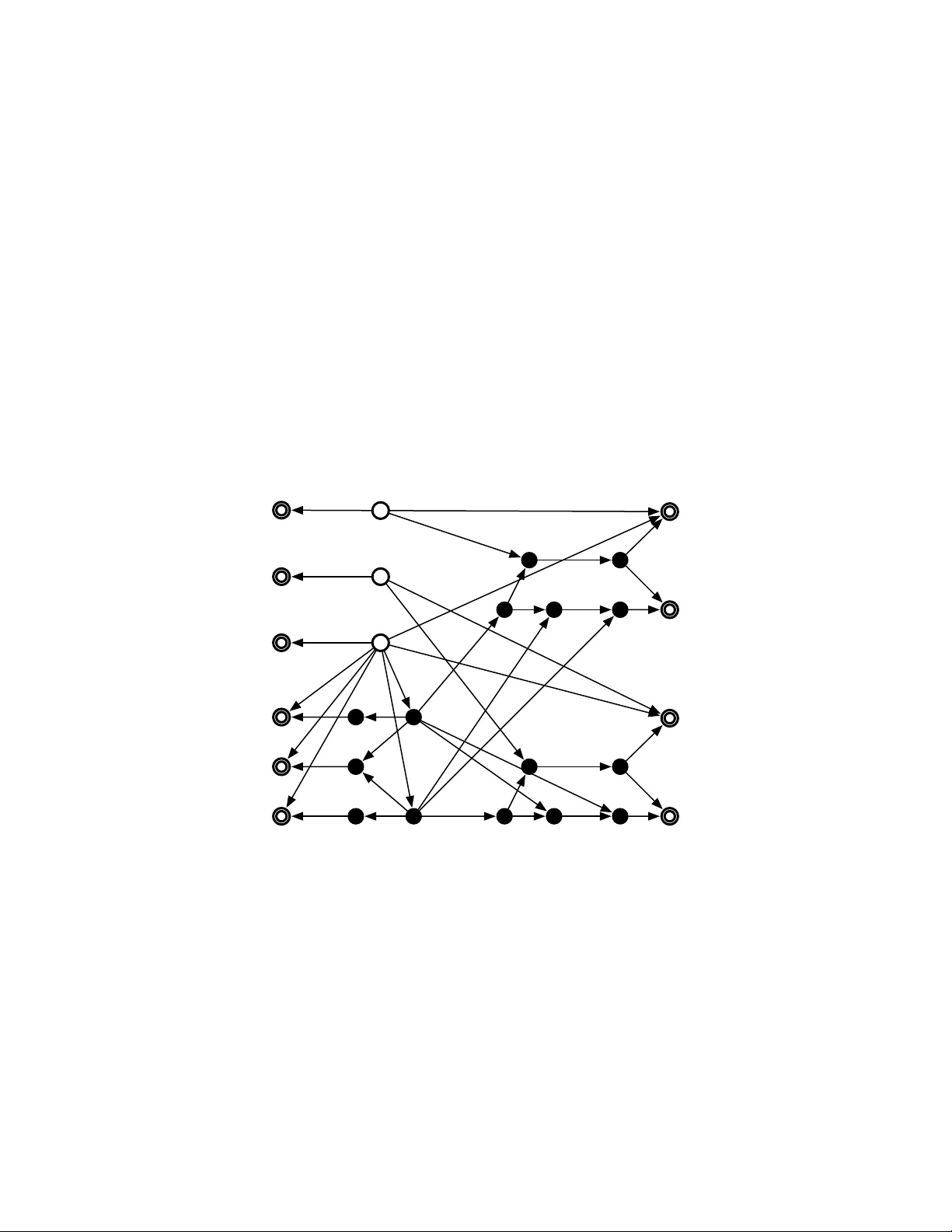

This paper provides a new duality between entropy functions and network codes. Given a function $g\geq 0$ defined on all proper subsets of $N$ random variables, we provide a construction for a network multicast problem which is solvable if and only i…

Authors: Terence Chan, Alex Grant