Type-IV DCT, DST, and MDCT algorithms with reduced numbers of arithmetic operations

We present algorithms for the type-IV discrete cosine transform (DCT-IV) and discrete sine transform (DST-IV), as well as for the modified discrete cosine transform (MDCT) and its inverse, that achieve a lower count of real multiplications and additions than previously published algorithms, without sacrificing numerical accuracy. Asymptotically, the operation count is reduced from ~2NlogN to ~(17/9)NlogN for a power-of-two transform size N, and the exact count is strictly lowered for all N > 4. These results are derived by considering the DCT to be a special case of a DFT of length 8N, with certain symmetries, and then pruning redundant operations from a recent improved fast Fourier transform algorithm (based on a recursive rescaling of the conjugate-pair split radix algorithm). The improved algorithms for DST-IV and MDCT follow immediately from the improved count for the DCT-IV.

💡 Research Summary

The paper presents a suite of fast algorithms for the type‑IV discrete cosine transform (DCT‑IV), its sine‑type counterpart (DST‑IV), and the modified discrete cosine transform (MDCT) together with its inverse (IMDCT). By leveraging a recently developed fast Fourier transform (FFT) that improves upon the classic split‑radix method through recursive scaling of the conjugate‑pair split‑radix algorithm, the authors achieve a substantial reduction in the number of real multiplications and additions required for these transforms.

The key insight is that a DCT‑IV of length N can be expressed as a special case of a real‑valued DFT of length 8N with a particular even‑odd symmetry and zero‑interleaving. When the new scaled FFT is applied to this 8N‑point DFT, many operations become redundant: the even‑indexed sub‑FFT (Uₖ) is identically zero, and the two remaining quarter‑size sub‑FFTs are complex conjugates of each other. These sub‑FFTs can be identified as scaled versions of a DCT‑III and a DST‑III of size N/2. By developing a scaled‑output DCT‑III algorithm that incorporates the same scaling factors used in the FFT, the authors eliminate the extra multiplications that would otherwise be needed to undo the scaling. The result is an exact flop count of

(17/9) N log₂ N + (31/27) N + (2/9)(−1)^{log₂ N}

for the DCT‑IV, which is strictly lower than the previously best known count of 2 N log₂ N + N for all N ≥ 8. The asymptotic constant drops from 2 to 17/9 (≈0.944), a reduction of roughly 6 %.

Because DST‑IV can be obtained from DCT‑IV by simply flipping the sign of every other input sample (a zero‑cost operation) and permuting the output, the same flop count applies to DST‑IV. The MDCT, which processes 2N input samples and produces N output samples using a 50 % overlap, is mathematically equivalent to a DCT‑IV preceded by N pre‑add/subtract operations. Consequently, the MDCT (and its inverse) inherit the DCT‑IV count plus an additional N flops, yielding a total of (17/9) N log₂ N + O(N).

Beyond operation counts, the paper discusses numerical accuracy. The underlying scaled FFT retains the favorable error characteristics of the classic Cooley‑Tukey algorithm: an average error growth proportional to √log N and a worst‑case bound of O(log N). This contrasts with earlier DCT‑IV implementations that exhibited O(√N) error growth, confirming that the new algorithms are both faster and numerically stable.

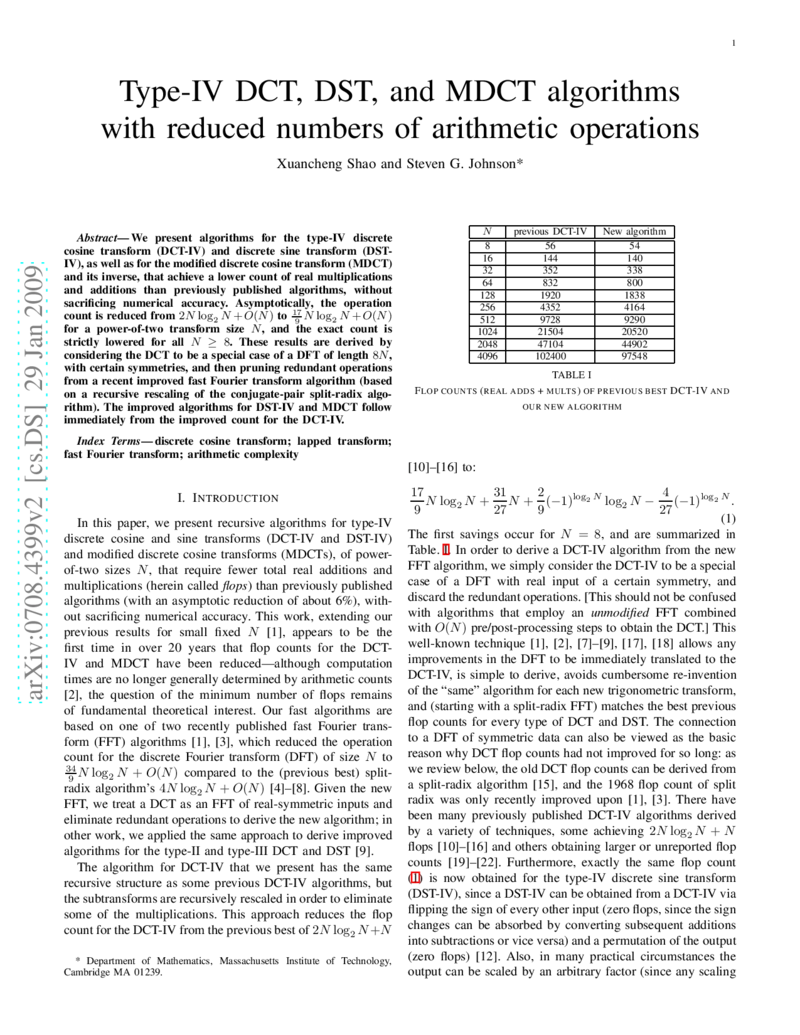

Experimental data (Table 1) show concrete flop reductions for power‑of‑two sizes from N = 8 up to N = 4096, with the first savings appearing at N = 8 and the reduction persisting for all larger sizes. For N = 1024, the new algorithm saves about 6 % of the arithmetic operations compared with the best prior method.

In summary, the authors have demonstrated a systematic pathway: improve the FFT → prune the DFT representation of DCT‑IV → derive a scaled DCT‑III → obtain reduced‑complexity DCT‑IV, DST‑IV, MDCT, and IMDCT. This unified approach not only lowers the theoretical operation count but also provides practical benefits for real‑time audio codecs, image compression, and other signal‑processing applications where computational resources are constrained. Future work suggested includes power‑aware hardware implementations, pipeline optimizations, and extending the analysis to fixed‑point arithmetic environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment