Side-information Scalable Source Coding

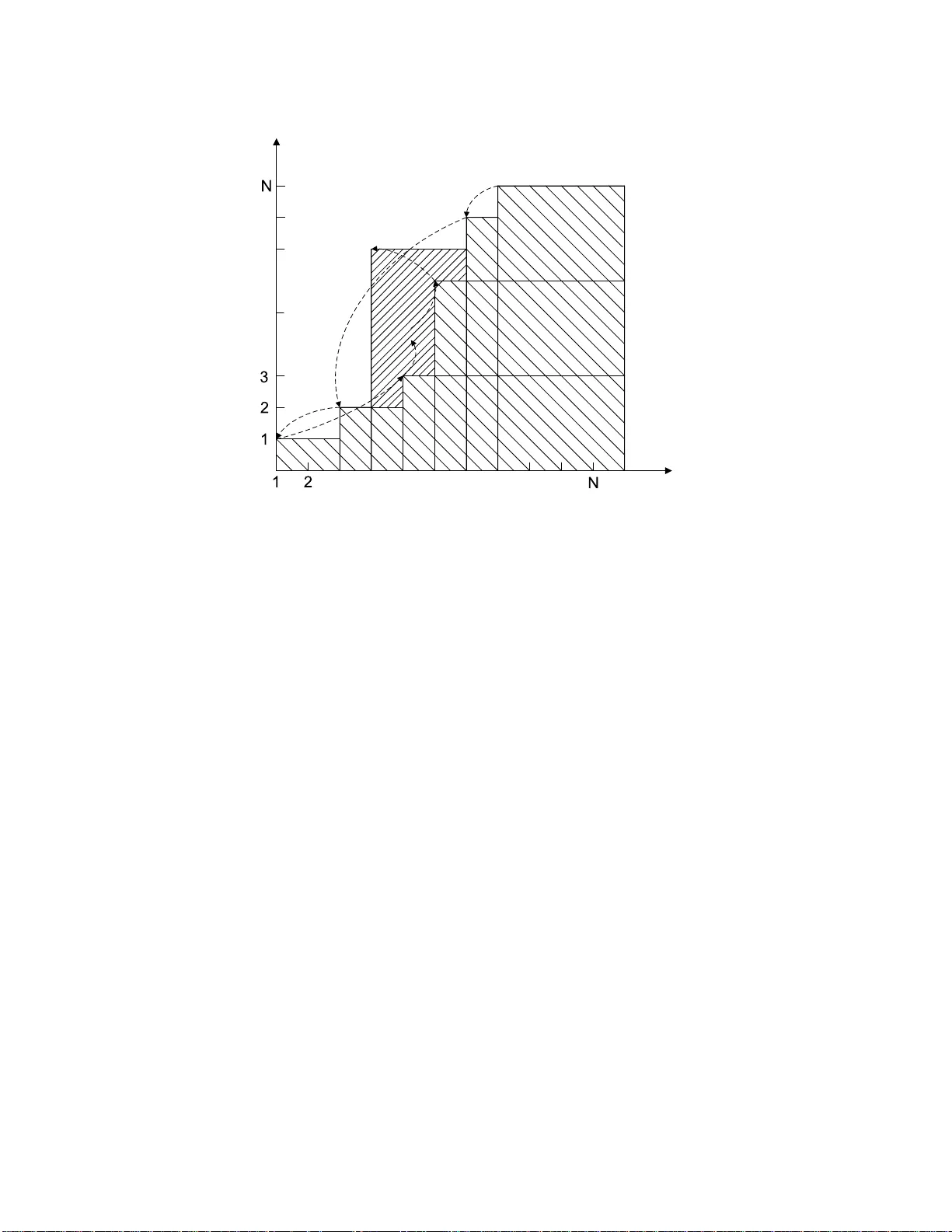

This work considers the problem of side-information scalable (SI-scalable) source coding, in which the encoder constructs a progressive description such that a receiver with high-quality side information can truncate the bitstream …

Authors: **Chao Tian, Suhas N. Diggavi**