Subjective Information Measure and Rate Fidelity Theory

Using a fish-covering model, this paper intuitively explains how to extend Hartley's information formula, step by step, to the generalized information formula for measuring subjective information: metrical information (such as that conveyed by thermometers), …

Authors: Chenguang Lu

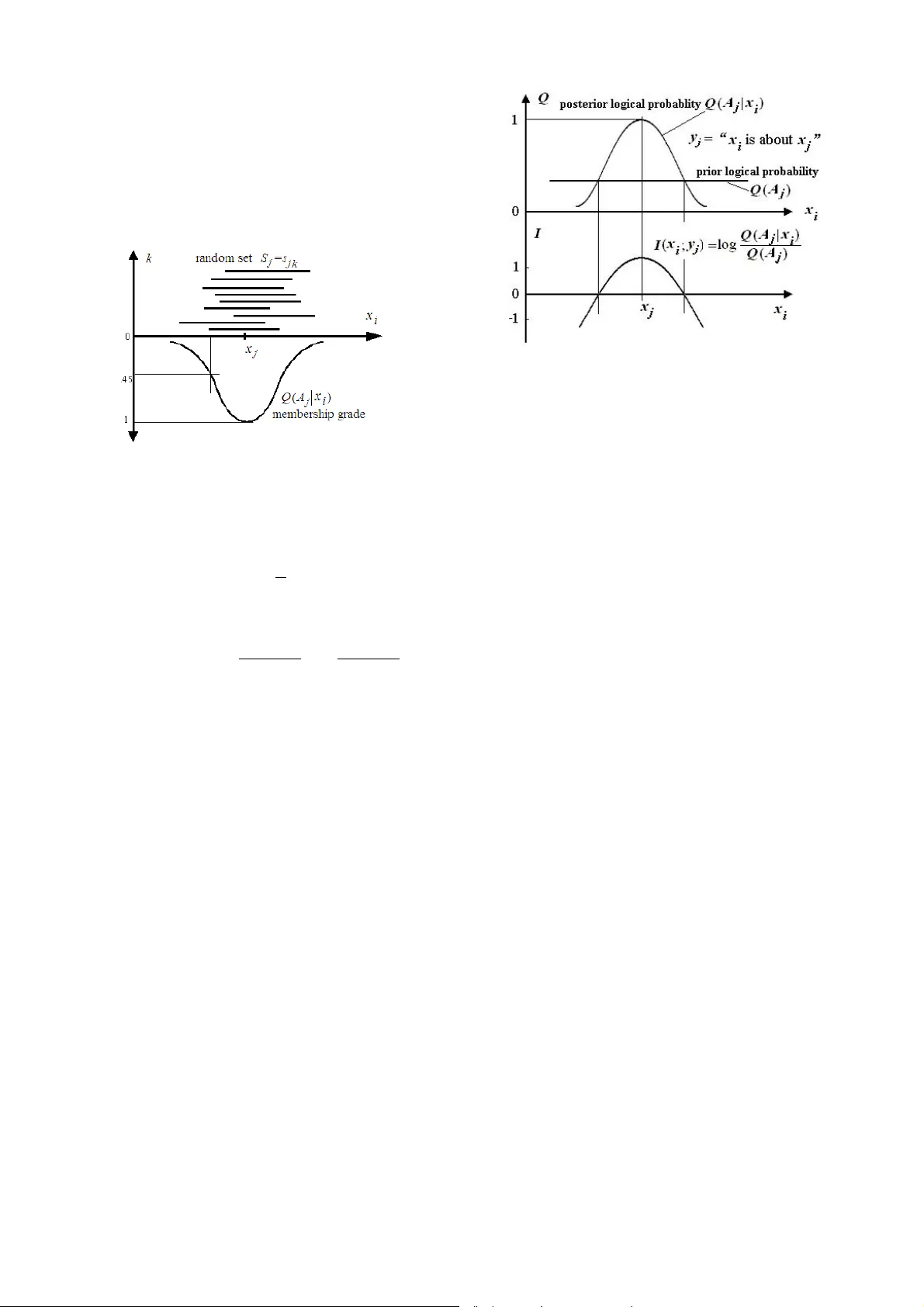

Chenguang Lu ①, Independent Researcher, Survival99@hotmail.com

Abstract: Using a fish-covering model, this paper intuitively explains how to extend Hartley's information formula, step by step, to the generalized information formula for measuring subjective information: metrical information (such as that conveyed by thermometers), sensory information (such as that conveyed by color vision), and semantic information (such as that conveyed by weather forecasts). The pivotal step is to differentiate the conditional probability of a message from its logical conditional probability. The paper illustrates the rationality of the formula and discusses the coherence between the generalized information formula and Popper's theory of knowledge evolution. For optimizing data compression, the paper discusses the rate-of-limiting-errors and its similarity to complexity-distortion based on Kolmogorov's complexity theory, and improves the rate-distortion theory into the rate-fidelity theory by replacing Shannon's distortion with subjective mutual information. It is proved that both the rate-distortion function and the rate-fidelity function are equivalent to a rate-of-limiting-errors function with a group of fuzzy sets as the limiting condition, and can be expressed by a formula of generalized mutual information for lossy coding, or by a formula of generalized entropy for lossless coding. By analyzing the rate-fidelity function related to visual discrimination and the digitized bits of image pixels, the paper concludes that subjective information is less than or equal to objective (Shannon's) information; that there is an optimal matching point at which the two kinds of information are equal; that the matching information increases as visual discrimination (defined by confusing probability) rises; and that, for given visual discrimination, too high a resolution of images or too much objective information is wasteful.

I. INTRODUCTION

To measure sensory information and semantic information, I set up a generalized information theory thirteen years ago [4-8] and published a monograph focusing on this theory in 1993 [5]. However, my research is still little known among English-speaking researchers in information theory. Recently, I read some papers on complexity-distortion theory [2], [9] based on Kolmogorov's complexity theory. I found that I had in fact already discussed the complexity-distortion function, proved that the generalized entropy in my theory is just such a function, and concluded that the complexity-distortion function with size-unequal fuzzy error-limiting balls can be expressed by a formula of generalized mutual information. I also found that some researchers have made efforts [9] similar to mine to improve Shannon's rate-distortion theory.

This paper first explains how to extend Hartley's information formula to the generalized information formula, and then discusses the generalized mutual information and some questions related to Popper's theory, complexity-distortion theory, and rate-distortion theory.

II. HARTLEY'S INFORMATION FORMULA AND A STORY OF COVERING FISH

Hartley's information formula is [3]

$$I = \log N, \tag{1}$$

where $I$ denotes the information conveyed by the occurrence of one of $N$ events with equal probability. If a message $y$ tells us that the uncertain extension changes from $N_1$ to $N_2$, then the information conveyed by $y$ is

$$I_r = I_1 - I_2 = \log N_1 - \log N_2 = \log(N_1/N_2). \tag{2}$$

We call (2) the relative information formula. Before discussing its properties, let us hear a story about covering fish with fish covers.

Figure 1: Fish-covering model for relative information $I_r$. Fish covers are made of bamboo. A fish cover looks like a hemisphere with a round hole at the top through which a hand can reach in to catch fish. Fish covers are suitable for catching fish in adlittoral ponds.
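Formula (2) is easy to check numerically. The following is only a minimal sketch: the function name `relative_information` is hypothetical, and the base-2 logarithm (information in bits) is an assumption, since the text does not fix the base of the logarithm.

```python
import math

def relative_information(n1: float, n2: float) -> float:
    """Relative information I_r = log(N1 / N2) of formula (2), in bits.

    Assumption: base-2 logarithm; the paper leaves the base open.
    """
    if n1 <= 0 or n2 <= 0:
        raise ValueError("N1 and N2 must be positive")
    return math.log2(n1 / n2)

# Hypothetical numbers: a message narrows 64 equally likely cases down to 8.
narrowed = relative_information(64, 8)
# A message that leaves the extension unchanged conveys nothing.
unchanged = relative_information(64, 64)
print(narrowed, unchanged)
```

The second call illustrates the limiting case $N_2 = N_1$, in which the message conveys no information.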
When I was a teenager, after watching peasants catch fish with fish covers, I decided to do the same thing. I found a basket with a hole in the bottom and followed those peasants to catch fish. Fortunately, I caught some fish, but not as many as the peasants did. Then I compared my smaller basket with the much bigger fish cover and reached the following conclusions.

The fish cover is bigger, so covering fish is easier; yet catching the fish by hand is more difficult. If a fish cover is big enough to cover the whole pond, it must be able to cover fish. However, such a huge fish cover is useless, because catching fish by hand is then as difficult as without a fish cover. When one uses the basket or a smaller fish cover, covering fish is more difficult, but catching the fish by hand is much easier.

An uncertain event is like a fish with a random position in a pond. Let a sentence $y$ = "Fish is covered"; $y$ conveys information about the position of the fish. Let $N_1$ be the area of the pond and $N_2$ the area covered by the fish cover; then the information conveyed by $y$ is $I_r = \log(N_1/N_2)$. The smaller $N_2$ is relative to $N_1$, the bigger the information amount. This reflects the advantage of the basket. If $N_2 = N_1$, then $I_r = 0$. This tells us that covering fish is meaningless if the cover is as big as the pond.

The above formula cannot show the advantage of fish covers (covering fish with fewer failures) over the basket, because classical information theory seems to assume that the failure of covering fish never happens. The generalized information formula introduced below accounts for "possible failure of covering fish".

III. IMPROVING THE FISH-COVERING FORMULA WITH PROBABILITY

Hartley's information formula requires $N$ events with equal probability $P = 1/N$. Yet the probabilities of events are unequal in general.
For example, the fish stays in deep water with higher probability and in shallow water with lower probability. In such cases, we need to replace $1/N$ with probability $P$ so that

$$I = \log(1/P) \tag{3}$$

and

$$I_r = \log(P_2/P_1). \tag{4}$$

IV. RELATIVE INFORMATION FORMULA WITH A SET AS CONDITION

Let $X$ denote the random variable taking values from the set $A = \{x_1, x_2, \ldots\}$ of events, and $Y$ the random variable taking values from the set $B = \{y_1, y_2, \ldots\}$ of sentences or messages. For each $y_j$ there is a subset $A_j$ of $A$ such that $y_j$ = "$x_i \in A_j$", which can be loosely understood as "Fish $x_i$ is in cover $A_j$". Then $P_1$ above becomes $P(x_i)$, and $P_2$ becomes $P(x_i | x_i \in A_j)$. We simply denote $P(x_i | x_i \in A_j)$ by $P(x_i|A_j)$, which is a conditional probability with a set as condition. Hence the relative information formula becomes

$$I(x_i; y_j) = \log[P(x_i|A_j)/P(x_i)]. \tag{5}$$

For convenience, we call this the fish-covering information formula. Note that, most importantly, in general $P(x_i|A_j) \neq P(x_i|y_j)$, because

$$P(x_i|y_j) = P(x_i \,|\, \text{"}x_i \in A_j\text{"}) = P(x_i \,|\, \text{"}x_i \in A_j\text{" is reported}),$$

yet

$$P(x_i|A_j) = P(x_i \,|\, x_i \in A_j) = P(x_i \,|\, \text{"}x_i \in A_j\text{" is true}),$$

where $y_j$ may be an incorrect reading, a wrong message, or a lie, whereas $x_i \in A_j$ means that $y_j$ must be correct. If they are always equal, then formula (5) becomes the classical information formula

$$I(x_i; y_j) = \log[P(x_i|y_j)/P(x_i)], \tag{6}$$

whose average is just Shannon mutual information [11].

V. BAYESIAN FORMULA FOR THE FISH-COVERING INFORMATION FORMULA

Let the feature function of set $A_j$ be $Q(A_j|x_i) \in \{0, 1\}$. According to the Bayesian formula,

$$P(x_i|A_j) = Q(A_j|x_i)\,P(x_i)/Q(A_j), \tag{7}$$

where

$$Q(A_j) = \sum_i P(x_i)\,Q(A_j|x_i).$$
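Formulas (5) and (7) can be traced through a small example. This is a minimal sketch under stated assumptions: the five-position pond, its prior, the cover, and the function names are all hypothetical, and base-2 logarithms are assumed.

```python
import math

def set_conditional(prior, member):
    """Return (P(x_i | A_j) for all i, Q(A_j)) using formula (7).

    prior[i]  = P(x_i)
    member[i] = Q(A_j | x_i), 1 if x_i lies in the crisp set A_j, else 0
    """
    q_a = sum(p * m for p, m in zip(prior, member))      # Q(A_j)
    return [p * m / q_a for p, m in zip(prior, member)], q_a

def fish_covering_information(prior, member, i):
    """I(x_i; y_j) = log2[P(x_i | A_j) / P(x_i)], formula (5), in bits."""
    posterior, _ = set_conditional(prior, member)
    if posterior[i] == 0:
        return float("-inf")   # x_i outside the crisp cover
    return math.log2(posterior[i] / prior[i])

# Hypothetical pond with five positions; the fish prefers the middle.
prior = [0.1, 0.2, 0.4, 0.2, 0.1]
cover = [0, 1, 1, 0, 0]        # the cover A_j spans positions 1 and 2

posterior, q_a = set_conditional(prior, cover)
inside = fish_covering_information(prior, cover, 2)
outside = fish_covering_information(prior, cover, 0)
print(posterior, q_a, inside, outside)
```

With a crisp cover, every position inside $A_j$ receives the same information $\log[1/Q(A_j)]$, while a position outside it gives an infinite negative value. A crisp set therefore cannot describe a reading that is only slightly wrong.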
From (5) and (7), we have

$$I(x_i; y_j) = \log\frac{P(x_i|A_j)}{P(x_i)} = \log\frac{Q(A_j|x_i)}{Q(A_j)}, \tag{8}$$

which (illustrated by Figure 2) is the transition from the classical information formula to the generalized information formula.

Figure 2: Illustration of the fish-covering information formula related to the Bayesian formula.

Let us use a thermometer to explain how to use the fish-covering information formula to measure metrical information. The reading of a thermometer may be regarded as a reporting sentence $y_j \in B = \{y_1, y_2, \ldots\}$, and the real temperature as the fish position $x_i \in A = \{x_1, x_2, \ldots\}$. Let $y_j$ = "$x_i \in A_j$" with $A_j = [x_j - \Delta x,\; x_j + \Delta x]$, determined by the resolution of the thermometer and the visual discrimination of the eyes. Hence we can use the fish-covering information formula to measure thermometric information.

VI. GENERALIZED INFORMATION FORMULA WITH A FUZZY SET AS CONDITION

The information conveyed by a thermometer reading and the information conveyed by a forecast such as "The rainfall will be about 10 mm" are the same in essence. Using a clear (crisp) set as condition, as above, is not good enough, because the information amount should change continuously with $x_i$. We wish that the bigger the error (i.e., $x_i - x_j$), the less the information. By replacing the clear set with a fuzzy set as condition, we can achieve this (see Figure 4).

Now we consider $y_j$ to be the sentence "$X$ is $x_j$" (or say $y_j = \hat{x}_j$). For a fuzzy set $A_j$, the feature function $Q(A_j|x_i)$ takes values in $[0, 1]$ and can be regarded as the confusing probability of $x_i$ with $x_j$. If $i = j$, the confusing probability reaches its maximum 1.

Actually, the confusing probability $Q(A_j|x_i)$ is only a different name for the membership grade of $x_i$ in fuzzy set $A_j$, or for the logical probability of proposition $y_j(x_i)$.
There is

$$\begin{aligned} Q(A_j|x_i) &= \text{feature function of } A_j \\ &= \text{confusing probability or similarity of } x_i \text{ with } x_j \\ &= \text{membership grade of } x_i \text{ in } A_j \\ &= \text{logical probability or creditability of proposition } y_j(x_i). \end{aligned}$$

The discrimination of human sense organs, such as the visual discrimination of gray levels of image pixels, can also be described by confusing probability functions. In these cases, a sensation can be regarded as a reading $y_j = \hat{x}_j$ of the thermometer. The visual discrimination function of $x_j$ is $Q(A_j|x_i)$, $i = 1, 2, \ldots$, where $A_j$ is a fuzzy set containing all $x_i$ that are confused with $x_j$. We may use the statistics of random clear sets to obtain this function [13].

Figure 3: Confusing probability function from clear sets.

First, we run many experiments to obtain the clear confusing sets $s_{jk}$, $k = 1, 2, \ldots, n$, by putting $x_j$ on one side of a screen and varying $x_i$ on the other side for the eyes to discern. Then we calculate

$$Q(A_j|x_i) = \frac{1}{n}\sum_k Q(s_{jk}|x_i). \tag{9}$$

Now, by replacing the clear set with a fuzzy set as condition, we get the generalized information formula:

$$I(x_i; y_j) = \log\frac{P(x_i|A_j)}{P(x_i)} = \log\frac{Q(A_j|x_i)}{Q(A_j)}. \tag{10}$$

It looks the same as the fish-covering information formula (8), but $Q(A_j|x_i) \in [0, 1]$ instead of $Q(A_j|x_i) \in \{0, 1\}$. Moreover, this formula allows for wrong readings or messages, bad forecasts, or lies, which convey negative information. The generalized information formula can be understood as the fish-covering information formula with a fuzzy cover. Because of fuzziness, the amount of negative information is generally finite. The property of the formula is illustrated by Figure 4.

Figure 4 tells us that when a reading or a sensation $y_j = \hat{x}_j$ is provided, the bigger the difference between $x_i$ and $x_j$, the less the information; and the smaller $Q(A_j)$ is, the bigger the absolute value of the information.
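The behaviour described for formula (10) can be reproduced with a few lines of code. This is a minimal sketch under stated assumptions: the temperature grid, the uniform prior, and the function names are hypothetical; the Gaussian-shaped confusing probability follows the form the paper adopts later in (22); base-2 logarithms are assumed.

```python
import math

def logical_probability(prior, membership):
    """Q(A_j) = sum_i P(x_i) Q(A_j | x_i)."""
    return sum(p * q for p, q in zip(prior, membership))

def generalized_information(prior, membership, i):
    """I(x_i; y_j) = log2[Q(A_j | x_i) / Q(A_j)], formula (10), in bits."""
    return math.log2(membership[i] / logical_probability(prior, membership))

# Hypothetical setup: 21 equally likely temperatures 0..20, reading x_j = 10,
# and a Gaussian-shaped fuzzy cover with discrimination parameter d = 2.
xs = list(range(21))
prior = [1 / len(xs)] * len(xs)
x_j, d = 10, 2.0
membership = [math.exp(-(x - x_j) ** 2 / (2 * d ** 2)) for x in xs]

info = [generalized_information(prior, membership, i) for i in xs]
for x in (10, 12, 15, 20):
    print(x, round(info[x], 3))
```

The information is largest when the true value coincides with the reading, falls as the error grows, and becomes negative but finite for large errors, in contrast with the crisp-set case.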
From this formula, we can conclude that the information amount depends not only on the correctness of the reflection but also on its precision.

Figure 4: Generalized information formula for measuring metrical information, sensory information, and number-forecasting information.

VII. COHERENCE OF THE SEMANTIC INFORMATION MEASURE AND POPPER'S CRITERION OF ADVANCE OF KNOWLEDGE

The generalized information formula can also be used to measure semantic information in general, such as the information from the weather forecast "Tomorrow will be rainy or heavy rainy". We may assume that for any proposition $y_j$ there is a Platonic idea $x_j$ that makes $Q(A_j|x_j) = 1$. The idea $x_j$ is probably not in $A_j$. Hence, any logical conditional probability $Q(A_j|x_i)$ can be regarded as the confusing probability of $x_i$ with the idea $x_j$.

From my viewpoint, forecasting information is more general than descriptive information. If a forecast is always correct, the forecasting information becomes descriptive information.

About the criterion of advance of scientific theory, philosopher Karl Popper wrote:

"The criterion of relative potential satisfactoriness… characterizes as preferable the theory which tell us more; that is to say, the theory which contains the greater amount of empirical information or content; which is logically strong; which has the greater explanatory and predictive power; and which can therefore be more severely tested by comparing predicted facts with observations. In short, we prefer an interesting, daring, and highly informative theory to a trivial one." (in [10], p. 250)

Clearly, Popper used information as the criterion for evaluating the advance of scientific theories.
According to Popper's theory, the more easily a proposition can be falsified logically and the better it survives the test of facts (in my words, the smaller the prior logical probability $Q(A_j)$ and the bigger the posterior logical probability $Q(A_j|x_i)$), the more information it conveys and the more meaningful it is. Conversely, a proposition that cannot be falsified logically (in my words, $Q(A_j|x_i) = Q(A_j) = 1$) conveys no information and is insignificant in science. Obviously, the generalized information measure is very coherent with Popper's information criterion; the generalized information formula functions as a bridge between Shannon's information theory and Popper's theory of knowledge evolution.

VIII. GENERALIZED KULLBACK'S INFORMATION AND GENERALIZED MUTUAL INFORMATION

Calculating the average of $I(x_i; y_j)$ in (10), we have the generalized Kullback information formula for given $y_j$:

$$I(X; y_j) = \sum_i P(x_i|y_j)\log\frac{P(x_i|A_j)}{P(x_i)}. \tag{11}$$

Actually, the probabilities on the right of the log should be prior probabilities or logical probabilities, and the probability on the left of the log should be the posterior probability. Since we now differentiate the two kinds of probabilities, we use $Q(\cdot)$ for the probabilities after the log. Hence the above formula becomes

$$I(X; y_j) = \sum_i P(x_i|y_j)\log\frac{Q(x_i|A_j)}{Q(x_i)}. \tag{12}$$

We can prove that when $Q(X|A_j) = P(X|y_j)$, which means that the subjective probability forecast conforms to objective statistics, $I(X; y_j)$ reaches its maximum. The more $Q(X)$ differs from $P(X|y_j)$, which means that the facts are more unexpected, the bigger $I(X; y_j)$ is. This formula also conforms to Popper's theory.
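The maximum property of formula (12) can be illustrated numerically. This is a minimal sketch under stated assumptions: all distributions below are hypothetical, the function name is mine, base-2 logarithms are assumed, and the weighting distribution in (12) is taken to be $P(X|y_j)$.

```python
import math

def generalized_kullback(p_post, q_post, q_prior):
    """I(X; y_j) = sum_i P(x_i|y_j) log2[Q(x_i|A_j) / Q(x_i)], formula (12).

    p_post  = P(X | y_j), what actually happens when y_j is sent
    q_post  = Q(X | A_j), what the receiver predicts on hearing y_j
    q_prior = Q(X), the receiver's prior
    """
    return sum(p * math.log2(qa / q)
               for p, qa, q in zip(p_post, q_post, q_prior) if p > 0)

q_prior = [0.25, 0.25, 0.25, 0.25]     # hypothetical prior Q(X)
p_post = [0.70, 0.20, 0.05, 0.05]      # hypothetical statistics P(X | y_j)

matched = generalized_kullback(p_post, p_post, q_prior)
rough = generalized_kullback(p_post, [0.40, 0.30, 0.20, 0.10], q_prior)
wrong = generalized_kullback(p_post, [0.05, 0.05, 0.20, 0.70], q_prior)
print(matched, rough, wrong)
```

A prediction that matches the statistics yields the most information, a rough prediction yields less, and a prediction that points the wrong way yields negative information. The ordering follows from Gibbs' inequality, so it does not depend on the particular numbers chosen here.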
Further, we have the generalized mutual information formula

$$I(X; Y) = \sum_j P(y_j)\,I(X; y_j) = \sum_j\sum_i P(x_i, y_j)\log[Q(x_i|A_j)/Q(x_i)] = H(X) - H(X|Y) = H(Y) - H(Y|X), \tag{13}$$

where

$$H(X) = -\sum_i P(x_i)\log Q(x_i), \tag{14}$$

$$H(X|Y) = -\sum_j\sum_i P(x_i, y_j)\log Q(x_i|A_j), \tag{15}$$

$$H(Y) = -\sum_j P(y_j)\log Q(A_j), \tag{16}$$

$$H(Y|X) = -\sum_j\sum_i P(x_i, y_j)\log Q(A_j|x_i). \tag{17}$$

I call $H(X)$ the forecasting entropy; it reflects the average coding length when we economically encode $X$ according to $Q(X)$ while the real source is $P(X)$, and it reaches its minimum when $Q(X) = P(X)$. I call $H(X|Y)$ the posterior forecasting entropy, $H(Y)$ the generalized entropy, and $H(Y|X)$ the generalized conditional entropy or fuzzy entropy [6].

I think that the generalized information is subjective information and Shannon information is objective information. Suppose two weather forecasters always provide opposite forecasts, one always correct and the other always incorrect. They convey the same objective information but different subjective information. If $Q(X) = P(X)$ and $Q(X|A_j) = P(X|y_j)$ for each $j$, which means that subjective forecasts conform to objective facts, then the subjective mutual information equals the objective mutual information.

IX. RATE-OF-LIMITING-ERRORS AND ITS RELATION TO COMPLEXITY-DISTORTION

In [5], I defined the rate-of-limiting-errors, which is similar to complexity distortion [2]. The difference is that the error-limiting condition for the rate-of-limiting-errors is a group of sets or fuzzy sets $A_J = \{A_1, A_2, \ldots\}$ instead of a group of balls of the same size with clear boundaries, as for complexity distortion. We know that the color space of digital images is visually non-uniform and that the discrimination of human eyes is fuzzy. So in some cases, such as the coding of digital images, using size-unequal balls or fuzzy balls as the limiting condition is more reasonable.
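Returning to the two-forecaster example at the end of Section VIII, the contrast between objective and subjective information can be made concrete. This is a minimal sketch under stated assumptions: the two-state weather source, the fuzzy truth values 1.0 and 0.1, and the function names are all hypothetical; $Q(X) = P(X)$ is assumed; logarithms are base 2.

```python
import math

def shannon_mi(p_x, channel):
    """Shannon mutual information in bits; channel[i][j] = P(y_j | x_i)."""
    n_y = len(channel[0])
    p_y = [sum(px * row[j] for px, row in zip(p_x, channel)) for j in range(n_y)]
    return sum(px * row[j] * math.log2(row[j] / p_y[j])
               for px, row in zip(p_x, channel)
               for j in range(n_y) if px * row[j] > 0)

def generalized_mi(p_x, channel, membership):
    """Formula (13) in the form H(Y) - H(Y|X), assuming Q(X) = P(X).

    membership[i][j] = Q(A_j | x_i), how true the listener takes message
    y_j to be when the fact is x_i.
    """
    n_y = len(channel[0])
    q_a = [sum(px * row[j] for px, row in zip(p_x, membership)) for j in range(n_y)]
    return sum(px * ch[j] * math.log2(mem[j] / q_a[j])
               for px, ch, mem in zip(p_x, channel, membership)
               for j in range(n_y) if px * ch[j] > 0)

p_x = [0.5, 0.5]                           # hypothetical: rain / no rain
membership = [[1.0, 0.1], [0.1, 1.0]]      # hypothetical fuzzy truth values
honest = [[1.0, 0.0], [0.0, 1.0]]          # forecaster who is always correct
liar = [[0.0, 1.0], [1.0, 0.0]]            # forecaster who is always wrong

objective = (shannon_mi(p_x, honest), shannon_mi(p_x, liar))
subjective = (generalized_mi(p_x, honest, membership),
              generalized_mi(p_x, liar, membership))
print(objective, subjective)
```

Both forecasters carry the same Shannon information, because each forecast determines the weather exactly. A listener who takes the forecasts at face value gains information from the first forecaster and loses it to the second.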
Assume $P(Y)$ is a source; encode $Y$ into $X$; allow $y_j$ to be encoded into any $x_i$ in clear set $A_j$, $j = 1, 2, \ldots$; then the minimum of Shannon mutual information over different $P(X|Y)$ is defined as the rate-of-limiting-errors $R(A_J)$. Interestingly, it can be proved that $R(A_J)$ is just equal to the generalized entropy $H(Y)$ [5]. To realize this rate, there must be $P(X|y_j) = Q(X|A_j)$ for each $j$. Furthermore, when the limiting sets are fuzzy, i.e., $P(X|y_j) \le Q(X|A_j)$ for each $j$ as $Q(A_j|x_i) < 1$, there is

$$R(A_J) = \sum_j\sum_i P(y_j)\,Q(x_i|A_j)\log\frac{Q(A_j|x_i)}{Q(A_j)}. \tag{18}$$

To realize this rate, there must be $P(X) = Q(X)$ and $P(X|y_j) = Q(X|A_j)$ for each $j$, so that Shannon's mutual information equals the generalized mutual information.

Now, from the viewpoint of complexity-distortion theory, the generalized entropy $H(Y)$ is just the prior complexity, the fuzzy entropy $H(Y|X)$ is just the posterior complexity, and $I(X; Y)$ is the reduced complexity.

X. RATE FIDELITY THEORY: REFORMED RATE DISTORTION THEORY

Actually, Shannon mentioned a fidelity criterion for lossy coding earlier. He used distortion as the criterion for optimizing lossy coding because the fidelity criterion is hard to formulate. However, distortion is not a good criterion in most cases. How do we value a person? We value him according to not only his errors but also his contributions. For this reason, I replace the error function $d_{ij} = d(x_i, y_j)$ with the generalized information $I_{ij} = I(x_i; y_j)$, and the distortion $d(X, Y)$ with the generalized mutual information $I(X; Y)$, as the criterion for finding the minimum of Shannon mutual information $I_s(X; Y)$ for given $P(X) = Q(X)$ and lower limit $G$ of $I(X; Y)$. I call this criterion the fidelity criterion, the minimum the rate-fidelity function $R(G)$, and the reformed theory the rate-fidelity theory.
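The minimization that defines $R(G)$ can be explored numerically. The sketch below is an illustration under stated assumptions, not the paper's own procedure: it uses the exponential form of the optimal channel familiar from rate-distortion theory [1], $P(y_j|x_i) \propto P(y_j)\exp(sI_{ij})$, together with a Blahut-Arimoto-style alternating update that I am substituting in to find $P(Y)$. The source and the Gaussian confusing probability follow the image example of Section XI, with hypothetical sizes $b = 15$ and $d = 2$. All function names are mine and information is measured in bits.

```python
import math

def gaussian_source(b):
    """P(x_i) for gray levels 0..b: discretized normal, mean b/2, std b/8."""
    w = [math.exp(-(i - b / 2) ** 2 / (2 * (b / 8) ** 2)) for i in range(b + 1)]
    total = sum(w)
    return [v / total for v in w]

def information_matrix(p_x, d):
    """info[i][j] = log2[Q(A_j|x_i) / Q(A_j)] with Gaussian confusing probability."""
    n = len(p_x)
    memb = [[math.exp(-(i - j) ** 2 / (2 * d * d)) for j in range(n)] for i in range(n)]
    q_a = [sum(p_x[i] * memb[i][j] for i in range(n)) for j in range(n)]
    return [[math.log2(memb[i][j] / q_a[j]) for j in range(n)] for i in range(n)]

def rate_fidelity_point(p_x, info, s, iterations=100):
    """Return (G, R) in bits for slope parameter s >= 0.

    Assumption: alternating updates of the channel and of P(Y), in the
    style of the Blahut-Arimoto algorithm, approximate the minimizing channel.
    """
    n_x, n_y = len(p_x), len(info[0])
    weight = [[2.0 ** (s * info[i][j]) for j in range(n_y)] for i in range(n_x)]
    q_y = [1.0 / n_y] * n_y
    for _ in range(iterations):
        channel = []
        for i in range(n_x):
            row = [q_y[j] * weight[i][j] for j in range(n_y)]
            norm = sum(row)
            channel.append([v / norm for v in row])
        # q_y becomes the exact output marginal of the current channel
        q_y = [sum(p_x[i] * channel[i][j] for i in range(n_x)) for j in range(n_y)]
    g = sum(p_x[i] * channel[i][j] * info[i][j]
            for i in range(n_x) for j in range(n_y))
    r = sum(p_x[i] * channel[i][j] * math.log2(channel[i][j] / q_y[j])
            for i in range(n_x) for j in range(n_y) if p_x[i] * channel[i][j] > 0)
    return g, r

p_x = gaussian_source(15)
info = information_matrix(p_x, d=2.0)
curve = [rate_fidelity_point(p_x, info, s) for s in (0.0, 0.5, 1.0, 2.0)]
for g, r in curve:
    print(round(g, 4), round(r, 4))
```

At $s = 0$ the code is independent of the source, so $R = 0$ while $G$ is negative. For every $s$ the computed rate is at least as large as the fidelity, in line with the claim that subjective information does not exceed objective information.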
In a way similar to that in classical information theory [1], we can obtain the expression of the function $R(G)$ with parameter $s$:

$$\begin{aligned} G(s) &= \sum_i\sum_j P(x_i)\,P(y_j)\exp(sI_{ij})\,\lambda_i I_{ij}, \\ R(s) &= sG(s) + \sum_i P(x_i)\log\lambda_i, \end{aligned} \tag{19}$$

where $s = dR/dG$ indicates the slope of the function $R(G)$ (see Figure 5) and

$$\lambda_i = 1\Big/\sum_j P(y_j)\exp(sI_{ij}).$$

We define a group of sets $B_I = \{B_1, B_2, \ldots\}$, where $B_1, B_2, \ldots$ are subsets of $B = \{y_1, y_2, \ldots\}$, by the fuzzy feature function

$$Q(B_i|y_j) = \exp(sI_{ij})/m = [Q(A_j|x_i)/Q(A_j)]^s/m, \tag{20}$$

where $m$ is the maximum of $\exp(sI_{ij})$; then from (19) and (20) we have

$$R(G) = \sum_i\sum_j P(x_i)\,P(y_j|B_i)\log\frac{Q(B_i|y_j)}{Q(B_i)} = R(B_I). \tag{21}$$

This function is just the rate-of-limiting-errors with a group of fuzzy sets $B_I = \{B_1, B_2, \ldots\}$ as the limiting condition while coding $X$ in $A$ into $Y$ in $B$. From this formula, we can see that there is a profound relationship between the rate-of-limiting-errors and rate-fidelity (or rate-distortion). In the above formulas, if we replace $I_{ij}$ with $d_{ij} = d(x_i, y_j)$, (21) still holds. So the rate-distortion function can actually be expressed by a formula of generalized mutual information.

In [7], I defined the information value $V$ as the increment in the growth rate of a fund due to information, and suggested using the information value as the criterion for optimizing communication in some cases, obtaining the rate-value function $R(V)$, which is also meaningful.

XI. RATE-FIDELITY FUNCTION FOR OPTIMIZING IMAGE COMMUNICATION

Now let us examine the relationships among subjective visual information, visual discrimination, and objective information. For simplicity, we consider the information provided by different gray levels of image pixels (see [4] for details). Let the gray level of a digitized pixel be a source, with gray levels $x_i = i$, $i = 0, 1, \ldots, b = 2^k - 1$, following a normal probability distribution with expectation $b/2$ and standard deviation $b/8$. Assume that after decoding, the pixel also has gray level $y_j = j = 0, 1, \ldots, b$; the perception caused by $y_j$ is also denoted by $y_j$; and the discrimination function or confusing probability function of $x_j$ is

$$Q(A_j|X) = \exp[-(X - j)^2/(2d^2)], \tag{22}$$

where $d$ is the discrimination parameter. The smaller $d$ is, the higher the discrimination.

Figure 5: Relationship between $d$ and $R(G)$ for $b = 63$.

Figure 5 indicates that when $R = 0$, $G < 0$, which means that if a coded image has nothing to do with the original image and we still believe it reflects the original image, then the information will be negative. When $G = -2$, $R > 0$, which means that a certain amount of objective information is necessary when one uses lies to deceive an enemy to some extent; in other words, lies that go against the facts are more terrible than lies based on nothing. Each curve of the function $R(G)$ is tangent to the line $R = G$, which means that there is a matching point at which objective information equals subjective information, and the higher the discrimination (the smaller $d$), the bigger the matching information amount. The slope of $R(G)$ becomes bigger and bigger as $G$ increases. This tells us that, for given discrimination, the increase of subjective information is limited.

Figure 6 tells us that, for given discrimination, there exists an optimal number of digitized bits $k'$ at which the matching value of $G$ and $R$ reaches its maximum. If $k < k'$, the matching information increases with $k$; if $k > k'$, the matching information no longer increases with $k$. This means that too high a resolution of images is unnecessary or uneconomical for given visual discrimination.

Figure 6: Relationship between the matching value of $R$ with $G$, discrimination parameter $d$, and digitized bit $k$.

XII. CONCLUSIONS

This paper has deduced the generalized information formula for measuring subjective information by replacing conditional probability with logical conditional probability, and has improved the rate-distortion theory into the rate-fidelity theory by replacing Shannon's distortion with subjective mutual information. It has also discussed the rate-fidelity function related to visual discrimination and the digitized grades of images, and obtained some meaningful results.

REFERENCES

[1] T. Berger, Rate Distortion Theory, Englewood Cliffs, N.J.: Prentice-Hall, 1971.
[2] M. S. Daby and E. Alexandros, "Complexity Distortion Theory", IEEE Tran. On Information Theory, Vol. 49, No. 3, 604-609, 2003.
[3] R. V. L. Hartley, "Transmission of information", Bell System Technical Journal, 7, 535, 1928.
[4] C. Lu, "Coherence between the generalized mutual information formula and Popper's theory of scientific evolution" (in Chinese), J. of Changsha University, No. 2, 41-46, 1991.
[5] C. Lu, A Generalized Information Theory (in Chinese), China Science and Technology University Press, 1993. See http://survivor99.com/lcg/books/GIT
[6] C. Lu, "Coding meaning of generalized entropy and generalized mutual information" (in Chinese), J. of China Institute of Communications, Vol. 15, No. 6, 38-44, 1995.
[7] C. Lu, Portfolio's Entropy Theory and Information Value (in Chinese), China Science and Technology University Press, 1997.
[8] C. Lu, "A generalization of Shannon's information theory", Int. J. of General Systems, Vol. 28, No. 6, 453-490, 1999. See http://survivor99.com/lcg/english/information/GIT/index.htm
[9] G. Peter and P. Vitanyi, Shannon information and Kolmogorov complexity, IEEE Tran. On Information Theory, submitted, http://homepages.cwi.nl/~paulv/papers/info.pdf
[10] K. Popper, Conjectures and Refutations: The Growth of Scientific Knowledge, Routledge, London and New York, 2002.
[11] C. E. Shannon, "A mathematical theory of communication", Bell System Technical Journal, Vol. 27, pt. I, pp. 379-429; pt. II, pp. 623-656, 1948.
[12] P. Z. Wang, Fuzzy Sets and Random Sets Shadow (in Chinese), Beijing Normal University Press, 1985.

① See the author's website, http://survivor99.com/lcg/english, for more information.
