Scanning and Sequential Decision Making for Multi-Dimensional Data - Part II: the Noisy Case

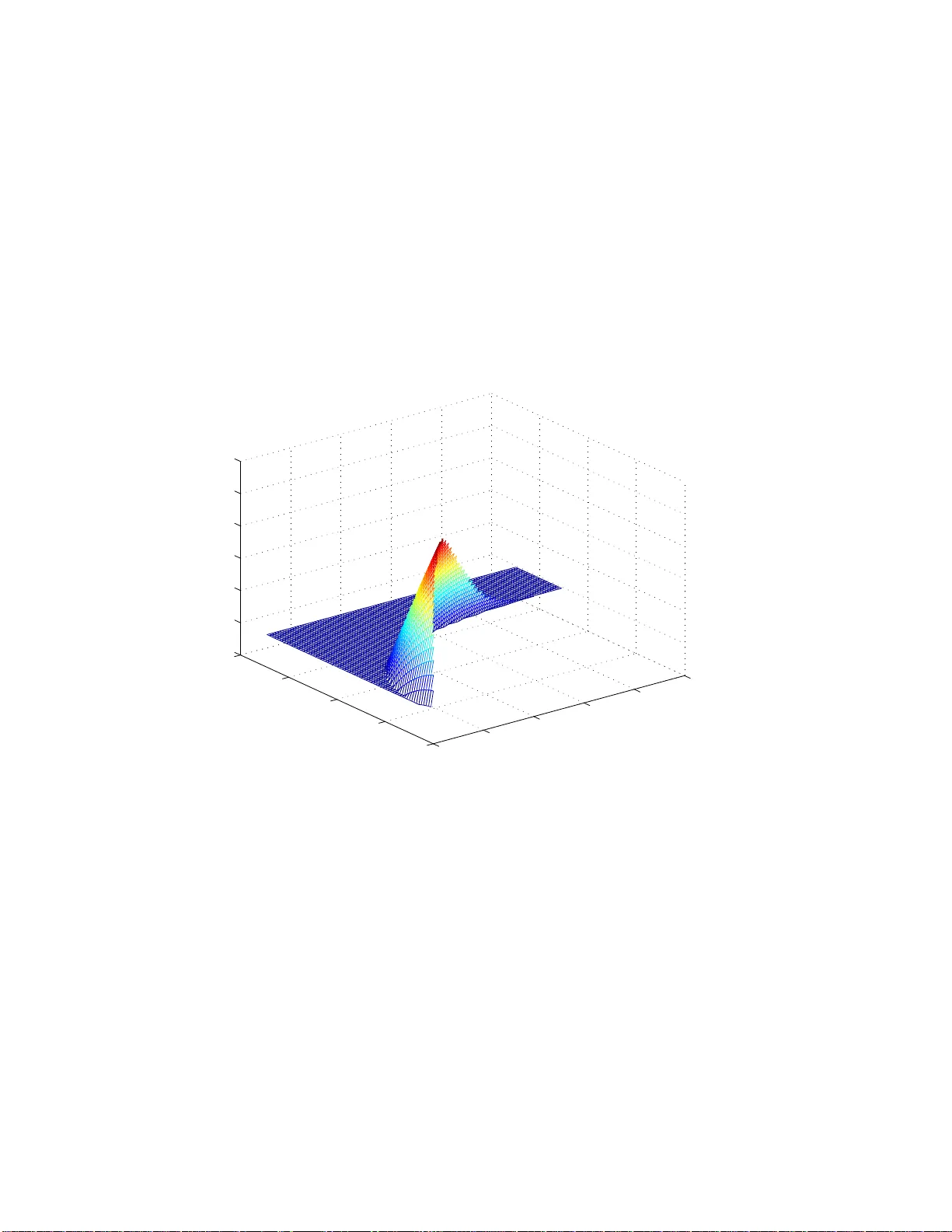

We consider the problem of sequential decision making on random fields corrupted by noise. In this scenario, the decision maker observes a noisy version of the data, yet is judged with respect to the clean data. In particular, we first consider the prob…

Authors: Asaf Cohen, Tsachy Weissman, Neri Merhav