cs.CL

FS-DFM: Fast and Accurate Long Text Generation with Few-Step Diffusion Language Models

Let LLMs Speak Embedding Languages: Generative Text Embeddings via Iterative Contrastive Refinement

Estimating Semantic Alphabet Size for LLM Uncertainty Quantification

R-Align: Enhancing Generative Reward Models through Rationale-Centric Meta-Judging

Generating Data-Driven Reasoning Rubrics for Domain-Adaptive Reward Modeling

RARe: Retrieval Augmented Retrieval with In-Context Examples

TrailBlazer: History-Guided Reinforcement Learning for Black-Box LLM Jailbreaking

On the Wings of Imagination: Conflicting Script-based Multi-role Framework for Humor Caption Generation

Learning Rate Scaling across LoRA Ranks and Transfer to Full Finetuning

Reading Between the Waves: Robust Topic Segmentation Using Inter-Sentence Audio Features

Designing Computational Tools for Exploring Causal Relationships in Qualitative Data

Investigating the structure of emotions by analyzing similarity and association of emotion words

Beyond Static Alignment: Hierarchical Policy Control for LLM Safety via Risk-Aware Chain-of-Thought

EoRA: Fine-tuning-free Compensation for Compressed LLM with Eigenspace Low-Rank Approximation

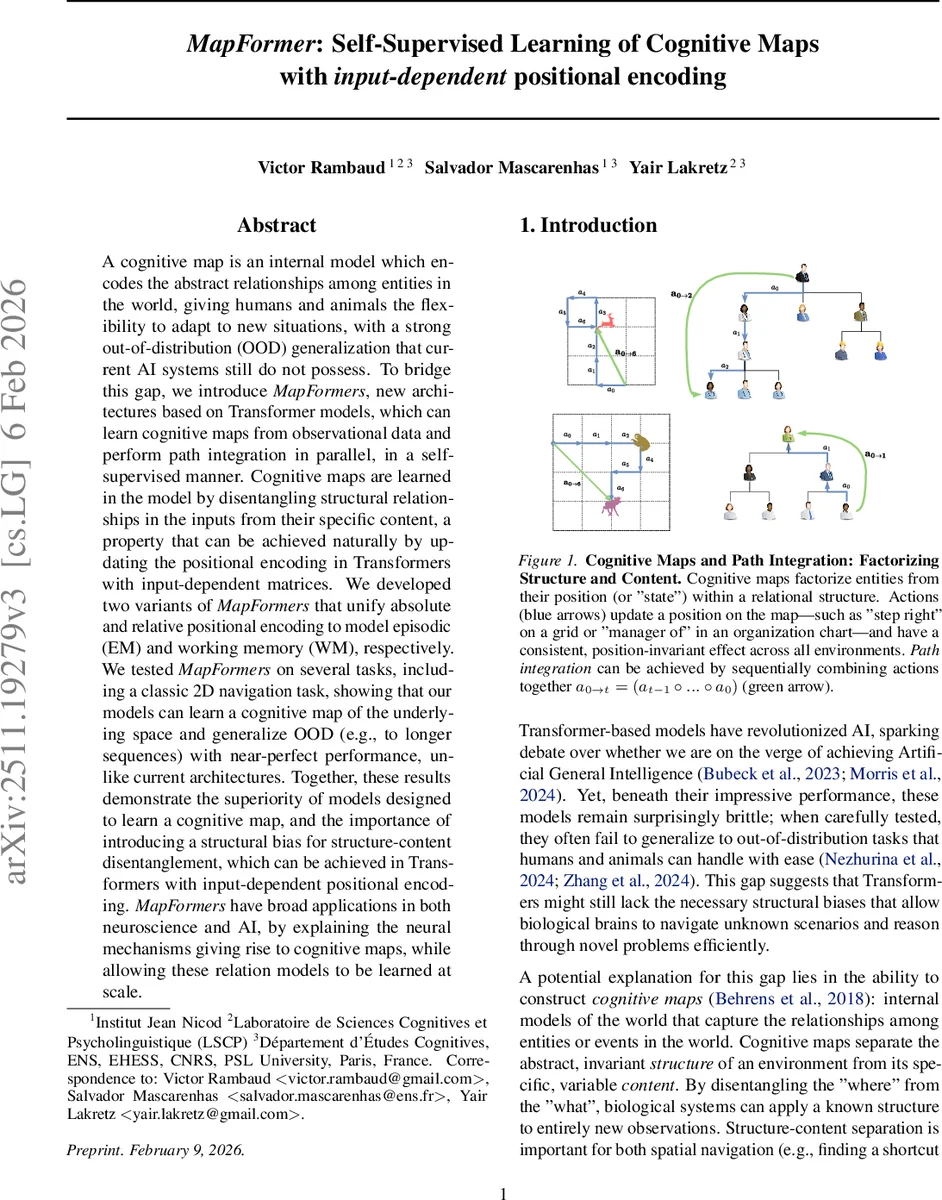

MapFormer: Self-Supervised Learning of Cognitive Maps with Input-Dependent Positional Embeddings

You Had One Job: Per-Task Quantization Using LLMs' Hidden Representations

CLaRa: Bridging Retrieval and Generation with Continuous Latent Reasoning

Context-Free Recognition with Transformers

Quantifying the Effect of Test Set Contamination on Generative Evaluations

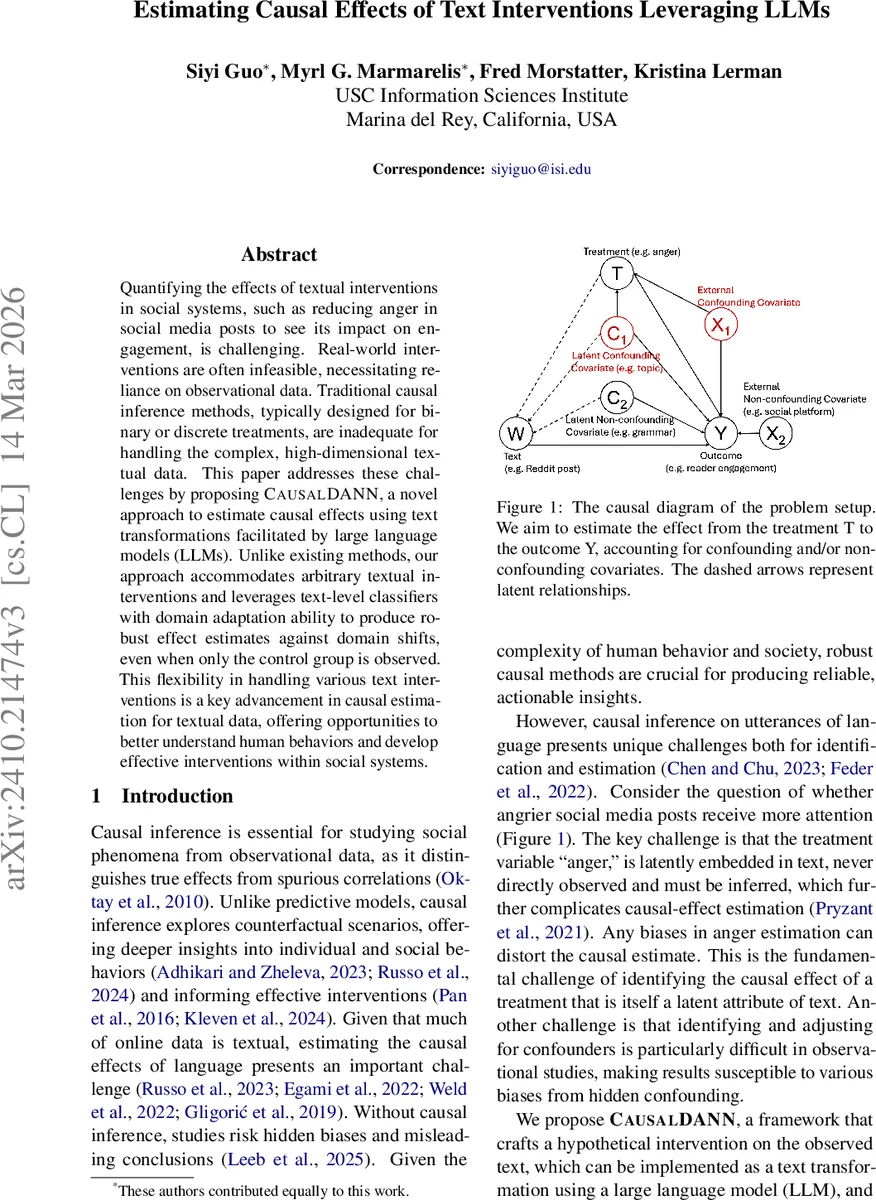

Estimating Causal Effects of Text Interventions Leveraging LLMs

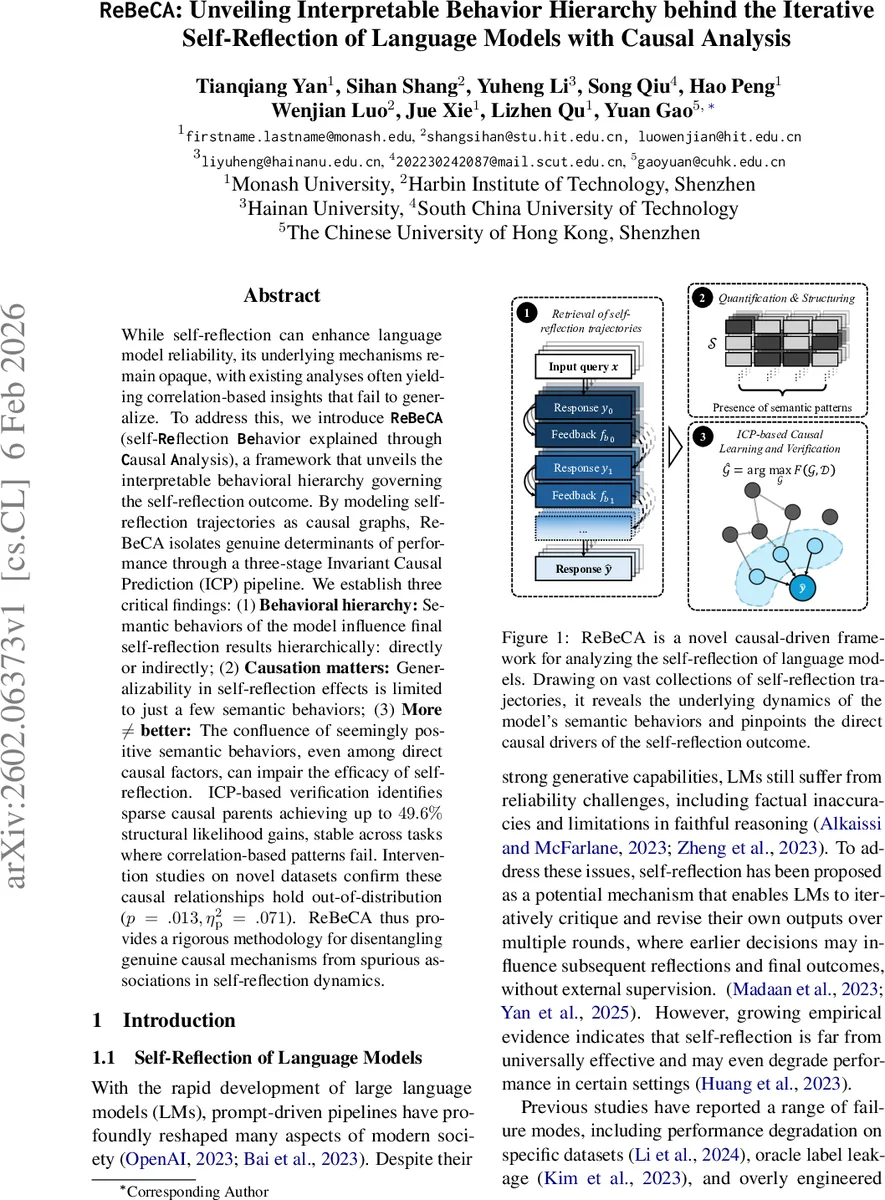

ReBeCA: Unveiling Interpretable Behavior Hierarchy behind the Iterative Self-Reflection of Language Models with Causal Analysis

Cost-Aware Model Selection for Text Classification: Multi-Objective Trade-offs Between Fine-Tuned Encoders and LLM Prompting in Production